07b_regression.pdf

Transcript of 07b_regression.pdf

-

7/29/2019 07b_regression.pdf

1/24

Linear Regression

Jeff Howbert Introduction to Machine Learning Winter 2012 1

-

slide thanks to Greg Shakhnarovich (CS195-5, Brown Univ., 2006)

-

Loss function

• Suppose target labels come from set $Y$
    ◦ Binary classification: $Y = \{0, 1\}$
    ◦ Regression: $Y = \mathbb{R}$ (real numbers)

• A loss function maps decisions to costs:
    ◦ $L(\hat{y}, y)$ defines the penalty for predicting $\hat{y}$ when the true value is $y$.

• Standard choice for classification: 0/1 loss (same as misclassification error)

    $$L_{0/1}(\hat{y}, y) = \begin{cases} 0 & \text{if } y = \hat{y} \\ 1 & \text{otherwise} \end{cases}$$

• Standard choice for regression: squared loss, $L(\hat{y}, y) = (\hat{y} - y)^2$
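The two standard losses can be written as short functions. The following is a minimal Python sketch, not part of the original slides; the function names and the toy data are hypothetical and chosen only for illustration.

```python
def zero_one_loss(y_pred, y_true):
    """0/1 loss: 0 for a correct prediction, 1 otherwise."""
    return 0 if y_pred == y_true else 1


def squared_loss(y_pred, y_true):
    """Squared loss: squared difference between prediction and true value."""
    return (y_pred - y_true) ** 2


def mean_loss(loss, predictions, targets):
    """Average a per-example loss over paired predictions and targets."""
    return sum(loss(p, t) for p, t in zip(predictions, targets)) / len(targets)


# Hypothetical toy data, invented for illustration only.
class_pred, class_true = [1, 0, 1, 1], [1, 0, 0, 1]
reg_pred, reg_true = [2.0, 0.0, 3.0], [1.0, 0.0, 5.0]

# Averaging the 0/1 loss gives the misclassification error rate.
misclassification_rate = mean_loss(zero_one_loss, class_pred, class_true)
# Averaging the squared loss gives the mean squared error.
mean_squared_error = mean_loss(squared_loss, reg_pred, reg_true)
```

Averaging the 0/1 loss over a dataset gives the misclassification rate, which is why the two are described as the same thing.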

-

Least squares linear fit to data

• Most popular estimation method is least squares:
    ◦ Determine linear coefficients $\alpha$, $\beta$ that minimize sum of squared loss (SSL).
    ◦ Use standard (multivariate) differential calculus:
        ▪ differentiate SSL with respect to $\alpha$, $\beta$
        ▪ find zeros of each partial derivative
        ▪ solve for $\alpha$, $\beta$

• One dimension:

    $$\mathrm{SSL} = \sum_{j=1}^{N} \bigl(y_j - (\alpha + \beta x_j)\bigr)^2, \qquad N = \text{number of samples}$$

    $$\beta = \frac{\mathrm{cov}[x, y]}{\mathrm{var}[x]}, \qquad \alpha = \bar{y} - \beta \bar{x}, \qquad \bar{x}, \bar{y} = \text{means of training } x, y$$

    $$\hat{y}_t = \alpha + \beta x_t \quad \text{for test sample } x_t$$
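The one-dimensional solution translates almost directly into code. The following is a minimal Python sketch under stated assumptions: the slope is cov[x, y] / var[x], the intercept follows from the training means, and the names `fit_line`, `predict` and `ssl` as well as the data are hypothetical.

```python
def fit_line(xs, ys):
    """Least squares fit of y = alpha + beta * x for one input variable."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # Covariance and variance use the same 1/n factor, so it cancels in beta.
    cov_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / n
    var_x = sum((x - x_mean) ** 2 for x in xs) / n
    beta = cov_xy / var_x
    alpha = y_mean - beta * x_mean
    return alpha, beta


def predict(alpha, beta, x_t):
    """Prediction for a test sample x_t."""
    return alpha + beta * x_t


def ssl(alpha, beta, xs, ys):
    """Sum of squared loss of the line on the given samples."""
    return sum((y - (alpha + beta * x)) ** 2 for x, y in zip(xs, ys))


# Hypothetical training data, roughly following y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
alpha, beta = fit_line(xs, ys)
y_hat = predict(alpha, beta, 4.0)
```

On this toy data the fit is close to alpha = 1.09 and beta = 1.94, and nudging either coefficient away from the fitted value increases the SSL.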

-

Least squares linear fit to data

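• Multiple dimensions
    ◦ To simplify notation and derivation, change $\alpha$ to $\beta_0$, and add a new feature $x_0 = 1$ to feature vector $\mathbf{x}$:

        $$\hat{y} = \beta_0 \cdot 1 + \sum_{i=1}^{d} \beta_i x_i = \boldsymbol{\beta} \cdot \mathbf{x}$$

    ◦ Calculate SSL and determine $\boldsymbol{\beta}$:

        $$\mathrm{SSL} = \sum_{j=1}^{N} \Bigl(y_j - \sum_{i=0}^{d} \beta_i x_{j,i}\Bigr)^2 = (\mathbf{y} - \mathbf{X}\boldsymbol{\beta})^{T} (\mathbf{y} - \mathbf{X}\boldsymbol{\beta})$$

        $\mathbf{y}$ = vector of all training responses $y_j$
        $\mathbf{X}$ = matrix of all training samples $\mathbf{x}_j$ (entry $x_{j,i}$ is feature $i$ of sample $j$)

        $$\boldsymbol{\beta} = (\mathbf{X}^{T}\mathbf{X})^{-1} \mathbf{X}^{T} \mathbf{y}$$

        $$\hat{y}_t = \boldsymbol{\beta} \cdot \mathbf{x}_t \quad \text{for test sample } \mathbf{x}_t$$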
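The closed-form solution can be sketched in plain Python. The following example is not from the original slides: it assumes a small, well-conditioned problem, uses only the standard library, and all function names and data are hypothetical. It solves the normal equations (XᵀX)β = Xᵀy by Gaussian elimination instead of forming the inverse explicitly, which gives the same β when XᵀX is invertible.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Work on an augmented copy so the inputs are left untouched.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular or nearly singular")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_least_squares(samples, responses):
    """Return beta solving the normal equations (X^T X) beta = X^T y."""
    # Prepend the constant feature x_0 = 1 so beta[0] acts as the intercept.
    X = [[1.0] + list(row) for row in samples]
    d = len(X[0])
    xtx = [[sum(row[i] * row[k] for row in X) for k in range(d)] for i in range(d)]
    xty = [sum(row[i] * y for row, y in zip(X, responses)) for i in range(d)]
    return solve_linear_system(xtx, xty)


def predict(beta, x_t):
    """Prediction beta . x_t for a test sample given without the x_0 = 1 entry."""
    return beta[0] + sum(b * x for b, x in zip(beta[1:], x_t))


# Hypothetical noise-free data generated from y = 1 + 2*x1 - 3*x2.
samples = [[0, 0], [1, 0], [0, 1], [1, 1], [2, 1]]
responses = [1 + 2 * x1 - 3 * x2 for x1, x2 in samples]
beta = fit_least_squares(samples, responses)
```

Because the toy responses contain no noise, the fit recovers the generating coefficients (1, 2, -3) up to rounding. For real data a numerical library's least squares routine is usually a better choice than hand-written elimination.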

-

Least squares linear fit to data

-

Least squares linear fit to data

-

Extending application of linear regression

• The inputs X for linear regression can be:
    ◦ Original quantitative inputs
    ◦ Transformation of quantitative inputs, e.g. log, exp, square root, square, etc.
    ◦ Polynomial transformation
        example: $y = \beta_0 + \beta_1 x + \beta_2 x^2 + \beta_3 x^3$
    ◦ Dummy coding of categorical inputs
    ◦ Interactions between variables
        example: $x_3 = x_1 \cdot x_2$

• This allows use of linear regression techniques to fit much more complicated non-linear datasets.
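The kinds of inputs listed above can be illustrated by building one row of an expanded feature vector. This is a minimal Python sketch, not from the original slides; the helper names, the category labels and the coefficient values are hypothetical. The point is that the model stays linear in the coefficients even though the features are non-linear in the raw inputs.

```python
import math


def polynomial_features(x, degree):
    """Map a scalar x to [x, x^2, ..., x^degree]."""
    return [x ** p for p in range(1, degree + 1)]


def dummy_code(value, categories):
    """One 0/1 indicator per category except the first, which is the baseline."""
    return [1.0 if value == c else 0.0 for c in categories[1:]]


def expand(x1, x2, group, groups):
    """Build an expanded feature row from two numbers and one category."""
    return (
        [x1, x2]                           # original quantitative inputs
        + [math.log(x1)]                   # transformation (assumes x1 > 0)
        + polynomial_features(x2, 3)[1:]   # polynomial terms x2^2 and x2^3
        + [x1 * x2]                        # interaction between variables
        + dummy_code(group, groups)        # dummy-coded categorical input
    )


def linear_predict(beta, features):
    """Linear model on the expanded features; beta[0] is the intercept."""
    return beta[0] + sum(b * f for b, f in zip(beta[1:], features))


groups = ["red", "green", "blue"]          # hypothetical category labels
row = expand(2.0, 3.0, "blue", groups)
beta = [1.0, 0.5, 0.0, 0.0, 0.1, 0.0, -0.2, 0.0, 2.0]  # hypothetical values
y_hat = linear_predict(beta, row)
```

Any least squares fitting routine can then be applied to rows built this way without modification.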

-

Example of fitting polynomial curve with linear model

-

Prostate cancer dataset

• 97 samples, partitioned into:
    ◦ 67 training samples
    ◦ 30 test samples

• Eight predictors (features):
    ◦ 6 continuous (4 log transforms)
    ◦ 1 binary
    ◦ 1 ordinal

• Continuous outcome variable:
    ◦ lpsa: log(prostate specific antigen level)

-

Correlations of predictors in prostate cancer dataset

-

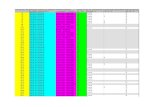

Fit of linear model to prostate cancer dataset

-

Regularization

• Complex models (lots of parameters) are often prone to overfitting.

• Overfitting can be reduced by imposing a constraint on the overall magnitude of the parameters.

• Two common types of regularization in linear regression:
    ◦ L2 regularization (a.k.a. ridge regression). Find $\boldsymbol{\beta}$ which minimizes:

        $$\sum_{j=1}^{N} \Bigl(y_j - \sum_{i=0}^{d} \beta_i x_{j,i}\Bigr)^2 + \lambda \sum_{i=1}^{d} \beta_i^2$$

        $\lambda$ is the regularization parameter: bigger $\lambda$ imposes more constraint

    ◦ L1 regularization (a.k.a. lasso). Find $\boldsymbol{\beta}$ which minimizes:

        $$\sum_{j=1}^{N} \Bigl(y_j - \sum_{i=0}^{d} \beta_i x_{j,i}\Bigr)^2 + \lambda \sum_{i=1}^{d} |\beta_i|$$
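The two penalized objectives differ only in how the size of the coefficients is measured. The following Python sketch, not from the original slides, evaluates both for a given coefficient vector. It assumes that the intercept (the coefficient paired with the constant feature) is left out of the penalty; the function name and the numbers are hypothetical.

```python
def penalized_objective(beta, samples, responses, lam, penalty):
    """Sum of squared loss plus lam times a penalty on beta[1:].

    beta[0] is the intercept (paired with x_0 = 1) and is not penalized.
    """
    ssl = 0.0
    for row, y in zip(samples, responses):
        y_hat = beta[0] + sum(b * x for b, x in zip(beta[1:], row))
        ssl += (y - y_hat) ** 2
    if penalty == "l2":
        size = sum(b ** 2 for b in beta[1:])    # ridge: sum of squares
    elif penalty == "l1":
        size = sum(abs(b) for b in beta[1:])    # lasso: sum of absolute values
    else:
        raise ValueError("penalty must be 'l1' or 'l2'")
    return ssl + lam * size


# Hypothetical data and coefficients, chosen so the arithmetic is easy to check.
samples = [[1.0, 2.0], [2.0, 0.0], [3.0, 1.0]]
responses = [3.0, 2.0, 5.0]
beta = [0.5, 1.0, -2.0]

ridge_value = penalized_objective(beta, samples, responses, 0.1, "l2")
lasso_value = penalized_objective(beta, samples, responses, 0.1, "l1")
```

With the regularization parameter set to zero, both objectives reduce to the plain SSL.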

-

Example of L2 regularization

L2 regularization shrinks coefficients towards (but not to) zero, and towards each other.
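The "towards but not to zero" behaviour is easy to see with a single input variable, where the ridge solution has a simple closed form. This is a minimal Python sketch under stated assumptions: one feature, an unpenalized intercept, and hypothetical names and data. Minimizing the SSL plus λβ² gives β = S_xy / (S_xx + λ), where S_xy and S_xx are the centered sums of products and squares.

```python
def ridge_fit_1d(xs, ys, lam):
    """Ridge fit of y = alpha + beta * x with penalty lam * beta**2."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    # The penalty adds lam to the denominator, shrinking beta towards zero.
    beta = s_xy / (s_xx + lam)
    alpha = y_mean - beta * x_mean
    return alpha, beta


# Hypothetical training data, roughly following y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
lambdas = (0.0, 1.0, 10.0, 100.0)
slopes = [ridge_fit_1d(xs, ys, lam)[1] for lam in lambdas]
```

The slope decreases steadily as the regularization parameter grows, but for any finite value it remains non-zero.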

-

Example of L1 regularization

L1 regularization shrinks coefficients to zero at different rates; different values of $\lambda$ give models with different subsets of features.
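With a single input variable the lasso solution is a soft-thresholded version of the least squares slope, which shows how a coefficient can become exactly zero. The following Python sketch is not from the original slides; it assumes one feature and an unpenalized intercept, and its names and data are hypothetical. Minimizing the SSL plus λ|β| gives β = sign(S_xy) · max(|S_xy| − λ/2, 0) / S_xx.

```python
def soft_threshold(value, threshold):
    """Move value towards zero by threshold, stopping at exactly zero."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_fit_1d(xs, ys, lam):
    """Lasso fit of y = alpha + beta * x with penalty lam * abs(beta)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    # Once lam / 2 exceeds |s_xy| the slope is exactly zero.
    beta = soft_threshold(s_xy, lam / 2.0) / s_xx
    alpha = y_mean - beta * x_mean
    return alpha, beta


# Hypothetical training data, roughly following y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]
lambdas = (0.0, 5.0, 10.0, 25.0)
slopes = [lasso_fit_1d(xs, ys, lam)[1] for lam in lambdas]
```

On this toy data the slope falls from about 1.94 to exactly 0.0 at the largest value of the regularization parameter, at which point the feature has effectively been dropped and the model predicts the training mean. With several features, each coefficient reaches zero at its own threshold, which is how different values of the parameter select different subsets of features.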

-

Example of subset selection

-

Comparison of various selection and shrinkage methods

-

L1 regularization gives sparse models, L2 does not

-

Other types of regression

• In addition to linear regression, there are:
    ◦ decision trees
    ◦ neural networks
    ◦ support vector machines
    ◦ locally linear regression
    ◦ etc.

-

MATLAB interlude

_ _ .

art
