Value-Added Guidebook (static.battelleforkids.org/documents/ohio/VALeaderGuide.pdf)


Value-Added Guidebook FOR SCHOOL LEADERS

Designed for school leaders supporting educators in the use of value-added data to impact teaching and learning

© 2018, Battelle for Kids. All Rights Reserved.


TABLE OF CONTENTS

3 Introduction

4 Access to and Navigation of SAS® EVAAS® Reporting Site

5 Interpreting Accountability Reports

6 Interpreting District and School Value-Added Reports: Investigating growth produced at each grade or course across the district or school

8 Interpreting District and School Diagnostic Reports: Investigating which students benefited most (and least) from instruction

10 Interpreting District and School 1-Year Projection Summaries

11 Interpreting eTPES and Teacher Value-Added Reports in EVAAS®

13 Leading Teachers through Value-Added Reports

14 Sample Teacher Value-Added and Diagnostic Reports #1

17 Sample Teacher Value-Added and Diagnostic Reports #2

20 Sample Teacher Value-Added and Diagnostic Reports #3

23 EVAAS® District and School Value-Added Reports at a Glance

25 Interpreting and Analyzing District and School Value-Added Reports, Investigation #1: How much growth was produced at each grade or course across the district or school?

26 Apply Your Learning: Interpreting and Analyzing District and School Value-Added Reports

28 Interpreting and Analyzing District and School Diagnostic Reports, Investigation #2: Which students benefited most (and least) from the instruction in your district/school?

29 Apply Your Learning: Interpreting and Analyzing District and School Diagnostic Reports

32 Identifying Contributing Factors Resource

33 Checklist for Effective Use of Data

Please note: This guide uses visual representations of copyrighted EVAAS® Web reporting software from SAS Institute Inc. for instructional purposes.


INTRODUCTION

This School Leader Value-Added Guidebook is designed to help you support educators in using value-added data to impact teaching and learning. Before using this guidebook, it is recommended that you watch the Value-Added Video Series and complete the accompanying facilitation guides, which are designed to help you build a basic, conceptual understanding of Ohio's value-added measures.

In the materials that follow, you will work through a scenario in which various SAS® EVAAS® reports are used to answer specific questions about a teacher's value-added results. The scenario includes guidance for accurate interpretation and sample analysis questions. The reports in this guidebook were generated from both value-added models:

• MRM (multivariate response model): Used in grades 4–8 math and English language arts

• URM (univariate response model): Used in grades 5 and 8 science, grade 6 social studies, and high school end-of-course tests

While the reports look slightly different depending on the value-added model, interpretation and analysis of the reports are the same.

Throughout the use of this guidebook, you will:

• Understand how to interpret key SAS® EVAAS® reports.

• Understand how to analyze data to prioritize strengths and opportunities for growth.

• Identify possible contributing factors that impacted value-added results.

• Develop capacity to use value-added data to inform professional practices.

• Use analysis of the reports to support the development of professional growth plans within the Ohio Teacher and Principal Evaluation System.

ADDITIONAL TOOLS AND RESOURCES:

Value-Added Guidebook for Teachers

Designed to support teachers using value-added data to impact teaching and student learning, the Value-Added Guidebook for Teachers is also a helpful resource for leaders to engage teachers in professional learning.

Data Coaching Framework

To begin building the data literacy of the educators with whom you work, it is essential to establish the right conditions and incorporate the right processes to enable you to effectively support data inquiry and use. Throughout this guidebook, you'll see references to the processes of awareness building, understanding, interpretation, analysis, and use. See the Data Coaching Framework on the Ohio Portal for more information about building data literacy in your school or district.

[Figure: Data Coaching Framework, showing Establishing the Right Conditions, Incorporating the Right Processes, and A Data-Literate Team]

Visit ohiova.sas.com/ to access value-added reports.

The public has access to a limited number of value-added reports at the district and school level through the Education Value-Added Assessment System (EVAAS®). Educators have access to additional reports that require a username and password. When reports are released, educators automatically receive an email containing their username and password. If you do not remember your login information, click Forgot username or password on the Login screen. The EVAAS® home page offers resources, including links to the technical guide and FAQ document, as well as a step-by-step guide to accessing reports.

This guide focuses on only a few of the reports available in SAS® EVAAS®. Once logged in, you can access the reports through the reports menu. Access to certain reports is restricted based on permissions set by the district administrator.

SAS® EVAAS® Help Feature

Within the SAS® EVAAS® reporting site, you can open the help screen by selecting the help icon in the upper right corner. This feature provides additional support to build awareness and understanding of the measures and to aid interpretation of the reports. A navigation menu on the left-hand side of the help screen lets you quickly access information about all available reports. You can also hover the mouse over terms and features on the reports to see pop-up boxes with additional information that assists in understanding and interpretation.

ACCESS TO AND NAVIGATION OF SAS® EVAAS® REPORTING SITE


The Ohio A-F District Report Card system includes Value-Added as graded measures within the Progress component. This includes the Overall Value-Added, as well as the following subgroups: Gifted, Students with Disabilities, and the Lowest 20% of Achievement in English language arts, math, science and social studies.

Beginning with the 2016 reporting, Value-Added for science grades five and eight, social studies grade six, and end-of-course is included in the across grades and across subjects measures. These measures do not report a growth measure or standard error across assessments because each assessment uses a different scale and the value-added measures for individual assessments are reported in the scaling units of the test.

In calculating the index values across grades and across subjects, the growth measures for each subject and grade or course are weighted based on the number of students used to generate each measure. Refer to Ohio Department of Education's Technical Documentation of SAS® EVAAS® Analyses for more information.

Ohio Department of Education

Copyright © 2016 SAS Institute Inc., Cary, NC, USA. All Rights Reserved. 1

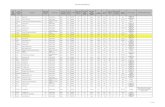

Report: Accountability Value-Added Report | District: Kettering City School District (044180) | Year: 2016

Ohio District Report Card Value-Added Measures

| Accountability Measure | Index | Letter Grade |
|---|---|---|
| Overall | 1.47 | B |
| 2-year | | |
| 1-year | 1.47 | B |
| Gifted | 4.79 | A |
| Lowest 20% | -3.63 | F |
| Students with Disabilities | -1.30 | D |

Grade/Subject Level (Overall Value-Added)

| Subject | Grade | Number of Students | Growth Measure | Standard Error | Index |
|---|---|---|---|---|---|
| Math | All | 3427 | | | 0.31 |
| Math | 4 | 544 | 3.5540 | 0.5420 | 6.56 |
| Math | 5 | 549 | -4.5696 | 0.5115 | -8.93 |
| Math | 6 | 534 | -1.8973 | 0.4889 | -3.88 |
| Math | 7 | 515 | 0.2805 | 0.4898 | 0.57 |
| Math | 8 | 379 | -1.3086 | 0.5627 | -2.32 |
| Algebra I | All | 449 | 5.9158 | 0.7697 | 7.69 |
| Geometry | All | 457 | 4.8755 | 0.7316 | 6.66 |
| English Language Arts | All | 3711 | | | 2.77 |
| English Language Arts | 4 | 543 | 0.6120 | 0.6181 | 0.99 |
| English Language Arts | 5 | 549 | -1.3744 | 0.6043 | -2.27 |
| English Language Arts | 6 | 536 | 1.8951 | 0.5664 | 3.35 |
| English Language Arts | 7 | 514 | 2.4439 | 0.5683 | 4.30 |
| English Language Arts | 8 | 530 | -2.3820 | 0.5623 | -4.23 |
| English Language Arts I | All | 502 | 0.6328 | 0.6376 | 0.99 |
| English Language Arts II | All | 537 | 4.3804 | 0.6656 | 6.58 |

USE OF VALUE-ADDED ON OHIO’S REPORT CARD

The District or School Accountability Value-Added Report shows overall growth data and includes value-added measures that are shown on the progress component of the state report card.

1 Growth Measure: Conservative estimate of a school’s influence on students’ academic progress, compared to the growth standard.

2 Standard Error: Measure of certainty around the estimate; it helps determine whether the growth measure is significantly different from the growth standard.

3 Index: The growth measure divided by its associated standard error. The index is the basis for establishing an effectiveness level and corresponds to the performance level (color) shown on the report.
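The index definition can be checked against the Kettering City report above. The following is a minimal Python sketch; `value_added_index` is a hypothetical helper name, and because the report prints rounded growth measures and standard errors, a recomputed index can occasionally differ from the printed one in the last decimal place.

```python
def value_added_index(growth_measure: float, standard_error: float) -> float:
    """Hypothetical helper: index = growth measure / standard error."""
    if standard_error <= 0:
        raise ValueError("standard error must be positive")
    return growth_measure / standard_error


# (subject, grade, growth measure, standard error, index printed on the report)
# Values copied from the 2016 Kettering City Accountability Value-Added Report.
rows = [
    ("Math", "4", 3.5540, 0.5420, 6.56),
    ("Math", "5", -4.5696, 0.5115, -8.93),
    ("Algebra I", "All", 5.9158, 0.7697, 7.69),
]

for subject, grade, growth, std_err, printed in rows:
    computed = value_added_index(growth, std_err)
    print(f"{subject}, grade {grade}: computed {computed:.2f}, printed {printed:.2f}")
```

Because the index is expressed in standard error units, a large growth measure with a large standard error can earn a lower index than a smaller but more precisely estimated one.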

INTERPRETING ACCOUNTABILITY REPORTS

TIP FOR USING ACCOUNTABILITY VALUE-ADDED REPORTS

These reports are publicly available on the SAS® EVAAS® website. Letting community members know that these reports are available adds transparency to the school report card.

Full report not shown

Accessible to:

District Leaders School Leaders Teachers Public


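Grade/Subject Level (Overall Value-Added), continued

| Subject | Grade | Number of Students | Growth Measure | Standard Error | Index |
|---|---|---|---|---|---|
| Science | All | 1042 | | | -3.14 |
| Science | 5 | 525 | -1.4589 | 1.1689 | -1.24 |
| Science | 8 | 517 | -3.4129 | 1.0604 | -3.21 |
| Social Studies | All | 527 | | | -3.80 |
| Social Studies | 6 | 527 | -3.6532 | 0.9589 | -3.80 |
| All Tests | All | | | | 1.47 |
| All Subjects | 4 | | | | 4.67 |
| All Subjects | 5 | | | | -6.74 |
| All Subjects | 6 | | | | -1.67 |
| All Subjects | 7 | | | | 3.38 |
| All Subjects | 8 | | | | -5.55 |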

Significant evidence that the district's students made more progress than the Growth Standard

Moderate evidence that the district's students made more progress than the Growth Standard

Evidence that the district's students made progress similar to the Growth Standard

Moderate evidence that the district's students made less progress than the Growth Standard

Significant evidence that the district's students made less progress than the Growth Standard


INVESTIGATING GROWTH PRODUCED AT EACH GRADE OR COURSE ACROSS THE DISTRICT OR SCHOOL

The District and School Value-Added Report summarizes the aggregate results and helps evaluate the overall effectiveness of programs. This report allows leaders to evaluate how a district or school has performed across years, from year to year, and across grade levels in a particular subject or course.

SCHOOL-LEVEL MEAN GAIN (MRM VALUE-ADDED REPORT)

1 Growth Standard: Represents the minimal amount of growth expected of students from one grade level to the next and is represented on reports by a value of 0.

2 Growth Measure: Conservative estimate of a school's influence on students' academic progress compared to the growth standard, expressed in NCEs (normal curve equivalents).

3 Standard Error: Measure of certainty around the estimate; it helps determine whether the growth measure is significantly different from the growth standard.

4 State Average: Represents the average achievement in the state; use it to compare how students in the district/school are performing relative to the average student in Ohio.

5 Average Achievement: Represents the average NCE for a cohort of students.

Accessible to:

District Leaders School Leaders Teachers Public

INTERPRETING DISTRICT AND SCHOOL VALUE-ADDED REPORTS


SCHOOL-LEVEL PREDICTED MEAN (URM VALUE-ADDED REPORT)

1 Average Score: Average of students' actual scores, reported in the scaling units of the test.

2 Average Predicted Score: Average of students' predicted scores, reported in the scaling units of the test.

3 Growth Measure: Conservative estimate of a school's influence on students' academic progress compared to the growth standard, expressed in scale score points.

4 Standard Error: Measure of certainty around the estimate; it helps determine whether the growth measure is significantly different from the growth standard.
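In simplified terms, a URM growth measure compares the average of students' actual scores with the average of their predicted scores, both in the test's scale score points. The Python sketch below illustrates only that comparison, using hypothetical scores; it does not reproduce how EVAAS generates each student's predicted score, and `urm_growth_sketch` is an illustrative name, not an EVAAS function.

```python
from statistics import mean


def urm_growth_sketch(actual_scores, predicted_scores):
    """Average actual score minus average predicted score (scale score points).

    Simplified illustration only: EVAAS derives the predicted scores from each
    student's prior test history, which is not modeled here.
    """
    if len(actual_scores) != len(predicted_scores) or not actual_scores:
        raise ValueError("need one predicted score per student")
    return mean(actual_scores) - mean(predicted_scores)


# Hypothetical scale scores for five students (not from any real report)
actual = [712, 698, 725, 705, 690]
predicted = [705, 700, 715, 704, 686]
growth = urm_growth_sketch(actual, predicted)
print(f"Growth measure: {growth:+.1f} scale score points")
```

With these hypothetical numbers the students, on average, scored 4 points above their predictions, which would read as a positive growth measure; a result near 0 would mean they scored about as predicted.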


INTERPRETING A VALUE-ADDED REPORT

• The growth measure is a measure of progress relative to the growth of students in the average district/school in the state, called the growth standard.

• A positive growth measure indicates that students grew more than students in the average district/school statewide.

• A growth measure near 0 doesn't indicate a lack of growth. Rather, it indicates that this group of students grew as much as students in the average district/school statewide.

• A negative growth measure doesn't necessarily indicate that students lost knowledge. Rather, it indicates that these students didn't grow as much as students in the average district/school statewide.

• The colors used in the table represent the gain index categories; see the color legend on page 5 of this guidebook.


INVESTIGATING WHICH STUDENTS BENEFITED MOST (AND LEAST) FROM INSTRUCTION

The Diagnostic Report allows educators to identify patterns of progress across different achievement levels. Even when a district or school has positive value-added results, the needs of some students may not be being met.

1 Growth: Conservative estimate of a school's influence on students' academic progress, compared to the growth standard.

• MRM: Expressed in NCEs

• URM: Expressed in scale score points

2 Achievement Groups: Students are placed into one of five achievement groups, or quintiles, based on their achievement. The lowest achievement quintile represents the lowest-achieving fifth of students in the state, while the highest achievement quintile represents the highest-achieving fifth.

• MRM: Students are assigned to an achievement group based on the average of their current-year and prior-year NCEs.

• URM: Students are assigned to an achievement group based on their predicted score.
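The MRM group assignment, together with the report note's definition of growth (the average difference between students' current-year and prior-year NCEs), can be sketched in Python. This is a simplified illustration, not the EVAAS model: it assumes NCEs are normally distributed with mean 50 and standard deviation 21.06, so statewide quintile cut points can be taken from normal percentiles, whereas EVAAS works from the actual statewide distribution and model-based estimates. Function names and student data are hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict
from statistics import NormalDist, mean

# Assumption: NCEs are normally distributed with mean 50 and SD 21.06, so the
# statewide quintile cut points are the 20th, 40th, 60th, and 80th percentiles.
NCE_SCALE = NormalDist(mu=50, sigma=21.06)
QUINTILE_CUTS = [NCE_SCALE.inv_cdf(p) for p in (0.2, 0.4, 0.6, 0.8)]


def mrm_achievement_group(prior_nce, current_nce):
    """Return group 1 (lowest) to 5 (highest) from the average of two NCEs."""
    average_nce = (prior_nce + current_nce) / 2
    return bisect_right(QUINTILE_CUTS, average_nce) + 1


def growth_by_group(students):
    """Average (current - prior) NCE difference within each achievement group."""
    differences = defaultdict(list)
    for prior_nce, current_nce in students:
        group = mrm_achievement_group(prior_nce, current_nce)
        differences[group].append(current_nce - prior_nce)
    return {group: mean(diffs) for group, diffs in sorted(differences.items())}


# Hypothetical (prior-year NCE, current-year NCE) pairs, not from a real report
students = [(30, 34), (31, 33), (45, 47), (60, 58), (80, 84)]
print("Cut points:", [round(cut, 1) for cut in QUINTILE_CUTS])
print("Growth by group:", growth_by_group(students))
```

A real Diagnostic Report also attaches a standard error to each group's growth, which this sketch does not compute.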

INTERPRETING DISTRICT AND SCHOOL DIAGNOSTIC REPORTS

Accessible to:

District Leaders School Leaders Teachers

Public

Growth is defined as the average difference between students' current year and prior year NCEs.

The OST Tested reports include all students who were tested at the school or district. Reporting that includes only those students who were accountable to that school or district is available under the OST Accountable test.


Report: School Diagnostic | Test: Ohio's State Tests (3-8) | School: Kettering Middle School (018911) | Subject: English Language Arts | District: Kettering City School District (044180) | Type: Accountable

Year: 2016 | Grade: 7th-Grade

Achievement Groups

| English Language Arts | 1 (Lowest) | 2 | 3 (Middle) | 4 | 5 (Highest) |
|---|---|---|---|---|---|
| Growth Standard | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |
| 2016 Growth | -3.4 | -0.5 | 0.8 | 3.7 | 7.4 |
| 2016 Standard Error | 3.1 | 1.4 | 1.2 | 1.4 | 1.3 |
| 2016 Number of Students | 25 | 57 | 59 | 75 | 84 |
| 2016 Percent of Students | 8.3 | 19.0 | 19.7 | 25.0 | 28.0 |
| Previous Years Growth | | | | | |
| Previous Years Standard Error | | | | | |
| Previous Years Number of Students | 0 | 0 | 0 | 0 | 0 |
| Previous Years Percent of Students | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |


INTERPRETING A DIAGNOSTIC REPORT

• The most recent year growth measure is represented by the vertical blue bar while the gold bars represent growth for up to three previous years when available.

• The growth standard is represented by a green, horizontal line.

• There is measurement error involved with any estimate, and this error is expressed in terms of the standard error. This measure represents the certainty of the growth measure, and helps determine if the growth measure is significantly different than the growth standard. It is dependent upon the quantity and quality of student-level data.

One standard error is represented by the solid black line on the graph (its value also appears in the table), and two standard errors are represented by the dotted black line.

If the one-standard-error line (solid black) lies entirely above the growth standard (green horizontal line), the group of students exceeded expectations. If the line crosses the growth standard, the group met expected growth. If it lies entirely below the growth standard, the group did not meet expectations.

If the bar (the growth measure) is at least two standard errors above the green line (the growth standard), the evidence of growth is even stronger.
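These reading rules translate directly into a small decision function. The Python sketch below uses hypothetical function and label names and applies the rules to the 2016 grade 7 English language arts Diagnostic Report for Kettering Middle School shown earlier, using only the growth and standard error values printed in that table.

```python
EXCEEDED_STRONG = "exceeded (2+ SE above)"
EXCEEDED = "exceeded"
MET = "met"
NOT_MET = "not met"


def read_against_growth_standard(growth, standard_error, growth_standard=0.0):
    """Classify a growth bar using its one- and two-standard-error bands."""
    if growth - standard_error > growth_standard:
        # The one-SE band lies entirely above the growth standard.
        if growth - 2 * standard_error >= growth_standard:
            return EXCEEDED_STRONG
        return EXCEEDED
    if growth + standard_error < growth_standard:
        # The one-SE band lies entirely below the growth standard.
        return NOT_MET
    # The one-SE band crosses (or touches) the growth standard.
    return MET


# 2016 grade 7 ELA, achievement groups 1 (lowest) to 5 (highest)
growth = [-3.4, -0.5, 0.8, 3.7, 7.4]
standard_error = [3.1, 1.4, 1.2, 1.4, 1.3]

results = [read_against_growth_standard(g, se) for g, se in zip(growth, standard_error)]
for group, result in enumerate(results, start=1):
    print(f"Achievement group {group}: {result}")
```

For this report, the lowest-achieving group falls below the growth standard while the two highest groups exceed it by at least two standard errors, the kind of pattern the Diagnostic Report is meant to surface even when overall results look positive.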


INTERPRETING DISTRICT AND SCHOOL 1-YEAR PROJECTION SUMMARIES

INVESTIGATING HOW STUDENTS ARE PROJECTED TO PERFORM ON ASSESSMENTS

The 1-Year Projection Summary Report shows how students in a district or school are projected to perform on a specific future assessment. Projections are based on students' historical testing performance, and the report includes students currently enrolled in the district.

1. Enrollment, Test, and Projection: Captures the population of students, the assessment the report is projecting, and the state percentile or performance level used for the projection.

2. Probability of Success: Probability range for achieving at the selected performance level.

Accessible to:

District Leaders School Leaders Teachers

Public

Report: District 1-Yr. (Single Grade) Projection Summary | Grade: 9th-Grade | District: Kettering City School District (044180) | Projection: OST EOC ELA I (Proficient)

Year: 2016

Enrolled 9th-Grade Projected to OST EOC ELA I (Proficient)

| Probability of Success | Number of Students | Percentage |
|---|---|---|
| On Target: 90% or greater | 245 | 41% |
| At Risk: 50% or greater, but 89.9% or less | 157 | 26% |
| Not Likely To Achieve Level: 49.9% or less | 170 | 28% |
| Students who lack sufficient data | 5 | 1% |
| Students at or above proficiency | 20 | 3% |

On target (90–100% probability): Students with a high likelihood of achieving the level; they should be provided with advanced enrichment activities and instruction.

At risk (50–89.9% probability): Students who are likely to achieve the level but are at risk; they should be a focus.

Not likely to achieve level (0–49.9% probability): Students who are not likely to achieve the level and need extensive intervention.

Students who do not have a projection due to a lack of sufficient data.

Students who tested at or above proficiency and therefore do not have a projection.


INTERPRETING A PROJECTION SUMMARY REPORT

• This report shows how currently enrolled grade 9 students are projected to perform, relative to the Proficient performance level, on the English Language Arts I End-of-Course (EOC) test.

• In this sample report, 245 students have a 90% or greater chance of achieving the Proficient performance level; 157 students have between a 50% and 89.9% chance; and 170 students have less than a 50% chance.

• Students must have at least three prior test scores across grades and subjects to receive a projection. Students who lack sufficient data may still be included in other reports on the SAS® EVAAS® site.

• In this sample report, there are 20 currently enrolled 9th graders who already took the English I EOC and who scored at or above proficiency.
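The probability bands and the percentage column can be reproduced with a few lines of Python. The sketch assumes probabilities are expressed as percentages and that "sufficient data" means at least three prior test scores, as described above; `projection_band` and the band labels are hypothetical names. The percentage column is consistent with dividing each count by all 597 students listed in the report.

```python
def projection_band(probability_pct, prior_score_count, already_proficient=False):
    """Place one student in a 1-Year Projection Summary category (sketch)."""
    if already_proficient:
        return "At or above proficiency"
    if prior_score_count < 3:
        return "Lacks sufficient data"
    if probability_pct >= 90:
        return "On Target"
    if probability_pct >= 50:
        return "At Risk"
    return "Not Likely To Achieve Level"


# Student counts from the sample District 1-Yr. Projection Summary
counts = {
    "On Target": 245,
    "At Risk": 157,
    "Not Likely To Achieve Level": 170,
    "Lacks sufficient data": 5,
    "At or above proficiency": 20,
}
total = sum(counts.values())
percentages = {band: round(100 * n / total) for band, n in counts.items()}

print(f"Total students: {total}")
for band, pct in percentages.items():
    print(f"{band}: {counts[band]} students ({pct}%)")
```

The rounded percentages sum to 99% rather than 100%, which is expected when each share is rounded independently.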

PROJECTION SUMMARY REPORTS CAN PROVIDE VALUABLE INFORMATION TO HELP LEADERS:

• Determine how to allocate resources for interventions, supports, and enrichment opportunities,

• Make course placement decisions,

• Make scheduling decisions,

• Plan school improvement programs, and

• Help teachers collaborate on instructional strategies for groups of students.

TIPS FOR USING PROJECTION SUMMARY REPORTS

• Filters can be applied by clicking the filter icon. Use this tool to view a projection report for a specific demographic group or any combination of race, gender, and demographic group.

• This particular report is populated in EVAAS® only for those districts that submitted current student enrollment data to SAS®. Please contact your district superintendent, data contact and/or Information Technology Center (ITC) with questions on data submission.


1A A1 and A2 teachers will see their State Value-Added Composite result on a 1–5 scale. Having a State Value-Added Composite result means that the teacher has a value-added growth measure for state tested areas.

1B Applicable B teachers will have a Vendor Value-Added Composite on a 1–5 scale. Having a Vendor Value-Added Composite result means that the teacher has a value-added growth measure for vendor-based assessments. This is an optional growth measure for districts and schools in Ohio.

A scale of 1–5 is used to represent the performance level associated with value-added composite measures.

| Level | Descriptor | Index | Interpretation |
|---|---|---|---|
| 5 | Most Effective | 2 or greater | Significant evidence of exceeding the growth standard (expectation) |
| 4 | Above Average | Equal to or greater than 1, but less than 2 | Moderate evidence of exceeding the growth standard (expectation) |
| 3 | Average | Equal to or greater than -1, but less than 1 | Evidence of meeting the growth standard (expectation) |
| 2 | Approaching Average | Equal to or greater than -2, but less than -1 | Moderate evidence of not meeting the growth standard (expectation) |
| 1 | Least Effective | Less than -2 | Growth is more than 2 standard errors below the growth standard (0) |
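The cut points in the table can be written as a lookup from index to performance level. This Python sketch uses hypothetical names; the thresholds are the ones listed in the table, with each lower bound inclusive.

```python
# (inclusive lower bound on the index, level, descriptor), checked top-down
LEVEL_CUTS = [
    (2.0, 5, "Most Effective"),
    (1.0, 4, "Above Average"),
    (-1.0, 3, "Average"),
    (-2.0, 2, "Approaching Average"),
]


def performance_level(index):
    """Map a value-added index to the 1-5 performance level (sketch)."""
    for lower_bound, level, descriptor in LEVEL_CUTS:
        if index >= lower_bound:
            return level, descriptor
    return 1, "Least Effective"  # index less than -2


# Indices from Sample Teacher Value-Added Report #1 and the composite example
for index in (-4.26, -1.03, 1.12, -2.08, 4.06):
    level, descriptor = performance_level(index)
    print(f"index {index:+.2f} -> level {level} ({descriptor})")
```

These results match the levels printed in the sample teacher report (for example, -2.08 for the multi-year trend maps to Least Effective). The same cut points also appear to line up with the letter grades on the district Accountability Value-Added Report (1.47 with a B, -1.30 with a D), although the guidebook does not state that mapping explicitly.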

Battelle for Kids is using visual representations of eTPES evaluation software from RANDA Solutions in this document for instructional purposes.

INTERPRETING eTPES AND TEACHER VALUE-ADDED REPORTS IN EVAAS®

CROSSWALKING TEACHER-LEVEL VALUE-ADDED REPORTS AND eTPES

1 View your Value-Added Composite result in eTPES (ohiotpes.com) in both the Student Growth Worksheet and the Ratings tab of the Summative Evaluation feature. Both views show similar information and can be exported to PDF.

Accessible to:

District Leaders School Leaders Teachers Public

TEACHER VALUE-ADDED SUMMARY

2 Visit ohiova.sas.com to review the Teacher Value-Added Summary Report, which is the landing page for teachers when they access their reports in the SAS® EVAAS® website. The summary report displays the growth measures, standard errors, index values, and levels for each grade/subject or course for which a teacher value-added report is available in the current year, as well as the teacher’s composite index and level.

2A The State Teacher Value-Added Composite is shown in both the chart and the table of the Teacher Value-Added Summary. It is the same result reported in eTPES. In EVAAS®, this composite result is shown as an index value (4.06) and a level (Most Effective). See the chart on page 11 for additional details on the value-added performance levels.

By clicking on the State Composite link in the table, the user can see details on which growth measures from state tested areas are included in the composite result.

2B The Teacher Value-Added Summary also includes the teacher's growth measure results for each grade/subject or course in the most recent year. These results are shown in both the chart and the table of the Teacher Value-Added Summary. The table includes the growth measure, standard error, and index value for each grade/subject result. This teacher has results for Ohio's State Tests (OST) Tested Math, Grade 6 and OST Tested Math, Grade 7 in the most recent year. Because the index is expressed in standard error units, it allows educators to make comparisons from year to year and subject to subject.

[Chart and table: OST Composite (2A); OST Tested Math, Grade 6 and OST Tested Math, Grade 7 (2B)]

LEADING TEACHERS THROUGH VALUE-ADDED REPORTS

All educators want to have a positive impact on student growth. The measures available in SAS® EVAAS® reporting are one source of evidence educators can use to evaluate their impact.

You can provide targeted support to teachers by helping them to:

• Identify successes and opportunities for growth

• Inform professional reflection

• Leverage best practices

• Develop professional growth plans

It is important to recognize how personal these data are to classroom educators. Approach collaborative conversations with sensitivity so that educators can engage candidly. Each teacher report will be unique, but the conversations follow the same steps, outlined below and highlighted on the sample teacher reports on the following pages.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

Encourage teachers to identify successes that they see in their value-added and diagnostic reports. If necessary, highlight teachers' successes.

Ask questions like:

• What successes do you notice in your value-added report?

• What are some possible contributing factors to these successes?

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

Explore the reports and ask teachers to identify opportunities for growth that are apparent in their reports.

Ask questions like:

• What opportunities for growth exist?

• What are some possible contributing factors to these opportunities for growth?

STEP 3: DEVELOP A PLAN

Assist teachers in completing an educator professional growth or improvement plan.

Ask questions like:

• How might you leverage your strengths to address your opportunities for growth?

• How might you collaborate with other teachers?

• What professional learning or resources are needed?

Teacher growth measures are from SAS® EVAAS® multivariate, longitudinal analyses using all available data for each student (up to 5 years). The multi-year trend incorporates the most recent student data, so the previous years' gains used in that trend may differ from those reported in previous years. For consistency, ODE has requested that the single-year growth measures in the chart and table match those reported in previous years.


Report: Teacher Value Added | Test: OAA | School: Boxer Elementary/Middle School | Subject: Mathematics | District: Big City School District | Type: Tested

Teacher: CARINA CUELLAR (523717) | Grade: 6th-Grade

Teacher Growth Measures and Standard Errors

| Year | Growth Measure | Standard Error | Index | Level |
|---|---|---|---|---|
| 2012 | -7.3 | 1.7 | -4.26 | Least Effective |
| 2013 | -1.7 | 1.6 | -1.03 | Approaching Average |
| 2014 | 2.4 | 2.1 | 1.12 | Above Average |
| Multi-Yr Trend | -2.2 | 1.1 | -2.08 | Least Effective |


Report: Teacher Diagnostic | Test: OAA | School: Boxer Elementary/Middle School | Subject: Mathematics | District: Big City School District | Type: Tested

Teacher: CARINA CUELLAR (523717) Grade: 6th-Grade

Achievement Groups

1 (Lowest) 2 (Middle) 3 (Highest)

Standard for Academic Growth 0.0 0.0 0.0

2014

Growth 4.2 7.9 -12.0

Standard Error 2.6 0.9 4.4

No. of Students 24 19 7

Percent of Students 48.0 38.0 14.0

2013

Growth -4.0 5.3 -5.7

Standard Error 2.1 2.3 2.2


SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #1: APPLY MY LEARNING

Use the sample value-added and diagnostic reports below to apply your learning by simulating a collaborative conversation with a teacher. Follow the steps on the next page.

15

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #1: APPLY MY LEARNING

Follow these steps to simulate how a teacher might respond to the questions provided. Suggested responses are on the following page. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

What are some possible contributing factors to these successes?

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

What are some possible contributing factors to these opportunities?

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

16

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #1: CHECK FOR UNDERSTANDING

Use the following suggested responses to check your understanding. It is important to remember to explore multiple contributing factors, including instructional and systemic factors. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

• Continued improvement in the growth measure and index each year from 2012 to 2014

• Exceeded the growth expectation in 2014

• Lowest- and middle-achieving students exceeded the growth expectation in 2014

What are some possible contributing factors to these successes?

• Strong content expertise

• Use of effective questioning strategies

• Small-group instruction for middle-achieving students

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

• Prior years and the multi-year trend did not meet expectations

• Highest-achieving students did not meet the growth expectation

What are some possible contributing factors to these opportunities?

• Instructional materials meet the needs of some, but not all, students

• Highest-achieving students did not participate in intervention/enrichment small groups

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

• Professional learning on differentiation

• Peer-to-peer observation with a teacher who is having success with high-achieving students

• Replicate the small-group, personalized instruction being done with middle-achieving students

17

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #2: APPLY MY LEARNING

Use the sample value-added and diagnostic reports below to apply your learning by simulating a collaborative conversation with a teacher. Follow the steps on the next page.


Report: Teacher Value Added | Test: OAA | School: Boxer Elementary/Middle School | Subject: Reading | District: Big City School District | Type: Tested

Teacher: AMOS BURLESON (145500) Grade: 8th-Grade

Teacher Growth Measures and Standard Errors

Year Growth Measure Standard Error Index Level

2012 3.5 1.9 1.79 Above Average

2013 4.6 3.1 1.48 Above Average

2014 2.5 1.9 1.33 Above Average

Multi-Yr Trend 3.5 1.3 2.64 Most Effective


Report: Teacher Diagnostic | Test: OAA | School: Boxer Elementary/Middle School | Subject: Reading | District: Big City School District | Type: Tested

Teacher: AMOS BURLESON (145500) Grade: 8th-Grade

Achievement Groups

1 (Lowest) 2 (Middle) 3 (Highest)

Standard for Academic Growth 0.0 0.0 0.0

2014

Growth 2.7 7.2 -0.2

Standard Error 3.9 1.2 2.0

No. of Students 19 21 18

Percent of Students 32.8 36.2 31.0

2013

Growth 24.8 8.1 -11.8

Standard Error 5.7 1.4 3.9


18

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #2: APPLY MY LEARNING

Follow these steps to simulate how a teacher might respond to the questions provided. Suggested responses are on the following page. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

What are some possible contributing factors to these successes?

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

What are some possible contributing factors to these opportunities?

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

19

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #2: CHECK FOR UNDERSTANDING

Use the following suggested responses to check your understanding. It is important to remember to explore multiple contributing factors, including instructional and systemic factors. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

• Exceeded the growth expectation for the past three years

• Exceeded or met the growth expectation with all achievement groups in 2014

• Improvement with high-achieving students in the most recent year

What are some possible contributing factors to these successes?

• Formative instructional practices used in the classroom

• Data-informed decisions used to provide targeted instruction

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

• Lowest-achieving students met, but did not exceed, expected growth in 2014, although they have historically exceeded expectations

What are some possible contributing factors to these opportunities?

• Change in instructional resources

• Use of support personnel

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

• Collaborate with colleagues to provide data-informed, targeted intervention

• Restructure time to include intervention and enrichment

20

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #3: APPLY MY LEARNING

Use the sample value-added and diagnostic reports below to apply your learning by simulating a collaborative conversation with a teacher. Follow the steps on the next page.


Report: Teacher Value Added | Test: OAA | School: Billygoat Elementary/Middle School | Subject: Reading | District: Big City School District | Type: Tested

Teacher: IMELDA SOLORZANO (453115) Grade: 5th-Grade

Teacher Growth Measures and Standard Errors

Year Growth Measure Standard Error Index Level

2012 3.1 1.8 1.71 Above Average

2013 -4.4 3.1 -1.45 Approaching Average

2014 -4.4 1.5 -2.82 Least Effective

Multi-Yr Trend -1.9 1.2 -1.54 Approaching Average


Report: Teacher Diagnostic | Test: OAA | School: Billygoat Elementary/Middle School | Subject: Reading | District: Big City School District | Type: Tested

Teacher: IMELDA SOLORZANO (453115) Grade: 5th-Grade

Achievement Groups

1 (Lowest) 2 (Middle) 3 (Highest)

Standard for Academic Growth 0.0 0.0 0.0

2014

Growth -0.1 -7.0 -10.8

Standard Error 2.9 1.3 2.9

No. of Students 8 23 8

Percent of Students 20.5 59.0 20.5

2013

Growth -13.2 -2.2 -6.1

Standard Error 4.4 4.0 3.5


21

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #3: APPLY MY LEARNING

Follow these steps to simulate how a teacher might respond to the questions provided. Suggested responses are on the following page. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

What are some possible contributing factors to these successes?

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

What are some possible contributing factors to these opportunities?

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

22

SAMPLE TEACHER VALUE-ADDED AND DIAGNOSTIC REPORTS #3: CHECK FOR UNDERSTANDING

Use the following suggested responses to check your understanding. It is important to remember to explore multiple contributing factors, including instructional and systemic factors. Use the resource on page 32 to view sample contributing factors.

STEP 1: IDENTIFY STRENGTHS AND BRAINSTORM CONTRIBUTING FACTORS

What successes do you notice in your value-added report?

• Exceeded the growth expectation in 2012

• Met growth expectations with lowest-achieving students in the current year

• Met growth expectations with middle-achieving students in the prior year

What are some possible contributing factors to these successes?

• Provided after-school intervention for lowest-achieving students

STEP 2: IDENTIFY OPPORTUNITIES FOR GROWTH AND BRAINSTORM CONTRIBUTING FACTORS

What opportunities for growth exist?

• Current-year growth measure did not meet the growth expectation

• Middle- and highest-achieving students did not meet the growth expectation in the most recent year

• Students, overall, did not grow as much as the average class in the state

What are some possible contributing factors to these opportunities?

• Length of class period

• Alignment of instructional materials to state standards

STEP 3: DEVELOP A PLAN

How might you leverage your strengths to address your opportunities for growth? How might you collaborate with other teachers? What professional learning or resources are needed?

• Provide time to work with a teacher-based team to analyze and use student-level data

• Professional learning on differentiation strategies

23

EVAAS® DISTRICT AND SCHOOL VALUE-ADDED REPORTS AT A GLANCE

The following reports are available on the SAS® EVAAS® website and support efficiency by summarizing large amounts of data at the district/school level. These reports reflect those most commonly used by district and school leaders.

DISTRICT VALUE-ADDED SUMMARY REPORT

Purpose: This report provides grade-level by grade-level comparisons of the progress rates of students in all the schools in the district.

Possible Questions:

• How do the gains for other schools in my district compare to the gains for my school?

• How can I leverage what is being done in other schools in my district to improve my school’s results?

DISTRICT DIAGNOSTIC SUMMARY REPORT

Purpose: This report provides a school-by-school comparison of the progress of all the achievement subgroups.

Possible Questions:

• Which schools are producing statistically significant growth with low-achieving students in a particular grade level and subject area?

• Which school is producing the most growth with high-achieving students?

SCHOOL VALUE-ADDED DECISION DASHBOARD

Purpose: This report depicts, on one page, the value-added growth categories for all Ohio’s State Tests (OST) subjects and grades tested in the school; multi-year average growth measures, when available; and diagnostic information, that is, the growth of students at different attainment levels.

Possible Questions:

• What growth patterns exist across grades, subjects, and student achievement levels at our school?

DISTRICT ONE-YEAR PROJECTION SUMMARY REPORT

Purpose: This report shows the probability that the current year’s students will score at or above the 50th percentile or the 80th percentile on state assessments. The table shows the number and percentage of students in each probability group, as well as the number and percentage of students with insufficient data for a projection. Students who tested with an Alternative Assessment are not included in the table or the pie chart.

Possible Questions:

• How are my students in grades 5 through 8 projected to perform in the future?

• How can I use the data to plan for targeted intervention or enrichment?

24

SCHOOL SEARCH

Purpose: This function enables district leaders to generate a list of schools that match the demographic profile of a selected school. Results of the School Search allow you to see a school’s ranking among a group of demographically similar schools.

Possible Questions:

• How does my school compare to other schools by grade level, cumulative gain, etc.?

• How does the growth in my school compare with the growth of other schools that are demographically similar?

SCATTERPLOT

Purpose: Scatterplots enable you to plot any public school(s) according to the criteria you select. You may choose the horizontal and vertical axes from several options, and limit the display by school, district, demographic categories, and year. Predefined graphs include Growth v. % Free/Reduced Lunch; Growth v. Performance Index; Achievement v. % Students w/ Disabilities; and more.

Possible Questions:

• How do our school’s growth and achievement results compare to those of similar schools?

• Is there a relationship between schools’ growth results and % of disadvantaged students?

TEACHING EFFECTIVENESS SUMMARY

Purpose: This summary indicates the number of teachers who fell into each effectiveness level by school, district, and state. Some teachers may be included multiple times because they teach multiple grade/subject combinations. The number in each level is hyperlinked to the Teacher Effectiveness Listing.

Possible Questions:

• How does the distribution of effectiveness levels compare across the school, district, and state?

• Is there a disproportionate number of teachers in a particular level?

• Who are the teachers in the least effective or below average categories?

• How can I use the data to build teacher effectiveness and improve student achievement and progress?

SCHOOL PERFORMANCE DIAGNOSTIC REPORT

Purpose: This report provides achievement subgroup comparisons of student progress organized by grade level and subject area. It also displays progress by Ohio’s State Tests (OST) performance levels. Educators can generate reports for user-selected subgroups.

Possible Questions:

• Which achievement subgroups are making statistically significant growth?

• Which achievement subgroups are struggling?

• Is this pattern true across grade levels and/or subjects?

25

INVESTIGATION #1: HOW MUCH GROWTH WAS PRODUCED AT EACH GRADE OR COURSE ACROSS THE DISTRICT OR SCHOOL

The District and School Value-Added Report summarizes the aggregate results and helps evaluate the overall effectiveness of programs. This report allows leaders to evaluate how a district or school has performed across years, from year to year, and across grade levels in a particular subject or course.

INTERPRETING A VALUE-ADDED REPORT

• The growth measure is a measure of progress relative to the growth of students in the average district/school in the state, called the growth standard.

• A positive growth measure indicates that students grew more than the average district/school statewide.

• A growth measure near 0 doesn’t indicate a lack of growth. Rather, it indicates that this group of students grew as much as the average district/school statewide.

• A negative growth measure doesn’t necessarily indicate that students lost information. Rather, it indicates that students didn’t grow as much as the average district/school statewide.

• Colors used in the table represent the gain index, which can be referenced on page 5.

INTERPRETING AND ANALYZING DISTRICT AND SCHOOL VALUE-ADDED REPORTS

Accessible to:

District Leaders, School Leaders, Teachers, Public

School Mean Gain (MRM) Math Value-Added Report

School Predicted Mean (URM) Science Value-Added Report

Please note that the OAA Tested reports include students who were tested at the school or district, not only those students who were accountable to that school or district. Reports including only accountable students are available under the OAA Accountable test.

The values in the table below are rounded for display purposes. Note that the gain is divided by the standard error to obtain a gain index, which is displayed on the local report card's Progress report. For this reason, the numbers on the two reports may be very different.

Achievement results and growth results must be used together to get a complete picture of student learning.

Ohio Department of Education

Copyright © 2017 SAS Institute Inc., Cary, NC, USA. All Rights Reserved.

Report: School Value-Added | Test: OAA | School: Barn Owl Upper Elementary School | Subject: Science | District: Big City School District | Type: Tested

Year: 2014

Subject: Science | Grade: 5

Year | No. of Students | Avg Score | Avg %-ile | Avg Predicted Score | Predicted Avg %-ile | Growth Measure (color) | Standard Error | School vs Ref Group Avg

2012 | 132 | 386.0 | 24 | 391.2 | 27 | -3.1 (O) | 2.7 | MidLo

2013 | 119 | 478.0 | 86 | 457.6 | 78 | 11.8 (DG) | 3.2 | Above

2014 | 124 | 430.9 | 62 | 440.1 | 68 | -5.4 (O) | 2.8 | MidLo

DG Significant evidence that the school's students made more progress than the Growth Standard

LG Moderate evidence that the school's students made more progress than the Growth Standard

Y Evidence that the school's students made progress similar to the Growth Standard

O Moderate evidence that the school's students made less progress than the Growth Standard

R Significant evidence that the school's students made less progress than the Growth Standard
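The color codes in this legend follow from the gain index, the growth measure divided by its standard error. The Python sketch below assumes cut points of ±1 and ±2 (2 and above DG, 1 up to 2 LG, -1 up to 1 Y, -2 up to -1 O, below -2 R); the exact boundary handling and the function names are illustrative assumptions, so check them against the color key on page 5. Applied to the grade 5 science rows above, it reproduces the O, DG, O codes shown in the report.

```python
# Sketch: derive a value-added color code from the gain index.
# ASSUMPTION: cut points at +/-1 and +/-2; boundary handling is illustrative.

MEANINGS = {
    "DG": "significant evidence of more progress than the Growth Standard",
    "LG": "moderate evidence of more progress than the Growth Standard",
    "Y": "evidence of progress similar to the Growth Standard",
    "O": "moderate evidence of less progress than the Growth Standard",
    "R": "significant evidence of less progress than the Growth Standard",
}


def gain_index(growth_measure: float, standard_error: float) -> float:
    """Divide the growth measure by its standard error."""
    if standard_error <= 0:
        raise ValueError("standard error must be positive")
    return growth_measure / standard_error


def color_code(index: float) -> str:
    """Map a gain index to the report's color code."""
    if index >= 2.0:
        return "DG"
    if index >= 1.0:
        return "LG"
    if index >= -1.0:
        return "Y"
    if index >= -2.0:
        return "O"
    return "R"


# Grade 5 science rows from the sample school report: year -> (growth measure, standard error)
science_grade5 = {2012: (-3.1, 2.7), 2013: (11.8, 3.2), 2014: (-5.4, 2.8)}

for year, (growth, std_err) in science_grade5.items():
    index = gain_index(growth, std_err)
    code = color_code(index)
    print(f"{year}: gain index {index:.2f} -> {code} ({MEANINGS[code]})")
```

The same arithmetic explains the light green grade 6 math measure in the check-for-understanding answers: 3.3 / 2.0 = 1.65, which falls in the LG band under these assumed cut points.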

TIPS FOR USING DISTRICT- AND SCHOOL-LEVEL VALUE-ADDED REPORTS

Clicking on the underlined numbers and text in a report gives you access to additional reports that help strengthen the analysis phase. Clicking on an underlined growth measure in the district- or school-level value-added report takes you to the diagnostic report for that particular grade and subject.

26

APPLY YOUR LEARNING: INTERPRETING AND ANALYZING SCHOOL VALUE-ADDED REPORTS

1 Which grade level has the highest mean achievement in math for 2014? The lowest?

2 Which grade(s) and subject(s) reached or exceeded the expected level of growth in 2013?

3 Why is the 2014 grade six math growth measure colored light green?

4 By analyzing value-added reports, educators can prioritize the areas of strength and opportunities for growth that can be addressed at the classroom level. What are some strengths that you notice on this value-added report?

5 What are some opportunities for growth?

6 What might be contributing factors for this school’s strengths and opportunities to grow? See page 32 to view sample contributing factors.

27

CHECK FOR UNDERSTANDING: INTERPRETING AND ANALYZING SCHOOL VALUE-ADDED REPORTS

1 Which grade level has the highest mean achievement in math for 2014? The lowest?

2 Which grade(s) and subject(s) reached or exceeded the expected level of growth in 2013?

3 Why is the 2014 6th grade math growth measure colored light green?

4 By analyzing value-added reports, educators can prioritize the areas of strength and opportunities for growth that can be addressed at the classroom level. What are some strengths that you notice on this Value-Added Report?

5 What are some opportunities for growth?

6 While it might be difficult to analyze another school’s reports, what might be some contributing factors for this school’s strengths and opportunities to grow? Use page 32 to view a sample analysis.

The highest mean achievement in 2014 was in grade 5, with an average NCE of 60.5. Grade 6 had the lowest mean achievement, with an NCE of 41.3.

In 2013, grade 5 was the only grade to reach or exceed the expected level of growth. This is indicated by a growth measure equal to or greater than 0 in both the MRM and URM reports.

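The colors used in the value-added report represent the gain index, not the growth measure. While the growth measure is 3.3, the gain index is 1.65. The gain index is found by dividing the growth measure (3.3) by the standard error (2.0). A gain index between 1 and 2 indicates moderate evidence that students made more progress than the growth standard, which is shown in light green.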

The growth measure in the most recent year for grade 4 and grade 6 in math exceeded the growth standard. Grade 5 has historically exceeded the growth standard and the three-year average indicates that overall, they are exceeding the growth standard. The 2013 growth measure in grade 5 is another area of celebration since there is significant evidence that this cohort of students grew more than they were expected to. This is also evident in comparing their average predicted scaled score to the actual average scaled score.

The 3-year average growth measure for math in grades 4 and 6 as well as the most recent year growth measure in grade 5 science are opportunities for growth.

The leadership team of this school may have system-level meetings that are data oriented, which could contribute to particular strengths. However, teacher teams may not have the opportunity to regularly examine data, which could be contributing to the opportunities for growth. This school may also have deep content expertise in some teachers, but not all.

28

INVESTIGATION #2: WHICH STUDENTS BENEFITED MOST (AND LEAST) FROM THE INSTRUCTION IN YOUR DISTRICT/SCHOOL?

The Diagnostic Report allows you to identify patterns of progress across different achievement levels. Even though a district or school may have positive value-added results, it is possible that the needs of all students are not being met.

INTERPRETING A DIAGNOSTIC REPORT

• The current year’s growth measure is represented by the vertical blue bar, while the gold bars represent growth for up to three previous years.

• The growth standard is represented by a green, horizontal line.

• There is measurement error involved with any estimate, and this error is expressed in terms of the standard error. The standard error indicates how precise the growth measure is and helps determine whether the growth measure is significantly different from the growth standard. It depends on the quantity and quality of student-level data.

If the one-standard-error line is entirely above the growth standard (the green horizontal line), the group of students exceeded expectations. If the line crosses the growth standard, the group of students met expected growth, and if the line is entirely below the growth standard, the group of students did not meet expectations.

In recent years, the District and School Diagnostic Report has included both a one- and a two-standard-error line. If the bar (the growth measure) is at least two standard errors above the green line (the growth standard), the evidence of growth is even stronger.
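The one- and two-standard-error reading rule can be written as a short check. This Python sketch assumes the growth standard is 0.0, as on the sample diagnostic reports, and treats a one-standard-error band that only touches the standard as "met"; the function name and that tie-breaking choice are illustrative assumptions. Applied to Carina Cuellar's 2014 groups, it gives the same reading as the check-for-understanding answers: lowest and middle groups exceeded, highest group did not meet.

```python
# Sketch of the one- and two-standard-error reading rule for diagnostic reports.
# ASSUMPTION: a band that merely touches the growth standard counts as "met".


def compare_to_standard(growth: float, std_err: float, standard: float = 0.0):
    """Return (verdict, at_least_two_se_above) for one achievement group."""
    lower, upper = growth - std_err, growth + std_err
    if lower > standard:
        verdict = "exceeded"        # one-SE band entirely above the standard
    elif upper < standard:
        verdict = "did not meet"    # one-SE band entirely below the standard
    else:
        verdict = "met"             # one-SE band crosses the standard
    # Stronger evidence of growth when the bar is at least two SEs above the line.
    two_se_above = growth - standard >= 2 * std_err
    return verdict, two_se_above


# Carina Cuellar, 2014: (group, growth measure, standard error)
groups_2014 = [("1 (Lowest)", 4.2, 2.6), ("2 (Middle)", 7.9, 0.9), ("3 (Highest)", -12.0, 4.4)]

for name, growth, std_err in groups_2014:
    verdict, strong = compare_to_standard(growth, std_err)
    note = " (at least 2 SE above)" if strong else ""
    print(f"{name}: {verdict}{note}")
```

The same check on Amos Burleson's 2014 groups returns "met" for the lowest and highest groups and "exceeded" for the middle group, matching the claim that he met or exceeded the expectation with every group that year.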

INTERPRETING AND ANALYZING DISTRICT AND SCHOOL DIAGNOSTIC REPORTS

Accessible to:

District Leaders School Leaders Teachers

Public

29

APPLY YOUR LEARNING: INTERPRETING AND ANALYZING DISTRICT AND SCHOOL DIAGNOSTIC REPORTS

1 What was the growth measure for the most recent year for the lowest quintile?

2 What percent of students were in the highest-achieving quintile in previous years?

3 Which students benefitted most (and least) from the math instruction in the most recent year?

4 What trends emerge in the growth measures over time?

5 What are the greatest areas of strength and opportunities for growth in terms of student groups?

6 What might be contributing factors for this school’s strengths and opportunities to grow? Use page 32 to view sample contributing factors.

30

CHECK FOR UNDERSTANDING: INTERPRETING AND ANALYZING DISTRICT AND SCHOOL DIAGNOSTIC REPORTS

1 What was the growth measure for the most recent year for the lowest quintile?

2 What percent of students were in the highest-achieving quintile in previous years?

3 Which students benefitted most (and least) from the math instruction in the most recent year?

4 What trends emerge in the growth measures over time?

5 What are the greatest areas of strength and opportunities for growth in terms of student groups?

6 What might be contributing factors for this school’s strengths and opportunities to grow? Use page 32 to view sample contributing factors.

For the most recent year, -8.5 was the growth measure for the lowest quintile.

In previous years, 27.5 percent of students were in the highest-achieving quintile.

The second, third, and fourth quintiles benefited most, and the first and fifth benefited least, from the instruction in the most recent year. The second, third, and fourth quintiles have positive growth measures, and their standard error lines are entirely above the growth standard. This means that these groups of students grew more than expected, while the first and fifth quintiles grew less than expected because their growth measures are negative and their standard error lines do not cross the growth standard line.

The second, third, and fourth quintiles (students achieving in the 21–79 percentile) exceeded expectations in the current and previous three years, on average. Students achieving in the 0–20 percentile and 80–100 percentile consistently grow less than is expected.

The middle- or average-achieving students seem to be an area of strength while student achievement groups at the tails of the distribution are an opportunity for growth.

While the curriculum may be aligned to state standards in this grade/subject, it is not adequately meeting the needs of all students, specifically high- and low-achieving students. This school might be providing support to students achieving in the middle three quintiles through the use of tutors or paraprofessionals and could replicate that practice with all achievement groups.

31

TIPS FOR USING DISTRICT- AND SCHOOL-LEVEL DIAGNOSTIC REPORTS

The following patterns describe some trends you might find on diagnostic reports.

Learning experiences benefit all students similarly in a Flat Pattern.

Learning experiences benefit higher-achieving students more than their lower-achieving peers in the Upward Shed while the Downward Shed shows lower-achieving students benefitting more than higher-achieving peers.

Figure: sample Upward Shed, Teepee, Downward Shed, Reverse Teepee, and Flat patterns

Learning experiences benefit middle-achieving students more than their lower- and higher-achieving peers in the Teepee Pattern, while the Reverse Teepee shows higher- and lower-achieving students benefiting more than their middle-achieving peers.

The following gain patterns describe the trends shown on the diagnostic reports. Use the blue bars for this exercise.

Identifying Gain Patterns in School Diagnostic Reports

Copyright, 2009. Battelle for Kids. All rights reserved.

Upward Shed Pattern: School learning experiences benefit higher-achieving students more than their lower-achieving peers.

Downward Shed Pattern: School learning experiences benefit lower-achieving students more than their higher-achieving peers.

Teepee Pattern: School learning experiences benefit middle-achieving students more than their lower- and higher-achieving peers.

Reverse Teepee Pattern: School learning experiences benefit middle-achieving students less than their lower- and higher-achieving peers.



Flat Pattern: School learning experiences benefit students of all achievement levels similarly.

Random Pattern: No discernible pattern exists that relates achievement levels to gains.
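The guide identifies these patterns by eye from the bars. As a rough aid for talking through the definitions above, here is a hypothetical rule-of-thumb classifier in Python. It is not an EVAAS feature: the `tolerance` threshold, the function name, and the sample values are assumptions, and the sketch ignores standard errors, so a bar-by-bar check against the growth standard should still come first.

```python
from statistics import mean
from typing import Sequence


def gain_pattern(growth: Sequence[float], tolerance: float = 0.5) -> str:
    """Suggest a gain-pattern label for growth measures ordered from the
    lowest-achieving group to the highest-achieving group.

    Hypothetical heuristic: `tolerance` is an assumed threshold below which
    differences are treated as noise; it is not an EVAAS setting.
    """
    if len(growth) < 3:
        raise ValueError("need at least three achievement groups")
    lowest, highest = growth[0], growth[-1]
    middle = mean(growth[1:-1])  # average of the middle achievement groups
    steps = [b - a for a, b in zip(growth, growth[1:])]  # group-to-group changes

    if max(growth) - min(growth) <= tolerance:
        return "Flat"  # all groups grew about the same amount
    if all(s >= -tolerance for s in steps) and highest - lowest > tolerance:
        return "Upward Shed"  # growth rises with achievement level
    if all(s <= tolerance for s in steps) and lowest - highest > tolerance:
        return "Downward Shed"  # growth falls as achievement level rises
    if middle > max(lowest, highest) + tolerance:
        return "Teepee"  # middle groups grew most
    if middle < min(lowest, highest) - tolerance:
        return "Reverse Teepee"  # middle groups grew least
    return "Random"  # no clear relationship with achievement level


if __name__ == "__main__":
    # Hypothetical quintile growth measures (lowest-achieving quintile first).
    print(gain_pattern([-2.0, 1.5, 2.0, 1.2, -1.8]))  # Teepee
```

The shed checks run before the teepee checks so that a steadily rising or falling set of bars is not mislabeled because its middle happens to sit above or below one end.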



PREPARING TO ANALYZE SAS® EVAAS® REPORTS

It is important to uncover factors that produce particular strengths and areas for growth in academic outcomes. The primary reason for uncovering contributing factors is that they provide a significant lever for improving instructional practice and accelerating student learning. If something is working and educators know why, practices can be maintained and protected. If something is not working and educators know why, resources needed to discover a solution can be identified.

Because this guidebook is aimed at improving instructional practice that impacts student results at a district or school level, it is important to prioritize the areas of strength and opportunities for growth that can be addressed by educators at the district or school level. When identifying factors, focus your reflection on questions such as:

• What educational opportunities were available for students?
• Was data used to inform decisions?
• Were there changes in the curriculum?
• Were there systemic changes?
• What professional learning opportunities were available?

Sample contributing factors are provided below. This list is not all-inclusive; rather, it provides several examples that might represent assessment, professional learning, curriculum, and systemic factors.

IDENTIFYING CONTRIBUTING FACTORS RESOURCE

Strengths
• Instruction is differentiated to meet student needs
• Staff owns responsibility for success of all students
• Principal initiates and supports instructional improvement
• Professional development is differentiated and linked to improvement goals
• Principal structures time for teachers to work together
• Instructional materials aligned to state standards
• Solid learning progressions across grade levels
• System-level meetings are data and results oriented
• Resources aligned with improvement goals and strategies
• Parents, students, and community enlisted as education partners
• Teachers have deep content expertise
• Staff builds deep relationships with students
• Educators collaborate to improve instruction
• Staff examines data and sets goals
• Regular use of descriptive feedback
• School-wide intervention plan in place

Opportunities for Growth
• Curriculum has redundancies and/or gaps
• Leaders unclear on how to use data to improve instruction
• Staff does not build deep relationships with students
• Joint planning time is not focused on improved instruction
• Practices of highly effective teachers are not leveraged
• Curriculum misaligned to state standards
• Insufficient access to rigor for all students
• Educators unclear how to use data to inform instruction
• Classrooms are ineffectively managed
• Instruction is not engaging to all students
• Educators do not collaborate
• System-wide meetings confuse activity with results
• Resources poorly aligned with improvement goals and strategies
• Teachers are not working in their content area
• Data is not used to inform instruction
• Learning goals are not clearly articulated
• Little use of formative instructional practices


Here are guiding questions you can use to reflect on and analyze your value-added reports.

• Is our district/school meeting the needs of all students?

• What trends exist across groups? Across years?

• Which groups of students are meeting the growth expectation? Which groups are exceeding the growth expectation? Which groups are not meeting the growth expectation?

• What does the data suggest about the curriculum and instruction?

• How is our district/school challenging all students regardless of achievement group?

• What additional data supports strengths and opportunities for growth?

• How can strengths be leveraged to improve instructional practices and accelerate student learning across the district/school?


CHECKLIST FOR EFFECTIVE USE OF DATA

Use the following checklist to guide your planning as you prepare to engage your staff with value-added data.

Review district/school value-added data

Prepare talking points to share with staff (include additional data sources)

Build awareness and understanding of the value-added measures with staff

Allow time for individual reflection on teacher reports

Meet with teachers to support professional growth planning

Monitor growth plan implementation and adjust as needed