LLR_PengWang


7/29/2019 LLR_PengWang


Estimating Long Run Risk: A Panel Data Approach

Peng Wang

Hong Kong University of Science and Technology

December, 2010

Abstract

The long-run risk (LLR) model of Bansal and Yaron (2004) provides a building block for resolving the equity premium puzzle, among many other puzzles. However, in their calibration exercise the long-run risk component of consumption is rather small and difficult to detect using only a few macroeconomic series, and its very small signal-to-noise ratio makes it hard for traditional small-scale models to estimate. For the LLR argument to work in practice, the representative consumer must be able to make inference about the long-run risk from the available information set. The original paper of Bansal and Yaron (2004) assumes that the representative agent observes the long-run risk, so that the agent's consumption plan becomes a function of the underlying long-run risk. This paper instead assumes that the agent is endowed with a rich information set of dividend-price ratios, from which the consumer may learn the long-run risk. I then propose a panel data approach under which the small risk component of consumption can be accurately estimated, lending support to the presence of long-run risk. Based on the factor representation of cross-sectional asset price-dividend ratios implied by the theoretical model, a two-stage factor-augmented maximum likelihood estimator provides more robust estimation results than existing small-scale estimation methods. In the first stage, principal components estimators offer precise estimates of the space spanned by the state variables, namely the long-run component of consumption and the stochastic volatility. In the second stage, maximum likelihood estimation is carried out to recover the true state variables and the structural parameters. The method used in this paper has far-reaching implications for a more general class of dynamic general equilibrium models in which the estimation of the state variables is of direct relevance.

Department of Economics, Hong Kong University of Science and Technology, Clear Water Bay, Kowloon, Hong Kong. Tel: +852-23587630. Fax: +852-23582084. Email: [email protected]


1 Introduction

This paper concerns an application of large dimensional factor models to the analysis of dynamic stochastic economic models. Specifically, I consider the analysis of structural dynamic factor models in which both the cross-sectional dimension and the time dimension tend to infinity, the so-called large-$(N, T)$ framework. The economic model I consider is the long-run risk (LLR) model of Bansal and Yaron (2004). The LLR model is of particular interest because it motivates the use of large dimensional factor models to estimate economically important time series that are statistically difficult to measure using aggregate data.

In the LLR model, all economic variables of interest are functions of the long-run risk of consumption growth.¹ This component is very small in magnitude but crucial to resolving the equity premium puzzle. Traditional small-scale models find it hard to estimate this component because of the very small signal-to-noise ratio. Modern factor models, however, provide a way to estimate the long-run component consistently. The asymptotic properties of the estimators rest on double asymptotics in which both the cross-section dimension and the time dimension tend to infinity. This methodology allows one to make inference about the long-run component itself, in addition to its parametric dynamic process. The estimators in this paper are structural in the sense that the factor structure is implied by the model. Specifically, the logarithm of cross-sectional asset price-dividend ratios is approximately a linear function of the long-run component, which is the state variable of the economic model. If we treat the price-dividend ratios as measured with i.i.d. errors, principal components estimators of the long-run component offer consistent estimators of the state variables, which in turn help the estimation of deep parameters.

There is a large body of work that studies the implications of large factor models for economic analysis. Bernanke et al. (2004) proposed a factor-augmented VAR model to overcome the limited-information problem of conventional small VAR models and were able to resolve the price puzzle. Boivin and Giannoni (2006) studied DSGE models in a data-rich environment, using the implied factors to augment a state-space representation of the DSGE model and thereby overcome the measurement error problem. Other applications of factor models include Ng and Ludvigson (2007, 2009), who studied the risk-return tradeoff and bond risk premia; Ng and Moench (2010), who studied housing market dynamics; and Mumtaz and Surico (2009), who studied the transmission of international shocks. My work differs from all of the above in that I consider a factor-augmented maximum likelihood approach for a general likelihood function, not necessarily for a VAR or a linear state-space system. A two-step method will be shown to be parsimonious yet effective.

¹ This is indeed a common feature of DSGE models for which linearized or log-linearized solutions are available.

2 Modeling the consumption growth

Bansal and Yaron's (2004) model setup centers on two key assumptions. First, consumption growth contains a small stationary component that is highly persistent; they call this component the long-run risk of consumption growth. Second, the economy is characterized by a representative agent endowed with Epstein-Zin recursive preferences. With such preferences, the agent cares about consumption growth risk far into the future, in contrast to the case of additive utility functions. Although the consumption growth process is statistically indistinguishable from an i.i.d. process, the role of the predictable long-run component is amplified by the Epstein-Zin preferences, and its effect is reflected in asset prices.

Let $C_t$ be consumption at time $t$ and $\Delta c_t = \log(C_t) - \log(C_{t-1})$ be consumption growth, which is modeled as follows:

$$\begin{aligned}
\Delta c_{t+1} &= \mu_c + x_t + \sigma_t \eta_{t+1} \\
x_{t+1} &= \rho x_t + \varphi_e \sigma_t e_{t+1} \\
\sigma_{t+1}^2 - \bar{\sigma}^2 &= \nu(\sigma_t^2 - \bar{\sigma}^2) + \sigma_w w_{t+1}
\end{aligned}$$

where $x_t$ is a small but persistent component capturing the long-run risk in consumption growth, and $\sigma_t^2$ is the conditional volatility of consumption growth.

2.1 Representative agent's problem

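The representative agent has Epstein-Zin recursive preferences, characterized by

$$V_t = \left[(1-\delta)\, C_t^{\frac{1-\gamma}{\theta}} + \delta \left(E_t\left[V_{t+1}^{1-\gamma}\right]\right)^{\frac{1}{\theta}}\right]^{\frac{\theta}{1-\gamma}},$$

where $\delta$ is the time discount factor, $\gamma$ is the coefficient of relative risk aversion, $\theta \equiv \frac{1-\gamma}{1-1/\psi}$, and $\psi$ is the intertemporal elasticity of substitution (IES). The time-$t$ budget constraint is given by

$$W_{t+1} = (W_t - C_t)\, R_{c,t+1},$$

where $R_{c,t+1} \equiv \frac{P_{t+1} + C_{t+1}}{P_t}$ is the gross return on the consumption claim.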


The agent's problem at time $t$ is to maximize $V_t$ subject to the time-$t$ budget constraint, which results in the following Euler equation:

$$E_t(M_{t+1} R_{j,t+1}) = 1, \quad \text{or} \quad E_t\left(\exp(m_{t+1} + r_{j,t+1})\right) = 1,$$

with $m_t \equiv \log(M_t)$ and $r_{j,t} \equiv \log(R_{j,t})$. The log intertemporal marginal rate of substitution (IMRS) is given by

$$m_{t+1} = \theta \log\delta - \frac{\theta}{\psi}\Delta c_{t+1} + (\theta - 1)\, r_{c,t+1}.$$
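The log IMRS can be evaluated directly once $\theta$ is computed from $\gamma$ and $\psi$. The Python sketch below is illustrative only: the function names are hypothetical, and the preference values $\gamma = 10$, $\psi = 1.5$ are the Bansal and Yaron (2004) baseline, which this section does not list. It also checks the power-utility special case $\gamma = 1/\psi$, where $\theta = 1$ and the return term drops out.

```python
import math

def theta_from(gamma: float, psi: float) -> float:
    """theta = (1 - gamma) / (1 - 1/psi); undefined when psi == 1."""
    return (1.0 - gamma) / (1.0 - 1.0 / psi)

def log_imrs(dc_next: float, rc_next: float, delta: float, gamma: float, psi: float) -> float:
    """Log IMRS: m_{t+1} = theta*log(delta) - (theta/psi)*dc_{t+1} + (theta - 1)*r_{c,t+1}."""
    theta = theta_from(gamma, psi)
    return theta * math.log(delta) - (theta / psi) * dc_next + (theta - 1.0) * rc_next

# Bansal-Yaron (2004) baseline preferences (not listed in this section): gamma = 10, psi = 1.5
print(round(theta_from(10.0, 1.5), 6))   # -27.0

# Special case gamma = 1/psi: theta = 1, so m_{t+1} = log(delta) - gamma * dc_{t+1}
m = log_imrs(dc_next=0.01, rc_next=0.05, delta=0.99, gamma=2.0, psi=0.5)
print(abs(m - (math.log(0.99) - 2.0 * 0.01)) < 1e-12)   # True
```

A large negative $\theta$ is what lets the return on the consumption claim, and hence the long-run component, enter the pricing kernel with a large weight.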

2.2 Estimating the LLR: from the Agent's Perspective

The relevant state variables of this economy are $x_t$ and $\sigma_t^2$. For any dividend-paying asset $j$, the log price-dividend ratio is a linear function of the state variables,

$$z_{j,t} = A_{0,j} + A_{1,j} x_t + A_{2,j} \sigma_t^2,$$

where each $A_{i,j}$ is a function of the model parameters (Bansal and Yaron, 2004; BKY, 2007).

In Bansal and Yaron's (2004) economy, the long-run component $x_t$ and the stochastic volatility $\sigma_t^2$ are both in the agent's information set. The assumption that the agent observes not only consumption growth but also $x_t$ and $\sigma_t^2$ seems a rather strong one, because the latter two series are statistically difficult to detect. The rational expectations assumption equates the agent's belief about the hidden process with the objective process. I will show that if the agent can fully utilize the information in a large cross section of observed asset prices, $x_t$ and $\sigma_t^2$ can be treated as known. To fix ideas, I start from the agent's perspective and use a simple setup in which the parametrized dynamic process of consumption growth is known to the agent, but the historical time series of $x_t$ and $\sigma_t^2$ are unobserved. Thus the agent must draw inference about, and make predictions of, $x_t$ and $\sigma_t^2$ based on observables.

I will compare two scenarios, distinguished by the agent's information set. The small information scenario assumes that the agent uses only the information in aggregate series, namely consumption growth and the asset price index, to estimate the hidden states. The large information scenario assumes that the agent also incorporates information from disaggregate asset prices when estimating the hidden states. I will show that the latter, disaggregate approach is superior to the former, aggregate method in the sense that the hidden states can be precisely estimated.


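The consumption process is calibrated as in Bansal and Yaron (2004):

$$\begin{aligned}
\Delta c_{t+1} &= \mu_c + x_t + \sigma_t \eta_{t+1} \\
x_{t+1} &= \rho x_t + \varphi_e \sigma_t e_{t+1} \\
\sigma_{t+1}^2 - \bar{\sigma}^2 &= \nu(\sigma_t^2 - \bar{\sigma}^2) + \sigma_w w_{t+1}
\end{aligned}$$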

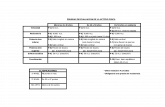

The error terms $\eta_t$, $e_t$, and $w_t$ are i.i.d. $N(0,1)$. The following table summarizes the parameter values.

Parameter   $\mu_c$   $\rho$   $\varphi_e$   $\bar{\sigma}$   $\nu$   $\sigma_w$
Value       0.0015    0.979    0.044         0.0078           0.987   $0.23 \times 10^{-5}$

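I simulate the time series $\{\Delta c_t, x_t, \sigma_t^2\}$ according to the calibrated model. Then I generate a panel of log dividend-price ratios according to

$$z_{j,t} = A_{0,j} + A_{1,j} x_t + A_{2,j} \sigma_t^2 + u_{j,t}, \quad j = 1, \ldots, 100, \quad t = 1, \ldots, 100,$$

where $N = 100$, $T = 100$, the $A_{i,j}$ are i.i.d. draws from $U(0,1)$, and $u_{j,t}$ is i.i.d. $N(0,1)$, serving as the measurement error. The aggregate index of the log dividend-price ratio, $z_{0,t}$, is formed as the sum of the cross-sectional average of $z_{j,t}$ and an i.i.d. $N(0, \bar{\sigma}^2)$ measurement error $u_{0,t}$:

$$z_{0,t} = \frac{1}{N}\sum_{j=1}^{N} z_{j,t} + u_{0,t} = \bar{A}_0 + \bar{A}_1 x_t + \bar{A}_2 \sigma_t^2 + \bar{u}_t + u_{0,t} \xrightarrow{p} \bar{A}_0 + \bar{A}_1 x_t + \bar{A}_2 \sigma_t^2 + u_{0,t},$$

where $\bar{A}_i = \frac{1}{N}\sum_{j} A_{i,j}$, $\bar{u}_t = \frac{1}{N}\sum_{j} u_{j,t}$, and the last step follows from $\bar{u}_t \xrightarrow{p} 0$ as $N \to \infty$.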
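The simulation design above can be sketched in Python with NumPy as follows. This is a minimal sketch under stated assumptions, not the author's code: the function names are hypothetical, the positivity floor on $\sigma_t^2$ is an addition (the linear variance process can in principle turn negative), and the standard deviation of the aggregate measurement error is left as the argument `aggregate_sd`, set to $\bar{\sigma}$ in the usage lines purely for illustration.

```python
import numpy as np

# Calibrated values from the parameter table above
MU_C, RHO, PHI_E, SIGMA_BAR, NU, SIGMA_W = 0.0015, 0.979, 0.044, 0.0078, 0.987, 0.23e-5

def simulate_states(T, rng):
    """Return dc (dc[t] is Delta c_{t+1}), x_t and sigma_t^2 for t = 0, ..., T-1."""
    x = np.zeros(T + 1)
    sig2 = np.full(T + 1, SIGMA_BAR**2)          # start at the unconditional variance
    dc = np.empty(T)
    for t in range(T):
        sig_t = np.sqrt(sig2[t])
        dc[t] = MU_C + x[t] + sig_t * rng.standard_normal()
        x[t + 1] = RHO * x[t] + PHI_E * sig_t * rng.standard_normal()
        s2 = SIGMA_BAR**2 + NU * (sig2[t] - SIGMA_BAR**2) + SIGMA_W * rng.standard_normal()
        sig2[t + 1] = max(s2, 1e-12)             # positivity floor: an assumption, not in the text
    return dc, x[:T], sig2[:T]

def simulate_panel(x, sig2, N, rng, aggregate_sd):
    """z_{j,t} = A_{0,j} + A_{1,j} x_t + A_{2,j} sigma_t^2 + u_{j,t}, plus the aggregate index z_{0,t}."""
    T = x.shape[0]
    A = rng.uniform(0.0, 1.0, size=(N, 3))       # loadings A_{i,j} ~ U(0, 1)
    u = rng.standard_normal((N, T))              # measurement errors u_{j,t} ~ N(0, 1)
    Z = A[:, [0]] + A[:, [1]] * x + A[:, [2]] * sig2 + u      # N x T panel
    z0 = Z.mean(axis=0) + aggregate_sd * rng.standard_normal(T)
    return Z, z0

rng = np.random.default_rng(0)
dc, x, sig2 = simulate_states(100, rng)
Z, z0 = simulate_panel(x, sig2, N=100, rng=rng, aggregate_sd=SIGMA_BAR)  # aggregate_sd: illustrative
```

The loop is written period by period to mirror the timing of the model; `dc[t]` depends on `x[t]` and `sig2[t]`, matching $\Delta c_{t+1} = \mu_c + x_t + \sigma_t\eta_{t+1}$.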

2.2.1 Estimating the hidden state variables

Given the available information, the agent looks at the state-space representation of the model and optimally updates his prediction of the hidden state variables using a filtering technique. A distinctive feature of the above model is its low signal-to-noise ratio: the conditional variances of $\Delta c_t$ and $z_{j,t}$ are much larger than those of $x_t$ and $\sigma_t^2$. It is thus very difficult to detect the hidden state processes effectively using only two observed aggregate series. In contrast, despite the high noise, the disaggregate approach can effectively detect the hidden state. To focus on the main idea, I first use a quasi-maximum likelihood method, treating $\sigma_t$ as a constant even though a time-varying $\sigma_t$ is used to generate the data. In this way, the agent is able to form a linear state-space system and use the Kalman filter to update his belief about the hidden long-run component $x_t$.² The following graph compares the estimates from the two information scenarios with the true long-run component.

[Figure: LLR component of consumption growth, t = 0 to 100 (vertical axis scaled by $10^{-3}$). Series: x, x1 (small info), x2 (large info).]

The following graph shows the magnitude of the hidden states relative to consumption growth.

[Figure: Consumption growth, t = 0 to 100 (vertical axis from -0.02 to 0.025). Series: $\Delta c_t$ and $\mu_c + x_t$.]

² In general, the state-space system is nonlinear because of the presence of $\sigma_t$. Instead of using a computationally intensive nonlinear filtering approach, I will consider a two-step factor-augmented maximum likelihood approach later.


Given the small magnitude of the long-run component, the conditional variance of observed consumption growth is largely due to the error term. It comes as no surprise that the small information approach yields unsatisfactory results. However, the large information approach is able to accumulate the signal from the disaggregate data and provides consistent estimates of the small long-run component. Although the agent is assumed to hold the false belief that $\sigma_t$ is constant, the disaggregate approach precisely estimates the entire time series of $x_t$. This is because $\sigma_t^2$ is a slow-moving series whose variance is negligible compared with those of $x_t$ and $\Delta c_t$.
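A minimal Kalman filter sketch of the small versus large information comparison under the constant-volatility (quasi-ML) approximation. It is a stylization, not the paper's exact setup: the state is $x_t$ alone, the small information set is a single noisy signal of $x_t$, the large information set is $N$ signals with known loadings drawn from $U(0,1)$, and the observation noise scale `sig_bar` is a hypothetical choice. The update is written in information form, which is valid for a scalar state and a diagonal noise covariance.

```python
import numpy as np

def kalman_filter_scalar(Y, c, H, r_diag, rho, q):
    """Filtered E[x_t | y_1, ..., y_t] for the linear Gaussian system
    x_{t+1} = rho * x_t + w_{t+1},  w ~ N(0, q)
    y_t     = c + H * x_t + v_t,     v ~ N(0, diag(r_diag))
    Y is (T, n); c, H and r_diag are length-n arrays."""
    T = Y.shape[0]
    x_pred, p_pred = 0.0, q / (1.0 - rho**2)     # stationary prior for x_0
    RinvH = H / r_diag                           # R^{-1} H for diagonal R
    x_filt = np.empty(T)
    for t in range(T):
        # Update step in information form (scalar state, diagonal R)
        p_upd = 1.0 / (1.0 / p_pred + H @ RinvH)
        x_upd = p_upd * (x_pred / p_pred + RinvH @ (Y[t] - c))
        x_filt[t] = x_upd
        # Prediction step
        x_pred = rho * x_upd
        p_pred = rho**2 * p_upd + q
    return x_filt

rng = np.random.default_rng(0)
T, N = 2000, 100
rho, sig_bar, phi_e = 0.979, 0.0078, 0.044
q = (phi_e * sig_bar) ** 2                       # state noise with sigma_t held at sig_bar
x = np.zeros(T)
for t in range(1, T):
    x[t] = rho * x[t - 1] + np.sqrt(q) * rng.standard_normal()

# Small information: one noisy aggregate signal of x_t (hypothetical noise scale sig_bar)
y_small = (x + sig_bar * rng.standard_normal(T))[:, None]
# Large information: N disaggregate signals, loadings A_j ~ U(0, 1), same hypothetical noise scale
A = rng.uniform(0.0, 1.0, N)
y_large = np.outer(x, A) + sig_bar * rng.standard_normal((T, N))

x_small = kalman_filter_scalar(y_small, np.zeros(1), np.ones(1), np.full(1, sig_bar**2), rho, q)
x_large = kalman_filter_scalar(y_large, np.zeros(N), A, np.full(N, sig_bar**2), rho, q)
mse_small = np.mean((x_small - x) ** 2)
mse_large = np.mean((x_large - x) ** 2)
print(mse_small, mse_large)
```

With these settings the large information filter should deliver a markedly smaller MSE than the small information one, in the spirit of the comparison in the text; the exact numbers depend on the hypothetical noise scale.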

2.2.2 Monte Carlo experiments

In this section, I conduct Monte Carlo experiments to demonstrate how the size of the information set affects the estimation of the hidden state variables. For the same data generating process, I consider different combinations of $N$ and $T$. I also consider different DGPs for the coefficients $A_{i,j}$ in the log dividend-price ratio equation. The following table summarizes the Monte Carlo average of the mean squared errors of $\hat{x}$ for each model design over $M = 200$ repetitions (Monte Carlo standard errors are in parentheses):

$$\text{MSE}(\hat{x}) = \frac{1}{MT}\sum_{m=1}^{M}\sum_{t=1}^{T}\left[\hat{x}_t^{(m)} - x_t^{(m)}\right]^2.$$

All values are reported in units of $10^{-5}$.

                  $A_{i,j} \sim N(0,1)$                  $A_{i,j} \sim U[0,1]$
  T     N     Small Info.       Large Info.       Small Info.       Large Info.
  50    5     0.3249 (0.4118)   0.0891 (0.0516)   0.3765 (0.4073)   0.1140 (0.0694)
        10    0.3071 (0.3849)   0.0657 (0.0353)   0.4372 (0.5409)   0.0990 (0.0629)
        50    0.1993 (0.1834)   0.0316 (0.0124)   0.4106 (0.4776)   0.0561 (0.0267)
        100   0.1805 (0.1726)   0.0212 (0.0065)   0.4495 (0.5318)   0.0397 (0.0160)
        200   0.1810 (0.1614)   0.0141 (0.0036)   0.4221 (0.4943)   0.0264 (0.0085)
  100   5     0.3813 (0.5106)   0.0917 (0.0411)   0.4857 (0.5282)   0.1135 (0.0532)
        10    0.3202 (0.3108)   0.0688 (0.0306)   0.5127 (0.6447)   0.1020 (0.0558)
        50    0.2466 (0.2204)   0.0325 (0.0091)   0.5343 (0.5896)   0.0547 (0.0159)
        100   0.2196 (0.1550)   0.0217 (0.0058)   0.4900 (0.6127)   0.0396 (0.0114)
        200   0.2140 (0.1504)   0.0137 (0.0029)   0.4347 (0.4599)   0.0276 (0.0068)
  200   5     0.4605 (0.5367)   0.0917 (0.0357)   0.4979 (0.5018)   0.1171 (0.0448)
        10    0.3183 (0.3523)   0.0697 (0.0228)   0.5520 (0.6837)   0.1051 (0.0394)
        50    0.2681 (0.1714)   0.0317 (0.0075)   0.5392 (0.5827)   0.0543 (0.0131)
        100   0.2308 (0.1143)   0.0212 (0.0039)   0.5279 (0.5549)   0.0398 (0.0090)
        200   0.2486 (0.1506)   0.0140 (0.0022)   0.4775 (0.4726)   0.0265 (0.0045)


To summarize, it comes as no surprise that the large information approach uniformly outperforms the small information method. I now focus on how the size of the information set affects estimation precision under the large information approach. For fixed $N$, increasing the time dimension $T$ is not necessarily accompanied by a decline in the mean squared errors. On the other hand, for a given $T$, a larger cross-sectional dimension always helps the estimation of the hidden long-run component. These properties hold for both DGPs of the loading coefficients $A_{i,j}$.
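The MSE criterion used in the tables can be computed as below, assuming the $M$ Monte Carlo paths of $\hat{x}$ and $x$ are stored as $M\times T$ arrays. `mc_mse` is a hypothetical helper name, and dividing by $10^{-5}$ reproduces the reporting unit of the tables.

```python
import numpy as np

def mc_mse(x_hat, x_true, unit=1e-5):
    """MSE = (1/(M*T)) * sum_m sum_t (x_hat[m, t] - x_true[m, t])**2, expressed in `unit`."""
    x_hat = np.asarray(x_hat, dtype=float)
    x_true = np.asarray(x_true, dtype=float)
    if x_hat.shape != x_true.shape:
        raise ValueError("x_hat and x_true must both be M x T arrays")
    return np.mean((x_hat - x_true) ** 2) / unit

# Two replications (M = 2) of two periods (T = 2)
print(mc_mse([[0.001, 0.002], [0.0, 0.0]], [[0.0, 0.0], [0.0, 0.001]]))
```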

3 Estimation: from the econometrician's perspective

The previous exercise focuses on state estimation from the representative agent's perspective, where all model parameters are treated as known. Such an estimation exercise can be viewed as the learning process of the agent, who updates his belief about the hidden state variables using the available information set.

The following part looks at the state estimation problem from an econometrician's perspective, where all model parameters have to be estimated and the parameter estimates are then used to form estimates of the hidden states.

3.1 GMM/SMM estimation

Exploiting moment conditions implied by the Euler equation, Bansal, Kiku, and Yaron (BKY, 2007) propose a simulated method of moments (SMM) procedure based on the GMM principle. The Euler equation for an asset with return $r_{j,t}$ is given by

$$E_t\left\{\exp\left[m_{t+1} + r_{j,t+1}\right]\right\} = 1,$$

where the log IMRS is given by

$$m_{t+1} = \theta\log\delta - \frac{\theta}{\psi}\Delta c_{t+1} + (\theta - 1)\, r_{c,t+1}.$$

Crucial to the construction of the moment conditions is constructing estimates of the consumption return process $r_{c,t+1}$.

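Using a log-linear approximation for $\log\left(\frac{P_{t+1}}{C_{t+1}} + 1\right)$, BKY approximate

$$r_{c,t+1} = \log\left(\frac{P_{t+1}}{C_{t+1}} + 1\right) + \Delta c_{t+1} - z_t$$

by

$$r_{c,t+1} \approx k_0 + k_1 z_{t+1} + \Delta c_{t+1} - z_t,$$

where $z_t \equiv \log(P_t/C_t)$ is the log price-dividend ratio of the consumption claim, $k_1 = \frac{\exp(\bar{z})}{1+\exp(\bar{z})}$, and $k_0 = \log(1+\exp(\bar{z})) - k_1\bar{z}$, with $\bar{z} = E(z_t)$. The log price-dividend ratio is a linear function of the state variables,

$$z_t = A_{0,c} + A_{1,c}\, x_t + A_{2,c}\, \sigma_t^2,$$

where each $A_{i,c}$ is a function of the model parameters. Let $A = [A_{0,c}\;\, A_{1,c}\;\, A_{2,c}] = A(\bar{z})$ and $Y_t = [1\;\, x_t\;\, \sigma_t^2]'$. Then $\bar{z}$ solves the fixed-point problem

$$\bar{z} = A(\bar{z})\,\bar{Y}, \quad \text{where } \bar{Y} \equiv E(Y_t).$$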
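The log-linearization constants depend only on $\bar{z}$. This Python sketch computes $k_0$ and $k_1$ in the Campbell-Shiller style used above and checks the approximation error, which is zero at $\bar{z}$ and, because $\log(1+e^z)$ is convex, nonnegative elsewhere. The value $\bar{z} = \log 300$ is hypothetical, and the sketch does not attempt the fixed point $\bar{z} = A(\bar{z})\bar{Y}$, since the mapping $A(\cdot)$ is not spelled out here.

```python
import numpy as np

def loglin_constants(z_bar):
    """k1 = e^z / (1 + e^z) and k0 = log(1 + e^z) - k1 * z, evaluated at z = z_bar."""
    k1 = np.exp(z_bar) / (1.0 + np.exp(z_bar))
    k0 = np.log1p(np.exp(z_bar)) - k1 * z_bar
    return k0, k1

def approx_error(z, z_bar):
    """Exact log(exp(z) + 1) minus its first-order approximation k0 + k1 * z around z_bar."""
    k0, k1 = loglin_constants(z_bar)
    return np.log1p(np.exp(z)) - (k0 + k1 * z)

z_bar = np.log(300.0)                            # hypothetical mean log price-consumption ratio
k0, k1 = loglin_constants(z_bar)
err = approx_error(z_bar + np.array([-0.2, 0.0, 0.2]), z_bar)
print(k0, k1, err)
```

Because $k_1$ is close to one for realistic $\bar{z}$, the approximation error grows only with the square of the deviation $z_t - \bar{z}$, scaled by $k_1(1-k_1)/2$.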

To complete the construction of $r_{c,t+1}$, one needs to recover the time series of the state variables $x_t$ and $\sigma_t^2$, and hence the time series of $z_t$. BKY (2007) apply a two-step method. In the first step, $x_t$ is identified by regressing consumption growth on the risk-free rate and the market price-dividend ratio,

$$\Delta c_{t+1} = b_x' Y_t + \sigma_t\eta_{t+1}, \quad \text{with } Y_t = [1,\; z_{m,t},\; r_{f,t}]'.$$

In the second step, the conditional volatility $\sigma_t^2$ is obtained by regressing the squared consumption residual on the same set of observables,

$$\sigma_t^2 \equiv E_t\left[(\Delta c_{t+1} - b_x' Y_t)^2\right] = b_\sigma' Y_t.$$

Once the consumption return process is constructed, we are able to formulate the pricing kernel $m_{t+1}$ for GMM estimation. Because no closed-form solution is available, BKY (2007) estimate the model with a computationally intensive simulation method, the simulated method of moments.
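A sketch of the BKY (2007) two-step regressions as described above, using ordinary least squares via `numpy.linalg.lstsq`. The function name is hypothetical, and the data-generating process in the usage lines (constant volatility, linear dependence on $z_{m,t}$ and $r_{f,t}$) is made up solely to show that both regressions recover known coefficients.

```python
import numpy as np

def bky_two_step(dc_next, zm, rf):
    """Step 1: OLS of dc_{t+1} on Y_t = [1, z_{m,t}, r_{f,t}] gives x_hat_t = b_x'Y_t.
    Step 2: OLS of the squared step-1 residual on Y_t gives sigma2_hat_t = b_sigma'Y_t."""
    Y = np.column_stack([np.ones_like(zm), zm, rf])
    b_x, *_ = np.linalg.lstsq(Y, dc_next, rcond=None)
    resid_sq = (dc_next - Y @ b_x) ** 2
    b_sigma, *_ = np.linalg.lstsq(Y, resid_sq, rcond=None)
    return Y @ b_x, Y @ b_sigma, b_x, b_sigma

# Hypothetical DGP: constant volatility 0.0078, linear predictability
rng = np.random.default_rng(0)
T = 5000
zm, rf = rng.standard_normal(T), rng.standard_normal(T)
dc_next = 0.0015 + 0.001 * zm - 0.0005 * rf + 0.0078 * rng.standard_normal(T)
x_hat, sig2_hat, b_x, b_sigma = bky_two_step(dc_next, zm, rf)
print(b_x, b_sigma)
```

Note that nothing in the second-step projection forces the fitted $\hat{\sigma}_t^2$ to be positive; with a constant-volatility DGP the slope coefficients in `b_sigma` should simply be close to zero.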

The method proposed in this paper avoids estimating the consumption return process, which requires numerically solving a fixed-point problem. Instead of looking at model restrictions on asset returns, I emphasize the importance of the linear approximate solution for log dividend-price ratios.

3.2 Estimate state variables using cross-section P/D data

Let $z_{j,t} = A_{0,j} + A_{1,j}x_t + A_{2,j}\sigma_t^2 + e_{j,t}$, where $e_{j,t}$ is the unobserved idiosyncratic error (or measurement error) of asset $j$'s log price-dividend ratio. Then the model implies the following factor representation:

$$Z_t = \bar{Z} + \Lambda F_t + E_t, \quad \text{or} \quad Z = \bar{Z}\,\mathbf{1}' + \Lambda F' + E,$$

with $Z_{N\times T}$ being a (possibly unbalanced) panel of asset price-dividend ratios, $\bar{Z}$ the time-invariant individual fixed effects, $\Lambda_{N\times 2}$ the factor loadings, and $F_t = [x_t, \sigma_t^2]'$ the unobserved factors. The risk-free rate can also be included in $Z_t$.

The first two leading eigenvectors $\hat{F}$ of the matrix $(Z - \bar{Z}\mathbf{1}')'(Z - \bar{Z}\mathbf{1}')/(NT)$ offer a consistent estimator of the column space of the true $F$.³ We may then write the estimator of the state vector as

$$\begin{bmatrix}\hat{x}_t \\ \hat{\sigma}_t^2\end{bmatrix} = R\hat{F}_t, \quad \text{or} \quad \begin{cases}\hat{x}_t = R_{11}\hat{F}_{1t} + R_{12}\hat{F}_{2t} \\ \hat{\sigma}_t^2 = R_{21}\hat{F}_{1t} + R_{22}\hat{F}_{2t}\end{cases}$$

for some rotation matrix $R$. We still need an estimator of the rotation matrix. Here we exploit the dynamic structure of consumption growth. Rewrite the demeaned consumption growth process as

$$\Delta c_{t+1} = R_{11}\hat{F}_{1t} + R_{12}\hat{F}_{2t} + \sigma_t\eta_{t+1}.$$

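The above equation is a member of a larger system, namely,

$$\begin{bmatrix}\Delta c_{t+1} \\ Z_t\end{bmatrix} = \begin{bmatrix}R_{11} & R_{12} \\ \multicolumn{2}{c}{\Lambda}\end{bmatrix}\begin{bmatrix}F_{1t} \\ F_{2t}\end{bmatrix} + \begin{bmatrix}\sigma_t\eta_{t+1} \\ E_t\end{bmatrix}.$$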

To estimate the predictive component of $\Delta c_{t+1}$, one may apply the principal components estimator to the above system of equations to obtain a consistent estimator $\hat{x}_t = \hat{R}_{11}\hat{F}_{1t} + \hat{R}_{12}\hat{F}_{2t}$,⁴ where $\hat{x}_t$ is the estimated long-run component of consumption growth. Alternatively, one may estimate $F_t$ using only $Z_t$ and regress $\Delta c_{t+1}$ on $\hat{F}_{1t}$ and $\hat{F}_{2t}$ to obtain $\hat{x}_t = \hat{R}_{11}\hat{F}_{1t} + \hat{R}_{12}\hat{F}_{2t}$. The alternative strategy works because

$$E\left(\sigma_t\eta_{t+1}\mid\hat{F}\right) = E\left[E\left(\sigma_t\eta_{t+1}\mid F, Z\right)\mid\hat{F}\right] = 0$$

and $\frac{1}{T}\sum_{t=1}^{T}\lVert\hat{F}_t - HF_t\rVert^2 = o_p(1)$. The large sample properties of $\hat{x}_t$ from both approaches are the same.
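The principal components step and the regression-based (alternative) estimator of $\hat{x}_t$ can be sketched as follows. All inputs are hypothetical: unit-scale, in-sample standardized AR(1) series stand in for $x_t$ and $\sigma_t^2$, loadings are $N(0,1)$ (one of the two loading DGPs in the tables), the fixed effects are removed by row means, and consumption growth is put on the same unit scale so the mechanics are visible with $N = 200$, $T = 500$. `r2_space` measures how well the estimated factor space spans $x_t$.

```python
import numpy as np

def pc_factors(Z, r=2):
    """Principal components factors from an N x T panel Z (rows = assets), normalized F'F/T = I_r."""
    N, T = Z.shape
    Zc = Z - Z.mean(axis=1, keepdims=True)       # remove fixed effects (row means)
    S = Zc.T @ Zc / (N * T)                      # T x T matrix
    _, vecs = np.linalg.eigh(S)                  # eigenvalues in ascending order
    return np.sqrt(T) * vecs[:, ::-1][:, :r]     # leading r eigenvectors, scaled

def x_hat_by_regression(dc_next, F_hat):
    """Regress demeaned dc_{t+1} on F_hat_t; fitted value = R11*F1_t + R12*F2_t."""
    y = dc_next - dc_next.mean()
    coef, *_ = np.linalg.lstsq(F_hat, y, rcond=None)
    return F_hat @ coef, coef

def ar1_standardized(T, phi, rng):
    """Hypothetical unit-scale AR(1) factor, demeaned and scaled in sample."""
    s = np.zeros(T)
    for t in range(1, T):
        s[t] = phi * s[t - 1] + rng.standard_normal()
    return (s - s.mean()) / s.std()

rng = np.random.default_rng(0)
N, T = 200, 500
x = ar1_standardized(T, 0.979, rng)              # stand-in for x_t
v = ar1_standardized(T, 0.987, rng)              # stand-in for sigma_t^2
F = np.column_stack([x, v])
Lam = rng.standard_normal((N, 2))                # loadings ~ N(0, 1)
Z = rng.uniform(0.0, 1.0, (N, 1)) + Lam @ F.T + rng.standard_normal((N, T))
dc_next = 0.0015 + x + rng.standard_normal(T)    # hypothetical unit-scale consumption growth

F_hat = pc_factors(Z)
x_hat, R1_hat = x_hat_by_regression(dc_next, F_hat)

coef_x, *_ = np.linalg.lstsq(F_hat, x, rcond=None)
r2_space = 1.0 - np.sum((x - F_hat @ coef_x) ** 2) / np.sum(x ** 2)
corr = np.corrcoef(x_hat, x)[0, 1]
print(round(r2_space, 3), round(corr, 3))
```

The rotation coefficients `R1_hat` are only identified relative to the particular normalization of $\hat{F}$; what the sketch checks is that the fitted $\hat{x}_t$ tracks $x_t$.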

To estimate the conditional volatility $\sigma_t^2$, BKY (2007) exploit the conditional expectation representation $\sigma_t^2 \equiv E_t[(\Delta c_{t+1} - x_t)^2]$. The projection of $(\Delta c_{t+1} - x_t)^2$ onto the time-$t$ information set, e.g. $\{\hat{x}_s\}_{s=1}^{t}$, provides a possible way to recover the process $\sigma_t^2$. My estimation strategy for $\sigma_t^2$ differs from BKY's in two ways. First, I exploit the conditional likelihood function parametrized by $R_{21}$ and $R_{22}$. Second, I augment the likelihood function with the principal components estimators $\hat{F}$. This strategy yields a consistent estimator of $\sigma_t^2$ given the consistency of the maximum likelihood estimators $\hat{R}_{21}$ and $\hat{R}_{22}$.

³ Bai and Ng (2002) showed that when $(N, T) \to \infty$, the factors are consistent in the sense that $\frac{1}{T}\sum_{t=1}^{T}\lVert\hat{F}_t - HF_t\rVert^2 = o_p(1)$ for some deterministic matrix $H$. Bai (2003) further showed that $\sqrt{N}(\hat{F}_t - HF_t) \xrightarrow{d} N(0, V_t)$ under very general conditions on the model.

⁴ Although in the factor model $x_{it} = \lambda_i' f_t + e_{it}$ the factors $f_t$ and factor loadings $\lambda_i$ cannot be separately identified without further restrictions, the common component $c_{it} \equiv \lambda_i' f_t$ is consistently estimated by $\hat{c}_{it} \equiv \hat{\lambda}_i'\hat{f}_t$; see Bai and Ng (2002) and Bai (2003).

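Exploit the assumption that, conditional on $x_t$ and $\sigma_t^2$, $\Delta c_{t+1}$ is independent normal. We may formulate the conditional likelihood

$$f(\Delta c\mid x, \sigma^2) = \prod_{t=1}^{T}\frac{1}{\sqrt{2\pi}\,\sigma_t}\exp\left[-0.5\left(\frac{\Delta c_{t+1} - x_t}{\sigma_t}\right)^2\right],$$

or

$$\log f(\Delta c\mid x, \sigma^2) = -0.5\sum_{t=1}^{T}\left(\frac{\Delta c_{t+1} - x_t}{\sigma_t}\right)^2 - 0.5\sum_{t=1}^{T}\log(\sigma_t^2) - 0.5\,T\log(2\pi).$$

Replacing $x_t$ and $\sigma_t^2$ with their principal components estimators (parametrized by the rotation matrix $R$), one obtains an approximate likelihood function,

$$\begin{aligned}
L(R_{21}, R_{22}) &= -0.5\sum_{t=1}^{T}\left(\frac{\Delta c_{t+1} - \hat{x}_t}{\hat{\sigma}_t}\right)^2 - 0.5\sum_{t=1}^{T}\log(\hat{\sigma}_t^2) - 0.5\,T\log(2\pi) \\
&= -0.5\sum_{t=1}^{T}\frac{(\Delta c_{t+1} - \hat{x}_t)^2}{R_{21}\hat{F}_{1t} + R_{22}\hat{F}_{2t}} - 0.5\sum_{t=1}^{T}\log\left(R_{21}\hat{F}_{1t} + R_{22}\hat{F}_{2t}\right) - 0.5\,T\log(2\pi).
\end{aligned}$$

Maximize the above objective function to obtain consistent estimators of $R_{21}$ and $R_{22}$. Then we can construct the time series of the state variables:

$$\begin{bmatrix}\hat{x}_t \\ \hat{\sigma}_t^2\end{bmatrix} = \hat{R}\hat{F}_t.$$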
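A brute-force sketch of maximizing $L(R_{21}, R_{22})$. The grid search stands in for a numerical optimizer and is chosen only for transparency; the function names are hypothetical, the factor series `F1`, `F2` are made-up positive-valued inputs so that $\hat{\sigma}_t^2 = R_{21}\hat{F}_{1t} + R_{22}\hat{F}_{2t}$ stays positive on the grid, and points where it is not positive get a log-likelihood of $-\infty$.

```python
import numpy as np

def approx_loglik(R21, R22, resid, F1, F2):
    """L(R21, R22) with sigma2_hat_t = R21*F1_t + R22*F2_t and resid_t = dc_{t+1} - x_hat_t."""
    sig2 = R21 * F1 + R22 * F2
    if np.any(sig2 <= 0.0):
        return -np.inf                           # variance must be positive for every t
    T = resid.shape[0]
    return (-0.5 * np.sum(resid**2 / sig2) - 0.5 * np.sum(np.log(sig2))
            - 0.5 * T * np.log(2.0 * np.pi))

def grid_mle(resid, F1, F2, grid):
    """Brute-force maximizer of approx_loglik over grid x grid (illustrative optimizer)."""
    best_ll, best = -np.inf, (None, None)
    for a in grid:
        for b in grid:
            ll = approx_loglik(a, b, resid, F1, F2)
            if ll > best_ll:
                best_ll, best = ll, (a, b)
    return best

# Hypothetical data: positive factor series and true (R21, R22) = (0.5, 0.2)
rng = np.random.default_rng(0)
T = 20000
F1, F2 = rng.uniform(1.0, 2.0, T), rng.uniform(1.0, 2.0, T)
resid = np.sqrt(0.5 * F1 + 0.2 * F2) * rng.standard_normal(T)
R21_hat, R22_hat = grid_mle(resid, F1, F2, np.linspace(0.02, 1.0, 50))
print(R21_hat, R22_hat)
```

In practice a gradient-based optimizer with a positivity constraint would replace the grid; the objective function itself is unchanged.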

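A natural extension of the above method is to add the dynamics of $\sigma_t^2$ to the likelihood. Instead of studying the likelihood of $\Delta c_{t+1}$ conditional on $x_t$ and $\sigma_t^2$, we may formulate the joint likelihood of $\Delta c_{t+1}$, $x_t$, and $\sigma_t^2$:

$$\begin{aligned}
f(\Delta c, \sigma^2, x) &= f(\Delta c\mid\sigma^2, x)\, f(x\mid\sigma^2)\, f(\sigma^2) = \prod_{t=1}^{T} f(\Delta c_{t+1}\mid\sigma_t^2, x_t)\, f(x_t\mid x_{t-1}, \sigma_{t-1}^2)\, f(\sigma_t^2\mid\sigma_{t-1}^2) \\
&= \prod_{t=1}^{T}\frac{1}{\sqrt{2\pi}\,\sigma_t}\exp\left[-0.5\left(\frac{\Delta c_{t+1} - x_t}{\sigma_t}\right)^2\right]\frac{1}{\sqrt{2\pi}\,\varphi_e\sigma_{t-1}}\exp\left[-0.5\left(\frac{x_t - \rho x_{t-1}}{\varphi_e\sigma_{t-1}}\right)^2\right] \\
&\qquad\times\frac{1}{\sqrt{2\pi}\,\sigma_w}\exp\left[-0.5\left(\frac{\sigma_t^2 - \nu\sigma_{t-1}^2 - (1-\nu)\bar{\sigma}^2}{\sigma_w}\right)^2\right] \\
&\approx \prod_{t=1}^{T}\frac{1}{\sqrt{2\pi}\,\hat{\sigma}_t}\exp\left[-0.5\left(\frac{\Delta c_{t+1} - \hat{x}_t}{\hat{\sigma}_t}\right)^2\right]\frac{1}{\sqrt{2\pi}\,\varphi_e\hat{\sigma}_{t-1}}\exp\left[-0.5\left(\frac{\hat{x}_t - \rho\hat{x}_{t-1}}{\varphi_e\hat{\sigma}_{t-1}}\right)^2\right] \\
&\qquad\times\frac{1}{\sqrt{2\pi}\,\sigma_w}\exp\left[-0.5\left(\frac{\hat{\sigma}_t^2 - \nu\hat{\sigma}_{t-1}^2 - (1-\nu)\bar{\sigma}^2}{\sigma_w}\right)^2\right].
\end{aligned}$$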

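In this way, we are able to jointly estimate $\{R_{21}, R_{22}, \sigma_w, \nu, \rho, \varphi_e, \bar{\sigma}\}$.

An alternative, computationally simple approach is to exploit the orthogonality condition implied by the model. First notice that

$$E\left(\sigma_t^2\eta_{t+1}^2\mid\sigma_t^2, x_t\right) = \sigma_t^2\, E\left(\eta_{t+1}^2\mid\sigma_t^2, x_t\right) = \sigma_t^2.$$

Moreover, let $F$ be the $T\times 2$ factor matrix that spans the same space as $x$ and $\sigma^2$; then

$$\sigma_t^2 = E\left(\sigma_t^2\eta_{t+1}^2\mid\sigma_t^2, x_t\right) = E\left(\sigma_t^2\eta_{t+1}^2\mid F_t\right).$$

This implies that the projection of $\sigma_t^2\eta_{t+1}^2$ onto $F_t$ yields $\sigma_t^2$. In practice, one may regress $\widehat{\sigma_t^2\eta_{t+1}^2} = (\Delta c_{t+1} - \hat{x}_t)^2$ on $\hat{F}_t$ and use the fitted value as an estimator of $\sigma_t^2$.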
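The projection approach amounts to an OLS regression of the squared residual on the estimated factors. In this sketch the intercept is an added assumption (principal components factors are demeaned), the true $x_t$ stands in for $\hat{x}_t$, and both the factor series and $\sigma_t^2 = 1 + 0.5F_{2t}$ are hypothetical; the function name is also illustrative.

```python
import numpy as np

def sigma2_by_projection(dc_next, x_hat, F_hat):
    """Regress (dc_{t+1} - x_hat_t)^2 on [1, F_hat_t]; fitted values estimate sigma_t^2."""
    sq = (dc_next - x_hat) ** 2                  # feasible proxy for sigma_t^2 * eta_{t+1}^2
    X = np.column_stack([np.ones(F_hat.shape[0]), F_hat])   # intercept: an added assumption
    coef, *_ = np.linalg.lstsq(X, sq, rcond=None)
    return X @ coef, coef

# Hypothetical setup: x_t = F1_t is known and sigma_t^2 = 1 + 0.5 * F2_t
rng = np.random.default_rng(0)
T = 5000
F_hat = np.column_stack([rng.standard_normal(T), rng.uniform(-1.0, 1.0, T)])
x = F_hat[:, 0]
sig2 = 1.0 + 0.5 * F_hat[:, 1]
dc_next = x + np.sqrt(sig2) * rng.standard_normal(T)
sig2_hat, coef = sigma2_by_projection(dc_next, x, F_hat)
print(coef, np.corrcoef(sig2_hat, sig2)[0, 1])
```

Because $\eta_{t+1}^2$ is very noisy, the fitted values converge only at the $\sqrt{T}$ rate, which is consistent with the role of $T$ discussed in the Monte Carlo results.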

3.2.1 Simulation studies

I simulate the model using the same parameters as in the previous sections. From an econometrician's perspective, consumption growth and the asset dividend-price ratios are the observables. Both the state variables $\{x_t, \sigma_t^2\}$ and the model parameters are unknown and will be jointly estimated using the optimality conditions implied by the model.

I focus on the two-step approach, namely, obtaining the principal components estimators of the space spanned by the hidden states and then using the likelihood method to estimate the model parameters.


The following graph shows a typical estimation result from simulated data.

[Figure: LLR component of consumption growth, t = 0 to 100 (vertical axis scaled by $10^{-3}$). Series: x, x1 (small info), x2 (large info), x3 (pca).]

The series x1 and x2 are estimated from the agent's perspective, using small and large information respectively. The series x3 is the econometrician's two-step estimate, treating all model parameters as unknown. As this graph demonstrates, both x2 and x3 are very close to the true series x, while the small information approach fails to produce reasonable estimates. The results of the two-step method are very close to those of the large information method, even though the former estimates the model parameters and the states together. The simple regression approach is used to recover the time series of the conditional variance. As shown in the following graph, the estimates are reasonably close to the true series. A more detailed Monte Carlo study will be conducted to examine the properties of the estimator.

[Figure: Conditional Variance, t = 0 to 100 (vertical axis scaled by $10^{-5}$). Series: $\sigma^2$ and $\hat{\sigma}^2$.]

To further compare the econometrician's two-step method with the agent's large information method, I conduct a Monte Carlo experiment for different model sizes. For the same data generating process, I consider different combinations of $N$ and $T$. I also consider different DGPs for the coefficients $A_{i,j}$ in the log dividend-price ratio equation. Again, the following table summarizes the Monte Carlo average of the mean squared errors of $\hat{x}$ for each model design over $M = 200$ repetitions (Monte Carlo standard errors are in parentheses):

$$\text{MSE}(\hat{x}) = \frac{1}{MT}\sum_{m=1}^{M}\sum_{t=1}^{T}\left[\hat{x}_t^{(m)} - x_t^{(m)}\right]^2.$$

All values are reported in units of $10^{-5}$.

                  $A_{i,j} \sim N(0,1)$                  $A_{i,j} \sim U[0,1]$
  T     N     Agent             Econometrician    Agent             Econometrician
  50    5     0.0930 (0.0532)   0.3726 (0.2553)   0.1119 (0.0799)   0.3708 (0.2689)
        10    0.0750 (0.0427)   0.3925 (0.2912)   0.0959 (0.0595)   0.3875 (0.2826)
        50    0.0328 (0.0123)   0.3188 (0.2617)   0.0533 (0.0214)   0.3853 (0.2787)
        100   0.0210 (0.0064)   0.2871 (0.2737)   0.0384 (0.0172)   0.3509 (0.2505)
        200   0.0145 (0.0041)   0.3080 (0.2278)   0.0269 (0.0101)   0.3519 (0.2393)
  100   5     0.0908 (0.0396)   0.2855 (0.1479)   0.1264 (0.0787)   0.3301 (0.2269)
        10    0.0706 (0.0286)   0.2480 (0.1222)   0.1008 (0.0509)   0.3239 (0.1910)
        50    0.0321 (0.0096)   0.2021 (0.1199)   0.0577 (0.0196)   0.2788 (0.1437)
        100   0.0212 (0.0056)   0.1792 (0.1414)   0.0389 (0.0111)   0.2454 (0.1237)
        200   0.0138 (0.0029)   0.1706 (0.1578)   0.0277 (0.0071)   0.1951 (0.1105)
  200   5     0.0968 (0.0387)   0.2719 (0.1368)   0.1202 (0.0450)   0.2828 (0.1519)
        10    0.0731 (0.0257)   0.2459 (0.1065)   0.1061 (0.0492)   0.2991 (0.1574)
        50    0.0312 (0.0068)   0.1449 (0.0629)   0.0557 (0.0146)   0.2347 (0.0878)
        100   0.0217 (0.0038)   0.1174 (0.0707)   0.0383 (0.0071)   0.1912 (0.0678)
        200   0.0139 (0.0021)   0.0897 (0.0708)   0.0266 (0.0046)   0.1428 (0.0626)

For $T$ as small as 50, the two-step method does not necessarily improve estimation as $N$ becomes larger. This is consistent with the large sample theory of the second step, which requires a large $T$ to obtain a consistent estimator of the regression coefficients: even if we observed the factor $F_t$, the convergence rate of the OLS estimator $\hat{R}$ of the rotation matrix would be $\sqrt{T}$. When $T$ is as large as 100 or 200, combined with a large $N$, the two-step method provides reasonably good estimation results. In general, a larger $N$ is accompanied by improved estimation precision. This pattern holds for both DGPs of $A_{i,j}$.

4 Issues related to the panel data

Data on cross-sectional dividend-price ratios can be obtained from Fama and French's website. I will use the 100-portfolio data to estimate the long-run component. Several issues might complicate the empirical study. First, individual fixed effects might be present in the panel data model. In the conventional large-$N$, small-$T$ framework, differencing is usually used to remove the individual fixed effects, and the model parameters are then estimated from the transformed model. In a large-$(N, T)$ framework, the individual fixed effects can be consistently estimated (see Hahn and Kuersteiner, 2002, for an example) without resorting to differencing.

Second, there are some missing observations in the 100-portfolio data constructed by Fama and French. Although principal components methods do not apply directly to such a data set, the expectation-maximization (EM) algorithm of Stock and Watson (1998) can be used to handle panel data with missing observations. Consider the panel data $X_{it}$, $i = 1, \ldots, N$, $t = 1, \ldots, T$. Let $D_{it}$ be a binary variable that takes the value 0 if observation $(i, t)$ is missing and 1 otherwise. The EM algorithm iterates between an expectation step and a maximization step until some convergence criterion is met.

To initialize the EM algorithm, I replace the missing observations of each portfolio $i$ with i.i.d. random variables $U_{it}$ that are uniformly distributed with mean equal to the sample mean of that portfolio. This replacement is done for all $N$ portfolios to form a balanced panel $X^{(0)}$ of dimension $T\times N$:

$$X_{it}^{(0)} = \begin{cases} X_{it}, & \text{if } D_{it} = 1, \\ U_{it}, & \text{if } D_{it} = 0. \end{cases}$$

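The maximization step can then be carried out using $X^{(0)}$. In a typical $k$-th maximization step, we start with a balanced panel $X^{(k)}$. Principal components estimators $\hat{\lambda}_i^{(k)}$ and $\hat{f}_t^{(k)}$ are obtained from $X^{(k)}$ by solving the restricted least squares problem

$$\left\{\hat{\lambda}_i^{(k)}, \hat{f}_t^{(k)}\right\} = \arg\min_{\{\lambda_i, f_t\}}\sum_{i=1}^{N}\sum_{t=1}^{T}\left[X_{it}^{(k)} - \lambda_i' f_t\right]^2 \quad \text{s.t.} \quad \frac{1}{T}\sum_{t=1}^{T} f_t f_t' = I_r, \quad \sum_{i=1}^{N}\lambda_i\lambda_i' \text{ is diagonal}.$$

Let $\hat{\Lambda}^{(k)}$ be the $N\times r$ loading matrix and $\hat{F}^{(k)}$ the $T\times r$ factor matrix. Then $\hat{F}^{(k)}$ consists of the leading $r$ eigenvectors of the $T\times T$ matrix $\frac{1}{NT}X^{(k)}X^{(k)\prime}$, scaled by $\sqrt{T}$ so that the normalization $\hat{F}^{(k)\prime}\hat{F}^{(k)}/T = I_r$ holds, and $\hat{\Lambda}^{(k)} = \frac{1}{T}X^{(k)\prime}\hat{F}^{(k)}$.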

In the $k$-th expectation step, we form a balanced panel $X^{(k+1)}$:

$$X_{it}^{(k+1)} = \begin{cases} X_{it}, & \text{if } D_{it} = 1, \\ \hat{\lambda}_i^{(k)\prime}\hat{f}_t^{(k)}, & \text{if } D_{it} = 0. \end{cases}$$
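The EM iteration above can be sketched as follows for a $T\times N$ panel. Assumptions: the half-width of the uniform initial draws (`half_width`) and the tolerance `tol` are hypothetical choices, since the text fixes only the mean of the initial draws, and the stopping rule uses the maximum absolute change over missing cells. The usage lines build a synthetic two-factor panel with 10% of cells missing and compare the EM imputations with simple column-mean imputation.

```python
import numpy as np

def pc_step(X, r):
    """M-step: PC estimates for a balanced T x N panel, normalized F'F/T = I_r."""
    T, N = X.shape
    _, vecs = np.linalg.eigh(X @ X.T / (N * T))  # T x T matrix, ascending eigenvalues
    F = np.sqrt(T) * vecs[:, ::-1][:, :r]        # T x r factors
    Lam = X.T @ F / T                            # N x r loadings
    return F, Lam

def em_factors(X, D, r, rng, half_width=0.5, tol=1e-6, max_iter=500):
    """EM for a T x N panel X with indicator D (1 = observed, 0 = missing)."""
    X = np.asarray(X, dtype=float)
    obs = np.asarray(D).astype(bool)
    # Initialization: uniform draws centred on each column's observed mean (width is an assumption)
    col_means = np.array([X[obs[:, i], i].mean() for i in range(X.shape[1])])
    Xk = np.where(obs, X, col_means + rng.uniform(-half_width, half_width, X.shape))
    for _ in range(max_iter):
        F, Lam = pc_step(Xk, r)                  # maximization step
        X_next = np.where(obs, X, F @ Lam.T)     # expectation step: fill gaps with common component
        change = np.max(np.abs(X_next - Xk)[~obs], initial=0.0)
        Xk = X_next
        if change < tol:
            break
    return Xk, F, Lam

# Synthetic check: two-factor panel with 10% of cells missing at random
rng = np.random.default_rng(0)
T, N, r = 200, 50, 2
C = rng.standard_normal((T, r)) @ rng.standard_normal((N, r)).T   # true common component
X_full = C + 0.3 * rng.standard_normal((T, N))
miss = rng.random((T, N)) < 0.1
X_obs = np.where(miss, np.nan, X_full)
X_filled, F_hat, Lam_hat = em_factors(X_obs, (~miss).astype(int), r, rng)
rmse_em = np.sqrt(np.mean((X_filled - C)[miss] ** 2))
col_means = np.nanmean(X_obs, axis=0)
rmse_mean = np.sqrt(np.mean((np.broadcast_to(col_means, (T, N)) - C)[miss] ** 2))
print(rmse_em, rmse_mean)
```

Observed cells are never altered; only the missing cells are updated, and the product $\hat{F}\hat{\Lambda}'$ is invariant to the sign and rotation indeterminacy of the eigenvectors across iterations.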

We keep iterating until some convergence criterion is met, say $\max_{\{(i,t):\,D_{it}=0\}}\left|X_{it}^{(k+1)} - X_{it}^{(k)}\right|$