UK Grid Meeting 22.11.00

Glenn Patrick 1

LHCb Grid Activities in UK

Grid Prototype and Globus Technical Meeting

QMW, 22nd November 2000

Glenn Patrick (RAL)

Aims

In June, LHCb formed a Grid technical working group to:

Initiate and co-ordinate “user” and production activity in this area.

Provide practical experience in Grid tools.

Lead into longer term LHCb applications.

Initially based around UK facilities at RAL and Liverpool, as well as CERN, but other countries/institutes are gradually joining in.

IN2P3, NIKHEF, INFN …

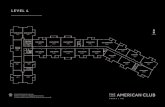

RAL CSF

120 Linux cpu

IBM 3494 tape robot

LIVERPOOL

MAP300 Linux cpu

CERNpcrd25.cern.ch

lxplus009.cern.ch

RAL (PPD)

Bristol

Imperial College

Oxford

GLASGOW/EDINBURGH

“Proto-Tier 2”

Initial LHCb-UK “Testbed”

Institutes

Exists

Planned

RAL DataGrid Testbed

Initial Architecture

Based around existing production facilities (separate Datagrid testbed facilities will eventually exist).

Intel PCs running Linux Redhat 6.1.

Mixture of batch systems (LSF at CERN, PBS at RAL, FCS at MAP).

Globus 1.1.3 everywhere.

Standard file transfer tools (e.g. globus-rcp, GSIFTP).

GASS servers for secondary storage?

Java tools for controlling production, bookkeeping, etc.

MDS/LDAP for bookkeeping database(s).

LHCb Applications

Use existing LHCb code...

Distributed Monte-Carlo Production:

SICBMC: LHCb Simulation Program

SICBDST / Brunel & Gaudi: LHCb Digitisation/Reconstruction Programs

Distributed Analysis:

With datasets stored at different centres - eventually.

Interactive Tests

Accounts, Globus certificates and gridmap entries set up for a small group of people at RAL and Liverpool sites (problems at CERN for external people).

Globus 1.1.3 set up at all sites.

Managed to remotely submit scripts and run the SICBMC executable between sites via globus-job-run:

from CERN to RAL and MAP

from RAL to CERN and MAP

from MAP to CERN and RAL
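The three "from ... to ..." lines above amount to testing every directed pair of sites. A minimal sketch of that test matrix follows. The site labels are plain strings taken from the slide, not host names, and the helper name `directed_pairs` is hypothetical.

```python
from itertools import permutations

# Site labels as they appear on the slide (labels only, not host names).
SITES = ["CERN", "RAL", "MAP"]


def directed_pairs(sites):
    """Return every (source, destination) pair with source != destination."""
    return list(permutations(sites, 2))


if __name__ == "__main__":
    # One line per remote-submission test that has to succeed.
    for src, dst in directed_pairs(SITES):
        print(f"from {src} to {dst}")
```

With three sites this gives six directed tests, which matches the six combinations listed on the slide.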

Batch Tests

Mixture of batch systems - LSF at CERN, PBS at RAL, FCS at MAP.

LHCb batch jobs remotely run on RAL-CSF Farm (120 Linux nodes) using PBS (A.Sansum) via...

globus-job-submit csflnx01.rl.ac.uk/jobmanager-pbs

globus-job-get-output

CERN - access to LSF?

MAP - last week setting up LHCb software. Need to interface FCS/mapsub to globus?
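The submit-then-fetch pattern used for the RAL batch tests could be scripted roughly as below. This is a sketch under stated assumptions, not the LHCb production script. It assumes `globus-job-submit` takes the contact string followed by the executable and prints a job contact on standard output, and that `globus-job-get-output` takes that job contact. The function names and the `runner` parameter are hypothetical, and the script path in the usage note is a placeholder.

```python
import subprocess

# Job-manager contact string quoted on the slide.
RAL_PBS_CONTACT = "csflnx01.rl.ac.uk/jobmanager-pbs"


def submit_command(contact, executable, *args):
    """Argument list for globus-job-submit (assumed form: contact, executable, args)."""
    return ["globus-job-submit", contact, executable, *args]


def get_output_command(job_contact):
    """Argument list for globus-job-get-output, given the job contact from submission."""
    return ["globus-job-get-output", job_contact]


def submit_and_fetch(contact, executable, *args, runner=subprocess.run):
    """Submit a batch job, then ask for its standard output.

    No waiting is done here; a real script would first wait for the job to finish.
    `runner` defaults to subprocess.run and can be swapped out for testing.
    """
    submitted = runner(submit_command(contact, executable, *args),
                       capture_output=True, text=True, check=True)
    job_contact = submitted.stdout.strip()
    fetched = runner(get_output_command(job_contact),
                     capture_output=True, text=True, check=True)
    return job_contact, fetched.stdout
```

A call such as `submit_and_fetch(RAL_PBS_CONTACT, "/path/to/job.sh")` would need a valid Globus proxy and the Globus client tools on the submitting machine.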

Data Transfer

Data transferred back to originating computer via globus-rcp:

Unreliable for large files.

Can use a lot of temporary disk space.

Limited proxies breaking scripts when running commands in batch.

At RAL, now using GSIFTP (uses Globus authentication):

Availability of GSI toolkit at other UK sites & CERN?

Consistency of user/security interface.

Secondary/Tertiary Storage

RAL DataStore (IBM 3494) interfaced to prototype GASS server and accessible via Globus (Tim Folkes) - 30TB tape robot

Allows remote access to LHCb tapes (750MB) via pseudo URLs...

globus-rcp rs6ktest.cis.rl.ac.uk:/atlasdatastore/lhcb/L42426 myfile

globus-url-copy ...

globus-gass-cache-add ...

Location of gass_cache can vary with command (server or client side) - space issue for large-scale transfers.

Also, can use C API interface from code.

Networking Bottlenecks?

Schematic only. Elements shown: Univ. Dept, Campus, MAN (twice), SuperJANET Backbone, London, RAL, TEN-155, CERN.

Backbone: SuperJANET III 155 Mbit/s (SuperJANET IV 2.5 Gbit/s).

Link speeds labelled on the diagram: 34 Mbit/s, 100 Mbit/s (next to CERN), 155 Mbit/s, 622 Mbit/s, 622 Mbit/s (March 2001).

Need to study/measure for data transfer and replication within UK and to CERN.
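To put the link speeds in context, the sketch below estimates the best-case time to move one 750 MB LHCb tape file (the size quoted under Secondary/Tertiary Storage) over each nominal rate. It assumes 1 MB = 10^6 bytes, a dedicated link and no protocol overhead, so real transfers would be slower. The function and constant names are hypothetical.

```python
# 750 MB tape file, taking 1 MB = 10**6 bytes (assumption).
TAPE_FILE_BYTES = 750 * 10**6

# Nominal link rates quoted on the slide, in Mbit/s.
LINKS_MBIT_S = {
    "34 Mbit/s": 34,
    "100 Mbit/s": 100,
    "155 Mbit/s": 155,
    "622 Mbit/s": 622,
    "2.5 Gbit/s": 2500,
}


def ideal_transfer_seconds(size_bytes, rate_mbit_s):
    """Best-case transfer time: total bits divided by the nominal bit rate."""
    return size_bytes * 8 / (rate_mbit_s * 10**6)


if __name__ == "__main__":
    for label, rate in LINKS_MBIT_S.items():
        seconds = ideal_transfer_seconds(TAPE_FILE_BYTES, rate)
        print(f"{label:>10}: {seconds:7.1f} s")
```

Even in this idealised case the slowest link takes about three minutes per tape file, which is why the slowest hop on the path dominates any replication plan.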

Data and Code Replication?

GDMP (WP2) - Asynchronous replication of Objectivity federations on top of Globus (Stockinger, Samar - CMS).

gdmp_replicate_file_get

gdmp_publish_catalogue

General replication tool for Objectivity, Root & ZEBRA files and integrate Grid-FTP (end January 2001).

Available in INFN Installation Toolkit.

“Kickstart” & “Update” Kits (WP8) - Installation of experiment environments on cpu farms? (e.g. done for CMS production executables). Experiments encouraged to provide for WP8 and WP6 testbed...

Problems/Issues

“Seamless” certification and authorisation of all people across all sites (certificates, gridmap files, etc) with common set of tools.

Getting people set up on CERN facilities (need defined technical contact). Central to any LHCb grid work.

Remote access into various batch systems (testbeds to cure?).

Role of wide-area filesystems (AFS) & replication (GDMP).

But at least we now have some practical user-experience in a “physics” environment.

Helped to shake down UK systems and provide valuable feedback.

Next?

Migrate LHCb production to Linux (mainly NT till now).

Start to adapt standard LHCb production scripts to use Globus tools, GDMP(?), etc.

Modify book-keeping to make use of LDAP.

MDS & access to metadata/services at different sites.

Gradually adapt LHCb software to be Grid aware.

Try “production style” runs end 2000/start 2001?

Expand to include other centres/countries in “LHCb Grid”.

Use available production systems and testbeds.