VISION-AUGMENTED INERTIAL NAVIGATION BY SENSOR FUSION FOR AN AUTONOMOUS ROTORCRAFT VEHICLE

C.L. Bottasso, D. Leonello
Politecnico di Milano

AHS International Specialists' Meeting on Unmanned Rotorcraft

Phoenix, AZ, January 20-22, 2009

POLITECNICO di MILANO DIA

Outline

• Introduction and motivation

• Inertial navigation by measurement fusion

• Vision-augmented inertial navigation

- Stereo projection and vision-based position sensors

- Vision-based motion sensors

- Outlier rejection

• Results and applications

• Conclusions and outlook


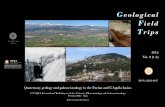

Rotorcraft UAVs at PoliMI

• Low-cost platform for development and testing of navigation and control strategies (including vision, flight envelope protection, etc.)

• Vehicles: off-the-shelf hobby helicopters

• On-board control hardware based on PC-104 standard

• Bottom-up approach, everything is developed in-house:

- Inertial Navigation System (this paper)

- Guidance and Control algorithms (AHS UAV '07: C.L. Bottasso et al., path planning by motion primitives, adaptive flight control laws)

- Linux-based real-time OS

- Flight simulators

- System identification (estimation of inertia, estimation of aerodynamic model parameters from flight test data)

- Etc.


UAV Control Architecture

[Figure labels: Target, Obstacles]

Hierarchical three-layer control architecture (Gat 1998):

• Strategic layer: assign mission objectives (typically relegated to a human operator)

• Tactical layer: generate vehicle guidance information, based on input from the strategic layer and ambient mapping information

• Reflexive layer: track the trajectory generated by the tactical layer; control, stabilize and regulate the vehicle

Sense vehicle state of motion (to enable planning and tracking)

Sense environment (to enable mapping)


[Block diagram: two sensing chains. Sensing of vehicle motion states: accelerometer, gyro, sonar altimeter, magnetometer, GPS and other sensors feed a sensor fusion algorithm that outputs the state estimates. Sensing of environment for mapping: stereo cameras, laser scanner and other sensors feed a sensor fusion algorithm that outputs the ambient map and obstacle/target recognition.]

Proposed approach:

Recruit vision sensors for improved state estimation (this paper)

Advantages:

• Improved accuracy/better estimates, especially when in proximity of obstacles

• Sensor loss tolerant (e.g. because of faults, or GPS loss indoors, under vegetation or in urban canyons, etc.)


Classical Navigation System

Sensor fusion by Kalman-type filtering to account for measurement and process noise:

States:

$$x := \left(v_B^{E\,T},\ \omega^{B\,T},\ r_{OB}^{E\,T},\ q\right)^T$$

Inputs:

$$u := \left(a_{\mathrm{acc}}^T,\ \omega_{\mathrm{gyro}}^T\right)^T$$

Outputs:

$$y := \left(v_G^{E\,T},\ r_{OG}^{E\,T},\ h,\ m^{B\,T}\right)^T$$

Measures:

$$z := \left(v_{\mathrm{gps}}^T,\ r_{\mathrm{gps}}^T,\ h_{\mathrm{sonar}},\ m_{\mathrm{magn}}^T\right)^T$$

Process and measurement models:

$$\dot{x}(t) = f\big(x(t),\, u(t),\, \nu(t)\big)$$

$$y(t_k) = h\big(x(t_k)\big)$$

$$z(t_k) = y(t_k) + \mu(t_k)$$

State update:

$$\hat{x}(t_{k+1}) = \bar{x}(t_{k+1}) + K(t_{k+1})\big(z(t_{k+1}) - \bar{y}(t_{k+1})\big)$$

[Figure: vehicle reference frames and sensor locations. Labels: O, B, G, A, S, T, s, h, r_OB, r_BA, r_BG, e1, e2, e3, b1, b2, b3. Sensors: GPS, accelerometers, sonar, gyros, magnetometer]
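The correction step of the filter, in which the predicted state is updated with the gain times the difference between measurement and predicted output, can be illustrated with a minimal sketch. It assumes a linear output y = H x and uncorrelated measurement noise, so that scalar measurements can be fused one at a time; the slides use a general nonlinear output function h and do not state which Kalman-type variant is implemented. The names `kalman_update`, `H` and `R_diag` are hypothetical.

```python
def kalman_update(x_bar, P_bar, z, H, R_diag):
    """Fuse scalar measurements one at a time (assumes uncorrelated noise).

    x_bar, P_bar: predicted state (list) and covariance (list of lists).
    z[i] is modeled as H[i] . x + noise with variance R_diag[i].
    Returns the corrected state and covariance.
    """
    n = len(x_bar)
    x = list(x_bar)
    P = [row[:] for row in P_bar]
    for z_i, h, r in zip(z, H, R_diag):
        # Predicted output for this measurement.
        y_bar = sum(h[j] * x[j] for j in range(n))
        # P h, reused for the gain and the covariance update.
        Ph = [sum(P[i][j] * h[j] for j in range(n)) for i in range(n)]
        # Innovation variance and gain.
        s = sum(h[i] * Ph[i] for i in range(n)) + r
        K = [Ph[i] / s for i in range(n)]
        # x_hat = x_bar + K (z - y_bar)
        innovation = z_i - y_bar
        x = [x[i] + K[i] * innovation for i in range(n)]
        P = [[P[i][j] - K[i] * Ph[j] for j in range(n)] for i in range(n)]
    return x, P


# Toy example (assumed values): state = (position, velocity),
# one position measurement with unit variance.
x_hat, P_hat = kalman_update(
    x_bar=[0.0, 0.0],
    P_bar=[[1.0, 0.0], [0.0, 1.0]],
    z=[1.0],
    H=[[1.0, 0.0]],
    R_diag=[1.0],
)
```

With equal prior and measurement variances the corrected position lands halfway between prediction and measurement, while the unobserved velocity is left unchanged.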


Vision-Based Navigation System

[Block diagram: accelerometer, gyro, sonar altimeter, magnetometer, GPS and other sensors feed the sensor fusion algorithm; the stereo cameras feed it through the KLT tracker and outlier rejection (vision sensors); the output is the state estimates]

Kanade-Lucas-Tomasi (KLT) tracker:

• Tracks feature points in the scene across the stereo cameras and across time steps

• Each tracked point becomes a vision-based motion sensor

• Has its own internal outlier rejection algorithm


Vision-Based Position Sensor

Feature point projection:

$$p = \pi(d)$$

Stereo vision:

$$d^C = \frac{b}{d}\, p^C$$

with disparity

$$d = p_1 - p'_1$$

computed with the Kanade-Lucas-Tomasi (KLT) algorithm

[Figure: stereo projection geometry. Labels: P, O, B, b, f, C, C', c, c'_1, c'_2, c'_3, p, r_OB, d']

[Plot: effect of one pixel error on estimated distance (BumbleBee X3 camera)]

Remark: stereo vision information from low-resolution cameras is noisy and must be handled with care
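The sensitivity of stereo ranging to a one-pixel disparity error can be made concrete with a short sketch. It assumes an ideal rectified pinhole pair in which the third component of the image-plane vector is the focal length f, so the distance along the optical axis is b f / d. The baseline and focal length below are hypothetical values chosen for illustration, not the specifications of the camera used in the slides.

```python
def depth_from_disparity(baseline_m, focal_px, disparity_px):
    """Distance along the optical axis, b * f / d (assumed pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return baseline_m * focal_px / disparity_px


def one_pixel_depth_error(baseline_m, focal_px, disparity_px):
    """Change in estimated distance when the disparity is one pixel too large."""
    nominal = depth_from_disparity(baseline_m, focal_px, disparity_px)
    perturbed = depth_from_disparity(baseline_m, focal_px, disparity_px + 1.0)
    return nominal - perturbed


BASELINE_M = 0.1   # hypothetical stereo baseline [m]
FOCAL_PX = 500.0   # hypothetical focal length [pixels]

for disparity in (20.0, 10.0, 5.0, 2.0):
    distance = depth_from_disparity(BASELINE_M, FOCAL_PX, disparity)
    error = one_pixel_depth_error(BASELINE_M, FOCAL_PX, disparity)
    print(f"disparity {disparity:5.1f} px: distance {distance:6.2f} m, "
          f"one-pixel error {error:5.2f} m")
```

Because the distance is inversely proportional to the disparity, the error caused by a single pixel grows roughly with the square of the distance, which is why far-away feature points give noisy position information.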


Feature Point Tracking

[Figure: left and right camera images at time k and time k+1, showing tracking across cameras and tracking across time steps]


Vision-Based Motion Sensor

[Figure: stereo projection geometry. Labels: P, O, B, b, f, C, C', c, c'_1, c'_2, c'_3, p, r_OB, d']

Differentiate the vector closure expression:

$$\frac{d}{dt}\left(r^E + R\,c^B + R\,C\,d^C\right) = 0$$

Apparent motion of the feature point on the image plane (motion sensor):

$$\dot{p}^C = -M\,C^T\left(R^T v_B^E + \omega^B \times \left(c^B + C\,d^C\right)\right)$$

The motion sensor depends on the attitude ($R$), the linear velocity ($v_B^E$) and the angular velocity ($\omega^B$).
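The motion sensor equation can be evaluated numerically with a small sketch. The slides do not define the matrix M explicitly; here it is assumed to be the Jacobian of a pinhole projection p = f (d1/d3, d2/d3), with the optical axis along the third camera axis. That axis convention, and the function names, are assumptions made for illustration only.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def mat_vec(A, v):
    """Matrix-vector product for a list-of-rows matrix."""
    return [sum(a_ij * v_j for a_ij, v_j in zip(row, v)) for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def projection_jacobian(d_c, f):
    """M = d(pi)/d(d) for the assumed pinhole model p = f * (d1/d3, d2/d3)."""
    d1, d2, d3 = d_c
    return [[f / d3, 0.0, -f * d1 / d3 ** 2],
            [0.0, f / d3, -f * d2 / d3 ** 2]]


def apparent_velocity(v_e, omega_b, R, C, c_b, d_c, f):
    """Image-plane velocity of a scene-fixed point due to vehicle motion.

    v_e: vehicle velocity in inertial axes; omega_b: body angular velocity;
    R: body-to-inertial rotation; C: camera-to-body rotation;
    c_b: camera position in body axes; d_c: camera-to-point vector in camera axes.
    """
    body_velocity = mat_vec(transpose(R), v_e)                 # R^T v
    arm = [c + x for c, x in zip(c_b, mat_vec(C, d_c))]        # c + C d
    swept = cross(omega_b, arm)                                # omega x (c + C d)
    total = [a + b for a, b in zip(body_velocity, swept)]
    d_dot = [-x for x in mat_vec(transpose(C), total)]         # -C^T (...)
    return mat_vec(projection_jacobian(d_c, f), d_dot)         # M d_dot


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Pure sideways translation at 1 m/s, point 10 m ahead of the camera.
p_dot = apparent_velocity(
    v_e=[1.0, 0.0, 0.0], omega_b=[0.0, 0.0, 0.0],
    R=IDENTITY, C=IDENTITY, c_b=[0.0, 0.0, 0.0],
    d_c=[0.0, 0.0, 10.0], f=500.0,
)
```

In this toy case the point appears to move across the image in the direction opposite to the vehicle translation, as expected for a scene-fixed feature.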


Vision-Based Motion Sensor

1. For all tracked feature points, write the motion sensor equation. This defines a new output of the vehicle states:

$$y := \left(v_G^{E\,T},\ r_{OG}^{E\,T},\ h,\ m^{B\,T},\ \ldots,\ d(t_{k+1})^{C_{k+1}\,T},\ d(t_{k+1})^{C'_{k+1}\,T},\ \ldots\right)^T$$

2. Measure the apparent motion of each feature point (measured apparent velocity, due to vehicle motion). This defines a new augmented measurement vector: GPS, gyro, accelerometer, magnetometer, altimeter readings + two (left & right cameras) vision sensors per tracked feature point:

$$z := \left(v_{\mathrm{gps}}^T,\ r_{\mathrm{gps}}^T,\ h_{\mathrm{sonar}},\ m_{\mathrm{magn}}^T,\ \ldots,\ d_{\mathrm{vision}}^T,\ d'^{\,T}_{\mathrm{vision}},\ \ldots\right)^T$$

3. Fuse in parallel with all other sensors using Kalman filtering
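How the measurement vector grows with the number of tracked points can be sketched as follows. The sketch assumes that each vision sensor contributes a 3-component vector per camera, so every tracked point adds six entries to the ten classical ones; the function name and the sample numbers are hypothetical.

```python
def augmented_measurement(v_gps, r_gps, h_sonar, m_magn, tracked_points):
    """Stack classical readings and per-point vision measurements into z.

    tracked_points: list of (d_left, d_right) pairs, one pair per tracked
    feature point, each entry an assumed 3-component vector.
    """
    z = [*v_gps, *r_gps, h_sonar, *m_magn]   # classical part, 10 entries
    for d_left, d_right in tracked_points:
        z.extend(d_left)                     # left-camera vision sensor
        z.extend(d_right)                    # right-camera vision sensor
    return z


# Two tracked points (made-up numbers) give 10 + 2 * 6 = 22 entries.
z = augmented_measurement(
    v_gps=[1.0, 0.0, 0.0],
    r_gps=[10.0, 5.0, -2.0],
    h_sonar=2.0,
    m_magn=[0.2, 0.0, 0.4],
    tracked_points=[
        ([0.5, 0.1, 8.0], [0.4, 0.1, 8.0]),
        ([-1.0, 0.3, 12.0], [-1.1, 0.3, 12.0]),
    ],
)
```

Since the set of tracked points changes from frame to frame, the length of the measurement vector changes too, and the output vector and noise covariance used by the filter have to be assembled with the same ordering at each step.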


Outlier Rejection

Outlier:

• A point which is not fixed with respect to the scene

• A false positive in the KLT tracking algorithm

Outliers give false information on the state of motion and must be discarded from the process

Two-stage rejection:

1. KLT internal

2. Vehicle motion compatibility check: compare the apparent point velocity due to the estimated vehicle motion with the measured apparent velocity, and drop the tracked point if the two vectors differ too much in length and direction
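The vehicle motion compatibility check can be sketched as a simple comparison of two image-plane vectors. The thresholds below are hypothetical, and the sketch drops a point when either the length ratio or the angle exceeds its limit; the slides do not give the actual criterion or tuning.

```python
import math


def is_outlier(predicted, measured, max_length_ratio=1.5, max_angle_deg=20.0,
               min_norm=1e-9):
    """Return True if the measured apparent velocity is incompatible with
    the one predicted from the estimated vehicle motion.

    predicted, measured: 2-component image-plane velocity vectors.
    """
    n_pred = math.hypot(predicted[0], predicted[1])
    n_meas = math.hypot(measured[0], measured[1])
    pred_still = n_pred < min_norm
    meas_still = n_meas < min_norm
    if pred_still or meas_still:
        # Direction is undefined: compatible only if both are (nearly) zero.
        return pred_still != meas_still
    ratio = max(n_pred, n_meas) / min(n_pred, n_meas)
    cos_angle = (predicted[0] * measured[0]
                 + predicted[1] * measured[1]) / (n_pred * n_meas)
    cos_angle = max(-1.0, min(1.0, cos_angle))   # guard against round-off
    angle_deg = math.degrees(math.acos(cos_angle))
    return ratio > max_length_ratio or angle_deg > max_angle_deg


# Keep only the tracked points that pass the check (made-up numbers).
tracks = [
    ((10.0, 0.0), (10.5, 0.5)),   # compatible
    ((10.0, 0.0), (0.0, 10.0)),   # wrong direction
    ((10.0, 0.0), (30.0, 0.0)),   # wrong length
]
inliers = [t for t in tracks if not is_outlier(*t)]
```

Only the first pair survives here, since the second differs by 90 degrees in direction and the third by a factor of three in length.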


Examples: Pirouette Around Point Cloud

Cloud of about 100 points

Temporary loss of GPS signal (for 100 s < t < 200 s)

To show conservative results:

• At most 20 tracked points at each instant of time

• Vision sensors operating at 1 Hz


Examples: Pirouette Around Point Cloud

[Plots: state estimates for the classical non-vision-based IMU and for the vision-based IMU; markers: filter warm-up, begin GPS signal loss, end GPS signal loss]

Remark: no evident effect of GPS loss on state estimation for the vision-augmented IMU


Examples: Flight in a Village

Scene environment and image acquisition based on the Gazebo simulator

Rectangular flight path in a village at 2 m of altitude

Three loops:

1. With GPS

2. Without GPS

3. With GPS


Examples: Flight in a Village

[Plots: velocity and attitude estimates; markers: filter warm-up, begin GPS signal loss, end GPS signal loss]

• Some degradation of velocity estimates without GPS

• No evident effect of GPS loss on attitude estimation


Conclusions

Proposed novel inertial navigation system using vision motion sensors

Basic concept demonstrated in a high-fidelity virtual environment

Observed facts:

• Improved observability of vehicle states

• No evident transients during loss and reacquisition of sensor signals

• Higher accuracy when close to objects and for increasing number of tracked points

• Computational cost compatible with on-board hardware (PC-104 Pentium III)

Outlook:

• Testing in the field

• Adaptive filtering: better robustness/tuning

• Recruitment of additional sensors (e.g. stereo laser-scanner)
