Exploiting variable associations to configure efficient local search algorithms in large-scale binary integer programs∗

Shunji Umetani†

April 29, 2016

Abstract

We present a data mining approach for reducing the search space of local search algorithms in a class of binary integer programs including the set covering and partitioning problems. We construct a k-nearest neighbor graph by extracting variable associations from the instance to be solved, in order to identify promising pairs of flipping variables in the neighborhood search. We also develop a 4-flip neighborhood local search algorithm that flips four variables alternately along 4-paths or 4-cycles in the k-nearest neighbor graph. We incorporate an efficient incremental evaluation of solutions and an adaptive control of penalty weights into the 4-flip neighborhood local search algorithm. Computational comparison with the latest solvers shows that our algorithm performs effectively for large-scale set covering and partitioning problems.

Keywords: combinatorial optimization, heuristics, set covering problem, set partitioning problem, local search

1 Introduction

The set covering problem (SCP) and set partitioning problem (SPP) are representative combinatorial optimization problems that have many real-world applications, such as crew scheduling [6, 24, 30], vehicle routing [2, 14, 23], facility location [12, 21] and logical analysis of data [11, 22]. Real-world applications of SCP and SPP are comprehensively reviewed in [4, 18].

Given a ground set of $m$ elements $i \in M = \{1, \dots, m\}$, $n$ subsets $S_j \subseteq M$ ($|S_j| \ge 1$), and their costs $c_j \in \mathbb{R}$ for $j \in N = \{1, \dots, n\}$, we say that $X \subseteq N$ is a cover of $M$ if

$$\bigcup_{j \in X} S_j = M$$

holds. We say that $X \subseteq N$ is a partition of $M$ if $\bigcup_{j \in X} S_j = M$ and $S_{j_1} \cap S_{j_2} = \emptyset$ hold for all distinct $j_1, j_2 \in X$. The goals of SCP and SPP are to find a minimum-cost cover and a minimum-cost partition $X$ of $M$, respectively. In this paper, we consider the following class of binary integer programs (BIPs) including SCP and SPP:

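$$
\begin{array}{lll}
\text{minimize} & \displaystyle\sum_{j \in N} c_j x_j & \\
\text{subject to} & \displaystyle\sum_{j \in N} a_{ij} x_j \ge b_i, & i \in M_G, \\
& \displaystyle\sum_{j \in N} a_{ij} x_j \le b_i, & i \in M_L, \\
& \displaystyle\sum_{j \in N} a_{ij} x_j = b_i, & i \in M_E, \\
& x_j \in \{0, 1\}, & j \in N,
\end{array}
\tag{1}
$$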

∗ A preliminary version of this paper was presented in [39].

† Graduate School of Information Science and Technology, Osaka University, 1-5 Yamadaoka, Suita, Osaka, 565-0871, Japan. [email protected]

arXiv:1604.08448v1 [cs.DS] 28 Apr 2016

where $a_{ij} \in \{0, 1\}$ and $b_i \in \mathbb{Z}_+$ ($\mathbb{Z}_+$ is the set of non-negative integers). We note that $a_{ij} = 1$ if $i \in S_j$ holds and $a_{ij} = 0$ otherwise, and $x_j = 1$ if $j \in X$ and $x_j = 0$ otherwise. That is, a column vector $a_j = (a_{1j}, \dots, a_{mj})^T$ of the matrix $(a_{ij})$ represents the corresponding subset $S_j$ by $S_j = \{i \in M_G \cup M_L \cup M_E \mid a_{ij} = 1\}$, and the vector $x$ also represents the corresponding cover (or partition) $X$ by $X = \{j \in N \mid x_j = 1\}$. For notational convenience, for each $i \in M_G \cup M_L \cup M_E$, let $N_i = \{j \in N \mid a_{ij} = 1\}$ be the index set of subsets $S_j$ that contain the element $i$, and let $s_i(x) = \sum_{j \in N} a_{ij} x_j$ be the left-hand side of the $i$th constraint.
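The notation above can be illustrated with a minimal sketch. It assumes 0-based indices, a column-wise storage of the matrix as a list of sets (`subsets[j]` plays the role of $S_j$), and a string `sense` whose $i$th character marks row $i$ as belonging to $M_G$, $M_L$ or $M_E$; the function names and the toy instance are hypothetical and not taken from the paper.

```python
from typing import List, Sequence, Set


def lhs_values(subsets: Sequence[Set[int]], x: Sequence[int], m: int) -> List[int]:
    """Return [s_0(x), ..., s_{m-1}(x)], where a_ij = 1 iff i is in subsets[j]."""
    s = [0] * m
    for j, members in enumerate(subsets):
        if x[j] == 1:              # only selected columns contribute
            for i in members:
                s[i] += 1
    return s


def is_feasible(s: Sequence[int], b: Sequence[int], sense: str) -> bool:
    """sense[i] is 'G' (row in M_G), 'L' (row in M_L) or 'E' (row in M_E)."""
    for s_i, b_i, kind in zip(s, b, sense):
        if kind == "G" and s_i < b_i:
            return False
        if kind == "L" and s_i > b_i:
            return False
        if kind == "E" and s_i != b_i:
            return False
    return True


# Hypothetical toy instance: three elements and three subsets.
subsets = [{0, 1}, {1, 2}, {2}]
s = lhs_values(subsets, [1, 1, 0], m=3)
print(s, is_feasible(s, [1, 1, 1], "GGG"), is_feasible(s, [1, 1, 1], "EEE"))
```

With all rows in $M_G$ and $b_i = 1$ the program is an SCP, and with all rows in $M_E$ it is an SPP; the selection $x = (1, 1, 0)$ in the toy instance is a cover but not a partition, because the second element is covered twice.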

The SCP and SPP are known to be NP-hard in the strong sense, and no polynomial time approximation scheme (PTAS) exists unless P = NP. However, worst-case performance analysis does not necessarily represent the experimental performance in practice. Continuous development of mathematical programming has much improved the performance of heuristic algorithms, and this has been accompanied by advances in computing machinery. Many efficient exact and heuristic algorithms for large-scale SCP and SPP instances have been developed [3, 5, 7, 10, 13, 15, 16, 17, 19, 28, 37, 42, 44], many of which are based on variable fixing techniques that reduce the search space to be explored by using the optimal values obtained by linear programming (LP) and/or Lagrangian relaxation as lower bounds. However, many large-scale SCP and SPP instances still remain unsolved because there is little hope of closing the large gap between the lower and upper bounds of the optimal values. In particular, the equality constraints of SPP often make variable fixing techniques less effective because they often prevent solutions from containing highly evaluated variables together. In this paper, we consider an alternative approach for extracting useful features from the instance to be solved with the aim of reducing the search space of local search algorithms for large-scale SCP and SPP instances.

In the design of local search algorithms for large-scale combinatorial problems, as the instance size increases, improving the computational efficiency becomes more effective than using sophisticated search strategies. The quality of locally optimal solutions typically improves if a larger neighborhood is used. However, the computation time of searching the neighborhood also increases exponentially. To overcome this, extensive research has investigated ways to efficiently implement neighborhood search, which can be broadly classified into three types: (i) reducing the number of candidates in the neighborhood [33, 35, 43, 44], (ii) evaluating solutions by incremental computation [29, 43, 40, 41], and (iii) reducing the number of variables to be considered by using linear programming and/or Lagrangian relaxation [15, 19, 38, 44].

To suggest an alternative, we develop a data mining approach for reducing the search space of local search algorithms. That is, we construct a k-nearest neighbor graph by extracting variable associations from the instance to be solved in order to identify promising pairs of swapping variables in the large neighborhood search. We also develop a 4-flip neighborhood local search algorithm that flips four variables alternately along 4-paths or 4-cycles in the k-nearest neighbor graph (Section 3). We incorporate an efficient incremental evaluation of solutions (Section 2) and an adaptive control of penalty weights (Section 4) into the 4-flip neighborhood local search algorithm.

2 2-flip neighborhood local search

Local search (LS) starts from an initial solution $x$ and then iteratively replaces $x$ with a better solution $x'$ in the neighborhood $\mathrm{NB}(x)$ until no better solution is found in $\mathrm{NB}(x)$. For some positive integer $r$, let the $r$-flip neighborhood $\mathrm{NB}_r(x)$ be the set of solutions obtainable by flipping at most $r$ variables in $x$. We first develop a 2-flip neighborhood local search (2-FNLS) algorithm as a basic component of our algorithm. In order to improve efficiency, the 2-FNLS first searches $\mathrm{NB}_1(x)$, and then searches $\mathrm{NB}_2(x) \setminus \mathrm{NB}_1(x)$ only if $x$ is locally optimal with respect to $\mathrm{NB}_1(x)$.

The BIP is NP-hard, and the (supposedly) simpler problem of judging the existence of a feasible solution is NP-complete, since the satisfiability (SAT) problem can be reduced to this problem. We accordingly consider the following formulation of a BIP that allows violations of the constraints and introduce over and under penalty functions with penalty weight vectors $w^+, w^- \in \mathbb{R}^m_+$:

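$$
\begin{array}{lll}
\text{minimize} & z(x) = \displaystyle\sum_{j \in N} c_j x_j + \sum_{i \in M_L \cup M_E} w_i^+ y_i^+ + \sum_{i \in M_G \cup M_E} w_i^- y_i^- & \\
\text{subject to} & \displaystyle\sum_{j \in N} a_{ij} x_j + y_i^- \ge b_i, & i \in M_G, \\
& \displaystyle\sum_{j \in N} a_{ij} x_j - y_i^+ \le b_i, & i \in M_L, \\
& \displaystyle\sum_{j \in N} a_{ij} x_j + y_i^- - y_i^+ = b_i, & i \in M_E, \\
& x_j \in \{0, 1\}, & j \in N, \\
& y_i^+ \ge 0, & i \in M_L \cup M_E, \\
& y_i^- \ge 0, & i \in M_G \cup M_E.
\end{array}
\tag{2}
$$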

For a given $x \in \{0, 1\}^n$, we can easily compute optimal $y_i^+ = |s_i(x) - b_i|_+$ and $y_i^- = |b_i - s_i(x)|_+$, where we denote $|x|_+ = \max\{x, 0\}$.

Because the region searched by a single application of LS is limited, LS is usually applied many times. When a locally optimal solution is found, a standard strategy is to update the penalty weights and to resume LS from the obtained locally optimal solution. We accordingly evaluate solutions by using an alternative function $\tilde{z}(x)$, where the original penalty weight vectors $w^+, w^-$ are replaced by $\tilde{w}^+, \tilde{w}^-$, and these are adaptively controlled during the search (see Section 4 for details).
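As a minimal sketch of how the penalized objective in (2) can be evaluated for a given $x$, the following function computes the optimal $y_i^+$ and $y_i^-$ row by row and adds the weighted violations to the cost. The data layout (`subsets`, `sense`) and the name `penalized_cost` are assumptions made for illustration; passing $\tilde{w}^+, \tilde{w}^-$ instead of $w^+, w^-$ gives $\tilde{z}(x)$.

```python
from typing import Sequence, Set


def penalized_cost(c: Sequence[float], subsets: Sequence[Set[int]],
                   x: Sequence[int], b: Sequence[int], sense: str,
                   w_plus: Sequence[float], w_minus: Sequence[float]) -> float:
    """Evaluate z(x) of formulation (2); sense[i] is 'G', 'L' or 'E'."""
    m = len(b)
    s = [0] * m
    for j, members in enumerate(subsets):
        if x[j] == 1:
            for i in members:
                s[i] += 1
    z = float(sum(c_j for c_j, x_j in zip(c, x) if x_j == 1))
    for i in range(m):
        y_plus = max(s[i] - b[i], 0)     # excess, penalized on M_L and M_E rows
        y_minus = max(b[i] - s[i], 0)    # shortage, penalized on M_G and M_E rows
        if sense[i] in "LE":
            z += w_plus[i] * y_plus
        if sense[i] in "GE":
            z += w_minus[i] * y_minus
    return z
```

This direct evaluation touches every non-zero of the selected columns, which is why it is only suitable as a reference against which faster incremental evaluations can be checked.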

We first describe how 2-FNLS is used to search $\mathrm{NB}_1(x)$, which is called the 1-flip neighborhood. Let $\Delta\tilde{z}_j^{\uparrow}(x)$ and $\Delta\tilde{z}_j^{\downarrow}(x)$ denote the increases in $\tilde{z}(x)$ due to flipping $x_j = 0 \to 1$ and $x_j = 1 \to 0$, respectively. 2-FNLS first searches for an improved solution by flipping $x_j = 0 \to 1$ for $j \in N \setminus X$. If an improved solution is found, it chooses $j$ that has the minimum value of $\Delta\tilde{z}_j^{\uparrow}(x)$; otherwise, it searches for an improved solution by flipping $x_j = 1 \to 0$ for $j \in X$.

We next describe how 2-FNLS is used to search $\mathrm{NB}_2(x) \setminus \mathrm{NB}_1(x)$, which is called the 2-flip neighborhood. We derive conditions that reduce the number of candidates in $\mathrm{NB}_2(x) \setminus \mathrm{NB}_1(x)$ without sacrificing the solution quality by expanding the results shown in [44]. Let $\Delta\tilde{z}_{j_1,j_2}(x)$ denote the increase in $\tilde{z}(x)$ due to simultaneously flipping the values of $x_{j_1}$ and $x_{j_2}$.

Lemma 1. If a solution $x$ is locally optimal with respect to $\mathrm{NB}_1(x)$, then $\Delta\tilde{z}_{j_1,j_2}(x) < 0$ holds only if $x_{j_1} \ne x_{j_2}$.

Proof. See Appendix A.

Based on this lemma, we consider only the set of solutions obtainable by simultaneously flipping $x_{j_1} = 1 \to 0$ and $x_{j_2} = 0 \to 1$. We now define

$$
\Delta\tilde{z}_{j_1,j_2}(x) = \Delta\tilde{z}_{j_1}^{\downarrow}(x) + \Delta\tilde{z}_{j_2}^{\uparrow}(x) - \sum_{i \in S(x) \cap (M_L \cup M_E)} \tilde{w}_i^+ - \sum_{i \in S(x) \cap (M_G \cup M_E)} \tilde{w}_i^-,
\tag{3}
$$

where $S(x) = \{i \in S_{j_1} \cap S_{j_2} \mid s_i(x) = b_i\}$. By Lemma 1, the 2-flip neighborhood can be restricted to the set of solutions satisfying $x_{j_1} \ne x_{j_2}$ and $S(x) \ne \emptyset$. However, it might not be possible to search this set efficiently without first extracting it. We thus construct a neighbor list that stores promising pairs of variables $x_{j_1}$ and $x_{j_2}$ for efficiency (see Section 3 for details).
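Equation (3) can be checked numerically. The sketch below is an illustration under stated assumptions: the closed forms used in `delta_up` and `delta_down` are derived here from formulation (2) rather than quoted from the paper, the instance is bundled as a hypothetical tuple `inst = (c, subsets, b, sense, wp, wm)`, and `verify` compares (3) against a full re-evaluation for every solution of a small instance.

```python
from itertools import product


def z_tilde(inst, x):
    """Reference evaluation: returns (penalized objective, list of s_i(x))."""
    c, subsets, b, sense, wp, wm = inst
    s = [0] * len(b)
    for j, members in enumerate(subsets):
        if x[j]:
            for i in members:
                s[i] += 1
    z = sum(c[j] for j in range(len(x)) if x[j])
    for i, kind in enumerate(sense):
        if kind in "LE":
            z += wp[i] * max(s[i] - b[i], 0)
        if kind in "GE":
            z += wm[i] * max(b[i] - s[i], 0)
    return z, s


def delta_up(j, inst, s):
    """Increase in the penalized objective when x_j goes 0 -> 1."""
    c, subsets, b, sense, wp, wm = inst
    d = c[j]
    for i in subsets[j]:
        if sense[i] in "LE" and s[i] >= b[i]:
            d += wp[i]           # excess grows by one
        if sense[i] in "GE" and s[i] < b[i]:
            d -= wm[i]           # shortage shrinks by one
    return d


def delta_down(j, inst, s):
    """Increase in the penalized objective when x_j goes 1 -> 0."""
    c, subsets, b, sense, wp, wm = inst
    d = -c[j]
    for i in subsets[j]:
        if sense[i] in "LE" and s[i] > b[i]:
            d -= wp[i]           # excess shrinks by one
        if sense[i] in "GE" and s[i] <= b[i]:
            d += wm[i]           # shortage grows by one
    return d


def delta_swap(j1, j2, inst, s):
    """Equation (3): flip x_j1 = 1 -> 0 and x_j2 = 0 -> 1 together."""
    _, subsets, b, sense, wp, wm = inst
    d = delta_down(j1, inst, s) + delta_up(j2, inst, s)
    for i in subsets[j1] & subsets[j2]:
        if s[i] == b[i]:         # i is in S(x): both single-flip terms overcount
            if sense[i] in "LE":
                d -= wp[i]
            if sense[i] in "GE":
                d -= wm[i]
    return d


def verify(inst):
    """Compare (3) with full re-evaluation over all solutions and all swaps."""
    n = len(inst[0])
    for bits in product([0, 1], repeat=n):
        x = list(bits)
        z0, s = z_tilde(inst, x)
        for j1 in range(n):
            for j2 in range(n):
                if x[j1] == 1 and x[j2] == 0:
                    y = list(x)
                    y[j1], y[j2] = 0, 1
                    if delta_swap(j1, j2, inst, s) != z_tilde(inst, y)[0] - z0:
                        return False
    return True
```

The correction term is needed only for rows in $S_{j_1} \cap S_{j_2}$ with $s_i(x) = b_i$: such a row is unchanged by the swap, yet the single-flip terms each charge it a penalty.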

To increase the efficiency of 2-FNLS, we decompose the neighborhood $\mathrm{NB}_2(x)$ into a number of sub-neighborhoods. Let $\mathrm{NB}_2^{(j_1)}(x)$ denote the subset of $\mathrm{NB}_2(x)$ obtainable by flipping $x_{j_1} = 1 \to 0$. 2-FNLS searches $\mathrm{NB}_2^{(j_1)}(x)$ for each $j_1 \in X$ in ascending order of $\Delta\tilde{z}_{j_1}^{\downarrow}(x)$. If an improved solution is found, the pair $j_1$ and $j_2$ that has the minimum value of $\Delta\tilde{z}_{j_1,j_2}(x)$ among $\mathrm{NB}_2^{(j_1)}(x)$ is selected. 2-FNLS is formally described as follows.

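Algorithm 2-FNLS($x$, $\tilde{w}^+$, $\tilde{w}^-$)

Input: A solution $x$ and penalty weight vectors $\tilde{w}^+$ and $\tilde{w}^-$.

Output: A solution $x$.

Step 1: If $I_1^{\uparrow}(x) = \{j \in N \setminus X \mid \Delta\tilde{z}_j^{\uparrow}(x) < 0\} \ne \emptyset$ holds, choose $j \in I_1^{\uparrow}(x)$ that has the minimum value of $\Delta\tilde{z}_j^{\uparrow}(x)$, set $x_j \leftarrow 1$, and return to Step 1.

Step 2: If $I_1^{\downarrow}(x) = \{j \in X \mid \Delta\tilde{z}_j^{\downarrow}(x) < 0\} \ne \emptyset$ holds, choose $j \in I_1^{\downarrow}(x)$ that has the minimum value of $\Delta\tilde{z}_j^{\downarrow}(x)$, set $x_j \leftarrow 0$, and return to Step 2.

Step 3: For each $j_1 \in X$ in ascending order of $\Delta\tilde{z}_{j_1}^{\downarrow}(x)$, if $I_2^{(j_1)}(x) = \{j_2 \in N \setminus X \mid \Delta\tilde{z}_{j_1,j_2}(x) < 0\} \ne \emptyset$ holds, choose $j_2 \in I_2^{(j_1)}(x)$ that has the minimum value of $\Delta\tilde{z}_{j_1,j_2}(x)$ and set $x_{j_1} \leftarrow 0$ and $x_{j_2} \leftarrow 1$. If the current solution $x$ has been updated at least once in Step 3, return to Step 1; otherwise, output $x$ and exit.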
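The control flow of Steps 1 to 3 can be sketched as follows. This is a deliberately naive illustration, not the implementation evaluated in this paper: every move is scored by fully re-evaluating a caller-supplied function `evaluate` (standing in for the penalized objective), with no neighbor list and no incremental evaluation. The names `flip`, `two_fnls`, `is_locally_optimal` and `toy_cost` are hypothetical.

```python
def flip(x, *indices):
    """Return a copy of x with the given positions flipped."""
    y = list(x)
    for j in indices:
        y[j] = 1 - y[j]
    return y


def two_fnls(x, evaluate):
    """Naive 2-flip neighborhood local search following Steps 1-3."""
    x = list(x)
    n = len(x)
    while True:
        # Steps 1 and 2: repeat the best improving 0->1 flips, then 1->0 flips.
        for value in (0, 1):
            while True:
                z = evaluate(x)
                cands = [(evaluate(flip(x, j)) - z, j) for j in range(n) if x[j] == value]
                delta, j = min(cands, default=(0, None))
                if delta >= 0:
                    break
                x[j] = 1 - value
        # Step 3: for each j1 in X (ascending single-flip increase), best swap.
        z = evaluate(x)
        order = sorted((evaluate(flip(x, j)) - z, j) for j in range(n) if x[j] == 1)
        updated = False
        for _, j1 in order:
            z = evaluate(x)
            cands = [(evaluate(flip(x, j1, j2)) - z, j2) for j2 in range(n) if x[j2] == 0]
            delta, j2 = min(cands, default=(0, None))
            if delta < 0:
                x[j1], x[j2] = 0, 1
                updated = True
        if not updated:
            return x


def is_locally_optimal(x, evaluate):
    """True if no single flip and no 1->0 / 0->1 swap improves evaluate(x)."""
    z = evaluate(x)
    n = len(x)
    for j in range(n):
        if evaluate(flip(x, j)) < z:
            return False
    for j1 in range(n):
        for j2 in range(n):
            if x[j1] == 1 and x[j2] == 0 and evaluate(flip(x, j1, j2)) < z:
                return False
    return True


def toy_cost(x):
    """Hypothetical SPP-like objective: cost plus 10 per unit of violation."""
    c = [3, 2, 2, 4]
    subsets = [{0, 1}, {1, 2}, {2}, {0, 2}]
    s = [0, 0, 0]
    for j, members in enumerate(subsets):
        if x[j]:
            for i in members:
                s[i] += 1
    return sum(c[j] for j in range(4) if x[j]) + 10 * sum(abs(s_i - 1) for s_i in s)
```

Calling `two_fnls([0, 0, 0, 0], toy_cost)` on this toy instance stops at a solution that violates one equality constraint, which illustrates why the search is restarted with adjusted penalty weights in Section 4.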

If implemented naively, 2-FNLS requires $O(\sigma)$ time to compute the value of the evaluation function $\tilde{z}(x)$ for the current solution $x$, where $\sigma = \sum_{i \in M} \sum_{j \in N} a_{ij}$ denotes the number of non-zero elements in the constraint matrix $(a_{ij})$. This computation is quite expensive if we evaluate the neighbor solutions of the current solution $x$ independently. To overcome this, we first develop a standard incremental evaluation of $\Delta\tilde{z}_j^{\uparrow}(x)$ and $\Delta\tilde{z}_j^{\downarrow}(x)$ in $O(|S_j|)$ time by keeping the values of the left-hand side of constraints $s_i(x)$ ($i \in M_G \cup M_L \cup M_E$) in memory. We further develop an improved incremental evaluation of $\Delta\tilde{z}_j^{\uparrow}(x)$ and $\Delta\tilde{z}_j^{\downarrow}(x)$ in $O(1)$ time by keeping additional auxiliary data in memory (see Appendix B for details). By using this, 2-FNLS is also able to evaluate $\Delta\tilde{z}_{j_1,j_2}(x)$ in $O(|S_j|)$ time by using (3).
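The standard $O(|S_j|)$ incremental evaluation can be sketched by keeping $s_i(x)$ and the current objective value in memory and touching only the rows of the flipped column. The class below is a hypothetical illustration of that idea only; it does not attempt the $O(1)$ variant, whose auxiliary data are described in Appendix B and are not reproduced here.

```python
class IncrementalState:
    """Keeps s_i(x) and the penalized objective so that one flip costs O(|S_j|)."""

    def __init__(self, c, subsets, b, sense, wp, wm):
        self.c, self.subsets, self.b = c, subsets, b
        self.sense, self.wp, self.wm = sense, wp, wm
        self.x = [0] * len(c)
        self.s = [0] * len(b)
        # Objective at x = 0: only shortages on M_G and M_E rows are penalized.
        self.z = sum(self._penalty(i, 0) for i in range(len(b)))

    def _penalty(self, i, s_i):
        """Penalty of row i when its left-hand side equals s_i."""
        p = 0
        if self.sense[i] in "LE":
            p += self.wp[i] * max(s_i - self.b[i], 0)
        if self.sense[i] in "GE":
            p += self.wm[i] * max(self.b[i] - s_i, 0)
        return p

    def delta(self, j):
        """Increase in the objective from flipping x_j; scans only rows in S_j."""
        step = -1 if self.x[j] else 1
        d = step * self.c[j]
        for i in self.subsets[j]:
            d += self._penalty(i, self.s[i] + step) - self._penalty(i, self.s[i])
        return d

    def flip(self, j):
        """Apply the flip and update s and z incrementally."""
        d = self.delta(j)
        step = -1 if self.x[j] else 1
        for i in self.subsets[j]:
            self.s[i] += step
        self.x[j] = 1 - self.x[j]
        self.z += d
        return d

    def recompute(self):
        """O(sigma) reference value used to check the incremental bookkeeping."""
        s = [0] * len(self.b)
        for j, members in enumerate(self.subsets):
            if self.x[j]:
                for i in members:
                    s[i] += 1
        cost = sum(self.c[j] for j in range(len(self.x)) if self.x[j])
        return cost + sum(self._penalty(i, s[i]) for i in range(len(self.b)))
```

A simple way to use the sketch is to apply an arbitrary sequence of calls to `flip` and confirm after each one that `z` agrees with `recompute()`.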

3 Exploiting variable associations

It is known that the quality of locally optimal solutions improves if a larger neighborhood is used. However, the computation time to search the neighborhood $\mathrm{NB}_r(x)$ also increases exponentially with $r$, since $|\mathrm{NB}_r(x)| = O(n^r)$ generally holds. A large amount of computation time is thus needed in practice in order to scan all candidates in $\mathrm{NB}_2(x)$ for large-scale instances with millions of variables. To overcome this, we develop a data mining approach that identifies promising pairs of flipping variables in $\mathrm{NB}_2(x)$ by extracting variable associations from the instance to be solved using only a small amount of computation time.

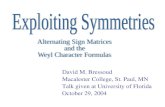

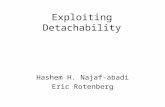

Based on Lemma 1, the 2-flip neighborhood can be restricted to the set of solutions satisfying $x_{j_1} \ne x_{j_2}$ and $S(x) \ne \emptyset$. We further observe from (3) that it is favorable to select pairs of flipping variables $x_{j_1}$ and $x_{j_2}$ that give a larger size $|S_{j_1} \cap S_{j_2}|$ in order to obtain $\Delta\tilde{z}_{j_1,j_2}(x) < 0$. Based on this observation, we keep in memory a limited set of pairs of variables $x_{j_1}$ and $x_{j_2}$ for which $|S_{j_1} \cap S_{j_2}|$ is large, and we call this the neighbor list (Figure 1). We note that $|S_{j_1} \cap S_{j_2}|$ represents a certain kind of similarity between the subsets $S_{j_1}$ and $S_{j_2}$ (or column vectors $a_{j_1}$ and $a_{j_2}$ of the constraint matrix $(a_{ij})$), and we keep the k-nearest neighbors for each subset $S_j$ ($j \in N$) in the neighbor list.

Figure 1: Example of a neighbor list

For each variable $x_{j_1}$ ($j_1 \in N$), we first enumerate $x_{j_2}$ ($j_2 \in N$) satisfying $j_2 \ne j_1$ and $S_{j_1} \cap S_{j_2} \ne \emptyset$ to generate the set $L[j_1]$, and store the first $\min\{|N^{(j_1)}|, \alpha |M_G \cup M_L \cup M_E|\}$ variables $x_{j_2}$ ($j_2 \in L[j_1]$) in descending order of $|S_{j_1} \cap S_{j_2}|$ in the $j_1$th row of the neighbor list, where $N^{(j_1)} = \{j_2 \in N \mid j_2 \ne j_1, S_{j_1} \cap S_{j_2} \ne \emptyset\}$ and $\alpha$ is a program parameter that we set to five. Let $L'[j_1]$ be the set of variables $x_{j_2}$ stored in the $j_1$th row of the neighbor list. We then reduce the number of candidates in $\mathrm{NB}_2(x)$ by restricting the pairs of flipping variables $x_{j_1}$ and $x_{j_2}$ to pairs in the neighbor list, i.e., $j_1 \in X$ and $j_2 \in (N \setminus X) \cap L'[j_1]$.
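One row of the neighbor list can be built by counting, for each row $i \in S_{j_1}$, the other columns in $N_i$. The sketch below assumes 0-based indices and a row-wise index `rows[i]` playing the role of $N_i$; breaking ties by column index is an assumption of this illustration, and the names `build_rows` and `neighbor_row` are hypothetical.

```python
from collections import Counter
from typing import List, Sequence, Set


def build_rows(subsets: Sequence[Set[int]], m: int) -> List[List[int]]:
    """rows[i] lists the columns j with i in subsets[j], i.e. the set N_i."""
    rows: List[List[int]] = [[] for _ in range(m)]
    for j, members in enumerate(subsets):
        for i in members:
            rows[i].append(j)
    return rows


def neighbor_row(j1: int, subsets: Sequence[Set[int]],
                 rows: Sequence[Sequence[int]], limit: int) -> List[int]:
    """Up to `limit` columns j2 != j1 in descending order of |S_j1 & S_j2|."""
    overlap: Counter = Counter()
    for i in subsets[j1]:
        for j2 in rows[i]:
            if j2 != j1:
                overlap[j2] += 1      # one shared row between S_j1 and S_j2
    ranked = sorted(overlap, key=lambda j2: (-overlap[j2], j2))
    return ranked[:limit]             # slicing realizes the min{...} cap


# Hypothetical toy instance with m = 5 rows and alpha = 5.
subsets = [{0, 1, 2}, {1, 2}, {2, 3}, {4}]
rows = build_rows(subsets, m=5)
print(neighbor_row(0, subsets, rows, limit=5 * 5))
```

Only columns that share at least one row with $S_{j_1}$ are ever touched, so the cost of one row depends on the sizes of the sets $N_i$ for $i \in S_{j_1}$ rather than on $n$.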

We note that it is still expensive to construct the whole neighbor list for large-scale instances with millions of variables. To overcome this, we develop a lazy construction algorithm for the neighbor list. That is, 2-FNLS starts from an empty neighbor list and generates the $j_1$th row of the neighbor list $L'[j_1]$ only when 2-FNLS searches $\mathrm{NB}_2^{(j_1)}(x)$ for the first time.
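Lazy construction amounts to caching each row on first access. The following sketch assumes a caller-supplied function `build_row` that returns the row for a given column (any row-construction routine would do); the class name and the `completion_rate` helper, which reports the proportion of generated rows, are hypothetical.

```python
from typing import Callable, Dict, List


class LazyNeighborList:
    """Generates row j1 of the neighbor list only when it is first requested."""

    def __init__(self, n: int, build_row: Callable[[int], List[int]]):
        self.n = n                        # number of variables (rows of the list)
        self.build_row = build_row
        self._rows: Dict[int, List[int]] = {}

    def __getitem__(self, j1: int) -> List[int]:
        row = self._rows.get(j1)
        if row is None:                   # first visit: build and remember
            row = self.build_row(j1)
            self._rows[j1] = row
        return row

    def completion_rate(self) -> float:
        """Proportion of rows generated so far."""
        return len(self._rows) / self.n


# Usage with a stand-in row builder (illustrative only).
neighbors = LazyNeighborList(4, lambda j1: [(j1 + 1) % 4])
neighbors[2]
print(neighbors.completion_rate())
```

Because rows are requested only for variables $j_1 \in X$ that the search actually visits, the list can stay far from complete on large instances.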

Figure 2: Example of the k-nearest neighbor graph

We can treat the neighbor list as an adjacency-list representation of a directed graph, and represent associations between variables by a corresponding directed graph called the k-nearest neighbor graph (Figure 2). Using the k-nearest neighbor graph, we extend 2-FNLS to search a set of promising neighbor solutions in $\mathrm{NB}_4(x)$. For each variable $x_{j_1}$ ($j_1 \in X$), we keep $j_2 \in (N \setminus X) \cap L'[j_1]$ that has the minimum value of $\Delta\tilde{z}_{j_1,j_2}(x)$ in memory as $\pi(j_1)$. The extended 2-FNLS, called the 4-flip neighborhood search (4-FNLS) algorithm, then searches for an improved solution by flipping $x_{j_1} = 1 \to 0$, $x_{\pi(j_1)} = 0 \to 1$, $x_{j_3} = 1 \to 0$ and $x_{\pi(j_3)} = 0 \to 1$ for $j_1 \in X$ and $j_3 \in X \cap L'[\pi(j_1)]$ satisfying $j_1 \ne j_3$ and $\pi(j_1) \ne \pi(j_3)$, i.e., flipping the values of variables alternately along 4-paths or 4-cycles in the k-nearest neighbor graph. Let $\Delta\tilde{z}_{j_1,j_2,j_3,j_4}(x)$ denote the increase in $\tilde{z}(x)$ due to simultaneously flipping $x_{j_1} = 1 \to 0$, $x_{j_2} = 0 \to 1$, $x_{j_3} = 1 \to 0$ and $x_{j_4} = 0 \to 1$. 4-FNLS computes $\Delta\tilde{z}_{j_1,j_2,j_3,j_4}(x)$ in $O(|S_j|)$ time by applying the standard incremental evaluation alternately. 4-FNLS is formally described by replacing the part of the 2-FNLS algorithm after Step 2 with the following:

Step 3′: For each $j_1 \in X$ in ascending order of $\Delta\tilde{z}_{j_1}^{\downarrow}(x)$, if $I_2^{(j_1)}(x) = \{j_2 \in (N \setminus X) \cap L'[j_1] \mid \Delta\tilde{z}_{j_1,j_2}(x) < 0\} \ne \emptyset$ holds, choose $j_2 \in I_2^{(j_1)}(x)$ that has the minimum value of $\Delta\tilde{z}_{j_1,j_2}(x)$ and set $x_{j_1} \leftarrow 0$ and $x_{j_2} \leftarrow 1$. If the current solution $x$ has been updated at least once in Step 3′, return to Step 1.

Step 4′: For each $j_1 \in X$ in ascending order of $\Delta\tilde{z}_{j_1,\pi(j_1)}(x)$, if $I_4^{(j_1)}(x) = \{j_3 \in X \cap L'[\pi(j_1)] \mid j_3 \ne j_1, \pi(j_3) \ne \pi(j_1), \Delta\tilde{z}_{j_1,\pi(j_1),j_3,\pi(j_3)}(x) < 0\} \ne \emptyset$ holds, choose $j_3 \in I_4^{(j_1)}(x)$ that has the minimum value of $\Delta\tilde{z}_{j_1,\pi(j_1),j_3,\pi(j_3)}(x)$ and set $x_{j_1} \leftarrow 0$, $x_{\pi(j_1)} \leftarrow 1$, $x_{j_3} \leftarrow 0$ and $x_{\pi(j_3)} \leftarrow 1$. If the current solution $x$ has been updated at least once in Step 4′, return to Step 1; otherwise, output $x$ and exit.
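The candidate set scanned in Step 4′ can be enumerated as in the sketch below. It only generates the index quadruples $(j_1, \pi(j_1), j_3, \pi(j_3))$ that satisfy the stated conditions; it does not evaluate $\Delta\tilde{z}$ and visits $j_1$ in index order rather than in the ascending order used by the algorithm. The dictionaries `neighbor` (for $L'$) and `pi` (for $\pi$) and the function name are assumptions made for illustration.

```python
from typing import Dict, Iterator, List, Set, Tuple


def four_flip_candidates(X: Set[int], neighbor: Dict[int, List[int]],
                         pi: Dict[int, int]) -> Iterator[Tuple[int, int, int, int]]:
    """Yield (j1, pi[j1], j3, pi[j3]) with j3 in X and in neighbor[pi[j1]]."""
    for j1 in sorted(X):
        j2 = pi.get(j1)
        if j2 is None:                    # no stored best partner for j1
            continue
        for j3 in neighbor.get(j2, []):   # row of the neighbor list for pi(j1)
            j4 = pi.get(j3)
            if j3 in X and j3 != j1 and j4 is not None and j4 != j2:
                yield (j1, j2, j3, j4)


# Hypothetical data: X = {0, 1}, with pi(0) = 2 and pi(1) = 3.
moves = list(four_flip_candidates({0, 1}, {2: [1, 0], 3: [0]}, {0: 2, 1: 3}))
print(moves)
```

Each quadruple alternates between a variable leaving $X$ and a variable entering it, which is the alternating walk along a 4-path or 4-cycle of the k-nearest neighbor graph described above.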

Although a similar approach has been developed in local search algorithms for the Euclidean traveling salesman problem (TSP), in which a sorted list containing only the k-nearest neighbors is stored for each city by using a geometric data structure called the k-dimensional tree [26], it is not suitable for finding the k-nearest neighbors efficiently in high-dimensional spaces. We thus extend it for application to the high-dimensional column vectors $a_j \in \{0, 1\}^m$ ($j \in N$) of BIPs by using a lazy construction algorithm for the neighbor list.

4 Adaptive control of penalty weights

In our algorithm, solutions are evaluated by the alternative evaluation function $\tilde{z}(x)$, in which the fixed penalty weight vectors $w^+, w^-$ in the original evaluation function $z(x)$ have been replaced by $\tilde{w}^+, \tilde{w}^-$, and the values of $\tilde{w}_i^+$ ($i \in M_L \cup M_E$), $\tilde{w}_i^-$ ($i \in M_G \cup M_E$) are adaptively controlled in the search.

It is often reported that a single application of LS tends to stop at a locally optimal solution of insufficient quality when large penalty weights are used. This is because it is often unavoidable to temporarily increase the values of some violations $y_i^+$ and $y_i^-$ in order to reach an even better solution from a good solution through a sequence of neighborhood operations, and large penalty weights thus prevent LS from moving between such solutions. To overcome this, we incorporate an adaptive adjustment mechanism for determining appropriate values of penalty weights $\tilde{w}_i^+$ ($i \in M_L \cup M_E$), $\tilde{w}_i^-$ ($i \in M_G \cup M_E$) [32, 44, 38]. That is, LS is applied iteratively while updating the values of the penalty weights $\tilde{w}_i^+$ ($i \in M_L \cup M_E$), $\tilde{w}_i^-$ ($i \in M_G \cup M_E$) after each call to LS. We call this sequence of calls to LS the weighting local search (WLS) according to [34, 36]. This strategy is also referred to as the breakout algorithm [31] and the dynamic local search [25] in the literature.

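Let $\tilde{x}$ denote the solution at which the previous local search stops. We assume that the original penalty weights $w_i^+$ ($i \in M_L \cup M_E$), $w_i^-$ ($i \in M_G \cup M_E$) are sufficiently large. WLS resumes LS from $\tilde{x}$ after updating the penalty weight vectors $\tilde{w}^+, \tilde{w}^-$. Starting from the original penalty weight vectors $(\tilde{w}^+, \tilde{w}^-) \leftarrow (w^+, w^-)$, the penalty weight vectors $\tilde{w}^+, \tilde{w}^-$ are updated as follows. Let $x^*$ denote the best solution obtained so far with respect to the original evaluation function $z(x)$. If $\tilde{z}(\tilde{x}) \ge z(x^*)$ holds, WLS uniformly decreases the penalty weights by $(\tilde{w}^+, \tilde{w}^-) \leftarrow \beta (\tilde{w}^+, \tilde{w}^-)$, where $0 < \beta < 1$ is a program parameter that is adaptively computed so that the new value of $\Delta\tilde{z}_j^{\downarrow}(\tilde{x})$ becomes negative for 10% of variables $x_j$ ($j \in X$). Otherwise, WLS increases the penalty weights by

$$
\begin{aligned}
\tilde{w}_i^+ &\leftarrow \tilde{w}_i^+ + \frac{z(x^*) - \tilde{z}(\tilde{x})}{\sum_{l \in M} \left( (y_l^+)^2 + (y_l^-)^2 \right)} \, y_i^+, & i \in M_L \cup M_E, \\
\tilde{w}_i^- &\leftarrow \tilde{w}_i^- + \frac{z(x^*) - \tilde{z}(\tilde{x})}{\sum_{l \in M} \left( (y_l^+)^2 + (y_l^-)^2 \right)} \, y_i^-, & i \in M_G \cup M_E.
\end{aligned}
\tag{4}
$$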
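The two update rules can be sketched as plain functions. This is an illustration under stated assumptions: the violations are passed as lists `y_plus` and `y_minus` with zeros on rows where the corresponding penalty does not apply, the value of $\beta$ is supplied by the caller (its adaptive computation is not reproduced here), and leaving the weights unchanged when there is no violation is a guard added for this sketch. Function and argument names are hypothetical.

```python
from typing import List, Sequence, Tuple


def decrease_weights(wp: Sequence[float], wm: Sequence[float],
                     beta: float) -> Tuple[List[float], List[float]]:
    """Uniform decrease of both penalty weight vectors, with 0 < beta < 1."""
    return [beta * w for w in wp], [beta * w for w in wm]


def increase_weights(wp: Sequence[float], wm: Sequence[float],
                     y_plus: Sequence[float], y_minus: Sequence[float],
                     z_best: float, z_tilde_cur: float) -> Tuple[List[float], List[float]]:
    """Update (4): raise weights in proportion to the current violations."""
    denom = sum(yp * yp + ym * ym for yp, ym in zip(y_plus, y_minus))
    if denom == 0:                        # no violation: nothing to rescale
        return list(wp), list(wm)
    step = (z_best - z_tilde_cur) / denom
    new_wp = [w + step * yp for w, yp in zip(wp, y_plus)]
    new_wm = [w + step * ym for w, ym in zip(wm, y_minus)]
    return new_wp, new_wm


# Hypothetical numbers: two rows, excess 2 on row 0 and shortage 1 on row 1.
print(increase_weights([1.0, 1.0], [1.0, 1.0], [2, 0], [0, 1],
                       z_best=20.0, z_tilde_cur=10.0))
```

A short calculation shows the effect of (4): the total penalty of $\tilde{x}$ grows by exactly $z(x^*) - \tilde{z}(\tilde{x})$, so after the update $\tilde{z}(\tilde{x})$ equals $z(x^*)$.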

WLS iteratively applies LS, updating the penalty weight vectors $\tilde{w}^+, \tilde{w}^-$ after each call to LS until the time limit is reached. Note that we modify 4-FNLS to evaluate solutions with both $\tilde{z}(x)$ and $z(x)$, and update the best solution $x^*$ with respect to the original objective function $z(x)$ whenever an improved solution is found. WLS is formally described as follows. Note that we set the initial solution to $x = \mathbf{0}$ in practice.

Algorithm WLS($x$)

Input: An initial solution $x$.

Output: The best solution $x^*$ with respect to $z(x)$.

Step 1: Set $x^* \leftarrow x$, $\tilde{x} \leftarrow x$ and $(\tilde{w}^+, \tilde{w}^-) \leftarrow (w^+, w^-)$.

Step 2: Apply 4-FNLS($\tilde{x}$, $\tilde{w}^+$, $\tilde{w}^-$) to obtain an improved solution $\tilde{x}'$. Let $x'$ be the best solution with respect to the original evaluation function $z(x)$ obtained during the call to 4-FNLS($\tilde{x}$, $\tilde{w}^+$, $\tilde{w}^-$). Set $\tilde{x} \leftarrow \tilde{x}'$.

Step 3: If $z(x') < z(x^*)$ holds, then set $x^* \leftarrow x'$. If the time limit is reached, output $x^*$ and halt.

Step 4: If $\tilde{z}(\tilde{x}) \ge z(x^*)$ holds, then uniformly decrease the penalty weights by $(\tilde{w}^+, \tilde{w}^-) \leftarrow \beta (\tilde{w}^+, \tilde{w}^-)$; otherwise, increase the penalty weight vectors $(\tilde{w}^+, \tilde{w}^-)$ by (4). Return to Step 2.

5 Computational results

We report computational results of our algorithm for the SCP instances from [8, 38] and the SPP instances from [10, 20, 27]. Tables 1 and 2 summarize the information about the original and pre-solved instances. The first column shows the name of the group (or the instance), and the numbers in parentheses show the number of instances in the group. The second column “$z_{\mathrm{LP}}$” shows the optimal values of the LP relaxation problems. The third column “$z_{\mathrm{best}}$” shows the best upper bounds among all algorithms and settings in this paper. The fourth and sixth columns “#cst.” show the number of constraints, and the fifth and seventh columns “#var.” show the number of variables. Since several preprocessing techniques that often reduce the size of instances by removing redundant rows and columns are known [10], all algorithms are tested on the pre-solved instances. The instances marked “?” are hard instances that the latest mixed integer programming (MIP) solvers cannot solve to optimality even when given at least 1 h.

We first compare our algorithm with three of the latest MIP solvers called CPLEX12.6, Gurobi5.6.3, and SCIP3.1 [1], and a local search solver called LocalSolver3.1 [9]. LocalSolver3.1 is not the latest version, but it performs better than the latest version (LocalSolver4.5) for SCP and SPP instances. We also compare our algorithm with a 3-flip local search algorithm developed by [44] (denoted by YKI) for SCP instances. All algorithms are tested on a MacBook Pro laptop computer with a 2.7 GHz Intel Core i7 processor, and are run on a single thread with time limits as shown in Tables 1 and 2.

Table 1: Benchmark instances for SCP

| instance | $z_{\mathrm{LP}}$ | $z_{\mathrm{best}}$ | original #cst. | original #var. | pre-solved #cst. | pre-solved #var. | time limit |
|---|---|---|---|---|---|---|---|
| ?G.1–5 (5) | 149.48 | 166.4 | 1000.0 | 10000.0 | 1000.0 | 10000.0 | 600 s |
| ?H.1–5 (5) | 45.67 | 59.6 | 1000.0 | 10000.0 | 1000.0 | 10000.0 | 600 s |
| ?I.1–5 (5) | 138.97 | 158.0 | 1000.0 | 50000.0 | 1000.0 | 49981.0 | 1200 s |
| ?J.1–5 (5) | 104.78 | 129.0 | 1000.0 | 100000.0 | 1000.0 | 99944.8 | 1200 s |
| ?K.1–5 (5) | 276.67 | 313.2 | 2000.0 | 100000.0 | 2000.0 | 99971.0 | 1800 s |
| ?L.1–5 (5) | 209.34 | 258.0 | 2000.0 | 200000.0 | 2000.0 | 199927.6 | 1800 s |
| ?M.1–5 (5) | 415.78 | 549.8 | 5000.0 | 500000.0 | 5000.0 | 499988.0 | 3600 s |
| ?N.1–5 (5) | 348.93 | 503.8 | 5000.0 | 1000000.0 | 5000.0 | 999993.2 | 3600 s |
| RAIL507 | 172.15 | 174 | 507 | 63009 | 440 | 20700 | 600 s |
| RAIL516 | 182.00 | 182 | 516 | 47311 | 403 | 37832 | 600 s |
| RAIL582 | 209.71 | 211 | 582 | 55515 | 544 | 27427 | 600 s |
| RAIL2536 | 688.40 | 689 | 2536 | 1081841 | 2001 | 480597 | 3600 s |
| ?RAIL2586 | 935.92 | 947 | 2586 | 920683 | 2239 | 408724 | 3600 s |
| ?RAIL4284 | 1054.05 | 1064 | 4284 | 1092610 | 3633 | 607884 | 3600 s |
| ?RAIL4872 | 1509.64 | 1530 | 4872 | 968672 | 4207 | 482500 | 3600 s |

Table 2: Benchmark instances for SPP

| instance | $z_{\mathrm{LP}}$ | $z_{\mathrm{best}}$ | original #cst. | original #var. | pre-solved #cst. | pre-solved #var. | time limit |
|---|---|---|---|---|---|---|---|
| aa01–06 (6) | 40372.75 | 40588.83 | 675.3 | 7587.3 | 478.7 | 6092.7 | 600 s |
| us01–04 (4) | 9749.44 | 9798.25 | 121.3 | 295085.0 | 65.5 | 85772.5 | 600 s |
| t0415–0421 (7) | 5199083.74 | 5453475.71 | 1479.3 | 7304.3 | 820.7 | 2617.4 | 600 s |
| ?t1716–1722 (7) | 121445.76 | 158739.86 | 475.7 | 58981.3 | 475.7 | 13193.6 | 3600 s |
| v0415–0421 (7) | 2385764.17 | 2393303.71 | 1479.3 | 30341.6 | 263.9 | 7277.0 | 600 s |
| v1616–1622 (7) | 1021288.76 | 1025552.43 | 1375.7 | 83986.7 | 1171.9 | 51136.7 | 600 s |
| ?ds | 57.23 | 187.47 | 656 | 67732 | 656 | 67730 | 3600 s |
| ?ds-big | 86.82 | 731.69 | 1042 | 174997 | 1042 | 173026 | 3600 s |
| ?ivu06-big | 135.43 | 166.02 | 1177 | 2277736 | 1177 | 2197774 | 3600 s |
| ?ivu59 | 884.46 | 1878.83 | 3436 | 2569996 | 3413 | 2565083 | 3600 s |

Tables 3 and 4 show the relative gap (%) $\frac{z(x) - z_{\mathrm{best}}}{z(x)} \times 100$ of the best feasible solutions achieved by the algorithms under the original (hard) constraints. The numbers in parentheses show the number of instances for which the algorithm obtained at least one feasible solution within the time limit.
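For concreteness, the relative gap can be computed as in the following small sketch; the function name and the sample values are hypothetical and are not taken from the tables.

```python
def relative_gap(z: float, z_best: float) -> float:
    """Relative gap (%) of a feasible objective value z against z_best."""
    return (z - z_best) / z * 100.0


# Hypothetical values: a solution of value 200 against a best bound of 190.
print(relative_gap(200.0, 190.0))
```

The gap is measured relative to the value of the solution found, so it is zero exactly when an algorithm attains $z_{\mathrm{best}}$ and stays below 100% for positive objective values.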

We first observe that our algorithm achieves good upper bounds comparable to those of the 3-flip neighborhood local search [44] for many SCP instances. We note that the 3-flip neighborhood local search algorithm introduces a heuristic variable fixing technique based on Lagrangian relaxation that substantially reduces the number of variables to be considered, to 1.05% of those in the original SCP instances on average. We next observe that our algorithm achieves the best upper bounds for 17 of the 42 SPP instances, including 7 of the 11 hard instances. We note that local search algorithms and MIP solvers are quite different in character. Local search algorithms do not guarantee optimality because they typically search only a portion of the solution space. MIP solvers, however, examine every possible solution, at least implicitly, in order to guarantee optimality. Hence, it is inherently difficult to find optimal solutions by local search algorithms even in the case of small instances, while MIP solvers find optimal solutions quickly for small instances and/or those having a small gap between the lower and upper bounds of optimal values. In view of this, our algorithm achieves sufficiently good upper bounds compared to the other algorithms on the benchmark instances, particularly for the hard SPP instances.

Table 3: Comparison of the best gap of the proposed algorithm to those of the latest solvers for SCP instances

| instance | CPLEX12.6 | Gurobi5.6.3 | SCIP3.1 | LocalSolver3.1 | YKI | proposed |
|---|---|---|---|---|---|---|
| ?G.1–5 (5) | 0.37% | 0.49% | 0.24% | 45.80% | 0.00% | 0.00% |
| ?H.1–5 (5) | 1.92% | 2.28% | 1.93% | 61.54% | 0.00% | 0.00% |
| ?I.1–5 (5) | 2.81% | 2.72% | 1.85% | 41.38% | 0.00% | 0.50% |
| ?J.1–5 (5) | 8.37% | 4.30% | 3.59% | 58.40% | 0.00% | 1.53% |
| ?K.1–5 (5) | 4.77% | 4.38% | 2.55% | 51.22% | 0.00% | 1.26% |
| ?L.1–5 (5) | 9.57% | 8.44% | 3.52% | 57.79% | 0.00% | 2.05% |
| ?M.1–5 (5) | 18.43% | 10.10% | 30.71% | 71.08% | 0.00% | 2.65% |
| ?N.1–5 (5) | 33.13% | 12.49% | 42.32% | 75.63% | 0.00% | 5.47% |
| RAIL507 | 0.00% | 0.00% | 0.00% | 5.43% | 0.00% | 0.00% |
| RAIL516 | 0.00% | 0.00% | 0.00% | 3.19% | 0.00% | 0.00% |
| RAIL582 | 0.00% | 0.00% | 0.00% | 5.80% | 0.00% | 0.00% |
| RAIL2536 | 0.00% | 0.00% | 0.86% | 3.50% | 0.29% | 0.72% |
| ?RAIL2586 | 2.27% | 2.17% | 2.27% | 5.39% | 0.00% | 1.56% |
| ?RAIL4284 | 5.34% | 1.57% | 30.55% | 6.50% | 0.00% | 2.12% |
| ?RAIL4872 | 1.73% | 1.73% | 2.67% | 5.61% | 0.00% | 1.80% |
| avg. (47) | 8.64% | 4.92% | 10.00% | 49.99% | 0.01% | 1.56% |

Table 4: Comparison of the best gap of the proposed algorithm to those of the latest solvers for SPP instances

| instance | CPLEX12.6 | Gurobi5.6.3 | SCIP3.1 | LocalSolver3.1 | proposed |
|---|---|---|---|---|---|
| aa01–06 (6) | 0.00% (6) | 0.00% (6) | 0.00% (6) | 13.89% (1) | 1.60% (6) |
| us01–04 (4) | 0.00% (4) | 0.00% (4) | 0.00% (3) | 11.26% (2) | 0.04% (4) |
| t0415–0421 (7) | 0.66% (7) | 0.60% (7) | 1.61% (6) | - (0) | 0.92% (6) |
| ?t1716–1722 (7) | 7.63% (7) | 15.94% (7) | 2.76% (7) | 36.09% (1) | 1.70% (7) |
| v0415–0421 (7) | 0.00% (7) | 0.00% (7) | 0.00% (7) | 0.05% (7) | 0.00% (7) |
| v1616–1622 (7) | 0.00% (7) | 0.00% (7) | 0.00% (7) | 4.60% (7) | 0.09% (7) |
| ?ds | 8.86% | 55.61% | 40.53% | 85.17% | 0.00% |
| ?ds-big | 62.16% | 24.03% | 72.01% | 92.69% | 0.00% |
| ?ivu06-big | 20.86% | 0.68% | 17.90% | 52.54% | 0.00% |
| ?ivu59 | 28.50% | 4.36% | 37.84% | 48.95% | 0.00% |
| avg. (42) | 4.25% (42) | 4.77% (42) | 4.93% (40) | 17.47% (22) | 0.69% (41) |

Tables 5 and 6 show the completion rate of the neighbor list by rows, i.e., the proportion of generated rows to all rows in the neighbor list. We observe that our algorithm achieves good performance while generating only a small part of the neighbor list for the large-scale instances.
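The notion of a completion rate can be illustrated with a neighbor list whose rows are generated lazily. The following Python sketch is not the implementation evaluated in this paper: the class name `LazyNeighborList`, the parameter `k`, and the ranking rule (candidates ordered by the overlap |S_j1 ∩ S_j2|, ties broken by index) are assumptions made for illustration only. A row is built the first time its variable is queried, and the completion rate is the fraction of rows built so far.

```python
from collections import Counter


class LazyNeighborList:
    """Hypothetical neighbor list whose rows are generated on first use."""

    def __init__(self, columns, k):
        # columns[j] is the set S_j of constraint indices covered by variable j.
        self.columns = columns
        self.k = k
        # members[i] is N_i, the variables whose column contains constraint i.
        self.members = {}
        for j, col in enumerate(columns):
            for i in col:
                self.members.setdefault(i, []).append(j)
        self.generated = {}  # only the rows requested so far

    def neighbors(self, j):
        """Return the row of variable j, generating it if necessary."""
        if j not in self.generated:
            overlap = Counter()
            for i in self.columns[j]:
                for other in self.members[i]:
                    if other != j:
                        overlap[other] += 1
            # Assumed ranking: largest overlap first, ties broken by index.
            ranked = sorted(overlap, key=lambda v: (-overlap[v], v))
            self.generated[j] = ranked[: self.k]
        return self.generated[j]

    def completion_rate(self):
        """Proportion of generated rows to all rows."""
        return len(self.generated) / len(self.columns)


if __name__ == "__main__":
    nl = LazyNeighborList([{0, 1}, {1, 2}, {2, 3}, {0, 3}], k=1)
    nl.neighbors(0)
    print(f"completion rate: {nl.completion_rate():.2%}")
```

Under this design, variables that the search never tries to flip never pay the cost of row generation, which is one way to read the small completion rates reported for the largest instances.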

Table 5: Completion rate of the neighbor list by rows for SCP instances

instance    1 min   10 min  20 min  30 min  1 h
⋆G.1–5 (5)  3.58%   3.95%   -       -       -
⋆H.1–5 (5)  2.12%   2.43%   -       -       -
⋆I.1–5 (5)  1.39%   1.63%   1.71%   -       -
⋆J.1–5 (5)  0.82%   1.10%   1.16%   -       -
⋆K.1–5 (5)  1.20%   1.49%   1.57%   1.61%   -
⋆L.1–5 (5)  0.57%   0.98%   1.06%   1.10%   -
⋆M.1–5 (5)  0.20%   0.58%   0.73%   0.81%   0.93%
⋆N.1–5 (5)  0.01%   0.21%   0.29%   0.35%   0.49%
RAIL507     13.41%  22.02%  -       -       -
RAIL516     4.73%   8.94%   -       -       -
RAIL582     8.19%   10.66%  -       -       -
RAIL2536    0.30%   1.36%   1.87%   2.17%   2.73%
⋆RAIL2586   0.44%   1.67%   2.19%   2.54%   3.28%
⋆RAIL4284   0.20%   1.01%   1.43%   1.73%   2.29%
⋆RAIL4872   0.37%   1.56%   2.11%   2.46%   3.10%

Table 6: Completion rate of the neighbor list by rows for SPP instances

instance         1 min   10 min  30 min  1 h
aa01–06 (6)      40.47%  49.17%  -       -
us01–04 (4)      3.93%   5.17%   -       -
t0415–0421 (7)   83.47%  90.00%  -       -
⋆t1716–1722 (7)  61.00%  94.38%  97.12%  97.98%
v0415–0421 (7)   31.44%  31.64%  -       -
v1616–1622 (7)   6.55%   7.42%   -       -
⋆ds              2.29%   12.63%  27.11%  40.05%
⋆ds-big          0.21%   2.06%   4.96%   8.10%
⋆ivu06-big       0.01%   0.07%   0.23%   0.45%
⋆ivu59           0.01%   0.05%   0.11%   0.16%

Tables 7 and 8 show the relative gap of the best feasible solutions achieved by our algorithm for different settings. The second column “no-list” shows the results of our algorithm without the neighbor list, and the third column “no-inc” shows the results of our algorithm without the improved incremental evaluation (i.e., only applying the standard incremental evaluation). The fourth column “2-FNLS” shows the results of our algorithm without the 4-flip neighborhood search (i.e., only applying 2-FNLS). Tables 9 and 10 also show the computational efficiency of our algorithm for different settings with respect to the number of calls to 4-FNLS (and 2-FNLS in the fourth column “2-FNLS”), where the values are normalized so that values of the proposed algorithm are set to one.

From the results, we can see that the overall computational efficiency of our algorithm was improved 16.90-fold and 17.26-fold on average for SCP and SPP instances, respectively, in comparison with the naive algorithm that has neither the neighbor list nor the improved incremental evaluation (i.e., only applying the standard incremental evaluation), and our algorithm attains good performance even when the size of the neighbor list is considerably small. We also observe that the 4-flip neighborhood search substantially improves the performance of our algorithm, even though there are fewer calls to 4-FNLS compared to 2-FNLS.

Table 7: Comparison of the best gap of variations of the proposed algorithm for SCP instances

instance    no-list  no-inc   2-FNLS   proposed
⋆G.1–5 (5)  0.00%    0.12%    0.00%    0.00%
⋆H.1–5 (5)  0.31%    0.31%    0.31%    0.00%
⋆I.1–5 (5)  1.24%    0.86%    0.50%    0.50%
⋆J.1–5 (5)  2.42%    1.67%    1.68%    1.53%
⋆K.1–5 (5)  2.12%    1.69%    1.32%    1.26%
⋆L.1–5 (5)  3.44%    3.51%    2.35%    2.05%
⋆M.1–5 (5)  10.97%   8.33%    2.79%    2.65%
⋆N.1–5 (5)  19.11%   22.06%   4.76%    5.47%
RAIL507     0.00%    0.57%    0.00%    0.00%
RAIL516     0.00%    0.00%    0.00%    0.00%
RAIL582     0.47%    0.47%    0.47%    0.00%
RAIL2536    2.68%    2.27%    1.29%    0.72%
⋆RAIL2586   2.57%    2.87%    2.27%    1.56%
⋆RAIL4284   5.42%    5.17%    2.74%    2.12%
⋆RAIL4872   4.43%    3.47%    2.36%    1.80%
avg. (47)   4.55%    4.42%    1.65%    1.56%

Table 8: Comparison of the best gap of variations of the proposed algorithm for SPP instances

instance         no-list     no-inc      2-FNLS      proposed
aa01–06 (6)      2.33% (6)   2.26% (6)   2.07% (6)   1.60% (6)
us01–04 (4)      0.04% (4)   1.16% (4)   0.63% (4)   0.04% (4)
t0415–0421 (7)   1.46% (5)   1.30% (6)   0.29% (7)   0.92% (6)
⋆t1716–1722 (7)  4.73% (7)   3.58% (7)   4.98% (7)   1.70% (7)
v0415–0421 (7)   0.00% (7)   0.00% (7)   0.00% (7)   0.00% (7)
v1616–1622 (7)   0.62% (7)   0.17% (7)   0.09% (7)   0.09% (7)
⋆ds              36.03%      33.80%      24.13%      0.00%
⋆ds-big          29.11%      0.00%       40.75%      0.00%
⋆ivu06-big       5.31%       3.83%       2.25%       0.00%
⋆ivu59           15.75%      11.39%      16.01%      0.00%
avg. (42)        3.63% (40)  2.47% (41)  3.23% (42)  0.69% (41)

6 Conclusion

We present a data mining approach for reducing the search space of local search algorithms for a class of BIPs including SCP and SPP. In this approach, we construct a k-nearest neighbor graph by extracting variable associations from the instance to be solved in order to identify promising pairs of flipping variables in the 2-flip neighborhood. We also develop a 4-flip neighborhood local search algorithm that flips four variables alternately along 4-paths or 4-cycles in the k-nearest neighbor graph. We incorporate an efficient incremental evaluation of solutions and an adaptive control of penalty weights into the 4-flip neighborhood local search algorithm. Computational comparison with the latest solvers shows that our algorithm achieves sufficiently good upper bounds for SCP and SPP instances, particularly for hard SPP instances.

We expect that data mining approaches could also be beneficial for efficiently solving other large-scale combinatorial optimization problems, particularly for hard instances that have large gaps between the lower and upper bounds of the optimal values.

Table 9: Comparison of the computational efficiency of variations of the proposed algorithm for SCP instances

instance    no-list  no-inc   2-FNLS   proposed
⋆G.1–5 (5)  0.51     0.36     1.92     1.00
⋆H.1–5 (5)  0.83     0.35     1.76     1.00
⋆I.1–5 (5)  0.39     0.34     2.33     1.00
⋆J.1–5 (5)  0.47     0.43     2.44     1.00
⋆K.1–5 (5)  0.25     0.31     1.98     1.00
⋆L.1–5 (5)  0.32     0.34     2.31     1.00
⋆M.1–5 (5)  0.19     0.22     2.65     1.00
⋆N.1–5 (5)  0.27     0.23     3.20     1.00
RAIL507     0.19     0.27     1.61     1.00
RAIL516     0.16     0.27     1.37     1.00
RAIL582     0.20     0.27     1.46     1.00
RAIL2536    0.13     0.11     1.26     1.00
⋆RAIL2586   0.14     0.13     1.78     1.00
⋆RAIL4284   0.11     0.12     1.58     1.00
⋆RAIL4872   0.08     0.10     1.78     1.00
avg. (47)   0.37     0.30     2.21     1.00

Table 10: Comparison of the computational efficiency of variations of the proposed algorithm for SPP instances

instance         no-list  no-inc   2-FNLS   proposed
aa01–06 (6)      0.18     0.37     1.66     1.00
us01–04 (4)      0.36     0.45     1.07     1.00
t0415–0421 (7)   0.17     0.33     2.71     1.00
⋆t1716–1722 (7)  0.18     0.35     1.62     1.00
v0415–0421 (7)   0.20     0.42     1.53     1.00
v1616–1622 (7)   0.15     0.41     1.58     1.00
⋆ds              0.27     0.30     1.24     1.00
⋆ds-big          0.26     0.31     1.33     1.00
⋆ivu06-big       0.22     0.29     1.22     1.00
⋆ivu59           0.32     0.48     1.42     1.00
avg. (42)        0.20     0.38     1.70     1.00

References

[1] Achterberg, T. (2009). SCIP: Solving constraint integer programs. Mathematical Programming Computation, 1, 1–41.

[2] Agarwal, Y., Mathur, K., & Salkin, H. M. (1989). A set-partitioning-based exact algorithm for the vehicle routing problem. Networks, 19, 731–749.

[3] Atamturk, A., Nemhauser, G. L., & Savelsbergh, M. W. P. (1995). A combined Lagrangian, linear programming, and implication heuristic for large-scale set partitioning problems. Journal of Heuristics, 1, 247–259.

[4] Balas, E., & Padberg, M. W. (1976). Set partitioning: A survey. SIAM Review, 18, 710–760.

[5] Barahona, F., & Anbil, R. (2000). The volume algorithm: Producing primal solutions with a subgradient method. Mathematical Programming, A87, 385–399.

[6] Barnhart, C., Johnson, E. L., Nemhauser, G. L., Savelsbergh, M. W. P., & Vance, P. H. (1998). Branch-and-price: Column generation for solving huge integer programs. Operations Research, 46, 316–329.

[7] Bastert, O., Hummel, B., & de Vries, S. (2010). A generalized Wedelin heuristic for integer programming. INFORMS Journal on Computing, 22, 93–107.

[8] Beasley, J. E. (1990). OR-Library: Distributing test problems by electronic mail. Journal of the Operational Research Society, 41, 1069–1072.


[9] Benoist, T., Estellon, B., Gardi, F., Megel, R., & Nouioua, K. (2011). LocalSolver 1.x: A black-box local-search solver for 0-1 programming. 4OR: A Quarterly Journal of Operations Research, 9, 299–316.

[10] Borndorfer, R. (1998). Aspects of set packing, partitioning and covering. Ph.D. Dissertation, Berlin: Technischen Universitat.

[11] Boros, E., Hammer, P. L., Ibaraki, T., Kogan, A., Mayoraz, E., & Muchnik, I. (2000). An implementation of logical analysis of data. IEEE Transactions on Knowledge and Data Engineering, 12, 292–306.

[12] Boros, E., Ibaraki, T., Ichikawa, H., Nonobe, K., Uno, T., & Yagiura, M. (2005). Heuristic approaches to the capacitated square covering problem. Pacific Journal of Optimization, 1, 465–490.

[13] Boschetti, M. A., Mingozzi, A., & Ricciardelli, S. (2008). A dual ascent procedure for the set partitioning problem. Discrete Optimization, 5, 735–747.

[14] Bramel, J., & Simchi-Levi, D. (1997). On the effectiveness of set covering formulations for the vehicle routing problem with time windows. Operations Research, 45, 295–301.

[15] Caprara, A., Fischetti, M., & Toth, P. (1999). A heuristic method for the set covering problem. Operations Research, 47, 730–743.

[16] Caprara, A., Toth, P., & Fischetti, M. (2000). Algorithms for the set covering problem. Annals of Operations Research, 98, 353–371.

[17] Caserta, M. (2007). Tabu search-based metaheuristic algorithm for large-scale set covering problems. In W. J. Gutjahr, R. F. Hartl, & M. Reimann (eds.), Metaheuristics: Progress in Complex Systems Optimization (pp. 43–63). Berlin: Springer.

[18] Ceria, S., Nobili, P., & Sassano, A. (1997). Set covering problem. In M. Dell’Amico, F. Maffioli, & S. Martello (eds.), Annotated Bibliographies in Combinatorial Optimization (pp. 415–428). New Jersey: John Wiley & Sons.

[19] Ceria, S., Nobili, P., & Sassano, A. (1998). A Lagrangian-based heuristic for large-scale set covering problems. Mathematical Programming, 81, 215–288.

[20] Chu, P. C., & Beasley, J. E. (1998). Constraint handling in genetic algorithms: The set partitioning problem. Journal of Heuristics, 11, 323–357.

[21] Farahani, R. Z., Asgari, N., Heidari, N., Hosseininia, M., & Goh, M. (2012). Covering problems in facility location: A review. Computers & Industrial Engineering, 62, 368–407.

[22] Hammer, P. L., & Bonates, T. O. (2006). Logical analysis of data - An overview: From combinatorial optimization to medical applications. Annals of Operations Research, 148, 203–225.

[23] Hashimoto, H., Ezaki, Y., Yagiura, M., Nonobe, K., Ibaraki, T., & Løkketangen, A. (2009). A set covering approach for the pickup and delivery problem with general constraints on each route. Pacific Journal of Optimization, 5, 183–200.

[24] Hoffman, K. L., & Padberg, M. (1993). Solving airline crew scheduling problems by branch-and-cut. Management Science, 39, 657–682.


[25] Hutter, F., Tompkins, D. A. D., & Hoos, H. H. (2002). Scaling and probabilistic smoothing: Efficient dynamic local search for SAT. Proceedings of International Conference on Principles and Practice of Constraint Programming (CP), 233–248.

[26] Johnson, D. S., & McGeoch, L. A. (1997). The traveling salesman problem: A case study. In E. Aarts, & K. Lenstra (eds.), Local Search in Combinatorial Optimization (pp. 215–310). New Jersey: Princeton University Press.

[27] Koch, T., Achterberg, T., Andersen, E., Bastert, O., Berthold, T., Bixby, R. E., Danna, E., Gamrath, G., Gleixner, A. M., Heinz, S., Lodi, A., Mittelmann, H., Ralphs, T., Salvagnin, D., Steffy, D. E., & Wolter, K. (2011). MIPLIB2010: Mixed integer programming library version 5. Mathematical Programming Computation, 3, 103–163.

[28] Linderoth, J. T., Lee, E. K., & Savelsbergh, M. W. P. (2001). A parallel, linear programming-based heuristic for large-scale set partitioning problems. INFORMS Journal on Computing, 13, 191–209.

[29] Michel, L., & Van Hentenryck, P. (2000). Localizer. Constraints: An International Journal, 5, 43–84.

[30] Mingozzi, A., Boschetti, M. A., Ricciardelli, S., & Bianco, L. (1999). A set partitioning approach to the crew scheduling problem. Operations Research, 47, 873–888.

[31] Morris, P. (1993). The breakout method for escaping from local minima. Proceedings of National Conference on Artificial Intelligence (AAAI), 40–45.

[32] Nonobe, K., & Ibaraki, T. (2001). An improved tabu search method for the weighted constraint satisfaction problem. INFOR, 39, 131–151.

[33] Pesant, G., & Gendreau, M. (1999). A constraint programming framework for local search methods. Journal of Heuristics, 5, 255–279.

[34] Selman, B., & Kautz, H. (1993). Domain-independent extensions to GSAT: Solving large structured satisfiability problems. Proceedings of International Conference on Artificial Intelligence (IJCAI), 290–295.

[35] Shaw, P., Backer, B. D., & Furnon, V. (2002). Improved local search for CP toolkits. Annals of Operations Research, 115, 31–50.

[36] Thornton, J. (2005). Clause weighting local search for SAT. Journal of Automated Reasoning, 35, 97–142.

[37] Umetani, S., & Yagiura, M. (2007). Relaxation heuristics for the set covering problem. Journal of the Operations Research Society of Japan, 50, 350–375.

[38] Umetani, S., Arakawa, M., & Yagiura, M. (2013). A heuristic algorithm for the set multi-cover problem with generalized upper bound constraints. Proceedings of Learning and Intelligent Optimization Conference (LION), 75–80.

[39] Umetani, S. (2015). Exploiting variable associations to configure efficient local search in large-scale set partitioning problems. Proceedings of AAAI Conference on Artificial Intelligence (AAAI), 1226–1232.

[40] Van Hentenryck, P., & Michel, L. (2005). Constraint-Based Local Search. Cambridge: The MIT Press.


[41] Voudouris, C., Dorne, R., Lesaint, D., & Liret, A. (2001). iOpt: A software toolkit for heuristic search methods. Proceedings of Principles and Practice of Constraint Programming (CP), 716–729.

[42] Wedelin, D. (1995). An algorithm for large-scale 0-1 integer programming with application to airline crew scheduling. Annals of Operations Research, 57, 283–301.

[43] Yagiura, M., & Ibaraki, T. (1999). Analysis on the 2 and 3-flip neighborhoods for the MAX SAT. Journal of Combinatorial Optimization, 3, 95–114.

[44] Yagiura, M., Kishida, M., & Ibaraki, T. (2006). A 3-flip neighborhood local search for the set covering problem. European Journal of Operational Research, 172, 472–499.

Appendix A Proof of Lemma 1

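We show that $\Delta z_{j_1,j_2}(x) \ge 0$ holds if $x_{j_1} = x_{j_2}$. First, we consider the case of $x_{j_1} = x_{j_2} = 1$. By the assumption of the lemma,

\[
\Delta z_j^{\downarrow}(x) = -c_j - \sum_{i \in S_j \cap (M_L \cup M_E) \cap \{i \mid s_i(x) > b_i\}} w_i^{+} + \sum_{i \in S_j \cap (M_G \cup M_E) \cap \{i \mid s_i(x) \le b_i\}} w_i^{-} \ge 0 \tag{5}
\]

holds for both $j = j_1$ and $j_2$. We then have

\[
\Delta z_{j_1,j_2}(x) = \Delta z_{j_1}^{\downarrow}(x) + \Delta z_{j_2}^{\downarrow}(x) + \sum_{\substack{i \in S_{j_1} \cap S_{j_2} \\ \cap (M_L \cup M_E) \cap M_{+}(x)}} w_i^{+} + \sum_{\substack{i \in S_{j_1} \cap S_{j_2} \\ \cap (M_G \cup M_E) \cap M_{+}(x)}} w_i^{-} \ge 0, \tag{6}
\]

where $M_{+}(x) = \{ i \in M \mid s_i(x) = b_i + 1 \}$. Next, we consider the case of $x_{j_1} = x_{j_2} = 0$. By the assumption of the lemma,

\[
\Delta z_j^{\uparrow}(x) = c_j + \sum_{i \in S_j \cap (M_L \cup M_E) \cap \{i \mid s_i(x) \ge b_i\}} w_i^{+} - \sum_{i \in S_j \cap (M_G \cup M_E) \cap \{i \mid s_i(x) < b_i\}} w_i^{-} \ge 0 \tag{7}
\]

holds for both $j = j_1$ and $j_2$. We then have

\[
\Delta z_{j_1,j_2}(x) = \Delta z_{j_1}^{\uparrow}(x) + \Delta z_{j_2}^{\uparrow}(x) + \sum_{\substack{i \in S_{j_1} \cap S_{j_2} \\ \cap (M_L \cup M_E) \cap M_{-}(x)}} w_i^{+} + \sum_{\substack{i \in S_{j_1} \cap S_{j_2} \\ \cap (M_G \cup M_E) \cap M_{-}(x)}} w_i^{-} \ge 0, \tag{8}
\]

where $M_{-}(x) = \{ i \in M \mid s_i(x) = b_i - 1 \}$.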
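The statement proved above can be checked numerically on small random instances. The Python sketch below is an illustration only, under assumptions that are not spelled out in this appendix: the penalized objective is taken to be the cost plus weighted excess for constraints in M_L ∪ M_E and weighted shortage for constraints in M_G ∪ M_E, all coefficients are 0/1, and the penalty weights are nonnegative. The function names and the encoding of constraint types as the characters "L", "G" and "E" are hypothetical. The check descends to a 1-flip local optimum and then verifies by brute force that flipping any pair with equal values does not decrease the objective.

```python
import itertools
import random


def penalized(x, cols, c, b, sense, wp, wm):
    """Assumed penalized objective: cost + weighted excess + weighted shortage."""
    s = [0] * len(b)
    for j, xj in enumerate(x):
        if xj:
            for i in cols[j]:
                s[i] += 1
    value = sum(cj for cj, xj in zip(c, x) if xj)
    for i, bi in enumerate(b):
        if sense[i] in "LE":  # i in M_L or M_E: excess over b_i is penalized
            value += wp[i] * max(s[i] - bi, 0)
        if sense[i] in "GE":  # i in M_G or M_E: shortage below b_i is penalized
            value += wm[i] * max(bi - s[i], 0)
    return value


def flipped(x, *indices):
    """Return a copy of x with the given variables flipped."""
    y = list(x)
    for j in indices:
        y[j] ^= 1
    return y


def check_lemma1(seed, m=4, n=6):
    """Return True if no same-value pair flip improves a 1-flip local optimum."""
    rng = random.Random(seed)
    cols = [set(rng.sample(range(m), rng.randint(1, m))) for _ in range(n)]
    c = [rng.randint(1, 9) for _ in range(n)]
    b = [rng.randint(1, 2) for _ in range(m)]
    sense = [rng.choice("LGE") for _ in range(m)]
    wp = [rng.randint(1, 9) for _ in range(m)]
    wm = [rng.randint(1, 9) for _ in range(m)]
    args = (cols, c, b, sense, wp, wm)

    # Plain 1-flip descent until no single flip strictly improves.
    x = [rng.randint(0, 1) for _ in range(n)]
    improved = True
    while improved:
        improved = False
        for j in range(n):
            y = flipped(x, j)
            if penalized(y, *args) < penalized(x, *args):
                x, improved = y, True

    z = penalized(x, *args)
    return all(
        penalized(flipped(x, j1, j2), *args) >= z
        for j1, j2 in itertools.combinations(range(n), 2)
        if x[j1] == x[j2]
    )


if __name__ == "__main__":
    print(all(check_lemma1(seed) for seed in range(30)))
```

The brute-force evaluation recomputes the objective from scratch, so it is only suitable for tiny instances. It is meant as a sanity check of the inequality, not as part of the search.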

Appendix B Efficient incremental evaluation

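We first consider a standard incremental evaluation of $\Delta z_j^{\uparrow}(x)$ and $\Delta z_j^{\downarrow}(x)$ in $O(|S_j|)$ time using the following formulas:

\[
\begin{aligned}
\Delta z_j^{\uparrow}(x) &= c_j + \Delta p_j^{\uparrow}(x) + \Delta q_j^{\uparrow}(x), \\
\Delta p_j^{\uparrow}(x) &= \sum_{i \in S_j \cap (M_L \cup M_E)} w_i^{+} \left( |(s_i(x) + 1) - b_i|_{+} - |s_i(x) - b_i|_{+} \right), \\
\Delta q_j^{\uparrow}(x) &= \sum_{i \in S_j \cap (M_G \cup M_E)} w_i^{-} \left( |b_i - (s_i(x) + 1)|_{+} - |b_i - s_i(x)|_{+} \right),
\end{aligned} \tag{9}
\]

\[
\begin{aligned}
\Delta z_j^{\downarrow}(x) &= -c_j + \Delta p_j^{\downarrow}(x) + \Delta q_j^{\downarrow}(x), \\
\Delta p_j^{\downarrow}(x) &= \sum_{i \in S_j \cap (M_L \cup M_E)} w_i^{+} \left( |(s_i(x) - 1) - b_i|_{+} - |s_i(x) - b_i|_{+} \right), \\
\Delta q_j^{\downarrow}(x) &= \sum_{i \in S_j \cap (M_G \cup M_E)} w_i^{-} \left( |b_i - (s_i(x) - 1)|_{+} - |b_i - s_i(x)|_{+} \right),
\end{aligned} \tag{10}
\]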

where 2-FNLS keeps the values of the left-hand side of constraints $s_i(x)$ ($i \in M_G \cup M_L \cup M_E$) in memory. 2-FNLS updates $s_i(x)$ ($i \in S_j$) in $O(|S_j|)$ time by $s_i(x') \leftarrow s_i(x) + 1$ and $s_i(x') \leftarrow s_i(x) - 1$ when the current solution $x$ moves to $x'$ by flipping $x_j = 0 \to 1$ and $x_j = 1 \to 0$, respectively.
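The standard incremental evaluation can be sketched in Python as follows. This is a minimal illustration rather than the implementation used in the experiments: it assumes that $|y|_{+}$ denotes max{y, 0}, that constraint types are encoded as the characters "L", "G" and "E" (a hypothetical encoding), and that `s` holds the current left-hand sides. The function names `delta_up`, `delta_down` and `apply_flip` are likewise hypothetical.

```python
def pos(v):
    """|v|_+ , assumed here to mean max(v, 0)."""
    return v if v > 0 else 0


def delta_up(j, cols, c, b, sense, wp, wm, s):
    """Change in the penalized objective for x_j = 0 -> 1, as in (9)."""
    dp = dq = 0
    for i in cols[j]:  # O(|S_j|) work
        if sense[i] in "LE":
            dp += wp[i] * (pos(s[i] + 1 - b[i]) - pos(s[i] - b[i]))
        if sense[i] in "GE":
            dq += wm[i] * (pos(b[i] - (s[i] + 1)) - pos(b[i] - s[i]))
    return c[j] + dp + dq


def delta_down(j, cols, c, b, sense, wp, wm, s):
    """Change in the penalized objective for x_j = 1 -> 0, as in (10)."""
    dp = dq = 0
    for i in cols[j]:  # O(|S_j|) work
        if sense[i] in "LE":
            dp += wp[i] * (pos(s[i] - 1 - b[i]) - pos(s[i] - b[i]))
        if sense[i] in "GE":
            dq += wm[i] * (pos(b[i] - (s[i] - 1)) - pos(b[i] - s[i]))
    return -c[j] + dp + dq


def apply_flip(j, x, cols, s):
    """Flip x_j in place and update the stored left-hand sides s_i, i in S_j."""
    step = 1 if x[j] == 0 else -1
    for i in cols[j]:
        s[i] += step
    x[j] ^= 1


if __name__ == "__main__":
    cols = [{0, 1}, {1}]          # S_0 and S_1
    c, b = [3, 2], [1, 1]
    sense = ["G", "E"]
    wp, wm = [0, 5], [4, 5]
    x, s = [0, 0], [0, 0]
    print(delta_up(0, cols, c, b, sense, wp, wm, s))
    apply_flip(0, x, cols, s)
    print(delta_down(0, cols, c, b, sense, wp, wm, s))
```

Each evaluation touches only the constraints in S_j, which is where the O(|S_j|) bound comes from. The cost is paid again every time the same neighbor is evaluated.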

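We further develop an improved incremental evaluation of $\Delta z_j^{\uparrow}(x)$ and $\Delta z_j^{\downarrow}(x)$ in $O(1)$ time by directly keeping $\Delta p_j^{\uparrow}(x)$, $\Delta q_j^{\uparrow}(x)$ ($j \in N \setminus X$) and $\Delta p_j^{\downarrow}(x)$, $\Delta q_j^{\downarrow}(x)$ ($j \in X$) in memory. When the current solution $x$ moves to $x'$ by flipping $x_j = 0 \to 1$, 2-FNLS first updates $s_i(x)$ ($i \in S_j$) in $O(|S_j|)$ time by $s_i(x') \leftarrow s_i(x) + 1$, and then updates $\Delta p_k^{\uparrow}(x)$, $\Delta q_k^{\uparrow}(x)$, $\Delta p_k^{\downarrow}(x)$, $\Delta q_k^{\downarrow}(x)$ ($k \in N_i$, $i \in S_j$) in $O(\sum_{i \in S_j} |N_i|)$ time using the following formulas:

\[
\begin{aligned}
\Delta p_k^{\uparrow}(x') &\leftarrow \Delta p_k^{\uparrow}(x) + \sum_{i \in S_j \cap S_k \cap (M_L \cup M_E)} w_i^{+} \left( \Delta y_i^{+}(x') - \Delta y_i^{+}(x) \right), \\
\Delta q_k^{\uparrow}(x') &\leftarrow \Delta q_k^{\uparrow}(x) + \sum_{i \in S_j \cap S_k \cap (M_G \cup M_E)} w_i^{-} \left( \Delta y_i^{-}(x') - \Delta y_i^{-}(x) \right), \\
\Delta p_k^{\downarrow}(x') &\leftarrow \Delta p_k^{\downarrow}(x) + \sum_{i \in S_j \cap S_k \cap (M_L \cup M_E)} w_i^{+} \left( \Delta y_i^{+}(x') - \Delta y_i^{+}(x) \right), \\
\Delta q_k^{\downarrow}(x') &\leftarrow \Delta q_k^{\downarrow}(x) + \sum_{i \in S_j \cap S_k \cap (M_G \cup M_E)} w_i^{-} \left( \Delta y_i^{-}(x') - \Delta y_i^{-}(x) \right),
\end{aligned} \tag{11}
\]

where

\[
\begin{aligned}
\Delta y_i^{+}(x') &= |(s_i(x') + 1) - b_i|_{+} - |s_i(x') - b_i|_{+}, \\
\Delta y_i^{+}(x) &= |s_i(x') - b_i|_{+} - |s_i(x) - b_i|_{+}, \\
\Delta y_i^{-}(x') &= |b_i - (s_i(x') + 1)|_{+} - |b_i - s_i(x')|_{+}, \\
\Delta y_i^{-}(x) &= |b_i - s_i(x')|_{+} - |b_i - s_i(x)|_{+}.
\end{aligned} \tag{12}
\]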

Similarly, when the current solution $x$ moves to $x'$ by flipping $x_j = 1 \to 0$, 2-FNLS first updates $s_i(x)$ ($i \in S_j$) in $O(|S_j|)$ time, and then updates $\Delta p_k^{\uparrow}(x)$, $\Delta q_k^{\uparrow}(x)$, $\Delta p_k^{\downarrow}(x)$, $\Delta q_k^{\downarrow}(x)$ ($k \in N_i$, $i \in S_j$) in $O(\sum_{i \in S_j} |N_i|)$ time. We note that the computation time for updating the auxiliary data has little effect on the total computation time of 2-FNLS because the number of solutions actually visited is much smaller than the number of neighbor solutions evaluated in most cases.
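The idea of caching the penalty increments so that a 1-flip evaluation costs O(1) can be sketched as below. This Python class is an illustration under stated assumptions and is not a literal transcription of the update formulas above: instead of applying the differences of $\Delta y$ terms, it recomputes the per-constraint contribution before and after the change of $s_i$ and adds the difference to every variable in $N_i$. For simplicity it also stores the combined value $\Delta p + \Delta q$ for both directions and for all variables, rather than only for $j \in N \setminus X$ or $j \in X$. The class name `DeltaCache`, its method names, and the "L"/"G"/"E" encoding of constraint types are hypothetical.

```python
class DeltaCache:
    """Hypothetical cache of penalty increments for O(1) 1-flip evaluation."""

    def __init__(self, cols, c, b, sense, wp, wm):
        self.cols, self.c, self.b = cols, c, b
        self.sense, self.wp, self.wm = sense, wp, wm
        self.x = [0] * len(cols)          # start from the all-zero solution
        self.s = [0] * len(b)             # left-hand sides s_i(x)
        self.members = [[] for _ in b]    # N_i: variables appearing in row i
        for k, col in enumerate(cols):
            for i in col:
                self.members[i].append(k)
        # up[k] = dp_up + dq_up and down[k] = dp_down + dq_down for variable k.
        self.up = [sum(self._row(i, +1) for i in col) for col in cols]
        self.down = [sum(self._row(i, -1) for i in col) for col in cols]

    def _row(self, i, step):
        """Penalty change of constraint i if s_i moves by step (+1 or -1)."""
        s, b = self.s[i], self.b[i]
        d = 0
        if self.sense[i] in "LE":
            d += self.wp[i] * (max(s + step - b, 0) - max(s - b, 0))
        if self.sense[i] in "GE":
            d += self.wm[i] * (max(b - s - step, 0) - max(b - s, 0))
        return d

    def delta(self, j):
        """O(1) change in the penalized objective from flipping x_j."""
        if self.x[j] == 0:
            return self.c[j] + self.up[j]
        return -self.c[j] + self.down[j]

    def flip(self, j):
        """Flip x_j and refresh cached values for k in N_i, i in S_j."""
        step = 1 if self.x[j] == 0 else -1
        for i in self.cols[j]:
            old_up, old_down = self._row(i, +1), self._row(i, -1)
            self.s[i] += step
            d_up = self._row(i, +1) - old_up
            d_down = self._row(i, -1) - old_down
            for k in self.members[i]:
                self.up[k] += d_up
                self.down[k] += d_down
        self.x[j] ^= 1


if __name__ == "__main__":
    cache = DeltaCache([{0, 1}, {1}], [3, 2], [1, 1], ["G", "E"], [0, 5], [4, 5])
    print(cache.delta(0))
    cache.flip(0)
    print(cache.delta(0), cache.delta(1))
```

The trade-off matches the remark above: `flip` is more expensive than in the standard scheme, but it runs only when a move is accepted, while `delta` runs for every neighbor that is evaluated.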
