EMC_VNX_ESSMatrix


5/21/2018 EMC_VNX_ESSMatrix


2011 - 2014 EMC Corporation. All Rights Reserved.

EMC believes the information in this publication is accurate as of its publication date. The information is subject to change without notice.

THE INFORMATION IN THIS PUBLICATION IS PROVIDED AS IS. EMC CORPORATION MAKES NO REPRESENTATIONS OR WARRANTIES OF ANY KIND WITH RESPECT TO THE INFORMATION IN THIS PUBLICATION, AND SPECIFICALLY DISCLAIMS IMPLIED WARRANTIES OF MERCHANTABILITY OR FITNESS FOR A PARTICULAR PURPOSE.

Use, copying, and distribution of any EMC software described in this publication requires an applicable software license. EMC2, EMC, and the EMC logo are registered trademarks or trademarks of EMC Corporation in the United States and other countries. All other trademarks used herein are the property of their respective owners.

For the most up-to-date regulatory document for your product line, go to EMC Online Support (https://support.emc.com).

The information in this ESSM is a configuration summary. For more detailed information, refer to https://elabnavigator.emc.com.

EMC Simple Support Matrix

EMC Unified VNX Series

JUNE 2014

P/N 300-012-396 REV 37


6/6/14

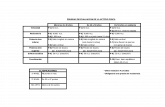

Table 1 EMC E-Lab qualified specific versions or ranges for EMC Unified VNX Series (page 1 of 2)

Columns:

Platform Support

Path and Volume Management: PowerPath (Target Rev, Allowed); Native MPIO/LVM; Symantec / Veritas / DMP / VxVM / VxDMP (Target Rev, Allowed)

Cluster: Native; Symantec / Veritas / SFRAC / VxCFS / SF HA (h); Oracle RAC

VProv Recl

AIX 5300-09-01-0847g

5.5 P02 - 5.5 P06, 5.7

5.3 - 5.3SP1 P01c;

5.5 - 5.5 P01

Y

5.1 SP1

5.0 MP3 - 5.1PowerHA(HACMP)5.4 - 7.1

5.0 - 5.0 MP3 10g - 11g Sym

5

AIX 6100-02-01-0847g 5.1 SP1- 6.0.1,6.0.3, 6.1.0 5.0 MP3 - 6.0.3 11g

Sym5.1 S

6.0

AIX 7100-00-02-1041g 5.3 SP1

P01

c

;5.5 - 5.5 P01

5.1 SP1 PR1-

6.0.1, 6.0.3,6.1.0

5.1 SP1 - 6.0.3, 6.1 11g R2

Sym

5.1 S6.0.1,

(AIX) VIOS 2.1.1 or latero 5.5 or later

HP-UX 11iv1 (11.11)g 5.1 SP2

5.1 - 5.1 SP1(Pvlinks)d/

Y

SG 11.16 10g

HP-UX 11iv2 (11.23)g

5.1 SP2 - 5.2 P02

5.0 SG 11.16 -

11.19 5.0

10g R2 -11g R1

HP-UX 11iv3 (11.31)g

Y 5.1 SP1 - 6.0.1,

6.0.35.0 - 6.0.1,

6.0.3SG 11.17 -

11.20 5.0 - 6.0.3

10g R2 -11g R2

Sym5.1 S

IBM i 7.1p5.7 Y

Linux: AX 2.0 GA - 2.0 SP3 b , n, n Ya

Linux: AX 3b 5.5 - 5.6 and5.7 SP1 - 5.7 SP1 P01 k

5.3.xc, k

Y

Linux: AX 4 5.6 and 5.7 SP1k

Linux: Citrix XenServer 6.xm Y

Linux: OL 4 U4 - 4 U7 b , n

5.3.xc, k

5.0 MP2 -5.0 MP4

10g -11g

Linux: OL 5.0, 5.1 - 5. 2 b

5.5k5.0 MP3

Linux: OL 5.3 - 5. 55.1 SP1 5.0 MP3 - 5.1

Sym5

Linux: OL 5.6 5.5 - 5.6k

Linux: OL 5.7- 5.105.6k 6.0

11 g

Sym

Linux: OL 5.6, 6.0 - 6.1 (UEK) 5.6 P01, 5.7 SP1,

5.7 SP1 P01- P02k,

5.7 SP3

Linux: OL 5.7 (UEK) 5.6 P02, 5.7 SP1,

5.7 SP1 P01 - P02k,5.7 SP3

11 g R2Linux: OL 5.8 - 5.9, 6.2 - 6.4 (UEK) 5.7 SP1,

5.7 SP1 P01 - P02k,5.7 SP3

Linux: RHEL 4.0 U2 - U4 n,b,e;

4.5 - 4.9n,b,e

5.3.xc, k

Ya 4.1 MP2 -

5.0 MP4 4.1, 5.0

10g -11g

Linux: RHEL 5.0, 5.1b

5.5 - 5.6.5.7 SP1,

5.7 SP1 P01- P02k,5.7 SP3

Y

5.0 MP3 -5.0 MP4

Y

5.0 MP3 - 5.1Linux: RHEL 5.2b

Linux: RHEL 5.3 - 5.4i5.1 SP1 5.0 MP3 - 5.1

5.0 MP4 - 5.1 SP1

Sym5

Linux: RHEL 5.5 - 5.6i

5.1 SP1 - 6.0.3,6.1.0

5.0 MP4 - 5.1 Sym5.1 S

Linux: RHEL 5.7i

5.6, 5.7 SP1,5.7 SP1 P01- P02k,

5.7 SP3

Linux: RHEL 5.8

Linux: RHEL 5.9

Linux: RHEL 5.10 5.7 SP1 P01- P02k,5.7 SP3


Linux: RHEL 6.0

5.6, 5.7 SP1,

5.7 SP1 P01 - P02k,5.7 SP3

Y

5.1 SP1 PR1 &PR2

Y

5.1 SP1 PR2

11g R2

Sym5.1

Linux: RHEL 6.1, 6.25.1 SP1 PR1 -

6.0.3, 6.1.0 5.1 SP1 PR2 - 6.0.3

Sym5.1

6.0

Linux: RHEL 6.3, 6.4 5.1 SP1 PR2 &RP3, 6.0.1 -6.0.3, 6.1.0

5.1 SP1 RP3, 6.0.1 -6.0.3

Sym5.1 S

RP6.0

Linux: RHEL 6.56.0.3 6.0.3

Sym

Linux: SLES 9 SP3 - SLES 9 SP4 5.55.3.xc, k

5.0 - 5.0 MP4 4.1, 5.0 10 g

Linux: SLES 10 - SLES 10 SP3 b

5.5 - 5.7 SP1,

5.7 SP1 P01 - P02 k5.1 SP1 - 6.0.3

5.0 MP3 - 5.1 5.0 - 5.1,5.1 SP1- 6.0.3

10g R2 -11g

Sym5.1 SLinux: SLES 10 SP4b

5.0 MP4 - 5.1Linux: SLES 11 - SLES 11 SP2 5.1 SP1 - 6.0.3,6.0.4, 6.1.0 5.0 MP3 - 5.1,

5.1 SP1- 6.0.3, 6.111g R2-12c R1

Sym5.1 S

6.0

Linux: SLES 11 SP35.7 SP2 - 5.7 SP3 6.0.4, 6.1.0 6.0.4 11g R2

Sym6.0

Microsoft Windows 2003 (x86 and x64) SP2

and R2 SP25.5 - 5.7 SP2 5.3 -

5.3 SP1c

5.1 SP25.0 - 5.1 SP1 MSCS 5.0- 5.1

10g R2 -11g

Sym5Microsoft Windows 2008 (x86) SP1 and SP2

MPIO/Y

5.1 - 5.1 SP1

FailoverCluster

5.0- 5.1 SP2

Microsoft Windows 2008 (x64) SP1 and SP2 i

5.1 SP2 - 6.0.15.0 - 6.0.1 Sym

5.1 SMicrosoft Windows 2008 R2 and R2 SP1i 5.1 - 6.0.1

Microsoft Windows Server 20125.5 SP1 - 5.7 SP2

6.0.2 6.0.2 Sym

Microsoft Windows Server 2012 R25.7 SP1 - 5.7 SP2

6.1.0 Sym

OpenVMS Alpha v7.3-2, 8.2, 8.3, 8.4g

Y YOpenVMS Integrity v8.2-1, 8.3, 8.3-1H1, 8.4g

Solaris 8 SPARCe Refer to the Solaris Letters of Support at https://elabnavigator.emc.com,

Solaris 9 SPARC

5.3 -5.3 P04c

MPxIO/Y

5.1 SP1

5.0 - 5.1

SC 3 .1 - 3.2 5.0 - 5.1 10g - 11g Sym

5

Solaris 10 SPARC

5.5 - 5.5 P02

5.1 SP1 - 6.0.3,6.1.0

SC 3.1 - 3.3 5.0 - 6.0.1, 6.0.3 10g R2 -

11g

Sym5.1 S

Solaris 10 (x86)5.1 SP1_x64 -

6.0.3_x645.0_x64 -5.1_x64

Sym5.1 S

6.

Solaris 11 SPARC6.0 PR1 - 6.0.1,

6.0.3, 6.1.0 SC 4.0

6.0 PR1 - 6.0.1,6.03, 6.1

11g R2Sym

6.0 P6.0

Solaris 11.1 SPARC 5.5 P01 - 5.5 P02 6.0.3, 6.1.0 SC 4.1

6.0.3, 6.1 11g R2,

12c R1Sym

6.0

Solaris 11 x86 5.5 - 5.5 P02 6.0 PR1_x64 -

6.0.1,6.0.3_x64 SC 4.0 6.0 PR1 - 6.0.1, 6.03 11g R2

Sym6.0

6.0.1

Solaris 11.1 x86 5.5 P01 - 5.5 P02

6.0.3_x64 SC 4.1

6.0.3 11g R2,

12c R1Sym

6.

VMware ESX/ESXi 4.0 (vSphere) PP/VE 5.4 - 5.4 SP1

N/A NMP ESX

11gVMware ESX/ESXi 4.1 (vSphere)iPP/VE 5.4 SP2

VxDMP 6.0 -6.0.1

VMware ESXi 5.0 (vSphere)i,j PP/VE 5.7l,5.7 P02l, 5.8, 5.9 SP1 VxDMP 6.0.1,

6.1VMware ESXi 5.1 (vSphere)i,j PP/VE 5.7 P02l,5.8,5.9, 5.9 SP1

VMware ESXi 5.5 (vSphere)i,j PP/VE 5.9, 5.9 SP1 VxDMP 6.1

Target Rev = EMC recommended versions for new and existing installations. These versions contain the latest features and provide the highest level of reliability.

Allowed = Older EMC-approved versions that are still functional, or newer/interim versions not yet targeted by EMC. These versions may not contain the latest fixes and features and may require upgrading to resolve issues or take advantage of newer product features. Versions are suitable for sustaining existing environments but should not be targeted for new installations.

Table 1 EMCE-Lab qualified specific versions or ranges for EMC Unified VNX Series (page 2 of 2)



Table 1 Legend, Footnotes, and Components

Legend

Blank = Not supported
Y = Supported
NA = Not applicable

a. MPIO with AX supported at AX 2.0 SP1 and later. MPIO with RHEL supported at RHEL 4.0 U3 and later.

b. 8 Gb/s initiator support begins with AX 2.0 SP3, AX 3.0 SP2, OL 4.0 U7, OL 5.2, RHEL 4.7, RHEL 5.2, SLES 10 SP2.

c. These PowerPath versions have reached End-of-Life (EOL). For extended support contact PowerPath Engineering or https://support.emc.com/products/PowerPath.

d. Pvlinks is an HP-UX alternate pathing solution that does not support load balancing.

e. Refer to the appropriate Letter of Support on the E-Lab Navigator, Extended Support tab, End-of-Life Configurations heading, for information on end-of-life configurations.

f. Refer to the EMC Virtual Provisioning table (https://elabnavigator.emc.com) for all VP (without host reclamation) qualified configurations.

g. Only server vendor-sourced HBAs are supported.

h. Refer to Symantec Release Notes (www.symantec.com) for supported VxVM versions, which are dependent on the Veritas Cluster version.

i. For EMC XtremSF and XtremCache software operating system and host server support information, refer to the ESM, located at https://elabnavigator.emc.com, and use VNX, along with the XtremSF model, in the search engine.

j. Refer to the VMware vSphere 5 ESSM, at https://elabnavigator.emc.com, Simple Support Matrix tab, Platform Solutions, for details.

k. Version and kernel specific.

l. Before upgrading to vSphere 5.1, refer to EMC Knowledgebase articles emc302625 and emc305937.

m. All guest operating systems and features that Citrix supports, such as XenMotion, XenHA, and XenDesktop, are supported.

n. This operating system is currently EOL. All corresponding configurations are frozen. It may no longer be publicly supported by the operating system vendor and will require an extended support contract from the operating system vendor in order for the configuration listed in th[...] to be supported by EMC. It is highly recommended the customer upgrade the server to an ESSM-supported configuration or install it as [...] machine on an EMC-approved virtualization hypervisor.

o. Consult the Virtualization Hosting Server (Parent) Solutions table in E-Lab Navigator at https://elabnavigator.emc.com for your specific version prior to implementation.

p. VNX8000, VNX7600, VNX5800, VNX5600, VNX5400 and VNX5200 only support base configurations including FAST Cache, FAST VP [...] Compression. Supported only with VIOS configurations.

Host Servers/Adapters: All Fibre Channel HBAs from Emulex, QLogic (2 Gb/s or greater), and Brocade (8 Gb/s or greater), including vendor rebranded version[...] requires an RPQ when using any Brocade HBA. All 10 Gb/s Emulex, QLogic, Brocade, Cisco, Broadcom, and Intel CNAs (10GbE NIC[...] support FCoE). All 1 Gb/s or 10 Gb/s NICs for iSCSI connectivity, as supported by Server/OS vendor. Any EMC or vendor-supplied O[...] approved driver/firmware/BIOS is allowed. EMC recommends using latest E-Lab Navigator listed driver/firmware/BIOS versions. Adap[...] support link speed compatible with switch (fabric) or array (direct connect). All host systems are supported where the host vendor allo[...] host/OS/adapter combination. If systems meet the criteria in this ESSM, no further ELN connectivity validation and no RPQ is required[...]

Switches: All FC SAN switches (2 Gb/s or greater) from EMC Connectrix, Brocade, Cisco, and QLogic for host and storage connectivity are su[...] Refer to the Switched Fabric Topology Parameters table located on the E-Lab Navigator (ELN) at https://elabnavigator.emc.com for supported switch fabric interoperability firmware and settings.

Operating environment:

R30: VNX 5100/5300/5500/5700/7500; EFD Cache, SATA, EFD support added. Minimum FLARE revision 04.30.000.5.xxx.

R30++: VNX 5100/5300/5500/5700/7500; FCoE support added. Minimum FLARE revision 04.30.000.5.5xx.

R31: VNX 5100/5300/5500/5700/7500; Unisphere/Navisphere Manager v1.1; Minimum FLARE revision 05.31.

R32: VNX 5100/5300/5500/5700/7500; Unisphere/Navisphere Manager v1.2; Minimum FLARE revision 05.32.

R33 (only): VNX 5200/5400/5600/5800/7600/8000; Unisphere/Navisphere Manager v1.3; Minimum FLARE revision 05.33.

Table 2 VNX2 Limitations (page 1 of 5)

VNX8000 VNX7600 VNX5800 VNX5600 VNX5400 VNX5200

Storage Pool capacity

Maximum number of storage pools 60 40 40 20 15 15

Maximum number of disks in a storage pool 996 996 746 496 246 12

Maximum number of usable disks for all storage pools 996 996 746 496 246 12

Maximum number of disks that can be added to a pool at a time 180 120 120 120 80 80

Maximum number of pool LUNs per storage pool 4000 3000 2000 1000 1000 100

Pool LUN limits

Minimum user capacity 1 block 1 block 1 block 1 block 1 block 1 block

Maximum user capacity 256 TB 256 TB 256 TB 256 TB 256 TB 256 TB

Maximum number of pool LUNs per storage system 4000 3000 2000 1000 1000 100

SnapView supported configurations

Clones per storage system 2048 2048 2048 1024 256 25

Clones per source LUN 8 8 8 8 8 8

Clones per consistent fracture 64 64 32 32 32 32

Clone groups per storage system 1024 1024 1024 256 128 12

Clone private LUNs (a, b) per storage system (required) 2 2 2 2 2 2

SnapView Snaps (c) per storage system 2048 1024 512 512 256 25

Snapshots per source LUN 8 8 8 8 8 8

SnapView sessions per source LUN 8 8 8 8 8 8

Reserved LUNs (d) per storage system 512 512 256 256 128 12

Source LUNs with Rollback active 300 300 300 300 300 30

SAN Copy Storage system limits

Concurrent Executing Sessions 32 32 16 8 8 8

Destination LUNs per Session 100 100 50 50 50 50

Incremental Source LUNs (e) 512 512 256 256 256 25

Defined Incremental Sessions (f) 512 512 512 512 512 51

Incremental Sessions per Source LUN 8 8 8 8 8 8

VNX Snapshots Configuration Guidelines

Snapshots per storage system 32000 24000 16000 8000 8000 800

Snapshots per Primary LUN 256 256 256 256 256 25


Snapshots per consistency group 64 64 64 64 64 64


Consistency Groups 256 256 128 128 128 12

Snapshot Mount Points 4000 3000 2000 1000 1000 100

Concurrent restore operations 512 512 256 128 128 12

MirrorView

Maximum number of mirrors 256 (MVA), 1024 (MVS); 256 (MVA), 512 (MVS); 256 (MVA), 256 (MVS); 256 (MVA), 128 (MVS); 256 (MVA), 128 (MVS); 128 (MVA), 128 (MVS)

Maximum number of consistency groups 64 64 64 64 64 64

Maximum number of members per consistency group 64 (MVA), 64 (MVS); 64 (MVA), 64 (MVS); 32 (MVA), 32 (MVS); 32 (MVA), 32 (MVS); 32 (MVA), 32 (MVS); 32 (MVA), 32 (MVS)

Block compressed LUN limitations

Maximum number of concurrent compression operations per Storage Processor (SP) 40 32 20 20 20 20

Maximum number of compressed LUNs involving migration per storage system 24 24 16 16 16 16

Maximum number of compressed LUNs 4000 3000 2000 1000 1000 100

Block compression operation limitations

Concurrent compression/decompression operations per SP 40 32 20 20 20 20

Concurrent migration operations per system 24 24 16 16 16 16

Block-level deduplication limitations (VNX for Block)

LUN migrations 8 maximum

Deduplication pass: Started every 12 hours upon the last completed run for that pool. Will only trigger if 64 GB of new/changed data is found. Pass can be paused. Resume causes a check to run shortly after resuming.

Deduplication processes per SP: 3 maximum

Deduplication run time: 4 hour maximum. After 4 hours, the deduplication process is paused and other queued processes on the SP are allowed to run. If no other processes are queued, deduplication will keep running.
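The pass and run-time rules above can be read as two small predicates. The Python sketch below is illustrative only: the function names are hypothetical, and treating the 64 GB and 4 hour figures as inclusive thresholds is an assumption, since the source does not state the boundary behavior.

```python
from datetime import datetime, timedelta

PASS_INTERVAL = timedelta(hours=12)  # pass starts 12 hours after the last completed run
MIN_CHANGED_GB = 64                  # pass triggers only with 64 GB of new/changed data
MAX_RUN_TIME = timedelta(hours=4)    # run-time slice before queued work gets a turn


def pass_should_start(last_completed, now, changed_gb):
    """True when the interval has elapsed and enough data has changed.

    Assumption: both thresholds are inclusive.
    """
    return (now - last_completed) >= PASS_INTERVAL and changed_gb >= MIN_CHANGED_GB


def pass_should_pause(run_time, other_processes_queued):
    """True when the 4 hour slice is used up and other SP processes are waiting."""
    return run_time >= MAX_RUN_TIME and other_processes_queued
```

With these definitions, a pool that finished its last pass 12 hours ago but has only 10 GB of changed data would not start a new pass, and a pass that has run for 5 hours keeps running as long as nothing else is queued on the SP.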

Storage systems used for SAN Copy replication

Storage System; Fibre Channel: SAN Copy system, Target (g) system; iSCSI: SAN Copy system, Target system

VNX8000, VNX7600, VNX5800, VNX5600h, VNX5400, VNX5200 P P P P

VNX7500, VNX5700, VNX5500, VNX5300, VNX5100 P P P P

CX4-960, CX4-480, CX4-240, CX4-120i P P P P

CX3-80, CX3-40, CX3-20, CX3-10 P P N/A N/A

CX3-40C, CX3-20C, CX3-10C P P P P

CX700, CX500 P P N/A N/A

CX500i, CX300i N/A N/A X P

CX600, CX400 P P N/A N/A

CX300 Xj P N/A N/A

CX200 X P N/A N/A

AX100, AX150 X P N/A N/A

AX100i, AX150i N/A N/A X P

AX4-5F P P N/A N/A

AX4-5SCF X P N/A N/A

AX4-5i, AX4-5SCi N/A N/A X P

Operating Environment for File 8.1 Configuration guidelines

Guideline/Specification Maximum tested value Comment

CIFS guidelines

CIFS TCP connection 64K (default and theoretical max.), 40K (max. tested)

Param tcp.maxStreams sets the maximum number of TCP connections a Data Mover can have. Max value is 64K, or 65535. TCP connections (streams) are shared by other components and should be c[...] in monitored small increments.

With SMB1/SMB2, a TCP connection means a single client (machine) connection.

With SMB3 and multi channel, a single client could use several network connections for the same se[...] will depend on the number of available interfaces on the client machine, or for high speed interface li[...] link, it can go up to 4 TCP connections per link.

Share name length 80 characters (Unicode). Unicode: The maximum length for a share name with Unicode enabled is 80 characters.

Number of CIFS shares 40,000 per Data Mover (max. tested limit)

A larger number of shares can be created. Maximum tested value is 40K per Data Mover.

Table 2 VNX2 Limitations (page 2 of 5)


Number of NetBIOS names/compnames per Virtual Data Mover

509 (max) Limited by the number of network interfaces available on the Virtual Data Mover. From a local group perspective, the number is limited to 509. NetBIOS and compnames must be associated with at leas[...] unique network interface.

NetBIOS name length 15 NetBIOS names are limited to 15 characters (Microsoft limit) and cannot begin with an @ (at sign) or a [...] character. The name also cannot include white space, tab characters, or the following symbols: / \ : + | [ ] ? < > . If using compnames, the NetBIOS form of the name is assigned automatically and is [...] from the first 15 characters of the [...].

Comment (ASCII chars) for NetBIOS name for server

256 Limited to 256 ASCII characters.

Restricted Characters: You cannot use double quotation ("), semicolon (;), accent (`), and comm[...] characters within the body of a comment. Attempting to use these special characters results in an [...] message. You can only use an exclamation point (!) if it is preceded by a single quotation mark (').

Default Comments: If you do not explicitly add a comment, the system adds a default comment of the form EMC-SNAS:T[...], where [...] is the version of the NAS software.

Compname length 63 bytes For integration with Windows environment releases later than Windows 2000, the CIFS server compname length can be up to 21 characters when UTF-8 (3 bytes per character) is used.

Number of domains 10 tested, 509 (theoretical max.) The maximum number of Windows domains a Data Mover can be a member [...] increase the max default value from 32, change parameter cifs.lsarpc.maxDomain. The Parameters Guide for VNX for File contains more detailed information about this parameter.

Block size negotiated 64 KB, 128 KB with SMB2

Maximum buffer size that can be negotiated with Microsoft Windows clients. To increase the default [...] change param cifs.W95BufSz, cifs.NTBufSz or cifs.W2KBufSz. The Parameters Guide for VNX for File contains more detailed information about these parameters. Note: With SMB2.1, read and write operation supports 1 MB buffer (this feature is named large MTU[...]

Number of simultaneous requests per CIFS session (maxMpxCount)

127 (SMB1), 512 (SMB2)

For SMB1, value is fixed and defines the number of requests a client is able to send to the Data Mov[...] same time (for example, a change notification request). To increase this value, change [...] the maxM[...] parameter. For SMB2 and newer protocol dialects, this notion has been replaced by credit number, which has a [...] 512 credit per client but could be adjusted dynamically by the server depending on the load. The Parameters Guide for VNX for File contains more detailed information about this parameter.

Total number of files/directories opened per Data Mover

500,000 A large number of open files could require high memory usage on the Data Mover and potentially lead to out-of-memory issues.

Number of Home Directories supported

20,000 (max possible limit, not recommended)

Because the configuration file containing Home Directories information is read completely at each user connection, the recommendation is not to exceed a few thousand for easy management.

Number of Windows/UNIX users connected at the same time

40,000 (limited by the number of TCP connections)

Earlier versions of the VNX server relied on a basic database, nameDB, to maintain Usermapper and [...] mapping information. DBMS now replaces the basic database. This solves the inode consumption issue and provides better consistency and recoverability with the support of database transactions. It also provides better atomicity, isolation, and durability in database management.

Number of users per TCP connection

64K To decrease the default value, change param cifs.listBlocks (default 255, max 255). The value of this parameter times 256 = max number of users. Note: TID/FID/UID shares this parameter and cannot be changed individually for each ID. Use caution [...] increasing this value as it could lead to an out-of-memory condition. Refer to the Parameters Guide for VNX for File for parameter information.

Number of files/directories opened per CIFS connection in SMB1

64K To decrease the default value, change param cifs.listBlocks (default 255, max 255). The value of this parameter times 256 = max number of files/directories opened per CIFS connection. Note: TID/FID/UID shares this parameter and cannot be changed individually for each ID. Use caution [...] increasing this value as it could lead to an out-of-memory condition. Be sure to follow the recommend[...] total number of files/directories opened per Data Mover. Refer to the System Parameters Guide for VNX for File for parameter information.

Number of files/directories opened per CIFS connection in SMB2

127K To decrease the default value, change parameter cifs.smb2.listBlocks (default 511, max 511). The value of this parameter times 256 = max number of files/directories opened per CIFS connection.

Number of VDMs per Data Mover

128 The total number of VDMs, file systems, and checkpoints across a whole cabinet cannot exceed 204[...]
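The "parameter times 256" rule quoted for cifs.listBlocks and cifs.smb2.listBlocks is easy to check numerically. The Python sketch below is only arithmetic on the figures in the guidelines; the function name is hypothetical, and rejecting values below 1 is an assumption, since the source gives only the default and maximum.

```python
IDS_PER_BLOCK = 256  # "the value of this parameter times 256"


def max_ids(list_blocks, param_max):
    """Upper bound on users (or open files/directories) for a listBlocks setting.

    Assumption: valid settings run from 1 to param_max inclusive.
    """
    if not 1 <= list_blocks <= param_max:
        raise ValueError(f"listBlocks must be between 1 and {param_max}")
    return list_blocks * IDS_PER_BLOCK


smb1_limit = max_ids(255, param_max=255)  # cifs.listBlocks default
smb2_limit = max_ids(511, param_max=511)  # cifs.smb2.listBlocks default
print(smb1_limit, smb2_limit)
```

The defaults give 65,280 and 130,816, which the table rounds to 64K and 127K. Halving cifs.listBlocks to 127, for instance, would cap the count at 32,512.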

FileMover

Max connections to secondary storage per primary (VNX for File) file system

1024

Number of HTTP threads for servicing FileMover API requests per Data Mover

64 Number of threads available for recalling data from secondary storage is half the number of whichever is lower of CIFS or NFS threads. 16 (default), can be increased using server_http command, max (test[...]
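The recall-thread rule above (half of whichever is lower, CIFS or NFS threads) can be written as a one-line calculation. This is a minimal sketch: the function name is hypothetical, the thread counts in the usage lines are made-up inputs, and rounding down for odd counts is an assumption the source does not address.

```python
def recall_threads(cifs_threads, nfs_threads):
    """Threads available for recalling data from secondary storage.

    Assumption: odd results are rounded down.
    """
    return min(cifs_threads, nfs_threads) // 2


# Hypothetical thread counts, for illustration only.
print(recall_threads(cifs_threads=256, nfs_threads=128))
```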

File system guidelines

Mount point name length 255 bytes (ASCII) The "/" is used when creating the mount point and is equal to one character. If exceeded, Error 4105[...] Server_x:path_name: invalid path specified is returned.

File system name length 240 bytes (ASCII), 19 chars display for list option

For nas_fs list, the name of a file system will be truncated if it is more than 19 characters. To display [...] file system name, use the info option with a file system ID (nas_fs -i id=[...]).

Filename length 255 bytes (NFS), 255 characters (CIFS)

With Unicode enabled in an NFS environment, the number of characters that can be stored depends [...] client encoding type such as latin-1. For example: With Unicode enabled, a Japanese UTF-8 character [...] require three bytes. With Unicode enabled in a CIFS environment, the maximum number of characters is 255. For filenames shared between NFS and CIFS, CIFS allows 255 characters. NFS truncates these names when they are more than 255 bytes in UTF-8, and manages the file successfully.

Table 2 VNX2 Limitations (page 3 of 5)


Pathname length 1,024 bytes Note: Make sure the final path length of restored files is less than 1024 bytes. For example, if a file is backed up which originally had a path name of 900 bytes, and it is restoring to a path with 400 bytes, the final path length would be 1300 bytes and would not be restored.

Directory name length 255 bytes This is a hard limit and is rejected on creation if over the 255 limit. The limit is bytes for UNIX names, [...] characters for CIFS.

Subdirectories (per parent directory)

64,000 This is a hard limit, code will prevent you from creating more than 64,000 directories.

Number of file systems per VNX 4096 This max number includes VDM and checkpoint file systems.

Number of file systems per Data Mover

2048 The mount operation will fail when the number of file systems reaches 2048 with an error indicating m[...] number of file systems reached. This maximum number includes VDM and checkpoint file systems.

Maximum disk volume size Dependent on RAID group (see comments)

Unified platforms: Running setup_clariion on the VNX7500 platform will provision the storage to u[...] LUN per RAID group which might result in LUNs > 2 TB, depending on drive capacity and number of [...] the RAID group. On all VNX integrated platforms (VNX5300, VNX5500, VNX5700, VNX7500), the 2 [...] limitation has been lifted; this might or might not result in LUN size larger than 2 TB depending on RA[...] size and drive capacity. For all other unified platforms, setup_clariion will continue to function as in th[...] breaking large RAID groups into LUNs that are < 2 TB in size.

Gateway systems: For gateway systems, users might configure LUNs greater than 2 TB up to 16[...] max size of the RAID group, whichever is less. This is supported for VG2 and VG8 NAS gateways w[...] attached to CX3, CX4, VNX, and Symmetrix DMX-4 and VMAX backends.

Multi-Path File Systems (MPFS): MPFS supports LUNs greater than 2 TB. Windows 2000 and 32[...] Windows XP, however, cannot support large LUNs due to a Windows OS limitation. All other MPFS W[...] clients support LUN sizes greater than 2 TB if the 5.0.90.900 patch is applied. Use of these 32-bit Wi[...] XP clients on VNX7500 systems requires request for price quotation (RPQ) approval.

Total storage for a Data Mover (Fibre Channel Only)

VNX Version 7.0: 200 TB VNX5300; 256 TB VNX5500, VNX5700, VNX7500, VG2 & VG8

These total capacity values represent Fibre Channel disk maximum with no ATA drives. Fibre Channel capacity will change if ATA is used. Notes:

On a per-Data-Mover basis, the total size of all file systems, and the size of all SavVols used by S[...] must be less than the total supported capacity. Exceeding these limits can cause out of memory p[...] Refer to the VNX for file capacity limits tables for more information, including mixed disk type configurations.

File size 16 TB This hard limit is enforced and cannot be exceeded.

Number of directories supported per file system

Same as number of inodes in file system. Each 8 KB of space = 1 inode.

Number of files per directory 500,000 Exceeding this number will cause performance problems.

Maximum number of files and directories per VNX file system

256 million (default) This is the total number of files and directories that can be in a single VNX file system. This number can be increased to 4 billion at file system creation time but should only be done after considering recovery and restore time and the total storage utilized per file. The actual maximum number in a given file system is dependent on a number of factors including the size of the file system.

Maximum amount of deduplicated data supported

256 TB When quotas are enabled with file size policy, the maximum amount of deduplicated data supported [...] TB. This amount includes other files owned by UID 0 or GID.

All other industry-standard caveats, restrictions, policies, and best practices prevail. This includes, but is not limited to, fsck times (now made faster through multi-threading), backup and restore times, number of objects per file system, snapshots, file system replication, VDM replication, performance, availability, extend times, and layout policies. Proper planning and preparation should occur prior to implementing these guidelines.
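Two of the file system guidelines above reduce to quick arithmetic: the restored-path check (900 bytes plus 400 bytes exceeds the 1,024-byte limit) and the "each 8 KB of space = 1 inode" rule. The Python sketch below illustrates both. The function names are hypothetical, and reading "8 KB" as 8,192 bytes is an assumption.

```python
MAX_PATH_BYTES = 1024            # final restored path must be less than this
INODE_SPACING_BYTES = 8 * 1024   # assumption: 8 KB means 8,192 bytes


def restore_path_fits(original_path_bytes, restore_prefix_bytes):
    """True when the combined path length stays under the pathname limit."""
    return original_path_bytes + restore_prefix_bytes < MAX_PATH_BYTES


def inode_count(file_system_bytes):
    """Number of inodes, and so the directory ceiling, for a file system size."""
    return file_system_bytes // INODE_SPACING_BYTES


print(restore_path_fits(900, 400))   # the example from the Pathname length row
print(inode_count(2 ** 40))          # a 1 TiB file system
```

The first call reproduces the table's example (1,300 bytes, so the restore would fail); the second shows that a 1 TiB file system yields 134,217,728 inodes under the stated assumption.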

Naming services guidelines

Number of DNS domains 3 (WebUI), unlimited (CLI)

Three DNS servers per Data Mover is the limit if using WebUI. There is no limit when using the command-line interface (CLI).

Number of NIS Servers per Data Mover

10 You can configure up to 10 NIS servers in a single NIS domain on a Data Mover.

NIS record capacity 1004 bytes A Data Mover can read 1004 bytes of data from a NIS record.

Number of DNS servers per DNS domain

3

NFS guidelines

Number of NFS exports 2,048 per Data Mover tested, unlimited theoretical max.

You might notice a performance impact when managing a large number of exports using Unisphere.

Number of concurrent NFS clients

64K with TCP (theoretical)

Unlimited with UDP (theoretical)

Limited by TCP connections.

Netgroup line size 16383 The maximum line length that the Data Mover will accept in the local netgroup file on the Data Mover [...] netgroup map in the NIS domain that the Data Mover is bound to.

Number of UNIX groups supported

64K 2 billion max value of any GID. The maximum number of GIDs is 64K, but an individual GID can have [...] the range of 0 - 2147483648.

Networking guidelines

Link aggregation/ether channel 8 ports (ether channel), 12 ports (link aggregation (LACP))

Ether channel: the number of ports used must be a power of 2 (2, 4, or 8). Link aggregation: any number of ports can be used. All ports must be the same speed. Mixing different NIC types (that is copper and f[...] not recommended.

Number of VLANs supported 4094 IEEE standard.

Number of interfaces per Data Mover

45 tested Theoretically 509.

Number of FTP connections Theoretical value 64K By default the value is (in theory) 0xFFFF, but it is also limited by the number of TCP streams that can be opened. To increase the default value, change param tcp.maxStreams (set to 0x00000800 by defaul[...] increase it to 64K before you start TCP, you will not be able to increase the number of FTP connection[...] to the Parameters Guide for VNX for File for parameter information.
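The port-count rules in the link aggregation/ether channel row above can be expressed as a small validation routine. The Python sketch below is an illustration, not an EMC tool: the function name and the "etherchannel"/"lacp" labels are hypothetical, and accepting a single-port LACP group is an assumption based on the phrase "any number of ports".

```python
ETHERCHANNEL_PORT_COUNTS = (2, 4, 8)  # power of 2, up to 8 ports
LACP_MAX_PORTS = 12                   # link aggregation (LACP) port limit


def trunk_problems(kind, port_speeds_gbps):
    """Return a list of guideline violations for a proposed trunk.

    kind: "etherchannel" or "lacp" (hypothetical labels).
    port_speeds_gbps: one speed value per member port.
    """
    problems = []
    count = len(port_speeds_gbps)
    if kind == "etherchannel":
        if count not in ETHERCHANNEL_PORT_COUNTS:
            problems.append("ether channel needs 2, 4, or 8 ports")
    elif kind == "lacp":
        # Assumption: a lower bound of 1 port; the source states only the maximum.
        if not 1 <= count <= LACP_MAX_PORTS:
            problems.append("link aggregation needs 1 to 12 ports")
    else:
        raise ValueError(f"unknown trunk kind: {kind!r}")
    if len(set(port_speeds_gbps)) > 1:
        problems.append("all ports must be the same speed")
    return problems


print(trunk_problems("etherchannel", [1, 1, 1]))  # three ports is not a power of 2
print(trunk_problems("lacp", [10, 10, 10]))       # three ports is fine for LACP
```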

Table 2 VNX2 Limitations (page 4 of 5)


Quotas guidelines

Number of tree quotas 8191 Per file system

Max size of tree quotas 256 TB Includes file size and quota tree size.

Max number of unique groups 64K Per file system

Quota path length 1024

Replicator V2 guidelines

Number of replication sessions per VNX

1365 (NSX), 682 (other platforms)

Max number of replication sessions per Data Mover

1024 This enforced limit includes all configured file system, VDM, and copy sessions.

Max number of local and remote file system and VDM replication sessions per Data Mover

682

Max number of loopback file system and VDM replication sessions per Data Mover

341

Guideline/Specification Maximum tested value Comment

Snapsure guidelines

Number of checkpoints per file system

96 read-only, 16 writeable

Up to 96 read-only checkpoints per file system are supported as well as 16 writeable checkpoints.

On systems with Unicode enabled, a character might require between 1 and 3 bytes, depending on the encoding type or character used. For example, a Japanese character typically requires 3 bytes in UTF-8. ASCII characters require 1 byte.
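Because several limits in this table are stated in bytes rather than characters, the note above matters in practice. The Python sketch below measures a name's UTF-8 byte length against a byte limit. The function names are hypothetical; the 63-byte and 255-byte constants are the compname and NFS filename limits quoted earlier in the table.

```python
COMPNAME_MAX_BYTES = 63   # compname length limit from the CIFS guidelines
FILENAME_MAX_BYTES = 255  # NFS filename length limit from the file system guidelines


def utf8_length(name):
    """Number of bytes the name occupies when encoded as UTF-8."""
    return len(name.encode("utf-8"))


def fits(name, max_bytes):
    """True when the UTF-8 encoding of the name is within the byte limit."""
    return utf8_length(name) <= max_bytes


ascii_name = "a" * 63      # 63 ASCII characters, 1 byte each
japanese_name = "あ" * 21  # 21 Japanese characters, 3 bytes each
print(utf8_length(ascii_name), utf8_length(japanese_name))
print(fits("あ" * 22, COMPNAME_MAX_BYTES))
```

Both sample names occupy exactly 63 bytes, which matches the table's statement that a compname can hold up to 21 characters when each takes 3 bytes; a 22-character Japanese name (66 bytes) no longer fits.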

VNX for File 8.1 capacity limits

VNX5200 VNX5400 VNX5600 VNX5800 VNX7600 VNX8000 VG10

Usable IP storage capacity limit per blade (all disk types/uses) (k) 256 TB 256 TB 256 TB 256 TB 256 TB 256 TB 256 TB 256

Max FS size 16 TB 16 TB 16 TB 16 TB 16 TB 16 TB 16 TB 16

Max # FS per DM/Blade (l) 2048 2048 2048 2048 2048 2048 2048 204

Max # FS per cabinet 4096 4096 4096 4096 4096 4096 4096 409

Max configured replication sessions per DM/blade for Replicator v2 (m) 1024 1024 1024 1024 1024 1024 1024 102

Max # of checkpoints per PFS (n) 96 96 96 96 96 96 96 96

Max # of NDMP sessions per DM/blade 4 4 8 8 8 8 8 8

Memory per DM/blade 6 GB 6 GB 12 GB 12 GB 24 GB 24 GB 6 GB 24

Table 2 Footnotes:

a. Each clone private LUN must be at least 1 GB.

b. A thin or thick LUN may not be used for a clone private LUN.

c. The limits for snapshots and sessions include SnapView snapshots or SnapView sessions as well as reserved snapshots or reserved sessions used in other applications, such as SAN Copy (incremental sessions) and MirrorView/Asynchronous.

d. A thin LUN cannot be used in the reserved LUN pool.

e. These limits include MirrorView Asynchronous (MirrorView/A) images and SnapView snapshot source LUNs in addition to the incremental SAN Copy LUNs. The maximum number [...] LUNs assumes one reserved LUN assigned to each source LUN.

f. These limits include MirrorView/A images and SnapView sessions in addition to the incremental SAN Copy sessions.

g. Target implies the storage system can be used as the remote system when using SAN Copy on another storage system.

h. The VNX5400 and VNX5600 may not be supported as target array over Fibre when source array Operating Environment is 04.30.000.5.525 and earlier for CX4 storage systems and 05.32.000.5.207 and earlier for VNX storage systems.

i. The VNX storage systems can support SAN Copy over Fibre Channel or SAN Copy over iSCSI only if the storage systems are configured with I/O ports of that type. Please see the release notes for VNX Operating Environment version 05.33.000.5.015.

j. The CX300, AX100 and AX150 do not support SAN Copy, but support SAN Copy/E. See the release notes for this related product for details.

k. This is the usable IP storage capacity per blade. For overall platform capacity, consider the type and size of disk drive and the usable Fibre Channel host capacity requirements, R[...] options and the total number of disks supported.

l. This count includes production file systems, user-defined file system checkpoints, and two checkpoints for each replication session.

m. A maximum of 256 concurrently transferring sessions are supported per DM.

n. PFS (Production File System).
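Footnote l describes how the per-Data-Mover file system count is tallied, which makes it straightforward to estimate headroom against the 2048 limit. The Python sketch below is a planning aid under stated assumptions: the function names and the sample inputs are hypothetical, and treating a count of exactly 2048 as still within the limit is an assumption.

```python
MAX_FS_PER_DM = 2048  # Max # FS per DM/Blade from the capacity limits table


def fs_count(production_fs, user_checkpoints, replication_sessions):
    """File systems counted against a Data Mover, per footnote l.

    Each replication session contributes two checkpoints.
    """
    return production_fs + user_checkpoints + 2 * replication_sessions


def within_fs_limit(production_fs, user_checkpoints, replication_sessions):
    """Assumption: a count equal to the limit is still acceptable."""
    total = fs_count(production_fs, user_checkpoints, replication_sessions)
    return total <= MAX_FS_PER_DM


# Hypothetical planning inputs, for illustration only.
print(fs_count(500, 1000, 200))
print(within_fs_limit(500, 1000, 300))
```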

Table 2 VNX2 Limitations (page 5 of 5)