Center for Computer-Aided Design material/Ki… · Graduate College The University of Iowa Iowa...

TASK-BASED PREDICTION OF UPPER BODY MOTION

by

Zan Mi

A thesis submitted in partial fulfillment of the requirements for the Doctor of Philosophy degree

in Mechanical Engineering in the Graduate College of The University of Iowa

May 2004

Thesis Supervisor: Associate Professor Karim Abdel-Malek

Graduate College The University of Iowa

Iowa City, Iowa

CERTIFICATE OF APPROVAL

_______________________

PH.D. THESIS

_______________

This is to certify that the Ph.D. thesis of

Zan Mi

has been approved by the Examining Committee for the thesis requirement for the Doctor of Philosophy degree in Mechanical Engineering at the May 2004 graduation.

Thesis Committee: ________________________________________ Karim Abdel-Malek, Thesis Supervisor

________________________________________ Jasbir Arora

________________________________________ Kendall Atkinson

________________________________________ James Cremer

________________________________________ Jia Lu

________________________________________ Geb Thomas

To my parents, my sister and

my husband

ACKNOWLEDGMENTS

I first wish to express my sincere gratitude to my thesis supervisor Professor

Karim Abdel-Malek, for his expert guidance, continued encouragement and valuable

suggestions throughout the research work. His incredible creativity and invaluable

advice have deeply influenced me.

I am very grateful to Professor Jasbir Arora, from the Department of Civil and

Environmental Engineering, member of my thesis committee, for his valuable

suggestions and kind consultation on the optimization part of my research work. I am

also grateful to my other thesis committee members: Professor James Cremer, from the

Department of Computer Science; Professor Kendall Atkinson, from the Department of

Mathematics; and Professor Jia Lu and Professor Geb Thomas, from the Department of

Mechanical and Industrial Engineering, for serving on my committee and providing me

with their valuable suggestions.

I would like to extend my appreciation to Professor Laurent Jay, from the Department

of Mathematics, for his help and valuable discussions on numerical analysis; Professor

James Schmiedeler, who served on my comprehensive exam committee and has since left

the University of Iowa, for his valuable advice; and Professor K.K. Choi for serving on my

comprehensive exam committee.

I would also like to thank my colleagues in the Digital Humans Lab, Jingzhou Yang

and Joo Hyun Kim, for their encouragement and help in all aspects.

Finally, special thanks to my husband, Yonghang, who always encouraged and

supported me throughout the thesis work.

ABSTRACT

The proposed research deals with digital human modeling and simulation. Digital

humans are avatars that are digitally created, have the appearance of human-like motion

and behavior, and are used to simulate human motion and performance. Digital humans

have become a fundamental cornerstone of engineering analysis towards achieving a

higher level of digital prototyping. Our proposed work deals with predicting static and

kinematic motions of digital humans, in the most realistic manner possible, to simulate

their existence in a virtual world and to enable them to test and experience products that

are only defined in the digital world, thus reducing the time and cost associated with

prototyping. To achieve this goal, we have started with a model that has significantly more

degrees of freedom than typically used by researchers (15 DOFs from the waist to

the hand) and have created a formulation that takes into consideration joint ranges of

motion. We have then introduced a unique task-based approach to posture prediction as a

postulate for why people assume specific postures. This postulate led to an

optimization-based approach, where human performance measures are quantified as functions of

variables that evaluate to real numbers and thus can be implemented in a multi-objective

optimization algorithm for arriving at “the best” posture. Of course, real-time

implementation of such an algorithm is no trivial matter; therefore, we have investigated

several optimization methods, including gradient-based and genetic algorithms, to arrive at a near

real-time solution. Results of predicting postures for a digital human model were then

compared (at a reduced number of DOFs) with existing codes and with experimental

data for verification purposes. Path trajectories followed by humans in space to execute a

task were also addressed. The concept of admissible kinematically-smooth trajectories

was created to characterize a path that does not admit switching of inverse kinematic

solutions during motion and therefore produces realistic, smooth motion, a concept that is

true for humans but not for robotic motions. Because of the underlying formulation, we

are able to design (or predict) such paths for digital humans. We have also investigated

the prediction of joint variables as vector functions of time to predict how the human upper

body (including the upper extremities) behaves as the hand moves between any two

points in space. The end result is an optimization-based method using human

performance measures such as discomfort and smoothness in combination with a

minimum-jerk model for calculating joint path trajectories that look and feel most natural. An

optimization-based methodology for layout design using our human model and posture

prediction algorithm is also presented. The long-term goals of this research are to enable

autonomous behavior and realistic motion of digital humans, with the ultimate goal of

reducing or eliminating the use of prototypes in the design cycle.

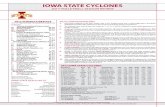

TABLE OF CONTENTS

Page

LIST OF TABLES........................................................................................................... viii

LIST OF FIGURES ........................................................................................................... ix

CHAPTER

1 INTRODUCTION ........ 1

1.1 Motivation ........ 1

1.2 Objectives ........ 4

1.3 Literature Review ........ 8

1.3.1 Human Modeling ........ 8

1.3.2 Posture Prediction ........ 13

1.3.3 Control Barriers ........ 20

1.3.4 Trajectory Planning ........ 20

1.3.5 Layout Design ........ 25

2 MODELING OPEN LOOP KINEMATIC STRUCTURES ........ 27

2.1 A 15-Degree-of-Freedom Model of Torso and Arm ........ 27

2.2 Denavit-Hartenberg Representation Method ........ 34

2.3 Conclusions ........ 40

3 TASK-BASED POSTURE PREDICTION ........ 41

3.1 Task-Based Behavior ........ 42

3.2 Cost Functions and Constraints ........ 44

3.2.1 Discomfort ........ 44

3.2.2 Effort ........ 45

3.2.3 Potential Energy ........ 45

3.2.4 Dexterity ........ 46

3.2.5 Torque ........ 48

3.2.6 Constraints ........ 51

3.3 Optimization Formulation ........ 52

3.4 Predicting Postures and Validation ........ 57

3.4.1 Comparison with IKAN ........ 63

3.4.2 Validation against Experimental Data ........ 65

3.5 Multi-Objective Optimization ........ 73

3.6 Real-Time Algorithm ........ 75

3.7 Conclusions ........ 84

4 HUMAN UPPER EXTREMITY PATH TRAJECTORY DESIGN ........ 86

4.1 Non-crossable Surfaces ........ 87

4.2 Problem Definition ........ 92

4.3 Problem Formulation ........ 94

4.4 Runge-Kutta Method for DAE of Index 2 ........ 95

4.5 Iteration Formulation ........ 97

4.6 Optimization ........ 99

4.7 Examples ........ 100

4.7.1 A Planar 3-DOF Human Arm Model ........ 100

4.7.2 A Spatial 4-DOF Manipulator ........ 113

4.8 Conclusions ........ 121

5 UPPER BODY MOTION PREDICTION ........ 122

5.1 Path in Cartesian Space ........ 123

5.1.1 Unconstrained Point-to-Point Movements ........ 123

5.1.2 Curved Point-to-Point Movements ........ 125

5.2 B-Spline Functions for Joint Variables ........ 133

5.2.1 Definition of B-Spline Curves ........ 133

5.2.2 Joint B-Spline Functions ........ 135

5.3 Illustration of Motion Prediction Method ........ 136

5.4 Optimization ........ 138

5.5 Results and Discussion ........ 141

5.6 Conclusions ........ 156

6 OPTIMIZATION-BASED LAYOUT DESIGN ........ 157

6.1 Problem Definition ........ 158

6.2 Human Model ........ 159

6.3 Layout Design ........ 161

6.3.1 Cost Functions and Constraints ........ 161

6.3.2 Optimization Scheme ........ 164

6.3.3 Comparison of GA and SA ........ 166

6.4 An Example ........ 168

6.5 Conclusions ........ 173

7 COMPUTER INTERFACE DESIGN ........ 174

7.1 Modeling ........ 175

7.2 Posture Prediction ........ 179

7.3 Motion Prediction ........ 186

7.4 Visualization ........ 193

7.5 Layout ........ 195

8 CONCLUSIONS AND RECOMMENDATIONS ........ 197

8.1 Conclusions ........ 197

8.2 Recommendations ........ 199

REFERENCES ........ 202

LIST OF TABLES

Table

2.1 Joint limits..........................................................................................................35

2.2 The DH Table for the 15-DOF human model....................................................39

3.1 Joint weights used in cost function ....................................................................56

3.2 DH Table of the 15-DOF model ........................................................................58

3.3 Distance and Discomfort obtained from GA-GBA ...........................................81

3.4 Distance and Discomfort obtained from the four faster methods ......................81

3.5 CPU time of computations on a HP-UX workstation........................................82

4.1 Traced results for one unsuccessful trial..........................................................106

4.2 Traced results for the successful trial ..............................................................106

4.3 Traced results for the successful trial without correction............................108

4.4 Summarized final result without optimization.................................................109

4.5 Traced optimized result with optimization ......................................................110

4.6 Comparison of results without and with optimization.....................................111

4.7 Comparison of results for different integration step sizes ...............................119

4.8 Comparison of CPU time on a 1.8GHz processor with 512MB memory .......120

6.1 DH Table for upper body.................................................................................160

LIST OF FIGURES

Figure

1.1 One DOF elbow...................................................................................................9

1.2 The shoulder joint (1. Clavicle. 2. Body of scapula. 3. Surgical neck of humerus. 4. Anatomical neck of humerus. 5. Coracoid process. 6. Acromion) ............................................................................................................9

1.3 A model of the shoulder complex......................................................................10

1.4 Modeling of the shoulder complex as three revolute and two prismatic DOF’s.................................................................................................................11

2.1 Modeling of a human using a series of rigid links connected by joints.............28

2.2 Human skeletal system ......................................................................................29

2.3 Anatomy of the spine.........................................................................................29

2.4 Anatomy of the shoulder....................................................................................30

2.5 Anatomy of the elbow........................................................................................31

2.6 Anatomy of the wrist .........................................................................................32

2.7 Modeling of the torso-shoulder-arm..................................................................33

2.8 Joint coordinate system convention and its parameters.....................................37

2.9 A 15-DOF model of the torso, spine, shoulder, arm, and wrist.........................38

3.1 The task-based approach to selecting cost functions .........................................43

3.2 Illustrating the potential energy of the forearm .................................................46

3.3 GA-GBA Algorithm for predicting a posture....................................................53

3.4 Neutral position..................................................................................................56

3.5 Modeling of the torso, shoulder, and arm as a 15-DOF system ........................57

3.6 Target Point 1 (41.2, -57, 31.5), Discomfort = 2.2022, q = [.0847, -.0007, .0407, .0091, .0567, .0820, -.0019, .0075, .0110, .3465, .5328, -.4244, -1.4772, -.1081, .1557]^T ...............................59

3.7 Target Point 2 (40, 0, 36), Discomfort = 7.38, q = [.1022, -.1310, -.0235, .0198, .0014, .0072, -.0112, .0444, -.7829, -.1346, 1.3475, -1.2451, -1.4099, -.1625, -.3101]^T .............................59

3.8 Target Point 3 (20, 35, 50), Discomfort = 12.8254, q = [.3087, -.2618, .0510, .0843, .0416, .0020, .0022, .2022, -.2543, -1.4352, .3640, -1.1986, -1.2240, -.3469, .3487]^T .............................60

3.9 Target Point 4 (-30, 10, 20), Discomfort = 2.0873, q = [-.0713, .0075, .1673, .0884, .1153, .0497, .0097, .0760, -.6950, 1.9193, -.2518, .0000, -2.3357, .4159, -.2897]^T .................................60

3.10 Target Point 5 (-40, 0, 36), Discomfort = 1.5824, q = [.0135, .0206, .1265, .0720, .1204, .0805, .0021, -.0005, -.8226, 1.9184, -.5517, -.0003, -1.7336, .1164, -.1126]^T ...............................60

3.11 Target Point 6 (-50, -20, 20), Discomfort = 1.0783, q = [-.0871, -.0053, .0546, .0661, .0340, .0676, .0074, .0200, -.7906, 1.5271, -.4086, -.1063, -1.5175, .0095, .2451]^T ................................61

3.12 Target Point 7 (0, -60, 5), Discomfort = 0.7253, q = [-.0120, -.0348, .0052, .0096, .0344, .0022, .0016, .0265, -.0643, .9742, -.1020, -.5219, -1.7381, -.0602, .1526]^T ...............................61

3.13 Target Point 8 (30, -40, 60), Discomfort = 3.8352, q = [.1634, -.2485, .0292, .0468, .0703, .0418, -.0048, .0400, -.1602, .3555, .1484, -1.0230, -1.0510, .0806, -.0517]^T ................................61

3.14 Target Point 9 (30, -40, 0), Discomfort = 0.4966, q = [.0568, -.0618, .0135, .0030, -.0030, -.0056, -.0103, .0795, -.0314, 1.2111, .3822, -.3199, -1.7188, -.0346, -.0813]^T .............................62

3.15 Target Point 10 (60, 0, 0), Discomfort = 3.3709, q = [.2311, .0104, .2571, .0849, .1081, -.0150, .0203, -.3032, -.0402, 1.9117, 1.2721, -.0113, -1.6577, .0791, .3176]^T .................................62

3.16 Marker placement on front of subject................................................................65

3.17 C7 to suprasternal notch ....................................................................................66

3.18 1H and 2H measurements.................................................................................66

3.19 15-DOF model with Michigan measurements...................................................68

3.20 Our 15-DOF model (left) and Michigan model (right)......................................69

3.21 g003h .................................................................................................................69

3.22 g004h .................................................................................................................69

3.23 g007h .................................................................................................................69

3.24 g097l ..................................................................................................................70

3.25 g122h .................................................................................................................70

3.26 g170h .................................................................................................................70

3.27 g218l ..................................................................................................................70

3.28 g244h .................................................................................................................71

3.29 g340l ..................................................................................................................71

3.30 g349l ..................................................................................................................71

3.31 g363h .................................................................................................................71

3.32 g458l ..................................................................................................................72

3.33 Posture prediction for (41.2, -57, 31.5) ...........................................................73

3.34 Posture prediction for (40, 0, 36) .......................................................................73

3.35 Prediction postures based on two different initial postures ...............................74

3.36 A Partitioned Reach Envelope...........................................................................76

3.37 BFGS-BFGS method .........................................................................................77

3.38 DIS-CONS method ............................................................................................78

3.39 MOO method .....................................................................................................79

3.40 CONS-SQP method ...........................................................................................80

4.1 A planar 3-DOF model of human arm.............................................................100

4.2 Singular curves ................................................................................................102

4.3 A non-crossable singular curve and a path ......................................................102

4.4 Movement of the arm for the unsuccessful trial ..............................................107

4.5 Movement of the arm for the successful trial ..................................................107

4.6 Singular configuration and neutral configuration............................................111

4.7 Movement of the arm obtained with optimization...........................................112

4.8 Configurations obtained without (left) and with optimization (right) .............112

4.9 A spatial 4-DOF RPRP manipulator................................................................113

4.10 Singular surfaces..............................................................................................114

4.11 Crossable and non-crossable surfaces..............................................................115

4.12 Path AB and a non-crossable surface S ...........................................................115

4.13 Singular configuration at intersection point.....................................................117

4.14 Snapshots of the spatial manipulator of an unsuccessful planning..................118

4.15 Snapshots of the spatial manipulator during movement of a successful planning............................................................................................................119

5.1 A B-spline ........................................................................................................134

5.2 Modeling of the torso, shoulder, and arm as a 15-DOF system ......................136

5.3 Motion prediction illustration ..........................................................................137

5.4 Refined motion prediction module ..................................................................137

5.5 Path design with control points prediction ......................................................141

5.6 Predicted motion 1 at time 0 ............................................................................142

5.7 Predicted motion 1 at time 0.25 t_f ..................................................................143

5.8 Predicted motion 1 at time 0.5 t_f ....................................................................143

5.9 Predicted motion 1 at time 0.75 t_f ..................................................................144

5.10 Predicted motion 1 at time t_f .........................................................................144

5.11 Predicted joint splines for motion 1.................................................................145

5.12 Predicted motion 2 at time 0 ............................................................................146

5.13 Predicted motion 2 at time 0.35 t_f ..................................................................146

5.14 Predicted motion 2 at time 0.5 t_f ....................................................................147

5.15 Predicted motion 2 at time 0.65 t_f ..................................................................147

5.16 Predicted motion 2 at time t_f .........................................................................148

5.17 Predicted joint splines for motion 2.................................................................148

5.18 Predicted motion 3 with a via point at time 0 ..................................................149

5.19 Predicted motion 3 with a via point at time 0.25 t_f ........................................150

5.20 Predicted motion 3 with a via point at time 0.5 t_f ..........................................150

5.21 Predicted motion 3 with a via point at time 0.75 t_f ........................................151

5.22 Predicted motion 3 with a via point at time t_f ...............................................151

5.23 Predicted joint splines for motion 3 with a via point .......................................152

5.24 Predicted motion 4 with a via point at time 0 ..................................................153

5.25 Predicted motion 4 with a via point at time 0.3 t_f ..........................................153

5.26 Predicted motion 4 with a via point at time 0.5 t_f ..........................................154

5.27 Predicted motion 4 with a via point at time 0.7 t_f ..........................................154

5.28 Predicted motion 4 with a via point at time t_f ...............................................155

5.29 Predicted joint splines for motion 4 with a via point .......................................155

6.1 A layout problem .............................................................................................158

6.2 15-DOF model for upper body from waist up to hand ....................................159

6.3 Optimization scheme .......................................................................................164

6.4 A manufacturing cell .......................................................................................168

6.5 Designed layout ...............................................................................................171

6.6 Posture reaching tuff bin..................................................................................172

6.7 Posture pressing button....................................................................................173

7.1 Posture and motion prediction computer interface in 3D Studio MAX ..........175

7.2 15-DOF model of the torso, shoulder, and arm ...............................................176

7.3 15-DOF model in 3D Max...............................................................................177

7.4 Hierarchy of the bone structure .......................................................................178

7.5 Posture prediction interface .............................................................................181

7.6 Cost function interface.....................................................................................182

7.7 Posture prediction interface .............................................................................183

7.8 Real-time posture prediction algorithm ...........................................................184

7.9 Unreachable target point ..................................................................................185

7.10 Prediction for left arm......................................................................................186

7.11 Motion prediction interface .............................................................................188

7.12 Motion prediction interface flowchart .............................................................189

7.13 Motion prediction algorithm............................................................................190

7.14 Predicted curved motion of upper body with left arm .....................................191

7.15 Predicted joint profiles for a curved motion ....................................................192

7.16 Visualization interface .....................................................................................194

7.17 Layout interface ...............................................................................................195

CHAPTER 1

INTRODUCTION

1.1 Motivation

One of the main industrial applications of virtual human modeling and simulation

is to make an ergonomic evaluation of the man-machine interface of a product in a

computer-aided design (CAD) environment at a very early stage of design (also called

digital prototyping). The cost of developing prototypes is typically reduced or

eliminated entirely when digital prototyping is implemented. While digital mockups have already

made a significant impact on manufacturing, digital humans have not been

extensively used to evaluate designs. Today, the development of video games on the internet,

the development of interactive training systems, the computer-aided production of

movies, and especially virtual reality applications also raise the need for tools that will

facilitate the creation and animation of autonomous virtual characters in 3-D worlds.

Motion planning techniques will be used to direct digital human characters at the task

level and to create highly interactive systems. This motivates us to create fast planners

capable of using physics-based models to generate realistic-looking motions. The long-

term vision for this research is to create and develop methodologies and formulations for

intelligent digital humans that can be launched into a digital environment. These digital

humans will be queried for fundamental questions pertaining to ergonomics,

functionality, and any other aspect that is needed.

Towards this objective, it is necessary to simulate human postures and movements

under different task and environmental conditions. Man models have been used to

simulate human anthropometry and postures in the context of the product or workspace

being evaluated (Dooley, 1982). However, the lack of ease of use has been a problem

common to many three-dimensional man models, since ergonomists cannot be sure of

the operator’s posture and behavior without a mock-up or prototype of the product or

workplace. Moreover, these humans have appeared as animated avatars that can be

manipulated but have lacked the mathematical formulation to render them intelligent,

that is, able to perform tasks unaided by the user.

Difficulties in this respect have been encountered due to the lack of biomechanical

models with the large numbers of degrees of freedom (DOF) associated with various joints.

Other difficulties arise in determining the various joint angles when a working

posture is to be modified. Models involving many joints are difficult to handle,

especially if accuracy and intelligence are an issue. Further constraints have been

inherently imposed because of the lack of rigor in the field of ergonomics, where rules of

thumb and empirical results have traditionally been used. Due to these limitations,

ergonomic evaluations through the three-dimensional man models have only been

accomplished at an elementary level (Kuusisto and Mattila, 1990).

Simulating human postures is a very difficult and complex problem owing to the

redundancy of the human musculoskeletal system. Inverse kinematics (IK) has

traditionally been used, either as geometric closed-form methods or as numerical

methods, to predict the joint values of a kinematic model whose output resembles that

of the human. Those methods have met with success in the robotics field but have

not been able to address human motion prediction. One way of representing realistic

human motions is the method of rotoscopy (Thalmann and Thalmann, 1990), sometimes

called brute-force method. It consists of recording the exact motion based on real

persons off-line, then playing back on demand on-line. In future animation systems,

based on synthetic actors, it is expected that motion control will be automatically

performed using artificial intelligence and robotics techniques, drawing upon cognitive

science and behavior. Our objective is to give our virtual humans naturalistic motion

with an ability to autonomously predict their own motion and behavior. Indeed, motion

will be planned at a task level and computed using physical laws (in our case using

kinematics, dynamics, and optimization), which is the focus of this study.

Realistic motion and natural-looking simulations require a thorough

understanding of human movement control strategies. A simulation must take into account not only

the extrinsic geometric constraints imposed by the task (e.g., position and orientation of the hand)

and the intrinsic geometric constraints such as joint limits, but also the non-geometric

aspects of the task (e.g., force level). The arm reach posture for simply

touching a point in space with the index finger is certainly different from the

posture assumed for pressing a button in the same location with the index finger.

The purpose of this research is to obtain a better understanding of the

mathematical modeling of human motion using well-established kinematic theories.

We believe that the field of robotics, in particular, offers significant applicable theories

and numerical algorithms that, where appropriate, can be tailored to address long-standing

problems in human modeling and simulation. We list some of the long-standing

problems that will be addressed in this work.

Prediction of human motions and postures is particularly difficult for two

main reasons: (i) a large number of degrees of freedom is required to model

realistic motion, and (ii) the inverse kinematic solution (i.e., predicting a posture) is not as

straightforward as in the case of robots, because many solutions that are mathematically

admissible are unrealistic for a human. This has been a long-standing

problem in human modeling, simulation, and ergonomics. Indeed, traditional algebraic

and geometric IK methods are difficult to implement and yield an infinite number of

solutions, one of which must be selected. Some numerical IK methods have been used to

solve low degree-of-freedom human models. For human models, a realistic solution must

be determined, one that resembles the actual motion.

Ergonomic design has traditionally been associated with rules of thumb and

empirical data, based on thousands of experiments. In this research, we propose,

formulate, and demonstrate a number of ergonomic design methods that are

mathematically based and that yield an optimized result. Most human motion prediction

methods have taken into consideration the anthropometry, but have not taken into

account the task at hand. Our motivation for introducing a task-based approach, one that

simulates how humans react and perform according to different tasks, stems from the fact

that humans perform differently in response to the task at hand.

Robot trajectory planning has been widely studied. However, for humans,

trajectory planning is complex and requires careful analysis and attention. While barriers

in the reachable workspace surrounding a human have been delineated, crossing these

barriers under various conditions has not been addressed. It is our supposition that

humans determine a posture at the onset of motion such that a trajectory is followed in

space, uninterrupted. This initial configuration is chosen based on criteria that we also

introduce and that include crossability, comfort, dexterity, etc. In order to realistically

simulate human motion, it is important to study how humans psychologically determine

an initial posture (from an infinite number of possible postures) to cross a barrier. By

analogy, the same formulation also applies to robot manipulators.

Predicting joint motion variables as a function of time while the hand moves in

space poses a significant number of problems because of the infinite domain available for

a computer to choose. Many researchers have used the concept of minimum jerk alone to

design a path, mostly to study disabilities and human cognitive behavior dealing with

reach; however, predicting joint variables as a function of time has been a long-standing problem,

particularly when coupled with joint limits and more than seven degrees of freedom.

1.2 Objectives

(1) To develop methods and algorithms for kinematic modeling of realistic human

anatomy.

a. To enable a more realistic model of the human upper body, including the waist,

the shoulder complex, and the upper extremities. Limitations of existing human

models can be summarized as follows: (i) Low number of degrees of freedom,

particularly focused on using six or a maximum of seven DOF’s to represent a

biomechanical chain. This limitation is inherent in the fact that most inverse

kinematic solutions are only able to handle a maximum number of seven DOF’s.

Indeed, the four commercial software systems that perform digital human

modeling and simulation are also limited. (ii) Accurate biomechanical models

exist but cannot be executed in real time. We will endeavor to develop a general

mathematical method for representing human segmental motion.

b. Develop a mathematical formulation towards using a systematic method for

representing constrained large degree-of-freedom models of humans. Perhaps

the most difficult element of representing human segmental motion is the

incorporation of unilateral constraints representing joint ranges of motion into

the formulation. We will investigate methods for augmenting our modeling

technique and subsequent numerical methods for human motion prediction to

incorporate joint ranges of motion. Similarly, any such model must have the

ability to include the kinematics and dynamics of motion.

(2) To introduce the concept of task-based posture prediction.

c. Investigate and better understand how humans assume postures in space. Given

our postulate that humans must be represented with a larger number of degrees

of freedom than currently used, the issue of assuming a realistic posture and

subsequently a realistic motion, can now be addressed. Because human upper

extremities and upper bodies in general are highly redundant, predicting a

realistic (naturalistic) posture is typically difficult, if not impossible. The

objective of this work is to understand what is real and what is not, especially

when compared with robotic motions.

d. Introduce the concept of task-based driven behavior. We introduce the concept

of task-based posture prediction as a viable approach to predicting naturalistic

final postures of humans represented by a relatively large number of degrees of

freedom. This postulate is as follows: Given a task to be executed, a human will

inherently select one or more human performance measures (which we call cost

function) that will be minimized or maximized. This postulate gives rise to a

mathematical formulation for a computational algorithm, whereby human

performance measures must first be determined as functions that evaluate to real

numbers and that can be optimized. We will develop such functions and will

demonstrate the concept of task-based posture prediction.

e. Investigate various approaches and numerical algorithms for implementing task

based posture prediction using optimization algorithms. While the classical

theory of optimization is readily applicable, methods for implementing the

theory for a 15-DOF model (which we have used to represent the upper body)

are not direct. Indeed, a task in our postulate above comprises one or more cost

functions, which renders the problem a multi-objective optimization problem.

Predicting the design variables (in our case the set of 15 joint variables) subject

to a multitude of constraints will be investigated.

f. Investigate real-time (or near real-time) methods for implementing fast posture

prediction formulations. A key element of posture prediction is the ability to

calculate the vector of joint variables on-line, which requires a real- or near real-

time implementation. We will investigate various implementations of our

approach to posture prediction using both gradient based and genetic algorithms

towards a real-time implementation.

g. Validate our task-based posture prediction model against other software

systems and against experimental data. In order to validate our results, we shall

compare a simplified model (i.e., reduce the number of DOF’s to that of an

existing system). We shall also compare our results with experimental data

obtained through motion capture.

h. Implement a graphical interface for visualizing digital humans and predicted

motions. Visualization of postures and motions predicted by our numerical

algorithms cannot be performed in existing commercial software systems

because of the limitations in number of degrees of freedom. Therefore, a unique

graphical interface must be developed.

(3) To obtain a better understanding of human motion, particularly realistic prediction of

path trajectories for large DOF models.

i. Introduce the concept of kinematically-smooth trajectories as a method for

predicting initial starting configurations. For a large number of degrees of

freedom, redundancy may present impediments to smooth motion, particularly

when motion of the hand is executed along a path, but when the computational

algorithm yields solutions of the joint variables that would require switching of

the solution during the motion. As a result, we introduce a new concept called

kinematically-smooth motion. We then use this concept to design (hence

predict) path trajectories that would enable completion of the motion

uninterrupted. Because of the complexity of this problem, we shall investigate

converting the problem into a Runge-Kutta index 2 formulation and implement

it within our optimization algorithm.

j. To obtain a better understanding of joint-time variation (motion prediction) of

the upper body while the hand moves along a specified trajectory using the

concept of minimum jerk. While predicting static postures as stated above is a

significant problem in itself, predicting time-dependent joint variables for a

large DOF model of a human is a considerable problem. We will address the

determination of joint functions (parametrically time-dependent) that

simultaneously define a prescribed trajectory while taking into account the well-

established concept of minimum jerk.
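
As a hedged illustration of the minimum-jerk concept named in objective (j), the sketch below evaluates the fifth-order polynomial that is commonly used for a rest-to-rest minimum-jerk profile along a single coordinate of the hand path. The function names, the one-dimensional setting, and the zero boundary velocities and accelerations are assumptions made for illustration; this is not the joint-space formulation developed in this work.

```python
def minimum_jerk_position(x0, xf, duration, t):
    """Position at time t on a rest-to-rest minimum-jerk profile from x0 to xf."""
    tau = min(max(t / duration, 0.0), 1.0)  # normalized time, clamped to [0, 1]
    shape = 10 * tau**3 - 15 * tau**4 + 6 * tau**5
    return x0 + (xf - x0) * shape


def minimum_jerk_velocity(x0, xf, duration, t):
    """Velocity at time t on the same profile (zero at both ends)."""
    tau = min(max(t / duration, 0.0), 1.0)
    shape_rate = (30 * tau**2 - 60 * tau**3 + 30 * tau**4) / duration
    return (xf - x0) * shape_rate


if __name__ == "__main__":
    # Hypothetical reach: 0.4 m along one axis in 1.2 s, sampled at five instants.
    for step in range(5):
        t = 1.2 * step / 4
        print(round(t, 2), round(minimum_jerk_position(0.0, 0.4, 1.2, t), 4))
```

The profile starts and ends at rest and is symmetric about the midpoint of the movement, which gives the bell-shaped velocity curve usually associated with minimum-jerk reaching.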

1.3 Literature Review

Because of the multidisciplinary nature of this research, the literature review

section is partitioned into five subsections to review work in human modeling, posture

prediction, control barriers, trajectory planning and layout design.

1.3.1 Human Modeling

To establish a systematic method for biomechanically modeling human anatomy,

researchers have implemented conventions for representing segmental links and joints.

Human anatomy can be represented as a sequence of rigid bodies (links) connected by

joints. Of course, this serial linkage could be an arm, a leg, a finger, a wrist, or any other

functional mechanism. Joints in the human body vary in shape, function, and form. The

complexity offered by each joint must also be modeled, to the extent possible, to enable a

correct simulation of the motion. The degree by which a model replicates the actual

physical model is called the level of fidelity.

Perhaps the most important element of a joint is its function, which may vary

according to the joint’s location and physiology. The physiology becomes important

when we discuss the loading conditions of a joint. In terms of kinematics, we shall

address the function in terms of the number of degrees of freedom associated with its

overall movement. Muscle, ligament, and tendon actions at a joint are also important and

contribute to the function.

For example, consider the elbow joint, which is considered a hinge or one degree-

of-freedom (DOF) rotational joint (e.g., the hinge of a door) because it allows for flexion

and extension in the sagittal plane (Figure 1.1) as the radius and ulna rotate about the

humerus. We shall represent this joint by a cylinder that rotates about one axis and has

no other motions (i.e., 1 DOF). Therefore, we can now say that the elbow is

characterized by one DOF and is represented as a cylindrical rotational joint also shown

in Figure 1.1.

Figure 1.1 One DOF elbow

Figure 1.2 The shoulder joint (1. Clavicle. 2. Body of scapula. 3. Surgical neck of humerus. 4. Anatomical neck of humerus. 5. Coracoid process. 6. Acromion)

On the other hand, consider the shoulder complex (Figure 1.2). The

glenohumeral joint (shoulder joint) is a multi-axial (ball and socket) synovial joint

between the head of the humerus (5) and the glenoid cavity (6). There is a 4 to 1

incongruency between the large round head of the humerus and the shallow glenoid

cavity. A ring of fibrocartilage attaches to the margin of the glenoid cavity forming the

glenoid labrum. This serves to form a slightly deeper glenoid fossa for articulation with

the head of the humerus.

There are a number of methods that can be used to model this complex joint

(Figure 1.3). One such method (Maurel, 1999) is to consider the shoulder girdle

(considering bones in pairs) as four joints that can be distinguished as: the sterno-

clavicular joint, which articulates the clavicle by its proximal end onto the sternum, the

acromio-clavicular joint, which articulates the scapula by its acromion onto the distal end

of the clavicle, the scapulo-thoracic joint, which allows the scapula to glide on the thorax,

and the gleno-humeral joint, which allows the humeral head to rotate in the glenoid fossa

of the scapula.

Figure 1.3 A model of the shoulder complex (segment labels: humerus, scapula, clavicle, thorax)

Another method takes into consideration the final gross movement of the joint

(Abdel-Malek et al., 2001), as abduction/adduction (about the anteroposterior axis of the

shoulder joint), flexion/extension and transverse flexion/extension (about the

mediolateral axis of the shoulder joint). Note that these motions provide for three

rotational degrees of freedom having their axes intersecting at one point. This gives rise

to the effect of a spherical joint typically associated with the shoulder joint (Figure 1.4).

In addition, the upward/downward rotation of the scapula gives rise to two substantial

translational degrees of freedom, for a total of 5 DOF’s in the shoulder complex. This model

allows for consideration of the coupling between some of the joints, as is the case in the

shoulder where muscles extend over more than one segment. When muscles are used to

lift the arm in a rotational motion, unwittingly, a translational motion of the shoulder

occurs.

Figure 1.4 Modeling of the shoulder complex as three revolute and two prismatic DOF’s (joint variables q1 to q5; base frame axes xo, yo)

Hogfors et al. (1987) introduced a rigid body shoulder model of twelve degrees of

freedom: three orientations for each bone and the position of the center of the humeral

head. Hogfors et al. reported that the twelve descriptive kinematic degrees of freedom

are functionally interrelated due to the constraints among them. The loop conformation

of the trunk, the clavicle and the scapula induces interdependencies between these

parameters, thus reducing the number of true DOF’s. Groot and Brand (2001) developed

a three-dimensional regression model of the shoulder rhythm that showed that the

orientation of the clavicle and the scapula is dependent on the humerus orientation.

Lepoutre (1993) modeled the human as a 10-DOF system of rotational joints: 6 for the trunk, 3

for the arm, and 1 for the leg. Jung and Choe (1996) modeled the upper body

with seven degrees of freedom: hip flexion, hip lateral bending, shoulder

flexion, shoulder abduction-adduction, shoulder rotation, elbow flexion and wrist flexion-

extension. Maurel (1999) developed a 10-DOF shoulder-arm model for the CHARM

project of which 8 are for the shoulder, 1 is for the elbow and 1 is for the wrist.

To describe the translational and rotational relationships between adjacent links of

the open kinematic chain, the Denavit and Hartenberg (1955) notation (DH notation) has

been used because of its strength in handling large numbers of degrees of freedom and

because it enables systematic kinematic and dynamic analyses. In robotics, the DH

notation is used to systematically attach a coordinate system (body-attached frame) to

each link of an articulated chain (Asada and Slotine, 1986). The DH notation

uses a minimum number of parameters to completely describe the kinematic relationship,

and the relative location of the two frames can be completely determined by four

parameters. Indeed, the Denavit-Hartenberg representation method has been

demonstrated to yield an effective method for modeling humans (Jung et al., 1995;

Abdel-Malek et al., 2001).
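
To make the four-parameter description concrete, the following minimal sketch builds the homogeneous transform between two adjacent link frames from the parameters (theta, d, a, alpha) and chains such transforms along a serial linkage. It assumes the standard (distal) DH convention, in which the transform is Rot_z(theta) Trans_z(d) Trans_x(a) Rot_x(alpha); the text does not state which variant of the convention is adopted, and the function names and link lengths are hypothetical.

```python
import math


def dh_transform(theta, d, a, alpha):
    """4x4 transform between adjacent link frames (standard DH convention assumed)."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca, st * sa, a * ct],
        [st, ct * ca, -ct * sa, a * st],
        [0.0, sa, ca, d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(A, B):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def forward_kinematics(dh_rows):
    """Chain the link transforms; each row is (theta, d, a, alpha)."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for theta, d, a, alpha in dh_rows:
        T = matmul(T, dh_transform(theta, d, a, alpha))
    return T


if __name__ == "__main__":
    # Hypothetical planar two-link chain: lengths 0.30 m and 0.25 m.
    rows = [(math.pi / 2, 0.0, 0.30, 0.0), (-math.pi / 2, 0.0, 0.25, 0.0)]
    T = forward_kinematics(rows)
    print([round(T[i][3], 6) for i in range(3)])  # position of the last frame
```

Because every joint contributes one row of four parameters, adding degrees of freedom only lengthens the table passed to `forward_kinematics`, which is the property that makes the notation attractive for large-DOF models.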

In another modeling method by Wang et al. (1998), a model was developed

expressing the upper arm axial motion limits as a function of elbow position. It has been

shown that the axial motion range of the upper arm depends strongly on the position of

the upper arm in the shoulder sinus cone and varies on average from 94° to 157°. The

elbow joint motion range is characterized by the simple inequality from 0 to the elbow

maximum flexion angle, which is on average around 142° (Chaffin and Anderson, 1991).

1.3.2 Posture Prediction

Posture prediction can be considered as an inverse kinematic problem in robotics:

Given a kinematic chain, and given a point in space that must be reached (and sometimes

given the trajectory of the hand and its orientation), it is required to calculate the set of

joint angles that achieve a desired posture. It is evident that this is an ill-posed problem

because of the high level of redundancy inherent in the musculoskeletal system. A

solution to this ill-posed problem in terms of movement control requires, in addition to

biophysical and anatomical constraints, some other constraints to reduce the number of

degrees of freedom (Gielen et al., 1995). It is hypothesized that human body control

utilizes a cost function attached to each joint, which defines a cost value for each joint

angle, and a posture configuration is chosen based on the minimum total cost (Cruse et

al., 1990; Jung et al., 1994). Posture prediction models developed under this hypothesis

used cost functions such as joint torque and L5/S1 pressure from the biomechanical

perspective, and joint discomfort from the psychophysical perspective. Among these

functions, L5/S1 pressure and energy consumption have been used mainly to predict

whole-body postures and postures involving heavy loads, such as in a lifting task (Jung

and Choe, 1996). One method to calculate the discomfort is to conduct a series of

experiments, where the perceived discomfort of human subjects is measured and a model

to calculate the discomfort from the joint angles is obtained through regression analysis

(Jung and Choe, 1996).
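
One way to write the cost-function hypothesis above as a mathematical program is sketched below. The symbols are assumptions introduced here for illustration and are not taken from the cited works: q is the vector of n joint angles, c_i is the cost attached to joint i, x(q) is the hand position given by the forward kinematics, and q_i^L and q_i^U are the lower and upper limits of joint i.

```latex
\begin{aligned}
\min_{q}\quad & \sum_{i=1}^{n} c_i(q_i) \\
\text{subject to}\quad & x(q) = x_{\text{target}}, \\
& q_i^{L} \le q_i \le q_i^{U}, \qquad i = 1, \dots, n .
\end{aligned}
```

Under this reading, the choice of c_i (for example a torque-based or a discomfort-based measure) is what distinguishes one posture prediction model from another, while the constraints stay the same.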

There have been two schools of thought regarding posture prediction. The first,

perhaps the more traditional, uses anthropometrical data, collected from performing

thousands of experiments by human subjects, or simulation using three-dimensional

computer-aided human-modeling software [see for instance, Porter et al. (1990) and Das

and Sengupta (1995)], which were statistically analyzed to form a predictive model of

posture such as regression models. This school of thought is referred to as empirical-

statistical modeling. These models have been implemented in various simulation

software systems with some variations as to the method for selecting the most probable

posture. Among the empirical-statistical modelers are Beck and Chaffin (1992), Zhang

and Chaffin (1996), Das and Behara (1998), and Faraway et al. (1999).

The second school of thought often used biomechanics and kinematics as a

predictive tool (often referred to as inverse kinematic solutions), on a posture that has not

been observed but has been estimated as a likely posture for a task (Tracy, 1990). This

approach mathematically models the motion of a limb with the goal of formulating a set

of equations that can be solved for the joint variables. Among the researchers who

belong to this school of modeling are Jung et al. (1992; 1995), Kee et al. (1994), Jung and

Choe (1996), Kee and Kim (1997), and Wang (1999).

Researchers that belong to the first school of modeling (in particular Beck and

Chaffin, 1992) cautioned that the inverse kinematics algorithm is not necessarily correct

for prediction of posture because of its theoretical foundation, difficulty with evaluating

the Jacobian, determining a closed form equation for the posture, and in modeling large

number of degrees of freedom. On the other hand, others (Abdel-Malek et al., 2001)

have stated that the use of statistical models alone does not provide avenues for rigorous

ergonomic design and does not reflect a task-based approach. An impracticable number of

experiments involving human subjects would have to be conducted for each specific task, for

each gender, age, and anthropometric measure.

The following summarizes the methods that have been proposed for posture

prediction. Generally speaking, methods used in posture prediction can be divided into

four categories: experimental, algebraic, geometric, and iterative IK solutions.

The experimental approach is based primarily on statistical regression equations

developed from a large number of measured postures (Beck and Chaffin, 1992; Verriest

et al., 1994). The advantage of statistical regression-based methods is that no numerical

iterations are needed and joint limits are automatically satisfied. However, their

application is limited by the database. Furthermore, accurate prediction requires

many experiments covering people of different sizes. Faraway et al.

(1999) used the pseudo-inverse to adjust the posture obtained from the experimental

database so that it meets the position constraints of the end-effector. This method is limited to

situations in which a database of human postures has been generated for the specific desired

task. It becomes very inconvenient or impossible for general task-based posture

prediction.

Algebraic solutions are significantly faster than iterative IK solutions. The

problem with the algebraic method is that not all kinematic chains have a closed-form

solution, especially for kinematic chains with more than 6 DOF’s (McKerrow, 1991).

Furthermore, the derivation of the closed-form equations is a lengthy process, and it is

impossible to apply the algebraic method to general cases.

A geometric algorithm of inverse kinematics was proposed to predict the arm

reach posture (Wang and Verriest, 1998; Wang, 1999). The main advantage of the

geometric method compared with the algebraic one is that the non-linear nature of the

shoulder joint limit can be handled in a direct and easy way and that matrix inverse

calculation is avoided. This geometric algorithm can only be applied to the specific

model of the arm where only the rotational movement of the shoulder was considered

since it used the geometric relation between shoulder, elbow and wrist. Thus, it is not

suitable for general cases when the more complex shoulder model is involved or when

larger number of degrees of freedom is used.

Generalized iterative IK methods enable any structure with an arbitrary number of

degrees of freedom to be animated with minimal human intervention. Because of this

advantage, iterative IK methods have become the main approach applied in posture

prediction. As a result, numerous reports have appeared that use this approach, but that

are limited in breadth and generality. For example, Goldenberg et al. (1985) reported a

generalized solution to the IK problem in robotic manipulators. A modified Newton-

Raphson method was used where the Jacobian matrix was partitioned according to the six

joint correction variables and (n-6) free joint correction variables. For each iteration, the

free joint correction variables were obtained by optimizing some cost function. This

method is very time-consuming for systems with many redundant DOF’s because of the optimization

needed in each iteration step.

The so-called Distributed Positioning (DP) concept was originally developed for

problems where massive robots were involved in fast manipulation (Potkonjak, 1990).

The same author (Potkonjak et al., 1998) later applied this concept to the motion analysis

of a redundant anthropomorphic arm/hand with 8 DOF’s during writing. The modeling is

based on the separation of the prescribed movement into two motions: a smooth global motion and

a fast local motion. The former is distributed to the subsystem with the greatest

inertia, called the basic configuration, while the latter is distributed to the redundancy

subsystem. A numerical integration method is then used to solve the basic configuration.

The redundancy subsystem is determined in the second step with the knowledge of the

basic configuration. Although this method avoids the redundancy in the first step, it still

needs the pseudo-inverse in the second step. Moreover, the global and local motions for

each specific task need to be analyzed precisely prior to the initiation of problem

solving.

The most popular iterative approach to posture prediction is based on the pseudo-

inverse method used for redundant manipulator control in robotics (e.g., Nakamura,

1991; Chiaverini and Siciliano, 1991). The general formulation of pseudo-inverse

method is based on the following equation

$$\Delta\theta = J^{+}\,\Delta x + (I - J^{+}J)\,\Delta z \qquad (1.3.1)$$

where Δθ is the vector of the joint variables, Δx describes the main task as a variation of

the end-effector position and orientation in Cartesian space, J is the Jacobian matrix, $J^{+}$

is the pseudo-inverse of J, Δz describes a secondary task in the joint space. $(I - J^{+}J)$ is

a projection operator on the null space of the linear transformation J, which means any

value of Δz won’t modify the achievement of the main task. Consequently, Eq. (1.3.1)

provides a set of solutions. The solution obtained only by the first term is the one with

minimized norm among all the solutions. Usually Δz is calculated so as to minimize a

cost function. For example, Boulic and Thalmann (1992) proposed an approach for the

animation of articulated figures. The fundamental idea of this approach is to consider

any desired joint space motion as a reference model inserted into the secondary task of an

inverse kinematic control scheme. In other words, Δz is calculated to minimize the

difference between the resolved motion and the reference motion.
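
The structure of Eq. (1.3.1) can be illustrated with a small numerical sketch. The code below assumes a planar arm with three revolute joints and a two-dimensional hand-position task, so that one degree of redundancy remains; the link lengths and function names are hypothetical. It forms the pseudo-inverse as J^T (J J^T)^(-1), which is valid only when J has full row rank (away from singular configurations), and adds the null-space projection of a secondary joint-space step Δz.

```python
import math

LINKS = [0.30, 0.25, 0.15]  # hypothetical link lengths (m) of a planar 3-joint arm


def jacobian(q):
    """2 x n Jacobian of the planar hand position with respect to the joint angles."""
    n = len(q)
    cumulative, angle = [], 0.0
    for qi in q:
        angle += qi
        cumulative.append(angle)
    J = [[0.0] * n, [0.0] * n]
    for j in range(n):
        for k in range(j, n):  # joint j moves every link from j outward
            J[0][j] -= LINKS[k] * math.sin(cumulative[k])
            J[1][j] += LINKS[k] * math.cos(cumulative[k])
    return J


def pseudo_inverse(J):
    """n x 2 pseudo-inverse J^T (J J^T)^-1; assumes J has full row rank."""
    n = len(J[0])
    a = sum(J[0][k] * J[0][k] for k in range(n))
    b = sum(J[0][k] * J[1][k] for k in range(n))
    d = sum(J[1][k] * J[1][k] for k in range(n))
    det = a * d - b * b  # approaches zero near a singular configuration
    inv = [[d / det, -b / det], [-b / det, a / det]]
    return [[J[0][i] * inv[0][c] + J[1][i] * inv[1][c] for c in range(2)]
            for i in range(n)]


def joint_update(q, dx, dz):
    """Joint step: J+ dx + (I - J+ J) dz, following Eq. (1.3.1)."""
    J = jacobian(q)
    Jp = pseudo_inverse(J)
    n = len(q)
    primary = [Jp[i][0] * dx[0] + Jp[i][1] * dx[1] for i in range(n)]
    projector = [[(1.0 if i == j else 0.0)
                  - (Jp[i][0] * J[0][j] + Jp[i][1] * J[1][j])
                  for j in range(n)] for i in range(n)]
    secondary = [sum(projector[i][j] * dz[j] for j in range(n)) for i in range(n)]
    return [primary[i] + secondary[i] for i in range(n)]


if __name__ == "__main__":
    q = [0.3, 0.6, 0.4]
    print(joint_update(q, [0.01, -0.02], [0.0, 0.0, 0.0]))   # minimum-norm step
    print(joint_update(q, [0.01, -0.02], [0.5, -0.2, 0.1]))  # same task, other posture
```

Both calls produce the same first-order hand displacement; they differ only in how the redundant joint motion is distributed, which is exactly the freedom that a secondary criterion is meant to exploit.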

Lepoutre (1993) used pseudo-inverse technique to predict upper body postures of

a two-dimensional model and compared different optimization criteria such as

minimization of articular torques and remoteness of articular limits (dexterity criterion).

It appeared that the workspace geometrical organization corresponding to the dexterity

criterion optimal posture is generally the best one. Jung et al. (1995) applied the same

method with a dexterity criterion (joint range availability) to predict upper body postures

of a three-dimensional model, where the DH notation was used to represent human

motion. The same group reportedly demonstrated that humans adopt postures of

minimum discomfort among all feasible body configurations (a result we shall expound

upon in our work). Similar results were reported by Dysart and Woldstad (1996) who

used three separate models and objective functions to predict the postures of humans

performing static sagittal lifting tasks. The models used a common inverse kinematics

characterization to represent mathematically feasible postures, but explored different

criteria functions for selecting a final posture, where the minimum total torque was

shown to be more accurate. Their models were limited to planar motion (stick models

were used) with a relatively small number of DOF’s. Jung and Choe (1996) predicted the

arm reach posture using an algorithm that works in several steps. In the first step it

predicts multiple sets of joint angles, which position the hand to a specific target location

using the inverse kinematics technique; secondly, it applies joint range of motion criteria

to find kinematically feasible posture set among the predicted body postures; finally, it

applies the discomfort prediction model to feasible postures and selects the most

favorable upper body posture that has a minimum discomfort value. They showed that

hip lateral bending and wrist flexion are the most sensitive joint movements among the

seven joints they modeled. Boulic et al. (1996) extended the range of inverse kinematics

based on pseudo-inverse by integrating mass distribution information to embody the

position control of the center of gravity of any articulated figure in single support (open

tree structure). Zhang et al. (1998) used a weighted pseudo-inverse method for posture

prediction in seated reaching movements. The weights were obtained through

minimizing the difference between the predicted postures and the experimental data.
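
The three-step procedure attributed above to Jung and Choe (1996) can be sketched on a toy problem. The code below is an illustration under stated assumptions, not their implementation: it uses a planar three-joint arm, generates candidate postures by sweeping the orientation of the last segment and solving the remaining two-link problem in closed form, and ranks feasible postures with a placeholder discomfort score (squared deviation from a neutral posture, normalized by joint range) rather than their regression model. All constants and function names are hypothetical.

```python
import math

LINKS = (0.30, 0.25, 0.15)                        # hypothetical segment lengths (m)
LIMITS = ((-1.0, 2.5), (0.0, 2.5), (-1.2, 1.2))   # hypothetical joint ranges (rad)
NEUTRAL = (0.0, 0.5, 0.0)                         # hypothetical neutral posture (rad)


def wrap(angle):
    """Map an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def hand_position(q):
    """Forward kinematics of the planar chain."""
    x = y = total = 0.0
    for length, qi in zip(LINKS, q):
        total += qi
        x += length * math.cos(total)
        y += length * math.sin(total)
    return x, y


def candidate_postures(target, samples=72):
    """Step 1: joint-angle sets that place the hand at the target."""
    l1, l2, l3 = LINKS
    found = []
    for k in range(samples):
        phi = 2.0 * math.pi * k / samples  # orientation of the last segment
        wx = target[0] - l3 * math.cos(phi)
        wy = target[1] - l3 * math.sin(phi)
        c2 = (wx * wx + wy * wy - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
        if abs(c2) > 1.0:
            continue  # the first two links cannot reach this wrist point
        for sign in (1.0, -1.0):  # the two closed-form elbow solutions
            q2 = sign * math.acos(c2)
            q1 = wrap(math.atan2(wy, wx)
                      - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2)))
            found.append((q1, q2, wrap(phi - q1 - q2)))
    return found


def within_limits(q):
    """Step 2: joint range-of-motion check."""
    return all(lo <= qi <= hi for qi, (lo, hi) in zip(q, LIMITS))


def discomfort(q):
    """Step 3 score: placeholder, not the regression model of the cited study."""
    return sum(((qi - ni) / (hi - lo)) ** 2
               for qi, ni, (lo, hi) in zip(q, NEUTRAL, LIMITS))


def predict_posture(target):
    """Return the feasible candidate with the lowest discomfort, or None."""
    feasible = [q for q in candidate_postures(target) if within_limits(q)]
    return min(feasible, key=discomfort) if feasible else None


if __name__ == "__main__":
    print(predict_posture((0.45, 0.25)))
```

The enumerate-filter-rank structure is simple to follow, but the candidate set grows quickly with the number of redundant degrees of freedom, which is one reason direct optimization over the joint variables becomes attractive for larger models.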

There are some drawbacks with pseudo-inverse methods. First, the second term

in Eq. (1.3.1) is normally omitted because of its computational complexity. Consequently,

unnatural motion sometimes results, because the first term alone yields the minimum-norm

solution. If the second term is considered, optimization needs to be

used in every step along a path to calculate Δz, which greatly increases the computational

cost. Second, the local nature of this approach provides a local solution based on the

current configuration of the articulated structure. As a consequence, a different initial

configuration leads to a different final configuration. Although this is true under some

circumstances, postures for many other cases mainly relate to discomfort or some other

criteria no matter what kind of initial posture is assumed. Third, pseudo-inverse

approach naturally computes a path for the end-effector going from an initial position to a

19

final position; this is computationally wasteful because most ergonomic

evaluations need only the final posture. Because of the above

limitations, the pseudo-inverse method has not been applied to the large-DOF systems that

realistic human modeling is likely to require.
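
The role of the two terms can be illustrated with a short sketch. It assumes that Eq. (1.3.1) has the standard redundancy-resolution form Δθ = J⁺Δx + (I − J⁺J)Δz; the planar three-link arm, its link lengths, and all function names are hypothetical choices made only for this illustration.

```python
import math

def jacobian(theta, lengths):
    """Position Jacobian (2 x n) of a planar serial arm with revolute joints."""
    n = len(theta)
    cum, total = [], 0.0
    for t in theta:
        total += t
        cum.append(total)            # absolute angle of each link
    J = [[0.0] * n for _ in range(2)]
    for j in range(n):
        for i in range(j, n):        # joint j moves every link from j outward
            J[0][j] -= lengths[i] * math.sin(cum[i])
            J[1][j] += lengths[i] * math.cos(cum[i])
    return J

def pseudo_inverse(J):
    """Right pseudo-inverse J+ = J^T (J J^T)^-1 of a full-rank 2 x n matrix."""
    n = len(J[0])
    a = sum(J[0][k] * J[0][k] for k in range(n))
    b = sum(J[0][k] * J[1][k] for k in range(n))
    d = sum(J[1][k] * J[1][k] for k in range(n))
    det = a * d - b * b
    if abs(det) < 1e-12:
        raise ValueError("Jacobian is (nearly) singular")
    inv = [[d / det, -b / det], [-b / det, a / det]]
    return [[J[0][k] * inv[0][c] + J[1][k] * inv[1][c] for c in range(2)]
            for k in range(n)]

def apply(J, v):
    """Multiply the 2 x n matrix J by the n-vector v."""
    return [sum(J[r][k] * v[k] for k in range(len(v))) for r in range(2)]

def ik_step(theta, lengths, dx, dz=None):
    """One differential IK step: J+ dx, plus (I - J+ J) dz when dz is given."""
    J = jacobian(theta, lengths)
    Jp = pseudo_inverse(J)
    n = len(theta)
    dtheta = [Jp[k][0] * dx[0] + Jp[k][1] * dx[1] for k in range(n)]  # first term
    if dz is not None:
        Jdz = apply(J, dz)
        for k in range(n):           # second term: projection of dz onto null(J)
            dtheta[k] += dz[k] - (Jp[k][0] * Jdz[0] + Jp[k][1] * Jdz[1])
    return dtheta

theta = [0.3, 0.5, 0.4]              # hypothetical joint angles (rad)
lengths = [1.0, 1.0, 1.0]            # hypothetical link lengths
dx = [0.01, -0.02]                   # desired end-effector displacement
step_min_norm = ik_step(theta, lengths, dx)
step_with_null = ik_step(theta, lengths, dx, dz=[1.0, 0.0, 0.0])
```

Both steps produce the same first-order end-effector displacement; they differ only in the joint motion that the second term adds inside the null space of the Jacobian. Choosing Δz well at every step is where the extra optimization cost mentioned above comes from.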

Zhao and Badler (1989, 1994) used a non-linear optimization method that can

take into account multiple geometric constraints (e.g. position, orientation, etc.) on the

hand or reference points. These constraints were formulated as a goal function to be

minimized, providing a very solid method especially when the body is overconstrained.

The minimization algorithm of Broyden, Fletcher, Goldfarb, and Shanno (BFGS)

(Fletcher, 1987) is shown to converge quickly using a small number of objective function

evaluations, making it the best method for solving IK systems of those considered in the

studies by Chin et al. (1997) and Zhao and Badler (1994). However, Zhao and Badler

did not consider human factors in their optimization method, which makes their solutions less

realistic. Moreover, the gradient-based optimization method they used terminates at

local minima.
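
The dependence of a gradient-based minimizer on its starting guess can be seen in a small sketch. Plain gradient descent is substituted here for BFGS to keep the example short, and the two-link planar arm, the target, the step size, and the function names are all assumptions rather than the formulation of Zhao and Badler. The goal function is the squared distance between the hand and the target.

```python
import math

L1, L2 = 1.0, 1.0          # hypothetical link lengths
TARGET = (1.0, 1.0)        # hypothetical hand target

def hand(theta):
    """Hand position of a two-link planar arm."""
    t1, t12 = theta[0], theta[0] + theta[1]
    return (L1 * math.cos(t1) + L2 * math.cos(t12),
            L1 * math.sin(t1) + L2 * math.sin(t12))

def goal(theta):
    """Goal function: half the squared hand-to-target distance."""
    x, y = hand(theta)
    return 0.5 * ((x - TARGET[0]) ** 2 + (y - TARGET[1]) ** 2)

def goal_gradient(theta):
    """Analytic gradient of the goal function with respect to the joint angles."""
    t12 = theta[0] + theta[1]
    x, y = hand(theta)
    ex, ey = x - TARGET[0], y - TARGET[1]
    g1 = ex * (-y) + ey * x                                   # d/d(theta1)
    g2 = ex * (-L2 * math.sin(t12)) + ey * (L2 * math.cos(t12))  # d/d(theta2)
    return (g1, g2)

def descend(theta, step=0.1, iterations=2000):
    """Fixed-step gradient descent from the given starting posture."""
    theta = list(theta)
    for _ in range(iterations):
        g = goal_gradient(theta)
        theta[0] -= step * g[0]
        theta[1] -= step * g[1]
    return theta

elbow_a = descend((0.2, 1.0))    # converges to the posture with theta2 = +pi/2
elbow_b = descend((1.3, -1.0))   # converges to the posture with theta2 = -pi/2
```

In this toy problem both postures reach the target, so both are minima of equal value; the minimizer simply returns the one nearest its starting guess. Once further terms are added to the goal function, the minima generally differ in value, and the method may stop at a poorer one.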

Some other approaches have been presented. Badler et al. (1987) developed a

reach tree-balancing algorithm for multiple reach constraints where different weights

were assigned to different constraints. The reach problem was solved using a greedy

algorithm based on the triangle inequality. Consecutive links are aligned until there is

sufficient length to solve the reach as a triangle or the chain is fully straightened. This

algorithm did not consider joint limits and could therefore produce undesired results. Artificial

neural network models have also emerged, and they provide more accurate predictions than

the standard statistical models (Hestenes, 1994; Jung and Park, 1994; Eksioglu et al.,

1996).

1.3.3 Control Barriers

The problem of determining kinematically-smooth path trajectories has only

marginally been addressed in the literature. However, delineation of singular behavior

where the manipulator may or may not be able to cross a barrier was addressed by many

researchers and is typically based on a null-space criterion of the manipulator’s Jacobian

(Spanos and Kohli, 1985; Lai and Yang, 1986; Shu et al., 1986; Soylu and Duffy, 1988;

Burdick, 1991; Lipkin and Pohl, 1991; Pai and Leu, 1992; Tourassis and Ang, 1992).

While specific crossability criteria on barriers in the workspace were reported by

Nielsen et al. (1991), the fundamental concept of crossable and noncrossable surfaces

inside a manipulator’s workspace was addressed early on by Oblak and Kohli (1988).

Although most of these works have touched upon the concept of crossable surfaces using a

singularity criterion of the Jacobian, a unified methodology for identifying position

control problems across a barrier has not been presented. One criterion to define possible

motion (so-called feasible trajectory) from a singularity was presented by Chevallereau

and Daya (1994) and Chevallereau (1996).

Haug et al. (1995) presented a numerical algorithm for identifying and analyzing

barriers to output control of manipulators using first- and second-order Taylor

approximations of the output in selected directions. Haug and colleagues showed that the

output velocity in the direction normal to such curves and surfaces must be zero (Haug et

al., 1996) and manipulator boundaries were consequently mapped.

More recently, a method was presented in which singular surfaces in manipulator

workspaces were delineated and acceleration-based crossability criteria were defined

(Yeh, 1996; Abdel-Malek and Yeh, 1997, 2000; Abdel-Malek et al., 1997, 2001).

1.3.4 Trajectory Planning

First, we will review the approaches used for trajectory generation in robotics.

Two common approaches are used to plan manipulator trajectories. The first approach

requires the user to explicitly specify a set of constraints (e.g., continuity and

smoothness) on position, velocity, and acceleration of the manipulator’s generalized

coordinates at selected locations (called knot points or interpolation points) along the

trajectory. The trajectory planner then selects a parameterized trajectory from a class of

functions (usually the class of polynomial functions of degree n or less, for some n), in

the time interval [t_0, t_f] that “interpolates” and satisfies the constraints at the

interpolation points. In the second approach, the user explicitly specifies the path that the

manipulator must traverse by an analytical function, such as a straight-line path in

Cartesian coordinates, and the trajectory planner determines a desired trajectory either in

joint coordinates or Cartesian coordinates that approximates the desired path.
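
As an illustration of the first approach, the sketch below fits a cubic polynomial to one joint coordinate between two interpolation points, with position and velocity prescribed at both ends. The function names and the numerical values are hypothetical; a full planner would chain such segments across all knot points and all joints.

```python
def cubic_coefficients(q0, qf, v0, vf, t0, tf):
    """Coefficients of q(t) = a0 + a1*s + a2*s^2 + a3*s^3 with s = t - t0,
    matching position and velocity at both t0 and tf."""
    T = tf - t0
    dq = qf - q0
    a0 = q0
    a1 = v0
    a2 = (3.0 * dq - (2.0 * v0 + vf) * T) / T ** 2
    a3 = (-2.0 * dq + (v0 + vf) * T) / T ** 3
    return a0, a1, a2, a3

def evaluate(coeffs, t0, t):
    """Return (position, velocity) of the cubic segment at time t."""
    a0, a1, a2, a3 = coeffs
    s = t - t0
    position = a0 + a1 * s + a2 * s ** 2 + a3 * s ** 3
    velocity = a1 + 2.0 * a2 * s + 3.0 * a3 * s ** 2
    return position, velocity

# Hypothetical segment: joint angle from 0.2 rad to 1.0 rad over t in [1, 3] s
coeffs = cubic_coefficients(q0=0.2, qf=1.0, v0=0.0, vf=0.5, t0=1.0, tf=3.0)
start_state = evaluate(coeffs, 1.0, 1.0)
end_state = evaluate(coeffs, 1.0, 3.0)
```

A cubic has four coefficients, which is exactly enough to satisfy the two position and two velocity constraints of one segment; constraining acceleration as well requires a higher-degree polynomial.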

By using a quaternion to represent rotations and translations, Taylor (1979)

proposed an approach called the bounded deviation joint path. This approach requires a

motion planning phase that selects enough knot points so that the manipulator can be

controlled by linear interpolation of joint values.

Lin et al. (1983) proposed an approach where a set of joint spline functions is

used to fit the segments among the selected knot points along the given Cartesian path.

This approach involves the conversion of the desired Cartesian path into its functional

representation of n joint trajectories, one for each joint. Since cubic polynomial

trajectories are smooth and have small overshoot of angular displacement between two

adjacent knot points, Lin et al. adopted the idea of using cubic spline polynomials to fit

the segment between two adjacent knots. The total traveling time for the manipulator

was minimized by adjusting the time intervals between two adjacent knot points subject

to the velocity, acceleration, jerk, and torque constraints.

Bobrow (1988) presented a path planning technique, which makes use of

approximations of an initial feasible trajectory in conjunction with an iterative, nonlinear

parameter optimization algorithm to produce time-optimal motions for a manipulator

with 3 DOF’s in a workspace containing obstacles. The Cartesian path of the

manipulator was represented with B-spline polynomials, and the shape of this path was

varied in a manner that minimized the traversal time. Obstacle avoidance constraints

were included in the problem through the use of distance functions. His method did not

prevent the arm from colliding with the obstacle at points other than the tip.

Yun and Xi (1996) used genetic algorithms for optimum motion planning in joint

space for robots, where some inter-knots were selected and their parameters and the

traveling time of each trajectory segment were coded and optimized. Similarly,

Constantinescu and Croft (2000) proposed a smooth, time-optimal trajectory

planning method that minimizes time under path constraints, torque limits and torque rate

limits. The variables of the optimization are the end-effector pseudo-velocities at the

pre-selected knot points along the path and the slopes of the trajectory in the s-ṡ phase plane

at the path end-points, where s is the path parameter, e.g., the arc length. The path itself

is pre-imposed as a constraint.

Significant research has also been done on collision free motion planning. For

example, in the early 1980s, Lozano-Perez (1983) introduced the concept of a robot’s

configuration space, in which the robot is represented as a point–called a configuration–

in a parameter space encoding the robot’s DOF’s–the configuration space. Path planning

for a dimensioned robot is thus “reduced” to the problem of planning a path for a point in

a space that has as many dimensions as the robot has DOF’s. Two popular approaches

were introduced in the 1980s: approximate cell decomposition, where the free space is

represented by a collection of simple cells (Brooks and Lozano-Perez, 1983), and

potential field (Khatib, 1986). Potential fields are used in path planning to assign each

region a numeric value that indicates how safe that region is. But

neither of these approaches extends well to robots with more than 4 or 5 DOF’s: either the

number of cells becomes too large or the potential field has local minima.
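
The potential field idea can be sketched for a point robot in the plane. The attractive and repulsive terms below follow a commonly used textbook form, and the gains, the influence radius, the single point obstacle, and the fixed-step descent are all assumptions made for this illustration.

```python
import math

GOAL = (5.0, 0.0)                    # hypothetical goal position
OBSTACLE = (2.5, 0.6)                # hypothetical point obstacle
K_ATT, ETA, RHO0 = 1.0, 0.5, 1.0     # attractive gain, repulsive gain, influence radius

def gradient(q):
    """Gradient of U(q) = 0.5*K_ATT*|q - goal|^2 + 0.5*ETA*(1/rho - 1/RHO0)^2,
    where the repulsive term is active only while rho <= RHO0."""
    gx = K_ATT * (q[0] - GOAL[0])
    gy = K_ATT * (q[1] - GOAL[1])
    dx, dy = q[0] - OBSTACLE[0], q[1] - OBSTACLE[1]
    rho = math.hypot(dx, dy)         # distance to the obstacle
    if 0.0 < rho <= RHO0:
        scale = -ETA * (1.0 / rho - 1.0 / RHO0) / rho ** 3
        gx += scale * dx
        gy += scale * dy
    return gx, gy

def descend(start, step=0.02, iterations=5000):
    """Follow the negated gradient with a fixed step and record the path."""
    q = start
    path = [q]
    for _ in range(iterations):
        gx, gy = gradient(q)
        q = (q[0] - step * gx, q[1] - step * gy)
        path.append(q)
    return path

path = descend((0.0, 0.0))
final = path[-1]
clearance = min(math.hypot(p[0] - OBSTACLE[0], p[1] - OBSTACLE[1]) for p in path)
```

With a single obstacle placed slightly off the straight line to the goal, the descent is deflected around it and still reaches the goal. In cluttered scenes the attractive and repulsive gradients can cancel away from the goal, which is the local-minimum problem noted above.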

A randomized planner was introduced (Barraquand and Latombe, 1991), which

was able to solve complex path-planning problems for many-DOF robots by alternating

“down motions” to track the negated gradient of a potential field and “random motions”

to escape local minima. Later, a probabilistic roadmap (PRM) planner (Kavraki et al.,

1996) was developed. A PRM is created by sampling the configuration space at random and

connecting the samples by “local” paths (typically straight paths). Samples and local paths are checked for collision

using a fast collision checker, which avoids the prohibitive computation of an explicit

representation of the free space.
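
A minimal roadmap sketch for a point robot in the unit square is given below. It is an illustration under stated assumptions rather than the planner of Kavraki et al.: the circular obstacle, the sample count, the connection radius, the point-sampled collision check, and the breadth-first query are all hypothetical simplifications.

```python
import math
import random
from collections import deque

OBSTACLES = [((0.5, 0.5), 0.2)]      # hypothetical (center, radius) discs

def is_free(p):
    """Collision check for a single configuration."""
    return all(math.dist(p, c) > r for c, r in OBSTACLES)

def segment_free(p, q, step=0.01):
    """Check a straight local path by testing points spaced about `step` apart."""
    n = max(1, int(math.dist(p, q) / step))
    return all(is_free((p[0] + (q[0] - p[0]) * i / n,
                        p[1] + (q[1] - p[1]) * i / n)) for i in range(n + 1))

def build_prm(start, goal, n_samples=200, radius=0.2, seed=0):
    """Sample free configurations and link nearby pairs with free local paths."""
    rng = random.Random(seed)
    nodes = [start, goal]            # index 0 is the start, index 1 is the goal
    while len(nodes) < n_samples + 2:
        p = (rng.random(), rng.random())
        if is_free(p):
            nodes.append(p)
    edges = {i: [] for i in range(len(nodes))}
    for i in range(len(nodes)):
        for j in range(i + 1, len(nodes)):
            if (math.dist(nodes[i], nodes[j]) <= radius
                    and segment_free(nodes[i], nodes[j])):
                edges[i].append(j)
                edges[j].append(i)
    return nodes, edges

def shortest_hop_path(edges, src=0, dst=1):
    """Breadth-first search; returns node indices from src to dst, or None."""
    parent = {src: None}
    queue = deque([src])
    while queue:
        u = queue.popleft()
        if u == dst:
            path = []
            while u is not None:
                path.append(u)
                u = parent[u]
            return path[::-1]
        for v in edges[u]:
            if v not in parent:
                parent[v] = u
                queue.append(v)
    return None

nodes, edges = build_prm((0.1, 0.1), (0.9, 0.9))
path = shortest_hop_path(edges)
```

The free space is never represented explicitly; it is only probed through `is_free` and `segment_free`. This is why the approach scales to many DOF's where cell decomposition does not.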

Quinlan and Khatib (1993) proposed an elastic band concept. The free space

around the path was represented as a series of hyperspheres, called bubbles. A bubble

represents a region of configuration space that is free of collision. Covering the path with

those bubbles, a channel of free space was formed through which the robot’s trajectory

could be executed. Later, Khatib et al. (1999) used an elastic strip method for

collision-free path modification of robots. An elastic strip represents the workspace

volume swept by a robot along a preplanned trajectory. This representation was

incrementally modified by external repulsive forces originating from obstacles to

maintain a collision-free path.

The approaches above are applied to trajectory planning for manipulators, which

normally have only 2 to 3 DOF’s and at most 6. For realistic

motion generation, on the other hand, human models normally have more than 10 DOF’s. Moreover, the

criteria used for motion planning are quite different. For example, minimum time is

typically the criterion selected for manipulator trajectory planning in applications. For human

motion, however, time is not always important; instead, humans tend to adopt the motion with the least

discomfort and effort and the most smoothness. This leads to a different research area, in which

different strategies are used for human motion planning.

Barring particular overriding circumstances, natural movements–and, more

markedly, hand movements–tend to be smooth and graceful. One can then postulate that

this characteristic feature corresponds to a design principle, or, in other words, that

maximum smoothness is a criterion by which the motor system abides in the planning of

end-point movements. Point-to-point movements performed under a wide variety of

conditions using a wide variety of limb segments exhibit the same velocity pattern (Flash

and Hogan, 1985; Hogan and Flash, 1987): a smooth, bell-shaped time course, typically

symmetrical (or nearly so) about the mid-point of the movement, starting from zero,

growing to a single peak and declining again to zero. Many researchers have also

reported that the velocity profiles of rapid aimed movements have a globally asymmetric

bell shape, which is invariant over a wide range of movement sizes and speeds, and that the

asymmetry increases with higher accuracy demands (Plamondon, 1995, Part I, Part II;

Plamondon, 1998).
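
The symmetric bell-shaped profile described above is reproduced by the fifth-order minimum-jerk polynomial commonly associated with Flash and Hogan (1985). The sketch below evaluates it for a one-dimensional point-to-point movement; the function names and the numerical values are hypothetical.

```python
def min_jerk_position(x0, xf, T, t):
    """Position at time t for a movement from x0 to xf lasting T seconds."""
    tau = t / T                      # normalized time in [0, 1]
    return x0 + (xf - x0) * (10 * tau ** 3 - 15 * tau ** 4 + 6 * tau ** 5)

def min_jerk_velocity(x0, xf, T, t):
    """Time derivative of min_jerk_position."""
    tau = t / T
    return (xf - x0) / T * (30 * tau ** 2 - 60 * tau ** 3 + 30 * tau ** 4)

X0, XF, T = 0.0, 0.4, 2.0            # hypothetical reach of 0.4 m in 2 s
samples = [min_jerk_velocity(X0, XF, T, T * k / 20) for k in range(21)]
peak = max(samples)
```

The velocity starts at zero, rises to a single peak at the mid-point of the movement, and falls back to zero. The peak equals 1.875 times the average velocity (xf − x0)/T.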

Because the common invariant features of these movements were only evident in

the extracorporeal coordinates of the hand, there is a strong indication that planning takes

place in terms of hand trajectories rather than joint rotations. Flash and Hogan (1985)