S3 & Glacier - The only backup solution you'll ever need


Amazon S3 & Glacier - the only backup solution you’ll ever need

Matthew Boeckman, VP - DevOps, Craftsy

@matthewboeckman

http://enginerds.craftsy.com

http://devops.com/author/matthewboeckman

Who is Craftsy

● Instructor-led training videos for passionate hobbyists
● #19 on Forbes’ Most Promising Companies 2014

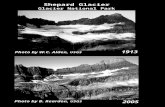

Why do backups matter?

… they don’t, until they do.

At small scale, backup can be, and is, as easy as iCloud, Google Drive, or GitHub.

As you grow, the situation gets more complex.

In a content creation business, backups are vital.

RESTORE

Define your exposure

daily backups - protect against accidental deletion (users) and system corruption/crash

DR - your building catches on fire. an employee goes rogue. the SAN suffers a total existence failure.

permanent archive - data at rest, legal/business permanence

what are my options

LTO - hope you like lifecycle hardware refreshes! hope your data rests on a 1.5 or 3 TB boundary. hope you enjoy swapping tapes… 4-17 year durability

~$0.008/GB ** does not include operational costs **

DISK - good luck offsiting, hope you enjoy hardware maintenance, hope you’ve got a big checkbook

~$5/GB ** does not include operational costs **

hobo as a service

● not scalable
● expensive
● not addressable in code
● requires software

systems

welcome to system administrationville, population: you

why did we look for alternatives?

What are the alternatives?

s3

why s3?

● $0.03/GB
● 99.999999999% durable
● easy interface with glacier
● addressable with code

“If you store 10,000 objects with us, on average we may lose one of them every 10 million years or so. This storage is designed in such a way that we can sustain the concurrent loss of data in two separate storage facilities.”
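The arithmetic behind that quote can be checked in a few lines. This is a rough sketch that assumes the eleven-nines figure is a per-object, per-year durability; the function name is made up for illustration.

```python
from fractions import Fraction

# Durability figure from the slide, treated here as per object, per year
# (an assumption for this sketch). Fraction keeps the arithmetic exact.
DURABILITY = Fraction("0.99999999999")


def expected_years_per_lost_object(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    annual_loss_probability = 1 - DURABILITY  # 1 in 100 billion per object
    expected_losses_per_year = object_count * annual_loss_probability
    return 1 / expected_losses_per_year


if __name__ == "__main__":
    # 10,000 objects, as in the quote above
    print(expected_years_per_lost_object(10_000))
```

For 10,000 objects this works out to one expected loss every 10 million years, which matches the quoted claim.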

except...

this is a busy space

free tools, one thread, small files

http://s3tools.org/s3cmd
● great sync support
● supports multipart
● does not support multithreading
● tons of other features (du, cp, rm, setpolicy, cloudfront…)

https://github.com/boto
● the aws python library
● includes basic cli s3put, great reference point

great, but not fast

free tools, multipass, big files

https://github.com/mumrah/s3-multipart
● zomg multithreaded, multipart up and down
● saturated 100M pipe for a single file transfer
● does not handle small files

https://github.com/aws/aws-cli
● zomg multithreaded, multipart up and down
● saturated 500M pipe for a single file transfer
● perfect in every imaginable way

problems

approach: single archive (tar)

le good
● fast transfer
● simple
● no filename pain

le bad
● restore all
● can’t nav your backup

approach: lots o files, some big some small

le good
● restore & navigation granularity

le bad
● processing overhead
● users name things...

our first backup script ... testy.sh

*shitty speed, restore costs, let’s just get to the next slide

to mp or not to mp...

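# BSD/macOS stat; on GNU/Linux use: stat -c %s "$x"
size=$(stat -f %z "$x")

if [ "$size" -gt 100000000 ]; then
    # larger than 100 MB: multithreaded multipart upload
    s3-mp-upload.py ${S3MPT_OW} -np 10 -s 100 "$x" "s3://${BUCKET}/${CID}/${x}" >> "$LOG" 2>&1
else
    # everything else: single-request upload with boto's s3put
    s3put ${S3PUT_OW} -s "$AWS_ACCESS_SECRET" -a "$AWS_ACCESS_KEY" -b "$BUCKET" -p "$CPATH" "$x" >> "$LOG" 2>&1
fi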
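The same size test, sketched in Python for anyone wrapping the uploaders in a larger script. The function names and return labels are hypothetical; only the 100,000,000-byte cutoff comes from the shell snippet.

```python
import os

# Same cutoff as the shell test: files strictly larger than this go multipart.
MULTIPART_THRESHOLD_BYTES = 100_000_000


def pick_uploader(size_bytes: int, threshold: int = MULTIPART_THRESHOLD_BYTES) -> str:
    """Return 'multipart' for big files and 'single' for everything else."""
    return "multipart" if size_bytes > threshold else "single"


def pick_uploader_for_path(path: str) -> str:
    """Look up the file size on disk, then apply the same rule."""
    return pick_uploader(os.path.getsize(path))
```

Keeping the decision separate from the upload call makes the threshold easy to tune once you know where your own pipe saturates.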

aws s3 cli

aws s3 sync "$CID" "s3://${BUCKET}/${CID}" --delete

aws s3 cp "$SRC" "s3://${BUCKET}/${CID}" --recursive

simple, multipart, multithreaded, and saturates 500 Mbps both ways
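If the backup job is driven from code rather than cron and shell, the two commands can be assembled as argument lists. A minimal sketch: the helper names are invented, and only the `aws s3 sync ... --delete` and `aws s3 cp ... --recursive` forms come from the slide.

```python
import subprocess


def sync_command(src: str, bucket: str, cid: str, delete: bool = True) -> list:
    """Build the `aws s3 sync` argument list used on the slide."""
    cmd = ["aws", "s3", "sync", src, f"s3://{bucket}/{cid}"]
    if delete:
        # --delete removes destination objects that no longer exist in src
        cmd.append("--delete")
    return cmd


def copy_command(src: str, bucket: str, cid: str) -> list:
    """Build the recursive `aws s3 cp` argument list used on the slide."""
    return ["aws", "s3", "cp", src, f"s3://{bucket}/{cid}", "--recursive"]


def run(cmd: list) -> None:
    """Run the command and raise if the CLI exits non-zero."""
    subprocess.run(cmd, check=True)
```

Worth remembering that `--delete` mirrors deletions into the bucket, so on a backup target it is only safe alongside versioning or a separate archive copy.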

hooray! now what

why glacier?

● $0.01/GB
● durable like s3
● data sent to glacier via s3 policies cannot be accidentally deleted
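To put the two prices side by side, here is a back-of-the-envelope sketch. It assumes both figures are per GB per month, counts 1 TB as 1,000 GB, and ignores request and retrieval fees.

```python
# Prices from the slides; treated as per GB per month (assumption).
S3_PER_GB_MONTH = 0.03
GLACIER_PER_GB_MONTH = 0.01


def monthly_cost(terabytes: float, price_per_gb: float) -> float:
    """Storage-only monthly cost in dollars, using 1 TB = 1,000 GB."""
    return terabytes * 1000 * price_per_gb


if __name__ == "__main__":
    for label, price in (("s3", S3_PER_GB_MONTH), ("glacier", GLACIER_PER_GB_MONTH)):
        print(label, round(monthly_cost(500, price), 2))
```

At 500 TB that is roughly $15,000 a month in S3 versus $5,000 in Glacier, before any retrieval charges.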

s3->glacier

prefix, delete
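The same kind of rule can be expressed in code. This sketch builds a lifecycle configuration for a key prefix with a Glacier transition and an optional expiration; it assumes boto3 (not mentioned in the deck), and the rule ID, prefix, and day counts are placeholders rather than Craftsy's settings.

```python
from typing import Optional


def glacier_lifecycle(rule_id: str, prefix: str, transition_days: int,
                      expire_days: Optional[int] = None) -> dict:
    """Build a lifecycle configuration that moves a prefix to Glacier."""
    rule = {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": transition_days, "StorageClass": "GLACIER"}],
    }
    if expire_days is not None:
        # optional delete after N days
        rule["Expiration"] = {"Days": expire_days}
    return {"Rules": [rule]}


def apply_lifecycle(bucket: str, config: dict) -> None:
    """Push the configuration to S3 (needs boto3 and AWS credentials)."""
    import boto3  # third-party, imported lazily so the builder runs without it

    boto3.client("s3").put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration=config
    )
```

For example, `glacier_lifecycle("archive-finished", "finished/", 1)` would describe a rule that transitions everything under `finished/` a day after upload.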

restore from glacier

restore, our UI

restore from glacier as code

foreach ($objects as $flerbs) {
    $key = $flerbs->Key;
    $restore = $s3->restore_archived_object($bucket, $key, $days);
    //var_dump($restore);
    $http_code = $restore->status;
    $xml_resp = $restore->body;
    $message = $xml_resp->Message;
    $header = $restore->header;
    $req_id = $header['x-amz-request-id'];
    $amz_id2 = $header['x-amz-id-2'];
    $req_date = $header['date'];
}
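For comparison, a rough Python equivalent of the loop above. It assumes a boto3 S3 client is passed in (boto3 is not part of the deck) and the function name is made up; `restore_object` with a `RestoreRequest` of `Days` is the corresponding call.

```python
def request_restores(client, bucket: str, keys: list, days: int) -> dict:
    """Ask S3 to stage temporary copies of archived objects.

    `client` is expected to be a boto3 S3 client (assumption).
    Returns the HTTP status code per key.
    """
    statuses = {}
    for key in keys:
        response = client.restore_object(
            Bucket=bucket,
            Key=key,
            RestoreRequest={"Days": days},  # how long the restored copy stays
        )
        statuses[key] = response["ResponseMetadata"]["HTTPStatusCode"]
    return statuses
```

Typical use would be `request_restores(boto3.client("s3"), bucket, keys, 7)`; the call only queues the restore, so the objects are not readable until the job finishes.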

oooh, a progress gui

restore status as code

$header = $s3->get_object_headers($bucket, $key)->header;
$restore = explode(',', $header['x-amz-restore']);
$progress = strpos($restore[0], 'true');
…
if ($sclass == 'GLACIER') {
    if ($header['x-amz-restore'] == '') {
        $style = '"background-color: #0ce3ff;"'; // not restored!
        $note = '';
    } elseif ($progress !== false) {
        $style = '"background-color: #ffa391;"'; // restore in progress!
        $note = 'Restoration in progress';
    } else {
        $style = '"background-color: #95fed0;"'; // restored!
        $note = "Restored until $expirydate";
    }
}
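The three states in that snippet come straight from the `x-amz-restore` header, so the classification can be pulled into a small pure function. A sketch only: the state labels and function name are invented here, and the header is assumed to look like `ongoing-request="false", expiry-date="..."`.

```python
import re


def restore_state(storage_class: str, restore_header: str):
    """Classify an object the way the progress UI colours its rows.

    Returns (state, expiry_date). expiry_date is only set once restored.
    """
    if storage_class != "GLACIER":
        return ("not_archived", None)
    if not restore_header:
        return ("archived", None)  # in glacier, no restore requested
    if 'ongoing-request="true"' in restore_header:
        return ("in_progress", None)
    # The date itself contains a comma, so match the quoted value directly
    # instead of splitting the header on commas.
    match = re.search(r'expiry-date="([^"]+)"', restore_header)
    return ("restored", match.group(1) if match else None)
```

Matching the quoted `expiry-date` value avoids the trap of splitting on commas, since the date string has one of its own.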

versioning, another approach

● versioning operates on the bucket level
● each version of an object counts as storage
● versioning happens automatically with any PUT
● deletion of an object does not explicitly delete versions (!!)

GET /my-image.jpg?versionId=L4kqtJlcpXroDTDmpUMLUo

GET /?versions&prefix=my-image.jpg HTTP/1.1

DELETE /my-image.jpg?versionId=UIORUnfnd89493jJFJ

restoring a version

Restore to the most recent previous version?

1. delete the current version!

Restore to an older version?

1. GET the desired version

2. PUT it back on top of the object you’re restoring
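Both restore paths are short enough to sketch in code. This assumes a boto3 S3 client is passed in (boto3 is an assumption, not something the deck uses) and the function names are hypothetical.

```python
def roll_back_to_previous(client, bucket: str, key: str):
    """Delete the current version so the one beneath it becomes current.

    Returns the deleted version id, or None if the key was not found.
    """
    listing = client.list_object_versions(Bucket=bucket, Prefix=key)
    for version in listing.get("Versions", []):
        # Prefix can match other keys too, so compare the key exactly.
        if version["Key"] == key and version["IsLatest"]:
            client.delete_object(
                Bucket=bucket, Key=key, VersionId=version["VersionId"]
            )
            return version["VersionId"]
    return None


def restore_older_version(client, bucket: str, key: str, version_id: str) -> None:
    """GET a specific old version and PUT it back on top of the object."""
    old = client.get_object(Bucket=bucket, Key=key, VersionId=version_id)
    # Reads the whole object into memory; fine for a sketch, not for huge files.
    client.put_object(Bucket=bucket, Key=key, Body=old["Body"].read())
```

The GET-then-PUT path creates a new version on top, so nothing in the history is lost by restoring.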

where does Craftsy live?

1. Bi-weeklies of ACTIVE content to a non-versioned, non-glaciered bucket (~200TB total, +2TB/wk)

2. Weekly new finished content to glacier-backed s3 bucket (~500TB, +45TB/mo)

3. All code artifacts (dev, staging, prod) to a versioned s3 bucket

4. Postgres backup segments to a non-glaciered bucket

most important, we do all of it in code. we don’t shuffle tapes, upgrade hardware, or system administrate!

Thank you

QUESTIONS!

Matthew Boeckman

@matthewboeckman

http://enginerds.craftsy.com (deck will be there & slideshare)

http://devops.com/author/matthewboeckman