Random numbers generators · Simulations D.Moltchanov, TUT, 2006 1. The need for random numbers...

Random numbers generators

Lecturer: Dmitri A. Moltchanov

E-mail: [email protected]

http://www.cs.tut.fi/~moltchan/modsim/

http://www.cs.tut.fi/kurssit/TLT-2706/

Simulations D.Moltchanov, TUT, 2006

OUTLINE:

• The need for random numbers;

• Basic steps in generation;

• Getting uniformly distributed random numbers;

• Statistical tests for uniformly distributed random numbers;

• Getting random numbers with arbitrary distribution;

• Statistical tests for random numbers with arbitrary distribution;

• Multidimensional distributions.

Lecture: Random numbers generators 2

1. The need for random numbers

Examples of randomness in telecommunications:

• interarrival times between arrivals of packets, tasks, etc.;

• service time of packets, tasks, etc.;

• time between failure of various components;

• repair time of various components;

• . . .

Usage in simulations:

• random events are characterized by distributions;

• in simulations we cannot use a distribution directly; we need concrete sample values drawn from it.

Example: mean waiting time in an M/M/1 queueing system:

• interarrival times: exponential distribution with mean 1/λ;

• service times: exponential distribution with mean 1/µ.

Discrete-event simulation of M/M/1 queue

INITIALIZATION

time:=0;

queue:=0;

sum:=0;

throughput:=0;

generate first interarrival time;

MAIN PROGRAM

while time < runlength do

case nextevent of

arrival event:

time:=arrivaltime;

add customer to a queue;

start new service if the service is idle;

generate next interarrival time;

departure event:

time:=departuretime;

throughput:=throughput + 1;

remove customer from a queue;

if (queue not empty)

sum:=sum + waiting time;

start new service;

OUTPUT

mean waiting time = sum / throughput
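The pseudocode above can be turned into a small runnable program. The following is a minimal Python sketch, not the lecture's own code: it assumes exponential interarrival and service times drawn with the standard library's `random.Random.expovariate`, and the function name `mm1_mean_wait` is hypothetical. Variable names follow the pseudocode (`sum` becomes `total_wait`). For λ < µ the estimate can be checked against the known M/M/1 mean waiting time λ/(µ(µ − λ)).

```python
import random
from collections import deque

def mm1_mean_wait(lam, mu, runlength, seed=1):
    """Estimate the mean waiting time (excluding service) in an M/M/1 queue.

    Hypothetical helper mirroring the pseudocode: total_wait plays the role of
    `sum`, and `throughput` counts departures.
    """
    rng = random.Random(seed)
    time = 0.0
    waiting = deque()                     # arrival times of customers in the queue
    busy = False
    total_wait = 0.0
    throughput = 0
    next_arrival = rng.expovariate(lam)   # first interarrival time
    next_departure = float("inf")         # nobody in service yet

    while time < runlength:
        if next_arrival <= next_departure:          # arrival event
            time = next_arrival
            if busy:
                waiting.append(time)                # add customer to the queue
            else:
                busy = True                         # idle server: waiting time 0
                next_departure = time + rng.expovariate(mu)
            next_arrival = time + rng.expovariate(lam)
        else:                                       # departure event
            time = next_departure
            throughput += 1
            if waiting:
                arrived = waiting.popleft()
                total_wait += time - arrived        # wait of the customer now served
                next_departure = time + rng.expovariate(mu)
            else:
                busy = False
                next_departure = float("inf")

    return total_wait / throughput

print(mm1_mean_wait(0.5, 1.0, 200_000.0))
```

With λ = 0.5 and µ = 1 the theoretical mean waiting time is 0.5/(1 · 0.5) = 1.0, and a long run should come out close to it.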

2. General notes

All computer-generated numbers are pseudo-random:

• we know the method by which they are generated;

• we can predict any “random” sequence in advance.

The goal is then to imitate(!) random sequences as well as possible.

Requirements for generators of pseudo random numbers:

• must be fast;

• must have low complexity;

• must be portable;

• must have sufficiently long cycles;

• must allow repeatable sequences to be generated;

• numbers must be independent;

• numbers must closely follow a given distribution.

2.1. General approach

General approach nowadays:

• transform one random variable into another;

• the uniform distribution is often used as the reference distribution.

Note the following:

• most languages contain a generator of uniformly distributed numbers in an interval (a, b);

• most languages do not provide generators for arbitrarily distributed random numbers.

The procedure is to:

• generate RNs with uniform distribution on (a, b);

• transform them somehow to uniformly distributed RNs in (0, 1);

• transform them somehow to random numbers with desired distribution.

2.2. Step 1: uniform random numbers in (a, b)

Basic approach:

• generate random number with uniform distribution on (a, b);

• transform these random numbers to (0, 1);

• transform it somehow to a random number with desired distribution.

Uniform generators:

• old methods: mostly based on radioactivity;

• von Neumann’s algorithm;

• congruential methods.

Basic approach: the next number is some function of the previous one:

$$\gamma_{i+1} = F(\gamma_i), \qquad i = 0, 1, \dots \qquad (1)$$

• $\gamma_0$ is known and directly computed from the seed.

2.3. Step 2: transforming to random numbers in (0, 1)

Basic approach:

• generate random number with uniform distribution on (a, b);

• transform these random numbers to (0, 1);

• transform it somehow to a random number with desired distribution.

Uniform U(0, 1) distribution has the following pdf:

$$f(x) = \begin{cases} 1, & 0 \le x \le 1, \\ 0, & \text{otherwise}. \end{cases} \qquad (2)$$

Mean and variance are given by:

$$E[X] = \int_0^1 x\,dx = \left.\frac{x^2}{2}\right|_0^1 = \frac{1}{2}, \qquad \sigma^2[X] = \frac{1}{12}. \qquad (3)$$

How to get U(0, 1):

• by rescaling from U(0, m) as follows:

$$y_i = \gamma_i / m, \qquad (4)$$

• where m is some normalizing constant.

What we get:

• something like: 0.12, 0.67, 0.94, 0.04, 0.65, 0.20, . . . ;

• sequence that appears to be independent and uniformly distributed in (0, 1)...

2.4. Step 3: getting non-uniform random numbers

Basic approach:

• generate random number with uniform distribution on (a, b);

• transform these random numbers to (0, 1);

• transform it somehow to a random number with desired distribution.

If we have a U(0, 1) generator, the following techniques are available:

• discretization: Bernoulli, binomial, Poisson, geometric;

• rescaling: uniform;

• inverse transform: exponential;

• specific transforms: normal and other distributions;

• rejection method: universal method;

• reduction method: Erlang, Binomial;

• composition method: for complex distributions.

Lecture: Random numbers generators 10

Simulations D.Moltchanov, TUT, 2006

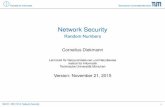

3. Getting uniformly distributed random numbers

The generator is fully characterized by the tuple (S, $s_0$, f, U, g):

• S is a finite set of states;

• $s_0 \in S$ is the initial state;

• f: S → S is the transition function;

• U is a finite set of output values;

• g: S → U is the output function.

The algorithm is then:

• let $u_0 = g(s_0)$;

• for i = 1, 2, . . . do the following recursion:

– $s_i = f(s_{i-1})$;

– $u_i = g(s_i)$.

Note: the choice of the functions f(·) and g(·) strongly affects the quality of the generator.
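The (S, s0, f, U, g) model maps naturally onto a Python generator. A minimal sketch; `make_generator` is a hypothetical name, and the example plugs in the linear congruential map f(s) = (7s + 7) mod 10 (one of the examples in Section 3.5) with the output function g(s) = s/10.

```python
from itertools import islice
from typing import Callable, Iterator, TypeVar

S = TypeVar("S")   # state type
U = TypeVar("U")   # output type

def make_generator(s0: S, f: Callable[[S], S], g: Callable[[S], U]) -> Iterator[U]:
    """Yield u_0 = g(s_0), u_1 = g(s_1), ... with s_i = f(s_{i-1})."""
    s = s0
    yield g(s)          # u_0 = g(s_0)
    while True:
        s = f(s)        # s_i = f(s_{i-1})
        yield g(s)      # u_i = g(s_i)

# States are the integers 0..9, outputs are fractions in [0, 1).
gen = make_generator(7, lambda s: (7 * s + 7) % 10, lambda s: s / 10)
print(list(islice(gen, 5)))   # [0.7, 0.6, 0.9, 0.0, 0.7]
```

Separating f and g this way makes it easy to swap the output function (for example, taking only some bits of the state) without touching the state recursion.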

Figure 1: Example of the operation of a random number generator: the user chooses the seed $s_0$, the states evolve as $s_i = f(s_{i-1})$, and the outputs are $u_i = g(s_i)$.

Here $s_0$ is the random seed:

• it allows the whole sequence to be repeated;

• it allows you to ensure that different runs use different sequences.

3.1. Von Neumann’s generator

The basic procedure:

• start with some number $u_0$ of a certain length x (say, x = 4 digits); this is the seed;

• square the number;

• take the middle 4 digits of the square (padded with leading zeros to 2x = 8 digits) to get $u_1$;

• repeat...

• example: with seed 1234 we get 1234, 5227, 3215, 3362, 3030, etc.

Shortcomings:

• sensitive to the random seed:

– seed 2345: 2345, 4990, 9001, 180, 324, 1049, 1004, 80, 64, 40... (from here on the numbers stay below 100);

• may have a very short period:

– seed 2100: 2100, 4100, 8100, 6100, 2100, 4100, 8100,... (period = 4 numbers).

To generate U(0, 1): divide each obtained number by $10^x$ (x is the length of $u_0$).

Note: this generator is also known as the midsquare generator.
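A short sketch of the midsquare procedure, assuming the square is padded with leading zeros to 2x digits before the middle x digits are taken (this convention reproduces the sequences quoted above). `midsquare` is a hypothetical helper name.

```python
def midsquare(seed: int, digits: int = 4, count: int = 10) -> list[int]:
    """Return `count` numbers of the midsquare sequence, the seed first."""
    out = [seed]
    x = seed
    for _ in range(count - 1):
        square = str(x * x).zfill(2 * digits)     # pad the square to 2x digits
        start = digits // 2
        x = int(square[start:start + digits])     # keep the middle x digits
        out.append(x)
    return out

print(midsquare(1234, count=5))   # [1234, 5227, 3215, 3362, 3030]
print(midsquare(2100, count=6))   # [2100, 4100, 8100, 6100, 2100, 4100]
# U(0, 1) values: divide by 10**digits
print([x / 10**4 for x in midsquare(1234, count=3)])
```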

3.2. Congruential methods

There are a number of versions:

• additive congruential method;

• multiplicative congruential method;

• linear congruential method;

• Tausworthe generator.

General congruential generator:

$$u_{i+1} = f(u_i, u_{i-1}, \dots) \bmod m, \qquad (5)$$

• $u_i, u_{i-1}, \dots$ are past numbers.

For example, quadratic congruential generator:

$$u_{i+1} = (a_1 u_i^2 + a_2 u_{i-1} + c) \bmod m. \qquad (6)$$

Note: if here a1 = 1, a2 = 0, c = 0, m = 2 we have the same as in midsquare method.

3.3. Additive congruential method

Additive congruential generator is given by:

$$u_{i+1} = (a_1 u_i + a_2 u_{i-1} + \dots + a_k u_{i-k+1}) \bmod m. \qquad (7)$$

The special case is sometimes used:

$$u_{i+1} = (a_1 u_i + a_2 u_{i-1}) \bmod m. \qquad (8)$$

Characteristics:

• divide by m to get U(0, 1);

• maximum period is $m^k$;

• note: rarely used.

Shortcomings: consider k = 2 with $a_1 = a_2 = 1$:

• consider three consecutive numbers $u_{i-2}, u_{i-1}, u_i$;

• we will never get $u_{i-2} < u_i < u_{i-1}$ or $u_{i-1} < u_i < u_{i-2}$, although for truly random numbers each of these two orderings occurs with probability 1/6.
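The missing orderings can be checked numerically. A sketch, assuming the Fibonacci special case $a_1 = a_2 = 1$ of (8) with a hypothetical modulus m = 2^16; `additive_fib` and `count_between` are illustrative names. For comparison, Python's `random.Random` output lands strictly between its two predecessors in about 1/6 + 1/6 = 1/3 of the triples.

```python
import random

def additive_fib(u0: int, u1: int, m: int, n: int) -> list[int]:
    """u_{i+1} = (u_i + u_{i-1}) mod m, i.e. (8) with a1 = a2 = 1."""
    seq = [u0, u1]
    while len(seq) < n:
        seq.append((seq[-1] + seq[-2]) % m)
    return seq

def count_between(seq) -> int:
    """Count triples where u_i lies strictly between u_{i-2} and u_{i-1}."""
    return sum(1 for a, b, c in zip(seq, seq[1:], seq[2:])
               if a < c < b or b < c < a)

fib = additive_fib(1, 2, 2**16, 10_000)
rng = random.Random(42)
ref = [rng.random() for _ in range(10_000)]
# 0 for the additive generator, about 0.333 for the reference
print(count_between(fib), round(count_between(ref) / (len(ref) - 2), 3))
```

The reason is simple: if $u_{i-1} + u_{i-2} < m$, the new number is at least as large as both predecessors, and otherwise it is smaller than both.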

3.4. Multiplicative congruential method

Multiplicative congruential generator is given by:

$$u_{i+1} = (a u_i) \bmod m. \qquad (9)$$

Characteristics:

• divide by m to get U(0, 1);

• maximum period is m − 1, since 0 cannot be part of the cycle;

• note: rarely used.

Shortcomings:

• cannot produce 0: if 0 ever appears, all following numbers are 0.

Choice of a, m is very important:

• recommended $m = 2^p - 1$ with p = 2, 3, 5, 7, 13, 17, 19, 31, 61 (Mersenne primes);

• $m = 2^q$, q ≥ 4, simplifies the calculation of the modulo;

• in that case, however, the maximum period is at best m/4.
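A sketch of (9) in Python. The parameters a = 16807, m = 2^31 − 1 are the well-known "minimal standard" choice of Park and Miller (the same parameters as C++'s `std::minstd_rand0`); they are an illustration, not values from the lecture. The small case m = 64, a = 5 illustrates the m/4 bound for a power-of-two modulus. `mcg` and `period` are hypothetical names.

```python
def mcg(seed: int, a: int, m: int, n: int) -> list[int]:
    """Return u_1, ..., u_n of u_{i+1} = a * u_i mod m."""
    out, u = [], seed
    for _ in range(n):
        u = (a * u) % m
        out.append(u)
    return out

def period(seed: int, a: int, m: int) -> int:
    """Cycle length from `seed`; assumes gcd(a, m) = 1 so the seed recurs."""
    u, steps = seed, 0
    while True:
        u = (a * u) % m
        steps += 1
        if u == seed:
            return steps

print(mcg(1, 16807, 2**31 - 1, 3))   # [16807, 282475249, 1622650073]
print(period(1, 5, 64))              # 16, i.e. m/4 for m = 2**6
```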

3.5. Linear congruential method

Linear congruential generator is given by:

$$u_{i+1} = (a u_i + c) \bmod m, \qquad (10)$$

• where a, c, m are all positive integers.

Characteristics:

• divide by m to get U(0, 1);

• maximum period is m;

• frequently used.

Choice of a, c, m is very important. To get the full period m, choose:

• m and c relatively prime (no common divisor other than 1);

• a ≡ 1 (mod r) for every prime divisor r of m;

• a ≡ 1 (mod 4) if m is a multiple of 4.

The step-by-step procedure is as follows:

• set the seed x0;

• multiply x by a and add c;

• divide the result by m;

• the remainder is x1;

• repeat to get x2, x3, . . . .

Examples:

• x0 = 7, a = 7, c = 7, m = 10 we get: 7,6,9,0,7,6,9,0,... (period = 4);

• x0 = 1, a = 1, c = 5, m = 13 we get: 1,6,11,3,8,0,5,10,2,7,12,4,9,1... (period = 13);

• x0 = 8, a = 2, c = 5, m = 13 we get: 8,8,8,8,8,8,8,8,... (period = 1!).

Recommended values: a = 314159269, c = 453806245, m = $2^{31}$ for a 32-bit machine.
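The step-by-step procedure and the three examples can be reproduced with a few lines of Python. A sketch; `lcg` and `lcg_period` are hypothetical helper names, and dividing by m gives the U(0, 1) values.

```python
def lcg(seed: int, a: int, c: int, m: int, n: int) -> list[int]:
    """Return x_0, ..., x_{n-1} of x_{i+1} = (a * x_i + c) mod m."""
    xs, x = [seed], seed
    for _ in range(n - 1):
        x = (a * x + c) % m
        xs.append(x)
    return xs

def lcg_period(seed: int, a: int, c: int, m: int) -> int:
    """Length of the cycle the sequence eventually enters."""
    seen = {}                       # value -> index of its first occurrence
    x, i = seed, 0
    while x not in seen:
        seen[x] = i
        x = (a * x + c) % m
        i += 1
    return i - seen[x]

print(lcg(7, 7, 7, 10, 8))        # [7, 6, 9, 0, 7, 6, 9, 0]
print(lcg_period(1, 1, 5, 13))    # 13
print(lcg_period(8, 2, 5, 13))    # 1
print([x / 13 for x in lcg(1, 1, 5, 13, 4)])   # U(0, 1) values
```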

Complexity of the algorithm: one addition, one multiplication and one division per number:

• division is slow: to avoid it, set m to the size of the computer word.

No overflow problem arises when m equals the word size:

• even if a, c and m are such that $a x_i + c$ exceeds the word,

• the overflow only discards high-order digits, which is exactly the reduction modulo m, so it does no harm.

Example:

• the register can accommodate 2 digits at most;

• the largest number that can be stored is 99, so m = 100;

• for a = 8, $x_0 = 2$, c = 10 we get $x_1 = (a x_0 + c) \bmod 100 = 26$;

• for a = 8, $x_0 = 20$, c = 10 we get $a x_0 + c = 170$, which does not fit in the register:

– the multiplication $a x_0 = 8 \cdot 20 = 160$ overflows;

– the leading digit is lost and the register contains 60;

– the result in the register is (60 + 10) mod 100 = 70.

• the same as 170 mod 100 = 70.
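The overflow argument can be checked directly. A sketch, assuming a hypothetical 2-digit decimal register (emulated by keeping only the last two digits after each operation) and, for comparison, unsigned 32-bit arithmetic emulated with a bit mask; the function names are illustrative.

```python
def register_step(x: int, a: int, c: int, digits: int = 2) -> int:
    """One LCG step on a register that holds only `digits` decimal digits."""
    word = 10 ** digits
    product = (a * x) % word         # overflow: high-order digits of a*x are lost
    return (product + c) % word      # the addition may overflow as well

def step_u32(x: int, a: int, c: int) -> int:
    """One LCG step in unsigned 32-bit arithmetic, i.e. m = 2**32."""
    return (a * x + c) & 0xFFFFFFFF  # keep the low 32 bits, as the hardware would

print(register_step(2, 8, 10), register_step(20, 8, 10))   # 26 70
# Truncation agrees with reduction mod m for every register value:
print(all(register_step(x, 8, 10) == (8 * x + 10) % 100 for x in range(100)))  # True
print(step_u32(2**31, 2, 1))                                # 1
```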

3.6. How to get a good congruential generator

Characteristics of a good generator:

• should provide maximum density:

– the generated numbers leave no large gaps in [0, 1];

– problem: the numbers can take only discrete values (multiples of 1/m);

– solution: use a very large integer as the modulus m.

• should provide maximum period:

– achieve maximum density and avoid cycling;

– achieve by: proper choice of a, c, m, and x0.

• efficient on modern computers:

– set the modulus to a power of 2.

3.7. Tausworthe generator

Tausworthe generator is given by:

$$z_i = (a_1 z_{i-1} + a_2 z_{i-2} + \dots + a_k z_{i-k} + c) \bmod 2 = \left(\sum_{j=1}^{k} a_j z_{i-j} + c\right) \bmod 2, \qquad (11)$$

• where $a_j \in \{0, 1\}$, j = 1, 2, . . . , k;

• the output is binary: 0011011101011101000101...

Advantages:

• independent of the system (computer architecture);

• independent of the word size;

• can produce very long periods;

• can be used in composite generators (considered below).

Note: there are several bit-selection techniques for turning the bit stream into numbers.

A way to generate numbers:

• choose an integer l ≤ k;

• split the bit sequence into blocks of length l and interpret each block as the binary digits of a fraction:

$$u_n = \sum_{j=0}^{l-1} z_{nl+j}\, 2^{-(j+1)}. \qquad (12)$$

In practice, only two coefficients are set to 1, namely $a_h$ and $a_k$ (h < k), and c = 0. We get:

$$z_i = (z_{i-h} + z_{i-k}) \bmod 2. \qquad (13)$$

Example:

• h = 3, k = 4, initial values 1,1,1,1;

• we get: 110101111000100110101111...;

• period is $2^k - 1 = 15$;

• if l = 4: 13/16, 7/16, 8/16, 9/16, 10/16, 15/16, 1/16, 3/16...
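A sketch of recursion (13) and the block conversion (12), assuming the k initial bits are placed at the start of the output; `tausworthe_bits` and `bits_to_uniforms` are hypothetical names. With this convention the example parameters give the cycle 111100010011010; the sequence quoted in the example is the same 15-bit cycle read from a different starting position, and its l = 4 blocks give 13/16, 7/16, and so on.

```python
def tausworthe_bits(init: list[int], h: int, k: int, n: int) -> list[int]:
    """Bits z_i = (z_{i-h} + z_{i-k}) mod 2, starting with the k initial bits."""
    z = list(init)                        # len(init) must equal k
    while len(z) < n:
        z.append((z[-h] + z[-k]) % 2)     # the new bit has index i = len(z)
    return z[:n]

def bits_to_uniforms(bits: list[int], l: int) -> list[float]:
    """Split into blocks of l bits and read each block as a binary fraction (12)."""
    return [sum(b * 2.0 ** -(j + 1) for j, b in enumerate(bits[s:s + l]))
            for s in range(0, len(bits) - l + 1, l)]

bits = tausworthe_bits([1, 1, 1, 1], h=3, k=4, n=30)
print("".join(map(str, bits[:15])))                  # 111100010011010
print(bits[:15] == bits[15:30])                      # True: period 15 = 2**4 - 1
print(bits_to_uniforms([1, 1, 0, 1, 0, 1, 1, 1], 4))  # [0.8125, 0.4375]
```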

3.8. Composite generator

Idea: combine two generators with short periods into one with a longer period.

The basic principle:

• use the first generator to fill a shuffling table (address → entry, each entry a random number);

• use the random numbers of the second generator as table addresses;

• each entry that is read out is replaced by a new random number of the first generator.

The following algorithm shuffles one generator with itself:

1. create a shuffling table of 100 entries ($i$, $t_i = \gamma_i$), i = 1, 2, . . . , 100;

2. draw a random number $\gamma_k$ and map it to an index i in the range 1, 2, . . . , 100;

3. entry i of the table gives the random number $t_i$;

4. draw the next random number $\gamma_{k+1}$ and update $t_i = \gamma_{k+1}$;

5. repeat from step 2.

Note: a table with 100 entries gives fairly good results.
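The self-shuffling algorithm (steps 1 to 5) can be sketched as a small Python class. This is an illustration rather than a reference implementation: `ShuffledGenerator` and its method `draw` are hypothetical names, the underlying generator is any callable returning numbers in [0, 1) (the standard library's `random.Random.random` stands in for it here), and table indices are 0-based.

```python
import random
from typing import Callable

class ShuffledGenerator:
    """Shuffle the output of one U(0,1) generator with itself via a table."""

    def __init__(self, base: Callable[[], float], size: int = 100):
        self.base = base
        self.table = [base() for _ in range(size)]    # step 1: fill the table

    def draw(self) -> float:
        i = int(self.base() * len(self.table))        # step 2: index from a new draw
        out = self.table[i]                           # step 3: output entry i
        self.table[i] = self.base()                   # step 4: refill entry i
        return out                                    # step 5: next call repeats step 2

gen = ShuffledGenerator(random.Random(3).random)
print([round(gen.draw(), 3) for _ in range(5)])
```

Every base value is output at most once, so shuffling changes the order of the numbers, not their distribution.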

3.9. Important notes

Some important notes on seed number:

• do not use seed 0;

• avoid even values;

• do not use the same sequence for different purposes in a single simulation run.

Note: these instructions may not apply to a particular generator.

General notes:

• some common generators have been found to be inadequate;

• even if a generator passes the tests, some underlying pattern may remain undetected;

• if the task is important, use a composite generator.

4. Tests for random number generators

What do we want to check:

• independence;

• uniformity.

Important notes:

• only if the tests are passed can the numbers be treated as random;

• recall: the numbers are actually deterministic!

Commonly used tests for independence:

• runs test;

• correlation test.

Commonly used tests for uniformity:

• Kolmogorov’s test;

• χ² test.

4.1. Independence: runs test

Basic idea:

• count patterns in the sequence (runs of increasing numbers, runs of decreasing numbers, etc.);

• compare their frequencies with the theoretical probabilities.

Figure 2: Illustration of the basic idea.

Do the following:

• consider a sequence of pseudo random numbers $\{u_i, i = 0, 1, \dots, n\}$;

• consider unbroken subsequences in which the numbers increase monotonically:

– such a subsequence is called a run-up;

– example: 0.78, 0.81, 0.89, 0.81 starts with a run-up of length 3 (0.78, 0.81, 0.89).

• count the run-ups of each length i:

– $r_i$, i = 1, 2, 3, 4, 5;

– all run-ups of length i ≥ 6 are grouped into $r_6$.

• calculate:

$$R = \frac{1}{n} \sum_{1 \le i, j \le 6} (r_i - n b_i)(r_j - n b_j)\, a_{ij}, \qquad (14)$$

where

$$(b_1, b_2, \dots, b_6) = \left(\frac{1}{6}, \frac{5}{24}, \frac{11}{120}, \frac{19}{720}, \frac{29}{5040}, \frac{1}{840}\right), \qquad (15)$$

Coefficients aij must be chosen as an element of the matrix:

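For large n (n > 4000), the statistic R approximately follows a χ² distribution with 6 degrees of freedom.

If R does not exceed the critical χ² value, the hypothesis that the observations are i.i.d. is not rejected.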
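The counting step of the runs test can be sketched as below. Because the weight matrix $a_{ij}$ is not reproduced in these notes, the sketch stops at the counts $r_i$ and the expected counts $n b_i$ from (15); combining them with $a_{ij}$ into R follows (14). Maximal run-ups are counted, the last (possibly unfinished) run included; this convention and the name `run_up_counts` are assumptions of the sketch.

```python
import random

B = [1/6, 5/24, 11/120, 19/720, 29/5040, 1/840]   # b_1, ..., b_6 from (15)

def run_up_counts(u: list[float]) -> list[int]:
    """Return [r_1, ..., r_6]: numbers of maximal run-ups of length 1..5 and >= 6."""
    counts = [0] * 6
    length = 1
    for prev, cur in zip(u, u[1:]):
        if cur > prev:
            length += 1                       # the run-up continues
        else:
            counts[min(length, 6) - 1] += 1   # the run-up has ended
            length = 1
    if u:
        counts[min(length, 6) - 1] += 1       # last run-up of the sequence
    return counts

rng = random.Random(7)
u = [rng.random() for _ in range(100_000)]
n = len(u)
for i, (r, b) in enumerate(zip(run_up_counts(u), B), start=1):
    print(f"r_{i} = {r:6d}   n*b_{i} = {n * b:9.1f}")
```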

4.2. Independence: correlation test

Basic idea:

• compute the lag-1 autocorrelation coefficient;

• if it is statistically significant, the numbers are not independent.

Compute the statistic (lag-1 autocorrelation coefficient) as:

$$R = \frac{\sum_{j=1}^{N-1} (u_j - E[u])(u_{j+1} - E[u])}{\sum_{j=1}^{N} (u_j - E[u])^2}. \qquad (16)$$

In practice, a relatively large |R| indicates serial correlation.

Important notes:

• the exact distribution of R is unknown;

• for large N, if the $u_j$ are uncorrelated, R lies in $[-2/\sqrt{N}, 2/\sqrt{N}]$ with a probability of about 95%;

• therefore: reject the hypothesis of no correlation at the 5% level if R is not in $[-2/\sqrt{N}, 2/\sqrt{N}]$.

Notes: other tests for correlation: ’Portmanteau’, modified ’Portmanteau’ test, etc.

4.3. Uniformity: χ2 test

The algorithm:

• divide [0, 1] into k, k > 100 non-overlapping intervals;

• count the numbers that fall into each interval, $f_i$:

– ensure that there are enough numbers to get $f_i > 5$, i = 1, 2, . . . , k;

– the values $f_i$, i = 1, 2, . . . , k are called observed values.

• if the observations are truly uniformly distributed then:

– these values should be close to $r_i = n/k$, i = 1, 2, . . . , k;

– the values $r_i$ are called theoretical values.

• compute the χ² statistic for the uniform distribution:

$$\chi^2 = \frac{k}{n} \sum_{i=1}^{k} \left(f_i - \frac{n}{k}\right)^2, \qquad (17)$$

– which, under uniformity, approximately follows a χ² distribution with k − 1 degrees of freedom.

Hypotheses:

• H0: observations are uniformly distributed;

• H1: observations are not uniformly distributed.

H0 is rejected if:

• the computed value of χ² is greater than the critical value obtained from the tables;

• use the entry with k − 1 degrees of freedom and quantile 1 − α, where α is the significance level.
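A sketch of statistic (17) with k equal-width intervals. Instead of a printed table, the critical value is approximated with the Wilson–Hilferty formula using the standard library's `statistics.NormalDist` (Python 3.8+); this approximation is a substitution made for the sketch, not part of the lecture, and tabulated values should be preferred when available. Function names are hypothetical.

```python
import math
import random
from statistics import NormalDist

def chi2_uniform_statistic(u: list[float], k: int) -> float:
    """Chi-square statistic (17) for U(0,1) data in k equal-width intervals."""
    n = len(u)
    counts = [0] * k
    for x in u:
        counts[min(int(x * k), k - 1)] += 1    # interval i covers [i/k, (i+1)/k)
    expected = n / k                           # theoretical value n/k
    return sum((f - expected) ** 2 for f in counts) / expected

def chi2_critical(dof: int, alpha: float) -> float:
    """Wilson-Hilferty approximation of the (1 - alpha) quantile of chi-square(dof)."""
    z = NormalDist().inv_cdf(1 - alpha)
    h = 2 / (9 * dof)
    return dof * (1 - h + z * math.sqrt(h)) ** 3

rng = random.Random(1)
u = [rng.random() for _ in range(10_000)]      # about 100 numbers per interval
k = 100
stat, crit = chi2_uniform_statistic(u, k), chi2_critical(k - 1, 0.05)
print(round(stat, 2), round(crit, 2), "reject H0" if stat > crit else "keep H0")
```

For 99 degrees of freedom and α = 0.05 the approximation gives about 123.2, close to the tabulated 123.23.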

4.4. Kolmogorov test

Facts about this test:

• compares the empirical distribution with the theoretical one;

• empirical: $F_N(x)$ – the number of observations smaller than or equal to x, divided by N;

• theoretical: uniform distribution in (0, 1): F(x) = x, 0 < x < 1.

Hypotheses:

• H0: $F_N(x)$ follows F(x);

• H1: $F_N(x)$ does not follow F(x).

Statistic: the maximum absolute difference over the range:

$$R = \max_x |F(x) - F_N(x)|. \qquad (18)$$

• if R > Rα: H0 is rejected;

• if R ≤ Rα: H0 is accepted.

Note: use tables for N , α (significance level), to find Rα.

Example: we got 0.44, 0.81, 0.14, 0.05, 0.93:

• H0: the random numbers follow the uniform distribution;

• sort the numbers, R(1) ≤ . . . ≤ R(N), N = 5, and compute (negative differences are shown as –):

| j | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| R(j) | 0.05 | 0.14 | 0.44 | 0.81 | 0.93 |
| j/N | 0.20 | 0.40 | 0.60 | 0.80 | 1.00 |
| j/N − R(j) | 0.15 | 0.26 | 0.16 | – | 0.07 |
| R(j) − (j−1)/N | 0.05 | – | 0.04 | 0.21 | 0.13 |

• compute the statistic as the largest entry: R = max |F(x) − F_N(x)| = max(0.26, 0.21) = 0.26;

• from tables: for α = 0.05, $R_\alpha = 0.565 > R$;

• H0 is accepted: the numbers are consistent with the uniform distribution in (0, 1).
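The computation in the table can be reproduced with a short function (the name `ks_statistic_uniform` is hypothetical); the critical value 0.565 is the tabulated one quoted above for N = 5 and α = 0.05.

```python
def ks_statistic_uniform(u: list[float]) -> float:
    """Kolmogorov statistic R = max |F(x) - F_N(x)| for F(x) = x on (0, 1)."""
    r = sorted(u)                                             # R(1) <= ... <= R(N)
    n = len(r)
    d_plus = max((j + 1) / n - x for j, x in enumerate(r))    # max of j/N - R(j)
    d_minus = max(x - j / n for j, x in enumerate(r))         # max of R(j) - (j-1)/N
    return max(d_plus, d_minus)

R = ks_statistic_uniform([0.44, 0.81, 0.14, 0.05, 0.93])
print(round(R, 2), "accept H0" if R <= 0.565 else "reject H0")   # 0.26 accept H0
```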

4.5. Other tests

The serial test:

• consider pairs $(u_1, u_2), (u_3, u_4), \dots, (u_{2N-1}, u_{2N})$;

• count how many pairs fall into each of the $N^2$ subsquares of the unit square;

• apply the χ² test to decide whether they follow the uniform distribution;

• an M-dimensional version of this test can be formulated in the same way.

The permutation test

• look at k-tuples: $(u_1, \dots, u_k), (u_{k+1}, \dots, u_{2k}), \dots, (u_{(N-1)k+1}, \dots, u_{Nk})$;

• in a k-tuple there are k! possible orderings;

• for i.i.d. uniform numbers, all orderings are equally likely;

• determine the frequencies of the orderings in the k-tuples;

• apply the χ² test to decide whether the orderings are equally likely.

The gap test

• let J be some fixed subinterval in (0, 1);

• if we have that:

– $u_{n-1} \in J$ and $u_{n+k} \in J$, while $u_n, \dots, u_{n+k-1} \notin J$;

– we say that there is a gap of length k.

• H0: numbers are independent and uniformly distributed in (0, 1):

– the gap length must be geometrically distributed with parameter p equal to the length of J:

$$\Pr\{\text{gap of length } k\} = p(1 - p)^k. \qquad (19)$$

Practical issues:

• we observe a large number of gaps, say N;

• choose an integer h and count the number of gaps of length 0, 1, . . . , h − 1 and ≥ h;

• apply the χ² test to decide whether the observed counts agree with (19).
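A sketch of the counting step of the gap test, assuming J = [lo, hi) with length p = hi − lo and taking a gap to be the run of numbers outside J between two consecutive numbers inside J. The observed class counts are then compared with N·p(1 − p)^k and N·(1 − p)^h by a χ² test with h degrees of freedom. All function names are hypothetical.

```python
import random

def gap_lengths(u, lo, hi):
    """Gap lengths for J = [lo, hi): numbers outside J between two numbers in J."""
    gaps, current, started = [], 0, False
    for x in u:
        if lo <= x < hi:            # a number in J closes the current gap
            if started:
                gaps.append(current)
            started, current = True, 0
        elif started:               # a number outside J extends the gap
            current += 1
    return gaps

def gap_class_counts(gaps, h):
    """Counts of gaps of length 0, 1, ..., h-1 and >= h."""
    counts = [0] * (h + 1)
    for g in gaps:
        counts[min(g, h)] += 1
    return counts

def expected_gap_probs(p, h):
    """Pr{gap = k} = p(1-p)^k for k < h, and Pr{gap >= h} = (1-p)^h."""
    return [p * (1 - p) ** k for k in range(h)] + [(1 - p) ** h]

rng = random.Random(12)
gaps = gap_lengths([rng.random() for _ in range(20_000)], 0.0, 0.5)
h, p = 5, 0.5
print(gap_class_counts(gaps, h))
print([round(len(gaps) * q, 1) for q in expected_gap_probs(p, h)])
```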

5. Random numbers with arbitrary distribution

Continuous distributions:

• inverse transform;

• rejection method;

• composition method;

• methods for specific distributions.

Discrete distributions:

• discretization;

– a discrete version of inverse transform method;

– for any discrete distribution.

• rescaling:

– for uniform random numbers in (a, b).

• methods for specific distributions.

5.1. Discrete distributions: discretization

Consider an arbitrarily distributed discrete RV:

$$\Pr\{X = x_j\} = p_j, \quad j = 0, 1, \dots, \qquad \sum_{j=0}^{\infty} p_j = 1. \qquad (20)$$

The following method can be applied:

• generate a uniformly distributed RV u with U(0, 1);

• map u to a value of the discrete RV as illustrated in Figure 3;

• this method can be applied to any discrete RV;

• there are some specific methods for specific discrete RVs.

Figure 3: Illustration of the proposed approach.

The step-by-step procedure:

• compute probabilities pi = Pr{X = xi}, i = 0, 1, . . . ;

• generate RV u with U(0, 1);

• if u < p0, set X = x0;

• otherwise, if u < p0 + p1, set X = x1;

• otherwise, if u < p0 + p1 + p2, set X = x2;

• . . .

Note the following:

• this is the inverse transform method for discrete RVs:

– we determine the value of u;

– we determine the interval $[F(x_{i-1}), F(x_i))$ in which it lies.

• complexity depends on the number of intervals to be searched.
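A sketch of the discrete inverse transform. It searches the cumulative sums with binary search (`bisect`), so each draw costs O(log n) comparisons instead of a linear scan; this is a design choice of the sketch, not part of the lecture. `discrete_inverse` is a hypothetical name, and the probabilities are those of the histogram example in Section 5.2.

```python
import bisect
import random
from itertools import accumulate

def discrete_inverse(u, values, probs):
    """Return x_i for the first i with u < p_0 + ... + p_i."""
    cdf = list(accumulate(probs))            # F(x_0), F(x_1), ... (precompute in practice)
    i = bisect.bisect_right(cdf, u)          # first index with cdf[i] > u
    return values[min(i, len(values) - 1)]   # guard against rounding near 1.0

values, probs = [1, 2, 3, 4], [0.2, 0.1, 0.25, 0.45]
rng = random.Random(6)
draws = [discrete_inverse(rng.random(), values, probs) for _ in range(100_000)]
print([round(draws.count(v) / len(draws), 3) for v in values])   # close to probs
```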

5.2. Example of discretization: histogram

Example: p1 = 0.2, p2 = 0.1, p3 = 0.25, p4 = 0.45.

Algorithm 1:

• generate u = U(0, 1);

• if u < 0.2, set X = 1 and stop;

• if u < 0.3, set X = 2 and stop;

• if u < 0.55, set X = 3 and stop;

• set X = 4.

Algorithm 2 (more efficient, because the largest probability is checked first):

• generate u = U(0, 1);

• if u < 0.45, set X = 4 and stop;

• if u < 0.7, set X = 3 and stop;

• if u < 0.9, set X = 1 and stop;

• set X = 2.
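The gain of Algorithm 2 can be quantified by the expected number of comparisons: a value found at the j-th test costs j comparisons, and the final "set X = ..." needs no further test. A quick check in Python with a hypothetical helper `expected_comparisons`:

```python
def expected_comparisons(probs_in_test_order: list[float]) -> float:
    """The j-th tested value costs j comparisons; the last value costs as many as the one before."""
    n = len(probs_in_test_order)
    return sum(min(j + 1, n - 1) * p for j, p in enumerate(probs_in_test_order))

print(expected_comparisons([0.2, 0.1, 0.25, 0.45]))   # Algorithm 1: about 2.5
print(expected_comparisons([0.45, 0.25, 0.2, 0.1]))   # Algorithm 2: about 1.85
```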

5.3. Example of discretization: Poisson RV

A Poisson RV has the following distribution:

$$p_i = \Pr\{X = i\} = \frac{\lambda^i e^{-\lambda}}{i!}, \qquad i = 0, 1, \dots \qquad (21)$$

We use the property:

$$p_{i+1} = \frac{\lambda}{i + 1}\, p_i, \qquad i = 0, 1, \dots \qquad (22)$$

The algorithm:

1. generate u = U(0, 1);

2. i = 0, p = $e^{-\lambda}$, F = p;

3. if u < F, set X = i and stop;

4. p = λp/(i + 1), F = F + p, i = i + 1;

5. go to step 3.
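A direct Python sketch of the Poisson algorithm, starting from p = e^{−λ} and using recursion (22); `poisson_from_u` is a hypothetical name. For large λ, e^{−λ} underflows, so this form suits moderate λ only.

```python
import math
import random

def poisson_from_u(u: float, lam: float) -> int:
    """Discretization for Poisson(lam) using p_{i+1} = lam / (i + 1) * p_i."""
    i, p = 0, math.exp(-lam)        # step 2: p_0 = e^{-lam}
    F = p
    while u >= F and p > 0.0:       # step 3; p > 0 guards against rounding near F = 1
        p = lam * p / (i + 1)       # step 4
        F += p
        i += 1
    return i

rng = random.Random(5)
sample = [poisson_from_u(rng.random(), 2.0) for _ in range(100_000)]
print(sum(sample) / len(sample))    # close to lam = 2
```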

5.4. Example of discretization: binomial RV

A binomial RV has the following distribution:

$$p_i = \Pr\{X = i\} = \frac{n!}{i!\,(n - i)!}\, p^i (1 - p)^{n - i}, \qquad i = 0, 1, \dots, n. \qquad (23)$$

We are going to use the following property:

$$p_{i+1} = \frac{n - i}{i + 1} \cdot \frac{p}{1 - p}\, p_i, \qquad i = 0, 1, \dots, n - 1. \qquad (24)$$

The algorithm:

1. generate u = U(0, 1);

2. c = p/(1 − p), i = 0, d = $(1 - p)^n$, F = d;

3. if u < F, set X = i and stop;

4. d = [c(n − i)/(i + 1)]d, F = F + d, i = i + 1;

5. go to step 3.
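The binomial algorithm translates almost line by line. A sketch, assuming 0 < p < 1; `binomial_from_u` is a hypothetical name, and the extra `i < n` check only protects against floating-point rounding when F ends slightly below 1.

```python
import random

def binomial_from_u(u: float, n: int, p: float) -> int:
    """Discretization for Binomial(n, p), 0 < p < 1, using recursion (24)."""
    c = p / (1 - p)
    i, d = 0, (1 - p) ** n          # d = Pr{X = 0}
    F = d
    while u >= F and i < n:         # step 3
        d = c * (n - i) / (i + 1) * d   # step 4: d becomes Pr{X = i + 1}
        F += d
        i += 1
    return i

rng = random.Random(10)
sample = [binomial_from_u(rng.random(), 10, 0.3) for _ in range(100_000)]
print(sum(sample) / len(sample))    # close to n*p = 3
```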

5.5. Continuous distributions: inverse transform method

Inverse transform method:

• applicable only when the cdf can be inverted analytically;

• works for a number of distributions: exponential, uniform, Weibull, etc.

Assume:

• we would like to generate numbers with pdf f(x) and cdf F(x);

• recall that F(x) takes values in [0, 1].

The generic algorithm:

• generate u = U(0, 1);

• set F(x) = u;

• find $x = F^{-1}(u)$,

– $F^{-1}(\cdot)$ is the inverse transformation of $F(\cdot)$.

Example:

• we want to generate numbers from the following pdf f(x) = 2x, 0 ≤ x ≤ 1;

• calculate the cdf as follows:

$$F(x) = \int_0^x 2t\,dt = x^2, \qquad 0 \le x \le 1. \qquad (25)$$

• let u be the random number; we have $u = x^2$, or $x = \sqrt{u}$;

• get the random number.
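The example reduces to one line of code. A sketch with the hypothetical name `sample_2x`; the sample mean should approach E[X] = ∫₀¹ x · 2x dx = 2/3.

```python
import math
import random

def sample_2x(rng: random.Random) -> float:
    """Inverse transform for f(x) = 2x on [0, 1]: F(x) = x**2, so x = sqrt(u)."""
    return math.sqrt(rng.random())

rng = random.Random(11)
xs = [sample_2x(rng) for _ in range(100_000)]
print(sum(xs) / len(xs))   # close to E[X] = 2/3
```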

5.6. Inverse transform method: uniform continuous distribution

Uniform continuous distribution has the following pdf and cdf:

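$$f(x) = \begin{cases} \dfrac{1}{b - a}, & a < x < b, \\ 0, & \text{otherwise}, \end{cases} \qquad F(x) = \begin{cases} 0, & x \le a, \\ \dfrac{x - a}{b - a}, & a < x < b, \\ 1, & x \ge b. \end{cases} \qquad (26)$$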

The algorithm:

• generate u = U(0, 1);

• set u = F (x) = (x − a)/(b − a);

• solve to get x = a + (b − a)u.

5.7. Inverse transform method: exponential distribution

Exponential distribution has the following pdf and cdf:

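$$f(x) = \lambda e^{-\lambda x}, \qquad F(x) = 1 - e^{-\lambda x}, \qquad \lambda > 0, \; x \ge 0. \qquad (27)$$

The algorithm:

• generate u = U(0, 1);

• set u = F(x) = 1 − $e^{-\lambda x}$;

• solve to get x = −(1/λ) log(1 − u); since 1 − u is also U(0, 1), one can equally use x = −(1/λ) log u.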
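A sketch of the exponential inverse transform; `exponential_from_u` is a hypothetical name. Using log(1 − u) with u drawn from `random.random()` (which lies in [0, 1)) avoids log(0). Python's standard library also provides `random.expovariate(lambd)` for exponential variates directly.

```python
import math
import random

def exponential_from_u(u: float, lam: float) -> float:
    """x = -(1/lam) * log(1 - u); 1 - u lies in (0, 1], so log(0) cannot occur."""
    return -math.log(1.0 - u) / lam

rng = random.Random(2)
xs = [exponential_from_u(rng.random(), 2.0) for _ in range(100_000)]
print(sum(xs) / len(xs))    # close to 1/lam = 0.5
```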

5.8. Inverse transform method: Erlang distribution

Erlang distribution:

• convolution of k exponential distributions.

The algorithm:

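• generate k independent numbers $u_i = U(0, 1)$, i = 1, 2, . . . , k;

• the Erlang variable is the sum of k exponential variables $x_1, \dots, x_k$ with mean 1/λ each, obtained by the inverse transform;

• solve to get:

$$x = \sum_{i=1}^{k} x_i = -\frac{1}{\lambda} \sum_{i=1}^{k} \log u_i = -\frac{1}{\lambda} \log \prod_{i=1}^{k} u_i. \qquad (28)$$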
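A sketch of the Erlang generator: one logarithm of the product of k uniforms, which equals the sum of k exponential variates with mean 1/λ. `erlang` is a hypothetical name; each factor is taken as 1 − u to stay in (0, 1]. For very large k the product can underflow, in which case summing the individual logarithms is safer.

```python
import math
import random

def erlang(rng: random.Random, k: int, lam: float) -> float:
    """Erlang-k with rate lam: x = -(1/lam) * log(u_1 * ... * u_k)."""
    prod = 1.0
    for _ in range(k):
        prod *= 1.0 - rng.random()      # each factor lies in (0, 1]
    return -math.log(prod) / lam

rng = random.Random(9)
xs = [erlang(rng, 3, 2.0) for _ in range(50_000)]
print(sum(xs) / len(xs))     # close to k/lam = 1.5
```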

5.9. Specific methods: normal distribution

Normal distribution has the following pdf:

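$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{(x - \mu)^2}{2\sigma^2}}, \qquad -\infty < x < \infty, \qquad (29)$$

• where σ and µ are the standard deviation and the mean.

Standard normal distribution (RV Z = (X − µ)/σ) has the following pdf:

$$f(z) = \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}, \qquad -\infty < z < \infty, \quad \mu = 0, \; \sigma = 1. \qquad (30)$$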

Central limit theorem:

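• assume $x_1, x_2, \dots, x_n$ are i.i.d. with $E[x_i] = \mu$, $\sigma^2[x_i] = \sigma^2$, i = 1, 2, . . . , n;

• their sum approaches a normal distribution as n → ∞, with $E[\sum x_i] = n\mu$, $\sigma^2[\sum x_i] = n\sigma^2$.

The approach:

• generate k random numbers $u_i = U(0, 1)$, i = 0, 1, . . . , k − 1;

• each random number has $E[u_i] = (0 + 1)/2 = 1/2$, $\sigma^2[u_i] = (1 - 0)^2/12 = 1/12$;

• the sum of these numbers approximately follows a normal distribution with mean k/2 and standard deviation $\sqrt{k/12}$:

$$\sum u_i \sim N\!\left(\frac{k}{2}, \sqrt{\frac{k}{12}}\right), \quad \text{or} \quad \frac{\sum u_i - k/2}{\sqrt{k/12}} \sim N(0, 1). \qquad (31)$$

• if the RV we want to generate is x with mean µ and standard deviation σ:

$$\frac{x - \mu}{\sigma} \sim N(0, 1). \qquad (32)$$

• finally (note that k should be at least 10):

$$\frac{x - \mu}{\sigma} = \frac{\sum u_i - k/2}{\sqrt{k/12}}, \quad \text{or} \quad x = \sigma \sqrt{\frac{12}{k}} \left(\sum u_i - \frac{k}{2}\right) + \mu. \qquad (33)$$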
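A sketch of the central-limit-theorem method, implementing x = σ√(12/k)(Σuᵢ − k/2) + µ; the names are hypothetical. The common choice k = 12 makes the scale factor 1. Note that the output is bounded by µ ± σ√(3k) (±6σ for k = 12), so the extreme tails are cut off; in practice Python's `random.gauss` or `random.normalvariate` would normally be used instead.

```python
import math
import random

def normal_from_uniforms(us: list[float], mu: float, sigma: float) -> float:
    """x = sigma * sqrt(12/k) * (sum(u) - k/2) + mu, with k = len(us)."""
    k = len(us)
    return sigma * math.sqrt(12 / k) * (sum(us) - k / 2) + mu

def normal_clt(rng: random.Random, mu: float, sigma: float, k: int = 12) -> float:
    # with k = 12 the scale factor is 1, so x = sigma * (sum(u) - 6) + mu
    return normal_from_uniforms([rng.random() for _ in range(k)], mu, sigma)

rng = random.Random(8)
xs = [normal_clt(rng, 5.0, 2.0) for _ in range(50_000)]
print(sum(xs) / len(xs))     # close to mu = 5
```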

5.10. Specific method: empirical continuous distributions

Assume we have a histogram:

• $x_i$ is the midpoint of interval i;

• $f(x_i)$ is the height (relative frequency) of the i-th rectangle.

Note: the task is different from sampling from a discrete distribution, since values between the midpoints must also be produced.

Construct the cdf as follows:

$$F(x_i) = \sum_{k=1}^{i} f(x_k), \qquad (34)$$

• and take F to be linear between consecutive points, so that it increases monotonically within each interval $[x_{i-1}, x_i]$.

The algorithm:

• generate u = U(0, 1);

• find the interval with $F(x_{i-1}) \le u < F(x_i)$;

• use the following linear interpolation to get:

$$x = x_{i-1} + (x_i - x_{i-1})\, \frac{u - F(x_{i-1})}{F(x_i) - F(x_{i-1})}. \qquad (35)$$

Note: this approach can also be used for analytically given continuous distributions:

• tabulate $(x_i, f(x_i))$, i = 1, 2, . . . , k, and follow the procedure.
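A sketch of the interpolation step (35). It assumes the table is given as increasing points `xs` with cumulative probabilities `Fs`, where Fs[0] = 0 and Fs[-1] = 1; the function name and the two-interval histogram in the usage lines are hypothetical.

```python
import bisect

def empirical_inverse(u: float, xs: list[float], Fs: list[float]) -> float:
    """Linear interpolation (35) between the points (x_i, F(x_i)).

    xs: increasing points; Fs: cdf values at xs, Fs[0] = 0 and Fs[-1] = 1.
    """
    i = bisect.bisect_right(Fs, u)          # F(x_{i-1}) <= u < F(x_i)
    i = min(max(i, 1), len(xs) - 1)         # stay inside the table
    return xs[i - 1] + (xs[i] - xs[i - 1]) * (u - Fs[i - 1]) / (Fs[i] - Fs[i - 1])

# Hypothetical histogram on [0, 2]: 25% of the mass in [0, 1), 75% in [1, 2).
print(empirical_inverse(0.125, [0.0, 1.0, 2.0], [0.0, 0.25, 1.0]))   # 0.5
print(empirical_inverse(0.625, [0.0, 1.0, 2.0], [0.0, 0.25, 1.0]))   # 1.5
```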

5.11. Rejection method

Works when:

• the pdf f(x) is bounded;

• and x has a finite range, say a ≤ x ≤ b.

The basic steps are:

• scale f(x) by a factor c such that cf(x) ≤ 1, a ≤ x ≤ b;

• define x as a linear function of u = U(0, 1), i.e. x = a + (b − a)u;

• generate pairs of random numbers (u1, u2), u1, u2 = U(0, 1);

• accept the pair and use x = a + (b − a)u1 whenever:

– the pair satisfies u2 ≤ cf(a + (b − a)u1);

– meaning that the pair (x, u2) falls under the curve of cf(x).

The underlying idea:

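• for a fixed x, $\Pr\{u_2 \le cf(x)\} = cf(x)$;

• if x is chosen at random from (a, b):

– we reject if $u_2 > cf(x)$;

– we accept if $u_2 \le cf(x)$;

– the accepted values of x have density proportional to cf(x), i.e., they follow f(x).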

Example: generate numbers from f(x) = 2x, 0 ≤ x ≤ 1:

1. select c such that cf(x) ≤ 1:

• here we choose c = 0.5 (f(1) = 2 is the maximum of f(x), so cf(1) = 1);

2. generate u1 and set x = u1;

3. generate u2:

• if u2 ≤ cf(u1) = 0.5 · 2u1 = u1, accept x = u1;

• otherwise go back to step 2.
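A sketch of the rejection method applied to the example f(x) = 2x on [0, 1] with c = 0.5; `rejection_sample` is a hypothetical name. About a fraction c/(b − a) = 0.5 of the candidate pairs is accepted here, so on average two pairs are needed per number.

```python
import random

def rejection_sample(rng, f, a: float, b: float, c: float) -> float:
    """Rejection method; requires c * f(x) <= 1 on [a, b]."""
    while True:
        u1, u2 = rng.random(), rng.random()
        x = a + (b - a) * u1            # candidate, uniform on [a, b)
        if u2 <= c * f(x):              # the point (x, u2) lies under c*f(x)
            return x

rng = random.Random(4)
xs = [rejection_sample(rng, lambda x: 2 * x, 0.0, 1.0, 0.5) for _ in range(50_000)]
print(sum(xs) / len(xs))     # close to E[X] = 2/3
```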

5.12. Composition method

The basis of the method is the representation of the cdf F(x) as a mixture:

$$F(x) = \sum_{j=1}^{\infty} p_j F_j(x), \qquad (36)$$

• $p_j \ge 0$, j = 1, 2, . . . , $\sum_{j=1}^{\infty} p_j = 1$.

Works when:

• it is easier to generate RVs with distributions $F_j(x)$ than with F(x), e.g.:

– hyperexponential RV;

– Erlang RV.

The algorithm:

1. generate a discrete RV J, Pr{J = j} = $p_j$;

2. given J = j, generate an RV with cdf $F_j(x)$;

3. the resulting RV has cdf $\sum_{j=1}^{\infty} p_j F_j(x) = F(x)$.

Example: generate from the exponential distribution with λ = 1:

• divide (0, ∞) into intervals [i, i + 1), i = 0, 1, . . . ;

• the probabilities of the intervals are given by:

$$p_i = \Pr\{i \le X < i + 1\} = e^{-i} - e^{-(i+1)} = e^{-i}(1 - e^{-1}), \qquad (37)$$

– which is a geometric distribution.

• the conditional pdfs are given by:

$$f_i(x) = \frac{e^{-(x - i)}}{1 - e^{-1}}, \qquad i \le x < i + 1. \qquad (38)$$

– in interval i, X − i has the pdf $e^{-x}/(1 - e^{-1})$, 0 ≤ x < 1.

The algorithm:

• get I from the geometric distribution $p_i = e^{-i}(1 - e^{-1})$, i = 0, 1, . . . ;

• get Y from the pdf $e^{-x}/(1 - e^{-1})$, 0 ≤ x < 1;

• X = I + Y.
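A sketch of this composition example (exponential with λ = 1). The interval index I is drawn by discretization from Pr{I = i} = e^{−i}(1 − e^{−1}), and Y by inverting its cdf (1 − e^{−y})/(1 − e^{−1}) on [0, 1); the function name is hypothetical.

```python
import math
import random

Q = math.exp(-1.0)    # Pr{I >= i} = Q**i for the interval index I

def exp_by_composition(rng: random.Random) -> float:
    """Exp(1) as X = I + Y with I geometric (37) and Y from (38) shifted to [0, 1)."""
    u = 1.0 - rng.random()           # u in (0, 1]
    i, tail = 0, Q                   # tail = Pr{I >= i + 1}
    while u <= tail:                 # I = i exactly when Q**(i+1) < u <= Q**i
        i += 1
        tail *= Q
    v = rng.random()
    y = -math.log(1.0 - v * (1.0 - Q))   # inverse of (1 - e^{-y}) / (1 - e^{-1})
    return i + y

rng = random.Random(14)
xs = [exp_by_composition(rng) for _ in range(100_000)]
print(sum(xs) / len(xs))     # close to 1
```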

6. Statistical tests for RNs with arbitrary distribution

What we have to test for:

• independence;

• particular distribution.

Tests for independence:

• correlation tests:

– ’Portmanteau’ test, modified ’Portmanteau’ test, the $\pm 2/\sqrt{n}$ rule, etc.

– note: here we test only for linear dependence...

Tests for distribution:

• χ2 test;

• Kolmogorov’s test.

7. Multi-dimensional distributions

Task: generate samples from the RV $(X_1, X_2, \dots, X_n)$.

Write the joint density function as:

$$f(x_1, x_2, \dots, x_n) = f_1(x_1)\, f_2(x_2 \mid x_1) \cdots f_n(x_n \mid x_1, \dots, x_{n-1}). \qquad (39)$$

• $f_1(x_1)$ is the marginal distribution of $X_1$;

• $f_k(x_k \mid x_1, \dots, x_{k-1})$ is the conditional pdf of $X_k$ given $X_1 = x_1, \dots, X_{k-1} = x_{k-1}$.

The basic idea: generate one number at a time:

• get $x_1$ from $f_1(x_1)$;

• get $x_2$ from $f_2(x_2 \mid x_1)$, etc.

The algorithm:

• get n random numbers $u_i = U(0, 1)$, i = 1, 2, . . . , n;

• subsequently solve for the following RVs:

$$F_1(X_1) = u_1, \quad F_2(X_2 \mid X_1) = u_2, \quad \dots, \quad F_n(X_n \mid X_1, \dots, X_{n-1}) = u_n. \qquad (40)$$

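Example: generate from f(x, y) = x + y, 0 ≤ x, y ≤ 1:

• the marginal pdf and cdf of X are given by:

$$f(x) = \int_0^1 f(x, y)\,dy = x + \frac{1}{2}, \qquad F(x) = \int_0^x f(t)\,dt = \frac{1}{2}(x^2 + x). \qquad (41)$$

• the conditional pdf and cdf of Y are given by:

$$f(y \mid x) = \frac{f(x, y)}{f(x)} = \frac{x + y}{x + \frac{1}{2}}, \qquad F(y \mid x) = \int_0^y f(t \mid x)\,dt = \frac{xy + \frac{1}{2}y^2}{x + \frac{1}{2}}. \qquad (42)$$

• by inversion we get:

$$x = \frac{1}{2}\left(\sqrt{8u_1 + 1} - 1\right), \qquad y = \sqrt{x^2 + u_2(1 + 2x)} - x. \qquad (43)$$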
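A sketch of the sequential inversion (43); `sample_x_plus_y` is a hypothetical name. For this density E[X] = E[Y] = ∫₀¹ x(x + 1/2) dx = 7/12, which gives a quick sanity check.

```python
import math
import random

def sample_x_plus_y(rng: random.Random) -> tuple[float, float]:
    """Draw (X, Y) with joint pdf f(x, y) = x + y on the unit square, via (43)."""
    u1, u2 = rng.random(), rng.random()
    x = 0.5 * (math.sqrt(8 * u1 + 1) - 1)          # invert F(x) = (x^2 + x)/2
    y = math.sqrt(x * x + u2 * (1 + 2 * x)) - x    # invert F(y | x)
    return x, y

rng = random.Random(13)
pts = [sample_x_plus_y(rng) for _ in range(50_000)]
print(sum(x for x, _ in pts) / len(pts))   # close to E[X] = 7/12
```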
