New The University of Auckland – Applied Mathematics · 2011. 8. 29. · The University of...

The University of Auckland – Applied Mathematics

Bayesian Methods for Inverse Problems: why and how

Colin Fox

Tiangang Cui, Mike O’Sullivan (Auckland), Geoff Nicholls (Statistics, Oxford)

In this talk

• An example in geothermal model calibration

• Inferential solutions to inverse problems, noise and all

• The need to integrate: pixel-wise degraded binary images

• Monte Carlo integration

• Computation (MCMC)

• Output analysis for geothermal problem

• Next talk :: better sampling algorithms

An inverse problem in geothermal fields

Schematic of a geothermal field

[Figure labels: Heat, Crystalline Rock, Convective Magma, High Density Cold Water, Low Density Hot Water, Rock of Low Permeability, Temperature Contour, Geothermal Well, Permeable Rock, Boiling Zone]

Given near-surface measurements of temperature, pressure and flow, we want:

• Model calibration :: rock type, porosity, fracture : heat sources

• Predict :: long term (50 year) power generation, land subsidence, etc

• Decide :: robust investment plan

Wairakei geothermal power generator

Bayesian formulation of inverse problems

d = Ax + n: data d, image x, measurement noise n, forward map A

[Figure: image space and data space, linked by the forward map A and its inverse A⁻¹; labelled points x_true, x_ML, d, d_nf]

$\pi(x \mid d, m) \propto \Pr(d \mid x, m)\, \Pr(x \mid m)$ (Bayes’ rule)

Likelihood: measurement and noise (Physics, instrumentation, probability)

Prior / state space: stochastic modelling, physical laws, previous measurements, expert opinion

Inference based on posterior distribution conditioned on data (computational statistics)

Stochastic Model

[Figure: block diagram. True image x (representation / prior density) → forward map K → ideal data → data loss P → noise-free data → + noise n → actual data, d = Ax + n with A = PK → image recovery algorithm → estimate x̂]

If $n \sim f_N(\cdot)$, then $\Pr(d \mid x, m) = f_N(d - Ax)$

Prior distribution $\pi_{\mathrm{pr}}(x)$ uses physical laws, expert knowledge, stochastic modelling

Focus of inference is posterior distribution for x given d

$\pi(x \mid d) \propto \Pr(d \mid x)\, \pi_{\mathrm{pr}}(x)$
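As a minimal sketch of how the unnormalised posterior might be evaluated in practice, the Python below assumes independent Gaussian noise with standard deviation `sigma` and a zero-mean Gaussian prior with standard deviation `tau`. Neither choice comes from the talk, and the function names, the random forward map `A` and the test image are all hypothetical.

```python
import numpy as np

def log_likelihood(x, d, A, sigma):
    """log Pr(d|x) up to a constant, assuming n ~ N(0, sigma^2 I)."""
    r = d - A @ x                      # residual d - Ax plays the role of the noise
    return -0.5 * np.dot(r, r) / sigma**2

def log_prior(x, tau):
    """log pi_pr(x) up to a constant, assuming x ~ N(0, tau^2 I)."""
    return -0.5 * np.dot(x, x) / tau**2

def log_posterior(x, d, A, sigma, tau):
    """Unnormalised log pi(x|d) = log Pr(d|x) + log pi_pr(x)."""
    return log_likelihood(x, d, A, sigma) + log_prior(x, tau)

rng = np.random.default_rng(0)
A = rng.normal(size=(5, 3))            # hypothetical forward map
x_true = np.array([1.0, -2.0, 0.5])    # hypothetical true image
d = A @ x_true + 0.1 * rng.normal(size=5)

lp_true = log_posterior(x_true, d, A, sigma=0.1, tau=10.0)
lp_far = log_posterior(x_true + 5.0, d, A, sigma=0.1, tau=10.0)
```

Working with the logarithm avoids underflow, and the unknown normalising constant is never needed when only ratios of posterior values are compared.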

Solutions to Inverse Problem = Summary Statistics

Posterior distribution π (x|d) encapsulates all information about x

Regularized Inversion - Modes

$\hat{x}_{\mathrm{MLE}} = \arg\max_x \Pr(d \mid x)$, $\hat{x}_{\mathrm{MAP}} = \arg\max_x \pi(x \mid d)$

(least-squares, Moore-Penrose inverse, Tikhonov regularization, Kalman filtering, Backus-Gilbert, Prussian-hat cleaning)
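To illustrate how two of the listed methods arise as modes, the sketch below assumes a linear forward map, Gaussian noise and a zero-mean Gaussian prior. Under those assumptions (which are not stated on the slide) the MLE is the least-squares solution and the MAP estimate is a Tikhonov-regularised solution. The sizes, `sigma` and `tau` are illustrative values only.

```python
import numpy as np

rng = np.random.default_rng(1)
A = rng.normal(size=(20, 5))           # hypothetical forward map
x_true = rng.normal(size=5)
sigma, tau = 0.5, 1.0                  # assumed noise and prior standard deviations
d = A @ x_true + sigma * rng.normal(size=20)

# MLE under Gaussian noise: ordinary least squares (Moore-Penrose solution).
x_mle, *_ = np.linalg.lstsq(A, d, rcond=None)

# MAP under a zero-mean Gaussian prior: Tikhonov-regularised least squares
# with regularisation parameter (sigma / tau)^2.
lam = (sigma / tau) ** 2
x_map = np.linalg.solve(A.T @ A + lam * np.eye(5), A.T @ d)
```

The regularised estimate is pulled towards the prior mean (zero here), so its norm is never larger than that of the least-squares estimate.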

Inferential Solutions

“Answers” are expectations over the posterior π (x|d)

$$E_\pi[f(x)] = \int_X f(x)\, \pi(x \mid d)\, dx$$

If $f(x)$ is the indicator function that the image shows cancer, then $E_\pi[f(x)]$ is the posterior probability (based on measurements and prior) that the patient has cancer.

Two modes over 100×100 pixel image

[Figure: plot of π(x|d) against x]

if maximum value (at mode) is twice the value of the lower local maximum

width of global mode is half the width of the lower local mode

in each of the coordinate directions $x_1, x_2, \ldots, x_{100 \times 100}$

there is $2^{10000-1} \approx 10^{3010}$ times more probability mass in the lower, broader peak than around the mode

$$\int_X \pi(x \mid d)\, dx = \int_X \pi(x \mid d)\, dx_1\, dx_2 \cdots dx_{10000}$$
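The factor quoted above can be checked with a few lines of arithmetic. The sketch assumes the two peaks have the same shape, so that the mass under each scales as height times the product of the widths in all 10000 directions. Logarithms are used because the ratio itself overflows floating point.

```python
import math

n_pixels = 100 * 100                   # number of coordinate directions
height_ratio = 0.5                     # lower peak height / mode height
width_ratio = 2.0                      # lower peak width / mode width, per direction

# Mass scales as height * volume, so add logs rather than multiply.
log10_mass_ratio = math.log10(height_ratio) + n_pixels * math.log10(width_ratio)
print(f"mass ratio is about 10^{log10_mass_ratio:.0f}")
```

The halved height costs a single factor of 2, while the doubled width gains a factor of 2 in every one of the 10000 directions, which is why the mode carries a negligible share of the probability.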

Mode vs Mean

[Figure: image panels labelled true, noisy, MLE, MAP, mean, sample and MPM, for θ = 0, 0.125, 0.25, 0.375, 0.5 and 0.675]

Pixel-wise noise on binary image, Ising prior with “lumping” constant θ

Fox and Nicholls, “Exact MAP states and expectations from perfect sampling: Greig, Porteous and Seheult revisited”, 2001

Monte Carlo integration + importance sampling

If $x^{(1)}, \ldots, x^{(n)} \sim \pi(\cdot \mid d)$, then

$$E_\pi[f(x)] \approx \frac{1}{n} \sum_{i=1}^{n} f\left(x^{(i)}\right)$$

Construct $x^{(1)}, \ldots, x^{(n)}$ as iterates of an ergodic map

Deterministic iteration: $x^{(n+1)} = M\left(x^{(n)}\right)$

$$\pi(x) = \sum_{M(y)=x} \frac{\pi(y)}{|M'(y)|} \quad \text{(Frobenius-Perron)}$$

Stochastic iteration: Markov chain with transition kernel $p(x, y)$

$$\int_X \pi(x)\, p(x, y)\, dx = \int_X \pi(y)\, p(y, x)\, dx \quad \text{(global balance)}$$

$$\pi(x)\, p(x, y) = \pi(y)\, p(y, x) \quad \text{(detailed balance)}$$
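The sample-average estimator can be sketched as follows. To keep the exact answer available for comparison, the example assumes the target is a standard normal and draws independent samples directly. In an inverse problem the samples would instead come from a sampler targeting the posterior, and `f` would be whatever indicator or summary is of interest.

```python
import math
import numpy as np

rng = np.random.default_rng(2)

# Stand-in for posterior samples: a standard normal target, assumed only so
# that the exact expectation is known.
samples = rng.normal(size=100_000)

def f(x):
    """Indicator function of the event x > 1."""
    return (x > 1.0).astype(float)

estimate = f(samples).mean()                   # (1/n) * sum_i f(x^(i))
exact = 0.5 * math.erfc(1.0 / math.sqrt(2.0))  # P(X > 1) for X ~ N(0, 1)
print(estimate, exact)
```

For independent samples the error shrinks like one over the square root of n regardless of dimension; with correlated MCMC samples the same estimator applies but the effective number of samples is smaller.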

Sampling Algorithms in Physics

Hamiltonian Dynamics

Equate unknowns $x$ to ‘position’ variables $q$ with potential energy

$$E(q) = -T \log(\pi(x)) - T \log(Z)$$

Auxiliary ‘momentum’ variables $p$ with kinetic energy $K(p) = \tfrac{1}{2}\|p\|^2$.

Hamiltonian $H(q, p) = E(q) + K(p)$ has canonical distribution

$$P(q, p) = \frac{1}{Z_P} \exp(-E(q))\, \frac{1}{Z_K} \exp(-K(p))$$

Hamiltonian dynamics leave $P(q, p)$ invariant + stochastic transitions for ergodicity
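A minimal sketch of simulating the Hamiltonian dynamics numerically is given below, using the leapfrog integrator (a common choice, not one named on the slide). It assumes a standard normal target with T = 1, so that E(q) = ½‖q‖², and it omits the stochastic momentum refresh that would be needed for ergodicity. The starting values and step size are hypothetical.

```python
import numpy as np

def grad_E(q):
    """Gradient of E(q) = |q|^2 / 2, i.e. a standard normal target with T = 1 (assumed)."""
    return q

def hamiltonian(q, p):
    """H(q, p) = E(q) + K(p) for the assumed target."""
    return 0.5 * np.dot(q, q) + 0.5 * np.dot(p, p)

def leapfrog(q, p, step, n_steps):
    """Integrate Hamilton's equations with the leapfrog scheme."""
    q, p = q.copy(), p.copy()
    p -= 0.5 * step * grad_E(q)        # initial half step in momentum
    for _ in range(n_steps - 1):
        q += step * p                  # full step in position
        p -= step * grad_E(q)          # full step in momentum
    q += step * p                      # last full step in position
    p -= 0.5 * step * grad_E(q)        # final half step in momentum
    return q, p

q0 = np.array([1.0, -0.5])
p0 = np.array([0.3, 0.8])
q1, p1 = leapfrog(q0, p0, step=0.1, n_steps=100)
energy_drift = abs(hamiltonian(q1, p1) - hamiltonian(q0, p0))
```

Because H is nearly conserved along the trajectory, the end point has almost the same density under P(q, p) as the start, which is what makes long moves through state space possible.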

Langevin diffusion

$$dx = s\, dB + \frac{s^2}{2} \nabla \log(\pi(x))\, dt$$
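The Langevin diffusion can be simulated approximately with an Euler-Maruyama discretisation, sketched below for a standard normal target. The target, the values of `s` and `dt`, and the use of many parallel chains are assumptions made for illustration. The discretisation introduces a small bias in the stationary distribution, which a Metropolis accept/reject step would remove.

```python
import numpy as np

def grad_log_pi(x):
    """Gradient of log pi for a standard normal target (assumed for illustration)."""
    return -x

rng = np.random.default_rng(3)
s, dt = 1.0, 0.1                       # diffusion scale and time step (hypothetical values)
x = np.zeros(5000)                     # 5000 independent chains, all started at 0

for _ in range(500):
    noise = rng.normal(size=x.shape)
    # Euler-Maruyama step for dx = s dB + (s^2 / 2) grad log pi(x) dt
    x = x + 0.5 * s**2 * grad_log_pi(x) * dt + s * np.sqrt(dt) * noise

sample_mean, sample_var = x.mean(), x.var()
print(sample_mean, sample_var)
```

After 500 steps the chains have forgotten their common starting point, and their empirical mean and variance should be close to 0 and 1.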

Metropolis-Hastings algorithm

1. Given state $x_t$ at time $t$, generate candidate state $x'$ from a proposal distribution $q(\cdot \mid x_t)$

2. With probability $\alpha(x_t \to x') = \min\left(1, \frac{\pi(x')\, q(x_t \mid x')}{\pi(x_t)\, q(x' \mid x_t)}\right)$ set $X_{t+1} = x'$, otherwise set $X_{t+1} = x_t$

3. Repeat

$q(\cdot \mid x_t)$ can be any distribution that ensures the chain is irreducible and aperiodic.

Pros

• Provably convergent under mild requirements, for any property, e.g. ‘best’ estimate, uncertainty in estimate

• State space can be continuous, discrete, stochastic, or variable dimension

Cons

• Can be (very) slow
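The three steps of the Metropolis-Hastings algorithm can be sketched in a few lines. This example assumes a one-dimensional standard normal target and a symmetric Gaussian random-walk proposal, so the proposal densities cancel in the acceptance ratio. The function name, step size and chain length are illustrative choices, not taken from the talk.

```python
import numpy as np

def log_pi(x):
    """Unnormalised log target: a standard normal, assumed for illustration."""
    return -0.5 * x**2

def metropolis_hastings(log_pi, x0, n_steps, step, rng):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal."""
    chain = np.empty(n_steps)
    x, n_accept = x0, 0
    for t in range(n_steps):
        x_prop = x + step * rng.normal()        # candidate x' ~ q(.|x_t)
        # Symmetric q cancels in the ratio, leaving pi(x') / pi(x_t).
        log_alpha = min(0.0, log_pi(x_prop) - log_pi(x))
        if np.log(rng.uniform()) < log_alpha:
            x, n_accept = x_prop, n_accept + 1  # accept: X_{t+1} = x'
        chain[t] = x                            # otherwise X_{t+1} = x_t
    return chain, n_accept / n_steps

rng = np.random.default_rng(4)
chain, accept_rate = metropolis_hastings(log_pi, x0=0.0, n_steps=50_000, step=2.4, rng=rng)
print(chain.mean(), chain.var(), accept_rate)
```

Only ratios of π appear, so the normalising constant of the posterior is never required. The proposal step size controls the trade-off between acceptance rate and move size, which is one reason the method can be slow when it is poorly tuned.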

Results for geothermal field

[Figure: two panels against Time [day]: wellhead pressure p [bar] and flowing enthalpy h [kJ/kg]]

Wellhead pressure (top) and flowing enthalpy (bottom).

Black line is estimated result, triangles and squares are observed data.

Tiangang Cui, Bayesian Inference for Geothermal Model Calibration MEng Auckland, 2005

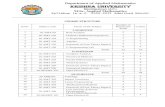

Output analysis for geothermal field

[Figure panels: log-likelihood against MCMC updates; autocorrelation against lag; histograms of φ [-], m [-], Srl [-], log10(k [m2]), p0 [bar] and ps [bar]; scatter plots of log10(k [m2]), p0 [bar] and ps [bar]; histograms and scatter plot of Sv0 and Sls]

Autocorrelation and MCMC output trace. Histogram for the marginal distribution of parameters. Scatter plot of the joint marginal distribution for log(k), p0 and ps. Histogram for the marginal distribution of Sv0 and Sls and scatter plot of their joint marginal distribution.

Tiangang Cui, Bayesian Inference for Geothermal Model Calibration MEng Auckland, 2005
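The autocorrelation plot in this kind of output analysis can be computed from a scalar trace as sketched below. The trace here is a synthetic first-order autoregressive sequence standing in for MCMC output; it is not the geothermal chain. The truncation rule used for the integrated autocorrelation time is one simple heuristic among several, and all names are illustrative.

```python
import numpy as np

def autocorrelation(chain, max_lag):
    """Sample autocorrelation of a scalar chain for lags 0..max_lag."""
    x = chain - chain.mean()
    var = np.dot(x, x) / len(x)
    return np.array([np.dot(x[: len(x) - k], x[k:]) / (len(x) * var)
                     for k in range(max_lag + 1)])

def integrated_autocorr_time(rho):
    """tau = 1 + 2 * sum of rho_k, truncated at the first non-positive rho_k."""
    tau = 1.0
    for r in rho[1:]:
        if r <= 0:
            break
        tau += 2.0 * r
    return tau

# Synthetic AR(1) trace standing in for MCMC output (an assumption).
rng = np.random.default_rng(5)
coeff, n = 0.9, 100_000
trace = np.empty(n)
trace[0] = 0.0
for t in range(1, n):
    trace[t] = coeff * trace[t - 1] + rng.normal()

rho = autocorrelation(trace, max_lag=200)
tau = integrated_autocorr_time(rho)
effective_sample_size = n / tau
print(tau, effective_sample_size)
```

The effective sample size, n divided by the integrated autocorrelation time, indicates how many independent samples the correlated chain is worth when estimating a mean.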

Inferred iso-temperature surface

Conclusions

1. Bayesian methods can solve substantial real-world inverse problems

2. Time to convergence depends on efficient simulation of the forward map, fast MCMC algorithms, good proposal distributions

3. Computation required is feasible
