Motion Segmentation and Dense Reconstruction of Scenes

Containing Moving Objects Observed by a Moving Camera

Chang Yuan

Institute of Robotics and Intelligent Systems

Computer Science Department

Viterbi School of Engineering

University of Southern California

2/49

Problem Definition

• Scenario: rigidly moving objects + moving camera

• Goal

• 2D motion segmentation: motion regions / background area

• 3D dense reconstruction: object shape / background structure

3/49

2D Motion Segmentation

4/49

3D Shape + Trajectory Reconstruction

5/49

Challenges & Applications

• Information sources

• Pixel colors + 2D coordinates

• No object model information is available

• Difficulties

• Camera motion

• Multiple moving objects

• 3D static structures (parallax)

• Applications

• Video surveillance

• Image synthesis

• …

6/49

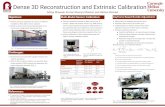

Overview of the Approach

My contributions:

• Parallax rigidity constraint (ICCV’05, PAMI)

• Planar-motion constraint (CVPR’06, PAMI(?))

• Dynamic voxel coloring scheme (CVPR’07, Journal(?))

[Diagram labels: 2D => 3D; Sparse => Dense]

7/49

Outline

• Introduction

• 2D Shape Recovery

• Multi-image registration

• Motion segmentation

• Object tracking

• 3D Shape Recovery

• Sparse reconstruction

• Dense volumetric reconstruction

• Summary and Discussion

Math background:

• Linear algebra

• Optimization

9/49

Motion Segmentation – Overview

• Task: to detect moving objects and track them

• Assumptions

• General camera motion

• Distant scene

• Textured background

10/49

Motion Segmentation – Related Work

• Detecting moving objects from static cameras

• Background modeling

• Frame subtraction

• Optical flow based segmentation

• Motion layers (not necessarily a moving object)

• Point clustering

• Divide sparse feature matches into different motion groups

• “Plane+Parallax” approaches

• A constant reference plane + off-plane structure (parallax)

11/49

Feature Extraction & Matching

• Salient parts of the scene

• Extraction

• Harris corners

• Multi-scale

• Multi-orientation

• Sub-pixel accuracy

• Matching

• Small inter-frame motion

• Gray-scale windows

• Cross correlation

• Large viewpoint change

• Gradient histogram

• Vector angle

12/49

Multiple Image Registration

• Frame motion model

• Assumptions:

• Small inter-frame motion

• Distant planar scene

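• 2D affine transform: $\mathbf{p}_2 = \mathbf{A}\,\mathbf{p}_1$

$$
\begin{bmatrix} u_2 \\ v_2 \\ 1 \end{bmatrix} =
\begin{bmatrix} A_{11} & A_{12} & A_{13} \\ A_{21} & A_{22} & A_{23} \\ 0 & 0 & 1 \end{bmatrix}
\begin{bmatrix} u_1 \\ v_1 \\ 1 \end{bmatrix}
$$

• Robust estimation

• Random Sample Consensus (RANSAC)

• Keep the model with the largest number of inliers

• Non-linear refinement over the inliers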
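The robust affine estimation on this slide can be sketched as follows. This is a minimal illustration, not the author's implementation: the function names, the inlier tolerance `tol`, and the iteration count are assumptions, and a linear least-squares refit over the inliers stands in for the non-linear refinement step.

```python
import numpy as np

def fit_affine(p1, p2):
    """Least-squares 2D affine A (3x3, last row 0 0 1) with p2 ~ A p1.
    p1, p2 are (N, 2) arrays of matched pixel coordinates."""
    X = np.hstack([p1, np.ones((len(p1), 1))])      # homogeneous (N, 3)
    M, *_ = np.linalg.lstsq(X, p2, rcond=None)      # (3, 2) solution
    return np.vstack([M.T, [0.0, 0.0, 1.0]])

def ransac_affine(p1, p2, iters=200, tol=1.0, seed=0):
    """Keep the affine model with the largest number of inliers,
    then refit it on those inliers (hypothetical parameters)."""
    rng = np.random.default_rng(seed)
    X = np.hstack([p1, np.ones((len(p1), 1))])
    best = np.zeros(len(p1), dtype=bool)
    for _ in range(iters):
        idx = rng.choice(len(p1), size=3, replace=False)   # minimal sample
        A = fit_affine(p1[idx], p2[idx])
        err = np.linalg.norm(X @ A[:2].T - p2, axis=1)     # transfer error
        inliers = err < tol
        if inliers.sum() > best.sum():
            best = inliers
    return fit_affine(p1[best], p2[best]), best
```

Three matches are the minimal sample because the affine model has six unknowns and each match contributes two equations.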

13/49

Initial Motion Segmentation (1)

[Figure: temporal window of Frame t-w, Frame t, Frame t+w; t: reference frame, w: half size of the window]

• Two-frame pixel-level segmentation?

• Segmentation within a temporal window

• Accumulate the pixels warped from adjacent frames

• K-Means to find the most representative pixel

• Frame differencing and thresholding: $|I_{\text{original}} - I_{\text{model}}| > \Delta I$
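A minimal sketch of the windowed segmentation above, assuming the adjacent frames have already been warped to the reference frame and stacked into a `(T, H, W)` gray-scale array. The per-pixel 2-means clustering, the function names, and the threshold `delta` are illustrative assumptions; the slides do not specify the number of clusters.

```python
import numpy as np

def background_model(stack, iters=10):
    """Per-pixel 2-means over a (T, H, W) stack of registered frames.
    Returns, at each pixel, the centre of the more populated cluster,
    taken here as the 'most representative pixel'."""
    stack = stack.astype(np.float64)
    lo, hi = stack.min(axis=0), stack.max(axis=0)       # initial centres
    for _ in range(iters):
        near_hi = np.abs(stack - hi) < np.abs(stack - lo)
        n_hi = near_hi.sum(axis=0)
        n_lo = stack.shape[0] - n_hi
        hi = np.where(n_hi > 0, (stack * near_hi).sum(axis=0) / np.maximum(n_hi, 1), hi)
        lo = np.where(n_lo > 0, (stack * ~near_hi).sum(axis=0) / np.maximum(n_lo, 1), lo)
    return np.where(n_hi > n_lo, hi, lo)

def motion_mask(frame, model, delta):
    """Frame differencing and thresholding: |I_original - I_model| > delta."""
    return np.abs(frame.astype(np.float64) - model) > delta
```

Pixels flagged by `motion_mask` are the residual pixels that the next slide separates into motion regions and parallax pixels.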

14/49

Initial Motion Segmentation (2)

• Residual pixels

• Motion regions

• Parallax pixels

• Parallax filtering

• Estimate additional geometric constraints

• Epipolar constraint

• Parallax rigidity constraint

• Evaluate the disparities w.r.t. the constraints

• Parallax or motion?

15/49

Epipolar Constraint (1)

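[Figure: two-view epipolar geometry; labels C1, C2, e1, e2, P, P', p1, p'2, l'1, l2]

Fundamental matrix:

$$
\begin{bmatrix} u_2 & v_2 & 1 \end{bmatrix} \mathbf{F} \begin{bmatrix} u_1 \\ v_1 \\ 1 \end{bmatrix} = 0
$$

Disparity (pixel-to-line distances):

$$
d_{epi} = (\mathbf{l}'_1 \cdot \mathbf{p}_1 + \mathbf{l}'_2 \cdot \mathbf{p}_2) / 2
$$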
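The disparity with respect to the epipolar constraint can be sketched as below. The assumptions here are that the epipolar lines are obtained as `F @ p1` and `F.T @ p2`, that each line is normalised so the dot product is a distance in pixels, and that absolute values are taken; the function name is hypothetical.

```python
import numpy as np

def epipolar_disparity(F, p1, p2):
    """Mean pixel-to-epipolar-line distance for a match p1 <-> p2.
    F is the 3x3 fundamental matrix with p2^T F p1 = 0 for static points;
    p1 and p2 are (u, v) pixel coordinates."""
    p1 = np.append(np.asarray(p1, dtype=float), 1.0)
    p2 = np.append(np.asarray(p2, dtype=float), 1.0)
    l2 = F @ p1        # epipolar line of p1 in image 2
    l1 = F.T @ p2      # epipolar line of p2 in image 1
    d1 = abs(l1 @ p1) / np.hypot(l1[0], l1[1])
    d2 = abs(l2 @ p2) / np.hypot(l2[0], l2[1])
    return 0.5 * (d1 + d2)
```

A static point gives a disparity near zero; a large value indicates independent motion, except in the degenerate case on the next slide where the pixel moves along its epipolar line.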

16/49

Epipolar Constraint (2)

2D: pixels move on the epipolar lines

3D: camera and object are co-planar

Which happens sometimes!

17/49

Epipolar Constraint (3)

• Degenerate motion cannot be detected by epipolar constraint

• This is the best we can do in 2 views

• Solution

• Three or more views

• Trilinear Constraint

• Hard to estimate

• Large camera baseline

• Sensitive to image noise

• Solution

• A novel parallax rigidity constraint

[Figure: point P observed as p1, p2, p3 in images I1, I2, I3 from cameras C1, C2, C3]

18/49

Parallax Rigidity Constraint (1)

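Plane+Parallax decomposition

[Figure: scene point P observed by cameras C1 and C2 in images I1 and I2; labels p2, A12p1, e12]

Projective 3D structure:

$$
\mathbf{P}_{12} = (\mathbf{p}_1; k_{12}) = \begin{bmatrix} u_1 & v_1 & 1 & k_{12} \end{bmatrix}^T
$$

Parallax term:

$$
k \propto \frac{H}{z}
$$

$\mathbf{P}_{12} = \begin{bmatrix} u_1 & v_1 & 1 & k_{12} \end{bmatrix}^T$ and $\mathbf{P}_{23} = \begin{bmatrix} u_2 & v_2 & 1 & k_{23} \end{bmatrix}^T$: relationship?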

19/49

Parallax Rigidity Constraint (2)

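• Bilinear relationship:

$$
\begin{bmatrix} u_2 & v_2 & 1 & k_{23} \end{bmatrix} \mathbf{G} \begin{bmatrix} u_1 \\ v_1 \\ 1 \\ k_{12} \end{bmatrix} = 0
$$

• The G matrix

• 4×4 matrix

• 10 unknowns (rank-2): camera motion and plane variation

• Disparity computation: $d_G = \mathbf{P}_{23}^T \mathbf{G}\, \mathbf{P}_{12}$

• Estimation: RANSAC (15 points) + non-linear refinement

Similar to the epipolar constraint:

$$
\begin{bmatrix} u_2 & v_2 & 1 \end{bmatrix} \mathbf{F} \begin{bmatrix} u_1 \\ v_1 \\ 1 \end{bmatrix} = 0
$$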
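A hedged sketch of how the bilinear constraint could be evaluated and how G could be initialised linearly. Each correspondence gives one linear equation in the 16 entries of G, which is consistent with the 15-point minimal sample named above. The function names are assumptions, and this linear solve ignores the rank-2 structure and omits the RANSAC loop and the non-linear refinement.

```python
import numpy as np

def parallax_disparity(G, P12, P23):
    """d_G = P23^T G P12 for 4-vectors P12 = [u1, v1, 1, k12] and
    P23 = [u2, v2, 1, k23]; zero for points obeying the constraint."""
    return float(P23 @ G @ P12)

def estimate_G_linear(P12s, P23s):
    """Linear estimate of G (up to scale) from at least 15 correspondences.
    Row i of the system is kron(P23_i, P12_i), since
    P23^T G P12 = kron(P23, P12) . vec(G) with row-major vec."""
    A = np.stack([np.kron(P23, P12) for P12, P23 in zip(P12s, P23s)])
    _, _, Vt = np.linalg.svd(A)
    return Vt[-1].reshape(4, 4)     # right singular vector of smallest value
```

In a full pipeline this solver would sit inside a RANSAC loop, with `abs(parallax_disparity(...))` serving as the residual used to count inliers.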

20/49

Sequential Motion Segmentation (1)

• Geometric constraints

• Affine: 2-view

• Epipolar: 2-view

• Parallax: 3-view

• Sequential classification scheme

• Consistency w.r.t. constraints

• Based on a decision tree

• Motion probability
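One way to picture the sequential scheme is a small cascade over the three disparities. The sketch below is an assumption about the tree's shape, not the author's classifier: the thresholds, the label codes, and the hard (rather than probabilistic) decisions are all hypothetical.

```python
import numpy as np

def classify_pixels(d_affine, d_epi, d_par, t_affine=1.0, t_epi=1.0, t_par=1.0):
    """Per-pixel labels from three disparity maps:
    0 = background (consistent with the affine model),
    1 = parallax (residual pixel consistent with both geometric constraints),
    2 = motion (residual pixel violating the epipolar or parallax constraint)."""
    d_affine, d_epi, d_par = (np.asarray(d, dtype=float) for d in (d_affine, d_epi, d_par))
    labels = np.full(d_affine.shape, 1, dtype=np.uint8)    # default: parallax
    labels[d_affine <= t_affine] = 0
    residual = d_affine > t_affine
    labels[residual & ((d_epi > t_epi) | (d_par > t_par))] = 2
    return labels
```

Replacing the hard thresholds with a smooth function of the disparities would give a per-pixel motion probability instead of a label.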

21/49

Sequential Motion Segmentation (2)

[Figure panels: Frame 55, Frame 60, Frame 65; initial motion mask; epipolar constraint disparity; parallax rigidity disparity; before parallax filtering; after parallax filtering]

22/49

Sequential Motion Segmentation (3)

• Degenerate cases

• Camera motion and object motion are both co-planar and proportional

Which happens rarely!

23/49

Spatial-temporal Object Tracking

• Graphical representation

• Likelihood (edge weights) of motion regions (nodes)

• Appearance

• 2D velocity

• Motion probability (disparities)

• Finding paths to maximize joint likelihood

• Viterbi algorithm
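The path search can be sketched with a standard Viterbi pass over per-frame motion regions. The inputs here are assumed to be log-likelihoods, with the appearance, velocity, and motion-probability terms already combined into node and edge scores; the function name and data layout are hypothetical.

```python
import numpy as np

def best_track(node_loglik, edge_loglik):
    """Viterbi search for the most likely track through the region graph.
    node_loglik[t][i]    : log-likelihood of region i in frame t
    edge_loglik[t][i, j] : log-likelihood of linking region i in frame t
                           to region j in frame t+1
    Returns (path as a list of region indices, total log-likelihood)."""
    score = np.asarray(node_loglik[0], dtype=float)
    back = []
    for t in range(1, len(node_loglik)):
        cand = score[:, None] + np.asarray(edge_loglik[t - 1])   # (prev, cur)
        back.append(cand.argmax(axis=0))                         # best predecessor
        score = cand.max(axis=0) + np.asarray(node_loglik[t], dtype=float)
    path = [int(score.argmax())]
    for bp in reversed(back):                                    # backtrack
        path.append(int(bp[path[-1]]))
    return path[::-1], float(score.max())
```

Working in log space turns the joint likelihood into a sum, so the maximisation decomposes frame by frame.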

[Figure: tracking graph over Frame 45, Frame 50, Frame 55]

[Kang’05]

24/49

Experimental Results (1)

[Figure rows: original images; initial detection results; motion probability maps; tracking results]

25/49

Experimental Results (2)

[Figure rows: original images; initial detection results; motion probability maps; tracking results]

26/49

Experimental Results (3)

[Figure rows: original images; frame subtraction; initial detection; tracking results]

27/49

Experimental Results (4)

A synthesized video without motion regions

28/49

Experimental Results (5)

• Time complexity: O(ImgW*ImgH*W)

• Video frame size: e.g. 720*480

• Temporal window size: e.g. 90 frames

• ~1 frame per second (after GPU acceleration by Qian Yu)

• Quantitative evaluation

• Hand-labeled ground truth (~100 frames per sequence)

[Figure panels: filtered motion mask; labeled motion regions]

• Metrics: recall (detection rate); precision (1 - false alarm rate)
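The two metrics can be computed per frame from a detected mask and a hand-labeled mask, as in this minimal sketch. The pixel-level counting and the function name are assumptions; the slides do not say whether the evaluation was pixel-based or region-based.

```python
import numpy as np

def precision_recall(detected, truth):
    """Pixel-level precision and recall of a binary motion mask.
    precision = TP / (number of detected pixels)
    recall    = TP / (number of ground-truth pixels)"""
    detected = np.asarray(detected, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = np.logical_and(detected, truth).sum()
    precision = tp / detected.sum() if detected.sum() else 0.0
    recall = tp / truth.sum() if truth.sum() else 0.0
    return float(precision), float(recall)
```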

29/49

2D Motion Segmentation - Summary

• Geometric representation of motion vs. depth (parallax)

• Contributions

• Sequential motion segmentation

• A novel parallax rigidity constraint

• Applicable sequences

• Distant cluttered background with moving objects

• Future directions

• Region based motion segmentation

• Shadow removal

30/49

Outline

• Introduction

• 2D Shape Recovery

• Multi-frame registration

• Motion segmentation

• Object tracking

• 3D Shape Recovery

• Sparse reconstruction

• Dense volumetric reconstruction

• Summary and Discussion

31/49

3D Shape Recovery - Overview

• Task

• Recover the 3D shape of both moving objects and the static background

• Estimate the 3D motion trajectory of the camera and the objects

• Assumptions

• General camera motion and rigid object motion

• Textured background with a constant ground plane

32/49

Sparse Reconstruction – Related work

• Structure from/and Motion

• A moving camera + a static scene

• Well-developed methods

• Reconstruction of moving objects

• A moving camera + moving objects

• Relative camera-object motion

• Object motion estimation

• Linear trajectory

• More general trajectories

Vidal & Sastry, CVPR’03

Avidan & Shashua, PAMI ‘00

33/49

Reconstruction of Static Background

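• Perspective projection model

$$
\begin{bmatrix} u \\ v \\ 1 \end{bmatrix} \cong \mathbf{K} \begin{bmatrix} \mathbf{R} & \mathbf{t} \end{bmatrix} \begin{bmatrix} x \\ y \\ z \\ 1 \end{bmatrix},
\qquad \mathbf{p}_{jk} \cong \mathbf{M}_k \mathbf{P}_j
$$

• SaM procedure

• 3D camera motion (R, t): decomposition of fundamental matrices

• Intrinsic parameters K: camera calibration

• 3D point positions P: triangulation

• Bundle Adjustment:

$$
\min \sum_{j,k} \left\| \mathbf{p}_{jk} - \mathbf{M}_k \mathbf{P}_j \right\|^2
$$

[Figure: point P projected to p1 and p2 in cameras C1 and C2]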
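The projection model, the triangulation step, and the re-projection error can be sketched as below. Linear (DLT) triangulation is used as a stand-in, since the slides do not name the triangulation method; the function names and the `obs[k][j]` layout are assumptions.

```python
import numpy as np

def project(M, P):
    """Pixel (u, v) of 3D point P under the 3x4 camera matrix M = K [R t]."""
    x = M @ np.append(P, 1.0)
    return x[:2] / x[2]

def triangulate(Ms, ps):
    """Linear (DLT) triangulation of one point from its pixels ps[k]
    in the cameras Ms[k]; needs at least two views."""
    rows = []
    for M, (u, v) in zip(Ms, ps):
        rows.append(u * M[2] - M[0])
        rows.append(v * M[2] - M[1])
    _, _, Vt = np.linalg.svd(np.asarray(rows))
    X = Vt[-1]
    return X[:3] / X[3]

def mean_reprojection_error(Ms, Ps, obs):
    """Average of ||p_jk - M_k P_j|| over cameras k and points j,
    where obs[k][j] is the observed pixel of point j in camera k."""
    errs = [np.linalg.norm(project(M, P) - obs[k][j])
            for k, M in enumerate(Ms) for j, P in enumerate(Ps)]
    return float(np.mean(errs))
```

Bundle adjustment would minimise the squared version of this error jointly over all `Ms` and `Ps`, typically starting from the linear estimates above.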

34/49

Shape Recovery for Moving Objects

• Relative camera-object motion

[Figure: Moving Object with Moving Camera (Real); Static Object with Moving Camera (Virtual)]

virtual camera motion = real camera motion – object motion

35/49

3D Alignment of Moving Objects

• 3D object motion estimation

• Rotation is solved uniquely

• Translation depends on the object scale

• More constraints are needed!

Object motion (left-hand sides) from real & virtual camera motion (right-hand sides):

$$
\mathbf{R}^b_k = (\mathbf{R}^r_k)^{-1} \mathbf{R}^v_k
$$

$$
\mathbf{T}^b_k = \mathbf{C}^r_k - \sigma\, \mathbf{R}^b_k \mathbf{C}^v_k
$$

k: frame number

b: object

r: real camera

v: virtual camera

object motion = real camera motion – virtual camera motion

virtual camera motion = real camera motion – object motion
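The two alignment equations translate directly into code. This sketch assumes each camera is described by a rotation matrix `R` and a centre `C` with the projection convention `x = R (X - C)`, and it reads σ as the unknown object scale; the function name is hypothetical.

```python
import numpy as np

def object_motion(R_r, C_r, R_v, C_v, sigma):
    """Object rotation and translation at one frame from the real camera
    (R_r, C_r), the virtual camera (R_v, C_v), and the object scale sigma:
        R_b = (R_r)^-1 R_v
        T_b = C_r - sigma * R_b C_v"""
    R_b = R_r.T @ R_v                  # the inverse of a rotation is its transpose
    T_b = C_r - sigma * (R_b @ C_v)
    return R_b, T_b
```

The rotation does not depend on `sigma`, which is why it is solved uniquely, while every choice of `sigma` gives a different translation.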

36/49

Planar-motion Constraint (1)

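• Object’s motion trajectory must be planar

$$
\mathbf{N} \cdot \mathbf{T}^b_k = 0
$$

• Known plane

• Solve the object translation at each frame

$$
\mathbf{T}^b_k = \mathbf{C}^r_k - \sigma\, \mathbf{R}^b_k \mathbf{C}^v_k,
\qquad (\mathbf{R}^b_k \mathbf{C}^v_k) \times (\mathbf{T}^b_k - \mathbf{C}^r_k) = \mathbf{0},
\qquad \mathbf{N} \cdot \mathbf{T}^b_k = 0
$$

• Unknown plane

• The correct scale leads to rank-2-ness

$$
\begin{bmatrix} \mathbf{T}^b_1 & \cdots & \mathbf{T}^b_K \end{bmatrix} =
\begin{bmatrix} \mathbf{C}^r_1 & \cdots & \mathbf{C}^r_K \end{bmatrix} -
\sigma \begin{bmatrix} \mathbf{R}^b_1 \mathbf{C}^v_1 & \cdots & \mathbf{R}^b_K \mathbf{C}^v_K \end{bmatrix}
$$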
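Both cases of the planar-motion constraint can be sketched in a few lines. For a known plane normal N, substituting the translation equation into N · T = 0 gives σ in closed form. For an unknown plane, the sketch scans candidate scales and keeps the one that makes the 3×K translation matrix closest to rank 2. The grid search and the function names are assumptions; the slides do not say how the scale is searched.

```python
import numpy as np

def scale_known_plane(N, C_r, RC_v):
    """sigma from N . (C_r - sigma * R_b C_v) = 0 at a single frame.
    RC_v is the already rotated virtual camera centre R_b C_v."""
    return float(N @ C_r) / float(N @ RC_v)

def scale_unknown_plane(C_r, RC_v, sigmas):
    """C_r and RC_v are (3, K) arrays of per-frame vectors. Returns the
    candidate sigma that minimises the smallest singular value of
    [T_1 ... T_K] = C_r - sigma * RC_v, i.e. the most nearly rank-2 one."""
    smallest = [np.linalg.svd(C_r - s * RC_v, compute_uv=False)[-1] for s in sigmas]
    return float(sigmas[int(np.argmin(smallest))])
```

For example, `scale_unknown_plane(C_r, RC_v, np.linspace(0.5, 5.0, 451))` scans scales from 0.5 to 5 in steps of 0.01.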

37/49

Planar-motion Constraint (2)

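• Degenerate motion

• Object motion is both parallel and proportional to camera movement

• Can be easily detected

$$
\operatorname{rank} \begin{bmatrix} \mathbf{C}^r_1 & \mathbf{R}^b_1 \mathbf{C}^v_1 \\ \vdots & \vdots \\ \mathbf{C}^r_K & \mathbf{R}^b_K \mathbf{C}^v_K \end{bmatrix} = 2
$$

$$
\begin{bmatrix} \mathbf{T}^b_1 & \cdots & \mathbf{T}^b_K \end{bmatrix} =
\begin{bmatrix} \mathbf{C}^r_1 & \cdots & \mathbf{C}^r_K \end{bmatrix} -
\sigma \begin{bmatrix} \mathbf{R}^b_1 \mathbf{C}^v_1 & \cdots & \mathbf{R}^b_K \mathbf{C}^v_K \end{bmatrix}
$$

Which happens rarely!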

38/49

Experimental Results – Sparse Reconstruction (1)

39/49

Experimental Results – Sparse Reconstruction (2)

40/49

Experimental Results – Sparse Reconstruction (3)

• Quantitative results

• Average re-projection errors: $\dfrac{1}{KN} \sum_{k=1}^{K} \sum_{j=1}^{N} \left\| \mathbf{p}_{jk} - \mathbf{M}_k \mathbf{P}_j \right\|$

[Table rows: Reconstruction of Static Background; Reconstruction of Moving Objects; Motion Trajectory Estimation. Unit: pixel]

41/49

Dense Volumetric Reconstruction

• Extend sparse surface points to dense object shape

• Volumetric decomposition: 3D space => voxels

• Task: to find the voxel labels that match the original images with minimal variances

[Figure: voxel grid at T=1 and T=2]

42/49

Dynamic Voxel Labeling (1)

• Related work

• Stereo matching: not directly in the 3D object space

• Deterministic methods: voting from multiple cameras

• Optimization based methods: total variance + smoothness

• Photo-motion variance measure

• Color variance:

• Multi-oriented 2D patches projected from 3D voxels

• Normalized correlation

• Motion variance

• Overlap of voxels

[Figure label: X]
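The color term of the photo-motion variance can be illustrated with normalized correlation between the image patches that one voxel projects to. Turning the correlation into a variance as `1 - mean pairwise NCC` is an assumption made for this sketch, as are the function names; the patch extraction and the multiple orientations are omitted.

```python
import numpy as np

def ncc(a, b, eps=1e-12):
    """Normalized cross-correlation of two equally sized patches, in [-1, 1]."""
    a = np.asarray(a, dtype=float).ravel()
    b = np.asarray(b, dtype=float).ravel()
    a = a - a.mean()
    b = b - b.mean()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + eps))

def color_variance(patches):
    """1 - mean pairwise NCC over the patches of one voxel:
    near 0 when all views agree, larger when they disagree."""
    scores = [ncc(patches[i], patches[j])
              for i in range(len(patches)) for j in range(i + 1, len(patches))]
    return 1.0 - float(np.mean(scores))
```

Because the correlation is normalized, a gain or offset change between views (for example from exposure differences) does not raise the variance.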

43/49

Dynamic Voxel Labeling (2)

• Initialize a subset of voxels with surface points

• Deterministic voxel labeling method

• Graph Cuts based global optimization

44/49

Experimental Results – Voxel Labeling

45/49

3D Shape Recovery - Summary

• A complete 3D replica of dynamic scenes

• Shape + motion trajectories

• Contributions

• 3D alignment process based on the planar motion constraint

• Voxel labeling process with the photo-motion variance measure

• Applicable scenes

• Cluttered background + large-size moving objects

• Future directions

• Surface mesh generation

• Non-rigid object motion

46/49

Outline

• Introduction

• 2D shape recovery

• Multi-frame registration

• Motion segmentation

• Object tracking

• 3D shape recovery

• Sparse reconstruction

• Dense volumetric reconstruction

• Summary and Discussion

47/49

Summary & Discussion

• Geometric analysis of dynamic scenes

• Moving camera + rigid moving objects

• 2D and 3D shape of both static background and moving objects

• Highlights

• Theoretical contributions: linear algebra-based derivations

• Methodological contributions: a multi-stage process

• Encouraging results

• Future directions

• Multi-view geometry + object recognition

• Automatic determination of applicable tasks

48/49

Acknowledgement

• Prof. Gérard Medioni

• Prof. Ram Nevatia and Prof. Isaac Cohen

• Prof. James Moore II and Prof. Alexander Tartakovsky

• Colleagues: Jinman Kang, Douglas Fidaleo, and Qian Yu

• My wife Lan Jiang, my family and Lan’s family

• VACE program