
MONOLOGUE: A Tool for Negotiating Exchanges of Private Information in E-Commerce

Scott Buffett¹, Luc Comeau², Mike Fleming² and Bruce Spencer¹

¹National Research Council Canada, ²University of New Brunswick

PST2005, St. Andrews NB, October 14, 2005


An E-Commerce Scenario…

• Joe U. Zerr sits at his computer to search for a new novel at Amazin.com, an online book retailer.

• After a short search, Joe finds the book he is looking for and sees that it costs $34.

• After careful consideration of such issues as his bank balance, bills, future income and his interest in obtaining the book, Joe concludes that $34 is a reasonable price for him to pay, and agrees to the transaction.


A Privacy Scenario…

• Before he can order the book, Joe is asked to provide some of his personal information to set up an account.

• In particular, the following are required:
– name, address, credit card number, phone number

• Joe is also informed that, as a new member, he can sign up for “The Amazin Club”:
– benefits: $30 discount on next purchase, automatic updates on sales, website personalization
– cost: extra info: e-mail address, birthday, interests, hobbies


A Privacy Scenario… (cont’d)

• Finally, Joe is informed that he can join the “Amazin Community”, where members can suggest books to each other:
– cost: names and e-mail addresses of friends and family

• These decisions are not so easy:
– What are the possible costs?
– How do they measure up against the benefits?
– Could I do better?


Automated Negotiation

• Advantages:
– Takes the burden off the user
– Performs much more quickly
– Acts rationally

• Disadvantages:
– Requires that preferences are fully specified beforehand
– Not “intuitive”; cannot “read” the opponent
– Strategies are less dynamic; can be learned and then exploited


MONOLOGUE

• Multi-Object Negotiator for On-Line Offers Guided by Utility Elicitation

• An automated negotiation system for finding mutually acceptable deals in an electronic commerce scenario

• Automated negotiation has a high likelihood of finding mutually acceptable transactions when they exist

• Useful when the user does not have full information on the possible outcomes and consequences of events associated with potential agreements


Two-Tiered Architecture

• Client-side application interacts with the user to:
– determine the user's preferences
– inform the user of the current negotiation progress as well as histories of previous negotiations

• Client also:
– devises the negotiation strategies
– sends and receives offers
– performs decision-making

• Server-side application:
– maintains a database of other (anonymous) registered users' utilities for various private items, as they pertain to various websites
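A minimal sketch of the server-side store, assuming each anonymous user contributes one numeric utility per (website, data item) pair and that no user identifier is kept. The class `UtilityDatabase`, its methods, and the choice of mean and standard deviation as summaries are hypothetical, not MONOLOGUE's actual schema. Such summaries could plausibly serve as starting distributions for the client's utility elicitation, but that link is also an assumption.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, List, Tuple


class UtilityDatabase:
    """Hypothetical store of anonymous users' utilities, keyed by (website, data item)."""

    def __init__(self) -> None:
        self._reports: Dict[Tuple[str, str], List[float]] = defaultdict(list)

    def report(self, website: str, item: str, utility: float) -> None:
        # Only the utility value is stored; nothing identifies the reporting user.
        self._reports[(website, item)].append(utility)

    def summary(self, website: str, item: str) -> Tuple[float, float, int]:
        """Return (mean, population standard deviation, count) of reported utilities."""
        values = self._reports.get((website, item), [])
        if not values:
            raise KeyError(f"no reports for {item!r} on {website!r}")
        return mean(values), pstdev(values), len(values)
```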


The MONOLOGUE System

Negotiation Diagram

• Start: the business sends its initial offer to the MONOLOGUE medium to be displayed
• The offer is displayed to the customer, who is asked whether they want to negotiate:
– NO: quit
– YES: MONOLOGUE interprets the offer
• Offer accepted: private information and certificates are exchanged
• Offer not accepted: MONOLOGUE formulates a counter-offer (quitting if no acceptable offers are left) and sends the customer's counter-offer to the business
• The business interprets the offer:
– Accepted: private information and certificates are exchanged
– Not accepted: the business formulates a counter-offer (quitting if no acceptable offers are left) and sends it to MONOLOGUE, which interprets it as before
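The negotiation diagram amounts to an alternating-offers loop. The sketch below simulates it under simplifying assumptions: offers are opaque labels, each side accepts any offer whose utility meets a fixed reservation value, and each side sends counter-offers from a fixed list, quitting when that list runs out ("no acceptable offers left"). The names `Party`, `negotiate`, and `reservation` are illustrative only.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Party:
    """One side: the business, or MONOLOGUE acting for the customer."""
    name: str
    utility: Callable[[str], float]  # maps an offer to this party's utility
    reservation: float               # hypothetical acceptance threshold
    planned_offers: List[str]        # offers this party is willing to send, in order

    def accepts(self, offer: str) -> bool:
        return self.utility(offer) >= self.reservation

    def next_offer(self) -> Optional[str]:
        # None models "no acceptable offers left", which leads to Quit.
        return self.planned_offers.pop(0) if self.planned_offers else None


def negotiate(business: Party, customer: Party,
              wants_to_negotiate: bool = True) -> Tuple[str, Optional[str], int]:
    """Return (outcome, agreed offer or None, number of offers sent)."""
    offer = business.next_offer()            # the business' initial offer
    if offer is None:
        return ("quit", None, 0)
    sent = 1
    if not wants_to_negotiate:               # the customer answers NO
        return ("quit", None, sent)
    receiver, other = customer, business     # MONOLOGUE interprets the offer first
    while True:
        if receiver.accepts(offer):
            return ("exchange", offer, sent)  # exchange private info & certificates
        counter = receiver.next_offer()       # formulate a counter-offer
        if counter is None:
            return ("quit", None, sent)
        offer, sent = counter, sent + 1
        receiver, other = other, receiver     # the other side now interprets


biz = Party("business", {"A": 3, "B": 2, "C": 1}.get, 2, ["A", "B"])
cust = Party("customer", {"A": 1, "B": 2, "C": 3}.get, 2, ["C", "B"])
print(negotiate(biz, cust))  # ('exchange', 'B', 3)
```

Here the business opens with `A`, MONOLOGUE counters with `C`, and the customer side accepts the business' second offer `B`.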


Key Features

• Uses the “PrivacyPact” negotiation protocol

• Performs user utility elicitation to determine user preferences over the space of possible deals

• Performs “probing” until enough information about the opponent has been obtained to make assumptions and inferences about its preferences


The PrivacyPact Protocol

• Need a protocol that forces negotiators to make progress wherever possible.

• PrivacyPact:
– an alternating-offers negotiation protocol
– offers consist of:
  • P3P statements
  • Rewards


P3P Statements

• A P3P policy is made up of statements, and each statement describes:
– what data will be collected
– with whom it will be shared
– for how long it will be retained
– for what purpose

• Preferences over P3P statements form a partial order

<STATEMENT>

<PURPOSE>

<telemarketing/> <admin/>

</PURPOSE>

<RECIPIENT>

<ours/>

</RECIPIENT>

<RETENTION>

<indefinitely/>

</RETENTION>

<DATA-GROUP>

<DATA ref="#user.name.given">

<CATEGORIES>

<physical/>

</CATEGORIES>

</DATA>

</DATA-GROUP>

</STATEMENT>
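To reason about statements programmatically, a client first has to read them. The sketch below parses the statement above with Python's standard `xml.etree.ElementTree` and defines one possible partial order: statement `a` is at least as private as `b` if it collects no more data, for no more purposes, for no more recipients, with no longer retention. That ordering, the retention ranking, and the names `Statement`, `parse_statement`, and `at_least_as_private` are assumptions for illustration. Full P3P policy files also declare an XML namespace, which this namespace-free snippet omits.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import FrozenSet, Optional

STATEMENT_XML = """
<STATEMENT>
  <PURPOSE><telemarketing/><admin/></PURPOSE>
  <RECIPIENT><ours/></RECIPIENT>
  <RETENTION><indefinitely/></RETENTION>
  <DATA-GROUP>
    <DATA ref="#user.name.given">
      <CATEGORIES><physical/></CATEGORIES>
    </DATA>
  </DATA-GROUP>
</STATEMENT>
"""

# Hypothetical ranking of P3P retention values, least to most permissive.
RETENTION_RANK = {"no-retention": 0, "stated-purpose": 1, "legal-requirement": 2,
                  "business-practices": 3, "indefinitely": 4}


@dataclass(frozen=True)
class Statement:
    purposes: FrozenSet[str]
    recipients: FrozenSet[str]
    retention: str
    data_refs: FrozenSet[str]


def child_tags(elem: Optional[ET.Element]) -> FrozenSet[str]:
    """Tag names of an element's children, e.g. {'telemarketing', 'admin'}."""
    return frozenset(child.tag for child in elem) if elem is not None else frozenset()


def parse_statement(xml_text: str) -> Statement:
    root = ET.fromstring(xml_text.strip())
    (retention,) = child_tags(root.find("RETENTION"))  # expects exactly one value
    return Statement(
        purposes=child_tags(root.find("PURPOSE")),
        recipients=child_tags(root.find("RECIPIENT")),
        retention=retention,
        data_refs=frozenset(d.get("ref") for d in root.iter("DATA")),
    )


def at_least_as_private(a: Statement, b: Statement) -> bool:
    """Assumed partial order: a is no more invasive than b on every dimension."""
    return (a.data_refs <= b.data_refs and a.purposes <= b.purposes
            and a.recipients <= b.recipients
            and RETENTION_RANK[a.retention] <= RETENTION_RANK[b.retention])


s = parse_statement(STATEMENT_XML)
print(sorted(s.purposes), s.retention)  # ['admin', 'telemarketing'] indefinitely
milder = Statement(frozenset({"admin"}), s.recipients, "stated-purpose", s.data_refs)
print(at_least_as_private(milder, s), at_least_as_private(s, milder))  # True False
```

Two statements that differ in opposite directions (say, fewer purposes but longer retention) are incomparable, which is why this is only a partial order.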


Rewards

• A website may offer a reward in return for private information

• Let T be the set of reward tokens

• May also have a partial order ≼ over T

– e.g. if t1 = 10% discount and t2 = 15% discount, then t1 ≼ t2

– user prefers t2, website prefers t1
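The slide's example can be made concrete with reward tokens that carry a kind and an amount. In this sketch (the `Reward` type, `reward_leq`, and the token kinds are hypothetical), two tokens are comparable only when they are of the same kind, which is what makes ≼ a partial rather than a total order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reward:
    kind: str      # hypothetical token kind, e.g. "percent-discount"
    amount: float


def reward_leq(t1: Reward, t2: Reward) -> bool:
    """t1 ≼ t2: t2 is at least as generous as t1; different kinds are incomparable."""
    return t1.kind == t2.kind and t1.amount <= t2.amount


t1 = Reward("percent-discount", 10)
t2 = Reward("percent-discount", 15)
shipping = Reward("free-shipping", 1)
print(reward_leq(t1, t2), reward_leq(t2, t1))              # True False
print(reward_leq(t1, shipping), reward_leq(shipping, t1))  # False False
```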


PrivacyPact Convergence

• Forces progress:
– Offers that are necessarily worse for the opponent than previous offers are not allowed

• Encourages progress when it cannot be forced:
– All offers remain on the table for the duration of the negotiation
– Incentive to make steady concessions: a large concession followed by a move back up the utility scale will likely hamper the negotiator's leverage, since the earlier concession stays on the table for the opponent to accept
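One way to check the forced-progress rule, assuming an offer is just a set of requested data items plus a monetary reward: without knowing the opponent's exact utilities, an offer is necessarily worse for the opponent only when it is dominated, for example when the business asks for at least as much data for no more reward than in one of its earlier offers. The names below are hypothetical, and reading "previous offers" as the sender's own earlier offers is an interpretation.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Offer:
    data_items: FrozenSet[str]  # private data items the customer would disclose
    reward: float               # e.g. a discount, in dollars


def worse_for_customer(new: Offer, old: Offer) -> bool:
    """new asks for at least as much data for no more reward (and differs from old)."""
    return new != old and new.data_items >= old.data_items and new.reward <= old.reward


def worse_for_business(new: Offer, old: Offer) -> bool:
    """new gives the business no more data for at least as much reward."""
    return new != old and new.data_items <= old.data_items and new.reward >= old.reward


def offer_allowed(new: Offer, own_previous: List[Offer],
                  worse_for_opponent: Callable[[Offer, Offer], bool]) -> bool:
    """Reject an offer dominated, from the opponent's side, by an earlier offer."""
    return not any(worse_for_opponent(new, old) for old in own_previous)


first = Offer(frozenset({"e-mail"}), 10.0)
greedier = Offer(frozenset({"e-mail", "birthday"}), 10.0)
sweeter = Offer(frozenset({"e-mail", "birthday"}), 20.0)
print(offer_allowed(greedier, [first], worse_for_customer))  # False
print(offer_allowed(sweeter, [first], worse_for_customer))   # True
```

`sweeter` is allowed because asking for more data while also paying more is not necessarily worse for the customer; whether it is worse depends on utilities the business cannot see.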


Eliciting User Utilities

• Before negotiation can commence, the user's utility function over the set of offers must be determined

• Computing the utility function for rewards can be relatively easy, especially if such rewards are monetary

• On the other hand, determining utilities for privacy policies is difficult

• One method: determine utilities over the set of data items
– the utility of a statement is then a function of the data utilities
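A minimal sketch of this method, assuming the simplest possible combining function: a statement's utility is the sum of its data items' utilities, and an offer's utility adds the reward's dollar value. The additive form, the function names, and the per-item numbers (applied to the Amazin Club scenario) are hypothetical; the slides do not specify the function.

```python
from typing import Dict, Iterable


def statement_utility(data_items: Iterable[str], item_utility: Dict[str, float]) -> float:
    """Assumed additive model: a statement is worth the sum of its items' utilities."""
    return sum(item_utility[d] for d in data_items)


def offer_utility(reward_value: float, data_items: Iterable[str],
                  item_utility: Dict[str, float]) -> float:
    """Utility of an offer = value of its reward + utility of the requested data."""
    return reward_value + statement_utility(data_items, item_utility)


# Hypothetical per-item utilities in dollars (negative: disclosure costs the user).
club_items = {"e-mail": -12.0, "birthday": -5.0, "interests": -4.0, "hobbies": -4.0}
print(offer_utility(30.0, club_items.keys(), club_items))  # 5.0
```

Under these made-up numbers, the $30 Amazin Club discount would be worth joining, but only by a small margin.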


Elicitation Technique

• Utility for each data element d is drawn from a known distribution

• User is asked questions to reduce uncertainty in the distributions

• Questions are chosen to most improve the expected utility of the chosen strategy

• A good candidate question therefore significantly reduces utility uncertainty for an important d
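Question selection can be illustrated with a myopic value-of-information calculation, a standard technique swapped in here as an assumption about how such a choice could be scored. Each data item's disclosure cost (its negated utility) has a uniform prior on an interval, the user decides whether to accept an offer worth `reward`, and a candidate question asks whether one item's cost lies above its interval's midpoint. The question with the largest expected gain is chosen; all names and numbers are hypothetical.

```python
from typing import Dict, Tuple

Interval = Tuple[float, float]  # (low, high) of a uniform prior over an item's cost


def decision_value(reward: float, costs: Dict[str, Interval]) -> float:
    """Expected utility of the better choice: accept (reward - expected costs) or decline (0)."""
    accept = reward - sum((lo + hi) / 2 for lo, hi in costs.values())
    return max(0.0, accept)


def question_value(reward: float, costs: Dict[str, Interval], item: str) -> float:
    """Myopic value of asking whether the item's cost is above its interval's midpoint."""
    lo, hi = costs[item]
    mid = (lo + hi) / 2
    expected_after = 0.0
    for half in ((lo, mid), (mid, hi)):  # each answer has probability 1/2 under a uniform prior
        expected_after += 0.5 * decision_value(reward, {**costs, item: half})
    return expected_after - decision_value(reward, costs)


def choose_question(reward: float, costs: Dict[str, Interval]) -> str:
    """Pick the item whose question most improves the expected utility of the decision."""
    return max(costs, key=lambda item: question_value(reward, costs, item))


costs = {"e-mail": (0.0, 2.0), "birthday": (2.0, 18.0)}
print(choose_question(10.0, costs))  # birthday
```

The wide, expensive `birthday` interval is the one worth asking about: learning that its cost is low flips the decision to "accept", while narrowing `e-mail` cannot change the decision at all.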


Effective Negotiation Strategies

• Key to building effective negotiation strategies is having some knowledge of the opponent’s goals

• Attempt to learn about the opponent’s preferences

• Typically impossible to learn preferences fully

• We attempt to classify the opponent’s preference relation

• If we can, with some confidence, determine correct class, we gain knowledge that can be used to determine strategy


Classification Technique

• Partition the set of possible preference relations into a set of classes C

• All relations in a class should be fairly similar in some way

• Observe the opponent’s behaviour during the negotiation

• Based on the evidence obtained (the sequence of the opponent’s offers), determine the likelihood of each class containing the true preference relation

• Continue until one class has sufficiently high probability

• Any relation in this class should be similar to the true preference relation
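The classification loop can be sketched as a simple Bayesian update. It assumes each preference relation is a ranking of offers, that a relation is violated when the opponent makes an offer it strictly prefers to one it made earlier (i.e. the opponent only concedes), and that a class's likelihood is proportional to its prior times the fraction of its relations still consistent with the evidence. These modelling choices and the function names are assumptions, not the authors' published method.

```python
from typing import Dict, List, Optional, Sequence

Ranking = List[str]  # a preference relation: offers from most to least preferred by the opponent


def violated(ranking: Ranking, observed: Sequence[str]) -> bool:
    """Assumed model: a conceding opponent never follows an offer with one it strictly prefers."""
    pos = {offer: i for i, offer in enumerate(ranking)}
    return any(pos[later] < pos[earlier]
               for i, earlier in enumerate(observed)
               for later in observed[i + 1:])


def class_posterior(classes: Dict[str, List[Ranking]], observed: Sequence[str],
                    prior: Optional[Dict[str, float]] = None) -> Dict[str, float]:
    """P(class | evidence), taken as proportional to prior x fraction of relations not violated."""
    if prior is None:
        prior = {c: 1 / len(classes) for c in classes}
    weights = {c: prior[c] * sum(not violated(r, observed) for r in rels) / len(rels)
               for c, rels in classes.items()}
    total = sum(weights.values())
    if total == 0:
        raise ValueError("the evidence violates every relation in every class")
    return {c: w / total for c, w in weights.items()}


def confident_class(posterior: Dict[str, float], threshold: float = 0.9) -> Optional[str]:
    """Return the leading class once it reaches the threshold, else None (keep probing)."""
    best = max(posterior, key=posterior.get)
    return best if posterior[best] >= threshold else None


classes = {"X": [["a", "b", "c"], ["b", "a", "c"]],
           "Y": [["a", "c", "b"], ["c", "a", "b"]]}
post = class_posterior(classes, ["a", "c"])
print(confident_class(post, threshold=0.6))  # X
```

After the opponent offers `a` then `c`, both relations in class X remain consistent but only one in class Y does, so X gets probability 2/3.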


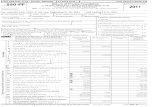

A Few Results…

• Tested the negotiation for convergence efficiency

• Used three simple negotiation strategies:
– miser: always gives the offer with the highest utility for itself
– cooperative: gives the offer (acceptable to itself) that is most similar to the opponent’s offers made thus far
– hybrid: considers its best n offers, and gives the one that is most similar to the opponent’s offers made thus far
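The three strategies can be sketched as follows, assuming an offer is encoded as a tuple of 0/1 flags (1 = that data item is disclosed), similarity is the number of matching flags summed over the opponent's offers, and ties are broken by the sender's own utility. The encoding, the similarity measure, the tie-breaking rule, and the weights in the usage lines are hypothetical; `candidates` should hold only offers not yet made.

```python
from itertools import product
from typing import Callable, List, Sequence, Tuple

Offer = Tuple[int, ...]  # hypothetical encoding: 1 = that data item is disclosed
Utility = Callable[[Offer], float]


def similarity(a: Offer, b: Offer) -> int:
    """Number of positions on which two offers agree (an assumed measure)."""
    return sum(x == y for x, y in zip(a, b))


def closeness(offer: Offer, opponent_offers: Sequence[Offer]) -> int:
    """Total similarity to the opponent's offers made thus far."""
    return sum(similarity(offer, o) for o in opponent_offers)


def miser(candidates: List[Offer], utility: Utility) -> Offer:
    """Always give the offer with the highest utility for itself."""
    return max(candidates, key=utility)


def cooperative(candidates: List[Offer], utility: Utility,
                opponent_offers: Sequence[Offer], reservation: float) -> Offer:
    """Among offers acceptable to itself, give the one most similar to the opponent's."""
    acceptable = [o for o in candidates if utility(o) >= reservation]
    return max(acceptable, key=lambda o: (closeness(o, opponent_offers), utility(o)))


def hybrid(candidates: List[Offer], utility: Utility,
           opponent_offers: Sequence[Offer], n: int) -> Offer:
    """Among its own best n offers, give the one most similar to the opponent's."""
    best_n = sorted(candidates, key=utility, reverse=True)[:n]
    return max(best_n, key=lambda o: (closeness(o, opponent_offers), utility(o)))


weights = (3, 2, 1)  # hypothetical cost to the customer of disclosing each item


def customer_utility(o: Offer) -> float:
    return -sum(w * b for w, b in zip(weights, o))


candidates = list(product((0, 1), repeat=3))
business_offers = [(1, 1, 1)]
print(miser(candidates, customer_utility))                             # (0, 0, 0)
print(cooperative(candidates, customer_utility, business_offers, -3))  # (0, 1, 1)
print(hybrid(candidates, customer_utility, business_offers, 3))        # (0, 0, 1)
```

The hybrid strategy sits between the other two: it concedes toward the opponent, but only within its own top n offers.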


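| Number of Negotiable Items | Number of Possible Offers | Business Strategy | Customer Strategy | Number of Messages to Converge |
|---|---|---|---|---|
| 3 | 28 | miser | miser | 24 |
| 3 | 28 | miser | hybrid | 18 |
| 3 | 28 | miser | co-op | 4 |
| 4 | 60 | miser | miser | 48 |
| 4 | 60 | miser | hybrid | 12 |
| 4 | 60 | miser | co-op | 6 |
| 5 | 124 | miser | miser | 104 |
| 5 | 124 | miser | hybrid | 16 |
| 5 | 124 | miser | co-op | 4 |
| 6 | 252 | miser | miser | 194 |
| 6 | 252 | miser | hybrid | 16 |
| 6 | 252 | miser | co-op | 10 |
| 7 | 508 | miser | miser | 388 |
| 7 | 508 | miser | hybrid | 20 |
| 7 | 508 | miser | co-op | 4 |
| 8 | 1020 | miser | miser | 818 |
| 8 | 1020 | miser | hybrid | 32 |
| 8 | 1020 | miser | co-op | 4 |
| 9 | 2044 | miser | miser | 1670 |
| 9 | 2044 | miser | hybrid | 52 |
| 9 | 2044 | miser | co-op | 38 |
| 10 | 4092 | miser | miser | 3178 |
| 10 | 4092 | miser | hybrid | 66 |
| 10 | 4092 | miser | co-op | 56 |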


Learning Opponent Preferences

• Test the ability to correctly classify the opponent after relatively few offers

• Also check the similarity of all preference relations in the class to the true preference relation

• 5 objects, 9 negotiation rounds


Experiment 1: Likelihood of Correct Class

• Measure the average likelihood of correct classification after each round

• For comparison, a simple method would guess correctly with probability 0.25 after each round

[Figure: Likelihood of Correct Class. Average likelihood vs. number of offers (1 to 9); series: Simple Technique, Classification Technique]


Experiment 2: Likelihood of Correct Preference Relation

• Choose a class randomly according to the likelihood of each class

• Choose a relation within that class that is not violated by the evidence

• The simple method chose a non-violated relation regardless of class
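The two selection procedures compared in this experiment can be sketched as below, reusing the same assumed violation test (a conceding opponent never follows an offer with one it strictly prefers). `sample_by_class` and `sample_simple` are hypothetical names, and the class likelihoods passed in would come from the classification step.

```python
import random
from typing import Dict, List, Sequence

Ranking = List[str]  # offers from most to least preferred by the opponent


def violated(ranking: Ranking, observed: Sequence[str]) -> bool:
    """Assumed model: a later offer is never strictly preferred to an earlier one."""
    pos = {offer: i for i, offer in enumerate(ranking)}
    return any(pos[later] < pos[earlier]
               for i, earlier in enumerate(observed)
               for later in observed[i + 1:])


def sample_by_class(classes: Dict[str, List[Ranking]], class_probs: Dict[str, float],
                    observed: Sequence[str], rng: random.Random) -> Ranking:
    """Pick a class according to its likelihood, then a non-violated relation within it."""
    names = list(classes)
    chosen = rng.choices(names, weights=[class_probs[c] for c in names], k=1)[0]
    consistent = [r for r in classes[chosen] if not violated(r, observed)]
    return rng.choice(consistent)  # assumes any class with positive likelihood has one


def sample_simple(classes: Dict[str, List[Ranking]], observed: Sequence[str],
                  rng: random.Random) -> Ranking:
    """Baseline: any non-violated relation, regardless of class."""
    consistent = [r for rels in classes.values() for r in rels if not violated(r, observed)]
    return rng.choice(consistent)
```

Passing a seeded `random.Random` keeps repeated experimental runs reproducible.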

[Figure: Likelihood of Correct Preference Relation. Average likelihood vs. number of offers (1 to 9); series: Simple Technique, Classification Technique]

Statistically significant, p < .05


Experiment 3: Average Similarity to True Preferences

• Weighted average similarity of all non-violated relations to the true relation

• The average is weighted according to class likelihoods

• Similarity of two relations is the number of common preference pairs

• Thus a score out of $\binom{31}{2} = 465$
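The similarity score can be computed by listing each relation's (preferred, less preferred) pairs and counting the overlap. Assuming relations are total orders over 31 offers, every unordered pair is ranked one way or the other, so two identical relations share all $\binom{31}{2} = 465$ pairs. Reading "weighted according to class likelihoods" as a per-class mean weighted by class probability is an interpretation, and the function names are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Set, Tuple

Ranking = List[str]  # a total order, most preferred first


def preference_pairs(ranking: Ranking) -> Set[Tuple[str, str]]:
    """All (better, worse) pairs implied by a total order."""
    return {(a, b) for i, a in enumerate(ranking) for b in ranking[i + 1:]}


def similarity(r1: Ranking, r2: Ranking) -> int:
    """Number of preference pairs the two relations have in common."""
    return len(preference_pairs(r1) & preference_pairs(r2))


def weighted_similarity(true_relation: Ranking, class_probs: Dict[str, float],
                        consistent: Dict[str, List[Ranking]]) -> float:
    """Mean similarity of each class's non-violated relations, weighted by class likelihood."""
    return sum(p * mean(similarity(r, true_relation) for r in consistent[c])
               for c, p in class_probs.items() if p > 0)


offers = [f"o{i}" for i in range(31)]
print(similarity(offers, offers), similarity(offers, offers[::-1]))  # 465 0
```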

[Figure: Weighted Average Similarity to True Preference Relation. Average similarity vs. number of offers (1 to 9); series: Simple Technique, Classification Technique]

Statistically significant, p < .05


MONOLOGUE


Current/Future Work

• Eliciting Complex Utility Functions:
– Extension of a technique that models conditional preference in multi-attribute outcomes

• Building negotiation strategies:
– Once the opponent has been classified, need to exploit this knowledge and accelerate convergence (to a favourable end)

• System/User Interaction:
– Need to query the user to determine preferences
– Cannot bother the user with too many queries
– Compute a quantitative "bother cost" for each candidate query


Thanks for listening
