Model to Hardware Matching for nm Scale Technologies · 2009-12-29 · Model to Hardware Matching...

Model to Hardware Matching for nm Scale Technologies

Sani R. Nassif, Manager, Tools & Technology Department

IBM Research

Model to Hardware Matching?

DFM Job Description

What is the real problem?

Imagine 10^9 people being asked to form a human chain. Maybe the population of Europe. With the height and width of each person varying by ~50%. Maybe after some beer…

This is what we ask 1B highly variable MOSFETs to do!

And we want to predict how long the chain is going to be, how much food and beer is required, etc…

And… we cannot prototype! We use predictive models, and need them to match reality.

Realities and Motivation

The IC industry is built on a foundation of models for performance, power, yield, cost…

When models do not predict the correct outcome, we lose money.

Technology complexity near the end of scaling is exacerbating this “prediction gap”.

Investments in technology modeling and understanding are needed!

An Example from IBM

One part of a design was found to be ~15% slower than other parts. Models predict that all parts of the design are identical. Model/hardware mismatch!

The slower block limits FMAX. The faster block wastes power.

[Figure: power versus speed for Part-0, Part-1 and Part-2, with the target marked. Case study performed by Anne E. Gattiker.]

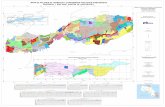

Model to Hardware Support

Chip has 12 ring oscillators distributed across the die, and individually measurable.

[Figure: chip map with ring oscillator locations 1 to 12. Annotations on the figure: ~200% and ~30%.]

Systematic Offsets

Strong correlations between Part-0 and RO 1, and between Part-2 and RO 8.

There is a systematic offset between the ROs, even though the two ROs are identical!

Conclusion: within-die variability caused a systematic spatial mismatch (mean shift + rotation). The magnitude of the mismatch is large and has a negative impact on the design.

[Figure: RO 1 vs. RO 8, with the x=y line for reference.]
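One way to read "mean shift + rotation" is to fit a straight line to paired measurements of the two ring oscillators and compare it with the x=y line: a non-zero intercept or mean difference is the shift, and a slope other than 1 is the rotation. The sketch below is a minimal illustration only. The frequency values are synthetic placeholders (not IBM data), and the helper name `fit_offset` is an assumption.

```python
from math import sqrt

def fit_offset(x, y):
    """Least-squares fit y = intercept + slope * x, plus Pearson correlation.

    For two identical, perfectly matched ring oscillators we would expect
    slope = 1 and intercept = 0 (the x=y line).
    """
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / sqrt(sxx * syy)
    mean_shift = my - mx
    return slope, intercept, r, mean_shift

# Synthetic, illustrative frequencies (arbitrary units) for six chips.
ro1 = [1.00, 1.05, 1.10, 1.15, 1.20, 1.25]
ro8 = [0.90, 0.94, 0.98, 1.02, 1.06, 1.10]  # constructed as 0.8 * ro1 + 0.10

slope, intercept, r, mean_shift = fit_offset(ro1, ro8)
print(f"slope={slope:.3f} intercept={intercept:.3f} r={r:.3f} shift={mean_shift:.3f}")
```

A high correlation together with a slope away from 1 or a non-zero shift is the signature described on the slide: the two structures track each other, yet do not sit on the x=y line.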

Why? (Historical Perspective)

In the beginning, design was hard and only a few were able to do it well. This was the age of “chip engineering”.

But with scaling-driven performance, it became possible to abstract design to a few simple rules. This was the age of “chip computer science”!

[Figures: Intel 4004 and Intel 8080.]

Technology Complexity

Technology has become so complex that it is not well represented by “rules”.

Design / technology interface information bandwidth needs are skyrocketing!

Expressing complex non-linear realities via rules is becoming difficult.

[Figure: number of design rules (axis from 100 to 1000) versus technology: 500nm, 250nm, 130nm, 65nm.]

Technology Rules (History)

Example: wire spacing…

[Figure: wires of width W and spacing S; plot of spacing versus width, with the legal region bounded by Wmin and Smin.]

Two rules suffice to define the “correct” design space!

Technology Rule Explosion

Impact of CMP, dishing, erosion as well as lithography...

[Figure: wires of width W and spacing S; plot of spacing versus width, with the “legal” region bounded by Wmin, Wmax and Smin.]

Four (or more) rules for the same situation in order to accommodate technology complexity!
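The growth from two rules to four (or more) can be sketched as a list of predicates over a wire's width and spacing. This is an illustration only: every numeric limit is a hypothetical placeholder, and the width-dependent spacing rule is an assumed example of a "fourth rule", since the slides name only Wmin, Wmax and Smin.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass(frozen=True)
class Wire:
    width: float    # nm
    spacing: float  # nm, distance to the nearest neighbouring wire

Rule = Callable[[Wire], bool]

# All limits are hypothetical placeholders, not taken from any real technology.
W_MIN, W_MAX, S_MIN = 100.0, 1000.0, 100.0
WIDE_WIRE, S_MIN_WIDE = 500.0, 200.0

# Historical view: two rules bound the legal region.
legacy_rules: List[Rule] = [
    lambda w: w.width >= W_MIN,
    lambda w: w.spacing >= S_MIN,
]

# Later view: extra rules carve pieces out of the same region.
# The width-dependent spacing rule below is an assumption for illustration.
modern_rules: List[Rule] = legacy_rules + [
    lambda w: w.width <= W_MAX,
    lambda w: w.spacing >= S_MIN_WIDE if w.width >= WIDE_WIRE else True,
]

def is_legal(wire: Wire, rules: List[Rule]) -> bool:
    """A wire is legal only if it satisfies every rule in the set."""
    return all(rule(wire) for rule in rules)

wide = Wire(width=800.0, spacing=120.0)
print(is_legal(wide, legacy_rules), is_legal(wide, modern_rules))
```

The same wide wire passes the two-rule check but fails the extended set, which is the shrinking "legal" region the figure depicts.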

What Does This Change?

Transistor performance is being determined by new physics and complex interactions. Large variety in behaviors. Systematic & random variability increasing.

Devices used for technology characterization are becoming less typical… Number of variants is exploding. Ability to bound all behaviors is compromised.

Result: model / hardware mismatch. The gap in our understanding of technology is relatively large and getting larger!

Where Does This Put Us?

Our lack of understanding of technology is endangering the way we do design.

The “contract” between manufacturing and design can no longer be just BSIM+DRC.

These changes will have a dramatic impact on CAD and on the Foundry business. More than just more OPC.

Much of what we observe is being driven by variability…

Variability vs. Uncertainty

Variability: known quantitative relationship to a source (readily modeled and simulated) – systematic! Designer has option to null out impact. Example: power delivery (package + grid) noise.

Uncertainty: sources unknown, or model too difficult/costly to generate or simulate – random! Usually treated by some type of worst-case analysis. Example: ΔVTH within die variation.

Lack of modeling infrastructure and/or resources often transforms variability to uncertainty. Example: power grid noise when not assessed!
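The cost of turning variability into uncertainty can be shown with simple margin arithmetic. The sketch below assumes a margin made of a known systematic shift plus a k-sigma allowance for the random part; the function name, the 3-sigma default and the millivolt values are all assumptions chosen for illustration, not figures from the talk.

```python
def required_margin(systematic_shift, random_sigma, k=3.0, shift_is_modeled=True):
    """Design margin under a simple, assumed budgeting scheme.

    If the systematic shift is modeled, the designer can null it out and only
    the random part needs a worst-case allowance. If it is not modeled, it has
    to be absorbed into the worst-case margin as well.
    """
    random_part = k * random_sigma
    if shift_is_modeled:
        return random_part
    return abs(systematic_shift) + random_part

# Hypothetical numbers: 50 mV of power grid noise, 10 mV random sigma.
modeled = required_margin(50.0, 10.0, shift_is_modeled=True)
unmodeled = required_margin(50.0, 10.0, shift_is_modeled=False)
print(modeled, unmodeled)
```

With these placeholder values the unassessed grid noise more than doubles the margin, which is the sense in which missing models convert a nullable effect into worst-case padding.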

Taxonomy

In addition to systematic and random, we also differentiate variability according to source and distribution.

| | Physical (time scale 10^9 sec) | Environmental (time scale 10^-9 sec) |
|---|---|---|
| Spatial | ΔL, Δμ, ΔVT | Noise coupling |
| Temporal | NBTI, hot-electron, electro-migration | VDD, T |

Variability Interaction with Design

Old view: die to die variation dominant. Imposed upon design (constant regardless of design). Predominantly random (e.g. wafer location effect). Well modeled via worst-case files.

New view: within-die variation dominant. Co-generated between design & process (depends on details of the design). Predominantly systematic. Example: CMP-driven RC variation.

Response to Variability

Responses to variability target different “spatial frequencies”.

No one response is sufficient to tackle the full impact, but all responses require accurate estimates of magnitude and impact.

[Figure: responses arranged along a space axis: shape-level OPC/RET (30nm), library-level regularity (3μm), fabric-based regularity (300μm), chip-level adaptation (300μm, with labels VDD-0, VDD-1, VDD-2).]

Variability Characterization

A hard problem.

Requires a large investment in test and characterization in order to understand all aspects of physical, environmental, systematic, random, etc…

Also, many factors are design dependent. So often generic test structures are not useful predictors (e.g. analog circuits)!

Number of variants exploding with “transistor weight” problem.

Silicon Information Density

The efficiency with which we can perform precise variability characterization is going to become important.

It is no longer sufficient to do it once (technology bring up). We need to continually model and re-evaluate. As EDA tools ramp up on understanding process, they will enable new methods of design optimization (e.g. during re-spins).

We need vastly more information from scarce Si & test resources (i.e. more density)!

Test Structure Quality?

Three relative measures:

Number of individually measurable entities (FETs, ring oscillators, etc…). Many entities ⇒ statistics!

Test time (or test cost). Lower cost ⇒ statistics!

Generality of result: suitability for predicting design outcome. Modeling & EDA.

[Figure: three axes (Entities, Test Time, Generality) with the desired spot marked.]

Example: Device Characterization

Small number of devices with various dimensions. Entities: poor.

Typically measure many current / voltage points. Slow test time: poor.

Used to generate model parameters, which are the basis for everything else… (e.g. BSIM), i.e. generality: excellent.

[Figure: position on the Entities / Test Time / Generality axes.]

Example: Ring Oscillators (ROs)

Few ROs per unit. Entities: poor.

Typically measure few frequency & IDD points. Fast test time: good.

Useful to assess overall health of process, but result is unique to RO structure, i.e. generality: poor.

[Figure: position on the Entities / Test Time / Generality axes.]

Example: RO Collections

Variety of ROs per unit. Entities: OK.

Typically measure few frequency & IDD points. Test time: good.

Useful to assess overall health of process, and since a variety of ROs are included, more can be learned, i.e. generality: OK.

[Figure: position on the Entities / Test Time / Generality axes.]

[Figure: canonical view of varied ring types versus the basic inverter ring, with labels: high and low Vt, nfet vs. pfet strength, nand, nor, nfet Vt, inverter + passgate.]

Advanced Modeling Structure

SRAM sized devices arranged in an addressable manner. 96 rows, 1000 columns – 96,000 total devices. Complete I/V possible.

[Figure: DUT arrays (96 rows by 1000 columns) surrounded by left and right LSSD banks, top and bottom LSSD banks with column drivers, and row sense / current steering. Signals: MeasI, SinkI, drain voltage, drain clamp, gate voltage, gate clamp.]

[Figure: packaged chip (clamp assembly for C4 contacts) with an analog interface and a digital (scan) interface.]

Experimental Results

Measure full I/V range. Extract parameter statistics through least-squares based parameter extraction.

Leakage statistics: lognormal distribution. No spatial correlation. Systematic effects do not appear due to regular layout. Random dopant effect is the dominant source of variation.
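A lognormal leakage distribution means the logarithm of the leakage current is normally distributed, so its two parameters can be estimated from the mean and standard deviation of the log values. The sketch below illustrates that step only; it is not the least-squares device parameter extraction mentioned above. The data are synthetic, generated with an assumed median of 1 nA and an assumed log-sigma of 0.5, and the name `fit_lognormal` is hypothetical.

```python
import math
import random
import statistics

def fit_lognormal(samples):
    """Estimate lognormal parameters as the mean and std-dev of ln(sample)."""
    logs = [math.log(s) for s in samples]
    return statistics.mean(logs), statistics.stdev(logs)

random.seed(0)

# Synthetic stand-in for the 96,000 addressable devices (values in amps).
# Both parameters are assumptions for illustration, not measured results.
mu_true, sigma_true = math.log(1e-9), 0.5
leakage = [random.lognormvariate(mu_true, sigma_true) for _ in range(96_000)]

mu_hat, sigma_hat = fit_lognormal(leakage)
print(f"median ~ {math.exp(mu_hat):.3e} A, log-sigma ~ {sigma_hat:.3f}")

# A lognormal is right-skewed, so the mean sits above the median.
print(statistics.mean(leakage) > statistics.median(leakage))
```

The skew matters for power estimates: the total leakage of a large array is set by the mean, which a few leaky devices pull above the typical (median) device.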

Siren Call: Variational Modeling

Device models are caught between the need to provide nominal and statistical accuracy.

Nominal accuracy: ever more complex models with many fitting parameters and difficult-to-extract inter-dependencies. Can you spell BSIM?

Statistical accuracy: simple models that exhibit a physically significant and predictive correlation structure.

What is the right solution for this?

Linking Variability & Resilience

Much current work is focused on the immediate and short term impact of variability. Examples: statistical timing analysis, CMP-aware routing, new approaches for function-based OPC etc…

But increasing variability will change the character of the impact it has on circuits.

What changes with increased variability?

Circuits can become permanently or intermittently defective. Failure dependence on operating environment makes test coverage very difficult to achieve. This can be viewed as the merger of failure modes due to structural (topological) and parametric (variability) defects.

Via Resistance

[Figure: via resistance axis marked at 1Ω, 100Ω and 1MΩ, with “Circuit OK” and “Circuit Not OK” regions. Curves: distribution of “good” vias, distribution of “bad” vias, and the current via distribution. Annotation: fails that look like opens!]

Other factors, like the environment, make the failure region fuzzy and broad!

How Is This Happening?

Increased process complexity and systematic variability. Example: a VT of 0.25±0.1, a via of 20…200Ω.

Multidimensional: combination of multiple sources of variations.

As variability increases, circuit performance passes from a degraded phase to a regime where failure becomes indistinguishable from hard (short/open) faults.

[Figure: fail region in the VT versus RVIA plane, with labels 0, 1, 2, 3. Annotations: a fail looks the same as an “almost open” defect! VDD & temp can make this worse; the environment also plays a part.]

The metric is how many sigmas from nominal failure occurs!
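The sigma-to-failure metric can be sketched by moving the process parameters away from nominal along a worst-case direction and searching for the distance at which a failure criterion is first met. Everything in the model below is an assumption for illustration: the delay expression, the driver resistance, the exponent, the reading of "0.25±0.1" and "20…200Ω" as roughly 3-sigma ranges, and the 150% delay limit as the failure criterion.

```python
import math

# Hypothetical parameters. The sigmas assume the quoted ranges span about 3 sigma.
VDD = 1.0                                # volts
VT_NOM, VT_SIGMA = 0.25, 0.033           # volts
R_DRV = 1000.0                           # ohms, assumed driver resistance
R_VIA_NOM, R_VIA_LOG_SIGMA = 20.0, 0.77  # ohms; 20 ohms grows to ~200 at 3 sigma

def delay(z):
    """Toy delay with VT and via resistance both shifted z sigmas toward slow."""
    vt = VT_NOM + z * VT_SIGMA
    r_via = R_VIA_NOM * math.exp(z * R_VIA_LOG_SIGMA)
    overdrive = VDD - vt
    if overdrive <= 0:
        return math.inf  # device never turns on
    return (R_DRV + r_via) / overdrive ** 1.3

def sigma_to_failure(limit_ratio=1.5, z_max=20.0, tol=1e-6):
    """Bisection for the smallest z at which delay exceeds limit_ratio * nominal."""
    limit = limit_ratio * delay(0.0)
    lo, hi = 0.0, z_max
    if delay(hi) <= limit:
        return None  # no failure inside the search range
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if delay(mid) > limit:
            hi = mid
        else:
            lo = mid
    return hi

z_fail = sigma_to_failure()
print(f"failure at about {z_fail:.2f} sigma from nominal")
```

Bisection is valid here because the toy delay increases monotonically with z. A real analysis would search over several independent parameters rather than a single diagonal.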

Random/Systematic Via Variability

[Figure: via resistance measurements for single and double vias.]

Double vias appear to behave fairly consistently. Single vias exhibit high and systematic variability.

Performance vs. Sigma

[Figure: performance versus sigma, divided into OK, Adapt, Degrade and Fail regions by points A, B and C. Insets show output voltage versus time compared with nominal.]

A: circuit delay exceeds specification.

B: circuit delay beyond fixing by adaptation.

C: circuit does not invert any longer. Performance is indistinguishable from a “stuck at 1” fault!
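The four regions can be expressed as a small classifier. This sketch assumes the regions are ordered OK, Adapt, Degrade, Fail with boundaries at points A, B and C, and it assumes that adaptation can recover delays up to a fixed ratio of the specification. The function name and both thresholds are hypothetical.

```python
def classify(delay_ratio, inverts, spec=1.0, adapt_limit=1.5):
    """Map circuit behaviour onto the OK / Adapt / Degrade / Fail regions.

    delay_ratio: measured delay divided by the specified delay.
    inverts:     whether the output still switches at all.
    spec:        point A, above which delay exceeds specification.
    adapt_limit: point B, above which adaptation can no longer fix the delay.
    Point C is the loss of switching, handled by the inverts flag.
    """
    if not inverts:
        return "Fail"
    if delay_ratio <= spec:
        return "OK"
    if delay_ratio <= adapt_limit:
        return "Adapt"
    return "Degrade"

for ratio, inverts in [(0.9, True), (1.2, True), (2.0, True), (float("inf"), False)]:
    print(ratio, classify(ratio, inverts))
```

Checking the switching flag first reflects the slide's point that, past C, the behaviour is no longer a timing problem but looks like a stuck-at fault.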

Trend For a Simple Buffer

Simplest possible circuit (if this fails, everything else will). Performed analysis for 90nm, 65nm and 45nm. Clear trend in sigma!

[Figure: process σ point for A, B and C (x axis ticks from 30 to 110). A: nominal VDD, delay @ 150%. B: VDD @ +15%, delay @ 150%. C: no edge propagation.]

Technology Trend For a Simple Latch

Pervasive circuit crucial for correct logic operation. Performed analysis for 90nm, 65nm and 45nm. Clear trend in sigma!

[Figure: process σ point for A, B and C (x axis ticks from 30 to 110). A: nominal VDD, delay @ 150%. B: VDD @ +15%, delay @ 150%. C: no edge propagation.]

Technology Trend for an SRAM

SRAM is known to be a more sensitive circuit… (lower σ). But the circuit is heavily optimized for each technology. Much lower σ values + similar trend in sigma!

[Figure: process σ point for A, B and C (x axis ticks from 30 to 110). A: nominal VCS, delay @ 150%. B: VCS @ +15%, delay @ 150%. C: cannot write to cell.]

Comparison of Circuits (@Point C)

Technology trend is modulated by circuit innovation and investment in analysis and optimization tools. Global trend remains clear!

[Figure: process σ point at point C for the buffer, latch and SRAM (x axis ticks from 30 to 110).]

Buffer is most robust, unlikely to fail!

Latch is less robust + suffers from SER.

SRAM is least robust, but much attention is devoted here, e.g. redundancy & 8T.

Variability and Resilience

We will likely need wide-spread resilience at and beyond the 32nm technology node. How much resilience and/or adaptation will be determined by how well we understand the fabrication process.

Firm understanding of the sources and impact of systematic variation is needed. Magnitude of random or un-modeled variation will determine design margins (and design profit). Models are critical to both!

Role of Modeling

Modeling needs to become more aware of systematic and random variability. As do other parts of the analysis flow, e.g. layout extraction.

We need the ability to mix empirical and physical capabilities in a clean manner…

As we learn to make better characterization structures, the opportunity for early semi-empirical modeling will expand!

[Figure: present learning and future learning, each feeding improved design…]

Ultimate Vision

Enable accurate model to hardware correlation and sophisticated design adaptation.

[Figure: flow linking a virtual fab (simulation parameters & tools), test structures, chips and wafers to model to hardware matching, which adapts the design cycle. Parameter history plot: VTH, DL and PSRO against target for Lot 1 to Lot 6. Performance history plot: Fmax, power and yield for Lot 1 to Lot 6.]
