Minqi’s Solutions to the Exercises of Sheldon Axler (2014), Linear Algebra Done ... · 2020. 10....

Minqi’s Solutions to the Exercises of Sheldon Axler

(2014), Linear Algebra Done Right (3rd Edition)

Minqi Pan¹

¹ Website: http://www.minqi-pan.com

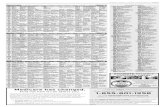

Contents

Preface

Chapter 1. $\mathbf{R}^n$ and $\mathbf{C}^n$
1.1. Exercise 1.A.1: Multiplicative Inverse of Complex Numbers
1.2. Exercise 1.A.2: A Cubic Root of 1
1.3. Exercise 1.A.3: Square Roots of $i$
1.4. Exercise 1.A.4: Commutativity of Addition of Complex Numbers
1.5. Exercise 1.A.5: Associativity of Addition of Complex Numbers
1.6. Exercise 1.A.6: Associativity of Multiplication of Complex Numbers
1.7. Exercise 1.A.7: Existence and Uniqueness of Additive Inverse
1.8. Exercise 1.A.8: Existence and Uniqueness of Multiplicative Inverse
1.9. Exercise 1.A.9: The Distributive Property of Complex Numbers
1.10. Exercise 1.A.10: Vector Addition and Scalar Multiplication in $\mathbf{R}^4$
1.11. Exercise 1.A.11: A Scalar Multiplication Example in $\mathbf{C}^3$
1.12. Exercise 1.A.12: Associativity of Vector Addition in $\mathbf{F}^n$
1.13. Exercise 1.A.13: Associativity of Scalar Multiplication in $\mathbf{F}^n$
1.14. Exercise 1.A.14: Scalar Multiplication by 1 in $\mathbf{F}^n$
1.15. Exercise 1.A.15: Left-distributive Property of Operations in $\mathbf{F}^n$
1.16. Exercise 1.A.16: Right-distributive Property of Operations in $\mathbf{F}^n$

Chapter 2. Definition of Vector Space
2.1. Exercise 1.B.1: $-(-v) = v$ in Vector Spaces
2.2. Exercise 1.B.2: $av = 0$ Implies $a = 0$ or $v = 0$ in Vector Spaces
2.3. Exercise 1.B.3: Solutions of a Vector Equation
2.4. Exercise 1.B.4: The Empty Set is NOT a Vector Space
2.5. Exercise 1.B.5: An Alternative Definition of Vector Space
2.6. Exercise 1.B.6: A Definition of $\mathbf{R} \cup \{\infty\} \cup \{-\infty\}$

Chapter 3. Subspaces
3.1. Exercise 1.C.1: Examples and Counterexamples of Subspaces of $\mathbf{F}^3$
3.2. Exercise 1.C.2: Supplying Proofs for Examples of Vector Spaces
3.3. Exercise 1.C.3: A Subspace of $\mathbf{R}^{(-4,4)}$
3.4. Exercise 1.C.4: A Subspace of $\mathbf{R}^{[0,1]}$
3.5. Exercise 1.C.5: $\mathbf{R}^2$ is NOT a Subspace of $\mathbf{C}^2$
3.6. Exercise 1.C.6: Comparing Subspaces of $\mathbf{R}^3$ and $\mathbf{C}^3$
3.7. Exercise 1.C.7: A Quasi-subspace Unclosed under Scalar Multiplication
3.8. Exercise 1.C.8: A Quasi-subspace Unclosed under Vector Addition
3.9. Exercise 1.C.9: Periodic Functions is NOT a Subspace of $\mathbf{R}^{\mathbf{R}}$
3.10. Exercise 1.C.10: The Intersection of 2 Subspaces is a Subspace
3.11. Exercise 1.C.11: The Intersection of Subspaces is a Subspace
3.12. Exercise 1.C.12: When the Union of 2 Subspaces is a Subspace
3.13. Exercise 1.C.13: When the Union of 3 Subspaces is a Subspace
3.14. Exercise 1.C.14: An Example of Sums of Subspaces
3.15. Exercise 1.C.15: $U + U = U$ for Any Subspace $U$
3.16. Exercise 1.C.16: Commutativity of the Sum of Subspaces
3.17. Exercise 1.C.17: Associativity of the Sum of Subspaces
3.18. Exercise 1.C.18: The Identity of Sum of Subspaces
3.19. Exercise 1.C.19: There is NO Cancellation Law for Sums of Subspaces
3.20. Exercise 1.C.20: A Direct Sum of 2 Subspaces that Equals to $\mathbf{F}^4$
3.21. Exercise 1.C.21: A Direct Sum of 2 Subspaces that Equals to $\mathbf{F}^5$
3.22. Exercise 1.C.22: A Direct Sum of 4 Subspaces that Equals to $\mathbf{F}^5$
3.23. Exercise 1.C.23: There is NO Cancellation Law for Direct Sums
3.24. Exercise 1.C.24: A Direct Sum Decomposition of $\mathbf{R}^{\mathbf{R}}$

Chapter 4. Span and Linear Independence
4.1. Exercise 2.A.1: Alternative Span from an Existing Span
4.2. Exercise 2.A.2: Examples of Linearly Independent Lists
4.3. Exercise 2.A.3: a Linearly Dependent List
4.4. Exercise 2.A.4: Conditions to Linear Dependence
4.5. Exercise 2.A.5: Difference between $\mathbf{C}$ and $\mathbf{R}$ as Scalar Fields
4.6. Exercise 2.A.6: Constructing Another Independent List from a List
4.7. Exercise 2.A.7: Constructing Another Independent List from a List
4.8. Exercise 2.A.8: Linear Independent List Multiplied by Scalar
4.9. Exercise 2.A.9: Addition of Two Linearly Independent Lists
4.10. Exercise 2.A.10: Adding a Vector to a Linearly Independent List
4.11. Exercise 2.A.11: Concatenating a Vector to an Independent List
4.12. Exercise 2.A.12: Possible Linearly Independent Lists in $\mathcal{P}_4(\mathbf{F})$
4.13. Exercise 2.A.13: Possible Spans of $\mathcal{P}_4(\mathbf{F})$
4.14. Exercise 2.A.14: Another Definition of Infinite-Dimensional
4.15. Exercise 2.A.15: $\mathbf{F}^\infty$ is Infinite-Dimensional
4.16. Exercise 2.A.16: $\mathbf{R}^{[0,1]}$ is Infinite-Dimensional
4.17. Exercise 2.A.17: Polynomials that Vanish at the Same Point

Chapter 5. Bases
5.1. Exercise 2.B.1: Vector Spaces that Have Exactly One Basis
5.2. Exercise 2.B.2: Examples and Counterexamples of Bases
5.3. Exercise 2.B.3: Find and Extend a Basis for a Subspace of $\mathbf{R}^5$
5.4. Exercise 2.B.4: Find and Extend a Basis for a Subspace of $\mathbf{C}^5$
5.5. Exercise 2.B.5: A Basis of $\mathcal{P}_3(\mathbf{F})$ without Degree-2 Polynomials
5.6. Exercise 2.B.6: Constructing Another Basis from a Basis
5.7. Exercise 2.B.7: Constructing a Counterexample
5.8. Exercise 2.B.8: Basis Obtained from a Direct Sum

Chapter 6. Dimension
6.1. Exercise 2.C.1: A Space with the Same Dimension of its Subspace
6.2. Exercise 2.C.2: Subspaces of $\mathbf{R}^2$
6.3. Exercise 2.C.3: Subspaces of $\mathbf{R}^3$
6.4. Exercise 2.C.4: Find and Extend a Basis for a Subspace of $\mathcal{P}_4(\mathbf{F})$
6.5. Exercise 2.C.9: $\dim \operatorname{span}(v_1 + w, \ldots, v_m + w) \geq m - 1$

Chapter 7. The Vector Space of Linear Maps
7.1. Exercise 3.A.1: Conditions for a Mapping to be Linear
7.2. Exercise 3.A.5: $\mathcal{L}(V, W)$ is a vector space
7.3. Exercise 3.A.6: Algebraic Properties of Products of Linear Maps
7.4. Exercise 3.A.7: Linear Map on 1D Space is Scalar Multiplication
7.5. Exercise 3.A.8: Homogeneity Alone does NOT Imply Linearity
7.6. Exercise 3.A.9: Additivity Alone does NOT Imply Linearity

Chapter 8. Null Spaces and Ranges
8.1. Exercise 3.B.1: A Linear Map $T$ with $\dim \operatorname{null} T = 3$, $\dim \operatorname{range} T = 2$
8.2. Exercise 3.B.2: $\operatorname{range} S \subset \operatorname{null} T$ Implies $(ST)^2 = 0$
8.3. Exercise 3.B.3: Spanning = Surjective; Independent = Injective
8.4. Exercise 3.B.4: $\{T \in \mathcal{L}(\mathbf{R}^5, \mathbf{R}^4) : \dim \operatorname{null} T > 2\}$ is NOT a Subspace
8.5. Exercise 3.B.5: A Linear Map $T$ such that $\operatorname{range} T = \operatorname{null} T$

Chapter 9. Matrices
9.1. Exercise 3.C.1: $\mathcal{M}(T)$ Has At Least $\dim \operatorname{range} T$ Nonzero Entries
9.2. Exercise 3.C.2: Find Bases from the Matrix of $D \in \mathcal{L}(\mathcal{P}_3(\mathbf{R}), \mathcal{P}_2(\mathbf{R}))$
9.3. Exercise 3.C.3: Find Bases for $V, W$ from $\mathcal{M}(T)$ where $T \in \mathcal{L}(V, W)$
9.4. Exercise 3.C.4: Find Bases for $W$ from $\mathcal{M}(T)$ where $T \in \mathcal{L}(V, W)$
9.5. Exercise 3.C.5: Find Bases for $V$ from $\mathcal{M}(T)$ where $T \in \mathcal{L}(V, W)$

Chapter 10. Invertibility and Isomorphic Vector Spaces
10.1. Exercise 3.D.1: $(ST)^{-1} = T^{-1}S^{-1}$
10.2. Exercise 3.D.2: Noninvertible Operators on $V$ is NOT a Subspace
10.3. Exercise 3.D.3: Injective Subspace Mappings and Invertible Operators
10.4. Exercise 3.D.4: $\operatorname{null} T_1 = \operatorname{null} T_2$ if and only if $T_1 = ST_2$
10.5. Exercise 3.D.5: $\operatorname{range} T_1 = \operatorname{range} T_2$ if and only if $T_1 = T_2S$

Chapter 11. Products and Quotients of Vector Spaces
11.1. Exercise 3.E.1: $T$ is a Linear Map iff graph of $T$ is a Subspace of $V \times W$
11.2. Exercise 3.E.2: $V_j$ is Finite-dimensional if $V_1 \times \cdots \times V_m$ is Finite-dimensional
11.3. Exercise 3.E.3: Example of $U_1 \times U_2$ Isomorphic to Non-Direct-Sum $U_1 + U_2$
11.4. Exercise 3.E.4: $\mathcal{L}(V_1 \times \cdots \times V_m, W)$ and $\mathcal{L}(V_1, W) \times \cdots \times \mathcal{L}(V_m, W)$ are Isomorphic
11.5. Exercise 3.E.5: $\mathcal{L}(V, W_1 \times \cdots \times W_m)$ and $\mathcal{L}(V, W_1) \times \cdots \times \mathcal{L}(V, W_m)$ are Isomorphic

Chapter 12. Duality
12.1. Exercise 3.F.1: Linear Functionals are either Surjective or Zero
12.2. Exercise 3.F.2: Examples of Linear Functionals on $\mathbf{R}^{[0,1]}$
12.3. Exercise 3.F.3: Existence of $\varphi \in V'$ such that $\varphi(v) = 1$
12.4. Exercise 3.F.10: The Dual Map Function that Takes $T$ to $T'$ is Linear
12.5. Exercise 3.F.20: $U \subset W$ Implies $W^0 \subset U^0$

Chapter 13. Polynomials
13.1. Exercise 4.2: $\{0\} \cup \{p \in \mathcal{P}(\mathbf{F}) : \deg p = m\}$ is NOT a Subspace
13.2. Exercise 4.4: There Exists a n-Degree Polynomial with m Zeros
13.3. Exercise 4.6: $p$ has Distinct Zeros iff $p$ and $p'$ have NO Common Zeros
13.4. Exercise 4.7: An Odd-degree, Real-coefficient Polynomial has a Real Zero
13.5. Exercise 4.8: A Linear Operator on $\mathcal{P}(\mathbf{R})$

Bibliography

Preface

Remarkable exercises are:

(1) Exercise 1.C.9: Periodic Functions is NOT a Subspace of $\mathbf{R}^{\mathbf{R}}$.
(2) Exercise 1.C.13: When the Union of 3 Subspaces is a Subspace.
(3) Exercise 2.B.7: Constructing a Counterexample.
(4) Exercise 2.C.9: $\dim \operatorname{span}(v_1 + w, \ldots, v_m + w) \geq m - 1$.
(5) Exercise 3.C.5: Find Bases for $V$ from $\mathcal{M}(T)$ where $T \in \mathcal{L}(V, W)$.
(6) Exercise 3.D.4: $\operatorname{null} T_1 = \operatorname{null} T_2$ if and only if $T_1 = ST_2$.
(7) Exercise 3.D.5: $\operatorname{range} T_1 = \operatorname{range} T_2$ if and only if $T_1 = T_2S$.
(8) Exercise 4.A.7: An Odd-degree, Real-coefficient Polynomial has a Real Zero.

Minqi Pan
April 4, 2020

CHAPTER 1

$\mathbf{R}^n$ and $\mathbf{C}^n$

1.1. Exercise 1.A.1: Multiplicative Inverse of Complex Numbers

Suppose a and b are real numbers, not both 0. Find real numbers c and d such that

$$\frac{1}{a + bi} = c + di.$$

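Answer. Since at least one of the real numbers $a, b$ is different from 0, we have $a^2 + b^2 \neq 0$. We can then let

$$c = \frac{a}{a^2 + b^2}, \qquad d = \frac{-b}{a^2 + b^2}.$$

Then

$$
\begin{aligned}
(a + bi)\left(\frac{a}{a^2 + b^2} - \frac{b}{a^2 + b^2}\,i\right)
&= \left(a \cdot \frac{a}{a^2 + b^2} - b\left(-\frac{b}{a^2 + b^2}\right)\right) + \left(a\left(-\frac{b}{a^2 + b^2}\right) + b \cdot \frac{a}{a^2 + b^2}\right)i \\
&= 1 + 0i \\
&= 1.
\end{aligned}
$$

∎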
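As a quick sanity check of the formulas for $c$ and $d$, the following Python sketch (an illustration, not part of the original solution) represents complex numbers as (real, imaginary) pairs of exact fractions; the helper names `inverse_parts` and `multiply` are hypothetical.

```python
from fractions import Fraction

def inverse_parts(a, b):
    """Return (c, d) with 1/(a + bi) = c + di, via c = a/(a^2+b^2), d = -b/(a^2+b^2)."""
    if a == 0 and b == 0:
        raise ValueError("a and b must not both be 0")
    denom = Fraction(a) ** 2 + Fraction(b) ** 2
    return Fraction(a) / denom, Fraction(-b) / denom

def multiply(x, y):
    """Multiply (p + qi)(r + si) = (pr - qs) + (ps + qr)i for pairs x = (p, q), y = (r, s)."""
    p, q = x
    r, s = y
    return (p * r - q * s, p * s + q * r)

c, d = inverse_parts(3, 4)
print(c, d)                                  # 3/25 -4/25
print(multiply((3, 4), (c, d)) == (1, 0))    # True
```

Exact fractions are used so that the product comes out as exactly $1 + 0i$ rather than a floating-point approximation.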

1.2. Exercise 1.A.2: A Cubic Root of 1

Show that

$$\frac{-1 + \sqrt{3}\,i}{2}$$

is a cube root of 1 (meaning that its cube equals 1).

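Answer.

$$
\begin{aligned}
\frac{-1 + \sqrt{3}\,i}{2} \cdot \frac{-1 + \sqrt{3}\,i}{2} \cdot \frac{-1 + \sqrt{3}\,i}{2}
&= \frac{-2 - 2\sqrt{3}\,i}{4} \cdot \frac{-1 + \sqrt{3}\,i}{2} \\
&= \frac{\big((-2)(-1) - (-2\sqrt{3})(\sqrt{3})\big) + \big(-2\sqrt{3} + (-2\sqrt{3})(-1)\big)i}{8} \\
&= \frac{8 + 0i}{8} = 1.
\end{aligned}
$$

∎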
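A floating-point spot check of the same computation can be sketched in Python; this is only a numerical illustration (not a proof), so it compares against 1 with a tolerance, and the comparison with $e^{2\pi i/3}$ is an extra observation, not part of the original answer.

```python
import cmath
import math

z = (-1 + math.sqrt(3) * 1j) / 2   # the number from Exercise 1.A.2

# Floating-point arithmetic only approximates sqrt(3), so compare with a tolerance.
print(abs(z ** 3 - 1) < 1e-12)                          # True
# z coincides with e^{2πi/3}, a cube root of 1 other than 1 itself.
print(cmath.isclose(z, cmath.exp(2j * cmath.pi / 3)))   # True
```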


1.3. Exercise 1.A.3: Square Roots of i

Find two distinct square roots of i.

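Answer. Note that

$$i = e^{i\pi/2}.$$

Thus the following two numbers are two distinct square roots of $i$:

$$\pm e^{i\pi/4} = \pm\left(\frac{\sqrt{2}}{2} + \frac{\sqrt{2}}{2}\,i\right).$$

Indeed, $(\pm e^{i\pi/4})^2 = e^{i\pi/2} = i$, and the two numbers are distinct because $e^{i\pi/4} \neq 0$.

∎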
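The claim can be spot-checked numerically with Python's `cmath` module; the sketch below (an illustration under floating-point rounding, hence the tolerances) squares both candidates and compares $e^{i\pi/4}$ with its Cartesian form.

```python
import cmath
import math

root = cmath.exp(1j * math.pi / 4)                        # e^{iπ/4}
cartesian = complex(math.sqrt(2) / 2, math.sqrt(2) / 2)   # (√2/2) + (√2/2)i

for r in (root, -root):
    # Each candidate squares to i, up to floating-point rounding.
    print(cmath.isclose(r * r, 1j, abs_tol=1e-12))        # True (printed twice)
print(cmath.isclose(root, cartesian))                     # True
```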

1.4. Exercise 1.A.4: Commutativity of Addition of Complex Numbers

Show that α+ β = β + α for all α, β ∈ C.

Proof. Suppose α = a + bi and β = c + di, where a, b, c, d ∈ R. Then the definition of addition of complex numbers shows that

α+ β = (a+ c) + (b+ d)i

and

β + α = (c+ a) + (d+ b)i.

The equations above and the commutativity of addition of real numbers show that α + β = β + α. ∎

1.5. Exercise 1.A.5: Associativity of Addition of Complex Numbers

Show that (α+ β) + λ = α+ (β + λ) for all α, β, λ ∈ C.

Proof. Suppose α = a + bi, β = c + di and λ = e + fi, where a, b, c, d, e, f ∈ R. Then the definition of addition of complex numbers shows that

(α+ β) + λ = ((a+ c) + (b+ d)i) + e+ fi

= ((a+ c) + e) + ((b+ d) + f)i

and

α+ (β + λ) = a+ bi+ ((c+ e) + (d+ f)i)

= (a+ (c+ e)) + (b+ (d+ f))i.

The equations above and the associativity of addition of real numbers show that (α + β) + λ = α + (β + λ). ∎

1.6. Exercise 1.A.6: Associativity of Multiplication of Complex Numbers

Show that (αβ)λ = α(βλ) for all α, β, λ ∈ C.

Proof. Suppose α = a + bi, β = c + di and λ = e + fi, where a, b, c, d, e, f ∈ R. Then the definition of multiplication of complex numbers shows that

(αβ)λ = ((ac− bd) + (ad+ bc)i)(e+ fi)

= ((ac− bd)e− (ad+ bc)f) + ((ac− bd)f + (ad+ bc)e)i

= (ace− bde− adf − bcf) + (acf − bdf + ade+ bce)i


and

α(βλ) = (a+ bi)((ce− df) + (cf + de)i)

= (a(ce− df)− b(cf + de)) + (a(cf + de) + b(ce− df))i

= (ace− adf − bcf − bde) + (acf + ade+ bce− bdf)i.

The equations above and the commutativity of addition of real numbers show that (αβ)λ = α(βλ). ∎

1.7. Exercise 1.A.7: Existence and Uniqueness of Additive Inverse

Show that for every α ∈ C, there exists a unique β ∈ C such that α+ β = 0.

Proof. Suppose α = a + bi, where a, b ∈ R. Then β = −a − bi satisfies α + β = 0. Suppose that there exists another β′ ∈ C such that α + β′ = 0. Then

β = β + (α + β′)

= (β + α) + β′

= 0 + β′

= β′.

The equations above show that β′ = β. ∎

1.8. Exercise 1.A.8: Existence and Uniqueness of Multiplicative Inverse

Show that for every α ∈ C with α ≠ 0, there exists a unique β ∈ C such that αβ = 1.

Proof. The existence of β has been shown in Exercise 1.A.1. Suppose that there exists another β′ ∈ C such that αβ′ = 1. Then

β = β(αβ′)

= (βα)β′

= 1β′

= β′.

The equations above show that β′ = β. ∎

1.9. Exercise 1.A.9: The Distributive Property of Complex Numbers

Show that λ(α+ β) = λα+ λβ for all λ, α, β ∈ C.

Proof. Suppose α = a + bi, β = c + di and λ = e + fi, where a, b, c, d, e, f ∈ R. Then the definition of multiplication and addition of complex numbers shows that

λ(α+ β) = (e+ fi)((a+ c) + (b+ d)i)

= (e(a+ c)− f(b+ d)) + (e(b+ d) + f(a+ c))i

= (ea+ ec− fb− fd) + (eb+ ed+ fa+ fc)i

and

λα+ λβ = (e+ fi)(a+ bi) + (e+ fi)(c+ di)

= ((ea− fb) + (eb+ fa)i) + ((ec− fd) + (ed+ fc)i)

= (ea− fb+ ec− fd) + (eb+ fa+ ed+ fc)i.

The equations above and the commutativity of addition of real numbers show that λ(α + β) = λα + λβ. ∎


1.10. Exercise 1.A.10: Vector Addition and Scalar Multiplication in $\mathbf{R}^4$

Find $x \in \mathbf{R}^4$ such that

(4,−3, 1, 7) + 2x = (5, 9,−6, 8).

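Answer. Subtracting $(4, -3, 1, 7)$ from both sides gives $2x = (1, 12, -7, 1)$, so

$$x = \left(\frac{1}{2},\ 6,\ -\frac{7}{2},\ \frac{1}{2}\right).$$

∎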
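The computation amounts to solving $v + 2x = w$ coordinate by coordinate. A minimal Python sketch with exact fractions (the names `v`, `w`, `x` are just local labels for this illustration):

```python
from fractions import Fraction

v = (4, -3, 1, 7)
w = (5, 9, -6, 8)

# v + 2x = w  is equivalent to  x = (w - v) / 2, computed coordinate by coordinate.
x = tuple(Fraction(wi - vi, 2) for vi, wi in zip(v, w))
print(*x)                                                # 1/2 6 -7/2 1/2
print(tuple(vi + 2 * xi for vi, xi in zip(v, x)) == w)   # True
```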

1.11. Exercise 1.A.11: A Scalar Multiplication Example in $\mathbf{C}^3$

Explain why there does not exist λ ∈ C such that

λ(2− 3i, 5 + 4i,−6 + 7i) = (12− 5i, 7 + 22i,−32− 9i).

Proof. If such a λ exists, then the definition of scalar multiplication in $\mathbf{C}^3$ shows that

$$\lambda(2 - 3i) = 12 - 5i.$$

Thus

$$\lambda = \frac{12 - 5i}{2 - 3i} = 3 + 2i.$$

But

$$
\begin{aligned}
\lambda(2 - 3i,\ 5 + 4i,\ -6 + 7i) &= (3 + 2i)(2 - 3i,\ 5 + 4i,\ -6 + 7i) \\
&= (12 - 5i,\ 7 + 22i,\ -32 + 9i) \\
&\neq (12 - 5i,\ 7 + 22i,\ -32 - 9i).
\end{aligned}
$$

Therefore λ does not exist. ∎
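The coordinate products above can be reproduced with Python's built-in complex numbers. In this sketch λ is taken as $3 + 2i$ (the value forced by the first coordinate) and confirmed by multiplication; with small integer parts these floating-point products are exact.

```python
v = (2 - 3j, 5 + 4j, -6 + 7j)
target = (12 - 5j, 7 + 22j, -32 - 9j)

# λ = (12 - 5i)/(2 - 3i) = 3 + 2i is forced by the first coordinate; confirm it by
# multiplication (exact here, since all real and imaginary parts are small integers).
lam = 3 + 2j
print(lam * v[0] == target[0])    # True

scaled = tuple(lam * c for c in v)
print(scaled[2])                  # (-32+9j), whereas the target has -32-9j
print(scaled == target)           # False
```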

1.12. Exercise 1.A.12: Associativity of Vector Addition in $\mathbf{F}^n$

Show that $(x + y) + z = x + (y + z)$ for all $x, y, z \in \mathbf{F}^n$.

Proof. Suppose $x = (x_1, \ldots, x_n)$, $y = (y_1, \ldots, y_n)$ and $z = (z_1, \ldots, z_n)$, where $x_1, \ldots, x_n, y_1, \ldots, y_n, z_1, \ldots, z_n \in \mathbf{F}$. Then the definition of addition in $\mathbf{F}^n$ shows that

$$(x + y) + z = (x_1 + y_1, \ldots, x_n + y_n) + (z_1, \ldots, z_n) = \big((x_1 + y_1) + z_1, \ldots, (x_n + y_n) + z_n\big)$$

and

$$x + (y + z) = (x_1, \ldots, x_n) + (y_1 + z_1, \ldots, y_n + z_n) = \big(x_1 + (y_1 + z_1), \ldots, x_n + (y_n + z_n)\big).$$

The equations above and the associativity of addition in $\mathbf{F}$ show that $(x + y) + z = x + (y + z)$. ∎


1.13. Exercise 1.A.13: Associativity of Scalar Multiplication in $\mathbf{F}^n$

Show that $(ab)x = a(bx)$ for all $x \in \mathbf{F}^n$ and all $a, b \in \mathbf{F}$.

Proof. Suppose $x = (x_1, \ldots, x_n)$, where $x_1, \ldots, x_n \in \mathbf{F}$. Then the definition of scalar multiplication in $\mathbf{F}^n$ shows that

$$(ab)x = \big((ab)x_1, \ldots, (ab)x_n\big)$$

and

$$a(bx) = a(bx_1, \ldots, bx_n) = \big(a(bx_1), \ldots, a(bx_n)\big).$$

The equations above and the associativity of multiplication in $\mathbf{F}$ show that $(ab)x = a(bx)$. ∎

1.14. Exercise 1.A.14: Scalar Multiplication by 1 in $\mathbf{F}^n$

Show that $1x = x$ for all $x \in \mathbf{F}^n$.

Proof. Suppose $x = (x_1, \ldots, x_n)$, where $x_1, \ldots, x_n \in \mathbf{F}$. Then the definition of scalar multiplication in $\mathbf{F}^n$ and the fact that 1 is the multiplicative identity of $\mathbf{F}$ show that

$$1x = (1x_1, \ldots, 1x_n) = (x_1, \ldots, x_n) = x.$$

∎

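1.15. Exercise 1.A.15: Left-distributive Property of Operations in $\mathbf{F}^n$

Show that $\lambda(x + y) = \lambda x + \lambda y$ for all $\lambda \in \mathbf{F}$ and $x, y \in \mathbf{F}^n$.

Proof. Suppose $x = (x_1, \ldots, x_n)$ and $y = (y_1, \ldots, y_n)$, where $x_1, \ldots, x_n \in \mathbf{F}$ and $y_1, \ldots, y_n \in \mathbf{F}$. Then the definition of addition and scalar multiplication in $\mathbf{F}^n$ shows that

$$\lambda(x + y) = \lambda(x_1 + y_1, \ldots, x_n + y_n) = \big(\lambda(x_1 + y_1), \ldots, \lambda(x_n + y_n)\big)$$

and

$$\lambda x + \lambda y = (\lambda x_1, \ldots, \lambda x_n) + (\lambda y_1, \ldots, \lambda y_n) = (\lambda x_1 + \lambda y_1, \ldots, \lambda x_n + \lambda y_n).$$

The equations above and the distributive property of $\mathbf{F}$ show that $\lambda(x + y) = \lambda x + \lambda y$. ∎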

1.16. Exercise 1.A.16: Right-distributive Property of Operations in $\mathbf{F}^n$

Show that $(a + b)x = ax + bx$ for all $a, b \in \mathbf{F}$ and all $x \in \mathbf{F}^n$.

Proof. Suppose $x = (x_1, \ldots, x_n)$, where $x_1, \ldots, x_n \in \mathbf{F}$. Then the definition of scalar multiplication and addition in $\mathbf{F}^n$ shows that

$$(a + b)x = \big((a + b)x_1, \ldots, (a + b)x_n\big)$$

and

$$ax + bx = (ax_1, \ldots, ax_n) + (bx_1, \ldots, bx_n) = (ax_1 + bx_1, \ldots, ax_n + bx_n).$$

The equations above and the distributive property of $\mathbf{F}$ show that $(a + b)x = ax + bx$. ∎

CHAPTER 2

Definition of Vector Space

2.1. Exercise 1.B.1: −(−v) = v in Vector Spaces

Prove that −(−v) = v for every v ∈ V .

Proof.

−(−v) = −(−v) + 0

= −(−v) + ((−v) + v)

= (−(−v) + (−v)) + v

= 0 + v

= v

∎

2.2. Exercise 1.B.2: av = 0 Implies a = 0 or v = 0 in Vector Spaces

Suppose a ∈ F, v ∈ V , and av = 0. Prove that a = 0 or v = 0.

Proof. For the purpose of arriving at a contradiction, assume that both a ≠ 0 and v ≠ 0. Then

$$v = 1v = \left(\frac{1}{a}\,a\right)v = \frac{1}{a}(av) = \frac{1}{a}\,0 = 0,$$

which contradicts v ≠ 0. Therefore a = 0 or v = 0. ∎

2.3. Exercise 1.B.3: Solutions of a Vector Equation

Suppose v, w ∈ V . Explain why there exists a unique x ∈ V such that v + 3x = w.

Proof. We claim that the following x ∈ V satisfies v + 3x = w:

$$x = \frac{1}{3}(w - v).$$

We can verify it by showing that

$$v + 3x = v + 3 \cdot \frac{1}{3}(w - v) = v + w - v = w.$$

Suppose that there exists another x′ ∈ V that also satisfies v + 3x′ = w. Then

$$0 = w - w = (v + 3x) - (v + 3x') = v + 3x - v - 3x' = 3x - 3x'.$$


Thus

$$x' = x' + \frac{1}{3} \cdot 0 = x' + \frac{1}{3}(3x - 3x') = x' + x - x' = x.$$

∎

2.4. Exercise 1.B.4: The Empty Set is NOT a Vector Space

The empty set is not a vector space. The empty set fails to satisfy only one of the requirements listed in 1.19. Which one?

Answer. The empty set fails to satisfy the “Additive Identity” requirement, because there does not exist an element 0 ∈ ∅. ∎

2.5. Exercise 1.B.5: An Alternative Definition of Vector Space

Show that in the definition of a vector space (1.19), the additive inverse condition can be replaced with the condition that

$$0v = 0 \quad \text{for all } v \in V.$$

Here the 0 on the left side is the number 0, and the 0 on the right side is the additive identity of V.

Proof. We will show that the new condition is equivalent to the original condition, which states that for every v ∈ V, there exists w ∈ V such that v + w = 0. That the old condition implies the new condition has been shown in Theorem 1.29 “The number 0 times a vector”. Therefore it suffices to show that the new condition implies the old condition.

Suppose that the new condition holds. Pick v ∈ V, and let w = (−1)v. It follows that

v + w = 1v + (−1)v

= (1− 1)v

= 0v

= 0.

Therefore the old condition holds. ∎

2.6. Exercise 1.B.6: A Definition of R ∪ {∞} ∪ {−∞}

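Let ∞ and −∞ denote two distinct objects, neither of which is in R. Define an addition and scalar multiplication on R ∪ {∞} ∪ {−∞} as you could guess from the notation. Specifically, the sum and product of two real numbers is as usual, and for t ∈ R define

$$
t\infty = \begin{cases} -\infty & \text{if } t < 0, \\ 0 & \text{if } t = 0, \\ \infty & \text{if } t > 0, \end{cases}
\qquad
t(-\infty) = \begin{cases} \infty & \text{if } t < 0, \\ 0 & \text{if } t = 0, \\ -\infty & \text{if } t > 0, \end{cases}
$$

$$t + \infty = \infty + t = \infty, \qquad t + (-\infty) = (-\infty) + t = -\infty,$$

$$\infty + \infty = \infty, \qquad (-\infty) + (-\infty) = -\infty, \qquad \infty + (-\infty) = 0.$$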

Is R ∪ {∞} ∪ {−∞} a vector space over R? Explain.


Answer. No, because the associativity of vector addition fails:

$$(1024 + \infty) + (-\infty) = \infty + (-\infty) = 0,$$

but

$$1024 + \big(\infty + (-\infty)\big) = 1024 + 0 = 1024.$$

∎
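The failure of associativity can be reproduced by encoding the addition rules of this exercise directly. The Python sketch below is only an illustration: the string labels `INF` and `NEG_INF` for the two extra objects are hypothetical, and it assumes $(-\infty) + \infty = 0$ as well, since the exercise states only $\infty + (-\infty) = 0$. Python's own `float("inf")` is deliberately not used, because it gives `nan` for `inf + -inf`.

```python
INF, NEG_INF = "inf", "-inf"   # hypothetical labels for the two extra objects ∞ and −∞
INFINITIES = (INF, NEG_INF)

def add(x, y):
    """Addition on R ∪ {∞} ∪ {−∞} following the rules of Exercise 1.B.6."""
    if x not in INFINITIES and y not in INFINITIES:
        return x + y                     # two real numbers: usual sum
    if x in INFINITIES and y in INFINITIES:
        return x if x == y else 0        # ∞+∞=∞, (−∞)+(−∞)=−∞, ∞+(−∞)=0 (assumed in both orders)
    return x if x in INFINITIES else y   # t+∞=∞+t=∞ and t+(−∞)=(−∞)+t=−∞

left = add(add(1024, INF), NEG_INF)    # (1024 + ∞) + (−∞)
right = add(1024, add(INF, NEG_INF))   # 1024 + (∞ + (−∞))
print(left, right)                     # 0 1024
```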

CHAPTER 3

Subspaces

3.1. Exercise 1.C.1: Examples and Counterexamples of Subspaces of $\mathbf{F}^3$

For each of the following subsets of $\mathbf{F}^3$, determine whether it is a subspace of $\mathbf{F}^3$:

(a) $\{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 + 2x_2 + 3x_3 = 0\}$;
(b) $\{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 + 2x_2 + 3x_3 = 4\}$;
(c) $\{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 x_2 x_3 = 0\}$;
(d) $\{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 = 5x_3\}$.

Answer. (a) Denote $A = \{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 + 2x_2 + 3x_3 = 0\}$. We claim that $A$ is a subspace of $\mathbf{F}^3$ with the following verifications.

(i) $(0, 0, 0) \in A$ because $0 + 2 \cdot 0 + 3 \cdot 0 = 0$.

(ii) Pick $u, w \in A$, where $u = (u_1, u_2, u_3)$, $w = (w_1, w_2, w_3)$. It follows that $u + w = (u_1 + w_1, u_2 + w_2, u_3 + w_3)$. We can show that $u + w \in A$ by the following equations:

$$(u_1 + w_1) + 2(u_2 + w_2) + 3(u_3 + w_3) = (u_1 + 2u_2 + 3u_3) + (w_1 + 2w_2 + 3w_3) = 0 + 0 = 0.$$

(iii) Pick $a \in \mathbf{F}$ and $u \in A$, where $u = (u_1, u_2, u_3)$. It follows that $au = (au_1, au_2, au_3)$. We can show that $au \in A$ by the following equations:

$$(au_1) + 2(au_2) + 3(au_3) = a(u_1 + 2u_2 + 3u_3) = a0 = 0.$$

(b) Denote $B = \{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 + 2x_2 + 3x_3 = 4\}$. $B$ is not a subspace of $\mathbf{F}^3$, because $(0, 0, 0) \notin B$ (as $0 + 2 \cdot 0 + 3 \cdot 0 \neq 4$).

(c) Denote $C = \{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 x_2 x_3 = 0\}$. $C$ is not a subspace of $\mathbf{F}^3$: setting $u = (0, 1, 1) \in C$ and $w = (1, 1, 0) \in C$, we find that $u + w = (1, 2, 1) \notin C$.

(d) Denote $D = \{(x_1, x_2, x_3) \in \mathbf{F}^3 : x_1 = 5x_3\}$. We claim that $D$ is a subspace of $\mathbf{F}^3$ with the following verifications.

(i) $(0, 0, 0) \in D$ because $0 = 5 \cdot 0$.

(ii) Pick $u, w \in D$, where $u = (u_1, u_2, u_3)$, $w = (w_1, w_2, w_3)$. It follows that $u + w = (u_1 + w_1, u_2 + w_2, u_3 + w_3)$. We can show that $u + w \in D$ by the following equations:

$$u_1 + w_1 = 5u_3 + 5w_3 = 5(u_3 + w_3).$$

(iii) Pick $a \in \mathbf{F}$ and $u \in D$, where $u = (u_1, u_2, u_3)$. It follows that $au = (au_1, au_2, au_3)$. We can show that $au \in D$ by the following equations:

$$au_1 = a(5u_3) = 5(au_3).$$

∎
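The membership tests for parts (a) and (c) are easy to express in code. The Python sketch below (helper names `vec_add`, `in_A`, `in_C` are hypothetical) replays the counterexample from part (c) and spot-checks closure under addition for part (a) on one pair of vectors; the spot check is an illustration, not a substitute for the proof.

```python
from math import prod

def vec_add(u, w):
    """Coordinate-wise addition in F^3."""
    return tuple(ui + wi for ui, wi in zip(u, w))

def in_A(x):
    """Membership test for part (a): x1 + 2 x2 + 3 x3 = 0."""
    return x[0] + 2 * x[1] + 3 * x[2] == 0

def in_C(x):
    """Membership test for part (c): x1 x2 x3 = 0."""
    return prod(x) == 0

# Part (c): the counterexample u = (0, 1, 1), w = (1, 1, 0).
u, w = (0, 1, 1), (1, 1, 0)
print(in_C(u), in_C(w), in_C(vec_add(u, w)))    # True True False

# Part (a): one spot check (not a proof) of closure under addition.
p, q = (1, 1, -1), (3, 0, -1)
print(in_A(p), in_A(q), in_A(vec_add(p, q)))    # True True True
```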


3.2. Exercise 1.C.2: Supplying Proofs for Examples of Vector Spaces

Verify all the assertions in Example 1.35.

(a) If $b \in \mathbf{F}$, then

$$\{(x_1, x_2, x_3, x_4) \in \mathbf{F}^4 : x_3 = 5x_4 + b\}$$

is a subspace of $\mathbf{F}^4$ if and only if $b = 0$.

(b) The set of continuous real-valued functions on the interval $[0, 1]$ is a subspace of $\mathbf{R}^{[0,1]}$.

(c) The set of differentiable real-valued functions on $\mathbf{R}$ is a subspace of $\mathbf{R}^{\mathbf{R}}$.

(d) The set of differentiable real-valued functions $f$ on the interval $(0, 3)$ such that $f'(2) = b$ is a subspace of $\mathbf{R}^{(0,3)}$ if and only if $b = 0$.

(e) The set of all sequences of complex numbers with limit 0 is a subspace of $\mathbf{C}^\infty$.

Answer. (a) Denote $A = \{(x_1, x_2, x_3, x_4) \in \mathbf{F}^4 : x_3 = 5x_4 + b\}$. If $b = 0$, then $A$ is a subspace by a verification similar to Exercise 1.C.1 (d). On the other hand, if $A$ is a subspace, then $0 \in A$. It follows that

$$0 = 5 \cdot 0 + b = b.$$

(b) Denote $B = \{f \in \mathbf{R}^{[0,1]} : f \text{ is continuous}\}$. We can verify that $0 \in B$ because the constant function $f = 0$ is continuous.

Suppose that $u, w \in B$. Then $u + w \in B$, because the sum of two continuous complex functions (real-valued functions being a special case) is continuous by Theorem 4.9 of [Rud76].

Suppose that $a \in \mathbf{R}$ and $u \in B$. Note that the constant function $f = a$ is continuous. Then $au \in B$, because the product of two continuous complex functions is continuous by Theorem 4.9 of [Rud76].

(c) Denote $C = \{f \in \mathbf{R}^{\mathbf{R}} : f \text{ is differentiable}\}$. We can verify that $0 \in C$ because the constant function $f = 0$ is differentiable.

Suppose that $u, w \in C$. Then $u + w \in C$, because the sum of two differentiable real functions is differentiable by Theorem 5.3 of [Rud76].

Suppose that $a \in \mathbf{R}$ and $u \in C$. Note that the constant function $a(x) = a$ is differentiable. Then $au \in C$, because the product of two differentiable real functions is differentiable by Theorem 5.3 of [Rud76].

(d) Denote $D = \{f \in \mathbf{R}^{(0,3)} : f \text{ is differentiable and } f'(2) = b\}$. Suppose that $b = 0$. We claim that $D$ is a subspace of $\mathbf{R}^{(0,3)}$ with the following verifications.

(i) $0 \in D$ because the constant function $0(x) = 0$ is differentiable and $0'(2) = 0$.

(ii) Suppose that $u, w \in D$. Then $u + w$ is differentiable by Theorem 5.3 of [Rud76]. Also by Theorem 5.3 of [Rud76], $(u + w)'(2) = u'(2) + w'(2) = 0 + 0 = 0$. Thus $u + w \in D$.

(iii) Suppose that $a \in \mathbf{R}$ and $u \in D$. Note that the constant function $a(x) = a$ is differentiable, so $au$ is differentiable by Theorem 5.3 of [Rud76]. Also by Theorem 5.3 of [Rud76], $(au)'(2) = a'(2)u(2) + a(2)u'(2) = 0 \cdot u(2) + a \cdot 0 = 0$. Thus $au \in D$.

On the other hand, suppose that $D$ is a subspace. Then $0 \in D$, where $0(x) = 0$. Since $0'(x) = 0$ for all $x$, we get $b = 0'(2) = 0$.

(e) Denote $E = \{(z_n) \in \mathbf{C}^\infty : \lim_{n \to \infty} z_n = 0\}$. We can verify that $(0_n) \in E$, where $(0_n) = (0, 0, 0, \ldots)$, because

$$\lim_{n \to \infty} 0_n = 0.$$

Suppose that $(u_n), (w_n) \in E$. Then $(u_n + w_n) \in E$, because $\lim_{n \to \infty}(u_n + w_n) = \lim_{n \to \infty} u_n + \lim_{n \to \infty} w_n = 0 + 0 = 0$ by Theorem 3.3 of [Rud76].


Suppose that $a \in \mathbf{C}$ and $(u_n) \in E$. Then $(au_n) \in E$, because $\lim_{n \to \infty} au_n = a \lim_{n \to \infty} u_n = a0 = 0$ by Theorem 3.3 of [Rud76].

∎

3.3. Exercise 1.C.3: A Subspace of $\mathbf{R}^{(-4,4)}$

Show that the set of differentiable real-valued functions $f$ on the interval $(-4, 4)$ such that $f'(-1) = 3f(2)$ is a subspace of $\mathbf{R}^{(-4,4)}$.

Proof. Denote $X = \{f \in \mathbf{R}^{(-4,4)} : f \text{ is differentiable and } f'(-1) = 3f(2)\}$.

(1) We claim that $0 \in X$, where $0(x) = 0$. Note that $0$ is differentiable and $0'(-1) = 0$, $0(2) = 0$; thus $0'(-1) = 3 \cdot 0(2)$.

(2) Pick $f, g \in X$. That $f + g$ is differentiable follows from Theorem 5.3 of [Rud76]. Also by Theorem 5.3 of [Rud76], $(f + g)'(-1) = f'(-1) + g'(-1) = 3f(2) + 3g(2) = 3\big(f(2) + g(2)\big) = 3\big((f + g)(2)\big)$. Thus $f + g \in X$.

(3) Pick $f \in X$ and $a \in \mathbf{R}$. That $af$ is differentiable follows from Theorem 5.3 of [Rud76] by considering $a$ as a constant function $a(x) = a$. Also by Theorem 5.3 of [Rud76], $(af)'(-1) = a f'(-1) = a\big(3f(2)\big) = 3\big(af(2)\big) = 3\big((af)(2)\big)$. Thus $af \in X$.

∎

3.4. Exercise 1.C.4: A Subspace of $\mathbf{R}^{[0,1]}$

Suppose $b \in \mathbf{R}$. Show that the set of continuous real-valued functions $f$ on the interval $[0, 1]$ such that $\int_0^1 f = b$ is a subspace of $\mathbf{R}^{[0,1]}$ if and only if $b = 0$.

Proof. Denote $X = \{f \in \mathbf{R}^{[0,1]} : f \text{ is continuous and } \int_0^1 f = b\}$.

Suppose that $b = 0$. We now verify that $X$ is a subspace.

(1) We can verify that $0 \in X$ because $0(x) = 0$ is continuous and $\int_0^1 0(x)\,dx = 0$.

(2) Pick $f, g \in X$. It follows that $f + g$ is continuous by Theorem 4.9 of [Rud76] and Riemann-integrable by Theorem 6.8 of [Rud76]. Further, by Theorem 6.12 of [Rud76], we have

$$\int_0^1 \big(f(x) + g(x)\big)\,dx = \int_0^1 f(x)\,dx + \int_0^1 g(x)\,dx = 0 + 0 = 0.$$

Thus $f + g \in X$.

(3) Pick $a \in \mathbf{R}$ and $f \in X$. By Theorem 4.9 of [Rud76], $af$ is continuous. Thus $af$ is Riemann-integrable by Theorem 6.8 of [Rud76]. Further, by Theorem 6.12 of [Rud76], we have

$$\int_0^1 af(x)\,dx = a\int_0^1 f(x)\,dx = a \cdot 0 = 0.$$

Thus $af \in X$.

On the other hand, suppose that $X$ is a subspace. Then $0 \in X$, which implies that

$$b = \int_0^1 0(x)\,dx = 0.$$

∎

3.5. Exercise 1.C.5: $\mathbf{R}^2$ is NOT a Subspace of $\mathbf{C}^2$

Is $\mathbf{R}^2$ a subspace of the complex vector space $\mathbf{C}^2$?

Answer. No, because multiplying the vector $(1, 1) \in \mathbf{R}^2$ by the scalar $i$ gives $(i, i)$, which is not in $\mathbf{R}^2$. ∎

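3.6. Exercise 1.C.6: Comparing Subspaces of $\mathbf{R}^3$ and $\mathbf{C}^3$

(i) Is $\{(a, b, c) \in \mathbf{R}^3 : a^3 = b^3\}$ a subspace of $\mathbf{R}^3$?
(ii) Is $\{(a, b, c) \in \mathbf{C}^3 : a^3 = b^3\}$ a subspace of $\mathbf{C}^3$?

Answer. (i) Yes. Consider the real function $f(x) = x^3$, which is a one-to-one correspondence from $\mathbf{R}$ onto $\mathbf{R}$. Therefore the condition $a^3 = b^3$ is equivalent to $a = b$, so the set equals $\{(a, a, c) : a, c \in \mathbf{R}\}$, which contains 0 and is closed under addition and scalar multiplication, hence is a subspace of $\mathbf{R}^3$.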


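(ii) No. Denote $X = \{(a, b, c) \in \mathbf{C}^3 : a^3 = b^3\}$. Set $u = (2, 2e^{2\pi i/3}, 0)$ and $v = (e^{2\pi i/3}, 1, 0)$; then $u + v = (2 + e^{2\pi i/3}, 2e^{2\pi i/3} + 1, 0)$. Note that $u \in X$ and $v \in X$ (because $(e^{2\pi i/3})^3 = 1$), but $u + v \notin X$ because

$$\left(2 + e^{2\pi i/3}\right)^3 = 3\sqrt{3}\,i, \qquad \left(2e^{2\pi i/3} + 1\right)^3 = -3\sqrt{3}\,i.$$

∎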
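The two cubes can be checked numerically. This Python sketch is a floating-point illustration (hence the tolerance in the hypothetical helper `in_X`); it confirms that $u$ and $v$ lie in $X$ while $u + v$ does not.

```python
import cmath
import math

omega = cmath.exp(2j * math.pi / 3)   # e^{2πi/3}

def in_X(p, tol=1e-9):
    """Approximate membership in X = {(a, b, c) in C^3 : a^3 = b^3} (floating point, hence a tolerance)."""
    a, b, _ = p
    return abs(a ** 3 - b ** 3) < tol

u = (2, 2 * omega, 0)
v = (omega, 1, 0)
s = tuple(ui + vi for ui, vi in zip(u, v))   # u + v

print(in_X(u), in_X(v), in_X(s))                         # True True False
print(cmath.isclose(s[0] ** 3, 3 * math.sqrt(3) * 1j))   # True
print(cmath.isclose(s[1] ** 3, -3 * math.sqrt(3) * 1j))  # True
```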

3.7. Exercise 1.C.7: A Quasi-subspace Unclosed under Scalar Multiplication

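Give an example of a nonempty subset $U$ of $\mathbf{R}^2$ such that $U$ is closed under addition and under taking additive inverses (meaning $-u \in U$ whenever $u \in U$), but $U$ is not a subspace of $\mathbf{R}^2$.

Answer.

$$U = \mathbf{Z}^2 = \{(x, y) : x, y \in \mathbf{Z}\}.$$

The sum of two points with integer coordinates has integer coordinates, and so does the additive inverse of such a point. However, $U$ is not closed under scalar multiplication: $(1, 0) \in U$ but $\frac{1}{2}(1, 0) = (\frac{1}{2}, 0) \notin U$.

∎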

3.8. Exercise 1.C.8: A Quasi-subspace Unclosed under Vector Addition

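Give an example of a nonempty subset $U$ of $\mathbf{R}^2$ such that $U$ is closed under scalar multiplication, but $U$ is not a subspace of $\mathbf{R}^2$.

Answer.

$$U = \{(0, y) : y \in \mathbf{R}\} \cup \{(x, 0) : x \in \mathbf{R}\}.$$

Multiplying a point on either coordinate axis by a scalar keeps it on that axis, so $U$ is closed under scalar multiplication. However, $(1, 0) + (0, 1) = (1, 1) \notin U$, so $U$ is not closed under addition.

∎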
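The union of the two coordinate axes can be explored with a one-line membership test. In this Python sketch the names `on_axes` and `scale` are hypothetical, and the scalar check runs over a few sample scalars only, so it illustrates rather than proves closure.

```python
def on_axes(p):
    """Membership in U = {(0, y)} ∪ {(x, 0)}: at least one coordinate is 0."""
    x, y = p
    return x == 0 or y == 0

def scale(a, p):
    """Scalar multiplication in R^2."""
    return (a * p[0], a * p[1])

samples = [(0, 7), (4, 0), (0, 0)]
# Scalar multiples of points of U stay in U (a spot check over a few scalars) ...
print(all(on_axes(scale(a, p)) for a in (-2, 0, 0.5, 3) for p in samples))  # True
# ... but (1, 0) + (0, 1) = (1, 1) is not in U.
print(on_axes((1, 0)), on_axes((0, 1)), on_axes((1, 1)))                    # True True False
```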

3.9. Exercise 1.C.9: Periodic Functions is NOT a Subspace of $\mathbf{R}^{\mathbf{R}}$

A function $f : \mathbf{R} \to \mathbf{R}$ is called periodic if there exists a positive number $p$ such that $f(x) = f(x + p)$ for all $x \in \mathbf{R}$. Is the set of periodic functions from $\mathbf{R}$ to $\mathbf{R}$ a subspace of $\mathbf{R}^{\mathbf{R}}$? Explain.

Lemma 3.1. f(x) = cos(πx) + cos(x) is not periodic.

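Proof. Suppose that $f(x) = \cos(\pi x) + \cos(x)$ is periodic. Then there exists $\theta \in \mathbf{R}$ such that $\theta > 0$ and $f(x + \theta) = f(x)$ for all $x \in \mathbf{R}$. Picking $x = 0$, we have

$$f(\theta + 0) = \cos(\pi\theta) + \cos(\theta) = f(0) = \cos(0) + \cos(0) = 2. \tag{3.1}$$

Note that $-1 \leq \cos(\pi\theta) \leq 1$ and $-1 \leq \cos(\theta) \leq 1$. Hence, if $\cos(\pi\theta) < 1$ or $\cos(\theta) < 1$, then $\cos(\pi\theta) + \cos(\theta) < 2$. Thus (3.1) implies that

$$\begin{cases} \cos(\pi\theta) = 1, \\ \cos(\theta) = 1. \end{cases}$$

This further implies that there exist some $m, n \in \mathbf{Z}$ such that

$$\begin{cases} \pi\theta = 2m\pi, \\ \theta = 2n\pi. \end{cases}$$

Since $\theta \neq 0$ (so $n \neq 0$), dividing the first equation by the second yields

$$\pi = \frac{m}{n}.$$

This contradicts the fact that $\pi$ is irrational. ∎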


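Main Answer. No. The functions $\cos(\pi x)$ and $\cos(x)$ are periodic (with periods 2 and $2\pi$, respectively), but by Lemma 3.1 their sum is not periodic. Hence the set of periodic functions is not closed under addition. ∎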

3.10. Exercise 1.C.10: The Intersection of 2 Subspaces is a Subspace

Suppose $U_1$ and $U_2$ are subspaces of $V$. Prove that the intersection $U_1 \cap U_2$ is a subspace of $V$.

Proof. We prove this by verifying the three conditions for $U_1 \cap U_2$ being a subspace.

(1) The premise that $U_1$ is a subspace implies $0 \in U_1$. The premise that $U_2$ is a subspace implies $0 \in U_2$. Therefore $0 \in U_1 \cap U_2$.

(2) Pick $u, w \in U_1 \cap U_2$. The premise that $U_1$ is a subspace implies $u + w \in U_1$. The premise that $U_2$ is a subspace implies $u + w \in U_2$. Therefore $u + w \in U_1 \cap U_2$.

(3) Pick $a \in \mathbf{F}$ and $u \in U_1 \cap U_2$. The premise that $U_1$ is a subspace implies $au \in U_1$. The premise that $U_2$ is a subspace implies $au \in U_2$. Therefore $au \in U_1 \cap U_2$.

∎

3.11. Exercise 1.C.11: The Intersection of Subspaces is a Subspace

Prove that the intersection of every collection of subspaces of V is a subspace of V .

Proof. Let $I$ be an index set and $(S_i)_{i \in I}$ a family of sets indexed by $I$. Suppose that $S_i$ is a subspace of $V$ for all $i \in I$. We prove this by verifying the three conditions for $\bigcap_{i \in I} S_i$ being a subspace.

(1) For all $i \in I$, the premise that $S_i$ is a subspace implies that $0 \in S_i$. Therefore $0 \in \bigcap_{i \in I} S_i$.

(2) Pick $u, w \in \bigcap_{i \in I} S_i$. For all $i \in I$, the premise that $S_i$ is a subspace implies $u + w \in S_i$. Therefore $u + w \in \bigcap_{i \in I} S_i$.

(3) Pick $a \in \mathbf{F}$ and $u \in \bigcap_{i \in I} S_i$. For all $i \in I$, the premise that $S_i$ is a subspace implies that $au \in S_i$. Therefore $au \in \bigcap_{i \in I} S_i$.

∎

3.12. Exercise 1.C.12: When the Union of 2 Subspaces is a Subspace

Prove that the union of two subspaces of $V$ is a subspace of $V$ if and only if one of the subspaces is contained in the other.

Proof. Pick two subspaces $V_1, V_2$ of $V$.

(1) If $V_1 \subset V_2$, then $V_1 \cup V_2 = V_2$. Since $V_2$ is a subspace, it follows that $V_1 \cup V_2$ is a subspace. The case $V_2 \subset V_1$ is symmetric.

(2) Suppose that $V_1 \cup V_2$ is a subspace. To prove by contradiction, suppose that $V_1 \not\subset V_2$ and $V_2 \not\subset V_1$. Thus there exists $v_1 \in V_1$ such that $v_1 \notin V_2$, and there exists $v_2 \in V_2$ such that $v_2 \notin V_1$.

We claim that $v_1 + v_2 \notin V_1$. Indeed, if $v_1 + v_2$ were in $V_1$, the premise that $V_1$ is a subspace would imply that $v_2 = (v_1 + v_2) - v_1$ is also in $V_1$.

We claim that $v_1 + v_2 \notin V_2$. Indeed, if $v_1 + v_2$ were in $V_2$, the premise that $V_2$ is a subspace would imply that $v_1 = (v_1 + v_2) - v_2$ is also in $V_2$.

Thus $v_1 + v_2 \notin V_1 \cup V_2$, contradicting the premise that $V_1 \cup V_2$ is a subspace.

∎


3.13. Exercise 1.C.13: When the Union of 3 Subspaces is a Subspace

Prove that the union of three subspaces of V is a subspace of V if and only if one of thesubspaces contains the other two.

Proof. Pick three subspaces V1, V2, V3 of V .

(1) If V1 ⊂ V3 and V2 ⊂ V3, then V1 ∪ V2 ∪ V3 = V3. Since V3 is a subspace, it follows thatV1 ∪ V2 ∪ V3 is a subspace.

(2) Suppose that V1 ∪ V2 ∪ V3 is a subspace of V .If V1 ⊂ V2, then V1 ∪ V2 ∪ V3 becomes V2 ∪ V3. By Exercise 1.C.12, it follows that

either V2 ⊂ V3 or V3 ⊂ V2. Thus either V3 or V2 is the subspace that contains the othertwo.

If V2 ⊂ V1, then V1 ∪ V2 ∪ V3 becomes V1 ∪ V3. By Exercise 1.C.12, it follows thateither V1 ⊂ V3 or V3 ⊂ V1. Thus either V3 or V1 is the subspace that contains the othertwo.

If V1 6⊂ V2 and V2 6⊂ V1, then there exists u ∈ V1 and w ∈ V2 such that u /∈ V2 andw /∈ V1.(i) We claim that V1 \ (V1 ∩V2) ⊂ V3. Pick x ∈ V1 \ (V1 ∩V2) ⊂ V3. The premise that

V1∪V2∪V3 is a subspace implies that x+w ∈ V1∪V2∪V3 and 2x+w ∈ V1∪V2∪V3.If x+w was in V1, then w = (x+w)−x would be in V1 which is a contradiction. If2x+w was in V1, then w = (2x+w)− 2x would be in V1 which is a contradiction.If x+w was in V2, then x = (x+w)−w would be in V2 which is a contradiction. If2x+w was in V2, then 2x = (2x+w)−w would be in V2 which is a contradiction.Therefore x+w ∈ V3 and 2x+w ∈ V3. And it follows that x = (2x+w)− (x+w)is in V3.

(ii) We claim that V2 \ (V1 ∩ V2) ⊂ V3. Pick y ∈ V2 \ (V1 ∩ V2) ⊂ V3. The premise thatV1∪V2∪V3 is a subspace implies that u+y ∈ V1∪V2∪V3 and u+2y ∈ V1∪V2∪V3.If u+ y was in V1, then y = (u+ y)−u would be in V1 which is a contradiction. Ifu+ 2y was in V1, then 2y = (u+ 2y)− u would be in V1 which is a contradiction.If u+ y was in V2, then u = (u+ y)− y would be in V2 which is a contradiction. Ifu+ 2y was in V2, then u = (u+ 2y)− 2y would be in V2 which is a contradiction.Therefore u+ y ∈ V3 and u+ 2y ∈ V3. And it follows that y = (u+ 2y)− (u+ y)is in V3.

(iii) We claim that V1 ∩ V2 ⊂ V3. Pick z ∈ V1 ∩ V2. The premise that V1 is a subspace implies that u + z ∈ V1. If u + z ∈ V1 ∩ V2, then u = (u + z) − z ∈ V2, which is a contradiction. Thus u + z ∈ V1 \ (V1 ∩ V2). By (i), we have u + z ∈ V3. Also by (i), we have u ∈ V3. The premise that V3 is a subspace implies that z = (u + z) − u is in V3.

Together, (i) and (iii) imply that V1 ⊂ V3, and (ii) and (iii) imply that V2 ⊂ V3.

□

3.14. Exercise 1.C.14: An Example of Sums of Subspaces

Verify the assertion in Example 1.38: Suppose that U = {(x, x, y, y) ∈ F4 : x, y ∈ F} and W = {(x, x, x, y) ∈ F4 : x, y ∈ F}. Then

U +W = {(x, x, y, z) ∈ F4 : x, y, z ∈ F}.

Proof. (1) Pick v ∈ U + W. Then there exist u = (xu, xu, yu, yu) ∈ U and w = (xw, xw, xw, yw) ∈ W, where xu, yu, xw, yw ∈ F, such that v = u + w. Thus v = (xu + xw, xu + xw, yu + xw, yu + yw), which shows that v ∈ {(x, x, y, z) ∈ F4 : x, y, z ∈ F}. Therefore U + W ⊂ {(x, x, y, z) ∈ F4 : x, y, z ∈ F}.

(2) Pick v ∈ {(x, x, y, z) ∈ F4 : x, y, z ∈ F}. Then there exist x, y, z ∈ F such that v = (x, x, y, z). By choosing u = (x, x, y, y) ∈ U and w = (0, 0, 0, z − y) ∈ W, we have u + w = v. Thus v ∈ U + W. Therefore {(x, x, y, z) ∈ F4 : x, y, z ∈ F} ⊂ U + W.

The above (1) and (2) imply that U + W = {(x, x, y, z) ∈ F4 : x, y, z ∈ F}. □
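As a sanity check on the two inclusions (not a replacement for the proof), the following Python sketch tests them over a small grid of integer entries. Integers merely stand in for F, and the helper names `in_U`, `in_W`, `in_target` and `split` are hypothetical; `split` encodes the choice u = (x, x, y, y), w = (0, 0, 0, z − y) from part (2).

```python
from itertools import product

def in_U(v):
    """U = {(x, x, y, y)}."""
    return v[0] == v[1] and v[2] == v[3]

def in_W(v):
    """W = {(x, x, x, y)}."""
    return v[0] == v[1] == v[2]

def in_target(v):
    """The claimed sum {(x, x, y, z)}."""
    return v[0] == v[1]

def add(u, w):
    return tuple(a + b for a, b in zip(u, w))

def split(v):
    """Part (2): write (x, x, y, z) as u + w with u in U and w in W."""
    x, _, y, z = v
    return (x, x, y, y), (0, 0, 0, z - y)

small = range(-2, 3)

# Part (1): every sum of an element of U and an element of W lies in the target set.
part1_ok = all(
    in_target(add((a, a, b, b), (c, c, c, d)))
    for a, b, c, d in product(small, repeat=4)
)

# Part (2): every target vector splits as u + w.
part2_ok = True
for x, y, z in product(small, repeat=3):
    v = (x, x, y, z)
    u, w = split(v)
    part2_ok = part2_ok and in_U(u) and in_W(w) and add(u, w) == v

print(part1_ok, part2_ok)
```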

3.15. Exercise 1.C.15: U + U = U for Any Subspace U

Suppose U is a subspace of V . What is U + U?

Proof. We claim that U + U = U .

(1) Pick z ∈ U + U. Then there exist x, y ∈ U such that z = x + y. Since U is closed under addition, we have x + y ∈ U, i.e. z ∈ U. Thus U + U ⊂ U.

(2) Pick u ∈ U. Then u = 1u = (1/2 + 1/2)u = (1/2)u + (1/2)u, where (1/2)u ∈ U because U is closed under scalar multiplication; hence u ∈ U + U. Thus U ⊂ U + U.

The above (1) and (2) imply that U + U = U. □

3.16. Exercise 1.C.16: Commutativity of the Sum of Subspaces

Is the operation of addition on the subspaces of V commutative? In other words, if U and W are subspaces of V, is U + W = W + U?

Proof. We claim that U +W = W + U .

(1) Pick v ∈ U + W. Then there exist u ∈ U and w ∈ W such that v = u + w. Since addition on V is commutative, u + w = w + u, and this shows that v = w + u is in W + U. Thus U + W ⊂ W + U.

(2) Pick v ∈ W + U. Then there exist w ∈ W and u ∈ U such that v = w + u. Since addition on V is commutative, w + u = u + w, and this shows that v = u + w is in U + W. Thus W + U ⊂ U + W.

The above (1) and (2) imply that U + W = W + U. □

3.17. Exercise 1.C.17: Associativity of the Sum of Subspaces

Is the operation of addition on the subspaces of V associative? In other words, if U1, U2, U3 are subspaces of V, is

(U1 + U2) + U3 = U1 + (U2 + U3)?

Proof. We claim that (U1 + U2) + U3 = U1 + (U2 + U3).

(1) Pick v ∈ (U1 + U2) + U3. Then there exist u1 ∈ U1, u2 ∈ U2, u3 ∈ U3 such that v = (u1 + u2) + u3. Since addition on V is associative, (u1 + u2) + u3 = u1 + (u2 + u3), and this shows that v = u1 + (u2 + u3) is in U1 + (U2 + U3). Thus (U1 + U2) + U3 ⊂ U1 + (U2 + U3).

(2) Pick v ∈ U1 + (U2 + U3). Then there exist u1 ∈ U1, u2 ∈ U2, u3 ∈ U3 such that v = u1 + (u2 + u3). Since addition on V is associative, u1 + (u2 + u3) = (u1 + u2) + u3, and this shows that v = (u1 + u2) + u3 is in (U1 + U2) + U3. Thus U1 + (U2 + U3) ⊂ (U1 + U2) + U3.

The above (1) and (2) imply that (U1 + U2) + U3 = U1 + (U2 + U3). □


3.18. Exercise 1.C.18: The Identity of Sum of Subspaces

Does the operation of addition on the subspaces of V have an additive identity? Which subspaces have additive inverses?

Proof. We claim that the subspace {0} is the additive identity, i.e. U + {0} = U for all subspaces U.

(1) Pick v ∈ U + {0}, then there exists u ∈ U such that v = u + 0, and this shows thatv = u is in U . Thus U + {0} ⊂ U .

(2) Pick u ∈ U; then u = u + 0 shows that u ∈ U + {0}. Thus U ⊂ U + {0}.

The above (1) and (2) imply that U + {0} = U.

We claim that for all subspaces U and W, U + W = {0} implies U = W = {0}, which means that the only subspace that has an additive inverse is {0} itself.

Pick subspaces U and W. Assume U + W = {0}. 1.39 of [Axl14] shows that U ⊂ U + W and W ⊂ U + W. Thus U ⊂ {0} and W ⊂ {0}. Note that the only two subsets of {0} are ∅ and {0}. Also, the premise that U and W are subspaces implies 0 ∈ U and 0 ∈ W; thus U = W = {0}. □

3.19. Exercise 1.C.19: There is NO Cancellation Law for Sums of Subspaces

Prove or give a counterexample: if U1, U2,W are subspaces of V such that

U1 +W = U2 +W,

then U1 = U2.

Counterexample. Set V = R2, U1 = R2, U2 = {(x, 0) : x ∈ R}, W = {(0, x) : x ∈ R}. Then U1, U2, W are subspaces of V, U1 + W = R2 and U2 + W = R2, but U1 ≠ U2. □

3.20. Exercise 1.C.20: A Direct Sum of 2 Subspaces that Equals to F4

Suppose

U = {(x, x, y, y) ∈ F4 : x, y ∈ F}.

Find a subspace W of F4 such that F4 = U ⊕ W.

Proof. We claim that the following W is a subspace of F4 such that F4 = U ⊕W :

W = {(0, x, 0, y) ∈ F4 : x, y ∈ F}.

We first verify that W is a subspace of F4 by 1.34 of [Axl14].

(1) We can show that 0 ∈ W by choosing x = 0, y = 0 in (0, x, 0, y).

(2) Suppose that u, w ∈ W, where u = (0, xu, 0, yu) and w = (0, xw, 0, yw). Then u + w = (0, xu + xw, 0, yu + yw) shows that u + w is in W.

(3) Pick a ∈ F and u ∈ W, where u = (0, xu, 0, yu). Then au = (0, axu, 0, ayu) shows that au is in W.

We then verify that U + W is a direct sum by 1.45 of [Axl14]. Pick v ∈ U ∩ W. Then there exist x, y, s, t ∈ F such that v = (x, x, y, y) = (0, s, 0, t). Comparing coordinates gives x = 0 and y = 0, hence s = x = 0 and t = y = 0, i.e. v = 0. Therefore U + W is a direct sum.

Finally, we verify that F4 = U ⊕ W.

(1) 1.39 of [Axl14] implies that U + W is a subspace of F4. Therefore U ⊕ W ⊂ F4.

(2) Pick v ∈ F4, where v = (a, b, c, d) and a, b, c, d ∈ F. Define

u = (a, a, c, c), w = (0, b − a, 0, d − c).

Then u ∈ U, w ∈ W and v = u + w, which shows that v ∈ U ⊕ W. Thus F4 ⊂ U ⊕ W.

□
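The explicit decomposition in part (2), together with the trivial intersection, can be spot-checked mechanically. This is a minimal sketch over a grid of integer vectors (standing in for F4); `decompose`, `in_U` and `in_W` are hypothetical helper names.

```python
from itertools import product

def decompose(v):
    """Split v = (a, b, c, d) as u + w with u in U = {(x, x, y, y)}, w in W = {(0, x, 0, y)}."""
    a, b, c, d = v
    return (a, a, c, c), (0, b - a, 0, d - c)

def in_U(v):
    return v[0] == v[1] and v[2] == v[3]

def in_W(v):
    return v[0] == 0 and v[2] == 0

grid = list(product(range(-2, 3), repeat=4))

# Every grid vector splits as u + w with u in U and w in W.
ok = True
for v in grid:
    u, w = decompose(v)
    ok = ok and in_U(u) and in_W(w) and tuple(p + q for p, q in zip(u, w)) == v

# U ∩ W = {0}: no nonzero grid vector lies in both.
common = [v for v in grid if in_U(v) and in_W(v) and any(v)]
print(ok, common)
```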

3.21. Exercise 1.C.21: A Direct Sum of 2 Subspaces that Equals to F5

Suppose

U = {(x, y, x + y, x − y, 2x) ∈ F5 : x, y ∈ F}.

Find a subspace W of F5 such that F5 = U ⊕ W.

Proof. We claim that the following W is a subspace of F5 such that F5 = U ⊕ W:

W = {(0, 0, x, y, z) ∈ F5 : x, y, z ∈ F}.

We first verify that W is a subspace of F5 by 1.34 of [Axl14].

(1) We can show that 0 ∈ W by choosing x = 0, y = 0, z = 0 in (0, 0, x, y, z).

(2) Suppose that u, w ∈ W, where

u = (0, 0, xu, yu, zu), w = (0, 0, xw, yw, zw).

Then u + w = (0, 0, xu + xw, yu + yw, zu + zw) shows that u + w is in W.

(3) Pick a ∈ F and u ∈ W, where u = (0, 0, xu, yu, zu). Then

au = (0, 0, axu, ayu, azu)

shows that au is in W .

We then verify that U + W is a direct sum by 1.45 of [Axl14]. Pick v ∈ U ∩ W. Then there exist x, y, a, b, c ∈ F such that v = (x, y, x + y, x − y, 2x) = (0, 0, a, b, c). Comparing the first two coordinates gives x = y = 0, and then a = x + y = 0, b = x − y = 0, c = 2x = 0, i.e. v = 0. Therefore U + W is a direct sum.

Finally, we verify that F5 = U ⊕W .

(1) 1.39 of [Axl14] implies that U + W is a subspace of F5. Therefore U ⊕ W ⊂ F5.

(2) Pick v ∈ F5, where v = (a, b, c, d, e) and a, b, c, d, e ∈ F. Define u = (a, b, a + b, a − b, 2a) and w = (0, 0, c − a − b, d − a + b, e − 2a). Then u ∈ U, w ∈ W and v = u + w, which shows that v ∈ U + W. Thus F5 ⊂ U ⊕ W.

□
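The formulas for u and w in part (2) are easy to mistype, so here is a hedged spot-check over a small integer grid standing in for F5. The helper names are hypothetical; `decompose` transcribes the definitions of u and w above.

```python
from itertools import product

def decompose(v):
    """Split v in F^5 into u in U = {(x, y, x+y, x-y, 2x)} and w in W = {(0, 0, x, y, z)}."""
    a, b, c, d, e = v
    u = (a, b, a + b, a - b, 2 * a)
    w = (0, 0, c - a - b, d - a + b, e - 2 * a)
    return u, w

def in_U(v):
    x, y = v[0], v[1]
    return v == (x, y, x + y, x - y, 2 * x)

def in_W(v):
    return v[0] == 0 and v[1] == 0

ok = all(
    in_U(u) and in_W(w) and tuple(p + q for p, q in zip(u, w)) == v
    for v in product(range(-1, 2), repeat=5)
    for u, w in [decompose(v)]
)
print(ok)
```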

3.22. Exercise 1.C.22: A Direct Sum of 4 Subspaces that Equals to F5

Suppose

U = {(x, y, x + y, x − y, 2x) ∈ F5 : x, y ∈ F}.

Find three subspaces W1, W2, W3 of F5, none of which equals {0}, such that F5 = U ⊕ W1 ⊕ W2 ⊕ W3.

Proof. We claim that the following W1, W2, W3 are subspaces of F5 such that F5 = U ⊕ W1 ⊕ W2 ⊕ W3:

W1 = {(0, 0, x, 0, 0) ∈ F5 : x ∈ F},
W2 = {(0, 0, 0, x, 0) ∈ F5 : x ∈ F},
W3 = {(0, 0, 0, 0, x) ∈ F5 : x ∈ F}.

Suppose that

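0 = u + w1 + w2 + w3    (3.2)

for some u = (x, y, x + y, x − y, 2x), w1 = (0, 0, a, 0, 0), w2 = (0, 0, 0, b, 0), w3 = (0, 0, 0, 0, c), where x, y, a, b, c ∈ F. Then (3.2) is equivalent to the following system of linear equations:

$$
\begin{bmatrix}
1 & 0 & 0 & 0 & 0 \\
0 & 1 & 0 & 0 & 0 \\
1 & 1 & 1 & 0 & 0 \\
1 & -1 & 0 & 1 & 0 \\
2 & 0 & 0 & 0 & 1
\end{bmatrix}
\begin{bmatrix} x \\ y \\ a \\ b \\ c \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \\ 0 \\ 0 \\ 0 \end{bmatrix}.
$$

The coefficient matrix is lower triangular with every diagonal entry equal to 1, so it is nonsingular. Thus x = y = a = b = c = 0 is the only solution, and by 1.44 of [Axl14] the sum U + W1 + W2 + W3 is a direct sum. Moreover, because the coefficient matrix is nonsingular, the same system with the right-hand side replaced by any vector of F5 has a solution, so every vector of F5 lies in U + W1 + W2 + W3. We conclude that F5 = U ⊕ W1 ⊕ W2 ⊕ W3. □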
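The nonsingularity claim can be confirmed with exact arithmetic. The sketch below is a plain Gaussian-elimination determinant over the rationals using Python's `fractions.Fraction`; the function name `det` is a hypothetical helper, and the check illustrates rather than replaces the triangular-matrix argument.

```python
from fractions import Fraction

def det(rows):
    """Determinant by Gaussian elimination with exact rational arithmetic."""
    m = [[Fraction(x) for x in row] for row in rows]
    n = len(m)
    result = Fraction(1)
    for col in range(n):
        # Find a row at or below `col` with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            result = -result  # a row swap flips the sign
        result *= m[col][col]
        # Clear the entries below the pivot.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            m[r] = [a - factor * b for a, b in zip(m[r], m[col])]
    return result

# Coefficient matrix of (3.2) in the unknowns x, y, a, b, c.
M = [
    [1, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [1, 1, 1, 0, 0],
    [1, -1, 0, 1, 0],
    [2, 0, 0, 0, 1],
]
print(det(M))
```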

3.23. Exercise 1.C.23: There is NO Cancellation Law for Direct Sums

Prove or give a counterexample: if U1, U2,W are subspaces of V such that

V = U1 ⊕W and V = U2 ⊕W,

then U1 = U2.

Counterexample. Set V = R2, U1 = {(x, x) : x ∈ R}, U2 = {(−x, x) : x ∈ R}, W = {(0, x) : x ∈ R}. Then U1, U2, W are subspaces of V, U1 ⊕ W = R2 and U2 ⊕ W = R2, but U1 ≠ U2. □

3.24. Exercise 1.C.24: A Direct Sum Decomposition of RR

A function f : R→ R is called even if

f(−x) = f(x)

for all x ∈ R. A function f : R→ R is called odd if

f(−x) = −f(x)

for all x ∈ R. Let Ue denote the set of real-valued even functions on R and let Uo denote the set of real-valued odd functions on R. Show that RR = Ue ⊕ Uo.

Proof. We first verify that Ue and Uo are subspaces of RR by 1.34 of [Axl14].

(1) We can show that 0 ∈ Ue and 0 ∈ Uo because 0(−x) = 0 = 0(x) and 0(−x) = 0 = −0(x) for all x ∈ R.

(2) Suppose that ue, we ∈ Ue, uo, wo ∈ Uo, then

(ue + we)(−x) = ue(−x) + we(−x)

= ue(x) + we(x)

= (ue + we)(x).

Thus ue + we ∈ Ue. Also,

(uo + wo)(−x) = uo(−x) + wo(−x)

= −uo(x)− wo(x)

= −(uo(x) + wo(x))

= −(uo + wo)(x).

Thus uo + wo ∈ Uo.


(3) Pick a ∈ R and ue ∈ Ue, uo ∈ Uo; then

(aue)(−x) = a · ue(−x)

= a · ue(x)

= (aue)(x).

Thus aue ∈ Ue. Also,

(auo)(−x) = a · uo(−x)

= −a · uo(x)

= −(auo)(x).

Thus auo ∈ Uo.

We then verify that Ue + Uo is a direct sum by 1.45 of [Axl14]. Pick u ∈ Ue ∩ Uo; then u(−x) = u(x) = −u(x) for all x ∈ R. Thus u(x) = 0 for all x ∈ R, which means that u is the 0 function.

Finally, we prove that RR = Ue ⊕ Uo.

(1) 1.39 of [Axl14] implies that Ue + Uo is a subspace of RR. Therefore Ue ⊕ Uo ⊂ RR.

(2) Pick f ∈ RR. Define

$$f_e(x) = \frac{f(x) + f(-x)}{2}, \qquad f_o(x) = \frac{f(x) - f(-x)}{2}.$$

Then

$$f_e(-x) = \frac{f(-x) + f(x)}{2} = \frac{f(x) + f(-x)}{2} = f_e(x),$$

which implies that fe ∈ Ue. Also,

$$f_o(-x) = \frac{f(-x) - f(x)}{2} = -\frac{f(x) - f(-x)}{2} = -f_o(x),$$

which implies that fo ∈ Uo. And

$$f_e(x) + f_o(x) = \frac{f(x) + f(-x)}{2} + \frac{f(x) - f(-x)}{2} = \frac{f(x) + f(-x) + f(x) - f(-x)}{2} = f(x),$$

which shows that f ∈ Ue ⊕ Uo. Therefore RR ⊂ Ue ⊕ Uo.

□
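A quick numerical illustration of the decomposition, assuming f = exp as the sample function (any real function would do): its even part should be cosh and its odd part sinh. The helpers `even_part` and `odd_part` are hypothetical names that transcribe the definitions of fe and fo; floating-point comparisons use `math.isclose`.

```python
import math

def even_part(f):
    """f_e(x) = (f(x) + f(-x)) / 2."""
    return lambda x: (f(x) + f(-x)) / 2

def odd_part(f):
    """f_o(x) = (f(x) - f(-x)) / 2."""
    return lambda x: (f(x) - f(-x)) / 2

f = math.exp
fe, fo = even_part(f), odd_part(f)

for x in [-2.0, -0.5, 0.0, 0.3, 1.7]:
    assert math.isclose(fe(-x), fe(x))                         # f_e is even
    assert math.isclose(fo(-x), -fo(x), abs_tol=1e-12)         # f_o is odd
    assert math.isclose(fe(x) + fo(x), f(x))                   # f = f_e + f_o
    assert math.isclose(fe(x), math.cosh(x))                   # even part of exp is cosh
    assert math.isclose(fo(x), math.sinh(x), abs_tol=1e-12)    # odd part of exp is sinh
print("ok")
```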

CHAPTER 4

Span and Linear Independence

4.1. Exercise 2.A.1: Alternative Span from an Existing Span

Suppose v1, v2, v3, v4 spans V . Prove that the list

v1 − v2, v2 − v3, v3 − v4, v4

also spans V .

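Proof. Denote w1 = v1 − v2, w2 = v2 − v3, w3 = v3 − v4, w4 = v4. Let

$$
A = \begin{bmatrix} 1 & -1 & 0 & 0 \\ 0 & 1 & -1 & 0 \\ 0 & 0 & 1 & -1 \\ 0 & 0 & 0 & 1 \end{bmatrix};
$$

then A is nonsingular and

$$
A^{-1} = \begin{bmatrix} 1 & 1 & 1 & 1 \\ 0 & 1 & 1 & 1 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 0 & 1 \end{bmatrix}.
$$

Moreover,

$$
A \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix} = \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix}, \qquad
\begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix} = A^{-1} \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix}.
$$

(1) Pick v ∈ span(v1, v2, v3, v4). Then there exist x1, x2, x3, x4 ∈ F such that

$$
v = \begin{bmatrix} x_1 & x_2 & x_3 & x_4 \end{bmatrix} \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix}
= \begin{bmatrix} x_1 & x_2 & x_3 & x_4 \end{bmatrix} \begin{bmatrix} 1 & 1 & 1 & 1 \\ 0 & 1 & 1 & 1 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix}
= \begin{bmatrix} x_1 & x_1 + x_2 & x_1 + x_2 + x_3 & x_1 + x_2 + x_3 + x_4 \end{bmatrix} \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix}.
$$

Therefore v ∈ span(w1, w2, w3, w4).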


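(2) Pick w ∈ span(w1, w2, w3, w4). Then there exist y1, y2, y3, y4 ∈ F such that

$$
w = \begin{bmatrix} y_1 & y_2 & y_3 & y_4 \end{bmatrix} \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix}
= \begin{bmatrix} y_1 & y_2 & y_3 & y_4 \end{bmatrix} \begin{bmatrix} 1 & -1 & 0 & 0 \\ 0 & 1 & -1 & 0 \\ 0 & 0 & 1 & -1 \\ 0 & 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix}
= \begin{bmatrix} y_1 & y_2 - y_1 & y_3 - y_2 & y_4 - y_3 \end{bmatrix} \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix}.
$$

Therefore w ∈ span(v1, v2, v3, v4).

The above (1) and (2) imply that span(w1, w2, w3, w4) = span(v1, v2, v3, v4) = V, so the list w1, w2, w3, w4 spans V. □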
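The inverse claimed for A and the coefficient change in part (1) can be checked by direct multiplication. A minimal sketch with integer matrices stored as lists of rows; `matmul` is a hypothetical helper and the sample coefficients (2, −3, 5, 7) are arbitrary.

```python
def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, -1, 0, 0],
     [0, 1, -1, 0],
     [0, 0, 1, -1],
     [0, 0, 0, 1]]
A_inv = [[1, 1, 1, 1],
         [0, 1, 1, 1],
         [0, 0, 1, 1],
         [0, 0, 0, 1]]
I4 = [[int(i == j) for j in range(4)] for i in range(4)]
assert matmul(A, A_inv) == I4 and matmul(A_inv, A) == I4

# Part (1): coefficients (x1, x2, x3, x4) with respect to v1..v4 become
# (x1, x1 + x2, x1 + x2 + x3, x1 + x2 + x3 + x4) with respect to w1..w4.
print(matmul([[2, -3, 5, 7]], A_inv))
```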

4.2. Exercise 2.A.2: Examples of Linearly Independent Lists

Verify the assertions in Example 2.18.

(a) A list v of one vector v ∈ V is linearly independent if and only if v ≠ 0.
(b) A list of two vectors in V is linearly independent if and only if neither vector is a scalar multiple of the other.
(c) (1, 0, 0, 0), (0, 1, 0, 0), (0, 0, 1, 0) is linearly independent in F4.
(d) The list 1, z, . . . , zᵐ is linearly independent in P(F) for each nonnegative integer m.

Proof. (a) If the list v is linearly independent but v = 0, then 1v = 0 is a nontrivial representation of 0 using v, which is a contradiction. If v ≠ 0 but the list v is not linearly independent, then there exists a ∈ F such that av = 0 and a ≠ 0; then v = a⁻¹(av) = a⁻¹0 = 0 by 1.30 of [Axl14], which is a contradiction.

(b) Suppose that a list of two vectors v1, v2 in V is linearly independent and assume, without loss of generality, that v1 = av2 for some a ∈ F. Then 1v1 − av2 = 0 is a nontrivial representation of 0 using v1, v2, which is a contradiction. Conversely, suppose that a list of two vectors v1, v2 in V is not linearly independent. Then there exist a1, a2 ∈ F, not both zero, such that a1v1 + a2v2 = 0. If a1 ≠ 0, then v1 = −(a2/a1)v2; if a1 = 0, then a2 ≠ 0 and v2 = −(a1/a2)v1. In either case one vector is a scalar multiple of the other.

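(c) Consider the equation x(1, 0, 0, 0) + y(0, 1, 0, 0) + z(0, 0, 1, 0) = 0, where x, y, z ∈ F. Its fourth coordinate reads 0 = 0, and its first three coordinates give, in matrix form,

$$
\begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}
\begin{bmatrix} x \\ y \\ z \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}.
$$

Since the coefficient matrix is nonsingular, the only solution is x = y = z = 0.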

(d) Consider the function f(z) = x0 + x1z + · · · + xN−1 z^(N−1), where N − 1 = m ≥ 0 and x0, x1, . . . , xN−1 ∈ F. Suppose f(z) = 0 for all z ∈ F. Then, in particular, f(1) = 0, f(2) = 0, . . . , f(N) = 0. In matrix form,

$$
\begin{bmatrix}
1 & 1 & 1 & 1 & \cdots & 1 \\
1 & 2 & 2^2 & 2^3 & \cdots & 2^{N-1} \\
1 & 3 & 3^2 & 3^3 & \cdots & 3^{N-1} \\
1 & 4 & 4^2 & 4^3 & \cdots & 4^{N-1} \\
\vdots & \vdots & \vdots & \vdots & \ddots & \vdots \\
1 & N & N^2 & N^3 & \cdots & N^{N-1}
\end{bmatrix}
\begin{bmatrix} x_0 \\ x_1 \\ \vdots \\ x_{N-1} \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}.
$$

Note that the above coefficient matrix is a Vandermonde matrix. Since the numbers 1, 2, . . . , N are distinct, its determinant is nonzero. Therefore the only solution is x0 = x1 = · · · = xN−1 = 0.

□
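For a concrete size, the nonvanishing of the Vandermonde determinant in (d) can be checked exactly. This sketch assumes N = 5 and nodes 1, . . . , N as in the proof, computes the determinant by Gaussian elimination over `fractions.Fraction` (hypothetical helpers `det` and `vandermonde`), and compares it with the standard closed form, the product of (tj − ti) over i < j.

```python
from fractions import Fraction

def det(rows):
    """Determinant by Gaussian elimination with exact rational arithmetic."""
    m = [[Fraction(x) for x in row] for row in rows]
    n = len(m)
    result = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if m[r][col] != 0), None)
        if pivot is None:
            return Fraction(0)
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            result = -result
        result *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            m[r] = [a - factor * b for a, b in zip(m[r], m[col])]
    return result

def vandermonde(nodes):
    """Row for node t is (t^0, t^1, ..., t^(n-1)), matching the system in (d)."""
    n = len(nodes)
    return [[Fraction(t) ** k for k in range(n)] for t in nodes]

N = 5
nodes = list(range(1, N + 1))
expected = 1
for i in range(N):
    for j in range(i + 1, N):
        expected *= nodes[j] - nodes[i]
print(det(vandermonde(nodes)), expected)
```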

4.3. Exercise 2.A.3: a Linearly Dependent List

Find a number t such that

(3, 1, 4), (2,−3, 5), (5, 9, t)

is not linearly independent in R3.

Proof. We claim that t = 2 makes the list not linearly independent in R3. This can be shown by

− 3 · (3, 1, 4) + 2 · (2,−3, 5) + 1 · (5, 9, 2)

= ((−9) + 4 + 5, (−3) + (−6) + 9, (−12) + 10 + 2)

= 0.

□
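One way to see where t = 2 comes from is to treat the three vectors as rows of a 3 × 3 matrix and solve det = 0 for t. The sketch below uses a hand-written cofactor expansion (hypothetical helper `det3`), exploits the fact that this determinant is affine in t, and also re-checks the explicit relation used in the proof.

```python
from fractions import Fraction

def det3(m):
    """Cofactor expansion of a 3x3 determinant along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def det_in_t(t):
    return det3([[3, 1, 4], [2, -3, 5], [5, 9, t]])

# The determinant is affine in t: det(t) = det(0) + t * (det(1) - det(0)).
d0, d1 = det_in_t(0), det_in_t(1)
t_star = Fraction(-d0, d1 - d0)
print(d0, d1 - d0, t_star)

# The explicit relation -3(3, 1, 4) + 2(2, -3, 5) + 1(5, 9, 2) = 0 from the proof.
combo = [-3 * p + 2 * q + r for p, q, r in zip((3, 1, 4), (2, -3, 5), (5, 9, 2))]
print(combo)
```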

4.4. Exercise 2.A.4: Conditions to Linear Dependence

Verify the assertion in the second bullet point in Example 2.20: the list

(2, 3, 1), (1,−1, 2), (7, 3, c)

is linearly dependent in F3 if and only if c = 8.

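Proof. Let

$$
A = \begin{bmatrix} 2 & 1 & 7 \\ 3 & -1 & 3 \\ 1 & 2 & c \end{bmatrix},
$$

whose columns are the three vectors of the list. Then the list (2, 3, 1), (1, −1, 2), (7, 3, c) is linearly dependent if and only if det(A) = 0. Since det(A) = 40 − 5c, it follows that det(A) = 0 if and only if c = 8. □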
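The formula det(A) = 40 − 5c can be spot-checked over a range of integer values of c. A small sketch, with `det3` and `det_A` as hypothetical helper names:

```python
def det3(m):
    """Cofactor expansion of a 3x3 determinant along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def det_A(c):
    # Columns are the vectors (2, 3, 1), (1, -1, 2), (7, 3, c).
    return det3([[2, 1, 7], [3, -1, 3], [1, 2, c]])

assert all(det_A(c) == 40 - 5 * c for c in range(-10, 11))
print([c for c in range(-10, 11) if det_A(c) == 0])
```

Since the determinant is affine in c, agreement at two values of c already pins the formula down; the wider range is just a cheap extra check.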

4.5. Exercise 2.A.5: Difference between C and R as Scalar Fields

(a) Show that if we think of C as a vector space over R, then the list (1 + i, 1 − i) is linearly independent.

(b) Show that if we think of C as a vector space over C, then the list (1 + i, 1 − i) is linearly dependent.


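Proof. Suppose that a + bi, c + di ∈ C, where a, b, c, d ∈ R, satisfy (a + bi)(1 + i) + (c + di)(1 − i) = 0. Expanding gives

(a − b + c + d) + (a + b − c + d)i = 0.

Setting the real and imaginary parts to zero and writing the resulting linear system in matrix form,

$$
\begin{bmatrix} 1 & -1 & 1 & 1 \\ 1 & 1 & -1 & 1 \end{bmatrix}
\begin{bmatrix} a \\ b \\ c \\ d \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \end{bmatrix},
$$

its solutions are

$$
\left\{ \begin{bmatrix} -y \\ x \\ x \\ y \end{bmatrix} : x, y \in \mathbb{R} \right\}.
$$

(a) If we think of C as a vector space over R, then the scalars are real, i.e. b = d = 0, and the list is linearly independent because

$$
\left\{ \begin{bmatrix} -y \\ x \\ x \\ y \end{bmatrix} : x, y \in \mathbb{R} \right\}
\cap
\left\{ \begin{bmatrix} x \\ 0 \\ y \\ 0 \end{bmatrix} : x, y \in \mathbb{R} \right\}
=
\left\{ \begin{bmatrix} 0 \\ 0 \\ 0 \\ 0 \end{bmatrix} \right\}.
$$

(b) If we think of C as a vector space over C, then the list is linearly dependent because

$$
\begin{bmatrix} -1 \\ 1 \\ 1 \\ 1 \end{bmatrix}
\in
\left\{ \begin{bmatrix} -y \\ x \\ x \\ y \end{bmatrix} : x, y \in \mathbb{R} \right\},
$$

that is, (−1 + i)(1 + i) + (1 + i)(1 − i) = 0 with nonzero coefficients −1 + i and 1 + i.

□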
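Python's built-in complex numbers make it easy to confirm both the general solution (−y, x, x, y) and the explicit relation in (b). A minimal sketch; `combo` is a hypothetical helper, and integer-valued parameters keep the floating-point arithmetic exact.

```python
z1, z2 = 1 + 1j, 1 - 1j

def combo(x, y):
    """(a + bi) z1 + (c + di) z2 for the solution (a, b, c, d) = (-y, x, x, y)."""
    a, b, c, d = -y, x, x, y
    return complex(a, b) * z1 + complex(c, d) * z2

# Every member of the solution family gives the zero combination.
assert all(combo(x, y) == 0 for x in range(-3, 4) for y in range(-3, 4))

# (b): over C, the nonzero scalars -1 + i and 1 + i give a nontrivial relation.
print((-1 + 1j) * z1 + (1 + 1j) * z2)
```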

4.6. Exercise 2.A.6: Constructing Another Independent List from a List

Suppose v1, v2, v3, v4 is linearly independent in V . Prove that the list

v1 − v2, v2 − v3, v3 − v4, v4

is also linearly independent.

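Proof. Use the same notation as in the proof of Exercise 2.A.1. Suppose, for contradiction, that the list

v1 − v2, v2 − v3, v3 − v4, v4

is linearly dependent. Then there exist a1, a2, a3, a4 ∈ F such that

$$
\begin{bmatrix} a_1 & a_2 & a_3 & a_4 \end{bmatrix} \begin{bmatrix} w_1 \\ w_2 \\ w_3 \\ w_4 \end{bmatrix} = 0
\quad\text{and}\quad
\begin{bmatrix} a_1 & a_2 & a_3 & a_4 \end{bmatrix} \neq \begin{bmatrix} 0 & 0 & 0 & 0 \end{bmatrix}.
$$

Therefore,

$$
\begin{bmatrix} a_1 & a_2 & a_3 & a_4 \end{bmatrix} A \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{bmatrix} = 0.
$$

Since A is nonsingular, [a1 a2 a3 a4]A ≠ [0 0 0 0]. It follows that the list v1, v2, v3, v4 is linearly dependent, contradicting the hypothesis. □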

4.7. Exercise 2.A.7: Constructing Another Independent List from a List

Prove or give a counterexample: If v1, v2, . . . , vm is a linearly independent list of vectors in V, then

5v1 − 4v2, v2, v3, . . . , vm

is linearly independent.

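Proof. We claim that the proposition is true. Define the m × m matrix

$$
A = \begin{bmatrix}
5 & -4 & 0 & \cdots & 0 \\
0 & 1 & 0 & \cdots & 0 \\
0 & 0 & 1 & \cdots & 0 \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
0 & 0 & 0 & \cdots & 1
\end{bmatrix}.
$$

Suppose that

5v1 − 4v2, v2, v3, . . . , vm

is linearly dependent. Then there exist a1, a2, a3, . . . , am ∈ F such that

$$
\begin{bmatrix} a_1 & a_2 & a_3 & \cdots & a_m \end{bmatrix} A \begin{bmatrix} v_1 \\ v_2 \\ v_3 \\ \vdots \\ v_m \end{bmatrix} = 0
\quad\text{and}\quad
\begin{bmatrix} a_1 & a_2 & a_3 & \cdots & a_m \end{bmatrix} \neq \begin{bmatrix} 0 & 0 & 0 & \cdots & 0 \end{bmatrix}.
$$

Since A is upper triangular with diagonal entries 5, 1, . . . , 1, we have det(A) = 5, so A is nonsingular. Thus [a1 a2 a3 · · · am]A ≠ [0 0 0 · · · 0]. It follows that the list v1, v2, . . . , vm is linearly dependent, contradicting the hypothesis. □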

4.8. Exercise 2.A.8: Linear Independent List Multiplied by Scalar

Prove or give a counterexample: If v1, v2, . . . , vm is a linearly independent list of vectors in V and λ ∈ F with λ ≠ 0, then λv1, λv2, . . . , λvm is linearly independent.

Proof. We claim that the proposition is true. Suppose that λv1, λv2, . . . , λvm is linearly dependent. Then there exist a1, a2, . . . , am ∈ F, not all 0, such that

a1λv1 + a2λv2 + · · ·+ amλvm = 0.

Since λ ≠ 0, λ⁻¹ exists in F. Multiplying the above equation by λ⁻¹ yields

a1v1 + a2v2 + · · ·+ amvm = 0.

Note that not all of a1, a2, . . . , am are zero. Therefore v1, v2, . . . , vm is a linearly dependent list, contradicting the hypothesis. □


4.9. Exercise 2.A.9: Addition of Two Linearly Independent Lists

Prove or give a counterexample: If v1, . . . , vm and w1, . . . , wm are linearly independent lists of vectors in V, then v1 + w1, . . . , vm + wm is linearly independent.

Counterexample. Set V = R2, m = 2, v1 = (0, 1), v2 = (1, 0), w1 = (0, −1), w2 = (−1, 0). Then v1, v2 is a linearly independent list, and so is w1, w2. But

v1 + w1 = (0, 0) and v2 + w2 = (0, 0),

so the list v1 + w1, v2 + w2 contains the zero vector and is therefore not linearly independent. □

4.10. Exercise 2.A.10: Adding a Vector to a Linearly Independent List

Suppose v1, . . . , vm is linearly independent in V and w ∈ V. Prove that if v1 + w, . . . , vm + w is linearly dependent, then w ∈ span(v1, . . . , vm).

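Proof. If v1 + w, . . . , vm + w is linearly dependent, then there exist a1, . . . , am ∈ F, not all 0, such that

a1(v1 + w) + · · · + am(vm + w) = 0,

that is,

a1v1 + · · · + amvm + (a1 + · · · + am)w = 0.

If a1 + · · · + am = 0, then a1v1 + · · · + amvm = 0 with a1, . . . , am not all 0, contradicting the linear independence of v1, . . . , vm. Hence a1 + · · · + am ≠ 0, and the above equation implies that

$$
w = -\frac{a_1}{a_1 + \cdots + a_m} v_1 - \cdots - \frac{a_m}{a_1 + \cdots + a_m} v_m,
$$

thus w ∈ span(v1, . . . , vm). □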

4.11. Exercise 2.A.11: Concatenating a Vector to an Independent List

Suppose v1, . . . , vm is linearly independent in V and w ∈ V. Show that v1, . . . , vm, w is linearly independent if and only if

w /∈ span(v1, . . . , vm).

Proof. (1) Suppose that v1, . . . , vm, w is linearly dependent. Then there exist a1, . . . , am, aw ∈ F, not all 0, such that

a1v1 + · · · + amvm + aw w = 0.

If aw = 0, then the above equation would imply that v1, . . . , vm is linearly dependent, which is a contradiction. Therefore aw ≠ 0, and the above equation implies that

$$
w = -\frac{a_1}{a_w} v_1 - \cdots - \frac{a_m}{a_w} v_m.
$$

Thus w ∈ span(v1, . . . , vm). By contraposition, if w ∉ span(v1, . . . , vm), then v1, . . . , vm, w is linearly independent.

(2) Suppose that w ∈ span(v1, . . . , vm). Then there exist a1, . . . , am ∈ F such that

w = a1v1 + · · · + amvm.

The above equation implies that

a1v1 + · · · + amvm + (−1)w = 0.

Since the coefficient of w is −1 ≠ 0, the list v1, . . . , vm, w is linearly dependent. By contraposition, if v1, . . . , vm, w is linearly independent, then w ∉ span(v1, . . . , vm). □


4.12. Exercise 2.A.12: Possible Linearly Independent Lists in P4(F)

Explain why there does not exist a list of six polynomials that is linearly independent in P4(F).

Proof. The list 1, z, z², z³, z⁴ spans P4(F) and has length 5. By 2.23 of [Axl14], the length of every linearly independent list of vectors in P4(F) is therefore less than or equal to 5. Thus there does not exist a list of 6 polynomials that is linearly independent in P4(F). □

4.13. Exercise 2.A.13: Possible Spans of P4(F)

Explain why no list of four polynomials spans P4(F).

Proof. Exercise 2.A.2 (d) has already shown that the list 1, z, z², z³, z⁴ is linearly independent in P4(F); this list has length 5. By 2.23 of [Axl14], the length of every spanning list of P4(F) is greater than or equal to 5. Thus no list of 4 polynomials spans P4(F). □

4.14. Exercise 2.A.14: Another Definition of Infinite-Dimensional

Prove that V is infinite-dimensional if and only if there is a sequence v1, v2, . . . of vectors in V such that v1, . . . , vm is linearly independent for every positive integer m.

Proof. (1) Suppose that V is finite-dimensional. Then there exists a list u1, . . . , uk in V that spans V. By 2.23 of [Axl14], the length of every linearly independent list of vectors in V is less than or equal to k. Therefore, for any sequence v1, v2, . . . of vectors in V, the list v1, . . . , vk+1 must be linearly dependent.

(2) Suppose that V is infinite-dimensional. We can construct, by the following steps, a sequence v1, v2, . . . of vectors in V such that v1, . . . , vm is linearly independent for every positive integer m:

Step (1): Pick any v ∈ V such that v ≠ 0 (such v exists because {0} is finite-dimensional, so V ≠ {0}), and let v1 = v. Note that the list v1 is linearly independent.

Step (n): Suppose that Step (n − 1) has already created a vector list v1, . . . , vn−1 such that v1, . . . , vm is linearly independent for every positive integer m ∈ {1, . . . , n − 1}. Since V is infinite-dimensional, there exists w ∈ V such that w ∉ span(v1, . . . , vn−1). Set vn = w; we claim that v1, . . . , vn is linearly independent. To prove this, suppose that there exist a1, . . . , an ∈ F, not all zero, such that

a1v1 + · · ·+ anvn = 0.

If an = 0, then a1v1 + · · · + an−1vn−1 = 0 with a1, . . . , an−1 not all zero, so v1, . . . , vn−1 would be linearly dependent, which is a contradiction. If an ≠ 0, then

$$
v_n = -\frac{a_1}{a_n} v_1 - \cdots - \frac{a_{n-1}}{a_n} v_{n-1},
$$

which implies that vn ∈ span(v1, . . . , vn−1), which is also a contradiction. □

4.15. Exercise 2.A.15: F∞ is Infinite-Dimensional

Prove that F∞ is infinite-dimensional.


Proof. Suppose that F∞ is finite-dimensional. Then there exists a list v1, . . . , vk ∈ F∞ such that F∞ = span(v1, . . . , vk). But we may construct the following linearly independent list of vectors in F∞, which has length k + 1:

e1 = (1, 0, 0, . . . , 0, 0, 0, 0, . . . )
e2 = (0, 1, 0, . . . , 0, 0, 0, 0, . . . )
e3 = (0, 0, 1, . . . , 0, 0, 0, 0, . . . )
...
ek−1 = (0, 0, 0, . . . , 1, 0, 0, 0, . . . )
ek = (0, 0, 0, . . . , 0, 1, 0, 0, . . . )
ek+1 = (0, 0, 0, . . . , 0, 0, 1, 0, . . . )

(If c1e1 + · · · + ck+1ek+1 = 0, then comparing the first k + 1 coordinates gives c1 = · · · = ck+1 = 0.) This contradicts 2.23 of [Axl14]. □

4.16. Exercise 2.A.16: R[0,1] is Infinite-Dimensional

Prove that the real vector space of all continuous real-valued functions on the interval [0, 1] is infinite-dimensional.

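Proof. Denote this vector space by C[0, 1]. Suppose that C[0, 1] is finite-dimensional. Then there exists a list

v1, . . . , vk ∈ C[0, 1]

such that C[0, 1] = span(v1, . . . , vk). But we may construct the following linearly independent list of continuous functions on [0, 1], which has length k + 1: for j = 1, . . . , k + 1, let

$$
e_j(x) = \max\Bigl(0,\; 1 - (k+2)\Bigl|x - \frac{j}{k+2}\Bigr|\Bigr), \qquad x \in [0, 1].
$$

Each ej is continuous: it is a "tent" that equals 1 at x = j/(k + 2) and vanishes outside the interval ((j − 1)/(k + 2), (j + 1)/(k + 2)). In particular, ej(i/(k + 2)) = 1 if i = j and ej(i/(k + 2)) = 0 if i ≠ j. Hence if c1e1 + · · · + ck+1ek+1 = 0, evaluating at x = i/(k + 2) gives ci = 0 for each i = 1, . . . , k + 1, so the list is linearly independent. This contradicts 2.23 of [Axl14]. □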
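The key property of the tent functions, that evaluating them at the peaks j/(k + 2) yields the identity matrix, can be checked with exact rationals. A sketch assuming k = 6; `tent` is a hypothetical helper that transcribes the formula for ej.

```python
from fractions import Fraction

def tent(j, k):
    """Continuous hat function on [0, 1]: equals 1 at j/(k+2) and 0 outside
    the open interval ((j-1)/(k+2), (j+1)/(k+2))."""
    center = Fraction(j, k + 2)
    return lambda x: max(Fraction(0), 1 - (k + 2) * abs(x - center))

k = 6
funcs = [tent(j, k) for j in range(1, k + 2)]            # e_1, ..., e_{k+1}
nodes = [Fraction(i, k + 2) for i in range(1, k + 2)]    # the peaks i/(k+2)
evals = [[f(x) for f in funcs] for x in nodes]
identity = [[int(i == j) for j in range(k + 1)] for i in range(k + 1)]
print(evals == identity)
```

Because the evaluation matrix is the identity, any vanishing combination c1e1 + · · · + ck+1ek+1 has all ci = 0, which is exactly the independence argument in the proof.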

4.17. Exercise 2.A.17: Polynomials that Vanish at the Same Point

Suppose p0, p1, . . . , pm are polynomials in Pm(F) such that pj(2) = 0 for each j. Prove that p0, p1, . . . , pm is not linearly independent in Pm(F).


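Proof. For each j, write pj(z) = aj0 + aj1z + aj2z² + · · · + ajmzᵐ and let

$$
A = \begin{bmatrix}
a_{00} & a_{01} & a_{02} & \cdots & a_{0m} \\
a_{10} & a_{11} & a_{12} & \cdots & a_{1m} \\
\vdots & \vdots & \vdots & \ddots & \vdots \\
a_{m0} & a_{m1} & a_{m2} & \cdots & a_{mm}
\end{bmatrix}.
$$

That pj(2) = 0 for each j implies

$$
A \begin{bmatrix} 2^0 \\ 2^1 \\ 2^2 \\ \vdots \\ 2^m \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}.
$$

Since the vector (2⁰, 2¹, . . . , 2ᵐ)ᵀ is nonzero, A is a singular matrix, and so is Aᵀ. Now suppose there exist s0, s1, . . . , sm ∈ F such that s0p0 + s1p1 + · · · + smpm = 0. Comparing the coefficients of 1, z, . . . , zᵐ gives

$$
\begin{bmatrix}
a_{00} & a_{10} & \cdots & a_{m0} \\
a_{01} & a_{11} & \cdots & a_{m1} \\
\vdots & \vdots & \ddots & \vdots \\
a_{0m} & a_{1m} & \cdots & a_{mm}
\end{bmatrix}
\begin{bmatrix} s_0 \\ s_1 \\ \vdots \\ s_m \end{bmatrix}
=
\begin{bmatrix} 0 \\ 0 \\ \vdots \\ 0 \end{bmatrix}.
$$

Note that the coefficient matrix of the above linear system is Aᵀ, which we have shown is a singular matrix. So the linear system has a nonzero solution (s0, . . . , sm). We therefore conclude that the list p0, p1, . . . , pm is linearly dependent. □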
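To make the argument concrete, here is a hypothetical example with m = 2 (the polynomials are chosen for illustration, not taken from the exercise): three polynomials in P2 that vanish at 2. The sketch checks that A(2⁰, 2¹, 2²)ᵀ = 0, that det(A) = 0, and that s = (3, −1, 1) solves Aᵀs = 0, i.e. 3p0 − p1 + p2 = 0.

```python
def det3(m):
    """Cofactor expansion of a 3x3 determinant along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

# Row j holds the coefficients (a_j0, a_j1, a_j2) of p_j.
A = [
    [-2, 1, 0],   # p0(z) = z - 2
    [-4, 0, 1],   # p1(z) = z^2 - 4
    [2, -3, 1],   # p2(z) = z^2 - 3z + 2
]
values_at_2 = [sum(coef * 2 ** k for k, coef in enumerate(row)) for row in A]
print(values_at_2)   # each p_j(2), i.e. A times (2^0, 2^1, 2^2)^T
print(det3(A))       # A (hence A^T) is singular

# A nonzero solution of A^T s = 0, i.e. the relation 3 p0 - p1 + p2 = 0.
s = [3, -1, 1]
relation = [sum(s[j] * A[j][k] for j in range(3)) for k in range(3)]
print(relation)
```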

CHAPTER 5

Bases

5.1. Exercise 2.B.1: Vector Spaces that Have Exactly One Basis

Find all vector spaces that have exactly one basis.

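Proof. We claim that the only vector space over F (which is either R or C) that has exactly one basis is {0}, whose only basis is the empty list (every nonempty list in {0} contains 0 and is therefore linearly dependent).

Let V be a vector space with V ≠ {0}, and suppose, for contradiction, that v1, . . . , vk (with k ≥ 1) is the only basis of V. Denote

$$
v = \begin{bmatrix} v_1 \\ \vdots \\ v_k \end{bmatrix}.
$$

Since Av is also a basis of V for any nonsingular k × k matrix A, we would have

Av = v, for all nonsingular A.

In particular, take A = 2I. Then

(2I − I)v = 0,

which implies that v = 0. But a list containing 0 is linearly dependent, contradicting the presumption that v1, . . . , vk is a basis. □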

5.2. Exercise 2.B.2: Examples and Counterexamples of Bases

Verify all the assertions in Example 2.28.

(a) The list (1, 0, . . . , 0), (0, 1, 0, . . . , 0), . . . , (0, . . . , 0, 1) is a basis of Fn, called the standard basis of Fn.

(b) The list (1, 2), (3, 5) is a basis of F2.

(c) The list (1, 2, −4), (7, −5, 6) is linearly independent in F3 but is not a basis of F3 because it does not span F3.

(d) The list (1, 2), (3, 5), (4, 13) spans F2 but is not a basis of F2 because it is not linearly independent.

(e) The list (1, 1, 0), (0, 0, 1) is a basis of {(x, x, y) ∈ F3 : x, y ∈ F}.

(f) The list (1, −1, 0), (1, 0, −1) is a basis of

{(x, y, z) ∈ F3 : x + y + z = 0}.

(g) The list 1, z, . . . , zᵐ is a basis of Pm(F).

Proof. (a) Pick v = (v1, v2, . . . , vn) ∈ Fn, where v1, v2, . . . , vn ∈ F. Then it is clear that v can be written uniquely as

v1(1, 0, . . . , 0) + v2(0, 1, 0, . . . , 0) + · · ·+ vn(0, . . . , 0, 1).


(b) Pick v = (v1, v2) ∈ F2. Writing v = x(1, 2) + y(3, 5), where x, y ∈ F, corresponds to the following linear system:

$$
\begin{bmatrix} 1 & 3 \\ 2 & 5 \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix}
=
\begin{bmatrix} v_1 \\ v_2 \end{bmatrix}.
$$

Since the coefficient matrix of the above linear system is nonsingular (its determinant is 1 · 5 − 3 · 2 = −1), there is exactly one solution [x y]ᵀ for every [v1 v2]ᵀ. Therefore (1, 2), (3, 5) is a basis of F2.

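(c) The list (1, 2, −4), (7, −5, 6) is linearly independent by Exercise 2.A.2 (b), because neither vector is a scalar multiple of the other: (7, −5, 6) = a(1, 2, −4) would require a = 7 and 2a = −5, and (1, 2, −4) = b(7, −5, 6) would require b = 1/7 and −5b = 2. However, as shown in (a), there exists a linearly independent list of length 3 in F3, thus no list of length 2 can span F3 by 2.23 of [Axl14].

(d) By (b), the sublist (1, 2), (3, 5) already spans F2, so the list (1, 2), (3, 5), (4, 13) spans F2. However, as shown in (a), there exists a list of length 2 that spans F2, thus no list of length 3 can be linearly independent in F2 by 2.23 of [Axl14].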

(e) Pick v = (x, x, y) ∈ F3, where x, y ∈ F. Then it is clear that v can be written uniquely as

x(1, 1, 0) + y(0, 0, 1).

(f) Denote V = {(x, y, z) ∈ F3 : x + y + z = 0}. Pick v = (a, b, c) ∈ V; then c = −a − b. Writing v = x(1, −1, 0) + y(1, 0, −1), where x, y ∈ F, corresponds to the following linear system:

$$
\begin{bmatrix} 1 & 1 \\ -1 & 0 \\ 0 & -1 \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix}
=
\begin{bmatrix} a \\ b \\ -a - b \end{bmatrix}.
$$

Since the two columns of the coefficient matrix are linearly independent (neither is a scalar multiple of the other), this system has at most one solution. Moreover, one can check that [x y]ᵀ = [−b  a + b]ᵀ is a solution. Therefore every v ∈ V can be written uniquely as a linear combination of (1, −1, 0), (1, 0, −1).

(g) Pick v(z) = a0 + a1z + a2z² + · · · + amzᵐ ∈ Pm(F), where a0, a1, a2, . . . , am ∈ F. Then 4.7 of [Axl14] implies that v can be written uniquely as

a0 · 1 + a1 · z + a2 · z² + · · · + am · zᵐ.

□
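Two of the computations above lend themselves to a mechanical check: the determinant in (b) and the explicit coordinates in (f). A small sketch over integers standing in for F; `coords` is a hypothetical helper returning the claimed solution (x, y) = (−b, a + b).

```python
from itertools import product

# (b): the 2x2 coefficient matrix [[1, 3], [2, 5]] has a nonzero determinant.
det_b = 1 * 5 - 3 * 2
print(det_b)

# (f): (a, b, -a-b) = x(1, -1, 0) + y(1, 0, -1) with x = -b, y = a + b.
def coords(a, b):
    return -b, a + b

ok = True
for a, b in product(range(-3, 4), repeat=2):
    x, y = coords(a, b)
    ok = ok and (x + y, -x, -y) == (a, b, -a - b)
print(ok)
```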

5.3. Exercise 2.B.3: Find and Extend a Basis for a Subspace of R5

(a) Let U be the subspace of R5 defined by

U = {(x1, x2, x3, x4, x5) ∈ R5 : x1 = 3x2 and x3 = 7x4}.

Find a basis of U.
(b) Extend the basis in part (a) to a basis of R5.
(c) Find a subspace W of R5 such that R5 = U ⊕ W.

Proof. (a) Let

u1 = (3, 1, 0, 0, 0)

u2 = (0, 0, 7, 1, 0)

u3 = (0, 0, 0, 0, 1).

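We claim that the list u1, u2, u3 is a basis of U. To prove this, pick u = (x1, x2, x3, x4, x5) ∈ U; since x1 = 3x2 and x3 = 7x4, u can be represented as the combination below, and the representation is unique because the 2nd, 4th and 5th coordinates of a1u1 + a2u2 + a3u3 are a1, a2, a3: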

x2u1 + x4u2 + x5u3.


(b) Let

v1 = (1, 0, 0, 0, 0)

v2 = (0, 1, 0, 0, 0)

v3 = (0, 0, 1, 0, 0)

v4 = (0, 0, 0, 1, 0)

v5 = (0, 0, 0, 0, 1).

Then v1, v2, v3, v4, v5 is a basis of R5. Thus the list

u1, u2, u3, v1, v2, v3, v4, v5

spans R5. The procedure of the proof of 2.31 of [Axl14] deletes v2, v4, v5 and leaves v1, v3 unchanged, resulting in the following basis of R5 extended from the basis in part (a):

u1, u2, u3, v1, v3.

(c) Let W = span(v1, v3). We claim that R5 = U ⊕ W. To prove this, by 1.45 of [Axl14], we only need to show that

R5 = U +W and U ∩W = {0}.

To prove the first equation above, suppose v ∈ R5. Then because the list u1, u2, u3, v1, v3 spans R5, there exist a1, a2, a3, b1, b3 ∈ R such that

v = a1u1 + a2u2 + a3u3 + b1v1 + b3v3.

In other words, we have v = u + w, where u = a1u1 + a2u2 + a3u3 ∈ U and w = b1v1 + b3v3 ∈ W. Thus v ∈ U + W, completing the proof that R5 = U + W.

To show that U ∩ W = {0}, suppose v ∈ U ∩ W. Then there exist scalars a1, a2, a3, b1, b3 ∈ R such that

v = a1u1 + a2u2 + a3u3 = b1v1 + b3v3.

Thus

a1u1 + a2u2 + a3u3 − b1v1 − b3v3 = 0.

Because u1, u2, u3, v1, v3 is linearly independent (it is a basis of R5 by (b)), this implies that a1 = a2 = a3 = b1 = b3 = 0. Thus v = 0, completing the proof that U ∩ W = {0}.

□
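The pruning step in (b) can be mimicked numerically: go through the standard basis vectors and keep each one only if it raises the rank of the list kept so far. This is a sketch of that idea, assuming rank computations by exact Gaussian elimination over the rationals (hypothetical helpers `rank` and `extend_to_basis`). Since u1, u2, u3 is already linearly independent, only the vj can be discarded, which matches the procedure described above.

```python
from fractions import Fraction

def rank(vectors):
    """Rank of a list of vectors via Gauss-Jordan elimination over Q."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    if not rows:
        return 0
    r = 0
    for col in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][col] != 0:
                factor = rows[i][col] / rows[r][col]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

def extend_to_basis(independent, spanning):
    """Append each spanning vector that is not in the span of the vectors kept so far."""
    kept = list(independent)
    for v in spanning:
        if rank(kept + [v]) > rank(kept):
            kept.append(v)
    return kept

u = [(3, 1, 0, 0, 0), (0, 0, 7, 1, 0), (0, 0, 0, 0, 1)]
e = [tuple(int(i == j) for j in range(5)) for i in range(5)]   # v1, ..., v5
result = extend_to_basis(u, e)
print(result[3:])   # the kept standard basis vectors
```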

5.4. Exercise 2.B.4: Find and Extend a Basis for a Subspace of C5

(a) Let U be the subspace of C5 defined by

U = {(z1, z2, z3, z4, z5) ∈ C5 : 6z1 = z2 and z3 + 2z4 + 3z5 = 0}.

Find a basis of U.
(b) Extend the basis in part (a) to a basis of C5.
(c) Find a subspace W of C5 such that C5 = U ⊕ W.

Proof. (a) Let

u1 = (1, 6, 0, 0, 0)

u2 = (0, 0,−2, 1, 0)

u3 = (0, 0,−3, 0, 1).


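We claim that the list u1, u2, u3 is a basis of U. To prove this, pick u = (z1, z2, z3, z4, z5) ∈ U; since z2 = 6z1 and z3 = −2z4 − 3z5, u can be represented as the combination below, and the representation is unique because the 1st, 4th and 5th coordinates of a1u1 + a2u2 + a3u3 are a1, a2, a3:

z1u1 + z4u2 + z5u3.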

(b) Let

v1 = (1, 0, 0, 0, 0)

v2 = (0, 1, 0, 0, 0)

v3 = (0, 0, 1, 0, 0)

v4 = (0, 0, 0, 1, 0)

v5 = (0, 0, 0, 0, 1).

Then v1, v2, v3, v4, v5 is a basis of C5. Thus the list

u1, u2, u3, v1, v2, v3, v4, v5

spans C5. The procedure of the proof of 2.31 of [Axl14] deletes v2, v4, v5 and leaves v1, v3 unchanged, resulting in the following basis of C5 extended from the basis in part (a):

u1, u2, u3, v1, v3.

(c) Let W = span(v1, v3). We claim that C5 = U ⊕ W. To prove this, by 1.45 of [Axl14], we only need to show that

C5 = U + W and U ∩ W = {0}.

To prove the first equation above, suppose v ∈ C5. Then because the list u1, u2, u3, v1, v3 spans C5, there exist a1, a2, a3, b1, b3 ∈ C such that

v = a1u1 + a2u2 + a3u3 + b1v1 + b3v3.

In other words, we have v = u + w, where u = a1u1 + a2u2 + a3u3 ∈ U and w = b1v1 + b3v3 ∈ W. Thus v ∈ U + W, completing the proof that C5 = U + W.

To show that U ∩ W = {0}, suppose v ∈ U ∩ W. Then there exist scalars a1, a2, a3, b1, b3 ∈ C such that

v = a1u1 + a2u2 + a3u3 = b1v1 + b3v3.

Thus

a1u1 + a2u2 + a3u3 − b1v1 − b3v3 = 0.

Because u1, u2, u3, v1, v3 is linearly independent (it is a basis of C5 by (b)), this implies that a1 = a2 = a3 = b1 = b3 = 0. Thus v = 0, completing the proof that U ∩ W = {0}.

□
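The basis claimed in part (a) can be spot-checked with complex arithmetic. This sketch verifies that u1, u2, u3 satisfy the defining equations of U, and that z1u1 + z4u2 + z5u3 reproduces randomly generated elements of U. The random parameters are small Gaussian integers so floating-point equality is exact, and the helper names `in_U` and `combo` are hypothetical.

```python
import random

u1 = (1, 6, 0, 0, 0)
u2 = (0, 0, -2, 1, 0)
u3 = (0, 0, -3, 0, 1)

def in_U(z):
    """Defining equations of U (0-based indexing: z[0] is z1)."""
    return 6 * z[0] == z[1] and z[2] + 2 * z[3] + 3 * z[4] == 0

def combo(c1, c4, c5):
    """Return c1*u1 + c4*u2 + c5*u3 as a tuple."""
    return tuple(c1 * a + c4 * b + c5 * c for a, b, c in zip(u1, u2, u3))

assert all(in_U(u) for u in (u1, u2, u3))

rng = random.Random(0)  # fixed seed so the run is reproducible
for _ in range(100):
    # Free parameters z1, z4, z5 as small Gaussian integers.
    z1, z4, z5 = (complex(rng.randint(-5, 5), rng.randint(-5, 5)) for _ in range(3))
    z = (z1, 6 * z1, -2 * z4 - 3 * z5, z4, z5)
    assert in_U(z) and combo(z1, z4, z5) == z
print("ok")
```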

5.5. Exercise 2.B.5: A Basis of P3(F) without Degree-2 Polynomials

Prove or disprove: there exists a basis p0, p1, p2, p3 of P3(F) such that none of the polynomials p0, p1, p2, p3 has degree 2.

Proof. We claim that the following list is a possible choice of such p0, p1, p2, p3:

p0(z) = 1 + z³

p1(z) = z + z³

p2(z) = z² + z³

p3(z) = z³.


To prove this, note that p0, p1, p2, p3 each have degree 3. Thus it suffices to show that p0, p1, p2, p3 is a basis of P3(F).

Pick p ∈ P3(F). As shown in Exercise 2.B.2 (g), 1, z, z², z³ is a basis of P3(F). Thus there exists a unique list of scalars a0, a1, a2, a3 ∈ F such that

p(z) = a0 + a1z + a2z² + a3z³.

Let us denote

A =

1 0 0 10 1 0 10 0 1 10 0 0 1

,then A is a nonsingular matrix and

A−1 =

1 0 0 −10 1 0 −10 0 1 −10 0 0 1

.Using this notation, we have

p0

p1

p2

p3

= A

1zz2

z3

,and

A−1

p0

p1

p2

p3

=

1zz2

z3

.Therefore

p(z) =[a0 a1 a2 a3

] 1zz2

z3

=([a0 a1 a2 a3

]A−1

)p0

p1

p2

p3

.The above equation implies that p0, p1, p2, p3 spans P3(F).To show that p0, p1, p2, p3 is linearly independent, suppose b0, b1, b2, b3 ∈ F are such that

0 =[b0 b1 b2 b3

] p0

p1

p2

p3

.Then the above equation implies that

0 =([b0 b1 b2 b3

]A)

1zz2

z3

.

38 5. BASES

Since $1, z, z^2, z^3$ is linearly independent, this implies that

$$0 = \begin{pmatrix} b_0 & b_1 & b_2 & b_3 \end{pmatrix} A.$$

Since A is nonsingular, this further implies that

$$0 = \begin{pmatrix} b_0 & b_1 & b_2 & b_3 \end{pmatrix}.$$

Thus p0, p1, p2, p3 is linearly independent and hence is a basis of P3(F). □
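As an informal sanity check of the matrix computations above (our own addition, not part of [Axl14]), the following Python sketch stores each p_j as its coefficient vector with respect to $1, z, z^2, z^3$, so that these vectors are exactly the rows of A. It then confirms with exact rational arithmetic that the stated inverse really inverts A, that det A = 1, and that every p_j has degree 3. The helper names `matmul` and `det` are ad hoc choices made only for this illustration.

```python
from fractions import Fraction

# Coefficient vectors (constant, z, z^2, z^3) of p0, ..., p3 from the proof.
p = [
    [1, 0, 0, 1],  # p0(z) = 1 + z^3
    [0, 1, 0, 1],  # p1(z) = z + z^3
    [0, 0, 1, 1],  # p2(z) = z^2 + z^3
    [0, 0, 0, 1],  # p3(z) = z^3
]

# Row j of A is the coefficient vector of p_j, so (p0, ..., p3)^T = A (1, z, z^2, z^3)^T.
A = [[Fraction(x) for x in row] for row in p]
A_inv = [[Fraction(x) for x in row] for row in [
    [1, 0, 0, -1],
    [0, 1, 0, -1],
    [0, 0, 1, -1],
    [0, 0, 0, 1],
]]


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def det(M):
    """Determinant by cofactor expansion along the first row (fine for 4x4)."""
    if len(M) == 1:
        return M[0][0]
    total = Fraction(0)
    for j in range(len(M)):
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * M[0][j] * det(minor)
    return total


identity = [[Fraction(int(i == j)) for j in range(4)] for i in range(4)]
print(matmul(A, A_inv) == identity)  # True
print(det(A))                        # 1
# Degree of each p_j = index of its highest nonzero coefficient.
print([max(k for k, c in enumerate(row) if c != 0) for row in p])  # [3, 3, 3, 3]
```

Cofactor expansion is exponential in the matrix size, but for a 4 × 4 matrix it keeps the code short and obviously correct.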

5.6. Exercise 2.B.6: Constructing Another Basis from a Basis

Suppose v1, v2, v3, v4 is a basis of V . Prove that

v1 + v2, v2 + v3, v3 + v4, v4

is also a basis of V .

Proof. Denote

u1 = v1 + v2

u2 = v2 + v3

u3 = v3 + v4

u4 = v4.

Pick v ∈ V. Then there exists a unique list of scalars a1, a2, a3, a4 ∈ F such that

v = a1v1 + a2v2 + a3v3 + a4v4.

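Let us denote

$$A = \begin{pmatrix} 1 & 1 & 0 & 0 \\ 0 & 1 & 1 & 0 \\ 0 & 0 & 1 & 1 \\ 0 & 0 & 0 & 1 \end{pmatrix};$$

then A is nonsingular (it is upper triangular with every diagonal entry equal to 1, so det A = 1), and

$$A^{-1} = \begin{pmatrix} 1 & -1 & 1 & -1 \\ 0 & 1 & -1 & 1 \\ 0 & 0 & 1 & -1 \\ 0 & 0 & 0 & 1 \end{pmatrix}.$$

Using this notation, we have

$$\begin{pmatrix} u_1 \\ u_2 \\ u_3 \\ u_4 \end{pmatrix} = A \begin{pmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{pmatrix} \quad\text{and}\quad A^{-1} \begin{pmatrix} u_1 \\ u_2 \\ u_3 \\ u_4 \end{pmatrix} = \begin{pmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{pmatrix}.$$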


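Therefore

$$v = \begin{pmatrix} a_1 & a_2 & a_3 & a_4 \end{pmatrix} \begin{pmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{pmatrix} = \left( \begin{pmatrix} a_1 & a_2 & a_3 & a_4 \end{pmatrix} A^{-1} \right) \begin{pmatrix} u_1 \\ u_2 \\ u_3 \\ u_4 \end{pmatrix}.$$

The above equation implies that u1, u2, u3, u4 spans V. To show that u1, u2, u3, u4 is linearly independent, suppose b1, b2, b3, b4 ∈ F are such that

$$0 = \begin{pmatrix} b_1 & b_2 & b_3 & b_4 \end{pmatrix} \begin{pmatrix} u_1 \\ u_2 \\ u_3 \\ u_4 \end{pmatrix}.$$

Then the above equation implies that

$$0 = \left( \begin{pmatrix} b_1 & b_2 & b_3 & b_4 \end{pmatrix} A \right) \begin{pmatrix} v_1 \\ v_2 \\ v_3 \\ v_4 \end{pmatrix}.$$

Since v1, v2, v3, v4 is linearly independent, this implies that

$$0 = \begin{pmatrix} b_1 & b_2 & b_3 & b_4 \end{pmatrix} A.$$

Since A is nonsingular, this further implies that

$$0 = \begin{pmatrix} b_1 & b_2 & b_3 & b_4 \end{pmatrix}.$$

Thus u1, u2, u3, u4 is linearly independent and hence is a basis of V. □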
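The spanning step says that if v has coordinate row vector a = (a1, a2, a3, a4) with respect to v1, v2, v3, v4, then its coordinates with respect to u1, u2, u3, u4 are given by the row vector a A^{-1}. The Python sketch below is our own illustration: it checks that the stated A^{-1} inverts A on both sides and converts one arbitrarily chosen (hypothetical) coordinate vector in both directions using exact fractions.

```python
from fractions import Fraction

# Rows of A express u1, ..., u4 in terms of v1, ..., v4 (u = A v).
A = [[1, 1, 0, 0],
     [0, 1, 1, 0],
     [0, 0, 1, 1],
     [0, 0, 0, 1]]
A_inv = [[1, -1, 1, -1],
         [0, 1, -1, 1],
         [0, 0, 1, -1],
         [0, 0, 0, 1]]


def row_times(row, M):
    """Return the row vector row * M, using exact fractions."""
    return [sum(Fraction(row[k]) * M[k][j] for k in range(len(M)))
            for j in range(len(M[0]))]


def matmul(X, Y):
    """Matrix product X * Y, computed one row of X at a time."""
    return [row_times(r, Y) for r in X]


identity = [[int(i == j) for j in range(4)] for i in range(4)]
print(matmul(A, A_inv) == identity)  # True

# Hypothetical sample: v = 2 v1 - v2 + 3 v3 + 5 v4.
a = [2, -1, 3, 5]
c = row_times(a, A_inv)  # coordinates of v with respect to u1, ..., u4
print([int(x) for x in c])  # [2, -3, 6, -1]
print(row_times(c, A) == a)  # True: c1 u1 + ... + c4 u4 recovers v
```

Indeed, 2(v1 + v2) − 3(v2 + v3) + 6(v3 + v4) − v4 = 2v1 − v2 + 3v3 + 5v4.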

5.7. Exercise 2.B.7: Constructing a Counterexample

Prove or give a counterexample: If v1, v2, v3, v4 is a basis of V and U is a subspace of V such that v1, v2 ∈ U and v3 ∉ U and v4 ∉ U, then v1, v2 is a basis of U.

Counterexample. Take V = R4 and define

$$\begin{aligned} v_1 &= (2, 0, 1, 0), \\ v_2 &= (0, 0, 0, 1), \\ v_3 &= (0, 3, \tfrac{1}{2}, 1), \\ v_4 &= (3, 0, 1, \tfrac{1}{2}). \end{aligned}$$

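We claim that v1, v2, v3, v4 is a basis of V, which can be shown by the following determinant calculation:

$$\det \begin{pmatrix} 2 & 0 & 1 & 0 \\ 0 & 0 & 0 & 1 \\ 0 & 3 & \tfrac{1}{2} & 1 \\ 3 & 0 & 1 & \tfrac{1}{2} \end{pmatrix} = -3.$$

Since the determinant is nonzero, the list is linearly independent, and a linearly independent list of length 4 = dim R4 is a basis of R4.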

Define U = {(2x, y, x, z) ∈ R4 : x, y, z ∈ R}.


We claim that U is a subspace of V. To show this, first note that 0 = (2 · 0, 0, 0, 0) is in U. Suppose u = (2x, y, x, z) ∈ U and w = (2a, b, a, c) ∈ U; then u + w = (2x + 2a, y + b, x + a, z + c) = (2(x + a), y + b, x + a, z + c) is in U. Suppose f ∈ R and u = (2x, y, x, z) ∈ U; then fu = (2fx, fy, fx, fz) = (2(fx), fy, fx, fz) is also in U.

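Further, v1, v2 ∈ U and v3, v4 ∉ U: a vector (a, b, c, d) ∈ R4 lies in U exactly when a = 2c, which holds for v1 and v2 but fails for v3 (0 ≠ 1) and for v4 (3 ≠ 2). Finally, (0, 1, 0, 0) ∈ U cannot be written as a linear combination of v1 and v2, since every such combination has second coordinate 0. We conclude that v1, v2 is not a basis of U. □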
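The Python sketch below, our own check rather than part of the solution, recomputes the determinant with exact fractions and tests membership in U using the criterion that the first coordinate is twice the third. The function names `det` and `in_U` are hypothetical helpers introduced only here.

```python
from fractions import Fraction as Fr

v1 = [Fr(2), Fr(0), Fr(1), Fr(0)]
v2 = [Fr(0), Fr(0), Fr(0), Fr(1)]
v3 = [Fr(0), Fr(3), Fr(1, 2), Fr(1)]
v4 = [Fr(3), Fr(0), Fr(1), Fr(1, 2)]


def det(M):
    """Determinant by cofactor expansion along the first row."""
    if len(M) == 1:
        return M[0][0]
    return sum((-1) ** j * M[0][j] * det([row[:j] + row[j + 1:] for row in M[1:]])
               for j in range(len(M)))


def in_U(u):
    """Membership in U = {(2x, y, x, z)}: the first entry is twice the third."""
    return u[0] == 2 * u[2]


print(det([v1, v2, v3, v4]))                # -3, so v1, ..., v4 is a basis of R^4
print([in_U(v) for v in (v1, v2, v3, v4)])  # [True, True, False, False]

# Every combination a*v1 + b*v2 has second entry 0, yet (0, 1, 0, 0) lies in U.
e2 = [Fr(0), Fr(1), Fr(0), Fr(0)]
print(in_U(e2), v1[1] == 0 and v2[1] == 0)  # True True
```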

5.8. Exercise 2.B.8: Basis Obtained from a Direct Sum

Suppose U and W are subspaces of V such that V = U ⊕ W. Suppose also that u1, . . . , um is a basis of U and w1, . . . , wn is a basis of W. Prove that

u1, . . . , um, w1, . . . , wn

is a basis of V .

Proof. (1) We first prove that u1, . . . , um, w1, . . . , wn spans V. Pick v ∈ V. By the definition of direct sum, v can be written in exactly one way as a sum u + w, where u ∈ U and w ∈ W. By the definition of basis, u can be written as u = a1u1 + · · · + amum and w can be written as w = b1w1 + · · · + bnwn, where a1, . . . , am, b1, . . . , bn ∈ F. Taken together,

v = a1u1 + · · ·+ amum + b1w1 + · · ·+ bnwn.

(2) We then prove that u1, . . . , um, w1, . . . , wn is linearly independent. Suppose x1, . . . , xm, y1, . . . , yn ∈ F are such that

x1u1 + · · ·+ xmum + y1w1 + · · ·+ ynwn = 0.

Then x1u1 + · · · + xmum = −y1w1 − · · · − ynwn.

Denote p = x1u1 + · · · + xmum and q = −y1w1 − · · · − ynwn. Then p ∈ U, q ∈ W and p = q, so p = q ∈ U ∩ W. Since the sum U + W is direct, 1.45 of [Axl14] gives U ∩ W = {0}; hence p = q = 0.

Further, since u1, . . . , um is linearly independent, p = 0 implies that all of x1, . . . , xm are 0. Similarly, since w1, . . . , wn is linearly independent, q = 0 implies that all of y1, . . . , yn are 0. Hence the list is linearly independent, and together with (1) this shows that it is a basis of V.

□
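To make step (1) concrete, here is a small hypothetical example of our own: V = R3, U = {(x, y, 0)} with basis u1 = (1, 0, 0), u2 = (0, 1, 0), and W = span(w1) with w1 = (1, 1, 1), so that R3 = U ⊕ W. For v = (a, b, c), the W-part must account for the third coordinate, which forces w = c·w1 and u = (a − c, b − c, 0). The Python sketch computes these coefficients and rebuilds v from the combined list u1, u2, w1.

```python
from fractions import Fraction

# Hypothetical example: V = R^3, U = {(x, y, 0)} with basis u1, u2,
# and W = span(w1) with w1 = (1, 1, 1); then R^3 = U ⊕ W.
u1, u2, w1 = (1, 0, 0), (0, 1, 0), (1, 1, 1)


def coordinates(v):
    """Split v = u + w with u in U, w in W; return the coefficients on u1, u2, w1."""
    a, b, c = (Fraction(x) for x in v)
    # The W-part must supply the third coordinate, so w = c * w1 and u = v - w.
    return (a - c, b - c, c)


def combine(coeffs, vectors):
    """Return the linear combination sum(coeffs[i] * vectors[i]) in R^3."""
    return tuple(sum(k * vec[i] for k, vec in zip(coeffs, vectors)) for i in range(3))


v = (4, -2, 7)
x1, x2, y1 = coordinates(v)
print(x1, x2, y1)                                # -3 -9 7
print(combine((x1, x2, y1), (u1, u2, w1)) == v)  # True
```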

CHAPTER 6

Dimension

6.1. Exercise 2.C.1: A Space with the Same Dimension as its Subspace

Suppose V is finite-dimensional and U is a subspace of V such that dim U = dim V. Prove that U = V.

Proof. Suppose dim U = dim V = n and u1, . . . , un is a basis of U. Since u1, . . . , un is a linearly independent list in V of length dim V, 2.39 of [Axl14] implies that u1, . . . , un is a basis of V. Hence

U = span(u1, . . . , un) = V.

□

6.2. Exercise 2.C.2: Subspaces of R2

Show that the subspaces of R2 are precisely {0}, R2, and all lines in R2 through the origin.

Proof. Pick a subspace U of R2, then dimU ∈ {0, 1, 2} by 2.39 of [Axl14].

(1) If dim U = 0, then U = {0}.

(2) If dim U = 1, then there exists a nonzero vector v = (x, y) ∈ R2 such that

U = span(v).

Hence, geometrically, U can be viewed as a line in R2 passing through the points (0, 0) and (x, y).

(3) If dimU = 2, then Exercise 2.C.1 implies that U = R2.

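Conversely, {0} and R2 are subspaces of R2, and every line in R2 through the origin has the form span(v) for some nonzero v ∈ R2, so it is a subspace as well. □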

6.3. Exercise 2.C.3: Subspaces of R3

Show that the subspaces of R3 are precisely {0}, R3, all lines in R3 through the origin, and all planes in R3 through the origin.

Proof. Pick a subspace U of R3, then dimU ∈ {0, 1, 2, 3} by 2.39 of [Axl14].

(1) If dim U = 0, then U = {0}.

(2) If dim U = 1, then there exists a nonzero vector v = (x, y, z) ∈ R3 such that

U = span(v).

Hence, geometrically, U can be viewed as a line in R3 passing through the points (0, 0, 0) and (x, y, z).

(3) If dim U = 2, then there exist vectors v = (v1, v2, v3) and w = (w1, w2, w3) in R3 such that

U = span(v, w).

Geometrically, since the list v, w is linearly independent, v and w do not lie on a common line through the origin. Hence U is the plane in R3 passing through the three points (v1, v2, v3), (w1, w2, w3) and (0, 0, 0).


(4) If dimU = 3, then Exercise 2.C.1 implies that U = R3.

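Conversely, {0} and R3 are subspaces of R3, and every line (respectively, plane) in R3 through the origin is the span of one nonzero vector (respectively, of two linearly independent vectors), so it is a subspace as well. □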
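The proofs of Exercises 2.C.2 and 2.C.3 sort a subspace by its dimension. When a subspace of R3 is given as the span of a finite list, that dimension is the rank of the list, so the classification can be carried out mechanically. The Python sketch below is our own illustration; `rank` (exact Gaussian elimination over the rationals) and `describe` are hypothetical helper names, and the sample lists are arbitrary.

```python
from fractions import Fraction


def rank(vectors):
    """Rank of a list of vectors via Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    r = 0
    ncols = len(rows[0]) if rows else 0
    for col in range(ncols):
        # Find a row at or below position r with a nonzero entry in this column.
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Clear this column in every other row.
        for i in range(len(rows)):
            if i != r and rows[i][col] != 0:
                factor = rows[i][col] / rows[r][col]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def describe(vectors):
    """Name span(vectors) in R^3 according to its dimension, as in the proof."""
    names = ["{0}", "a line through the origin", "a plane through the origin", "R^3"]
    return names[rank(vectors)]


print(describe([(0, 0, 0)]))                        # {0}
print(describe([(1, 2, 3), (2, 4, 6)]))             # a line through the origin
print(describe([(1, 0, 0), (0, 1, 0), (1, 1, 0)]))  # a plane through the origin
print(describe([(1, 0, 0), (0, 1, 0), (0, 0, 1)]))  # R^3
```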

6.4. Exercise 2.C.4: Find and Extend a Basis for a Subspace of P4(F)

(a) Let U = {p ∈ P4(F) : p(6) = 0}. Find a basis of U.

(b) Extend the basis in part (a) to a basis of P4(F).

(c) Find a subspace W of P4(F) such that P4(F) = U ⊕ W.

Proof. (a) We first verify that U is a subspace of P4(F). First, 0 ∈ U since 0(6) = 0. If p, q ∈ U, then p + q ∈ U because (p + q)(6) = p(6) + q(6) = 0. If a ∈ F and p ∈ U, then ap ∈ U because (ap)(6) = ap(6) = 0.

We claim that the following list is a basis of U :

$$z - 6,\quad z^2 - 6^2,\quad z^3 - 6^3,\quad z^4 - 6^4.$$

Clearly each of the above polynomials is in U . Suppose a, b, c, d ∈ F and

$$a(z - 6) + b(z^2 - 6^2) + c(z^3 - 6^3) + d(z^4 - 6^4) = 0$$

for every z ∈ F. Without explicitly expanding the left side of the equation above, we can see that the left side has a $dz^4$ term. Because the right side has no $z^4$ term, this implies that d = 0. Because d = 0, we see that the left side has a $cz^3$ term, which implies that c = 0. Similarly, b = a = 0.

Thus the equation above implies that a = b = c = d = 0. Hence the list is linearly independent in U, so dim U ≥ 4. Since U is a subspace of P4(F), which has dimension 5, we conclude that

$$4 \le \dim U \le 5.$$

But Exercise 2.A.17 has shown that any list of vectors in U that has length 5 is not linearly independent, which means that dim U ≠ 5. Thus dim U = 4, and 2.39 of [Axl14] implies that the list is a basis of U.

(b) We claim that the following list is a basis of P4(F):

$$1,\quad z - 6,\quad z^2 - 6^2,\quad z^3 - 6^3,\quad z^4 - 6^4.$$

Clearly each of the above polynomials is in P4(F). Suppose a0, a1, a2, a3, a4 ∈ F and

$$a_0 + a_1(z - 6) + a_2(z^2 - 6^2) + a_3(z^3 - 6^3) + a_4(z^4 - 6^4) = 0$$

for every z ∈ F. Without explicitly expanding the left side of the equation above, we can see that the left side has an $a_4 z^4$ term. Because the right side has no $z^4$ term, this implies that a4 = 0. Because a4 = 0, we see that the left side has an $a_3 z^3$ term, which implies that a3 = 0. Similarly, a2 = a1 = 0. Because a4 = a3 = a2 = a1 = 0, the equation reduces to a0 = 0.

Hence the list is linearly independent in P4(F). Since dim P4(F) = 5, 2.39 of [Axl14] implies that the list is a basis of P4(F).

(c) Define

W ≡ {a0 : a0 ∈ F},

the subspace of constant polynomials. We claim that P4(F) = U ⊕ W.

To prove this, by 1.45 of [Axl14], we only need to show that

P4(F) = U +W and U ∩W = {0}.


To prove the first equation above, suppose v ∈ P4(F). Then, because the list $1, z - 6, z^2 - 6^2, z^3 - 6^3, z^4 - 6^4$ spans P4(F), there exist a0, a1, a2, a3, a4 ∈ F such that

$$v = \underbrace{a_0}_{w} + \underbrace{a_1(z - 6) + a_2(z^2 - 6^2) + a_3(z^3 - 6^3) + a_4(z^4 - 6^4)}_{u}.$$

In other words, we have v = u + w, where u ∈ U and w ∈ W are defined as above. Thus v ∈ U + W, completing the proof that P4(F) = U + W.

To show that U ∩ W = {0}, suppose v ∈ U ∩ W. Then there exist scalars a0, a1, a2, a3, a4 ∈ F such that

$$v = a_0 = a_1(z - 6) + a_2(z^2 - 6^2) + a_3(z^3 - 6^3) + a_4(z^4 - 6^4).$$

Thus

$$a_0 - a_1(z - 6) - a_2(z^2 - 6^2) - a_3(z^3 - 6^3) - a_4(z^4 - 6^4) = 0.$$

Because the list $1, z - 6, z^2 - 6^2, z^3 - 6^3, z^4 - 6^4$ is linearly independent, this implies that a0 = a1 = a2 = a3 = a4 = 0. Thus v = 0, completing the proof that U ∩ W = {0}.

□
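The Python sketch below is our own check of parts (a) to (c), with polynomials stored as coefficient lists [c0, c1, c2, c3, c4]. It confirms that the four polynomials from part (a) vanish at 6, that the coefficient matrix of the list from part (b) is lower triangular with 1s on the diagonal (so the list is linearly independent), and it splits a sample polynomial as in part (c). Evaluating v = a0 + u at 6 and using u(6) = 0 shows that the constant a0 in part (c) equals v(6); the helper names and the sample polynomial are hypothetical.

```python
# Polynomials in P4(F) are stored as coefficient lists [c0, c1, c2, c3, c4].


def evaluate(coeffs, z):
    """Evaluate the polynomial c0 + c1 z + ... at z using Horner's rule."""
    result = 0
    for c in reversed(coeffs):
        result = result * z + c
    return result


# Part (b): coefficient lists of 1, z - 6, z^2 - 6^2, z^3 - 6^3, z^4 - 6^4.
basis = [[1, 0, 0, 0, 0]] + [
    [-(6 ** k)] + [1 if j == k else 0 for j in range(1, 5)] for k in range(1, 5)
]

# Part (a): the last four polynomials vanish at 6, so they lie in U.
print([evaluate(q, 6) for q in basis[1:]])  # [0, 0, 0, 0]

# Stacked as rows, the coefficient lists form a lower triangular matrix with 1s
# on the diagonal (determinant 1), so the five polynomials are linearly independent.
lower_unitriangular = all(
    basis[i][i] == 1 and all(basis[i][j] == 0 for j in range(i + 1, 5))
    for i in range(5)
)
print(lower_unitriangular)  # True


def split(coeffs):
    """Part (c): write p = u + w with u in U and w in W (the constants)."""
    w = evaluate(coeffs, 6)  # the constant a0 in the proof equals p(6)
    u = [coeffs[0] - w] + list(coeffs[1:])
    return u, w


p = [5, 0, -3, 0, 1]  # hypothetical sample p(z) = 5 - 3z^2 + z^4
u, w = split(p)
print(w, evaluate(u, 6))  # 1193 0
```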

6.5. Exercise 2.C.9: dim span(v1 + w, . . . , vm + w) ≥ m − 1

Suppose v1, . . . , vm is linearly independent in V and w ∈ V . Prove that

$$\dim \operatorname{span}(v_1 + w, \dots, v_m + w) \ge m - 1.$$
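Before the proof, a numerical illustration (not a proof) may help: the Python sketch below, our own addition, takes the standard basis of R3 as v1, v2, v3 (so m = 3) and computes dim span(v1 + w, v2 + w, v3 + w) for a few hypothetical choices of w. The choice w = −v1 makes v1 + w = 0 and shows that the bound m − 1 can be attained. The helpers `rank` and `shifted_rank` are names introduced only for this sketch.

```python
from fractions import Fraction


def rank(vectors):
    """Rank of a list of vectors via Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    r = 0
    ncols = len(rows[0]) if rows else 0
    for col in range(ncols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][col] != 0:
                factor = rows[i][col] / rows[r][col]
                rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def shifted_rank(vs, w):
    """dim span(v1 + w, ..., vm + w) for vectors given as tuples."""
    return rank([[a + b for a, b in zip(v, w)] for v in vs])


vs = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]  # linearly independent, m = 3
print(shifted_rank(vs, (1, 1, 1)))      # 3
print(shifted_rank(vs, (-1, 0, 0)))     # 2 = m - 1: the bound is attained
print(all(shifted_rank(vs, w) >= len(vs) - 1
          for w in [(0, 0, 0), (2, -1, 5), (0, -1, 0)]))  # True
```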

Proof. Define

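$$\begin{aligned} U &\equiv \operatorname{span}(v_1 + w, \dots, v_m + w), \\ w_1 &\equiv (v_1 + w) - (v_2 + w) = v_1 - v_2, \\ w_2 &\equiv (v_2 + w) - (v_3 + w) = v_2 - v_3, \\ &\ \ \vdots \\ w_{m-1} &\equiv (v_{m-1} + w) - (v_m + w) = v_{m-1} - v_m, \\ W &\equiv \operatorname{span}(w_1, \dots, w_{m-1}). \end{aligned}$$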

Then W is a subspace of U, because each wj is a difference of two vectors in U. Suppose a1, . . . , am−1 ∈ F and a1w1 + · · · + am−1wm−1 = 0; then

[a1 a2 . . . am−1

]