Lecture 10: ℓ2 regularization
STAT 598z: Introduction to computing for statistics

Vinayak Rao
Department of Statistics, Purdue University

February 14, 2019

Ordinary least squares

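Consider linear regression:

$$y = x^\top w + \epsilon$$

In vector notation:

$$y = X^\top w + \epsilon, \quad y \in \mathbb{R}^n,\ w \in \mathbb{R}^p,\ X \in \mathbb{R}^{p \times n}$$

$$\hat{w} = \arg\min_w \|y - X^\top w\|^2 = \arg\min_w \sum_{i=1}^n (y_i - x_i^\top w)^2$$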

Ordinary least squares

Problem:

$$\hat{w} = \arg\min_w \|y - X^\top w\|^2 = \arg\min_w \sum_{i=1}^n (y_i - x_i^\top w)^2$$

Solution:

$$\hat{w} = (XX^\top)^{-1} X y \quad \text{(correlation in 1-d)}$$

How to do this in R (without using lm)?

• Do not invert with solve and multiply!
• Directly solve $(XX^\top) w = Xy$
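The advice above can be illustrated with a minimal sketch. It is written in NumPy rather than R (in R the corresponding calls would be `solve(A, b)` versus `solve(A) %*% b`), and the data sizes, seed, and variable names are assumptions made up for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
p, n = 3, 50                        # hypothetical sizes: p features, n observations
X = rng.normal(size=(p, n))         # columns are observations, as in the slides
w_true = np.array([1.0, -2.0, 0.5])
y = X.T @ w_true + 0.1 * rng.normal(size=n)

A = X @ X.T                         # p x p matrix XX^T
b = X @ y                           # p-vector Xy

# Preferred: solve the linear system (XX^T) w = Xy directly.
w_solve = np.linalg.solve(A, b)

# Discouraged: form the inverse explicitly, then multiply.
# It gives the same answer here but costs more and is less stable numerically.
w_inv = np.linalg.inv(A) @ b

# Cross-check against a generic least-squares routine applied to X^T w ~ y.
w_lstsq, *_ = np.linalg.lstsq(X.T, y, rcond=None)
```

On a small, well-conditioned problem like this one all three estimates agree; the difference between solving and inverting shows up mainly when XX⊤ is large or nearly singular.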

Prediction error

$\hat{w}$ is an unbiased estimate of the true $w$.

For a test vector $x^{\text{test}}$ we predict $\hat{w}^\top x^{\text{test}}$.

(Squared) prediction error:

$$\mathrm{PE}^2 = \frac{1}{k} \sum_{i=1}^k \left(y_i^{\text{test}} - \hat{w}^\top x_i^{\text{test}}\right)^2$$

Can show:

• PE has mean 0
• variance grows with number of features (p)

What if p > n?

• $XX^\top$ is singular
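As a rough illustration of the two points on this slide, the sketch below computes the squared prediction error on a tiny hand-checkable example and then counts the nonzero eigenvalues of XX⊤ when p > n. The function name `prediction_error_sq`, the sizes, and the seed are assumptions for the example only.

```python
import numpy as np

def prediction_error_sq(w_hat, X_test, y_test):
    """Mean squared prediction error (1/k) * sum_i (y_i - w_hat^T x_i)^2.

    X_test has shape (p, k): one column per test point.
    """
    residuals = y_test - X_test.T @ w_hat
    return np.mean(residuals ** 2)

# Hand-checkable case: p = 1, k = 2 test points.
X_test = np.array([[1.0, 2.0]])
y_test = np.array([2.0, 5.0])
w_hat = np.array([2.0])
pe2 = prediction_error_sq(w_hat, X_test, y_test)   # residuals are 0 and 1

# With p > n, the p x p matrix XX^T has at most n nonzero eigenvalues,
# so it cannot be inverted.
rng = np.random.default_rng(1)
p, n = 5, 3
X = rng.normal(size=(p, n))
eigvals = np.linalg.eigvalsh(X @ X.T)
n_nonzero = int(np.sum(eigvals > 1e-8 * eigvals.max()))
```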

Regularization

p > n:

• Cannot invert $XX^\top$
• We can invert if we add a small λ to the diagonal

$$\hat{w}_\lambda = (XX^\top + \lambda I)^{-1} X y \quad \text{($I$ is the identity matrix)}$$

Introducing λ makes the problem well-posed, but introduces bias

• λ = 0 recovers OLS
• Larger λ causes larger bias
• λ = ∞? No variance!

λ trades off bias and variance

Maybe a nonzero λ is actually good?
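A minimal sketch of the regularized solve, assuming the slides' convention that X has shape p × n. The helper name `ridge_solve`, the λ grid, and the random data are hypothetical. It shows that the system becomes solvable for p > n once λ > 0, that the estimate shrinks as λ grows, and that λ = 0 recovers OLS when XX⊤ is invertible.

```python
import numpy as np

def ridge_solve(X, y, lam):
    """Solve (XX^T + lam * I) w = Xy for w, with X of shape (p, n)."""
    p = X.shape[0]
    return np.linalg.solve(X @ X.T + lam * np.eye(p), X @ y)

rng = np.random.default_rng(2)

# More features than observations: XX^T alone is singular,
# but XX^T + lam * I is invertible for any lam > 0.
p, n = 5, 3
X = rng.normal(size=(p, n))
y = rng.normal(size=n)
lams = [0.01, 1.0, 100.0]
norms = [np.linalg.norm(ridge_solve(X, y, lam)) for lam in lams]

# With n > p and lam = 0 the ridge solve reduces to ordinary least squares.
X2 = rng.normal(size=(2, 20))
y2 = rng.normal(size=20)
w_ols, *_ = np.linalg.lstsq(X2.T, y2, rcond=None)
w_lam0 = ridge_solve(X2, y2, 0.0)
```

The entries of `norms` decrease as λ increases, which is the bias side of the trade-off: the estimate is pulled towards zero regardless of the data.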

Ridge regression (a.k.a. Tikhonov regularization)

Recall $\hat{w} = (XX^\top)^{-1} X y$ solves $\hat{w} = \arg\min_w \|y - X^\top w\|^2$

$\hat{w}_\lambda = (XX^\top + \lambda I)^{-1} X y$ solves

$$\hat{w}_\lambda = \arg\min_w L_\lambda(w) := \arg\min_w \sum_{i=1}^n (y_i - x_i^\top w)^2 + \lambda \|w\|_2^2$$

$\|w\|_2^2 = \sum_{i=1}^p w_i^2$ is the squared ℓ2-norm

$\lambda \|w\|_2^2$ is the shrinkage penalty.

Favours w's with smaller components

λ trades off small training error against 'simple' solutions

ℓ2/ridge/Tikhonov regularization
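One way to convince yourself that the closed form minimizes $L_\lambda$ is a numerical check: the gradient $-2X(y - X^\top w) + 2\lambda w$ should vanish at $\hat{w}_\lambda$, and any perturbation should increase the loss. The sketch below does this on made-up data; the function names, sizes, and λ value are assumptions.

```python
import numpy as np

def ridge_loss(w, X, y, lam):
    """L_lambda(w) = sum_i (y_i - x_i^T w)^2 + lam * ||w||_2^2, X of shape (p, n)."""
    return np.sum((y - X.T @ w) ** 2) + lam * np.sum(w ** 2)

def ridge_grad(w, X, y, lam):
    """Gradient of L_lambda with respect to w."""
    return -2.0 * X @ (y - X.T @ w) + 2.0 * lam * w

rng = np.random.default_rng(3)
p, n, lam = 4, 30, 2.0
X = rng.normal(size=(p, n))
y = rng.normal(size=n)

# Closed-form ridge estimate.
w_lam = np.linalg.solve(X @ X.T + lam * np.eye(p), X @ y)

grad_norm = np.linalg.norm(ridge_grad(w_lam, X, y, lam))
loss_at_min = ridge_loss(w_lam, X, y, lam)

# Loss at a few randomly perturbed points; each should exceed loss_at_min.
perturbed = [ridge_loss(w_lam + 0.1 * rng.normal(size=p), X, y, lam)
             for _ in range(5)]
```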

Ridge regression (solution)

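Simple modification of the least-squares solution:

$$\hat{w}_\lambda = (XX^\top + \lambda I_p)^{-1} X y$$

In the 1-dimensional case (p = 1, with $x \in \mathbb{R}^n$ the vector of the n inputs),

$$\hat{w}_\lambda = (x^\top x + \lambda)^{-1} x^\top y = \frac{x^\top x}{x^\top x + \lambda} \cdot \frac{x^\top y}{x^\top x} = c\,\hat{w} \quad (c < 1 \text{ for } \lambda > 0)$$

Shrinks least-squares solution.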
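The 1-dimensional shrinkage can be checked with a few made-up numbers. The data and the value of λ below are assumptions chosen so that the factor c works out to 14 / (14 + 7) = 2/3.

```python
import numpy as np

x = np.array([1.0, 2.0, 3.0])       # n = 3 scalar inputs, so x^T x = 14
y = np.array([2.0, 4.0, 6.5])
lam = 7.0

w_ols = (x @ y) / (x @ x)           # least-squares slope
w_ridge = (x @ y) / (x @ x + lam)   # ridge slope
c = (x @ x) / (x @ x + lam)         # shrinkage factor, here 14 / 21
```

Here `w_ridge` equals `c * w_ols`, so the ridge slope is the least-squares slope scaled down by a factor that depends only on x and λ.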

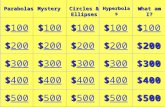

Ridge regression

Credit data set (average credit card debt)

[Figure: standardized coefficients for Income, Limit, Rating and Student, plotted against λ (left, log scale from 1e−02 to 1e+04) and against $\|\hat{\beta}^R_\lambda\|_2 / \|\hat{\beta}\|_2$ (right, from 0 to 1). Source: James, Witten, Hastie and Tibshirani.]

How do we choose λ?

Cross-validation:

• Pick a set of λ's
• For kth fold of cross-validation:
  • For each λ:
    • Solve the regularized least squares problem on training data.
    • Evaluate estimated w on held-out data (call this $\mathrm{PE}_{\lambda,k}$).
• Pick $\lambda = \arg\min_\lambda \mathrm{mean}(\mathrm{PE}_\lambda)$, or $\arg\min_\lambda \left(\mathrm{mean}(\mathrm{PE}_\lambda) + \mathrm{stderr}(\mathrm{PE}_\lambda)\right)$
• Having chosen λ, solve regularized least squares on all data
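The procedure above can be sketched as follows. This is an illustrative NumPy version under several assumptions: the helper names `ridge_solve` and `cv_ridge`, the fold count, the λ grid, and the synthetic data are all made up, and only the plain argmin-of-the-mean rule is implemented (not the variant that adds the standard error).

```python
import numpy as np

def ridge_solve(X, y, lam):
    """Solve (XX^T + lam * I) w = Xy, with X of shape (p, n)."""
    p = X.shape[0]
    return np.linalg.solve(X @ X.T + lam * np.eye(p), X @ y)

def cv_ridge(X, y, lams, n_folds=5, seed=0):
    """Return (best_lam, mean_pe); mean_pe[j] is the mean held-out error for lams[j]."""
    n = X.shape[1]
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n), n_folds)
    pe = np.zeros((len(lams), n_folds))
    for k, held_out in enumerate(folds):
        train = np.setdiff1d(np.arange(n), held_out)
        for j, lam in enumerate(lams):
            # Fit on the training columns, evaluate on the held-out columns.
            w = ridge_solve(X[:, train], y[train], lam)
            resid = y[held_out] - X[:, held_out].T @ w
            pe[j, k] = np.mean(resid ** 2)
    mean_pe = pe.mean(axis=1)
    best_lam = lams[int(np.argmin(mean_pe))]
    return best_lam, mean_pe

rng = np.random.default_rng(4)
p, n = 10, 40
X = rng.normal(size=(p, n))
w_true = rng.normal(size=p)
y = X.T @ w_true + rng.normal(size=n)

lams = [0.01, 0.1, 1.0, 10.0, 100.0]
best_lam, mean_pe = cv_ridge(X, y, lams)

# Having chosen lambda, refit on all of the data.
w_final = ridge_solve(X, y, best_lam)
```

Which λ wins depends on the data and on the random fold assignment, so the sketch makes no claim about the selected value.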

Does this work?

[Figure: mean squared error (0 to 60) plotted against λ (left, log scale from 1e−01 to 1e+03) and against $\|\hat{\beta}^R_\lambda\|_2 / \|\hat{\beta}\|_2$ (right, from 0 to 1).]

Ridge regression improves performance by reducing variance

• does not perform feature selection
• just shrinks components of w towards 0

For the former (feature selection): Lasso
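To illustrate the claim that ridge shrinks coefficients without selecting features, the sketch below tracks the ratio $\|\hat{w}_\lambda\|_2 / \|\hat{w}\|_2$ (the quantity on the right-hand axis of the plots) and counts coefficients that are exactly zero. The synthetic data, in which three of the six true coefficients are zero, and the λ grid are assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(5)
p, n = 6, 50
X = rng.normal(size=(p, n))
w_true = np.array([3.0, -2.0, 0.0, 0.0, 1.0, 0.0])   # three irrelevant features
y = X.T @ w_true + rng.normal(size=n)

w_ols = np.linalg.solve(X @ X.T, X @ y)

lams = [1.0, 10.0, 100.0, 1000.0]
ratios = []    # ||w_lambda||_2 / ||w_ols||_2 for each lambda
n_zeros = []   # number of coefficients that are exactly zero
for lam in lams:
    w_lam = np.linalg.solve(X @ X.T + lam * np.eye(p), X @ y)
    ratios.append(np.linalg.norm(w_lam) / np.linalg.norm(w_ols))
    n_zeros.append(int(np.sum(w_lam == 0.0)))
```

The ratio falls towards 0 as λ grows, yet no coefficient becomes exactly zero, even for the features whose true weight is zero.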

Regularization as constrained optimization

$$\arg\min_w \|y - X^\top w\|^2 + \lambda \|w\|_2^2$$

is equivalent to

$$\arg\min_w \|y - X^\top w\|^2 \quad \text{s.t.} \quad \|w\|_2^2 \le \gamma$$

(Note: γ will depend on data)

First problem: regularized optimization
Second problem: constrained optimization
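A numerical sanity check of the equivalence, under assumed data: take $\gamma = \|\hat{w}_\lambda\|_2^2$ for the ridge solution at a given λ, sample points inside the ball $\|w\|_2^2 \le \gamma$, and confirm that none of them achieves a smaller residual sum of squares than $\hat{w}_\lambda$. The helper names, sizes, and sample count are hypothetical, and random sampling only illustrates the statement rather than proving it.

```python
import numpy as np

def rss(w, X, y):
    """Residual sum of squares ||y - X^T w||^2, with X of shape (p, n)."""
    return np.sum((y - X.T @ w) ** 2)

rng = np.random.default_rng(6)
p, n, lam = 3, 25, 5.0
X = rng.normal(size=(p, n))
y = rng.normal(size=n)

# Ridge solution and the constraint level it corresponds to.
w_lam = np.linalg.solve(X @ X.T + lam * np.eye(p), X @ y)
gamma = np.sum(w_lam ** 2)

def sample_in_ball(radius):
    """Draw a point uniformly from the p-dimensional ball of the given radius."""
    direction = rng.normal(size=p)
    direction /= np.linalg.norm(direction)
    return radius * rng.uniform() ** (1.0 / p) * direction

feasible = [sample_in_ball(np.sqrt(gamma)) for _ in range(1000)]
rss_feasible = np.array([rss(w, X, y) for w in feasible])
rss_lam = rss(w_lam, X, y)
```

Because γ is computed from the fitted $\hat{w}_\lambda$, it changes with the data, which is the point of the note on the slide.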
