ImageNet Classification with Deep Convolutional Neural Networks

Choi Yongchan

Department of Statistics

May 4, 2017

Choi Yongchan (Department of Statistics), ImageNet Classification with Deep Convolutional Neural Networks, May 4, 2017, 1 / 17

Outline

Dataset

Architecture

Reducing Overfitting

Results

Discussion

Dataset

ImageNet Large-Scale Visual Recognition Challenge (ILSVRC)

Model performance is evaluated on the ILSVRC-2010 data.

Roughly 1000 images in each of 1000 categories.

1.2 million training images, 50,000 validation images, 150,000 test images

Images are down-sampled to a fixed resolution of 256 × 256.
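The paper describes the down-sampling more precisely: a rectangular image is first rescaled so that its shorter side is 256 pixels, and then the central 256 × 256 patch is cropped out. The sketch below only computes that geometry (no pixel resampling); the function name `rescale_and_center_crop` is hypothetical, and rounding the longer side to the nearest pixel is an assumption.

```python
def rescale_and_center_crop(width, height, target=256):
    """Return (new_width, new_height, crop_box) for the resize-then-crop step.

    The shorter side is scaled to `target`, the longer side keeps the aspect
    ratio (rounded to the nearest pixel, an assumption), and crop_box is the
    (left, top, right, bottom) box of the central target x target patch.
    """
    scale = target / min(width, height)
    new_w = max(target, round(width * scale))
    new_h = max(target, round(height * scale))
    left = (new_w - target) // 2
    top = (new_h - target) // 2
    return new_w, new_h, (left, top, left + target, top + target)


# A 500 x 375 landscape photo is resized to 341 x 256, then the middle 256 x 256 is kept.
print(rescale_and_center_crop(500, 375))  # (341, 256, (42, 0, 298, 256))
```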

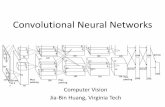

Architecture

Architecture

- ReLU Nonlinearity

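The standard way to model a neuron's output f as a function of its input x is a saturating nonlinearity:

$f(x) = \tanh(x)$, $f(x) = (1 + e^{-x})^{-1}$

Non-saturating nonlinearity (Rectified Linear Unit, ReLU), which trains several times faster with gradient descent:

$f(x) = \max(0, x)$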
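To make "saturating" concrete, the sketch below compares the derivative of each nonlinearity at increasingly large inputs. The derivatives of tanh and the logistic sigmoid shrink toward zero as |x| grows, while the ReLU derivative stays at 1 for any positive input, so the gradient does not vanish on the active side. The helper names are illustrative, not taken from the paper.

```python
import math


def tanh_grad(x):
    # d/dx tanh(x) = 1 - tanh(x)^2
    return 1.0 - math.tanh(x) ** 2


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    # d/dx sigma(x) = sigma(x) * (1 - sigma(x))
    s = sigmoid(x)
    return s * (1.0 - s)


def relu(x):
    return max(0.0, x)


def relu_grad(x):
    # Subgradient convention: 0 for x <= 0, 1 for x > 0.
    return 1.0 if x > 0 else 0.0


for x in (0.5, 2.0, 5.0):
    print(f"x={x}: tanh'={tanh_grad(x):.5f}  sigmoid'={sigmoid_grad(x):.5f}  relu'={relu_grad(x):.1f}")
```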

Architecture

- Training on Multiple GPUs

Two GTX 580 GPUs with 3 GB of memory each

The GPUs communicate only in certain layers.

This scheme reduces the top-1 and top-5 error rates by 1.7 and 1.2 percent, respectively, compared with a net with half as many kernels in each convolutional layer trained on one GPU.
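The effect of the split on the kernel shapes can be seen with a little arithmetic. In the paper, the kernels of the third convolutional layer take input from all kernel maps of the second layer, while the kernels of layers 2, 4 and 5 take input only from the maps residing on the same GPU. The hypothetical helper below counts convolution weights (biases ignored) when the input maps are split into `groups` GPU-local halves.

```python
def conv_weight_count(num_kernels, kernel_size, in_channels, groups=1):
    """Weights in a conv layer whose input maps are split into `groups`
    disjoint groups (one per GPU); each kernel sees only its own group."""
    assert in_channels % groups == 0 and num_kernels % groups == 0
    channels_per_kernel = in_channels // groups
    return num_kernels * kernel_size * kernel_size * channels_per_kernel


# Layer 2: 256 kernels of 5 x 5 over 96 input maps, 48 of which sit on each GPU.
split = conv_weight_count(256, 5, 96, groups=2)  # GPU-local: kernels are 5 x 5 x 48
full = conv_weight_count(256, 5, 96, groups=1)   # hypothetical variant seeing all 96 maps
print(split, full)  # 307200 614400

# Layer 3 communicates across GPUs, so each of its 384 kernels is 3 x 3 x 256.
print(conv_weight_count(384, 3, 256, groups=1))
```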

Architecture

- Local Response Normalization

ReLUs have the desirable property that they do not require input normalization to prevent them from saturating.

However, the following local normalization scheme aids generalization.

This scheme reduces the top-1 and top-5 error rates by 1.4 and 1.2 percent, respectively.

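$$b^i_{x,y} = a^i_{x,y} \Big/ \Big(k + \alpha \sum_{j=\max(0,\, i-n/2)}^{\min(N-1,\, i+n/2)} \big(a^j_{x,y}\big)^2\Big)^{\beta}$$

where $a^i_{x,y}$ is the ReLU output of kernel $i$ at position $(x, y)$, $N$ is the total number of kernels in the layer, and the sum runs over $n$ adjacent kernel maps at the same spatial position.

$k = 2$, $n = 5$, $\alpha = 10^{-4}$, $\beta = 0.75$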
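The normalization can be evaluated directly for the activities at a single spatial position (x, y). The sketch below assumes `activities[i]` holds $a^i_{x,y}$ for kernels i = 0..N−1 and reads n/2 as integer division (so n = 5 gives a window of two maps on each side); the function name is hypothetical.

```python
def local_response_norm(activities, k=2.0, n=5, alpha=1e-4, beta=0.75):
    """Local response normalization across kernel maps at one spatial position.

    activities[i] is the ReLU output a^i of kernel i; returns the list of b^i.
    """
    N = len(activities)
    half = n // 2  # n/2 read as integer division (assumption)
    out = []
    for i, a_i in enumerate(activities):
        lo = max(0, i - half)
        hi = min(N - 1, i + half)
        # Sum of squares over the adjacent kernel maps, clipped at the edges.
        sq_sum = sum(activities[j] ** 2 for j in range(lo, hi + 1))
        out.append(a_i / (k + alpha * sq_sum) ** beta)
    return out


print(local_response_norm([0.0, 1.0, 10.0, 100.0, 1.0, 0.0]))
```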

Architecture

- Overlapping Pooling

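Traditional pooling: neighboring pooling windows do not overlap, because the stride s between pooling units equals the window size z (s = z)

Overlapping pooling: s < z (AlexNet uses s = 2, z = 3)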

This scheme reduces the top-1 and top-5 error rates by 0.4 and 0.3 percent, respectively, as compared with the non-overlapping scheme s = 2, z = 2.
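Pooling with window z and stride s can be written out directly. The sketch below is a plain-Python max pooling over one kernel map stored as a list of rows; the helper names are hypothetical. On a 55 × 55 map both s = 2, z = 3 and s = 2, z = 2 produce a 27 × 27 output, so the comparison above is made at equal output size.

```python
def pooled_size(size, z, s):
    # Number of windows of width z placed every s pixels (no padding).
    return (size - z) // s + 1


def max_pool_2d(grid, z, s):
    """Max pooling with a z x z window and stride s over a list of rows."""
    rows, cols = len(grid), len(grid[0])
    return [
        [
            max(grid[r][c] for r in range(i * s, i * s + z) for c in range(j * s, j * s + z))
            for j in range(pooled_size(cols, z, s))
        ]
        for i in range(pooled_size(rows, z, s))
    ]


grid = [[5 * r + c for c in range(5)] for r in range(5)]
print(max_pool_2d(grid, z=3, s=2))  # overlapping: [[12, 14], [22, 24]]
print(max_pool_2d(grid, z=2, s=2))  # non-overlapping: [[6, 8], [16, 18]]
```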

Architecture

1st Convolutional layer

96 kernels of size 11 × 11 × 3 (stride 4)

2nd Convolutional layer

256 kernels of size 5 × 5 × 48

3rd Convolutional layer (connected across both GPUs)

384 kernels of size 3 × 3 × 256

4th Convolutional layer

384 kernels of size 3 × 3 × 192

5th Convolutional layer

256 kernels of size 3 × 3 × 192

Fully connected layers have 4096 neurons each.
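The spatial size of each layer follows from the kernel sizes and strides. The paper states a 224 × 224 × 3 input, but (224 − 11)/4 + 1 is not an integer, so the sketch below assumes a 227 × 227 input, padding of 2 for layer 2 and 1 for layers 3 to 5, and 3 × 3 max pooling with stride 2 after layers 1, 2 and 5, as in common reimplementations. These input-size and padding values are assumptions; response normalization is omitted because it does not change shapes.

```python
def out_size(size, kernel, stride=1, pad=0):
    # Output-size formula for convolution or pooling without dilation.
    return (size + 2 * pad - kernel) // stride + 1


# (name, kernel, stride, pad, output channels); pads and input size are assumptions.
LAYERS = [
    ("conv1", 11, 4, 0, 96),
    ("pool1", 3, 2, 0, 96),
    ("conv2", 5, 1, 2, 256),
    ("pool2", 3, 2, 0, 256),
    ("conv3", 3, 1, 1, 384),
    ("conv4", 3, 1, 1, 384),
    ("conv5", 3, 1, 1, 256),
    ("pool5", 3, 2, 0, 256),
]


def trace_shapes(size=227):
    shapes = []
    for name, kernel, stride, pad, channels in LAYERS:
        size = out_size(size, kernel, stride, pad)
        shapes.append((name, size, size, channels))
    return shapes


for name, h, w, c in trace_shapes():
    print(f"{name}: {h} x {w} x {c}")
```

Under these assumptions the last pooling layer outputs 6 × 6 × 256, which is flattened into 9216 inputs for the first 4096-unit fully connected layer.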

Reducing Overfitting

The network architecture described above has 60 million parameters, which makes overfitting a serious concern.

AlexNet uses two primary ways to reduce overfitting: data augmentation and dropout.
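The 60 million figure can be checked by counting weights and biases layer by layer. The sketch below uses the AlexNet paper's kernel shapes (the 48- and 192-deep kernels reflect the GPU split), assumes a 6 × 6 × 256 = 9216-dimensional input to the first fully connected layer, and a final 1000-way output layer for the 1000 categories.

```python
# (name, number of kernels/units, weights per kernel/unit); each unit also has one bias.
LAYERS = [
    ("conv1", 96, 11 * 11 * 3),
    ("conv2", 256, 5 * 5 * 48),
    ("conv3", 384, 3 * 3 * 256),
    ("conv4", 384, 3 * 3 * 192),
    ("conv5", 256, 3 * 3 * 192),
    ("fc6", 4096, 6 * 6 * 256),  # assumes a 6 x 6 x 256 input to the first FC layer
    ("fc7", 4096, 4096),
    ("fc8", 1000, 4096),         # 1000-way output layer
]


def count_parameters(layers):
    # weights (units * fan_in) plus one bias per unit
    return {name: units * fan_in + units for name, units, fan_in in layers}


counts = count_parameters(LAYERS)
total = sum(counts.values())
print(f"total parameters: {total:,}")  # total parameters: 60,965,224
```

Most of these parameters (58,631,144 of them) sit in the three fully connected layers.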

Reducing Overfitting

- Data augmentation

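Image translations and horizontal reflections

1. At training time, extract random 224 × 224 patches (and their horizontal reflections) from the 256 × 256 images, which increases the training set by a factor of 2048 per image.

2. At test time, extract 10 patches of size 224 × 224 (the four corner patches and the center patch, together with their horizontal reflections) and average the network's predictions over them.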
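The crop bookkeeping can be sketched as follows. Training draws a random offset along each axis and a random horizontal flip; the factor of 2048 corresponds to 32 × 32 × 2, so offsets 0 to 31 are assumed here. At test time, the four corners and the center, each with and without reflection, give 10 crops whose predictions are averaged. The function names and the (left, top, flip) tuple format are hypothetical.

```python
import random


def random_training_crop(rng, image_size=256, crop_size=224):
    """Pick (left, top, flip) for one training patch."""
    positions = image_size - crop_size  # 32 offsets per axis: 0..31 (assumption)
    left = rng.randrange(positions)
    top = rng.randrange(positions)
    flip = rng.random() < 0.5
    return left, top, flip


def num_training_variants(image_size=256, crop_size=224):
    positions = image_size - crop_size
    return positions * positions * 2  # x2 for the horizontal reflection


def ten_crops(image_size=256, crop_size=224):
    """Four corner patches and the center patch, each with and without a flip."""
    far = image_size - crop_size
    mid = far // 2
    corners_and_center = [(0, 0), (far, 0), (0, far), (far, far), (mid, mid)]
    return [(left, top, flip) for flip in (False, True) for left, top in corners_and_center]


def average_predictions(predictions):
    """Average per-class probabilities over the test crops."""
    n = len(predictions)
    return [sum(p[c] for p in predictions) / n for c in range(len(predictions[0]))]


print(num_training_variants())  # 2048
print(random_training_crop(random.Random(0)))
print(len(ten_crops()))  # 10
```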

Reducing Overfitting

- Data augmentation

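Altering the intensities of the RGB channels

To each RGB image pixel $I_{xy} = [I^R_{xy}, I^G_{xy}, I^B_{xy}]^T$, add the following quantity:

$[\mathbf{p}_1, \mathbf{p}_2, \mathbf{p}_3][\alpha_1\lambda_1, \alpha_2\lambda_2, \alpha_3\lambda_3]^T$

where $\mathbf{p}_i$ and $\lambda_i$ are the $i$th eigenvector and eigenvalue of the 3 × 3 covariance matrix of RGB pixel values (computed over the ImageNet training set)

$\alpha_i \sim \mathcal{N}(0, 0.1^2)$, i.e. Gaussian with mean zero and standard deviation 0.1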
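A minimal pure-Python sketch of this color augmentation follows. The paper performs PCA on the RGB values of all training-set pixels; here a short pixel list stands in for that set. The eigen-decomposition uses the classical Jacobi rotation method, chosen only to keep the sketch free of external libraries. The three $\alpha_i$ are drawn once per image and the same offset is added to every pixel. All function names are hypothetical.

```python
import math
import random


def rgb_covariance(pixels):
    """3 x 3 sample covariance of a list of (R, G, B) values."""
    n = len(pixels)
    mean = [sum(p[c] for p in pixels) / n for c in range(3)]
    cov = [[0.0] * 3 for _ in range(3)]
    for p in pixels:
        d = [p[c] - mean[c] for c in range(3)]
        for i in range(3):
            for j in range(3):
                cov[i][j] += d[i] * d[j] / (n - 1)
    return cov


def jacobi_eigh(a, max_sweeps=50, tol=1e-20):
    """Eigenvalues and eigenvectors of a small symmetric matrix (Jacobi rotations).

    Returns (eigenvalues, eigenvectors); eigenvectors[i] pairs with eigenvalues[i].
    """
    n = len(a)
    a = [row[:] for row in a]
    v = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                # A <- J^T A J and V <- V J, which zeroes a[p][q].
                for k in range(n):
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    eigenvalues = [a[i][i] for i in range(n)]
    eigenvectors = [[v[k][i] for k in range(n)] for i in range(n)]
    return eigenvalues, eigenvectors


def pca_color_offset(eigenvalues, eigenvectors, alphas):
    """[p1, p2, p3][a1*l1, a2*l2, a3*l3]^T, i.e. sum_i alpha_i * lambda_i * p_i."""
    return [sum(alphas[i] * eigenvalues[i] * eigenvectors[i][c] for i in range(3)) for c in range(3)]


def augment_image(pixels, eigenvalues, eigenvectors, rng, sigma=0.1):
    # One draw of the alphas per image; the same offset is added to every pixel.
    alphas = [rng.gauss(0.0, sigma) for _ in range(3)]
    offset = pca_color_offset(eigenvalues, eigenvectors, alphas)
    return [[p[c] + offset[c] for c in range(3)] for p in pixels]


pixels = [(120, 80, 60), (130, 90, 70), (200, 180, 170), (40, 30, 20)]
vals, vecs = jacobi_eigh(rgb_covariance(pixels))
print(augment_image(pixels, vals, vecs, random.Random(0)))
```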

Reducing Overfitting

- Dropout

Set the output of each hidden neuron to zero with probability 0.5 (applied in the first two fully connected layers).

At test time, use all the neurons but multiply their outputs by 0.5

Without dropout, the network exhibits substantial overfitting
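The training-time masking and test-time scaling described above can be sketched as follows. A dropped neuron contributes nothing to the forward pass; at test time every neuron is kept and its output is halved, which matches its expected output during training. The function names are hypothetical. Many later implementations instead scale the kept outputs by 1/(1 − p) during training ("inverted dropout"), a different convention with the same expected value.

```python
import random


def dropout_train(outputs, rng, p=0.5):
    """Training: zero each neuron's output independently with probability p."""
    return [0.0 if rng.random() < p else x for x in outputs]


def dropout_test(outputs, p=0.5):
    """Test: keep every neuron but multiply its output by (1 - p), i.e. 0.5 here."""
    return [x * (1.0 - p) for x in outputs]


rng = random.Random(0)
hidden = [1.0, 2.0, 3.0, 4.0]
print(dropout_train(hidden, rng))  # a random subset of the outputs is zeroed
print(dropout_test(hidden))        # [0.5, 1.0, 1.5, 2.0]
```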

Results

Discussion

The depth of the network is essential for achieving these results.

Future work: classification on video, since video sequences provide temporal structure, which is very helpful information that static images lack.
