IIT-TUDA at SemEval-2016 Task 5: Beyond Sentiment Lexicon: Combining Domain Dependency and...


IIT-TUDA at SemEval-2016 Task 5: Beyond Sentiment Lexicon:

Combining Domain Dependency and Distributional Semantics Features for Aspect Based Sentiment Analysis

Ayush Kumar¹, Sarah Kohail², Amit Kumar¹, Asif Ekbal¹, Chris Biemann²
¹IIT Patna, India; ²TU Darmstadt, Germany

Presented by: Alexander Panchenko, TU Darmstadt, Germany


Motivation

People write blog posts, comments, reviews, tweets, etc., expressing attitudes, feelings, emotions and opinions.

Mining and summarizing opinions/sentiment from text about specific entities and their aspects can help:
- organizations to monitor their reputation and products;
- customers to make a decision or choose among multiple options.


SemEval-2016 Task 5: Aspect-Based Sentiment Analysis (ABSA)

Example sentence: "This computer has a super fast processor but the battery last so little"

Opinion target="processor" category="CPU#OPERATION_PERFORMANCE" polarity="positive"
Opinion target="battery" category="BATTERY#OPERATION_PERFORMANCE" polarity="negative"

Each opinion is annotated with an aspect term ("opinion target"), an aspect category (entity#attribute) and a polarity class.
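The annotation above can be pictured as one small record per opinion. The sketch below assumes an XML layout in which each `<Opinion>` element carries the `target`, `category` and `polarity` attributes shown on the slide; the `sentence`/`text`/`Opinions` nesting and the `read_opinions` helper are illustrative assumptions. It uses Python's standard `xml.etree.ElementTree` and splits the category into its entity and attribute parts, which correspond to Slots 1, 2 and 3.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# Hypothetical sample: attribute names follow the slide; the surrounding
# sentence/text/Opinions nesting is an assumption for illustration.
SAMPLE = """<sentence id="demo:1">
  <text>This computer has a super fast processor but the battery last so little</text>
  <Opinions>
    <Opinion target="processor" category="CPU#OPERATION_PERFORMANCE" polarity="positive"/>
    <Opinion target="battery" category="BATTERY#OPERATION_PERFORMANCE" polarity="negative"/>
  </Opinions>
</sentence>"""


def read_opinions(xml_text: str) -> List[Dict[str, str]]:
    """Return one dict per <Opinion>, splitting the category into entity and attribute."""
    sentence = ET.fromstring(xml_text)
    opinions = []
    for op in sentence.iter("Opinion"):
        entity, attribute = op.get("category").split("#", 1)
        opinions.append({
            "target": op.get("target"),      # Slot 2: opinion target
            "entity": entity,                # Slot 1: entity ...
            "attribute": attribute,          # ... and attribute
            "polarity": op.get("polarity"),  # Slot 3: polarity class
        })
    return opinions


for opinion in read_opinions(SAMPLE):
    print(opinion)
```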


SemEval-2016 Task 5: Aspect-Based Sentiment Analysis (ABSA)

The ABSA task performs fine-grained sentiment analysis through three slots:

1. Aspect Category Detection: identify the entity#attribute (E#A) pair referred to by the aspect. E and A are chosen from predefined inventories of entity types (e.g., LAPTOP, MOUSE, RESTAURANT, FOOD) and attribute labels (e.g., DESIGN, PRICE, QUALITY).

2. Opinion Target (OT) Extraction: given a set of sentences with pre-identified entities (e.g., restaurants), extract the aspect terms ("opinion targets") that are present in the review text.

3. Sentiment Polarity Classification: assign each identified (E#A, OT) tuple one of the following polarity labels: positive, negative, or neutral.


Our Submission

We participated in Slot 1 (aspect category detection) and Slot 3 (sentiment polarity classification) for 7 languages and 4 different domains.

We also conducted experiments for Slot 2 (opinion target extraction) for 4 languages in the restaurants domain.

Overall, we submitted 29 runs, covering 7 languages (English, Spanish, Dutch, French, Turkish, Russian and Arabic) and 4 different domains (laptops, restaurants, phones, hotels).


Experimental Setup: Supervised Models

For Slots 1 and 3, we use a supervised Support Vector Machine (SVM) classifier with a linear kernel.

For Slot 2, we use a linear-chain Conditional Random Field (CRF) with default parameters.

We perform 5-fold cross-validation on the training set to evaluate the performance.
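A minimal, dependency-free sketch of the evaluation protocol: 5-fold cross-validation around a linear SVM. Here the SVM is trained with the Pegasos stochastic sub-gradient method, a stand-in chosen only to keep the example self-contained; it is not necessarily the SVM implementation used in the submission. All function names and the toy data are hypothetical.

```python
import random
from typing import List, Sequence


def k_fold_indices(n: int, k: int = 5, seed: int = 0) -> List[List[int]]:
    """Shuffle 0..n-1 and deal the indices into k disjoint folds of near-equal size."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[f::k] for f in range(k)]


def train_linear_svm(X: Sequence[Sequence[float]], y: Sequence[int],
                     lam: float = 0.01, epochs: int = 50, seed: int = 0) -> List[float]:
    """Pegasos: stochastic sub-gradient descent on the primal linear-SVM objective
    (L2 regulariser + hinge loss). Labels must be +1/-1. A constant 1.0 feature is
    appended so that the bias is learned as an ordinary weight."""
    rng = random.Random(seed)
    data = [list(x) + [1.0] for x in X]
    w = [0.0] * len(data[0])
    order = list(range(len(data)))
    t = 0
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            t += 1
            eta = 1.0 / (lam * t)
            margin = y[i] * sum(wj * xj for wj, xj in zip(w, data[i]))
            w = [(1.0 - eta * lam) * wj for wj in w]      # shrink step (regulariser)
            if margin < 1.0:                               # hinge-loss sub-gradient step
                w = [wj + eta * y[i] * xj for wj, xj in zip(w, data[i])]
    return w


def predict(w: List[float], x: Sequence[float]) -> int:
    score = sum(wj * xj for wj, xj in zip(w, list(x) + [1.0]))
    return 1 if score >= 0 else -1


def cross_validate(X, y, k: int = 5, seed: int = 0) -> List[float]:
    """Accuracy on each held-out fold, training on the remaining k-1 folds."""
    accuracies = []
    for fold in k_fold_indices(len(X), k, seed):
        held_out = set(fold)
        train = [i for i in range(len(X)) if i not in held_out]
        w = train_linear_svm([X[i] for i in train], [y[i] for i in train])
        correct = sum(predict(w, X[i]) == y[i] for i in fold)
        accuracies.append(correct / len(fold))
    return accuracies


# Toy, linearly separable data (hypothetical).
X = [[2.0 + 0.1 * i, 0.5] for i in range(10)] + [[0.5, 2.0 + 0.1 * i] for i in range(10)]
y = [1] * 10 + [-1] * 10
print(cross_validate(X, y, k=5))
```

In practice a library linear SVM (for example scikit-learn's `LinearSVC`) would replace `train_linear_svm`; the fold logic stays the same.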


Feature Extraction: Preprocessing

Normalize digits to ‘num’ and remove stop words for tf-idf computation.

For English, we use Stanford tools to tokenize, parse and extract lemma, Part-of-Speech (PoS) and named entity (NE) information.

For the other languages, we use taggers and dependency parsers based on Universal Dependencies (UD).
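The preprocessing step can be sketched as follows, assuming a simple regex tokenizer and a tiny illustrative stop-word list (the actual system tokenizes with Stanford tools and UD-based taggers). Numeric tokens become `num`, and stop words are dropped only before the tf-idf weights are computed, here with the common tf × log(N/df) weighting; that variant is an assumption, since the slide does not specify it.

```python
import math
import re
from collections import Counter
from typing import Dict, List

# Hypothetical stop-word list for illustration only.
STOP_WORDS = {"the", "a", "an", "is", "was", "and", "but", "of", "for", "to"}

# Numbers (optionally with . or , separators) first, then words, then single symbols.
TOKEN_RE = re.compile(r"\d+(?:[.,]\d+)*|\w+|[^\w\s]")
NUMBER_RE = re.compile(r"\d+(?:[.,]\d+)*")


def normalize(text: str) -> List[str]:
    """Lower-case, tokenize, and map every numeric token to 'num'."""
    tokens = TOKEN_RE.findall(text.lower())
    return ["num" if NUMBER_RE.fullmatch(tok) else tok for tok in tokens]


def tfidf(docs: List[List[str]]) -> List[Dict[str, float]]:
    """tf-idf weights per document, computed after stop-word removal."""
    content = [[t for t in doc if t not in STOP_WORDS] for doc in docs]
    df = Counter(term for doc in content for term in set(doc))
    n_docs = len(content)
    weights = []
    for doc in content:
        tf = Counter(doc)
        weights.append({t: (c / len(doc)) * math.log(n_docs / df[t]) for t, c in tf.items()})
    return weights


print(normalize("The pizza cost 25.50 dollars"))  # ['the', 'pizza', 'cost', 'num', 'dollars']
```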


Contribution I: Lexicon Expansion based on DT

Based on the notion of a distributional thesaurus (DT), we expand existing lexical resources to increase the coverage of sentiment lexicons and to improve the extraction of rare or unseen aspect words.

Examples of DT expansions

Token        DT expansion
good         bad, excellent, decent, great
powerful     potential, influential, strong, sophisticated
small        tiny, large, sized, huge, sizable
efficient    reliable, effective, energy-efficient, flexible
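A sketch of the expansion step, assuming the DT is available as a mapping from a word to its ranked neighbours. `TOY_DT` reuses only the examples in the table above; `expand_lexicon` and `lexicon_stats` are hypothetical helpers. `lexicon_stats` also counts the "common entries" (words found in both the seed lexicon and the induced lexicon) that the expansion statistics report. The default `top_n=50` mirrors the 50-item expansion lists (exp1 ... exp50) in the lexicon diagram.

```python
from typing import Dict, List

# Toy DT holding only the example expansions from the table above.
TOY_DT: Dict[str, List[str]] = {
    "good": ["bad", "excellent", "decent", "great"],
    "powerful": ["potential", "influential", "strong", "sophisticated"],
    "small": ["tiny", "large", "sized", "huge", "sizable"],
    "efficient": ["reliable", "effective", "energy-efficient", "flexible"],
}


def expand_lexicon(seeds: List[str], dt: Dict[str, List[str]],
                   top_n: int = 50) -> Dict[str, List[str]]:
    """One expansion list per seed word: its top_n DT neighbours (empty if unknown)."""
    return {seed: dt.get(seed, [])[:top_n] for seed in seeds}


def lexicon_stats(seeds: List[str], expansions: Dict[str, List[str]]) -> Dict[str, int]:
    """Size of the induced lexicon, entries shared with the seeds, and new entries."""
    induced = {word for exp in expansions.values() for word in exp}
    seed_set = set(seeds)
    return {
        "induced": len(induced),
        "common": len(induced & seed_set),
        "new": len(induced - seed_set),
    }


seeds = ["good", "efficient", "great"]
expansions = expand_lexicon(seeds, TOY_DT)
print(lexicon_stats(seeds, expansions))  # {'induced': 8, 'common': 1, 'new': 7}
```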


Contribution I: Lexicon Expansion based on DT

pos (+): seed words w1, w2, w3, ..., w100, each with a DT expansion list exp1 ... exp50
neg (-): seed words w1, w2, w3, ..., w100, each with a DT expansion list exp1 ... exp50

15 positive expansion lists contain w = "terrific"; 3 negative expansion lists contain it.


Contribution I: Lexicon Expansion based on DT

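Sentiment score for an expanded word w, illustrated for w = "terrific", which occurs 18 times across the expansion lists (in 15 positive and 3 negative lists):

$\mathrm{score}_{+}(\text{terrific}) = \frac{15/18}{100} \approx 0.008$

$\mathrm{score}_{-}(\text{terrific}) = \frac{3/18}{50} \approx 0.003$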
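The arithmetic above can be reproduced with the sketch below. It counts how many positive and negative expansion lists contain the word, turns each count into a share of all occurrences, and divides by a polarity-specific normalizer. The slide's example uses 100 for the positive side and 50 for the negative side without stating what these numbers stand for, so the sketch takes them as parameters; `polarity_scores` and the toy filler lists are hypothetical.

```python
from typing import List, Sequence, Tuple


def polarity_scores(word: str,
                    pos_lists: Sequence[Sequence[str]],
                    neg_lists: Sequence[Sequence[str]],
                    pos_norm: float, neg_norm: float) -> Tuple[float, float]:
    """(positive, negative) score of an expanded word.

    Each count is the number of expansion lists of that polarity containing the word;
    it is divided by the word's total count and then by a polarity-specific normalizer.
    """
    pos = sum(word in lst for lst in pos_lists)
    neg = sum(word in lst for lst in neg_lists)
    total = pos + neg
    if total == 0:
        return 0.0, 0.0
    return (pos / total) / pos_norm, (neg / total) / neg_norm


# The slide's example: "terrific" is in 15 positive and 3 negative lists (toy filler lists added).
pos_lists: List[List[str]] = [["terrific", "great"]] * 15 + [["fine"]] * 5
neg_lists: List[List[str]] = [["terrific", "awful"]] * 3 + [["bad"]] * 2
p, n = polarity_scores("terrific", pos_lists, neg_lists, pos_norm=100, neg_norm=50)
print(round(p, 3), round(n, 3))  # 0.008 0.003
```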



Contribution I: Lexicon Expansion based on DT

Expansion statistics for the induced lexicons. Common entries denote the number of words present in both the seed lexicon and the induced lexicon.


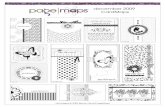

Contribution II: DDGs for Aspect Category Detection

Figure: documents d1, d2, ..., dn are parsed into dependency relations such as amod(processor, fast), amod(processor, good) and conj(good, fast). Aggregated counts: amod(processor, fast) 24, amod(processor, good) 13, conj(good, fast) 19. Resulting graph: processor -> fast (amod, 24); processor -> good (amod, 13); good -> fast (conj_and, 19).

1. Detect the topics underlying a mixed-domain dataset using topic modeling.

2. Aggregate individual dependency relations between domain-specific content words, weight them with tf-idf, and select the highest-ranked words and their dependency relations.
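Step 2 can be sketched as follows, taking the topic assignment from step 1 as given. Each parsed document is a list of (relation, head, dependent) triples; counts are summed per topic, words are scored by tf-idf, and only relations between top-ranked words are kept. The tf-idf scheme (tf from relation counts within a topic, idf across topics) and all names are assumptions for illustration.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

Relation = Tuple[str, str, str]  # (relation, head, dependent), e.g. ("amod", "processor", "fast")


def aggregate(docs: List[List[Relation]]) -> Counter:
    """Sum relation counts over all documents assigned to one topic."""
    counts: Counter = Counter()
    for relations in docs:
        counts.update(relations)
    return counts


def word_tfidf(topic_counts: Dict[str, Counter]) -> Dict[str, Dict[str, float]]:
    """Assumed scheme: tf = number of relation occurrences a word takes part in
    within a topic; idf = log(#topics / #topics in which the word occurs)."""
    tfs = {}
    for topic, counts in topic_counts.items():
        tf: Counter = Counter()
        for (_, head, dep), c in counts.items():
            tf[head] += c
            tf[dep] += c
        tfs[topic] = tf
    n_topics = len(tfs)
    df = Counter(word for tf in tfs.values() for word in tf)
    return {topic: {w: c * math.log(n_topics / df[w]) for w, c in tf.items()}
            for topic, tf in tfs.items()}


def ddg(counts: Counter, scores: Dict[str, float], top_k: int) -> Dict[Relation, int]:
    """Keep only relations whose head and dependent are both among the top_k words."""
    top = set(sorted(scores, key=scores.get, reverse=True)[:top_k])
    return {rel: c for rel, c in counts.items() if rel[1] in top and rel[2] in top}


laptop = aggregate([[("amod", "processor", "fast"), ("amod", "processor", "good")],
                    [("amod", "processor", "fast"), ("conj", "good", "fast")]])
restaurant = aggregate([[("amod", "food", "good")]])
scores = word_tfidf({"laptop": laptop, "restaurant": restaurant})
print(ddg(laptop, scores["laptop"], top_k=2))  # {('amod', 'processor', 'fast'): 2}
```

In this toy run, "good" occurs in both topics, so its idf is zero and its relations drop out, while the domain-specific pair processor/fast survives.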


Contribution II: DDGs for Aspect Category Detection

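Figure: relation counts amod(processor, fast) 2, amod(processor, good) 1 and conj(good, fast) 1; after filtering, amod(processor, fast) 2 and amod(processor, good) 1 remain, giving the graph processor -> fast (amod, 2); processor -> good (amod, 1).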

3. Filter the resulting graphs, keeping only 'amod' (adjectival modifier of a noun) and 'nsubj' (nominal subject of a predicate) relations.

4. For each aspect extracted from the opinion-aspect pairs, use a binary feature that encodes its presence or absence.
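Steps 3 and 4 can be sketched as below, assuming the graph is a mapping from (relation, head, dependent) triples to counts. The direction conventions follow standard dependency annotation: in amod(noun, adjective) the noun is the aspect, and in nsubj(predicate, subject), as in "the battery is short", the subject is the aspect. The function names and the nsubj example edge are hypothetical.

```python
from typing import Dict, Iterable, Set, Tuple

Relation = Tuple[str, str, str]  # (relation, head, dependent)
KEPT_RELATIONS = {"amod", "nsubj"}


def filter_graph(graph: Dict[Relation, int]) -> Dict[Relation, int]:
    """Step 3: keep only amod and nsubj edges."""
    return {rel: c for rel, c in graph.items() if rel[0] in KEPT_RELATIONS}


def opinion_aspect_pairs(graph: Dict[Relation, int]) -> Set[Tuple[str, str]]:
    """(opinion, aspect) pairs read off the filtered edges."""
    pairs: Set[Tuple[str, str]] = set()
    for rel, head, dep in graph:
        if rel == "amod":        # amod(noun, adjective): the modified noun is the aspect
            pairs.add((dep, head))
        elif rel == "nsubj":     # nsubj(predicate, subject): the subject is the aspect
            pairs.add((head, dep))
    return pairs


def aspect_features(tokens: Iterable[str], aspects: Iterable[str]) -> Dict[str, int]:
    """Step 4: one binary feature per extracted aspect (1 if it occurs in the sentence)."""
    present = {t.lower() for t in tokens}
    return {f"ddg_aspect={a}": int(a in present) for a in sorted(aspects)}


graph = {
    ("amod", "processor", "fast"): 2,
    ("amod", "processor", "good"): 1,
    ("conj", "good", "fast"): 1,
    ("nsubj", "short", "battery"): 3,   # hypothetical, as in "the battery is short"
}
pairs = opinion_aspect_pairs(filter_graph(graph))
aspects = {aspect for _, aspect in pairs}
print(aspect_features("The processor is fast".split(), aspects))
# {'ddg_aspect=battery': 0, 'ddg_aspect=processor': 1}
```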


Aspect Category Detection: Slot 1

Features:
- Aspect list produced by the Domain Dependency Graphs (DDGs). (0/1)
- Top 10 DT expansions of each of the top 5 words by tf-idf score in each aspect category (for example, 'overpriced', '$', 'pricey', 'cheap' and 'expensive' are the most significant terms in the 'food#price' category). (0/1)
- Bag of words. (freq)
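One way to assemble the three Slot 1 feature groups into a single sparse feature dictionary, as input to the linear SVM, is sketched below. The seed terms for 'food#price' come from the slide; the DT entries, the choice of one indicator per category, and the decision to include the seed words alongside their expansions are assumptions, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Set


def category_terms(top_words: List[str], dt: Dict[str, List[str]], n_exp: int = 10) -> Set[str]:
    """The top tf-idf words of a category plus their top n_exp DT expansions
    (keeping the words themselves is an assumption)."""
    terms = set(top_words)
    for word in top_words:
        terms.update(dt.get(word, [])[:n_exp])
    return terms


def slot1_features(tokens: Iterable[str], ddg_aspects: Iterable[str],
                   cat_terms: Dict[str, Set[str]]) -> Dict[str, int]:
    toks = [t.lower() for t in tokens]
    present = set(toks)
    feats: Dict[str, int] = {}
    for aspect in ddg_aspects:                    # DDG aspect list (0/1)
        feats[f"ddg={aspect}"] = int(aspect in present)
    for cat, terms in cat_terms.items():          # DT-expanded category terms (0/1)
        feats[f"terms={cat}"] = int(bool(terms & present))
    for tok, freq in Counter(toks).items():       # bag of words (freq)
        feats[f"bow={tok}"] = freq
    return feats


dt = {"pricey": ["expensive", "overpriced", "costly"]}  # hypothetical DT entries
food_price = category_terms(["overpriced", "$", "pricey", "cheap", "expensive"], dt)
feats = slot1_features("Way too pricey for the portion size".split(), ["portion"],
                       {"food#price": food_price})
print(feats["ddg=portion"], feats["terms=food#price"], feats["bow=too"])  # 1 1 1
```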


Opinion Target (OT) Extraction: Slot 2

Features:
- PoS context [-2..2]
- Word and local context [-5..5]
- 5 DT expansions of the current token
- Expansion score
- Prefixes and suffixes of up to 4 characters
- Noun phrase head word and its PoS
- Character n-grams
- Presence of adjectival modifier (amod) dependency relations
- Orthographic features (e.g., starts with a capital letter)
- Is the token a frequent aspect?

Additional features for English:
- WordNet (4 noun synsets of the current token)
- NE information
- Chunk information
- Lemma
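A sketch of per-token features in the feature-dictionary style that many CRF toolkits accept. It covers only the features that need no parser or external resource (context windows, affixes, DT expansions, capitalization, frequent-aspect lookup); the DT mapping, the frequent-aspect set, the PoS tags and the function names are hypothetical.

```python
from typing import Dict, List, Set, Union

Feature = Union[str, bool]


def token_features(tokens: List[str], pos: List[str], i: int,
                   dt: Dict[str, List[str]], frequent_aspects: Set[str]) -> Dict[str, Feature]:
    """Features for token i (a parser-free subset of the Slot 2 feature list)."""
    word = tokens[i]
    feats: Dict[str, Feature] = {"word": word.lower()}
    for off in range(-5, 6):                                  # word and local context [-5..5]
        j = i + off
        if off != 0 and 0 <= j < len(tokens):
            feats[f"word[{off}]"] = tokens[j].lower()
    for off in range(-2, 3):                                  # PoS context [-2..2]
        j = i + off
        if 0 <= j < len(tokens):
            feats[f"pos[{off}]"] = pos[j]
    for k in range(1, 5):                                     # prefixes/suffixes up to 4 characters
        if len(word) >= k:
            feats[f"prefix{k}"] = word[:k]
            feats[f"suffix{k}"] = word[-k:]
    for n, expansion in enumerate(dt.get(word.lower(), [])[:5]):  # up to 5 DT expansions
        feats[f"dt{n}"] = expansion
    feats["init_cap"] = word[:1].isupper()                    # orthographic: capitalized?
    feats["frequent_aspect"] = word.lower() in frequent_aspects
    return feats


def sentence_features(tokens, pos, dt, frequent_aspects):
    """One feature dictionary per token, ready for a linear-chain CRF."""
    return [token_features(tokens, pos, i, dt, frequent_aspects) for i in range(len(tokens))]


tokens = "The Beef Chow Fun was very dry .".split()
pos = ["DT", "NNP", "NNP", "NNP", "VBD", "RB", "JJ", "."]
dt = {"beef": ["pork", "chicken"]}          # hypothetical DT entry
feats = sentence_features(tokens, pos, dt, frequent_aspects={"beef"})
print(feats[1]["prefix2"], feats[1]["dt0"], feats[1]["init_cap"])  # Be pork True
```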


Sentiment Polarity Classification: Slot 3

Features:
- N-grams (unigrams and bigrams)
- The sum of sentiment scores (including our DT-expanded lexicons)
- The Entity#Attribute pair given in the training set
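The Slot 3 features can be sketched as below, assuming each lexicon maps a word to a single signed sentiment score; how the positive and negative scores of an expanded word are combined into one value is not stated on the slide, so this is an assumption. The lexicon names, values and the function name are hypothetical.

```python
from collections import Counter
from typing import Dict, List


def slot3_features(tokens: List[str], entity_attribute: str,
                   lexicons: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Unigram/bigram counts, one summed score per lexicon, and the E#A pair indicator."""
    toks = [t.lower() for t in tokens]
    feats: Counter = Counter()
    for tok in toks:                                   # unigrams
        feats[f"uni={tok}"] += 1
    for a, b in zip(toks, toks[1:]):                   # bigrams
        feats[f"bi={a}_{b}"] += 1
    for name, lexicon in lexicons.items():             # sum of sentiment scores per lexicon
        feats[f"lexsum={name}"] = sum(lexicon.get(t, 0.0) for t in toks)
    feats[f"ea={entity_attribute}"] = 1                # Entity#Attribute pair
    return dict(feats)


lexicons = {
    "seed": {"great": 1.0},                            # hypothetical seed lexicon
    "dt_expanded": {"great": 2.0, "poor": -1.5},       # hypothetical induced lexicon
}
f = slot3_features("Battery life is great".split(), "BATTERY#OPERATION_PERFORMANCE", lexicons)
print(f["lexsum=dt_expanded"], f["bi=life_is"])  # 2.0 1
```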


Results

Dataset | Aspect Category Detection: F1 (Rank / Entries) | OT Extraction: F1* (Rank / Entries) | Polarity Classification: Acc. (Rank / Entries)
English Restaurants | 63.0 (17 / 30) | 68.45 (3 / 19) | 86.70 (2 / 29)
Dutch Restaurants | 55.2 (3 / 6) | 64.37 (1 / 3) | 76.90 (2 / 4)
Spanish Restaurants | 59.8 (6 / 9) | 69.73 (1 / 5) | 83.50 (1 / 5)
French Restaurants | 57.8 (2 / 6) | 69.94 (1 / 3) | 72.20 (5 / 6)
Russian Restaurants | 62.6 (3 / 7) | - | 73.60 (3 / 6)
Turkish Restaurants | 56.6 (3 / 5) | - | 84.20 (1 / 3)
Dutch Phones | 45.4 (2 / 4) | - | 82.50 (2 / 3)
English Laptops | 43.9 (12 / 22) | - | 82.70 (1 / 22)
Arabic Hotels | - | - | 81.72 (2 / 3)

* OT extraction scores after a post-competition bug fix.


Impact of the Induced Lexicon: Feature Ablation Experiment for Sentiment Polarity Classification (Slot 3)


Future Work

Apply the aspect-based sentiment analysis approach to German: analysis of Deutsche Bahn (DB) passenger feedback texts.

http://lt.informatik.tu-darmstadt.de/de/research/absa-db-aspect-based-sentiment-analysis-for-db-products-and-services


Thank You


Opinion Target “OT” Extraction: Slot 2

We treat OT (opinion target) extraction as a sequence labeling problem and identify OT boundaries with the standard BIO notation, where 'B-ASP', 'I-ASP' and 'O' mark the beginning, inside and outside tokens of a multi-word OT, respectively.

The (O) Beef (B-ASP) Chow (I-ASP) Fun (I-ASP) was (O) very (O) dry (O) . (O)

'Beef Chow Fun' is the OT.
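A small sketch of BIO encoding and decoding for opinion targets, using the tag names from the slide. How an `I-ASP` tag without a preceding `B-ASP` is handled is a convention choice; here it is treated as `O`. The function names are hypothetical.

```python
from typing import List


def bio_encode(tokens: List[str], target: List[str]) -> List[str]:
    """Tag the first occurrence of the (possibly multi-word) target with B-ASP/I-ASP."""
    tags = ["O"] * len(tokens)
    n = len(target)
    if n == 0:
        return tags
    for start in range(len(tokens) - n + 1):
        if tokens[start:start + n] == target:
            tags[start] = "B-ASP"
            for k in range(start + 1, start + n):
                tags[k] = "I-ASP"
            break
    return tags


def bio_decode(tokens: List[str], tags: List[str]) -> List[str]:
    """Collect the OT strings; an I-ASP with no open span is treated as O."""
    spans: List[str] = []
    current: List[str] = []
    for token, tag in zip(tokens, tags):
        if tag == "I-ASP" and current:      # continue the open span
            current.append(token)
            continue
        if current:                         # any other tag closes the open span
            spans.append(" ".join(current))
            current = []
        if tag == "B-ASP":                  # start a new span
            current = [token]
    if current:
        spans.append(" ".join(current))
    return spans


tokens = "The Beef Chow Fun was very dry .".split()
tags = bio_encode(tokens, ["Beef", "Chow", "Fun"])
print(tags)                      # ['O', 'B-ASP', 'I-ASP', 'I-ASP', 'O', 'O', 'O', 'O']
print(bio_decode(tokens, tags))  # ['Beef Chow Fun']
```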