Finding cacheable areas in your Web Site using Python and Selenium David Elfi Intel.

Finding cacheable areas in your Web Site using Python

and Selenium

David Elfi, Intel

What does this session talk about?

Python

Performance

Web applications

Hands-on session

Caching

Hot topic in web applications because:

- Better response time across geographic distribution

- Better scalability

Difficult to focus on at development time

Goal: help developers improve response time

What to do

Find text areas repeated in a web resource (page, JSON response, other dynamic resources) in order to split them into different responses

Use Cache-Control, Expires and ETag HTTP Headers for caching control

Identify all the dependencies for a given URL

- Even AJAX calls

Proposed Solution

Take snapshots at different points in time

- Use Selenium to:

- Download ALL the content

- Run the JS code needed for AJAX

Compare the snapshots, looking for similarities

- Split the similar text into different HTTP responses

Solution – Snapshots

Selenium through a forward proxy

[Diagram: Selenium sends its requests through a Twisted proxy to the web server; the proxy stores the content as data]

Running Selenium – Snapshots

Call Selenium from Python

Use of WebDriver

    >>> from selenium import webdriver
    >>> br = webdriver.Firefox()
    >>> br.get("http://www.intel.com")
    >>> br.close()

Twisted Proxy - Snapshots

    from twisted.web import http, proxy

    class CacheProxyClient(proxy.ProxyClient):
        def connectionMade(self):
            # Connection made. Prepare object properties.
            proxy.ProxyClient.connectionMade(self)

        def handleHeader(self, key, value):
            # Save response header.
            proxy.ProxyClient.handleHeader(self, key, value)

        def handleResponsePart(self, buf):
            # Store response data.
            proxy.ProxyClient.handleResponsePart(self, buf)

        def handleResponseEnd(self):
            # Finished response transmission. Store it.
            proxy.ProxyClient.handleResponseEnd(self)

    class CacheProxyClientFactory(proxy.ProxyClientFactory):
        protocol = CacheProxyClient

    class CacheProxyRequest(proxy.ProxyRequest):
        # Bytes key: equal to "http" on Python 2, required by Twisted on Python 3.
        protocols = {b"http": CacheProxyClientFactory}

    class CacheProxy(proxy.Proxy):
        requestFactory = CacheProxyRequest

    class CacheProxyFactory(http.HTTPFactory):
        protocol = CacheProxy
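The handler comments above leave the storage step open. The following is a minimal sketch of one way the proxy could keep its snapshots, not the author's implementation. The class `SnapshotStore` and its methods are hypothetical names introduced here for illustration. It assumes that `handleResponseEnd` would call `add` once per complete response.

```python
import time


class SnapshotStore(object):
    """Hypothetical in-memory store (an assumption, not from the slides).

    Maps each URL to a list of (timestamp, headers, body) snapshots.
    """

    def __init__(self):
        self._snapshots = {}

    def add(self, url, headers, body, timestamp=None):
        # Record one complete response for the URL.
        if timestamp is None:
            timestamp = time.time()
        entry = (timestamp, dict(headers), body)
        self._snapshots.setdefault(url, []).append(entry)

    def bodies(self, url):
        # Return the stored bodies for a URL, oldest first.
        ordered = sorted(self._snapshots.get(url, []), key=lambda s: s[0])
        return [body for _, _, body in ordered]

    def urls(self):
        # Every URL seen so far, including dependencies fetched via AJAX.
        return sorted(self._snapshots)
```

Keying by URL keeps each dependency of the page separate, so every resource can later be compared only against its own earlier snapshots.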

Selenium + Twisted - Snapshots

Run Selenium using Proxy

    >>> from selenium import webdriver
    >>> fp = webdriver.FirefoxProfile()
    >>> fp.set_preference("network.proxy.type", 1)
    >>> fp.set_preference("network.proxy.http", "localhost")
    >>> fp.set_preference("network.proxy.http_port", 8080)
    >>> br = webdriver.Firefox(firefox_profile=fp)

Selenium + Twisted - Snapshots

Configure Twisted and run Selenium in an internal Twisted thread

    from twisted.internet import endpoints, reactor

    # runSelenium and url_str are defined elsewhere (not shown in the slides).
    endpoint = endpoints.serverFromString(reactor, "tcp:%d:interface=%s" % (8080, "localhost"))
    d = endpoint.listen(CacheProxyFactory())
    reactor.callInThread(runSelenium, url_str)

    reactor.run()

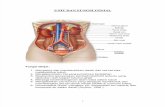

All together running

[Figure: snapshots 1, 2, 3, ..., n are passed to the comparison method, which produces the output]

Comparison

    ''' Equal sequence searcher '''
    from difflib import SequenceMatcher

    def matchingString(s1, s2):
        '''Compare two strings and return their matching blocks concatenated'''
        matcher = SequenceMatcher(None, s1, s2)
        output = ""
        for (i, _, n) in matcher.get_matching_blocks():
            output += s1[i:i+n]
        return output

    def matchingStringSequence(seq):
        '''Compare pairwise, carrying the common text forward to the final result'''
        try:
            matching = seq[0]
            for s in seq[1:]:
                matching = matchingString(matching, s)
            return matching
        except TypeError:
            # seq is not a sequence of strings
            return ""
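The functions above return the text that stays constant. The complement, the fragments that change between two snapshots, can be listed with the same `difflib` matcher. This is a minimal sketch: the helper `changing_regions` and the sample snapshots are hypothetical and not part of the original slides.

```python
from difflib import SequenceMatcher


def changing_regions(s1, s2):
    """Return (fragment_in_s1, fragment_in_s2) pairs where two snapshots differ."""
    matcher = SequenceMatcher(None, s1, s2)
    regions = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        # 'equal' spans are the cacheable candidates; everything else changed.
        if tag != "equal":
            regions.append((s1[i1:i2], s2[j1:j2]))
    return regions


# Two made-up snapshots of the same page taken a minute apart.
snapshot_a = "<h1>News</h1><p>10:01</p>"
snapshot_b = "<h1>News</h1><p>10:02</p>"
dynamic = changing_regions(snapshot_a, snapshot_b)
```

A short list of small changing fragments suggests the rest of the resource is a good candidate for a separate, cacheable response.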

Next Steps

Split similar texts into different HTTP responses

Set Cache-Control

- Public

- Private

- No-cache

Set Expires

- Depending on how long the content should be cached

Set ETag

- If the response is big and changes too often
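The three headers listed above can be sketched with the standard library alone. The function `caching_headers` and its parameters are hypothetical names chosen for illustration. The SHA-1 hash used for the ETag is an assumption, since any stable fingerprint of the body would serve.

```python
import hashlib
import time
from email.utils import formatdate


def caching_headers(body, max_age, shared=True, now=None):
    """Build Cache-Control, Expires and ETag values for one response body (bytes)."""
    if now is None:
        now = time.time()
    if max_age <= 0:
        # Caches must revalidate with the server before reusing the response.
        cache_control = "no-cache"
    else:
        # public: any cache may store it; private: only the user's browser.
        scope = "public" if shared else "private"
        cache_control = "%s, max-age=%d" % (scope, max_age)
    return {
        "Cache-Control": cache_control,
        # HTTP date format, e.g. "Thu, 01 Jan 1970 00:01:00 GMT".
        "Expires": formatdate(now + max(max_age, 0), usegmt=True),
        # Quoted fingerprint of the body, usable for conditional requests.
        "ETag": '"%s"' % hashlib.sha1(body).hexdigest(),
    }


headers = caching_headers(b"<h1>News</h1>", max_age=60, now=0)
```

With an ETag in place, a client can send `If-None-Match` and receive a short `304 Not Modified` instead of the full body when nothing has changed.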

Advanced Features to be done

Detect cache invalidation time from snapshots

SSL support

Wait for all AJAX calls

Selenium Scripting

- Authenticated URLs

- Full feature sequence

Summary

If cacheable areas have not been identified prior to development, this code could save time and effort in doing so

Cacheable areas need to be analyzed to find the best caching method (server cache, CDN, browser caching)

Refactoring to maximize cacheable data is the next step

Q & A