Fertile Ground for Academic Research in general and ......Networking in the Cloud Era - Technical...


© 2010 IBM Corporation

IBM Research Division

Networking in the Cloud Era - Technical Seminar, 28 Nov. 2010

Amit Golander, PhD

Lina Battestilli, PhD

Next Generation Compute Systems

IBM's PowerEN™ Developer Cloud:

Fertile Ground for Academic Research in general and Networking Research in particular


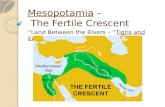

Abstract

• IBM's Power Edge of Network (PowerEN™) processor merges network and server attributes to create a new class of wire-speed processor.

• As a novel architecture, the PowerEN processor offers fertile ground for research. IBM encourages academic research and has set up the "PowerEN Developer Cloud" infrastructure to enable it.

[Figure labels: General-purpose cores; Integrated I/O; Special-purpose cores]


Wire-Speed Processor Attributes

A blurring of the Network and Server worlds

• Highly multi-threaded, low-power cores
• Smaller memory line sizes
• Accelerators for both Networking & Application tiers
• Integrated Network system & Memory I/O
• Low total power solution based on throughput optimization

[Figure: Tiered IT data center functions: Network, Web serving, Application & Business processing, Database, File Server. Labels: Network Processors, Wire-Speed Processing, Server processing.]


PowerEN Chip Overview

16 processor cores, 64 HW threads

• 4 At nodes (2 MB eDRAM L2 cache each)
• 4 A2 cores per At node
• 4 threads per core
• 64-bit PowerPC ISA
• Designed for power efficiency
• 2.3 GHz operation
• Direct inter-thread communication
• Cache inject and slave memory support

[Figure: PowerEN chip block diagram. Four At nodes (At0 to At3), each with four A2 cores and a 2 MB L2; accelerators (Crypto, XML, Comp / Decomp, Pattern Matching Engine); four memory controllers (MC) with Mem PHYs; Ethernet Packet Offload Engine with 4x 10GE MAC and 2x 1GE MAC; PCI Express Gen. 2; bus internal and external interface controllers (4B+4B EI3); x1 and x4 PHYs; Flash ROM and misc I/O logic; PIC; pervasive logic.]

Memory Controllers

• 4 DDR2/DDR3 direct attach channels

Scaling up

• SMP coherency

[Figure: several PowerEN systems-on-a-chip (MMT chips) combined for scale-up. Each chip is labeled with 4x 10Gb Ethernet, 2x 1Gb Ethernet, 2x PCI Express, 4x DDR3 DRAM channels and boot flash.]

PCI-Express:

• Root-complex and End-point configurations

Integrated Ethernet:

• 4 * 10Gb Eth. ports
• Host Ethernet Accelerator (HEA)
  • End point / Network mode
  • Advanced multi-queue traffic classification
  • TCP/IP offload
  • Programmable packet parsing
  • Virtualization

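To give a feel for what "wire speed" implies on the integrated Ethernet ports, the following back-of-the-envelope sketch estimates the per-packet cycle budget at minimum frame size. The chip figures (4 ports, 16 cores, 2.3 GHz) come from the slides; the framing overheads are standard Ethernet values; the function names are illustrative only, and real budgets depend on the traffic mix and on what the HEA and accelerators offload.

```python
# Rough packet budget for 4 x 10Gb Ethernet at minimum frame size.
# Chip figures are taken from the slides; everything else is an assumption
# made for illustration (worst-case traffic, no offload considered).

PORTS = 4
LINK_BPS = 10e9          # 10 Gb/s per port
MIN_FRAME = 64           # minimum Ethernet frame, bytes
PREAMBLE_SFD = 8         # preamble + start-of-frame delimiter, bytes
INTER_FRAME_GAP = 12     # minimum inter-frame gap, bytes

CORES = 16
CLOCK_HZ = 2.3e9


def packets_per_second(link_bps: float = LINK_BPS) -> float:
    """Maximum minimum-size frames per second on one link."""
    wire_bytes = MIN_FRAME + PREAMBLE_SFD + INTER_FRAME_GAP
    return link_bps / (wire_bytes * 8)


def cycles_per_packet(ports: int = PORTS) -> float:
    """Aggregate core cycles available per packet when all ports are saturated."""
    total_pps = ports * packets_per_second()
    return CORES * CLOCK_HZ / total_pps


if __name__ == "__main__":
    print(f"per-port rate : {packets_per_second() / 1e6:.2f} Mpps")
    print(f"cycle budget  : {cycles_per_packet():.0f} cycles/packet across all cores")
```

Under these assumptions each port carries about 14.88 million packets per second, which leaves a few hundred core cycles per packet. This is one way to see why packet parsing, checksums and classification are candidates for hardware offload.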

PowerEN Chip Overview (cont.)

Accelerators:

• Bus attach with DMA engines, address translation and virtual functions
• Synchronous / Asynchronous to processor cores
• Continuous mode support
• Application transparent when used with Zlib, OpenSSL and Regexp libraries

[Figure: PowerEN chip block diagram (repeated).]

Accelerator types:

• Compression / Decompression
• Cryptography
• Regular Expression (Pattern-matching)
• XML accelerator

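The "application transparent" point can be illustrated with ordinary zlib calls: the application talks to the standard library interface and does not mention an accelerator at all. The sketch below uses Python's built-in `zlib` module purely as a stand-in. Whether a given zlib build on a PowerEN system is actually backed by the compression engine is an assumption here, and the `roundtrip` helper is a hypothetical name.

```python
import zlib


def roundtrip(payload: bytes, level: int = 6) -> tuple[bytes, bytes]:
    """Compress and decompress a payload through the standard zlib API.

    Nothing here is accelerator-specific: if the underlying library were
    backed by a hardware engine (an assumption), this code would not change.
    """
    compressed = zlib.compress(payload, level)
    restored = zlib.decompress(compressed)
    return compressed, restored


if __name__ == "__main__":
    data = b"wire-speed processing " * 1000
    packed, unpacked = roundtrip(data)
    print(f"original: {len(data)} bytes, compressed: {len(packed)} bytes")
    print("round trip intact:", unpacked == data)
```

The design point is that offload happens below the library interface, so existing applications need no source changes to benefit.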

PowerEN Developer Cloud

• IBM encourages research, skill development and open source projects
• Free PaaS delivery models: both simulated and actual hardware
• Evaluation boards will also be distributed later, when required
• The cloud is still in beta mode: working with selected universities under NDA

PowerEN Developer Cloud – Configuration Examples

[Figure: configuration examples. A. Parallel programming research: PowerEN. B. Hybrid computing research: PowerEN as a co-processor to a general-purpose server over PCIe. C. Networking research ("bump on the wire"): PowerEN connected to 10Gbps Ethernet drivers.]


Few Examples of Research Opportunities

• Using accelerators to shift the implementation tradeoffs of specific workloads
  • Large-scale sort: bypass disk I/O bottlenecks (Compression)
  • Deduplication (Cryptography)
  • XML-based databases (XML)
  • Dictionary canonization (RegX)
• Networking & Cloud
  • Low latency service applications
  • High throughput & QoS
  • Virtualization & Consolidation
  • Fine-grained cyber security
  • Smarter Networking
• Parallel programming, Hybrid computing, Virtualization…

[Figure: A. Traditional 3-5 hop compute system: 10Gbps Ethernet ports, Ethernet NIC, PCIe, south bridge / IO hub, general-purpose server CPUs. B. Single hop latency: PowerEN with CPUs + integrated IO and 10Gbps Ethernet ports.]

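As one concrete reading of the "Deduplication (Cryptography)" item above, the sketch below deduplicates fixed-size chunks by their cryptographic digest. It is a minimal software illustration, not a description of any PowerEN implementation: the chunk size, the choice of SHA-256 and the function names are assumptions, and on PowerEN the hashing step is the part one might try to hand to the crypto engine.

```python
import hashlib


def deduplicate(data: bytes, chunk_size: int = 4096):
    """Split data into fixed-size chunks and store each distinct chunk once.

    Returns (store, recipe): store maps digest -> chunk bytes, and recipe is
    the ordered list of digests needed to rebuild the original data.
    """
    store = {}
    recipe = []
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).digest()  # the compute-heavy step
        store.setdefault(digest, chunk)          # keep first copy only
        recipe.append(digest)
    return store, recipe


def restore(store, recipe) -> bytes:
    """Rebuild the original byte string from the chunk store and recipe."""
    return b"".join(store[d] for d in recipe)


if __name__ == "__main__":
    blob = b"A" * 4096 * 3 + b"B" * 4096
    store, recipe = deduplicate(blob)
    print(f"{len(recipe)} chunks referenced, {len(store)} stored")
    print("restored correctly:", restore(store, recipe) == blob)
```

Because every chunk must be hashed, digest throughput tends to bound the whole pipeline, which is the tradeoff the slide suggests an accelerator could shift.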

Zoom-in on one example – OpenFlow

OpenFlow Status

• Started at Stanford in 2008
• Vendor support: NEC, Juniper, HP, Cisco, Arista, Ciena, …
• 8 campus deployments across the US by 2010

Reference: GENI Engineering Conference 9, June 2010

[Figure: OpenFlow architecture. Labels: PC, OpenFlow API, SSL/TCP, Ntwk App (x3), Flow Table, OpenFlow Firmware, Data Path, hw, sw.]

• What is OpenFlow
  • An open standard that defines APIs between switch HW and control plane SW
  • Lets you set up and isolate an experimental network within the existing production network environment
  • Per-flow, fine-granularity traffic control and management
• Why OpenFlow
  • We believe it can play an important role in the Cloud Computing Era.
  • Faster innovation cycle to explore:
    • Scaling out applications via stateless load distribution and load balancing
    • Guiding traffic via middleboxes (enhanced security, performance optimization…)
    • VM mobility

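The idea of per-flow control through a flow table can be sketched as a priority-ordered list of match/action entries, with unmatched packets handed to the controller. This is a deliberately simplified model for illustration and not the OpenFlow wire protocol or any switch's API: the field names, action strings and class names are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    in_port: int
    src_ip: str
    dst_ip: str
    dst_port: int


@dataclass
class FlowEntry:
    match: dict       # header field -> required value; missing field = wildcard
    action: str       # e.g. "output:2" or "drop" (illustrative strings)
    priority: int = 0
    packets: int = 0  # per-flow counter

    def matches(self, pkt: Packet) -> bool:
        return all(getattr(pkt, name) == value for name, value in self.match.items())


class FlowTable:
    def __init__(self):
        self.entries = []

    def add(self, entry: FlowEntry) -> None:
        self.entries.append(entry)
        # Highest priority first, so specific rules can override general ones.
        self.entries.sort(key=lambda e: e.priority, reverse=True)

    def process(self, pkt: Packet) -> str:
        for entry in self.entries:
            if entry.matches(pkt):
                entry.packets += 1
                return entry.action
        # Table miss: let the control plane decide and install a rule.
        return "send_to_controller"


if __name__ == "__main__":
    table = FlowTable()
    table.add(FlowEntry({"dst_ip": "10.0.0.5"}, "output:2", priority=10))
    table.add(FlowEntry({"dst_ip": "10.0.0.5", "dst_port": 23}, "drop", priority=20))
    print(table.process(Packet(1, "10.0.0.1", "10.0.0.5", 80)))
    print(table.process(Packet(1, "10.0.0.1", "10.0.0.5", 23)))
    print(table.process(Packet(1, "10.0.0.1", "10.0.0.9", 80)))
```

Keeping the table in the data path and the policy in the controller is what allows experimental rules to coexist with production traffic.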

PowerEN can play a role in different OpenFlow areas

PowerEN as an OpenFlow Controller:

[Figure: a controller (e.g. NOX, DIFANE) hosting network applications (Ntwk App) through an API that is not defined yet, connected via OpenFlow to the data paths.]

| Function needed | Specific problem | PowerEN |
|---|---|---|
| Packet I/O | A large fraction of CPU cycles is spent on packet reception and transmission via NICs | Optimization possible via the integrated network I/O (HEA): programmable packet parsing, End point / Network mode, cache inject and processor selection, Large Send, checksum computation/validation, multi-core scaling |
| Computational capacity & acceleration | Network services applications may have compute-intensive operations (hashing, encryption, compression, pattern matching) which could create a CPU bottleneck | PowerEN acceleration engines could offload these intensive packet processing operations from the cores |
| Packet processing | A large number of packets need to be processed | MMT: inherent parallelism for stateless packet processing |
| Table look-up | Needs large memory bandwidth and low-latency access to memory | Independent memory controllers (2) and channels (4); cache injects and cache-to-cache transfers offload the memory controllers |

• OpenFlow Switch + light portion of some network applications

• OpenFlow Controller + network applications
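The "inherent parallelism for stateless packet processing" entry can be illustrated by steering each flow to a worker by hashing its 5-tuple, so that packets of one flow stay in order on one hardware thread while different flows spread across threads. The sketch below is software-only and hypothetical: the worker count of 64 mirrors the thread count from the slides, while the CRC32 hash and the `worker_for` name are assumptions and do not describe how the HEA classifies traffic.

```python
import zlib

NUM_WORKERS = 64  # matches the 64 hardware threads quoted in the slides


def worker_for(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
               proto: str, workers: int = NUM_WORKERS) -> int:
    """Map a flow 5-tuple to a worker index.

    The mapping is stateless: it depends only on the packet headers, so any
    classifier instance computes the same answer without shared tables.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    return zlib.crc32(key) % workers


if __name__ == "__main__":
    flow = ("10.0.0.1", "10.0.0.5", 40000, 80, "tcp")
    print("flow pinned to worker", worker_for(*flow))
    used = {worker_for("10.0.0.1", "10.0.0.5", p, 80, "tcp") for p in range(40000, 41000)}
    print(f"1000 flows spread over {len(used)} workers")
```

The same stateless hashing idea underlies the load distribution use case listed under "Why OpenFlow".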


Many PowerEN papers are already out there. Examples:

• IBM's PowerEN Developer Cloud: Fertile ground for academic research. IEEE 26th Convention in Israel, Nov 2010. A. Golander, N. Greco, J. Xenidis, M. Hyland, B. Purcell and D. Bernstein.
• A Wire-Speed Power Processor: 2.3GHz 45nm SOI with 16 Cores and 64 Threads. ISSCC, Feb 2010. C. Johnson, D.H. Allen, J. Brown, R. Hoover, H. Achilles, C.Y. Cher, G.A. May, H. Franke, J. Xenidis and C. Basso.
• A Proposal for Efficient CPU-Accelerator Handshake. WAHA, May 2009. G.T. Davis, A. Krishna, S. Ramani, J.H. Derby and K.V. Vu.
• Miscellaneous articles from the special Network-Optimized Computing issue of the IBM R&D journal (Vol. 54, No. 1, 2010), http://www.research.ibm.com/journal/rd54-1.html:
  • Introduction to the wire-speed processor and architecture. H. Franke, J. Xenidis, C. Basso, B.M. Bass, S.S. Woodward, J.D. Brown, and C.L. Johnson.
  • Workload and network-optimized computing systems. D. LaPotin, S. Daijavad, C. Johnson, S. Hunter, K. Ishizaki, H. Franke, H. Achilles, D. Dumarot, N. Greco, and B. Davari.
  • Exploiting heterogeneous multicore-processor systems for high-performance network processing. H. Franke, T. Nelms, H. Yu, H.D. Achilles, and R. Salz.
  • SoftRDMA: Implementing iWARP over TCP kernel sockets. F.D. Neeser, B. Metzler, and P.W. Frey.
  • Asymmetric flow control for data transfer in hybrid computing systems. F. Iorio, K. Müller, A. Castelfranco, O. Callanan, and T. Sanuki.
  • SIP server performance on multicore systems. C.P. Wright, E.M. Nahum, D. Wood, J.M. Tracey, and E.C. Hu.
  • Aggregating REST requests to accelerate Web 2.0 applications. M. Ohara.
  • VoIP performance on multicore platforms. Z. Zhu, L. Chen, Y. Lin, and L. Shao.


Questions?

Come and play with the technology!

amitg @ il.ibm.com