ENGN8530: Computer Vision and Image Understanding...

Transcript of ENGN8530: Computer Vision and Image Understanding...

1

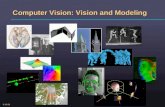

ENGN8530: Computer Vision and Image Understanding: Theories and Research

Topic 1: Introduction to Computer Vision and Image Understanding

Dr Chunhua Shen, Dr Roland Goecke

VISTA / NICTA & RSISE, ANU

ENGN8530: CVIU 2

What is Computer Vision?

“Vision is a process that produces, from images of the external world, a description that is useful to the viewer and not cluttered with irrelevant information.” (Marr and Nishihara, 1978)

“Computer vision is the science and technology of machines that see. Computer vision is concerned with the theory and technology for building artificial systems that obtain information from images or multi-dimensional data.” (Wikipedia)

Reference: D. Marr and K. Nishihara, “Representation and recognition of the spatial organisation of three-dimensional shapes”, Proc. Royal Society, B-200, 1978, pp. 269-294.

What is Computer Vision? (2)

Computer vision is sometimes seen as complementary to biological vision. In biological vision, the visual perception of humans and various animals is studied, resulting in models of how these systems operate in terms of physiological processes.

Computer vision, on the other hand, studies and describes artificial vision systems that are implemented in software and/or hardware.

What is Computer Vision? (3)

Applications:

• Controlling processes (robots, vehicles)
• Detecting events (visual surveillance)
• Organising information (indexing databases of images / videos)
• Modelling objects or environments (medical image analysis)
• Interaction (HCI)

Source: Wikipedia

Image Understanding

Computer vision goes hand in hand with image understanding.

• What information do we need to know to understand the scene?
• How can we make decisions about what objects are present, their shape, their positioning?

Source: CMU Computer Vision course

Image Understanding (2)

There are many different questions and approaches to solving computer vision / image understanding problems:

• Can we build useful machines to solve specific (and limited) vision problems?
• Is there anything special about the environment which makes vision possible?
• Can we build a model of the world / scene from 2D images?

Many different fields are involved, e.g. computer science, AI, neuroscience, psychology, engineering, philosophy, art.

Sub-areas of CVIU

• Scene reconstruction
• Event detection
• Object tracking
• Object recognition
• Object structure recovery
• Ego-motion
• Multi-view geometry
• Indexing of image / video databases
• …

Scene Reconstruction

Figures: from stereo; from multiple views


Event Detection

Source: MERL

Source: Roland Goecke


Object Tracking

Source: Roland Goecke

Object Recognition

Figure: query image, database and recognition result

Source: David Nister

Object Structure Recovery

Reference: A.D. Worrall, J.M. Ferryman, G.D. Sullivan and K.D. Baker, “Pose and structure recovery using active models”, Proc. 6th British Machine Vision Conference, Vol. 1, Birmingham, UK, pp. 137-146.

Ego-motion

Figures: estimated camera path; optical flow

Source: Roland Goecke


Multi-View Geometry

Epipolar geometry

Source: Richard Hartley, Andrew Zisserman

Indexing and Retrieval

Figure: query and ranked results (1, 2, 3)

Reference: J. Sivic and A. Zisserman, “Video Google: A Text Retrieval Approach to Object Matching in Videos”, Proc. International Conference on Computer Vision, Nice, France, 2003, pp. 1470-1477.

The Default Approach (Marr)

Work ‘bottom up’ from the image to a 3D world model via a hierarchy of representations, as follows:

• Pixel array: the image
• Raw primal sketch: edge, corner, etc. representation
• Primal sketch: structural information, i.e. groupings, segmentations, etc.
• 2½-D sketch: depth information in an image-centred view
• 3-D world model

Reference: D. Marr, “Vision”, Freeman, 1982.

The Default Approach (2)

Image sensor (visible, infra-red, radar, …) → Image capture / digitisation → Image processing → Feature detection (edges, corners, regions) → Feature grouping → Characterization of parts → Object recognition

What is in an Image?

An image is an array/matrix of values (picture elements = ‘pixels’) on a plane, which describe the world from the point of view of the observer. Because of the “line of sight” effect, this is a 2D representation of the 3D world. The meaning of the pixels depends on the sensors used for their acquisition.

Source: Antonio Robles-Kelly

Imaging Sensors

• The information seen by the imaging device is digitised and stored as pixel values.
• Two important quantities of imaging sensors are:
  • Spatial resolution: how many pixels are there? → image size
  • Signal resolution: how many values per pixel?

There are many different types of sensors:

• Optical: CCDs, CMOS, photodiodes, photomultipliers, photoresistors
• Infrared: bolometers
• Others: range sensors (laser), Synthetic Aperture Radar (SAR), Positron Emission Tomography (PET), Computed (Axial) Tomography (CAT/CT), Magnetic Resonance Imaging (MRI)
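To make spatial and signal resolution concrete, here is a minimal Python sketch that stores a greyscale image as a list of rows and computes its raw, uncompressed size from the number of pixels (width × height) and the bits per pixel. The helper names and the 640 × 480, 8-bit example are hypothetical; real image files add headers and usually compression.

```python
# Minimal sketch: an image as a 2D array of pixel values, and how spatial
# and signal resolution determine its raw size. Names are hypothetical.

def make_image(width, height, value=0):
    """Return a height x width greyscale image as a list of rows."""
    return [[value] * width for _ in range(height)]

def levels(bits_per_pixel):
    """Signal resolution: number of distinct values a pixel can take."""
    return 2 ** bits_per_pixel

def raw_size_bytes(width, height, bits_per_pixel, channels=1):
    """Uncompressed storage in bytes (rounded up to a whole byte)."""
    total_bits = width * height * bits_per_pixel * channels
    return (total_bits + 7) // 8

img = make_image(640, 480)
print(len(img), len(img[0]))            # 480 rows, 640 columns
print(levels(8))                        # 256 grey levels
print(raw_size_bytes(640, 480, 8))      # 307200 bytes (greyscale)
print(raw_size_bytes(640, 480, 8, 3))   # 921600 bytes (three colour channels)
```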

Electro-Magnetic Spectrum

Figure: the electromagnetic spectrum from UV through visible (starting at 0.4 µm), NIR (1.0 µm marker) and the SWIR, MWIR and LWIR bands (markers at 1.7, 2.5, 3.0, 5.0, 8.0 and 14.0 µm)

• The human eye can see light between 400 and 700 nm.

Charge-Coupled Device (CCD)

CCDs (Charge-Coupled Devices) were invented in 1969 by Willard Boyle and George Smith at AT&T. They are composed of an array of capacitors which are sensitive to light. More modern devices are based upon photodiodes.

Source: Wikipedia

CCD (2)

Generally, the light-sensitive units are arranged in an array whose topology is a lattice. This is not always true, e.g. log-polar CCDs.

Colour CCDs:

• Bayer filter: 1x red, 1x blue, 2x green, because the human eye is more sensitive to green
• RGBE filter: 1x red, 1x blue, 1x green, 1x emerald (cyan)

Figures: Bayer filter; RGBE filter

Source: Wikipedia
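A minimal Python sketch of how a Bayer mosaic assigns one colour filter to each photosite, assuming the common RGGB 2 × 2 tile (other sensors use GRBG, GBRG or BGGR). The helper names are hypothetical. Counting the filters over the array confirms the 1 red : 2 green : 1 blue split.

```python
# Sketch of a Bayer colour filter array, assuming the RGGB layout
# (red at even row / even column). Function names are hypothetical.

BAYER_RGGB = [["R", "G"],
              ["G", "B"]]

def bayer_channel(row, col, pattern=BAYER_RGGB):
    """Colour of the filter over the photosite at (row, col)."""
    return pattern[row % 2][col % 2]

def channel_fractions(height, width, pattern=BAYER_RGGB):
    """Fraction of photosites covered by each colour filter."""
    counts = {"R": 0, "G": 0, "B": 0}
    for r in range(height):
        for c in range(width):
            counts[bayer_channel(r, c, pattern)] += 1
    total = height * width
    return {k: v / total for k, v in counts.items()}

print(bayer_channel(0, 0), bayer_channel(0, 1), bayer_channel(1, 1))  # R G B
print(channel_fractions(4, 4))  # {'R': 0.25, 'G': 0.5, 'B': 0.25}
```

Each photosite records only one colour, so a full-colour image has to be interpolated (demosaiced) from neighbouring sites.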

Bolometers

Invented by the astronomer Samuel Pierpont Langley in 1878. A bolometer is a device consisting of an “absorber” in contact with a “heat sink” through an insulator. The sink can be viewed as a reference for the absorber temperature, which is raised by the power of the incident electromagnetic wave.

Source: Los Alamos National Laboratory

Microbolometer

The microbolometer, a particular kind of bolometer, is the basis for thermal cameras. It is a grid of vanadium oxide or amorphous silicon heat sensors atop a corresponding grid of silicon. IR radiation from a specific range of wavelengths strikes the vanadium oxide and changes its electrical resistance. This resistance change is measured and processed into temperatures which can be represented graphically.

Source: Roland Goecke

Synthetic Aperture Radar

SAR is an active sensing technique:

• The active sensor transmits radio waves
• The antenna picks up the reflections

For a conventional radar, the footprint is governed by the size of the antenna (aperture). SAR creates a synthetic aperture and delivers a 2D image. One dimension is the range (cross track), whereas the other is the azimuth (along track). Sonar and ultrasound work on the same principles, but use sound waves rather than radio waves.

SAR (2)

Figure: SAR imaging geometry, showing the radar track, nadir track, range and azimuth

RADAR = Radio Detection and Ranging

NADIR = opposite of zenith

Figure: SAR image of Venus

Source: Wikipedia

Positron Emission Tomography

Active sensing technique. PET is based on measuring emitted radiation. PET is a nuclear medicine imaging technique which uses radiation from a radioisotope introduced into the target. PET produces a 3D image or map of functional processes in the body.

Source: Wikipedia

Magnetic Resonance Imaging

Active sensing technique. MRI is also based on measuring emitted radiation. MRI stimulates the emission of radiation by aligning the spins of the hydrogen nuclei in water molecules using a strong magnetic field (several tesla!).

• Good for showing soft tissue
• Not good for showing bones

Figures: MRI; magnetic resonance angiography

Source: Wikipedia

Functional MRI

Functional MRI (fMRI) measures signal changes in the brain that are due to changing neural activity. Increases in neural activity cause changes in the MR signal due to a change in the ratio of oxygenated to deoxygenated haemoglobin. Deoxygenated haemoglobin attenuates the MR signal.

Figure: fMRI of head; highlighted areas show the primary visual cortex

Source: Wikipedia

Computed (Axial) Tomography

CT employs a set of axially acquired X-ray images to recover a 3D representation of the object. Originally, the images were in axial or transverse planes, but modern CT scanners deliver volumetric data. Digital geometry processing is used to generate a 3D image of the internals of an object from a large series of 2D X-ray images taken around a single axis of rotation.

Figure: CT scan of head

Source: Wikipedia

CAT/CT

• Good for showing bones
• Not good for showing soft tissue

Figure: modern diagnostic software

Camera Geometry

• The aperture allows light to enter the camera.
• The image plane is where the image is formed.
• The focal length is the distance between the aperture and the image plane.
• The optical axis passes through the centre of the aperture and is perpendicular to it.

Figure: aperture of diameter d, image plane at distance f (focal length) with image coordinates x' and y', and the optical axis z

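Camera Geometry (2)

Figure: a scene point at height x and depth z, at angle θ from the optical axis, projects to x' on the image plane at focal length f.

By similar triangles, x'/f = x/z, so

$$x' = \frac{f x}{z} = f\tan\theta.$$

For small angles,

$$x' \approx f\theta.$$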
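A short Python sketch of this projection, comparing the exact form x' = fx/z with the small-angle approximation x' ≈ fθ. The focal length and point coordinates are illustrative values, not taken from the slides.

```python
import math

def project(x, z, f):
    """Pinhole projection x' = f * x / z (x, z and f in the same units)."""
    if z <= 0:
        raise ValueError("the point must be in front of the camera (z > 0)")
    return f * x / z

def project_small_angle(theta, f):
    """Small-angle approximation x' ~ f * theta, with theta in radians."""
    return f * theta

f = 0.05            # 50 mm focal length (illustrative value)
x, z = 1.0, 10.0    # a point 1 m off-axis and 10 m away (illustrative)
theta = math.atan2(x, z)

exact = project(x, z, f)                # 0.005 m, i.e. 5 mm
approx = project_small_angle(theta, f)  # about 4.98 mm, slightly smaller
print(exact, approx)
```

At about 5.7° off-axis the approximation is already within 0.5%, which is why x' ≈ fθ is usually adequate for narrow fields of view.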

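Camera Geometry (3)

Figure: an object with top x_t and bottom x_b at depth z subtends an angle θ either side of the optical axis and forms an image from x'_b to x'_t.

Using the formula in the previous slide,

$$x'_t = \frac{f x_t}{z}, \qquad x'_b = \frac{f x_b}{z},$$

and, with object size x = x_t - x_b,

$$x' = x'_t - x'_b = \frac{f\,(x_t - x_b)}{z} = \frac{f x}{z}.$$

Hence, size transforms as x' = f x / z. In terms of the subtended angle,

$$x' = 2f\tan\theta \approx f \cdot 2\theta.$$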
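The following Python sketch checks the size relation numerically for a hypothetical 1.8 m object, centred on the optical axis, seen through a 35 mm focal length at 10 m and at 20 m. It shows that image size scales as 1/z, and that 2f tan θ gives the same size as f(x_t - x_b)/z.

```python
import math

def image_size(x_top, x_bottom, z, f):
    """Image size x'_t - x'_b = f*x_t/z - f*x_b/z = f*(x_t - x_b)/z."""
    return f * x_top / z - f * x_bottom / z

f = 0.035   # 35 mm focal length (illustrative)
h = 1.8     # object height in metres (illustrative)

# Object centred on the optical axis, so x_t = h/2 and x_b = -h/2
near = image_size(h / 2, -h / 2, 10.0, f)
far = image_size(h / 2, -h / 2, 20.0, f)
print(near, far)   # about 6.3 mm and 3.15 mm: doubling z halves the image

# The same size from the half-angle theta the object subtends at z = 10 m
theta = math.atan((h / 2) / 10.0)
print(2 * f * math.tan(theta), f * 2 * theta)   # exact, then small-angle
```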

Camera Geometry (4)

Figures: close object; distant object

Rays that pass through the camera aperture spread out and do not make a sharp point on the image. These rays need to be focussed to make a sharp point in the image. The rays from close objects diverge more than those from distant objects. For very distant objects, the rays are effectively parallel.

Aperture and Resolution

Light diffracts as it passes through the aperture: a point in the scene spreads out into a ‘blob’ in the image (a fundamental limit on image sharpness).

The size of the Airy disk (and hence the best resolution) is given by the Rayleigh criterion:

$$\theta_{\min} = 1.22\,\frac{\lambda}{d}, \qquad R_{\min} = 1.22\,\frac{f\lambda}{d},$$

where λ is the wavelength of the light, d is the aperture diameter and f is the focal length.

Figures: circular aperture (Airy disk); square aperture; separate points
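A minimal Python sketch of the Rayleigh criterion, giving the smallest resolvable angle and the corresponding separation on the image plane. The 550 nm wavelength, 2 mm aperture and 20 mm focal length are assumed, illustrative values.

```python
import math

def rayleigh_angle(wavelength, aperture):
    """Minimum resolvable angle in radians: theta_min = 1.22 * lambda / d."""
    return 1.22 * wavelength / aperture

def rayleigh_image_plane(wavelength, aperture, focal_length):
    """Minimum resolvable image-plane separation: R_min = f * theta_min."""
    return focal_length * rayleigh_angle(wavelength, aperture)

lam = 550e-9   # green light, 550 nm (assumed)
d = 2e-3       # 2 mm aperture (illustrative)
f = 0.02       # 20 mm focal length (illustrative)

theta = rayleigh_angle(lam, d)
print(theta)                            # about 3.36e-4 rad
print(rayleigh_image_plane(lam, d, f))  # about 6.7e-6 m (6.7 micrometres)
```

Doubling d halves both limits, which is the quantitative form of "a larger aperture resolves finer detail".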

Resolution

The resolution of a camera is the minimum separation between two points such that they appear separately on the image plane. Since distant objects appear smaller and closer together, the resolution measured in the scene varies with distance. However, the angle between separable objects does not vary with distance (the angular resolution), and neither does their separation on the image plane (the image-plane resolution).

Camera Models

• Pinhole camera
• Camera with lenses

Pinhole Camera

Advantages:

• No distortion of image
• Depth of field from a few cm to infinity
• Wide angular field
• Works on ultra-violet and X-rays

Disadvantages:

• Very limited light gathering
• Poor resolution

Pinhole Camera (2)

The pinhole camera is the simplest camera. The pinhole (aperture d) must be small to get a sharp image, but we need a large pinhole to get enough light!

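Pinhole Camera (3)

For distant objects the geometric limit is

$$R = d.$$

The diffraction limit is

$$R = 1.22\,\frac{f\lambda}{d}.$$

The best resolution occurs when these two are equal:

$$d = 1.22\,\frac{f^{*}\lambda}{d} \quad\text{or}\quad f^{*} = \frac{d^{2}}{1.22\,\lambda},$$

where f* is the optimal focal length.

Figure: resolution R against focal length f, showing the geometric limit R = d and the diffraction limit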
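A Python sketch of the optimum, assuming green light at λ = 550 nm (the slides do not state the wavelength). For a 0.5 mm pinhole it gives f* ≈ 37 cm and, since the blur at f* is R = d, an angular resolution θ = d/f* of about 4.6′, i.e. 1 mm resolved at about 75 cm, in line with the pinhole figures in the camera resolution examples.

```python
import math

def diffraction_limit(d, f, wavelength):
    """Diffraction blur on the image plane: R = 1.22 * f * lambda / d."""
    return 1.22 * f * wavelength / d

def optimal_focal_length(d, wavelength):
    """Focal length where the geometric limit R = d equals the diffraction
    limit: d = 1.22 f* lambda / d  =>  f* = d**2 / (1.22 * lambda)."""
    return d ** 2 / (1.22 * wavelength)

d = 0.5e-3      # 0.5 mm pinhole
lam = 550e-9    # assumed wavelength: green light

f_star = optimal_focal_length(d, lam)
theta = d / f_star                  # angular resolution at f*, in radians
arcmin = math.degrees(theta) * 60

print(round(f_star, 3))             # 0.373 (metres, about 37 cm)
print(round(arcmin, 1))             # 4.6 arcminutes
print(round(1e-3 / theta, 2))       # 0.75: 1 mm is resolved at about 75 cm
```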

Pinhole Camera (4)

Figure: geometric limit; effect of a longer wavelength; effect of a smaller aperture

Cameras with Lenses

For better light-gathering capabilities, we need to increase the aperture. A lens removes the geometric limit on resolution, since it focuses all light entering through the aperture onto the same point on the image.

Figure: lens of aperture d and focal length f, compared with the pinhole path

Cameras with Lenses (2)

We can have apertures as large as we like; the price to pay is chromatic and spherical aberration. The resolution of a lens-based camera is the diffraction limit of the aperture:

$$\theta = 1.22\,\frac{\lambda}{d}$$

The larger the aperture, the better the resolution. The image-plane resolution is still θf:

$$R = 1.22\,\frac{f\lambda}{d}$$

Camera Resolution Examples

• Pinhole camera, 0.5 mm pinhole: optimal focal length f* = 37 cm; θ = 4.6′, equivalent to 1 mm at 75 cm.
• 35 mm lens camera and visible light: θ = 3.9″, i.e. 1 mm at 52 m. The focal length depends on the lens, but is typically < 10 cm for a camera.
• Human eye, pupil 4.5 mm (average): θ = 28″, i.e. 1 mm at 7.4 m. Focal length ~2 cm.
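The lens and eye figures can be reproduced from θ = 1.22λ/d, but the wavelength is not stated. In this Python sketch, λ = 550 nm with d = 35 mm (reading “35 mm” as the aperture diameter) gives about 3.95″ and 1 mm at 52 m, while λ = 500 nm with the 4.5 mm pupil gives about 28″ and 1 mm at 7.4 m; both wavelengths are assumptions chosen to match.

```python
import math

def angular_resolution_arcsec(aperture, wavelength):
    """Diffraction-limited angular resolution 1.22 * lambda / d, in arcseconds."""
    theta = 1.22 * wavelength / aperture
    return math.degrees(theta) * 3600

def distance_resolving(separation, aperture, wavelength):
    """Distance at which two points `separation` apart are just resolved."""
    return separation / (1.22 * wavelength / aperture)

# 35 mm aperture, assuming 550 nm light
print(round(angular_resolution_arcsec(0.035, 550e-9), 2))  # 3.95
print(round(distance_resolving(1e-3, 0.035, 550e-9)))      # 52 (metres)

# 4.5 mm pupil, assuming 500 nm light
print(round(angular_resolution_arcsec(4.5e-3, 500e-9)))    # 28
print(round(distance_resolving(1e-3, 4.5e-3, 500e-9), 1))  # 7.4 (metres)
```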

Illumination

The amount of light entering the camera is proportional to the area of the lens (πd²/4). The area covered by the image is proportional to f². So, the brightness of the image is proportional to d²/f², i.e. it depends on the focal ratio f/d.

Brightness is controlled by a movable aperture which changes d. Settings are referred to by a sequence of ‘f-stops’: f:1 is fully open, and each successive f-stop halves the brightness (so the aperture diameter is reduced by a factor of √2): f:1.4, f:2, f:2.8, f:4, f:5.6 …
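A small Python sketch of the f-stop sequence: each f-number N is √2 times the previous one, so the relative brightness 1/N² halves at every step. The exact values come out as 1.41, 2.83 and 5.66; the markings on real lenses are rounded (f:1.4, f:2.8, f:5.6).

```python
import math

def f_stop_series(count):
    """First `count` f-numbers, each sqrt(2) times the previous one."""
    return [math.sqrt(2) ** k for k in range(count)]

def relative_brightness(f_number):
    """Brightness relative to f:1, since brightness ~ (d/f)**2 = 1/N**2."""
    return 1.0 / f_number ** 2

for n in f_stop_series(6):
    print(f"f:{n:.2f}  relative brightness {relative_brightness(n):.5f}")
```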

Absorption and Reflection

Figure: incident light is partly reflected, partly absorbed and partly transmitted

Reflected + absorbed + transmitted energy = incident light energy

All of these are object (material, surface) dependent!

The BSDF

Bidirectional Scattering Distribution Function: describes the way in which light is scattered by a surface.

BSDF = BRDF + BSSRDF + BTDF

• BRDF: bidirectional reflectance distribution function
• BSSRDF: bidirectional surface scattering reflectance distribution function (incl. subsurface scattering)
• BTDF: bidirectional transmittance distribution function

Source: Wikipedia

The BRDF

The BRDF describes the reflectance of an object as a function of the illumination, viewing geometry and wavelength. It is given by the ratio of the reflected radiance (reflected flux per unit projected area per unit solid angle) to the incident irradiance (incident flux per unit area).

Reference: F. Nicodemus, “Reflectance nomenclature and directional reflectance and emissivity,” Appl. Opt., Vol. 9, 1970, pp. 1474–1475.
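As a minimal worked example (not from the slides), an ideal diffuse, or Lambertian, surface has a constant BRDF f_r = ρ/π, where ρ is the albedo, so the reflected radiance L_r = f_r E_i is the same in every viewing direction. The albedo and irradiance below are illustrative values, and the function names are hypothetical.

```python
import math

def lambertian_brdf(albedo):
    """BRDF of an ideal diffuse surface: f_r = rho / pi, in 1/sr."""
    return albedo / math.pi

def reflected_radiance(brdf, irradiance):
    """L_r = f_r * E_i, valid when f_r does not depend on direction."""
    return brdf * irradiance

E = 1000.0   # incident irradiance, W/m^2 (illustrative)
rho = 0.5    # surface albedo (illustrative)
L = reflected_radiance(lambertian_brdf(rho), E)
print(L)     # about 159.15 W/(m^2 sr), for every viewing direction
```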

The BRDF (2)

The modelling of the lighting conditions in the scene is of pivotal importance for the acquisition and processing of digital imagery. The radiance function can be decomposed into a linear combination of ambient, diffuse and specular components. Recovering the radiance function from a single image is an underconstrained problem.

The BRDF (3)

In general, the BRDF depends on:

• Incoming and outgoing angle
• Incoming and outgoing wavelength
• Incoming and outgoing polarisation
• Incoming and outgoing position (subsurface scattering)
• Delay between the incoming and outgoing light rays

Radiance

Radiance is the power per unit projected area perpendicular to the ray, per unit solid angle in the direction of the ray. The flux is given by

$$d\Phi = L(x,\omega)\cos\theta\,d\omega\,dA.$$

The solid angle subtended by an object is the surface area S of its projection onto a sphere, divided by the square of the sphere's radius R:

$$\Omega = \frac{S}{R^{2}}.$$

Figure: surface patch dA, solid angle dω and radiance L(x, ω)
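A numerical check in Python (not from the slides) of integrating the flux expression: for radiance L that is uniform over the hemisphere, ∫ cos θ dω = π, so the irradiance on the patch is E = πL. The midpoint-rule integration, the function names and the values used are assumptions for illustration.

```python
import math

def hemisphere_cosine_integral(n=200):
    """Midpoint-rule estimate of the integral of cos(theta) d(omega) over a
    hemisphere, with d(omega) = sin(theta) d(theta) d(phi)."""
    d_theta = (math.pi / 2) / n
    total = 0.0
    for i in range(n):
        theta = (i + 0.5) * d_theta
        total += math.cos(theta) * math.sin(theta) * d_theta
    return 2 * math.pi * total  # the phi integral contributes a factor 2*pi

def solid_angle(area_on_sphere, radius):
    """Solid angle in steradians: Omega = S / R**2."""
    return area_on_sphere / radius ** 2

L = 10.0                              # uniform radiance, W/(m^2 sr) (illustrative)
E = L * hemisphere_cosine_integral()  # irradiance, close to pi * L
print(E)                              # about 31.4 W/m^2
print(solid_angle(4 * math.pi * 2.0 ** 2, 2.0))  # whole sphere: 4*pi sr
```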

Example BRDFs

Figures: Oren and Nayar; Cook and Torrance
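The model equations on this slide did not survive extraction. For illustration only, this Python sketch implements the widely quoted qualitative form of the Oren and Nayar model, with roughness σ (the standard deviation of facet slope angle) in radians; with σ = 0 it reduces to the Lambertian case. The function name and arguments are hypothetical, and the constants 0.33, 0.09 and 0.45 come from that commonly cited form, not from the slide.

```python
import math

def oren_nayar_radiance(albedo, irradiance, sigma,
                        theta_i, theta_r, phi_i, phi_r):
    """Qualitative Oren-Nayar reflected radiance (angles in radians)."""
    s2 = sigma ** 2
    A = 1.0 - 0.5 * s2 / (s2 + 0.33)
    B = 0.45 * s2 / (s2 + 0.09)
    alpha = max(theta_i, theta_r)
    beta = min(theta_i, theta_r)
    # Roughness term: only contributes when viewer and light share azimuth
    rough = B * max(0.0, math.cos(phi_r - phi_i)) * math.sin(alpha) * math.tan(beta)
    return (albedo / math.pi) * irradiance * math.cos(theta_i) * (A + rough)

print(oren_nayar_radiance(0.8, 100.0, 0.0, 0.3, 0.6, 0.0, 1.0))  # Lambertian case
print(oren_nayar_radiance(0.8, 100.0, 0.5, 0.3, 0.6, 0.0, 0.0))  # rough surface
```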


Example BRDFs (2)

where mp is the microfacet slope

Example BRDFs (3)

Phong
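The Phong formula on this slide was also lost in extraction. As an illustration, this Python sketch evaluates the standard Phong reflection model, I = k_a i_a + k_d (L·N) i_d + k_s (R·V)^α i_s, which follows the ambient + diffuse + specular decomposition of the radiance function. The helper names, a single light with i_d = i_s, and scalar (grey) intensities are assumptions.

```python
import math

def normalize(v):
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def phong_intensity(normal, to_light, to_viewer, ka, kd, ks, shininess,
                    ambient=1.0, light=1.0):
    """Ambient + diffuse + specular Phong reflection for one light source."""
    n, l, v = normalize(normal), normalize(to_light), normalize(to_viewer)
    n_dot_l = dot(n, l)
    # Mirror direction of the light about the normal: R = 2(N.L)N - L
    r = tuple(2 * n_dot_l * nc - lc for nc, lc in zip(n, l))
    diffuse = kd * max(0.0, n_dot_l) * light
    specular = 0.0
    if n_dot_l > 0:  # no highlight when the light is behind the surface
        specular = ks * max(0.0, dot(r, v)) ** shininess * light
    return ka * ambient + diffuse + specular

# Light and viewer both along the normal: ka + kd + ks
print(phong_intensity((0, 0, 1), (0, 0, 1), (0, 0, 1), 0.1, 0.6, 0.3, 10))
# Light at 45 degrees, viewer along the normal: weaker, off-peak highlight
print(phong_intensity((0, 0, 1), (1, 0, 1), (0, 0, 1), 0.1, 0.6, 0.3, 10))
```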