
Distributive and parallel problem solving - methods, data structures and algorithms

Antal Tatrai

Theses of PhD Dissertation

Department of Computer Algebra

Eotvos Lorand University

Supervisor: Dr. Attila Kovacs PhD

PhD School of Informatics

Dr. Andras Benczur D.Sc.

PhD Program of Basics and methodology of informatics

Dr. Janos Demetrovics

member of the Hungarian Academy of Sciences

2012

Introduction

Multiprocessor computers are becoming increasingly commonplace. Computers with hundreds or thousands of processors are common in industry, and your desktop PC at home probably has more than one processor as well. On the other hand, several problems have only sequential solutions, which often do not meet the required performance. One of the main challenges for computer science in the future is to develop efficient and safe parallel algorithms in place of the sequential ones.

In my thesis I analyzed and solved three difficult problems that previously had no solution or only sequential solutions.

1.1 Concurrent start-up of Erlang systems

A distributed system is a collection of processors that do not share memory or a clock. Each processor has its own local memory. The processors in the system are connected through a communication network, and communication takes place via messages. Erlang is a distributed programming language designed by the telecommunication company Ericsson to support fault-tolerant systems running in soft real-time mode. Programs in Erlang consist of functions stored in modules. Functions can execute concurrently in lightweight processes and communicate with each other through asynchronous message passing. The creation and deletion of processes require little memory and computation time. Erlang is an open source development system with a distributed kernel. It is most often used together with the library called Open Telecom Platform (OTP). OTP consists of a development system platform for building telecommunication applications and a control system platform for running them. It has a set of design principles (behaviours), which together with middleware applications yield building blocks for scalable, robust real-time systems. The basic concept in Erlang/OTP is the supervision tree. This is a process structuring model based on the idea of workers and supervisors. The processes of an Erlang/OTP application can be structured in a (process) tree. Each inner node is a supervisor, and each leaf is a worker. The worker nodes perform the functionality of the application. The supervisor nodes monitor their children and can make decisions in case of failures. This process structure is very helpful for high-performance, fault-tolerant systems.

The start-up of these systems is a sequential process, because the nodes of the supervision tree communicate with each other. A supervisor node waits for an acknowledgement (ACK) message from each child and starts its next child only after it has received the ACK message from the previous one. Consequently, a supervisor node starts its children one after the other, in left-to-right order.

The sequential start imposes an order on the starting processes, which parallelization would destroy. Therefore an alternative ordering is required for the parallel case in order to avoid deadlocks and start-up crashes.

The Challenge:

For a solution to the concurrent start-up of Erlang systems the following must hold:

• The supervision tree structure, as well as other functionalities, must be preserved.

• The start-up must be reliable and fast (faster than the sequential one).

• Only "small" modifications are permitted in the Erlang/OTP stdlib.

1.2 Cache cleaning

Modern computer systems often use caches to make slowly accessible data available much faster. One of the most typical examples is the Domain Name System (DNS). Usually, the response to a query arrives from a nearer server instead of the original name server of the target IP address, and the data is stored in the cache of the nearer server.

Because these data are only a copy of the original, their validity has to be rechecked periodically. Usually each item saved into a cache has a special field called Time To Live (TTL). This is a time value that gives the validity duration of the item. When an element has expired it must be dropped from the cache or fetched again. This functionality is often implemented with a special thread which starts periodically and checks the elements in the cache.

A cache is an abstract data type which can be implemented in several ways. In most cases trees and hash tables are the basis of this data type. I focused on linked hash table implementations. In the parallel case a large number of threads try to access the cache, and mutual exclusion becomes the most important aspect of the implementations. Usually, the cleaning thread has to read the whole data structure. Clearly, if it is known which elements have expired, the algorithms work faster.

The Challenge:

• Develop data structures on which the parallel cache cleaning algorithms work faster.

1.3 Classifications of $\mathbb{Z}^n$ through generalized number systems

Let $M \in \mathbb{Z}^{n \times n}$ be the base, and let $D \subseteq \mathbb{Z}^n$ with $0 \in D$ be a finite set of digits. The pair $(M, D)$ is called a generalized number system (GNS) if for each $x \in \mathbb{Z}^n$ there is a $k \in \mathbb{N}$ such that $x = \sum_{i=0}^{k} M^i d_{i_j}$, where $d_{i_j} \in D$. There are some necessary conditions for the pair $(M, D)$ to be a GNS, namely $M$ must be expansive, $D$ must be a complete residue system modulo $M$, and $\det(M - I) \neq \pm 1$.

Let $\varphi : \mathbb{Z}^n \to \mathbb{Z}^n$, $\varphi(x) = M^{-1}(x - d)$ for the unique $d \in D$ satisfying $x \equiv d \pmod{M}$. The sequence obtained by repeated application of $\varphi$ to an $x$ is called the orbit of $x$. A point $x$ is called periodic if $\varphi^k(x) = x$ for some $k > 0$. The orbit of a periodic point is called a cycle.

Since $M$ is expansive, there exists a norm on $\mathbb{Z}^n$ such that the corresponding operator norm satisfies $\|M^{-1}\| < 1$. Let $S_k = \{x \in \mathbb{Z}^n : \|x\| < k\}$ for $k \in \mathbb{R}$. Since $D$ is finite, there exists a $C \in \mathbb{R}$ such that the orbit of any $x \in \mathbb{Z}^n$ eventually enters $S_C$ and remains there. The number system property can now be reformulated as follows: the pair $(M, D)$ is a GNS iff the orbit of every $x \in \mathbb{Z}^n$ reaches 0. The decision problem means determining whether the number system property holds, and the classification problem means computing all the disjoint cycles.
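The definitions above can be illustrated by a minimal sketch for the one-dimensional case ($n = 1$), where the base is a single integer. The function names `make_phi`, `cycle_of` and `classify`, as well as the hand-chosen search window, are assumptions made for this illustration and are not taken from the thesis.

```python
def make_phi(base, digits):
    """Return phi(x) = (x - d) / base, where d is the digit congruent to x."""
    modulus = abs(base)
    by_residue = {d % modulus: d for d in digits}
    if len(digits) != modulus or len(by_residue) != modulus:
        raise ValueError("digits must form a complete residue system modulo the base")

    def phi(x):
        d = by_residue[x % modulus]
        return (x - d) // base      # exact division, because x is congruent to d

    return phi


def cycle_of(phi, x):
    """Follow the orbit of x until a point repeats and return the cycle reached."""
    orbit, position = [], {}
    while x not in position:
        position[x] = len(orbit)
        orbit.append(x)
        x = phi(x)
    return orbit[position[x]:]


def classify(phi, points):
    """Collect the distinct cycles reached from the given start points."""
    cycles = set()
    for x in points:
        cycle = cycle_of(phi, x)
        k = cycle.index(min(cycle))             # rotate to a canonical start
        cycles.add(tuple(cycle[k:] + cycle[:k]))
    return cycles
```

With these definitions, `classify(make_phi(-2, [0, 1]), range(-10, 11))` finds only the cycle `(0,)`, which is consistent with base $-2$ and digits $\{0, 1\}$ being a number system. For base $2$ with the same digits it additionally finds the cycle `(-1,)`, since $\varphi(-1) = -1$; this base also violates the necessary condition $\det(M - I) \neq \pm 1$.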

There are sequential algorithms for the decision and for the classification problem. Unfortunately, when some of the eigenvalues of $M$ are close to one, the finite set $S_C$ can be extremely large. This is the case for generalized binary number systems (i.e. when $\det M = \pm 2$) in higher dimensions.

The Challenge:

• Develop a parallel algorithm for the decision and classification problem.


Theses

2.1 Concurrent start-up of Erlang systems

Thesis 1

I designed and implemented a method for concurrent starting of supervisors’ children

without destroying the supervision trees. This solution does not need significant changes in

the Erlang/OTP stdlib and provides a reliable dependency description between processes.

The construction of the dependency graph that manages the starting order bears a resemblance to the mechanism used by Apple's Mac OS X StartupItems [5]. Information about Erlang and OTP can be found in [6, 7, 8, 9, 22].

In order to preserve the supervision tree structure I introduced the notion of conditions. At the beginning of the start-up all conditions are false. A condition on which the start of a process depends is called a precondition of that process. A process can start once all its preconditions have become true. When a process has started up, it sets its own condition to true. These relations can be represented in a dependency graph.
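The condition mechanism can be sketched as follows. This is only an illustration in Python threads, not the Erlang implementation of the thesis; the class `Conditions`, the functions `start_process` and `start_all`, and the convention that each process publishes a condition named after itself are assumptions made here.

```python
import threading


class Conditions:
    """Named boolean conditions; every condition is false until it is set."""

    def __init__(self):
        self._events = {}
        self._lock = threading.Lock()
        self.start_order = []            # names in the order they became true

    def _event(self, name):
        with self._lock:
            return self._events.setdefault(name, threading.Event())

    def set_true(self, name):
        event = self._event(name)
        with self._lock:
            self.start_order.append(name)
        event.set()

    def wait_for(self, names):
        for name in names:
            self._event(name).wait()


def start_process(name, preconditions, conditions):
    conditions.wait_for(preconditions)   # block until all preconditions hold
    # ... the real initialisation work of the process would go here ...
    conditions.set_true(name)            # publish the own condition


def start_all(dependencies):
    """Start every process in its own thread; return the observed start order."""
    conditions = Conditions()
    threads = [
        threading.Thread(target=start_process, args=(name, pre, conditions))
        for name, pre in dependencies.items()
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return conditions.start_order
```

For example, `start_all({"app": ["db", "net"], "db": ["disk"], "net": [], "disk": []})` starts all four processes concurrently, yet `disk` always finishes before `db`, and `db` and `net` before `app`. A cyclic dependency graph would block forever in this sketch, so the graph is assumed to be acyclic.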

Next, I proposed an Erlang technique that enables processes to start concurrently. It allows us to specify which nodes should start concurrently. When a supervisor process $s$ wants to start a child process $w_1$, the system starts a dummy (or wrapper) node $s_1$ instead.

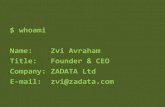

Figure 2.1: Inserting a dummy supervisor node for (1) preserving the supervisor's restarting behaviour, and (2) enabling fast parallel start-up. Supervisors are denoted by squares, permanent processes by continuous border, and temporary processes by dashed border. In the middle of the tree one can see the living processes ($s_2$, $w_2$) after termination of the dummy functions.

Figure 2.2: Start-up speed of the sequential and concurrent versions (in seconds) in case of different structures of supervision trees.

Then the dummy process $s_1$ starts a simple function $s_{1,f}$ (which just calls a spawn function) and immediately sends an ACK message back to its parent $s$. Consequently, the next child $w_2$ of the supervisor node $s$ can start. The function $s_{1,f}$ spawns a function $f$. The spawned function $f$ starts the process $w_1$ and attaches it to the dummy process $s_1$. The already started dummy process $s_1$ runs independently of (in parallel with) the other parts of the system. The advantage of this method is that if the process $w_1$ has a blocking precondition, then only $w_1$ waits and not the whole system. Figure 2.1 shows the described supervision hierarchy after start-up.
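The effect of the immediate ACK can be shown with a small sketch. It uses Python threads in place of Erlang processes and `time.sleep` in place of a slow init section, so it only mimics the control flow; the function names `start_sequential`, `start_with_wrappers` and `timed` are hypothetical.

```python
import threading
import time


def start_sequential(child_inits):
    """Plain supervisor: the ACK of a child arrives only after its init ran."""
    for init in child_inits:                      # left-to-right order
        init()                                    # returning plays the role of the ACK


def start_with_wrappers(child_inits):
    """Each child is replaced by a wrapper that ACKs before the real init runs."""
    spawned = []
    for init in child_inits:                      # same left-to-right order
        worker = threading.Thread(target=init)    # the spawned function f
        worker.start()                            # real child initialises in the background
        spawned.append(worker)                    # the wrapper keeps the child attached
        # continuing the loop corresponds to the immediate ACK sent to the supervisor
    for worker in spawned:
        worker.join()                             # only needed to measure the total time


def timed(start, child_inits):
    began = time.perf_counter()
    start(child_inits)
    return time.perf_counter() - began
```

With four children that each sleep for 0.1 seconds in their init, `timed(start_sequential, inits)` takes roughly the sum of the init times, while `timed(start_with_wrappers, inits)` takes roughly the time of the slowest child.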

After implementing the above mechanism in Erlang I created some example programs for measuring the performance. Each worker process performs slow floating point calculations in its init section. My prototype is pessimistic in the sense that telecommunication servers usually wait for a connection or for hardware, and waiting does not load the processors. Figure 2.2 shows the measured speed-up.

2.2 Cache cleaning

Thesis 2

I developed and implemented secondary data structures for the hash table. These data structures hold only pointers and the expiry times of the elements. The cleaning thread has to examine only the secondary structure, which makes the expired elements available faster.

In the eighties Carla Sch. Ellis described lock-based hash tables [11, 12] which perform better than simple locking. In 2002 a compare-and-set based hash table was introduced by Maged M. Michael [13, 14, 15, 16, 19, 20]. It showed performance equal to that of the Ellis hash table if the number of threads equals the number of processors, but was much faster if there are more threads than processors. A serious disadvantage of this hash table was that its backbone was not resizable, and it produced long buckets. Somewhat later, a high-performance resizable lock-free hash table was described by Ori Shalev and Nir Shavit [21]. In the implementation of my solution I used the Ellis locking mechanism to show a general solution to the problem of cache cleaning.

Figure 2.3: Linked hash table with bucket-list secondary structure

Several secondary structures were implemented. The first is the well-known binary heap. The disadvantage of this structure is that (in contrast to Dijkstra's algorithm) the cleaning usually erases more than one element. Erasing the elements from the cache implies an $O(\log n)$ time reordering of the secondary structure for each item. Moreover, insertion has the same overhead. I tried search trees as well and got similar results.
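A minimal single-threaded sketch of a heap as secondary structure is given below. It omits the locking that the thesis builds on, and the class name `HeapCache`, the explicit `now` arguments and the lazy skipping of stale heap entries are assumptions of this illustration.

```python
import heapq


class HeapCache:
    """Hash table plus a binary heap ordered by expiry time."""

    def __init__(self):
        self.table = {}      # key -> (value, expires_at)
        self.heap = []       # (expires_at, key) entries, earliest expiry on top

    def put(self, key, value, ttl, now):
        expires_at = now + ttl
        self.table[key] = (value, expires_at)
        heapq.heappush(self.heap, (expires_at, key))        # O(log n) per insertion

    def clean(self, now):
        """Remove every expired element and return the removed keys."""
        removed = []
        while self.heap and self.heap[0][0] <= now:
            expires_at, key = heapq.heappop(self.heap)      # O(log n) per expired item
            entry = self.table.get(key)
            # Skip heap entries left behind by a later put() of the same key.
            if entry is not None and entry[1] == expires_at:
                del self.table[key]
                removed.append(key)
        return removed
```

The cleaner only looks at the top of the heap, so it never scans the whole table, but every insertion and every removal pays the logarithmic reordering cost mentioned above.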

Finally, I developed a special data structure called the bucket-list (Figure 2.3 shows this data structure). The basic idea behind this structure is time slicing. Time is divided into parts containing cleaning periods. The parts in which cache elements expire are stored in a linked list. Each element of the list (a bucket) represents a time slice and contains pointers to the items of the hash table which will expire in this time slice. In each execution of the cleaning thread only a whole bucket is erased. The list contains the time slices in linear order. Consequently, the cleaning thread finds the proper time slice at the end of the list in $O(1)$ computation time. In the worst case, insertion takes $O(ts)$ time, where $ts$ is the number of time slices in the list. However, if we assume that the TTL values are constant, then insertion takes $O(1)$ time. This restriction usually holds in the case of DNS.
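The bucket-list idea can be sketched in a few lines. This is a single-threaded illustration under several assumptions that are not taken from the thesis: the class name `BucketListCache`, integer time stamps, a `deque` in place of a linked list, and the rule that a bucket is erased only once its whole time slice has elapsed.

```python
from collections import deque


class BucketListCache:
    """Hash table plus a list of time-slice buckets, newest slice first."""

    def __init__(self, slice_length):
        self.slice_length = slice_length
        self.table = {}            # key -> (value, set of keys of its bucket)
        self.buckets = deque()     # (slice_no, set of keys), descending slice_no

    def _bucket_for(self, slice_no):
        # Scan from the newest slice; with constant TTL the first step succeeds.
        for i, (number, keys) in enumerate(self.buckets):
            if number == slice_no:
                return keys
            if number < slice_no:
                keys = set()
                self.buckets.insert(i, (slice_no, keys))
                return keys
        keys = set()
        self.buckets.append((slice_no, keys))
        return keys

    def put(self, key, value, ttl, now):
        if key in self.table:
            self.table[key][1].discard(key)        # leave the old bucket
        keys = self._bucket_for((now + ttl) // self.slice_length)
        keys.add(key)
        self.table[key] = (value, keys)

    def clean(self, now):
        """Erase whole buckets whose time slice has fully elapsed."""
        current = now // self.slice_length
        removed = []
        while self.buckets and self.buckets[-1][0] < current:
            _, keys = self.buckets.pop()           # oldest slice is at the end
            for key in keys:
                del self.table[key]
                removed.append(key)
        return removed
```

The cleaner touches only the end of the list, while an insertion walks the list from the newest slice, which is the $O(ts)$ worst case and a single step when all TTL values are equal.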

I implemented the Ellis hash table with a cleaning thread, and with the three secondary structures above. In the case of the bucket-list I found a speed-up only if the time slices were very short (1 second).

2.3 Classifications of $\mathbb{Z}^n$ through generalized number systems

Thesis 3.1

I developed and implemented two grid solutions for the classification problem using master-slave grid technology.

It is known that all the periodic elements of the system $(M, D)$ lie inside the compact set $-H$, where the set $H$ contains the elements with zero integer part in the system (the fractions). There is a method by which the set $H$ can be enclosed in an $n$-dimensional rectangle [17]. The earlier (sequential) algorithm decides the periodicity of the points of this rectangle by analyzing the points' orbits. My simple grid solution enumerates the points of the rectangle and cuts this sequence into slices. The server application sends these slices to the worker machines. Clearly, if during the iteration of the function $\varphi$ the orbit arrives at an already examined point, then no more iterations are needed. My first type of enumeration creates slices in a back-tracking order. In this case each worker receives the start point and the size of a slice.

When the orbit of a point reaches a point which occurs earlier in the enumeration than the slice's first point, further iteration of $\varphi$ is unnecessary, and the algorithm can continue with the next point. This traversal of the rectangle resembles filling a box (in 3 dimensions) with water. In general, the function $\varphi$ tends to zero while the water is at the bottom of the box, therefore the already checked points are mostly far from the orbits.
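The slicing of the enumeration and the early-stop test can be sketched as follows. The thesis does not spell out the enumeration order here, so plain lexicographic order is an assumption of this sketch, as are the function names `box_size`, `point_at`, `index_of`, `slices` and `can_stop`.

```python
def box_size(lows, highs):
    """Number of lattice points in the rectangle lows <= x <= highs."""
    size = 1
    for lo, hi in zip(lows, highs):
        size *= hi - lo + 1
    return size


def point_at(index, lows, highs):
    """Return the index-th lattice point of the rectangle in lexicographic order."""
    coords = []
    for lo, hi in zip(reversed(lows), reversed(highs)):
        index, offset = divmod(index, hi - lo + 1)
        coords.append(lo + offset)
    return tuple(reversed(coords))


def index_of(point, lows, highs):
    """Inverse of point_at for points inside the rectangle."""
    index = 0
    for x, lo, hi in zip(point, lows, highs):
        index = index * (hi - lo + 1) + (x - lo)
    return index


def slices(total, slice_size):
    """Cut the enumeration 0..total-1 into (start, size) pairs for the workers."""
    return [(start, min(slice_size, total - start))
            for start in range(0, total, slice_size)]


def can_stop(point, slice_start, lows, highs):
    """True if the orbit reached a point enumerated before this slice began."""
    inside = all(lo <= x <= hi for x, lo, hi in zip(point, lows, highs))
    return inside and index_of(point, lows, highs) < slice_start
```

A master would hand each `(start, size)` pair to a worker, which iterates over `point_at(start + i, lows, highs)` and abandons an orbit as soon as `can_stop` holds for the current orbit point.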

It is possible to improve the previous algorithm with a different division of the rectangle. The division consists of small rectangles (called bricks). A worker computes bricks one after the other. The first brick encloses 0. Bricks which are being computed or have been computed form a prism if they constitute an $n$-dimensional rectangle. The master generates the bricks in spiral order. A worker gets one brick and one prism at a time. It checks all points of the brick and stops an iteration if the orbit arrives in the prism. In this way the program checks the points nearer to 0 first. The advantage of this method is that during the computation of the orbits the iterations arrive at points near 0 more often. Consequently, these points are accessed essentially only once. Figure 2.4 shows the factors of the running time of the grid algorithms.

Thesis 3.3

I created parallel solutions for the Brunotte algorithm that were optimized for two different platforms.

Figure 2.4: The factors of the running time in some small-dimensional cases

The Brunotte algorithm [10, 18] also applies the function $\varphi$ to locate a nonzero periodic point or to conclude that the system is a GNS. In contrast to the previously mentioned solutions, however, the algorithm starts by applying the function $\varphi$ to a small set that grows in each iteration. This growth is finite. If the set has stopped growing and there is no periodic element other than 0, then the pair $(M, D)$ is a GNS.

I produced two parallel solutions for this problem. The first version runs on computers that have multicore chips and shared memory (SMP platform), and the second version is optimized for the Cell Broadband Engine Architecture (CBEA). The two versions were compared, and the comparison shows that the Brunotte algorithm runs faster on SMP platforms. Figure 2.5 shows the running times of the two parallel Brunotte algorithms.

[Plot: execution time in seconds against the number of worker threads (0 to 16). Series: Sequential (Opteron); Sequential (PowerXCell); Copy stage (Opteron); Without copy stage (Opteron); Copy stage (PowerXCell); Without copy stage (PowerXCell).]

Figure 2.5: The running time of the SMP and the CBEA optimized Brunotte algorithms


Publications of the author

[1] Bansagi, A., Ezsias, B. G., Kovacs, A., and Tatrai, A. Source code scanners in software quality management and connections to international standards. Annales Universitatis Scientiarum Budapestinensis, Sectio Computatorica 37 (2012), 81–93.

[2] Kovacs, A., Burcsi, P., and Tatrai, A. Start-phase control of distributed systems written in Erlang/OTP. Acta Universitatis Sapientiae Informatica 2, 1 (2010), 10–28.

[3] Tatrai, A. Parallel implementations of Brunotte's algorithm. Journal of Parallel and Distributed Computing 71, 4 (2011), 565–572.

[4] Tatrai, A., Dezso, B., and Fekete, I. Concurrent implementation of caches. In 7th International Conference on Applied Informatics (2007), vol. 1, pp. 361–374.

Bibliography

[5] Mac OS X Startup Items. http://developer.apple.com/documentation/MacOSX/Conceptual/BPSystemStartup/BPSystemStartup.pdf.

[6] Open Source Erlang. www.erlang.org.

[7] Armstrong, J. Making reliable distributed systems in the presence of errors. PhD thesis, Royal Institute of Technology, Stockholm, 2003.

[8] Armstrong, J. L., and Virding, R. One pass real-time generational mark-sweep garbage collection. In IWMM ’95: Proceedings of the International Workshop on Memory Management (London, UK, 1995), Springer-Verlag, pp. 313–322.

[9] Blau, S., and Rooth, J. AXD 301 - A New Generation ATM Switching System, 1998.

[10] Brunotte, H. On trinomial bases of radix representations of algebraic integers. Acta Scientiarum Mathematicarum (2001).

[11] Ellis, C. S. Extendible hashing for concurrent operations and distributed data. In PODS ’83: Proceedings of the 2nd ACM SIGACT-SIGMOD symposium on Principles of database systems (New York, NY, USA, 1983), ACM, pp. 106–116.

[12] Ellis, C. S. Concurrency and linear hashing. In PODS ’85: Proceedings of the fourth ACM SIGACT-SIGMOD symposium on Principles of database systems (New York, NY, USA, 1985), ACM, pp. 1–7.

[13] Fraser, K., and Harris, T. Concurrent programming without locks. ACM Trans. Comput. Syst. 25, 2 (2007), 5.

[14] Harris, T. L. A pragmatic implementation of non-blocking linked-lists. In DISC ’01: Proceedings of the 15th International Conference on Distributed Computing (London, UK, 2001), Springer-Verlag, pp. 300–314.

[15] Herlihy, M. Wait-free synchronization. ACM Trans. Program. Lang. Syst. 13, 1 (1991), 124–149.

[16] Scherer III, W. N., and Scott, M. L. Nonblocking concurrent data structures with condition synchronization. In Proc. of the Intl. Symp. on Distributed Computing (DISC) (2004), pp. 174–187.

[17] Kovacs, A. On computation of attractors for invertible expanding linear operators in $\mathbb{Z}^k$. Publ. Math. Debrecen 56/1-2 (2000), 97–120.

[18] Kovacs, A., Burcsi, P., and Papp-Varga, Z. Decision and classification algorithms for generalized number systems. Annales Univ. Sci. Budapest, Sect. Comp. 28 (2008), 141–156.

[19] Michael, M. M. High performance dynamic lock-free hash tables and list-based sets. In SPAA ’02: Proceedings of the fourteenth annual ACM symposium on Parallel algorithms and architectures (New York, NY, USA, 2002), ACM, pp. 73–82.

[20] Michael, M. M. Safe memory reclamation for dynamic lock-free objects using atomic reads and writes. In PODC ’02: Proceedings of the twenty-first annual symposium on Principles of distributed computing (New York, NY, USA, 2002), ACM, pp. 21–30.

[21] Shalev, O., and Shavit, N. Split-ordered lists: Lock-free extensible hash tables. J. ACM 53, 3 (2006), 379–405.

[22] Virding, R., Wikstrom, C., and Williams, M. Concurrent programming in ERLANG (2nd ed.). Prentice Hall International (UK) Ltd., Hertfordshire, UK, 1996.
