DESCENT, NEWTON LOGISTIC REGRESSION,...


-

Matthieu R Bloch Thursday, January 30, 2020

LOGISTIC REGRESSION, GRADIENT DESCENT, NEWTON


-

LOGISTICS

TAs and office hours

Monday: Mehrdad (TSRB 523a) - 2:00pm-3:15pm
Tuesday: TJ (VL C449 Cubicle D) - 1:30pm-2:45pm
Wednesday: Matthieu (TSRB 423) - 12:00pm-1:15pm
Thursday: Hossein (VL C449 Cubicle B) - 10:45am-12:00pm
Friday: Brighton (TSRB 523a) - 12:00pm-1:15pm

Homework 1: hard deadline Friday January 31, 2020 (11:59pm EST) (Wednesday February 5, 2020 for DL)

Homework 2 posted: due Wednesday February 7, 2020 11:59pm EST (Wednesday February 14, 2020 for DL)


-

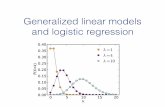

RECAP: GENERATIVE MODELS

Quadratic Discriminant Analysis: classes distributed according to $\mathcal{N}(\mu_k, \Sigma_k)$

Covariance matrices are class dependent, but the decision boundary is not linear anymore

Generative model rarely accurate

Number of parameters to estimate: $K-1$ class priors, $Kd$ means, $\frac{1}{2}d(d+1)$ elements of covariance matrix

Works well if $N \gg d$. Works poorly if $N \ll d$ without other tricks (dimensionality reduction, structured covariance)

Biggest concern: “one should solve the [classification] problem directly and never solve a more general problem as an intermediate step [such as modeling p(x|y)].”, Vapnik, 1998

Revisit binary classifier with LDA

$$\eta_1(x) = \frac{\pi_1 \phi(x; \mu_1, \Sigma)}{\pi_1 \phi(x; \mu_1, \Sigma) + \pi_0 \phi(x; \mu_0, \Sigma)} = \frac{1}{1 + \exp(-(w^\intercal x + b))}$$

We do not need to estimate the full joint distribution!
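The step from the ratio of Gaussian densities to the logistic form is not spelled out on the slide. A short derivation sketch follows, assuming a shared covariance $\Sigma$ for both classes, so that the quadratic terms in $x$ cancel:

```latex
\begin{align*}
\eta_1(x)
  &= \frac{\pi_1 \phi(x;\mu_1,\Sigma)}{\pi_1 \phi(x;\mu_1,\Sigma) + \pi_0 \phi(x;\mu_0,\Sigma)}
   = \frac{1}{1 + \exp\left(-\log\frac{\pi_1 \phi(x;\mu_1,\Sigma)}{\pi_0 \phi(x;\mu_0,\Sigma)}\right)}, \\
\log\frac{\pi_1 \phi(x;\mu_1,\Sigma)}{\pi_0 \phi(x;\mu_0,\Sigma)}
  &= \log\frac{\pi_1}{\pi_0}
     - \tfrac{1}{2}(x-\mu_1)^\intercal \Sigma^{-1} (x-\mu_1)
     + \tfrac{1}{2}(x-\mu_0)^\intercal \Sigma^{-1} (x-\mu_0) \\
  &= \underbrace{(\mu_1-\mu_0)^\intercal \Sigma^{-1}}_{w^\intercal} x
     + \underbrace{\tfrac{1}{2}\mu_0^\intercal \Sigma^{-1} \mu_0
     - \tfrac{1}{2}\mu_1^\intercal \Sigma^{-1} \mu_1
     + \log\frac{\pi_1}{\pi_0}}_{b}.
\end{align*}
```

The term $x^\intercal \Sigma^{-1} x$ appears with opposite signs in the two quadratic forms, which is why the exponent is affine in $x$.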

-

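LOGISTIC REGRESSION

Assume that $\eta(x)$ is of the form $\frac{1}{1+\exp(-(w^\intercal x + b))}$

Estimate $\hat{w}$ and $\hat{b}$ from the data directly

Plug in the result to obtain $\hat{\eta}(x) = \frac{1}{1+\exp(-(\hat{w}^\intercal x + \hat{b}))}$

The function $x \mapsto \frac{1}{1+e^{-x}}$ is called the logistic function

The binary logistic classifier is $h^{\mathrm{LC}}(x) = \mathbb{1}\{\hat{\eta}(x) \geq \frac{1}{2}\} = \mathbb{1}\{\hat{w}^\intercal x + \hat{b} \geq 0\}$ (linear)

How do we estimate $\hat{w}$ and $\hat{b}$?

From LDA analysis: $\hat{w} = \hat{\Sigma}^{-1}(\hat{\mu}_1 - \hat{\mu}_0)$, $\hat{b} = \frac{1}{2}\hat{\mu}_0^\intercal \hat{\Sigma}^{-1} \hat{\mu}_0 - \frac{1}{2}\hat{\mu}_1^\intercal \hat{\Sigma}^{-1} \hat{\mu}_1 + \log\frac{\hat{\pi}_1}{\hat{\pi}_0}$

Direct estimation of $(\hat{w}, \hat{b})$ from maximum likelihood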
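As a numerical sanity check, the sketch below compares the posterior computed from Gaussian densities with the logistic function evaluated at the LDA weights and offset. The parameter values and the helper names (`gaussian_pdf`, `lda_logistic_params`) are made up for illustration and are not from the lecture.

```python
import numpy as np

def gaussian_pdf(x, mu, sigma):
    """Density of N(mu, sigma) evaluated at a single point x."""
    d = mu.shape[0]
    diff = x - mu
    quad = diff @ np.linalg.solve(sigma, diff)
    norm = np.sqrt((2 * np.pi) ** d * np.linalg.det(sigma))
    return np.exp(-0.5 * quad) / norm

def lda_logistic_params(mu0, mu1, sigma, pi0, pi1):
    """Weights w and offset b from the LDA analysis (shared covariance)."""
    sigma_inv = np.linalg.inv(sigma)
    w = sigma_inv @ (mu1 - mu0)
    b = (0.5 * mu0 @ sigma_inv @ mu0
         - 0.5 * mu1 @ sigma_inv @ mu1
         + np.log(pi1 / pi0))
    return w, b

def logistic(z):
    """Logistic function z -> 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical parameters, chosen only for illustration.
mu0 = np.array([0.0, 0.0])
mu1 = np.array([1.0, 2.0])
sigma = np.array([[2.0, 0.3], [0.3, 1.0]])
pi0, pi1 = 0.6, 0.4
x = np.array([0.5, -1.0])

w, b = lda_logistic_params(mu0, mu1, sigma, pi0, pi1)

# Posterior of class 1 via Bayes' rule with Gaussian class conditionals.
num = pi1 * gaussian_pdf(x, mu1, sigma)
eta_bayes = num / (num + pi0 * gaussian_pdf(x, mu0, sigma))

# Same posterior via the logistic form, and the resulting linear classifier.
eta_logistic = logistic(w @ x + b)
label = int(w @ x + b >= 0)
```

Thresholding `eta_logistic` at 1/2 and checking the sign of `w @ x + b` give the same label, which is the sense in which the classifier is linear.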


-

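MLE FOR LOGISTIC REGRESSION

We have a parametric density model $p_\theta(y|x)$ for $\hat{\eta}(x)$

Standard trick: $\tilde{x} = [1, x^\intercal]^\intercal$ and $\theta = [b \; w^\intercal]^\intercal$. This allows us to lump in the offset and write

$$\eta(x) = \frac{1}{1 + \exp(-\theta^\intercal \tilde{x})}$$

Given our dataset $\{(\tilde{x}_i, y_i)\}_{i=1}^N$ the likelihood is $L(\theta) \triangleq \prod_{i=1}^N P_\theta(y_i | \tilde{x}_i)$

For $K = 2$ with $\mathcal{Y} = \{0, 1\}$ we obtain

$$L(\theta) \triangleq \prod_{i=1}^N \eta(\tilde{x}_i)^{y_i} (1 - \eta(\tilde{x}_i))^{1-y_i}$$

$$\ell(\theta) = \sum_{i=1}^N \left( y_i \log \eta(\tilde{x}_i) + (1 - y_i) \log(1 - \eta(\tilde{x}_i)) \right)$$

$$\ell(\theta) = \sum_{i=1}^N \left( y_i \theta^\intercal \tilde{x}_i - \log(1 + e^{\theta^\intercal \tilde{x}_i}) \right)$$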
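The two expressions for the log-likelihood can be checked against each other numerically. The following is a minimal sketch on synthetic data; the data, the seed, and the function names are assumptions made for illustration.

```python
import numpy as np

def augment(X):
    """Prepend a constant 1 to every row, so that theta = [b, w]."""
    return np.hstack([np.ones((X.shape[0], 1)), X])

def log_likelihood_eta(theta, X_tilde, y):
    """sum_i y_i log(eta_i) + (1 - y_i) log(1 - eta_i)."""
    eta = 1.0 / (1.0 + np.exp(-(X_tilde @ theta)))
    return np.sum(y * np.log(eta) + (1 - y) * np.log(1 - eta))

def log_likelihood_compact(theta, X_tilde, y):
    """sum_i y_i theta^T x_i - log(1 + exp(theta^T x_i))."""
    z = X_tilde @ theta
    # np.logaddexp(0, z) evaluates log(1 + e^z) without overflow.
    return np.sum(y * z - np.logaddexp(0.0, z))

# Synthetic data, used only to compare the two formulas.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
y = rng.integers(0, 2, size=50)      # labels in {0, 1}
theta = rng.normal(size=4)           # [b, w_1, w_2, w_3]

X_tilde = augment(X)
ll_eta = log_likelihood_eta(theta, X_tilde, y)
ll_compact = log_likelihood_compact(theta, X_tilde, y)
```

The compact form is usually the safer one to implement, since it avoids taking the logarithm of a probability that may round to 0 or 1.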

-

FINDING THE MLE

A necessary condition for optimality is $\nabla_\theta \ell(\theta) = 0$

Here this means $\sum_{i=1}^N \tilde{x}_i \left( y_i - \frac{1}{1+\exp(-\theta^\intercal \tilde{x}_i)} \right) = 0$

System of $d + 1$ nonlinear equations!

Use a numerical algorithm to find the solution of $\operatorname{argmin}_\theta -\ell(\theta)$

Provable convergence when $-\ell$ is convex

We will discuss two techniques:

Gradient descent

Newton’s method

There are many more, especially useful in high dimension
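The gradient expression in the optimality condition can be verified against central finite differences of the log-likelihood. This is a sketch under assumed synthetic data; `numerical_grad` is a hypothetical helper, not part of any library.

```python
import numpy as np

def log_likelihood(theta, X_tilde, y):
    """sum_i y_i theta^T x_i - log(1 + exp(theta^T x_i))."""
    z = X_tilde @ theta
    return np.sum(y * z - np.logaddexp(0.0, z))

def grad_log_likelihood(theta, X_tilde, y):
    """sum_i x_i (y_i - 1 / (1 + exp(-theta^T x_i)))."""
    eta = 1.0 / (1.0 + np.exp(-(X_tilde @ theta)))
    return X_tilde.T @ (y - eta)

def numerical_grad(f, theta, eps=1e-6):
    """Central finite-difference approximation of the gradient of f."""
    g = np.zeros_like(theta)
    for k in range(theta.size):
        step = np.zeros_like(theta)
        step[k] = eps
        g[k] = (f(theta + step) - f(theta - step)) / (2 * eps)
    return g

# Synthetic data, for the check only.
rng = np.random.default_rng(1)
X_tilde = np.hstack([np.ones((40, 1)), rng.normal(size=(40, 2))])
y = rng.integers(0, 2, size=40).astype(float)
theta = rng.normal(size=3)

g_exact = grad_log_likelihood(theta, X_tilde, y)
g_numeric = numerical_grad(lambda t: log_likelihood(t, X_tilde, y), theta)
```

The gradient has $d + 1$ components (here 3), one per equation in the system.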


-

WRAPPING UP PLUGIN METHODS

Naive Bayes, LDA, and logistic regression are all plugin methods that result in linear classifiers

Naive Bayes: plugin method based on density estimation; scales well to high dimensions and naturally handles a mixture of discrete and continuous features

Linear discriminant analysis: better if Gaussianity assumptions are valid

Logistic regression: models only the distribution $P_{y|x}$, not $P_{y,x}$; valid for a larger class of distributions; fewer parameters to estimate

Plugin methods can be useful in practice, but ultimately they are very limited

There are always distributions where our assumptions are violated

If our assumptions are wrong, the output is totally unpredictable

Can be hard to verify whether our assumptions are right

Require solving a more difficult problem as an intermediate step


-

GRADIENT DESCENT

Consider the canonical problem $\min_{x \in \mathbb{R}^d} f(x)$ with $f : \mathbb{R}^d \to \mathbb{R}$

Find the minimum iteratively by “rolling downhill”

Start from point $x^{(0)}$

$x^{(1)} = x^{(0)} - \eta \nabla f(x)|_{x = x^{(0)}}$; $\eta$ is the step size

$x^{(2)} = x^{(1)} - \eta \nabla f(x)|_{x = x^{(1)}}$

$\cdots$

Choice of step size $\eta$ really matters: too small and convergence takes forever, too big and the iterates might never converge

Many variants of gradient descent

Momentum: $v_t = \gamma v_{t-1} + \eta \nabla f(x)|_{x = x^{(t)}}$ and $x^{(t+1)} = x^{(t)} - v_t$

Accelerated: $v_t = \gamma v_{t-1} + \eta \nabla f(x)|_{x = x^{(t)} - \gamma v_{t-1}}$ and $x^{(t+1)} = x^{(t)} - v_t$

In practice, gradient has to be evaluated from data
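The three update rules can be sketched on a small quadratic whose minimizer is known in closed form. The objective $f(x) = \frac{1}{2} x^\intercal A x - c^\intercal x$, the matrix `A`, the vector `c`, and the step sizes below are all assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical quadratic objective f(x) = 0.5 x^T A x - c^T x.
A = np.array([[3.0, 0.5], [0.5, 1.0]])   # symmetric positive definite
c = np.array([1.0, -2.0])

def grad_f(x):
    """Gradient of the quadratic objective."""
    return A @ x - c

def gradient_descent(x0, eta, n_steps):
    """Plain gradient descent with a fixed step size eta."""
    x = x0.copy()
    for _ in range(n_steps):
        x = x - eta * grad_f(x)
    return x

def momentum_descent(x0, eta, gamma, n_steps, accelerated=False):
    """Momentum update; the accelerated variant evaluates the
    gradient at the look-ahead point x - gamma * v."""
    x = x0.copy()
    v = np.zeros_like(x)
    for _ in range(n_steps):
        point = x - gamma * v if accelerated else x
        v = gamma * v + eta * grad_f(point)
        x = x - v
    return x

x_star = np.linalg.solve(A, c)           # exact minimizer, for comparison
x0 = np.zeros(2)

x_gd = gradient_descent(x0, eta=0.1, n_steps=500)
x_mom = momentum_descent(x0, eta=0.1, gamma=0.9, n_steps=500)
x_nag = momentum_descent(x0, eta=0.1, gamma=0.9, n_steps=500, accelerated=True)

# A step size that is too large for this A makes the iterates blow up.
x_diverged = gradient_descent(x0, eta=1.0, n_steps=50)
```

For this quadratic, plain gradient descent is stable only when the step size is below 2 divided by the largest eigenvalue of `A`, which `eta=1.0` violates.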


-

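NEWTON’S METHOD

Newton-Raphson method uses the second derivative to automatically adapt step size

$$x^{(j+1)} = x^{(j)} - \left[ \nabla^2 f(x) \right]^{-1} \nabla f(x) \Big|_{x = x^{(j)}}$$

Hessian matrix

$$\nabla^2 f(x) = \begin{bmatrix}
\frac{\partial^2 f}{\partial x_1^2} & \frac{\partial^2 f}{\partial x_1 \partial x_2} & \cdots & \frac{\partial^2 f}{\partial x_1 \partial x_d} \\
\frac{\partial^2 f}{\partial x_2 \partial x_1} & \frac{\partial^2 f}{\partial x_2^2} & \cdots & \frac{\partial^2 f}{\partial x_2 \partial x_d} \\
\vdots & \vdots & \ddots & \vdots \\
\frac{\partial^2 f}{\partial x_d \partial x_1} & \frac{\partial^2 f}{\partial x_d \partial x_2} & \cdots & \frac{\partial^2 f}{\partial x_d^2}
\end{bmatrix}$$

Newton’s method is much faster when the dimension $d$ is small but impractical when $d$ is large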
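Below is a minimal sketch of the Newton iteration applied to the negative log-likelihood of logistic regression. The gradient $\sum_i \tilde{x}_i(\eta_i - y_i)$ and Hessian $\sum_i \eta_i(1-\eta_i)\tilde{x}_i\tilde{x}_i^\intercal$ used here are the standard expressions for that objective; the tiny dataset is invented for illustration and deliberately not linearly separable, so that a finite maximizer exists.

```python
import numpy as np

def grad_and_hessian(theta, X_tilde, y):
    """Gradient and Hessian of the negative log-likelihood -l(theta)."""
    eta = 1.0 / (1.0 + np.exp(-(X_tilde @ theta)))
    grad = X_tilde.T @ (eta - y)
    # X^T diag(eta * (1 - eta)) X, built without forming the diagonal matrix.
    hess = X_tilde.T @ (X_tilde * (eta * (1.0 - eta))[:, None])
    return grad, hess

def newton(theta0, X_tilde, y, n_steps=20, tol=1e-10):
    """Newton iterations: theta <- theta - H^{-1} grad."""
    theta = theta0.copy()
    for _ in range(n_steps):
        grad, hess = grad_and_hessian(theta, X_tilde, y)
        if np.linalg.norm(grad) < tol:
            break
        # Solve H p = grad instead of inverting H explicitly.
        theta = theta - np.linalg.solve(hess, grad)
    return theta

# Hypothetical 1-D dataset with overlapping classes.
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0, 3.0])
y = np.array([0.0, 0.0, 1.0, 0.0, 1.0, 1.0])
X_tilde = np.column_stack([np.ones_like(x), x])   # rows [1, x_i]

theta_hat = newton(np.zeros(2), X_tilde, y)
final_grad, _ = grad_and_hessian(theta_hat, X_tilde, y)
```

Each step solves a linear system of size $d + 1$, which is the cost that becomes prohibitive when the dimension is large.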


-

STOCHASTIC GRADIENT DESCENT

Often have a loss function of the form $\ell(\theta) = \sum_{i=1}^N \ell_i(\theta)$ where $\ell_i(\theta) = f(x_i, y_i, \theta)$

The gradient is $\nabla_\theta \ell(\theta) = \sum_{i=1}^N \nabla \ell_i(\theta)$ and the gradient descent update is

$$\theta^{(j+1)} = \theta^{(j)} - \eta \sum_{i=1}^N \nabla \ell_i(\theta)$$

Problematic if the dataset is huge or if not all data is available

Use an iterative technique instead

$$\theta^{(j+1)} = \theta^{(j)} - \eta \nabla \ell_i(\theta)$$

Tons of variations on this principle: batch, minibatch, Adagrad, RMSprop, Adam, etc.
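A minimal sketch of the single-sample update, taking $\ell_i$ to be the negative log-likelihood of sample $i$ under the logistic model (an assumption; the slide leaves $f$ generic). The synthetic data, step size, and number of epochs are illustrative choices.

```python
import numpy as np

def sample_grad(theta, x_i, y_i):
    """Gradient of l_i(theta) = log(1 + exp(theta^T x_i)) - y_i theta^T x_i."""
    eta_i = 1.0 / (1.0 + np.exp(-(x_i @ theta)))
    return (eta_i - y_i) * x_i

def loss(theta, X_tilde, y):
    """Full loss l(theta) = sum_i l_i(theta)."""
    z = X_tilde @ theta
    return np.sum(np.logaddexp(0.0, z) - y * z)

def sgd(theta0, X_tilde, y, eta, n_epochs, rng):
    """One update per sample, visiting samples in a fresh random order each epoch."""
    theta = theta0.copy()
    for _ in range(n_epochs):
        for i in rng.permutation(len(y)):
            theta = theta - eta * sample_grad(theta, X_tilde[i], y[i])
    return theta

# Synthetic data drawn from a logistic model with hypothetical parameters.
rng = np.random.default_rng(0)
N = 200
X_tilde = np.hstack([np.ones((N, 1)), rng.normal(size=(N, 2))])
theta_true = np.array([-0.5, 2.0, -1.0])
p = 1.0 / (1.0 + np.exp(-(X_tilde @ theta_true)))
y = (rng.random(N) < p).astype(float)

theta0 = np.zeros(3)
theta_sgd = sgd(theta0, X_tilde, y, eta=0.05, n_epochs=20, rng=rng)
loss_before = loss(theta0, X_tilde, y)
loss_after = loss(theta_sgd, X_tilde, y)
```

With a constant step size the iterates keep fluctuating around the minimizer rather than settling on it, which is one motivation for the variants listed above.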
