Continuous Distributions The Uniform distribution from a to b.

Uploaded by derek-hines

[Figure: density f(x) of the Uniform distribution from a to b, plotted against x, with constant height 1/(b - a) between a and b]

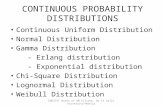

Continuous Distributions

The Uniform distribution from a to b

$$f(x) = \begin{cases} \dfrac{1}{b-a} & a \le x \le b \\ 0 & \text{otherwise} \end{cases}$$

The Normal distribution (mean $\mu$, standard deviation $\sigma$)

$$f(x) = \frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}$$

[Figure: Normal density f(x) plotted against x]

The Exponential distribution

$$f(x) = \begin{cases} \lambda e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

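Weibull distribution with parameters $\alpha$ and $\beta$.

Thus

$$F(x) = 1 - e^{-\alpha x^{\beta}}$$

and

$$f(x) = F'(x) = \alpha\beta\, x^{\beta-1} e^{-\alpha x^{\beta}}, \quad x \ge 0$$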

[Figure: the Weibull density, f(x), for ($\alpha$ = 0.5, $\beta$ = 2), ($\alpha$ = 0.7, $\beta$ = 2) and ($\alpha$ = 0.9, $\beta$ = 2)]

The Gamma distribution

Let the continuous random variable X have density function:

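$$f(x) = \begin{cases} \dfrac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

Then X is said to have a Gamma distribution with parameters $\alpha$ and $\lambda$.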

Expectation

Let X denote a discrete random variable with probability function p(x). Then the expected value of X, E(X), is defined to be:

$$E(X) = \sum_x x\,p(x) = \sum_i x_i\,p(x_i)$$

and if X is continuous with probability density function f(x):

$$E(X) = \int_{-\infty}^{\infty} x f(x)\,dx$$


Interpretation of E(X)

1. The expected value of X, E(X), is the centre of gravity of the probability distribution of X.

2. The expected value of X, E(X), is the long-run average value of X. (This is shown later using the Law of Large Numbers.)

[Figure: a probability distribution with E(X) marked at its centre of gravity]

Example:

The Uniform distribution

Suppose X has a uniform distribution from a to b.

Then:

$$f(x) = \begin{cases} \dfrac{1}{b-a} & a \le x \le b \\ 0 & x < a,\ x > b \end{cases}$$

The expected value of X is:

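$$E(X) = \int_{-\infty}^{\infty} x f(x)\,dx = \int_a^b x\,\frac{1}{b-a}\,dx = \frac{1}{b-a}\left[\frac{x^2}{2}\right]_a^b = \frac{b^2 - a^2}{2(b-a)} = \frac{a+b}{2}$$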
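The result E(X) = (a + b)/2 can be checked numerically. The sketch below approximates the integral of x f(x) with a midpoint rule; the helper names `uniform_pdf` and `expected_value` and the endpoints a = 2, b = 10 are illustrative assumptions, not part of the slides.

```python
def uniform_pdf(x, a, b):
    """Density of the Uniform distribution from a to b."""
    return 1.0 / (b - a) if a <= x <= b else 0.0


def expected_value(pdf, lo, hi, steps=100_000):
    """Approximate the integral of x * pdf(x) over [lo, hi] with the midpoint rule."""
    width = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * width  # midpoint of the i-th sub-interval
        total += x * pdf(x) * width
    return total


a, b = 2.0, 10.0  # illustrative endpoints (assumption)
approx = expected_value(lambda x: uniform_pdf(x, a, b), a, b)
exact = (a + b) / 2
print(approx, exact)
```

The two printed values should agree up to floating-point rounding, since the integrand is linear on [a, b].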

Example:

The Normal distribution

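Suppose X has a Normal distribution with parameters $\mu$ and $\sigma$.

Then:

$$f(x) = \frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}$$

The expected value of X is:

$$E(X) = \int_{-\infty}^{\infty} x f(x)\,dx = \int_{-\infty}^{\infty} x\,\frac{1}{\sqrt{2\pi}\,\sigma}\, e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,dx$$

Make the substitution:

$$z = \frac{x-\mu}{\sigma}, \quad dz = \frac{1}{\sigma}\,dx \quad \text{and} \quad x = \mu + \sigma z$$

Hence

$$E(X) = \int_{-\infty}^{\infty} (\mu + \sigma z)\,\frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}\,dz = \mu \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}\,dz + \sigma \int_{-\infty}^{\infty} z\,\frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}\,dz$$

Now

$$\int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{z^2}{2}}\,dz = 1 \quad \text{and} \quad \int_{-\infty}^{\infty} z\, e^{-\frac{z^2}{2}}\,dz = 0$$

Thus $E(X) = \mu$.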

Example:

The Gamma distribution

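Suppose X has a Gamma distribution with parameters $\alpha$ and $\lambda$.

Then:

$$f(x) = \begin{cases} \dfrac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

Note:

$$\int_{-\infty}^{\infty} f(x)\,dx = \int_0^{\infty} \frac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x}\,dx = 1 \quad \text{if } \alpha > 0,\ \lambda > 0$$

This is a very useful formula when working with the Gamma distribution.

The expected value of X is:

$$E(X) = \int_{-\infty}^{\infty} x f(x)\,dx = \int_0^{\infty} x\,\frac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x}\,dx = \frac{\lambda^{\alpha}}{\Gamma(\alpha)} \int_0^{\infty} x^{\alpha} e^{-\lambda x}\,dx$$

$$= \frac{\lambda^{\alpha}}{\Gamma(\alpha)}\,\frac{\Gamma(\alpha+1)}{\lambda^{\alpha+1}} \int_0^{\infty} \frac{\lambda^{\alpha+1}}{\Gamma(\alpha+1)}\, x^{(\alpha+1)-1} e^{-\lambda x}\,dx$$

The last integral is now equal to 1, because its integrand is the Gamma density with parameters $\alpha + 1$ and $\lambda$. Therefore

$$E(X) = \frac{\Gamma(\alpha+1)}{\lambda\,\Gamma(\alpha)} = \frac{\alpha\,\Gamma(\alpha)}{\lambda\,\Gamma(\alpha)} = \frac{\alpha}{\lambda}$$

Thus if X has a Gamma($\alpha$, $\lambda$) distribution then the expected value of X is:

$$E(X) = \frac{\alpha}{\lambda}$$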
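Both facts used in this example (the Gamma density integrates to 1, and its mean is the ratio of the shape parameter to the rate parameter) can be checked numerically. The sketch below uses a midpoint rule with a finite cut-off; the helper names, the values alpha = 3 and lam = 2, and the cut-off at 40 are illustrative assumptions.

```python
import math


def gamma_pdf(x, alpha, lam):
    """Gamma density: lam^alpha / Gamma(alpha) * x^(alpha-1) * e^(-lam*x) for x >= 0."""
    if x < 0:
        return 0.0
    return lam**alpha / math.gamma(alpha) * x ** (alpha - 1) * math.exp(-lam * x)


def integrate(func, lo, hi, steps=200_000):
    """Midpoint-rule approximation of the integral of func over [lo, hi]."""
    width = (hi - lo) / steps
    return sum(func(lo + (i + 0.5) * width) for i in range(steps)) * width


alpha, lam = 3.0, 2.0  # illustrative parameter values (assumption)
upper = 40.0           # assumed cut-off; the tail beyond it is negligible here

total_mass = integrate(lambda x: gamma_pdf(x, alpha, lam), 0.0, upper)
mean = integrate(lambda x: x * gamma_pdf(x, alpha, lam), 0.0, upper)
print(total_mass, mean, alpha / lam)
```

The first value should be close to 1 and the second close to alpha / lam.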

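Special cases of the Gamma($\alpha$, $\lambda$) distribution:

1. Exponential($\lambda$) distribution: $\alpha = 1$, $\lambda$ arbitrary

$$E(X) = \frac{1}{\lambda}$$

2. Chi-square($\nu$) distribution: $\alpha = \nu/2$, $\lambda = 1/2$

$$E(X) = \frac{\nu/2}{1/2} = \nu$$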

[Figure: the Gamma distribution, density with $E(X) = \alpha/\lambda$ marked]

[Figure: the Exponential distribution, density with $E(X) = 1/\lambda$ marked]

[Figure: the Chi-square ($\chi^2$) distribution, density with $E(X) = \nu$ marked]

Expectation of functions of Random Variables

Definition

Let X denote a discrete random variable with probability function p(x). Then the expected value of g(X), E[g(X)], is defined to be:

$$E[g(X)] = \sum_x g(x)\,p(x) = \sum_i g(x_i)\,p(x_i)$$

and if X is continuous with probability density function f(x):

$$E[g(X)] = \int_{-\infty}^{\infty} g(x) f(x)\,dx$$

Example:

The Uniform distribution

Suppose X has a uniform distribution from 0 to b.

Then:

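$$f(x) = \begin{cases} \dfrac{1}{b} & 0 \le x \le b \\ 0 & x < 0,\ x > b \end{cases}$$

Find the expected value of $A = X^2$. If X is the length of a side of a square (chosen at random from 0 to b), then A is the area of the square.

$$E(X^2) = \int_{-\infty}^{\infty} x^2 f(x)\,dx = \int_0^b x^2\,\frac{1}{b}\,dx = \frac{1}{b}\left[\frac{x^3}{3}\right]_0^b = \frac{b^3 - 0^3}{3b} = \frac{b^2}{3}$$

= 1/3 of the maximum area of the square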
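Because E(X) is also the long-run average, the value b²/3 can be illustrated by simulation. The sketch below draws side lengths uniformly from 0 to b and averages the resulting areas; the seed, the sample size and b = 3 are illustrative assumptions.

```python
import random

random.seed(0)       # fixed seed so the run is repeatable
b = 3.0              # illustrative upper endpoint (assumption)
n_samples = 200_000  # illustrative sample size (assumption)

# Area of a square whose side is chosen uniformly at random from 0 to b.
areas = [random.uniform(0.0, b) ** 2 for _ in range(n_samples)]
average_area = sum(areas) / n_samples

exact = b**2 / 3     # one third of the maximum area b^2
print(average_area, exact)
```

The simulated average is only an estimate, so it should be close to, but not exactly equal to, b²/3.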

Example:

The Geometric distribution

Suppose X (discrete) has a geometric distribution with parameter p.

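Then:

$$p(x) = p(1-p)^{x-1} \quad \text{for } x = 1, 2, 3, \ldots$$

Find the expected value of X and the expected value of $X^2$.

$$E(X) = \sum_{x=1}^{\infty} x\,p(x) = 1p + 2p(1-p) + 3p(1-p)^2 + 4p(1-p)^3 + \cdots$$

$$E(X^2) = \sum_{x=1}^{\infty} x^2 p(x) = 1^2 p + 2^2 p(1-p) + 3^2 p(1-p)^2 + 4^2 p(1-p)^3 + \cdots$$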

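Recall: The sum of a geometric series (for $|r| < 1$)

$$a + ar + ar^2 + ar^3 + \cdots = \frac{a}{1-r}$$

or with a = 1,

$$1 + r + r^2 + r^3 + \cdots = \frac{1}{1-r}$$

Differentiating both sides with respect to r we get:

$$1 + 2r + 3r^2 + 4r^3 + \cdots = (-1)(1-r)^{-2}(-1) = \frac{1}{(1-r)^2}$$

Thus

$$1 + 2r + 3r^2 + 4r^3 + \cdots = \frac{1}{(1-r)^2}$$

This formula could also be developed by noting:

$$1 + 2r + 3r^2 + 4r^3 + \cdots = (1 + r + r^2 + r^3 + \cdots) + (r + r^2 + r^3 + \cdots) + (r^2 + r^3 + \cdots) + (r^3 + \cdots) + \cdots$$

$$= \frac{1}{1-r} + \frac{r}{1-r} + \frac{r^2}{1-r} + \frac{r^3}{1-r} + \cdots = \frac{1}{1-r}\left(1 + r + r^2 + r^3 + \cdots\right) = \frac{1}{1-r}\cdot\frac{1}{1-r} = \frac{1}{(1-r)^2}$$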

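This formula can be used to calculate:

$$E(X) = \sum_{x=1}^{\infty} x\,p(x) = 1p + 2p(1-p) + 3p(1-p)^2 + 4p(1-p)^3 + \cdots$$

$$= p\left[1 + 2r + 3r^2 + 4r^3 + \cdots\right] \quad \text{where } r = 1 - p$$

$$= p\,\frac{1}{(1-r)^2} = p\,\frac{1}{p^2} = \frac{1}{p}$$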

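To compute the expected value of $X^2$:

$$E(X^2) = \sum_{x=1}^{\infty} x^2 p(x) = 1^2 p + 2^2 p(1-p) + 3^2 p(1-p)^2 + 4^2 p(1-p)^3 + \cdots = p\left[1^2 + 2^2 r + 3^2 r^2 + 4^2 r^3 + \cdots\right]$$

we need to find a formula for

$$1^2 + 2^2 r + 3^2 r^2 + 4^2 r^3 + \cdots = \sum_{x=1}^{\infty} x^2 r^{x-1}$$

Note

$$S(r) = \sum_{x=0}^{\infty} r^x = 1 + r + r^2 + r^3 + \cdots = \frac{1}{1-r}$$

Differentiating with respect to r we get

$$S'(r) = 1 + 2r + 3r^2 + 4r^3 + \cdots = \sum_{x=0}^{\infty} x\,r^{x-1} = \sum_{x=1}^{\infty} x\,r^{x-1} = \frac{1}{(1-r)^2}$$

Differentiating again with respect to r we get

$$S''(r) = 2(1) + 3(2)r + 4(3)r^2 + 5(4)r^3 + \cdots = \sum_{x=2}^{\infty} x(x-1)\,r^{x-2} = (-2)(1-r)^{-3}(-1) = \frac{2}{(1-r)^3}$$

so that

$$\sum_{x=2}^{\infty} x(x-1)\,r^{x-2} = \frac{2}{(1-r)^3}$$

Thus

$$\sum_{x=2}^{\infty} x^2 r^{x-2} - \sum_{x=2}^{\infty} x\,r^{x-2} = \frac{2}{(1-r)^3}$$

implies

$$\sum_{x=2}^{\infty} x^2 r^{x-2} = \frac{2}{(1-r)^3} + \sum_{x=2}^{\infty} x\,r^{x-2}$$

that is,

$$2^2 + 3^2 r + 4^2 r^2 + 5^2 r^3 + \cdots = \frac{2}{(1-r)^3} + 2 + 3r + 4r^2 + \cdots$$

Thus, multiplying both sides by r and adding 1,

$$1^2 + 2^2 r + 3^2 r^2 + 4^2 r^3 + \cdots = \frac{2r}{(1-r)^3} + 1 + 2r + 3r^2 + 4r^3 + \cdots$$

$$= \frac{2r}{(1-r)^3} + \frac{1}{(1-r)^2} = \frac{2r + 1 - r}{(1-r)^3} = \frac{1+r}{(1-r)^3}$$

$$= \frac{2-p}{p^3} \quad \text{if } r = 1 - p$$

Thus

$$E(X^2) = p\left[1^2 + 2^2 r + 3^2 r^2 + 4^2 r^3 + \cdots\right] = \frac{2-p}{p^2}$$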
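The two closed forms for the geometric distribution, E(X) = 1/p and E(X²) = (2 - p)/p², can be checked by summing the series directly. In the sketch below the function name `geometric_moments`, the truncation at 2000 terms and the value p = 0.3 are illustrative assumptions.

```python
def geometric_moments(p, terms=2000):
    """Truncated sums for E(X) and E(X^2) when p(x) = p (1 - p)^(x - 1), x = 1, 2, 3, ..."""
    mean = 0.0
    second_moment = 0.0
    for x in range(1, terms + 1):
        prob = p * (1 - p) ** (x - 1)
        mean += x * prob
        second_moment += x * x * prob
    return mean, second_moment


p = 0.3  # illustrative parameter value (assumption)
mean, second_moment = geometric_moments(p)

print(mean, 1 / p)                      # series sum vs. closed form 1/p
print(second_moment, (2 - p) / p**2)    # series sum vs. closed form (2 - p)/p^2
```

For this p the terms beyond x = 2000 are far below floating-point precision, so the truncated sums match the closed forms to many decimal places.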

Moments of Random Variables

Definition

Let X be a random variable (discrete or continuous), then the kth moment of X is defined to be:

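$$\mu_k = E(X^k) = \begin{cases} \displaystyle\sum_x x^k p(x) & \text{if } X \text{ is discrete} \\[2ex] \displaystyle\int_{-\infty}^{\infty} x^k f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$

The first moment of X, $\mu = \mu_1 = E(X)$, is the centre of gravity of the distribution of X. The higher moments give different information regarding the distribution of X.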

Definition

Let X be a random variable (discrete or continuous), then the kth central moment of X is defined to be:

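$$\mu_k^0 = E\left[(X-\mu)^k\right] = \begin{cases} \displaystyle\sum_x (x-\mu)^k p(x) & \text{if } X \text{ is discrete} \\[2ex] \displaystyle\int_{-\infty}^{\infty} (x-\mu)^k f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$

where $\mu = \mu_1 = E(X)$ = the first moment of X.

The central moments describe how the probability distribution is distributed about the centre of gravity, $\mu$.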

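The first central moment is always zero: $\mu_1^0 = E(X - \mu) = 0$.

The second central moment, $\mu_2^0 = E\left[(X-\mu)^2\right]$, depends on the spread of the probability distribution of X about $\mu$. It is called the variance of X and is denoted by the symbol var(X).

$\sqrt{\mu_2^0} = \sqrt{E\left[(X-\mu)^2\right]}$ is called the standard deviation of X and is denoted by the symbol $\sigma$.

$$\text{var}(X) = \mu_2^0 = E\left[(X-\mu)^2\right] = \sigma^2$$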

The third central moment

$$\mu_3^0 = E\left[(X-\mu)^3\right]$$

contains information about the skewness of a distribution.

Measure of skewness

$$\gamma_1 = \frac{\mu_3^0}{\sigma^3} = \frac{\mu_3^0}{\left(\mu_2^0\right)^{3/2}}$$

[Figure: positively skewed distribution, $\mu_3^0 > 0$, $\gamma_1 > 0$]

[Figure: negatively skewed distribution, $\mu_3^0 < 0$, $\gamma_1 < 0$]

[Figure: symmetric distribution, $\mu_3^0 = 0$, $\gamma_1 = 0$]

The fourth central moment

$$\mu_4^0 = E\left[(X-\mu)^4\right]$$

also contains information about the shape of a distribution. The property of shape that is measured by the fourth central moment is called kurtosis.

The measure of kurtosis

$$\gamma_2 = \frac{\mu_4^0}{\sigma^4} - 3 = \frac{\mu_4^0}{\left(\mu_2^0\right)^2} - 3$$

[Figure: mesokurtic distribution, $\gamma_2 = 0$, $\mu_4^0$ moderate in size]

[Figure: platykurtic distribution, $\gamma_2 < 0$, $\mu_4^0$ small in size]

[Figure: leptokurtic distribution, $\gamma_2 > 0$, $\mu_4^0$ large in size]

Example: The uniform distribution from 0 to 1

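$$f(x) = \begin{cases} 1 & 0 \le x \le 1 \\ 0 & x < 0,\ x > 1 \end{cases}$$

Finding the moments

$$\mu_k = \int_{-\infty}^{\infty} x^k f(x)\,dx = \int_0^1 x^k \cdot 1\,dx = \left[\frac{x^{k+1}}{k+1}\right]_0^1 = \frac{1}{k+1}$$

Finding the central moments:

$$\mu_k^0 = \int_{-\infty}^{\infty} (x-\mu)^k f(x)\,dx = \int_0^1 \left(x - \tfrac{1}{2}\right)^k dx$$

making the substitution $w = x - \tfrac{1}{2}$:

$$\mu_k^0 = \int_{-1/2}^{1/2} w^k\,dw = \left[\frac{w^{k+1}}{k+1}\right]_{-1/2}^{1/2} = \frac{\left(\tfrac{1}{2}\right)^{k+1} - \left(-\tfrac{1}{2}\right)^{k+1}}{k+1} = \frac{1 - (-1)^{k+1}}{2^{k+1}(k+1)}$$

$$= \begin{cases} \dfrac{1}{2^k (k+1)} & \text{if } k \text{ is even} \\ 0 & \text{if } k \text{ is odd} \end{cases}$$

Thus

$$\mu_2^0 = \frac{1}{2^2 \cdot 3} = \frac{1}{12}, \quad \mu_3^0 = 0, \quad \mu_4^0 = \frac{1}{2^4 \cdot 5} = \frac{1}{80}$$

Hence

$$\text{var}(X) = \mu_2^0 = \frac{1}{12}$$

The standard deviation

$$\sigma = \sqrt{\text{var}(X)} = \sqrt{\mu_2^0} = \frac{1}{\sqrt{12}}$$

The measure of skewness

$$\gamma_1 = \frac{\mu_3^0}{\sigma^3} = 0$$

The measure of kurtosis

$$\gamma_2 = \frac{\mu_4^0}{\sigma^4} - 3 = \frac{1/80}{(1/12)^2} - 3 = 1.8 - 3 = -1.2$$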
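The central moments of the uniform distribution from 0 to 1 can be reproduced with exact rational arithmetic. The sketch below evaluates the antiderivative of w^k at the limits -1/2 and 1/2 using Python's `fractions` module; the function name `central_moment` is an illustrative assumption.

```python
from fractions import Fraction


def central_moment(k):
    """k-th central moment of the uniform(0, 1) distribution.

    Integral of w^k over [-1/2, 1/2] = ((1/2)^(k+1) - (-1/2)^(k+1)) / (k + 1).
    """
    half = Fraction(1, 2)
    return (half ** (k + 1) - (-half) ** (k + 1)) / (k + 1)


mu2 = central_moment(2)  # variance
mu3 = central_moment(3)  # zero, because the distribution is symmetric
mu4 = central_moment(4)

skewness = mu3 / float(mu2) ** 1.5  # gamma_1 = mu3 / sigma^3
kurtosis = mu4 / mu2**2 - 3         # gamma_2 = mu4 / sigma^4 - 3

print(mu2, mu3, mu4, skewness, kurtosis)
```

Using `Fraction` avoids rounding, so the variance and kurtosis come out as exact ratios rather than decimals.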

Rules for expectation

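Rules:

Throughout,

$$E[g(X)] = \begin{cases} \displaystyle\sum_x g(x)\,p(x) & \text{if } X \text{ is discrete} \\[2ex] \displaystyle\int_{-\infty}^{\infty} g(x) f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$

1. $E[c] = c$ where c is a constant

Proof

If $g(X) = c$ then

$$E[g(X)] = E[c] = \int_{-\infty}^{\infty} c f(x)\,dx = c \int_{-\infty}^{\infty} f(x)\,dx = c$$

The proof for discrete random variables is similar.

2. $E[aX + b] = aE[X] + b$ where a, b are constants

Proof

If $g(X) = aX + b$ then

$$E[aX + b] = \int_{-\infty}^{\infty} (ax + b) f(x)\,dx = a \int_{-\infty}^{\infty} x f(x)\,dx + b \int_{-\infty}^{\infty} f(x)\,dx = aE[X] + b$$

The proof for discrete random variables is similar.

3. $\text{var}(X) = \mu_2^0 = E\left[(X-\mu)^2\right] = E(X^2) - [E(X)]^2 = \mu_2 - \mu_1^2$

Proof

$$\text{var}(X) = E\left[(X-\mu)^2\right] = \int_{-\infty}^{\infty} (x-\mu)^2 f(x)\,dx = \int_{-\infty}^{\infty} \left(x^2 - 2\mu x + \mu^2\right) f(x)\,dx$$

$$= \int_{-\infty}^{\infty} x^2 f(x)\,dx - 2\mu \int_{-\infty}^{\infty} x f(x)\,dx + \mu^2 \int_{-\infty}^{\infty} f(x)\,dx$$

$$= E(X^2) - 2\mu^2 + \mu^2 = E(X^2) - [E(X)]^2 = \mu_2 - \mu_1^2$$

The proof for discrete random variables is similar.

4. $\text{var}(aX + b) = a^2\,\text{var}(X)$

Proof

Since $\mu_{aX+b} = E[aX + b] = aE[X] + b = a\mu + b$,

$$\text{var}(aX + b) = E\left[\left(aX + b - \mu_{aX+b}\right)^2\right] = E\left[\left(aX + b - (a\mu + b)\right)^2\right]$$

$$= E\left[a^2 (X-\mu)^2\right] = a^2 E\left[(X-\mu)^2\right] = a^2\,\text{var}(X)$$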
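Rules 2 to 4 can be checked exactly on a small discrete distribution. The sketch below uses a fair six-sided die as the probability function and the constants a = 3, b = 5; both are illustrative assumptions, as are the helper names `expect` and `variance`.

```python
from fractions import Fraction

# Probability function of a fair die (illustrative assumption).
pmf = {x: Fraction(1, 6) for x in range(1, 7)}


def expect(g):
    """E[g(X)] = sum over x of g(x) p(x)."""
    return sum(g(x) * p for x, p in pmf.items())


def variance(g):
    """var(g(X)) = E[(g(X) - E[g(X)])^2]."""
    m = expect(g)
    return expect(lambda x: (g(x) - m) ** 2)


a, b = 3, 5  # illustrative constants (assumption)
mean = expect(lambda x: x)
var = variance(lambda x: x)

rule2_holds = expect(lambda x: a * x + b) == a * mean + b    # E[aX + b] = aE[X] + b
rule3_holds = var == expect(lambda x: x * x) - mean**2       # var(X) = E(X^2) - [E(X)]^2
rule4_holds = variance(lambda x: a * x + b) == a * a * var   # var(aX + b) = a^2 var(X)

print(mean, var, rule2_holds, rule3_holds, rule4_holds)
```

Exact fractions make the equalities hold literally, with no floating-point tolerance needed.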

Moment generating functions

Definition

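Let X denote a random variable. Then the moment generating function of X, $m_X(t)$, is defined by:

$$m_X(t) = E\left[e^{tX}\right] = \begin{cases} \displaystyle\sum_x e^{tx} p(x) & \text{if } X \text{ is discrete} \\[2ex] \displaystyle\int_{-\infty}^{\infty} e^{tx} f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$

Recall

$$E[g(X)] = \begin{cases} \displaystyle\sum_x g(x)\,p(x) & \text{if } X \text{ is discrete} \\[2ex] \displaystyle\int_{-\infty}^{\infty} g(x) f(x)\,dx & \text{if } X \text{ is continuous} \end{cases}$$

so $m_X(t)$ is the expected value of $g(X) = e^{tX}$.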

Examples

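1. The Binomial distribution (parameters p, n)

$$p(x) = \binom{n}{x} p^x (1-p)^{n-x}, \quad x = 0, 1, 2, \ldots, n$$

The moment generating function of X, $m_X(t)$, is:

$$m_X(t) = E\left[e^{tX}\right] = \sum_x e^{tx} p(x) = \sum_{x=0}^{n} e^{tx} \binom{n}{x} p^x (1-p)^{n-x}$$

$$= \sum_{x=0}^{n} \binom{n}{x} \left(e^t p\right)^x (1-p)^{n-x} = \sum_{x=0}^{n} \binom{n}{x} a^x b^{n-x} = (a + b)^n = \left(e^t p + 1 - p\right)^n$$

where $a = e^t p$ and $b = 1 - p$.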

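2. The Poisson distribution (parameter $\lambda$)

$$p(x) = \frac{\lambda^x}{x!}\, e^{-\lambda}, \quad x = 0, 1, 2, \ldots$$

The moment generating function of X, $m_X(t)$, is:

$$m_X(t) = E\left[e^{tX}\right] = \sum_x e^{tx} p(x) = \sum_{x=0}^{\infty} e^{tx}\, \frac{\lambda^x}{x!}\, e^{-\lambda} = e^{-\lambda} \sum_{x=0}^{\infty} \frac{\left(\lambda e^t\right)^x}{x!}$$

$$= e^{-\lambda} e^{\lambda e^t} = e^{\lambda\left(e^t - 1\right)} \quad \text{using } e^u = \sum_{x=0}^{\infty} \frac{u^x}{x!}$$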

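3. The Exponential distribution (parameter $\lambda$)

$$f(x) = \begin{cases} \lambda e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

The moment generating function of X, $m_X(t)$, is:

$$m_X(t) = E\left[e^{tX}\right] = \int_{-\infty}^{\infty} e^{tx} f(x)\,dx = \int_0^{\infty} e^{tx} \lambda e^{-\lambda x}\,dx = \lambda \int_0^{\infty} e^{(t-\lambda)x}\,dx = \lambda \left[\frac{e^{(t-\lambda)x}}{t-\lambda}\right]_0^{\infty}$$

$$= \begin{cases} \dfrac{\lambda}{\lambda - t} & t < \lambda \\ \text{undefined} & t \ge \lambda \end{cases}$$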

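4. The Standard Normal distribution ($\mu$ = 0, $\sigma$ = 1)

$$f(x) = \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}}$$

The moment generating function of X, $m_X(t)$, is:

$$m_X(t) = E\left[e^{tX}\right] = \int_{-\infty}^{\infty} e^{tx} f(x)\,dx = \int_{-\infty}^{\infty} e^{tx}\, \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2}{2}}\,dx = \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2 - 2tx}{2}}\,dx$$

We will now use the fact that

$$\int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}\,a}\, e^{-\frac{(x-b)^2}{2a^2}}\,dx = 1 \quad \text{for all } a > 0,\ b$$

Completing the square in the exponent:

$$m_X(t) = \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{x^2 - 2tx + t^2}{2}}\, e^{\frac{t^2}{2}}\,dx = e^{\frac{t^2}{2}} \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{(x-t)^2}{2}}\,dx = e^{\frac{t^2}{2}}$$

since the last integral is 1 (take a = 1 and b = t).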

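5. The Gamma distribution (parameters $\alpha$, $\lambda$)

$$f(x) = \begin{cases} \dfrac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x} & x \ge 0 \\ 0 & x < 0 \end{cases}$$

The moment generating function of X, $m_X(t)$, is:

$$m_X(t) = E\left[e^{tX}\right] = \int_{-\infty}^{\infty} e^{tx} f(x)\,dx = \int_0^{\infty} e^{tx}\, \frac{\lambda^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-\lambda x}\,dx = \frac{\lambda^{\alpha}}{\Gamma(\alpha)} \int_0^{\infty} x^{\alpha-1} e^{-(\lambda - t)x}\,dx$$

We use the fact

$$\int_0^{\infty} \frac{b^a}{\Gamma(a)}\, x^{a-1} e^{-bx}\,dx = 1 \quad \text{for all } a > 0,\ b > 0$$

Hence, for $t < \lambda$,

$$m_X(t) = \frac{\lambda^{\alpha}}{(\lambda - t)^{\alpha}} \int_0^{\infty} \frac{(\lambda - t)^{\alpha}}{\Gamma(\alpha)}\, x^{\alpha-1} e^{-(\lambda - t)x}\,dx = \left(\frac{\lambda}{\lambda - t}\right)^{\alpha}$$

because the last integral is equal to 1 (take $a = \alpha$ and $b = \lambda - t$).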
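The closed forms for the discrete cases can be compared with a direct evaluation of the defining sum. The sketch below does this for the Binomial and Poisson moment generating functions; the function names, the evaluation point t = 0.4, the parameter values and the truncation of the Poisson series at 200 terms are illustrative assumptions.

```python
import math


def binomial_mgf_sum(t, n, p):
    """Sum of e^(t x) p(x) over x = 0..n for the Binomial(n, p) probability function."""
    return sum(
        math.exp(t * x) * math.comb(n, x) * p**x * (1 - p) ** (n - x)
        for x in range(n + 1)
    )


def poisson_mgf_sum(t, lam, terms=200):
    """Truncated sum of e^(t x) p(x) for the Poisson(lam) probability function."""
    total = 0.0
    term = math.exp(-lam)  # x = 0 term: e^(-lam) (lam e^t)^0 / 0!
    for x in range(terms):
        total += term
        term *= lam * math.exp(t) / (x + 1)  # next term: multiply by lam e^t / (x + 1)
    return total


t, n, p, lam = 0.4, 10, 0.3, 2.5  # illustrative values (assumption)

binomial_closed = (math.exp(t) * p + 1 - p) ** n
poisson_closed = math.exp(lam * (math.exp(t) - 1))

print(binomial_mgf_sum(t, n, p), binomial_closed)
print(poisson_mgf_sum(t, lam), poisson_closed)
```

Each pair of printed values should agree up to floating-point rounding.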

Properties of Moment Generating Functions

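1. $m_X(0) = 1$

$$m_X(t) = E\left[e^{tX}\right], \text{ hence } m_X(0) = E\left[e^{0 \cdot X}\right] = E[1] = 1$$

Note: the moment generating functions of the following distributions satisfy the property $m_X(0) = 1$

i) Binomial Dist'n: $m_X(t) = \left(e^t p + 1 - p\right)^n$

ii) Poisson Dist'n: $m_X(t) = e^{\lambda\left(e^t - 1\right)}$

iii) Exponential Dist'n: $m_X(t) = \dfrac{\lambda}{\lambda - t}$

iv) Std Normal Dist'n: $m_X(t) = e^{\frac{t^2}{2}}$

v) Gamma Dist'n: $m_X(t) = \left(\dfrac{\lambda}{\lambda - t}\right)^{\alpha}$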

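2. $m_X(t) = 1 + \mu_1 t + \dfrac{\mu_2}{2!} t^2 + \dfrac{\mu_3}{3!} t^3 + \cdots + \dfrac{\mu_k}{k!} t^k + \cdots$

We use the expansion of the exponential function:

$$e^u = 1 + u + \frac{u^2}{2!} + \frac{u^3}{3!} + \cdots + \frac{u^k}{k!} + \cdots$$

$$m_X(t) = E\left[e^{tX}\right] = E\left[1 + tX + \frac{t^2}{2!} X^2 + \frac{t^3}{3!} X^3 + \cdots + \frac{t^k}{k!} X^k + \cdots\right]$$

$$= 1 + tE(X) + \frac{t^2}{2!} E(X^2) + \frac{t^3}{3!} E(X^3) + \cdots + \frac{t^k}{k!} E(X^k) + \cdots$$

$$= 1 + \mu_1 t + \frac{\mu_2}{2!} t^2 + \frac{\mu_3}{3!} t^3 + \cdots + \frac{\mu_k}{k!} t^k + \cdots$$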

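3. $m_X^{(k)}(0) = \left.\dfrac{d^k}{dt^k} m_X(t)\right|_{t=0} = \mu_k$

Now

$$m_X(t) = 1 + \mu_1 t + \frac{\mu_2}{2!} t^2 + \frac{\mu_3}{3!} t^3 + \cdots + \frac{\mu_k}{k!} t^k + \cdots$$

$$m_X'(t) = \mu_1 + \frac{\mu_2}{2!}\, 2t + \frac{\mu_3}{3!}\, 3t^2 + \cdots + \frac{\mu_k}{k!}\, k t^{k-1} + \cdots = \mu_1 + \mu_2 t + \frac{\mu_3}{2!} t^2 + \cdots + \frac{\mu_k}{(k-1)!} t^{k-1} + \cdots$$

and $m_X'(0) = \mu_1$.

$$m_X''(t) = \mu_2 + \mu_3 t + \frac{\mu_4}{2!} t^2 + \cdots + \frac{\mu_k}{(k-2)!} t^{k-2} + \cdots$$

and $m_X''(0) = \mu_2$. Continuing, we find $m_X^{(k)}(0) = \mu_k$.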

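Property 3 is very useful in determining the moments of a random variable X.

Examples

i) Binomial Dist'n: $m_X(t) = \left(e^t p + 1 - p\right)^n$

$$m_X'(t) = n\left(e^t p + 1 - p\right)^{n-1} p e^t$$

$$m_X'(0) = n\left(e^0 p + 1 - p\right)^{n-1} p e^0 = np = \mu_1 = \mu$$

$$m_X''(t) = np\left[(n-1)\left(e^t p + 1 - p\right)^{n-2} e^t p\, e^t + \left(e^t p + 1 - p\right)^{n-1} e^t\right]$$

$$= np\, e^t \left(e^t p + 1 - p\right)^{n-2} \left[(n-1) e^t p + e^t p + 1 - p\right]$$

$$= np\, e^t \left(e^t p + 1 - p\right)^{n-2} \left[n e^t p + 1 - p\right]$$

$$m_X''(0) = np\left[np + 1 - p\right] = np\left[np + q\right] = n^2 p^2 + npq = \mu_2 \quad \text{where } q = 1 - p$$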

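ii) Poisson Dist'n: $m_X(t) = e^{\lambda\left(e^t - 1\right)}$

$$m_X'(t) = e^{\lambda\left(e^t - 1\right)} \lambda e^t = \lambda e^{\lambda\left(e^t - 1\right) + t}$$

$$m_X''(t) = \lambda e^{\lambda\left(e^t - 1\right) + t}\left(\lambda e^t + 1\right) = \lambda^2 e^{\lambda\left(e^t - 1\right) + 2t} + \lambda e^{\lambda\left(e^t - 1\right) + t}$$

$$m_X'''(t) = \lambda^2 e^{\lambda\left(e^t - 1\right) + 2t}\left(\lambda e^t + 2\right) + \lambda e^{\lambda\left(e^t - 1\right) + t}\left(\lambda e^t + 1\right)$$

$$= \lambda^3 e^{\lambda\left(e^t - 1\right) + 3t} + 3\lambda^2 e^{\lambda\left(e^t - 1\right) + 2t} + \lambda e^{\lambda\left(e^t - 1\right) + t}$$

To find the moments we set t = 0.

$$\mu_1 = m_X'(0) = \lambda e^{\lambda\left(e^0 - 1\right) + 0} = \lambda$$

$$\mu_2 = m_X''(0) = \lambda^2 e^{\lambda\left(e^0 - 1\right) + 0} + \lambda e^{\lambda\left(e^0 - 1\right) + 0} = \lambda^2 + \lambda$$

$$\mu_3 = m_X'''(0) = \lambda^3 e^0 + 3\lambda^2 e^0 + \lambda e^0 = \lambda^3 + 3\lambda^2 + \lambda$$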

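iii) Exponential Dist'n: $m_X(t) = \dfrac{\lambda}{\lambda - t}$

$$m_X'(t) = \frac{d}{dt}\left[\lambda(\lambda - t)^{-1}\right] = \lambda(-1)(\lambda - t)^{-2}(-1) = \lambda(\lambda - t)^{-2}$$

$$m_X''(t) = \lambda(-2)(\lambda - t)^{-3}(-1) = 2\lambda(\lambda - t)^{-3}$$

$$m_X'''(t) = 2\lambda(-3)(\lambda - t)^{-4}(-1) = 2(3)\lambda(\lambda - t)^{-4}$$

$$m_X^{(4)}(t) = 2(3)\lambda(-4)(\lambda - t)^{-5}(-1) = 4!\,\lambda(\lambda - t)^{-5}$$

$$m_X^{(k)}(t) = k!\,\lambda(\lambda - t)^{-(k+1)}$$

Thus

$$\mu_1 = m_X'(0) = \lambda \lambda^{-2} = \frac{1}{\lambda}$$

$$\mu_2 = m_X''(0) = 2\lambda \lambda^{-3} = \frac{2}{\lambda^2}$$

$$\mu_k = m_X^{(k)}(0) = k!\,\lambda \lambda^{-(k+1)} = \frac{k!}{\lambda^k}$$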
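Property 3 can also be illustrated numerically: approximating the first two derivatives of a moment generating function at t = 0 should recover the first two moments. The sketch below uses central finite differences, which is a numerical stand-in for the symbolic differentiation above; the step size h, the rate value 2 and the function names are illustrative assumptions.

```python
import math


def first_two_moments(mgf, h=1e-4):
    """Approximate m'(0) and m''(0) with central finite differences."""
    first = (mgf(h) - mgf(-h)) / (2 * h)
    second = (mgf(h) - 2 * mgf(0.0) + mgf(-h)) / h**2
    return first, second


lam = 2.0  # illustrative parameter value (assumption)


def exponential_mgf(t):
    """m_X(t) = lam / (lam - t), valid for t < lam."""
    return lam / (lam - t)


def poisson_mgf(t):
    """m_X(t) = exp(lam (e^t - 1))."""
    return math.exp(lam * (math.exp(t) - 1))


exp_m1, exp_m2 = first_two_moments(exponential_mgf)
poi_m1, poi_m2 = first_two_moments(poisson_mgf)

print(exp_m1, 1 / lam, exp_m2, 2 / lam**2)   # Exponential: 1/lam and 2/lam^2
print(poi_m1, lam, poi_m2, lam**2 + lam)     # Poisson: lam and lam^2 + lam
```

Finite differences carry a small truncation and rounding error, so the agreement is approximate rather than exact.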

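The moments for the exponential distribution can be calculated in an alternative way. This is done by expanding $m_X(t)$ in powers of t and equating the coefficients of $t^k$ to the coefficients in:

$$m_X(t) = 1 + \mu_1 t + \frac{\mu_2}{2!} t^2 + \frac{\mu_3}{3!} t^3 + \cdots + \frac{\mu_k}{k!} t^k + \cdots$$

With $u = t/\lambda$,

$$m_X(t) = \frac{\lambda}{\lambda - t} = \frac{1}{1 - \frac{t}{\lambda}} = \frac{1}{1 - u} = 1 + u + u^2 + u^3 + \cdots = 1 + \frac{t}{\lambda} + \frac{t^2}{\lambda^2} + \frac{t^3}{\lambda^3} + \cdots$$

Equating the coefficients of $t^k$ we get:

$$\frac{\mu_k}{k!} = \frac{1}{\lambda^k} \quad \text{or} \quad \mu_k = \frac{k!}{\lambda^k}$$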

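The moments for the standard normal distribution

$$m_X(t) = e^{\frac{t^2}{2}}$$

We use the expansion of $e^u$:

$$e^u = \sum_{k=0}^{\infty} \frac{u^k}{k!} = 1 + u + \frac{u^2}{2!} + \frac{u^3}{3!} + \cdots + \frac{u^k}{k!} + \cdots$$

$$m_X(t) = e^{\frac{t^2}{2}} = 1 + \frac{t^2}{2} + \frac{\left(\frac{t^2}{2}\right)^2}{2!} + \frac{\left(\frac{t^2}{2}\right)^3}{3!} + \cdots + \frac{\left(\frac{t^2}{2}\right)^k}{k!} + \cdots$$

$$= 1 + \frac{1}{2} t^2 + \frac{1}{2^2\, 2!} t^4 + \frac{1}{2^3\, 3!} t^6 + \cdots + \frac{1}{2^k\, k!} t^{2k} + \cdots$$

We now equate the coefficients of $t^k$ in:

$$m_X(t) = 1 + \mu_1 t + \frac{\mu_2}{2!} t^2 + \cdots + \frac{\mu_k}{k!} t^k + \cdots + \frac{\mu_{2k}}{(2k)!} t^{2k} + \cdots$$

If k is odd: $\mu_k = 0$.

For even 2k:

$$\frac{\mu_{2k}}{(2k)!} = \frac{1}{2^k\, k!} \quad \text{or} \quad \mu_{2k} = \frac{(2k)!}{2^k\, k!}$$

Thus

$$\mu_1 = 0, \quad \mu_2 = \frac{2!}{2} = 1, \quad \mu_3 = 0, \quad \mu_4 = \frac{4!}{2^2\, 2!} = 3$$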
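The formula for the even moments of the standard normal distribution can be compared with a direct numerical integration of the corresponding power of x against the density. In the sketch below the function names, the integration range from -12 to 12 and the number of steps are illustrative assumptions.

```python
import math


def normal_even_moment(k):
    """mu_{2k} = (2k)! / (2^k k!) for the standard normal distribution."""
    return math.factorial(2 * k) // (2**k * math.factorial(k))


def numeric_moment(order, lo=-12.0, hi=12.0, steps=200_000):
    """Midpoint-rule approximation of the integral of x^order times the standard normal density."""
    width = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * width
        total += x**order * math.exp(-x * x / 2) / math.sqrt(2 * math.pi)
    return total * width


for k in (1, 2, 3):
    print(2 * k, normal_even_moment(k), numeric_moment(2 * k))

print(3, numeric_moment(3))  # odd moments vanish by symmetry
```

The integer division in `normal_even_moment` is exact because the quotient is always a whole number (the product of the odd numbers up to 2k - 1).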