Brad-Smith_OFC2012_EA_Panel1_why100GinDC.pdf


-

7/22/2019 Brad-Smith_OFC2012_EA_Panel1_why100GinDC.pdf


LIGHTCOUNTING: Market Research on High-Speed Interconnects. Datacom, Telecom, CATV, FTTX, Consumer markets. Copyright 2012 LightCounting LLC. All material proprietary and confidential.

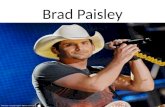

100G Data Center Interconnects

Brad Smith

VP & Chief Analyst

Data Center Interconnects

[email protected]

(408) 813-4345

3/12/2012 1

-


State of the Data Center Business

Waiting for New Servers

Waiting for 10GBASE-T

Preparing for the Exa-Flood of Data

-


State of the Industry

Data centers are very cost-sensitive; cost governs all decision making

Decision algorithm: (cost of new product) / (server cost)

Buy a 100G LR4 transceiver OR 8 servers?

Average and large data centers need 4-5G, not 10G yet

Getting ready for 10G and 40G QSFP

Most popular optical transceivers: 1G SFP and 10G SFP+

Top-of-rack and end-of-row switches are 10G SFP+ and 40G QSFP ready, but...

The entire industry is waiting for new Romley servers

and for low-power 28-nm 10GBASE-T parts
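The decision rule on this slide reduces to one ratio: the price of a new product expressed as a number of servers. A minimal sketch, assuming hypothetical relative prices (one server = 1.0 unit, an LR4 transceiver = 8.0 units, picked only to mirror the "LR4 or 8 servers?" question; these are not LightCounting data). The name `cost_in_servers` is illustrative.

```python
def cost_in_servers(product_cost: float, server_cost: float) -> float:
    """Express a purchase as an equivalent number of servers.

    Implements the slide's ratio: (cost of new product) / (server cost).
    """
    if server_cost <= 0:
        raise ValueError("server_cost must be positive")
    return product_cost / server_cost


# Hypothetical relative prices (server = 1.0 unit); NOT market data.
SERVER_COST = 1.0
LR4_COST = 8.0  # chosen only to echo the "LR4 or 8 servers?" framing

ratio = cost_in_servers(LR4_COST, SERVER_COST)
print(f"One 100G LR4 transceiver costs as much as {ratio:.0f} servers")
```

A ratio well above 1 is what makes a cost-driven buyer hesitate; the same function works for any candidate product once real prices are plugged in.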


-


Intel's Tick-Tock Model Sets the Clock for Data Center Upgrades

Tick = silicon (process) shrink; Tock = architecture upgrade


Delayed by ~8 months (Leap year effect?)

Data center spending delayed by 8 months

-


MPUs: Key Data Center Driver

8 cores, 16 threads (AMD: 16 cores)

Increased DRAM support: 128 GB to 768 GB+

PCI Express 3.0: faster I/O

Energy efficient

Bottom line: 80 percent more performance than the previous-generation Xeon 5600 MPUs


The Intel X540 10GBASE-T LAN-on-motherboard (LOM) comes in at only 25% of the per-port cost of an SFP+ fiber deployment.
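The LOM claim is a straight per-port ratio. A minimal sketch, assuming an SFP+ fiber port normalized to 1.0 cost unit and a hypothetical 48-port deployment; only the 25% figure comes from the slide, and the function names are illustrative.

```python
LOM_COST_FRACTION = 0.25  # from the slide: LOM is ~25% of SFP+ per-port cost


def lom_port_cost(sfp_plus_port_cost: float) -> float:
    """Per-port cost of a LOM deployment, given the SFP+ fiber per-port cost."""
    return LOM_COST_FRACTION * sfp_plus_port_cost


def fleet_savings(sfp_plus_port_cost: float, ports: int) -> float:
    """Total saving from choosing LOM over SFP+ fiber across `ports` ports."""
    return ports * (sfp_plus_port_cost - lom_port_cost(sfp_plus_port_cost))


# Hypothetical: SFP+ fiber normalized to 1.0 per port, 48 ports.
print(lom_port_cost(1.0))      # 0.25
print(fleet_savings(1.0, 48))  # 36.0
```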

-


Microprocessors


2-4 cores: 2 MPUs/server; 128 GB DRAM; PCIe Gen2 (8 lanes @ 5G/lane)

10-16 cores: 4 MPUs/server; 768 GB DRAM; PCIe Gen3 (8/16 lanes @ 8G/lane)

40-50 cores (ARM, Intel, AMD): ? MPUs/server; 1 TB+ DRAM; PCIe Gen3 (8G/lane); a data center in a server?

-


I/O for Interconnects

Virtualization software sends jobs to non-busy MPUs

Increases utilization from 20% to 90%

I/O increases: Data Direct I/O transfers data directly between the network interface and the processor cache, bypassing main memory

PCI Express Gen 2: 5G/lane, 8b/10b encoding, 8 lanes

PCI Express Gen 3: 8G/lane, 128b/130b encoding, 8-16 lanes

66 GB/s in tested I/O bandwidth

Bottom line: ~300% faster I/O bandwidth!
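The per-lane figures turn into usable bandwidth once line-code overhead is removed: 8b/10b keeps 8 of every 10 bits, 128b/130b keeps 128 of every 130. A minimal sketch, counting one direction only and ignoring packet and protocol overhead (an assumption, so real throughput is lower). Comparing a Gen3 x16 link with a Gen2 x8 link gives roughly 3.9x, which is one plausible reading of the ~300% figure, not necessarily how the slide derived it.

```python
def lane_gbps(gt_per_s: float, payload_bits: int, line_bits: int) -> float:
    """Usable rate of one PCIe lane in Gbit/s after line-code overhead."""
    return gt_per_s * payload_bits / line_bits


def link_gbytes_per_s(gt_per_s: float, payload_bits: int, line_bits: int, lanes: int) -> float:
    """Usable one-direction link bandwidth in GB/s (8 bits per byte)."""
    return lane_gbps(gt_per_s, payload_bits, line_bits) * lanes / 8


gen2_x8 = link_gbytes_per_s(5.0, 8, 10, 8)       # 4.00 GB/s
gen3_x8 = link_gbytes_per_s(8.0, 128, 130, 8)    # ~7.88 GB/s
gen3_x16 = link_gbytes_per_s(8.0, 128, 130, 16)  # ~15.75 GB/s

print(f"Gen2 x8:  {gen2_x8:.2f} GB/s")
print(f"Gen3 x8:  {gen3_x8:.2f} GB/s")
print(f"Gen3 x16: {gen3_x16:.2f} GB/s ({gen3_x16 / gen2_x8:.2f}x Gen2 x8)")
```

Most of the Gen3 gain per lane comes from the leaner encoding: the raw rate rises only 1.6x (5 to 8 GT/s), but usable bandwidth per lane nearly doubles.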


-


Traditional Data Center Reality

-


The Yellow Wall


-


Most High-Speed Interconnects

Approaching the 10G Speed Barrier


One of the biggest technical transitions in the communications industry, and a huge opportunity for photonic interconnects

-


Major Data Center Protocols

All hit the 10 Gbps barrier

Direct attach copper: 10G = 7 m; 14G = 3 m; 25G will need active DAC?

10GBASE-T needs a microprocessor-sized PHY to hit 10G

InfiniBand: 10G moving to 14G FDR & 28G EDR

Ethernet: 10G moving to 25G

Fibre Channel: 8G moving to 14G & 28G

SAS: 6G moving to 12G and 24G

PCI Express: Gen2 5G moving to Gen3 8G & Gen4 16G

All pass ~10 Gbps, where copper has issues

Reaches are becoming longer

Optical value proposition = high data rate + long reach

Many data center protocols are transitioning from copper to optical
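The roadmap bullets can be tabulated to show where each protocol first crosses the ~10 Gbps line. A minimal sketch: the lane rates and the 7 m / 3 m passive DAC reaches come from the slide, while treating 10 Gbps as a hard cutoff and the helper names `first_rate_past_limit` and `passive_dac_covers` are simplifying assumptions.

```python
COPPER_LIMIT_GBPS = 10.0  # slide's "~10 Gbps" barrier, treated here as a hard cutoff

# Protocol -> (current lane rate, next lane rates), in Gbps, as listed on the slide.
ROADMAP = {
    "InfiniBand": (10, [14, 28]),
    "Ethernet": (10, [25]),
    "Fibre Channel": (8, [14, 28]),
    "SAS": (6, [12, 24]),
    "PCI Express": (5, [8, 16]),
}

# Passive direct-attach copper reach quoted on the slide: lane rate (Gbps) -> meters.
DAC_REACH_M = {10: 7, 14: 3}


def first_rate_past_limit(next_rates, limit=COPPER_LIMIT_GBPS):
    """Return the first next-generation lane rate above the limit, or None."""
    for rate in next_rates:
        if rate > limit:
            return rate
    return None


def passive_dac_covers(lane_gbps, length_m):
    """True/False if the slide gives a passive DAC reach for this rate, else None."""
    reach = DAC_REACH_M.get(lane_gbps)
    if reach is None:
        return None  # e.g. 25G: the slide only asks whether active DAC is needed
    return length_m <= reach


for name, (current, next_rates) in ROADMAP.items():
    crossing = first_rate_past_limit(next_rates)
    print(f"{name}: {current}G now, first rate past ~10G: {crossing}G")
```

Every protocol listed has at least one next-generation rate past the cutoff, which is the slide's point; the DAC helper shows how quickly passive copper reach shrinks as lane rates climb.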


-


Mega Data Center

Link this rack

To this rack

400-600 meters
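A 400-600 m rack-to-rack link is beyond the nominal multimode reach of the 100G PHYs defined in IEEE 802.3ba, which pushes it onto single-mode optics. A minimal sketch, assuming nominal standard reaches and using "shortest adequate reach" as a stand-in for "cheapest" (an assumption; actual pricing varies). The table and function name are illustrative.

```python
# Nominal maximum reaches of 100G PHYs from IEEE 802.3ba, in meters.
PHY_REACH_M = [
    ("100GBASE-SR10 (OM3 MMF)", 100),
    ("100GBASE-SR10 (OM4 MMF)", 150),
    ("100GBASE-LR4 (SMF)", 10_000),
    ("100GBASE-ER4 (SMF)", 40_000),
]


def shortest_reach_phy(link_m):
    """Pick the lowest-reach 100G PHY whose nominal reach covers the link, or None."""
    for name, reach in sorted(PHY_REACH_M, key=lambda p: p[1]):
        if link_m <= reach:
            return name
    return None


for link in (400, 600):
    print(link, "m ->", shortest_reach_phy(link))
```

Both ends of the 400-600 m range land on LR4, which is why LR4 pricing matters so much for large data centers.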

-


Data Center Optics

Pluggable transceivers

Active Optical Cables

Embedded Optical Modules

FTTChip

Mez cards

Chip/Optical


Finisar

Reflex/Fulcrum

Avago

More high-speed optics within and between racks will push more traffic to 40G/100G uplinks

-


100G

Lots of discussion and excitement

Google and the 10x10 MSA woke everyone up to the coming exa-flood of data

What customers want: inexpensive QSFP solutions (or CFP/4), dual and parallel fibers

Waiting for 25G VCSELs for short reach

Waiting for LR4 prices to come down


-


Why 100G Needed in Data Centers

100G driving forces: 48-60 servers/rack

10 Gbps server uplinks

480G-600G problem!

Will demand 100G uplinks

Timing: Romley in Q2/2012; volume in 2H/2012

~2H/2013: need for 100G transceivers climbs rapidly
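The 480G-600G problem is per-rack arithmetic: servers per rack times 10 Gbps per server. A minimal sketch counting the uplinks needed at 10G, 40G and 100G; the `oversubscription` parameter is an assumption not on the slide (1.0 means non-blocking, matching the slide's figures), and the function names are illustrative.

```python
import math


def rack_uplink_gbps(servers_per_rack: int, server_link_gbps: int = 10) -> int:
    """Aggregate server bandwidth a rack must push upstream, in Gbps."""
    return servers_per_rack * server_link_gbps


def uplinks_needed(demand_gbps: float, uplink_gbps: float, oversubscription: float = 1.0) -> int:
    """Uplinks required for demand_gbps; oversubscription > 1 provisions less than full demand."""
    return math.ceil(demand_gbps / (uplink_gbps * oversubscription))


for servers in (48, 60):
    demand = rack_uplink_gbps(servers)
    counts = {u: uplinks_needed(demand, u) for u in (10, 40, 100)}
    print(servers, demand, counts)
# 48 480 {10: 48, 40: 12, 100: 5}
# 60 600 {10: 60, 40: 15, 100: 6}
```

With no oversubscription, a 48-server rack needs five 100G uplinks and a 60-server rack needs six, versus 12-15 ports at 40G.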


-


Our Website:

www.lightcounting.com

Headquarters Location:

858 West Park Street

Eugene, OR 97401

Tel: 408.962.4851

Email: [email protected]