Goal of Analysis of Variance
The Formal ANOVA Model
Explanation by Example
Multiple Comparisons
Assumptions

Analysis of Variance and Contrasts

Ken Kelley’s Class Notes

Lesson Breakdown by Topic

1 Goal of Analysis of Variance
    A Conceptual Example Appropriate for ANOVA
    Example F-Test for Independent Variances
    Conceptual Underpinnings of ANOVA
    Mean Squares

2 The Formal ANOVA Model
    A Worked Example

3 Explanation by Example
    Example: Weight Loss Drink
    ANOVA Using SPSS

4 Multiple Comparisons
    Why Multiplicity Matters
    Error Rates
    Linear Combinations of Means
    Controlling the Type I Error

5 Assumptions
    Assumptions of the ANOVA
    What You Learned
    Notations

What You Will Learn from this Lesson

You will learn:

How to compare more than two independent means to assess if there are any differences via an analysis of variance (ANOVA).

How the total sums of squares for the data can be decomposed into a part that is due to the mean differences between groups and a part that is due to within-group differences.

Why doing multiple t-tests is not the same thing as ANOVA.

Why doing multiple t-tests leads to a multiplicity issue, in that as the number of tests increases, so too does the probability of one or more errors.

How to correct for the multiplicity issue so that a set of contrasts/comparisons has a Type I error rate for the collection of tests at the desired (e.g., .05) level.

How to use SPSS and R to implement an ANOVA and follow-up tests.

Motivation

When looking at different allergy medicines, there are numerous options. So how can it be determined which brand will work best when they all claim to do so?

Data could be collected to determine the outcomes from each product among numerous individuals randomly assigned to different brands.

An ANOVA could be run to infer if there is a performance difference between these different brands.

If there are no significant results, evidence would not exist to suggest there are differences in performance among the brands.

If there are significant results, we would infer that the brands do not perform the same, but further tests would have to be conducted so as to infer where the differences are.

Goal of Analysis of Variance

The goal of ANOVA is to detect if mean differences exist among m groups.

Recall the independent groups t-test is designed to detect differences between two independent groups.

The t-test is a special case of ANOVA when m = 2 ($t^2_{df}$ equals the $F_{(1,df)}$ from ANOVA for two groups).
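The equivalence between the squared t-statistic and the ANOVA F-statistic for two groups can be checked numerically. The sketch below is only an illustration: the two small samples are hypothetical, and the helper names `pooled_t` and `anova_f` are made up for this example rather than taken from the notes.

```python
from statistics import mean, variance


def pooled_t(a, b):
    """Independent-groups t-statistic using the pooled variance."""
    n1, n2 = len(a), len(b)
    sp2 = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / (sp2 * (1 / n1 + 1 / n2)) ** 0.5


def anova_f(groups):
    """One-way ANOVA F-statistic: MS_Between / MS_Within."""
    scores = [y for g in groups for y in g]
    grand = mean(scores)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((len(g) - 1) * variance(g) for g in groups)
    m, n_total = len(groups), len(scores)
    return (ss_between / (m - 1)) / (ss_within / (n_total - m))


# Hypothetical scores for two groups (not from the lecture data).
group_1 = [6, 6, 2, 3, 4]
group_2 = [10, 10, 9, 4, 4]

t_stat = pooled_t(group_1, group_2)
f_stat = anova_f([group_1, group_2])
print(t_stat ** 2, f_stat)  # the two values agree up to floating-point error
```

With more than two groups there is no single t-statistic to square, which is where the F-test becomes necessary.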

Obtaining a statistically significant result for ANOVA conveys that not all groups have the same population mean.

However, a statistically significant ANOVA with more than two groups does not convey where those differences exist.

Follow-up tests (contrasts/comparisons) can be conducted to help discern specifically where group means differ.

Consumer Preference

Consider the overall perception of how consumers regard different companies.

An experiment was done in which 30 individuals were randomly assigned to one of three groups.

All participants saw (almost) the same commercial advertising a new Android smart phone.

The difference between the groups was that the commercial attributed the phone to either (a) Nokia, (b) Samsung, or (c) Motorola.

Of interest is whether consumers tend to rate the brands differently, even for the “same” cell phone.

What are other examples in which ANOVA would be useful?

Consider the null hypothesis of equal variances:

$$H_0: \sigma^2_1 = \sigma^2_2.$$

The F-statistic is used to evaluate the above null hypothesis, and is defined as the ratio of two independent variances:

$$F_{(df_1, df_2)} = \frac{s^2_1}{s^2_2},$$

where $df_1$ and $df_2$ are the degrees of freedom for $s^2_1$ and $s^2_2$, respectively.

Notice that F cannot be negative and is unbounded on the high side.

The F-distribution is positively skewed.

Examples

We have previously asked questions about the mean difference, but the F-distribution allows us to ask questions about variability.

Is the variability of user satisfaction of Gmail users different from the variability of user satisfaction of Outlook.com users?

Does Mars, with its M&M’s production, have “better control” (i.e., smaller variance) than Wrigley with its Skittles?

For a given item, are Wal-Mart prices across the country more stable than Kroger’s (for like items)?

Does a particular machine (or location/worker/shift) produce more variable products than a counterpart?

The standard deviation of Gmail user satisfaction was 6.35 based on a sample size of 55.

The standard deviation of Outlook.com user satisfaction was 8.90 based on a sample size of 42.

For an F-test of this sort addressing any differences in the variance (e.g., is there more variability in user satisfaction in one group), there are two critical values, one at the α/2 value and one at the 1 − α/2 value.

The critical values are ____ and ____ for the .025 and .975 quantiles (i.e., when α = .05).

The F-statistic for the test of the null hypothesis is

$$F = \frac{6.35^2}{8.90^2} = \frac{40.3225}{79.21} = .509.$$

The conclusion is: ____.
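The arithmetic behind the variance-ratio statistic can be reproduced in a few lines. This is a minimal sketch using only the summary values quoted above; the variable names are illustrative, and the critical values are deliberately not computed here because they require F-distribution quantiles from a table or a statistics package.

```python
# Summary values quoted above (standard deviation, sample size).
sd_gmail, n_gmail = 6.35, 55
sd_outlook, n_outlook = 8.90, 42

# The F-statistic is the ratio of the two sample variances.
f_stat = sd_gmail ** 2 / sd_outlook ** 2

# Each variance carries n - 1 degrees of freedom.
df1, df2 = n_gmail - 1, n_outlook - 1

print(round(f_stat, 3), df1, df2)
```

The degrees of freedom (numerator first, then denominator) are what one would use to look up the two critical values.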

Thus far, we have talked only about the idea of comparing two variances.

But what does this have to do with comparing means, which is the question we are interested in addressing?

Analysis of variance (ANOVA) considers two variances:

one variance calculates the variance of the group means;

another variance is the (weighted) mean of within-group variances (recall $s^2_p$ from the two-group t-test).

We thus consider the variability of the group means to assess if the population group means differ.

Conceptual Underpinnings of ANOVA

The null hypothesis in an ANOVA context is that all of the group means are the same: $\mu_1 = \mu_2 = \ldots = \mu_m = \mu$, where m is the total number of groups.

When the null hypothesis is true, we can estimate the variance of the scores with two methods, both of which are independent of one another.

If the ratio of variances (i.e., F-test) is so much larger than 1 that it seems unreasonable to have happened by chance alone, then the null hypothesis can be rejected.

Of course, “so much larger than 1 that it seems unreasonable” is defined in terms of the p-value (compared to α).

If the p-value is smaller than α, the null hypothesis of equal population means is rejected.

The variance of the scores can be calculated from within each group and then pooled across the groups (in exactly the same manner as was done for the independent groups t-test).

Mean Square Within

Recall that the best way to arrive at a pooled within-group variance is to calculate a weighted mean of the variances:

$$s^2_{Pooled} = \frac{\sum_{j=1}^{m} (n_j - 1) s^2_j}{\sum_{j=1}^{m} n_j - m} = \frac{\sum_{j=1}^{m} SS_j}{N - m} = s^2_{Within} = MS_{Within},$$

where SS is sum of squares, MS is mean square (i.e., a variance), m is the number of groups, $n_j$ is the sample size in the jth group (j = 1, ..., m), and N is the total sample size ($N = \sum_{j=1}^{m} n_j$).

In the special case where $n_1 = n_2 = \ldots = n_m = n$, the equation for the pooled variance reduces to

$$\frac{\sum_{j=1}^{m} s^2_j}{m} = s^2_{Within} = MS_{Within}.$$

Notice that the degrees of freedom here are N − m.

The degrees of freedom are N − m because there are N independent observations yet m sample means estimated.
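A short numerical check shows that the weighted formula and the equal-n shortcut coincide when all groups have the same size. The sketch below borrows the (rounded) group variances and sample sizes from the phone-commercial example in these notes; the variable names are illustrative only.

```python
# Rounded group variances and sample sizes from the phone-commercial example.
variances = [3.17, 9.11, 11.56]
sizes = [10, 10, 10]

m = len(sizes)          # number of groups
n_total = sum(sizes)    # total sample size N

# General (weighted) pooled variance: sum of (n_j - 1) * s_j^2 over N - m.
ms_within = sum((n - 1) * v for n, v in zip(sizes, variances)) / (n_total - m)

# Equal-n shortcut: the plain mean of the group variances.
simple_mean = sum(variances) / m

print(ms_within, simple_mean)
```

Because the variances are rounded to two decimals, the result differs slightly in the last digit from a value computed on the raw scores.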

Mean Square Between

Recall from the single group situation that the variance of the mean is equal to the variance of the scores divided by the sample size (i.e., $s^2_{\bar{Y}_j} = s^2_{Y_j} / n_j$).

That is, the variance of the sample means is the variance of the scores divided by the sample size.

However, under the null hypothesis, we can calculate the variance of the sample means directly by using the m means as if they were individual scores.

Then, an estimate of the variance of the scores could be obtained by multiplying the variance of the means by the sample size ($s^2_{Between} = n s^2_{\bar{Y}}$).

If the F-statistic is statistically significant, the conclusion is that the variance of the means is larger than it should have been if in fact the null hypothesis were true.

Notice that the degrees of freedom here are m − 1.
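The idea of treating the group means as if they were scores can be sketched directly. The example below assumes equal group sizes and uses the three group means from the phone-commercial example in these notes; the variable names are illustrative.

```python
from statistics import variance

# Group means and common group size from the phone-commercial example.
group_means = [4.50, 8.00, 7.00]
n = 10

# Sample variance of the m means (divides by m - 1, the between-groups df).
var_of_means = variance(group_means)

# Scale back up to the score metric: s^2_Between = n * s^2 of the means.
ms_between = n * var_of_means

print(var_of_means, ms_between)
```

This shortcut relies on equal group sizes; with unequal sizes the between-groups sum of squares has to weight each squared deviation by its own group size.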

There are thus two variances that estimate the same value under the null hypothesis.

One ($\sigma^2_{Within}$) is calculated by pooling within-group variances.

The other ($\sigma^2_{Between}$) is calculated by taking the variance of the means and multiplying by the within-group sample size.

If the null hypothesis is exactly true, $\frac{\sigma^2_{Between}}{\sigma^2_{Within}} = 1$.

If the null hypothesis is false and mean differences do exist, $s^2_{Between}$ will be larger than would be expected under the null hypothesis, so that we expect $\frac{s^2_{Between}}{s^2_{Within}} > 1$.

If $F = \frac{s^2_{Between}}{s^2_{Within}}$ (i.e., $F = \frac{MS_{Between}}{MS_{Within}}$) is statistically significant, we will reject the null hypothesis and conclude that $H_0: \mu_1 = \mu_2 = \ldots = \mu_m = \mu$ is false.

Thus, we are comparing means based on variances!

The ANOVA Model

The ANOVA assumes that the score for the ith individual in the jth group is a function of some overall mean, $\mu$, some effect for being in the jth group, $\tau_j$, and some uniqueness, $\varepsilon_{ij}$.

Such a scenario implies that

$$Y_{ij} = \mu + \tau_j + \varepsilon_{ij},$$

where

$$\tau_j = \mu_j - \mu,$$

with $\tau_j$ being the treatment effect of the jth group.

When the null hypothesis is true, the sum of the τs squared equals zero: $\sum_{j=1}^{m} \tau^2_j = 0$.

When the null hypothesis is false, the sum of the τs squared equals some number larger than zero: $\sum_{j=1}^{m} \tau^2_j > 0$.

Thus, we can formally write the null and alternative hypotheses for ANOVA as

$$H_0: \sum_{j=1}^{m} \tau^2_j = 0$$

and

$$H_a: \sum_{j=1}^{m} \tau^2_j > 0,$$

respectively.

Note that $H_0: \sum_{j=1}^{m} \tau^2_j = 0$ is equivalent to $H_0: \mu_1 = \mu_2 = \ldots = \mu_m = \mu$.

The null hypothesis can be evaluated by determining, probabilistically, if the sum of the estimated τs squared is greater than zero by more than what would be expected by chance alone.

How much is “more than what would be expected by chance” is evaluated by the specified α value.

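The sums of squares are defined as follows:

$$SS_{Between} = SS_{Treatment} = SS_{Among} = \sum_{j=1}^{m} n_j (\bar{Y}_j - \bar{Y}_{..})^2;$$

$$SS_{Within} = SS_{Error} = SS_{Residual} = \sum_{j=1}^{m} \sum_{i=1}^{n_j} (Y_{ij} - \bar{Y}_j)^2;$$

and

$$SS_{Total} = \sum_{j=1}^{m} \sum_{i=1}^{n_j} (Y_{ij} - \bar{Y}_{..})^2.$$

$$SS_{Total} = SS_{Between} + SS_{Within}$$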
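The decomposition of the total sum of squares can be verified numerically. The following sketch uses the phone-commercial ratings that appear in the raw-data worked example of these notes; the dictionary layout and variable names are choices made for this illustration.

```python
from statistics import mean

# Ratings from the phone-commercial worked example (10 per brand).
ratings = {
    "Nokia":    [6, 6, 2, 3, 4, 4, 6, 2, 5, 7],
    "Samsung":  [10, 10, 9, 4, 4, 10, 10, 10, 3, 10],
    "Motorola": [10, 6, 10, 5, 10, 5, 2, 10, 2, 10],
}

all_scores = [y for g in ratings.values() for y in g]
grand_mean = mean(all_scores)

# Between: squared deviation of each group mean from the grand mean, weighted by n_j.
ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in ratings.values())

# Within: squared deviation of each score from its own group mean.
ss_within = sum((y - mean(g)) ** 2 for g in ratings.values() for y in g)

# Total: squared deviation of each score from the grand mean.
ss_total = sum((y - grand_mean) ** 2 for y in all_scores)

print(ss_between, ss_within, ss_total)
```

The printed total equals the sum of the between and within parts, which is the identity stated above.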

As usual, we divide the sums of squares by the appropriate degrees of freedom in order to obtain a variance.

In the ANOVA context, a sum of squares divided by its degrees of freedom is called a mean square: $\frac{SS}{df} = MS$.

“Mean squares” are so named because when a sum of squares is divided by its degrees of freedom, the resultant value is the mean of the squared deviations (i.e., the mean square).

Mean square simply means variance.

In general, the ANOVA source table is defined as:

Source    SS                                                              df      MS                      F                             p-value
Between   $\sum_{j=1}^{m} n_j (\bar{Y}_j - \bar{Y}_{..})^2$               m − 1   $SS_{Between}/(m-1)$    $MS_{Between}/MS_{Within}$    p
Within    $\sum_{j=1}^{m} \sum_{i=1}^{n_j} (Y_{ij} - \bar{Y}_j)^2$        N − m   $SS_{Within}/(N-m)$
Total     $\sum_{j=1}^{m} \sum_{i=1}^{n_j} (Y_{ij} - \bar{Y}_{..})^2$     N − 1

The ANOVA source table is (very) similar to that used in the context of multiple regression, a widely applicable future topic.

It can also be shown that the expected values of the mean squares are given as

$$E[MS_{Between}] = \sigma^2_{Within} + \frac{\sum_{j=1}^{m} n_j \tau^2_j}{m - 1},$$

$$E[MS_{Within}] = \sigma^2_{Within}.$$

When all of the population means are equal, the second component of $E[MS_{Between}]$ is zero and the expectations of the two mean squares are the same.

When any population mean difference exists, $E[MS_{Between}] > E[MS_{Within}]$.

Worked Example – Raw Data

Ratings by brand. For each brand j, the three columns are the rating $y_{ij}$, the deviation $e_{ij} = y_{ij} - \bar{y}_j$, and the squared deviation $e^2_{ij} = (y_{ij} - \bar{y}_j)^2$.

          Nokia                     Samsung                   Motorola
          y_i1    e_i1    e_i1^2    y_i2    e_i2    e_i2^2    y_i3    e_i3    e_i3^2
          6       1.5     2.25      10      2       4         10      3       9
          6       1.5     2.25      10      2       4         6       -1      1
          2       -2.5    6.25      9       1       1         10      3       9
          3       -1.5    2.25      4       -4      16        5       -2      4
          4       -0.5    0.25      4       -4      16        10      3       9
          4       -0.5    0.25      10      2       4         5       -2      4
          6       1.5     2.25      10      2       4         2       -5      25
          2       -2.5    6.25      10      2       4         10      3       9
          5       0.5     0.25      3       -5      25        2       -5      25
          7       2.5     6.25      10      2       4         10      3       9
Σ         45.00   0.00    28.50     80.00   0.00    82.00     70.00   0.00    104.00
Mean      4.50    0.00              8.00    0.00              7.00    0.00
SD        1.78    1.78              3.02    3.02              3.40    3.40
Variance  3.17    3.17              9.11    9.11              11.56   11.56

Grand Mean ($\bar{y}_{..}$) = (4.50*10 + 8.00*10 + 7.00*10)/30 = 6.50

The grand mean is the (weighted) mean of the sample means (here it is simply equal to the mean of the means due to equal group sample sizes).

Sums of Squares

Between Sum of Squares = 10*(4.50 − 6.50)^2 + 10*(8.00 − 6.50)^2 + 10*(7.00 − 6.50)^2 = 65.00 = SSBetween

This is the weighted (because each score in a group has the same sample mean, of course) sum of squared deviations between the group means and the grand mean.

Within Sum of Squares = 9*3.17 + 9*9.11 + 9*11.56 = 28.5 + 82 + 104 = 214.50 = SSWithin

This is the sum of each of the within-group sums of squares.

Mean Squares

Now, to obtain the mean squares, divide the sums of squares by their appropriate degrees of freedom:

Mean Square Between = 65.00/(3 − 1) = 32.50 = MSBetween

Mean Square Within = 214.50/27 = 7.94 = MSWithin

Inference

Now, to obtain the F-statistic, divide the Mean Square Between by the Mean Square Within:

F = 32.50/7.94 = 4.091

To obtain the p-value, use the "F.Dist.RT" formula for finding the area in the right tail that exceeds the F value of 4.091:

p = 0.028061704

Now, because the p-value is less than α (.05 being typical), we reject the null hypothesis. We infer that the population group means are not all equal.

Thus, the same phone commercial, as attributed to different brands, had an effect on the ratings of the phone.

The conclusion is that there is an effect of brand on consumer sentiment: consumers rate the same thing differently depending on the brand attribution.

The data are available here: nd.edu/~kkelley/Teaching/Data/Phone_Commercial_Preference.sav.
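The step from sums of squares to the F-statistic and its p-value can also be sketched in code. The sums of squares and group counts below are the ones quoted in the worked example. The p-value line is a swapped-in technique rather than the spreadsheet function named in the notes: it uses a closed form for the right-tail area of the F-distribution that holds only when the numerator degrees of freedom equal 2 (i.e., three groups); other designs would need a statistics library.

```python
# Sums of squares and design sizes quoted in the worked example.
ss_between, ss_within = 65.00, 214.50
m, n_total = 3, 30

df_between = m - 1        # 2
df_within = n_total - m   # 27

ms_between = ss_between / df_between
ms_within = ss_within / df_within
f_stat = ms_between / ms_within

# Right-tail area of F(2, df_within). This closed form is valid ONLY because
# the numerator df is 2; it is not a general F-distribution routine.
p_value = (1 + 2 * f_stat / df_within) ** (-df_within / 2)

print(round(ms_between, 2), round(ms_within, 2), round(f_stat, 3), round(p_value, 4))
```

The values agree with the spreadsheet results reported in the worked example to the precision shown there.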

Worked Example – Summary Statistics

Summary Statistics from the Phone Evaluation

                                    Nokia   Samsung   Motorola
Mean ($\bar{y}_j$)                  4.50    8.00      7.00
Standard deviation ($s_j$)          1.78    3.02      3.40
Sample size                         10      10        10

Grand mean ($\bar{y}_{..}$) = (4.50*10 + 8.00*10 + 7.00*10)/(30) = 6.50

Rather than using the full data set, only the summary statistics are actually needed. The reason is that we can determine the sums of squares from the summary data. The within sum of squares is literally the sum of the degrees of freedom multiplied by the variance from each group.

Sums of Squares

Between Sum of Squares = 10*(4.50 − 6.50)^2 + 10*(8.00 − 6.50)^2 + 10*(7.00 − 6.50)^2 = 65.00 = SSBetween

This is the weighted (because each score in a group has the same sample mean, of course) sum of squared deviations between the group means and the grand mean.

Within Sum of Squares = 1.78^2*(10 − 1) + 3.02^2*(10 − 1) + 3.40^2*(10 − 1) = 214.50, which in terms of variances (instead of standard deviations) can be written as:

= 3.17*(10 − 1) + 9.11*(10 − 1) + 11.56*(10 − 1) = 214.50 = SSWithin

Recall that a sum of squares divided by its degrees of freedom is a variance. Correspondingly, a variance multiplied by its degrees of freedom is a sum of squares. Thus, we are able to find the sum of squares by multiplying the variances by their degrees of freedom.

Mean Squares

Now, to obtain the mean squares, divide the sums of squares by their appropriate degrees of freedom:

Mean Square Between = 65.00/(3 − 1) = 32.50 = MSBetween

Mean Square Within = 214.50/27 = 7.94 = MSWithin

Inference

Now, to obtain the F-statistic, divide the Mean Square Between by the Mean Square Within:

F = 32.50/7.94 = 4.091

To obtain the p-value, use the "F.Dist.RT" formula for finding the area in the right tail that exceeds the F value of 4.091:

p = 0.0281

Now, because the p-value is less than α (.05 being typical), we reject the null hypothesis. We infer that the population group means are not all equal. Thus, the same phone commercial, as attributed to different brands, had an effect on the ratings of the phone. The conclusion is that there is an effect of brand on consumer sentiment: consumers rate the same thing differently, depending on the brand attribution.

Product Effectiveness: Weight Loss Drinks

Over a two-month period in the early spring, 99 participants from the Midwest were randomly assigned to one of three groups (33 each) to assess the effectiveness of meal replacement weight loss drinks.

The study was conducted and analyzed by an independent firm.

The three groups were (a) a control group, (b) SF, and (c) TL.

All participants were encouraged to exercise and given running shoes, a workout outfit, and a pedometer.

The summary statistics for weight change in pounds (before breakfast) are given as:

             Control      SF      TL   Total
$\bar{Y}$      -1.61   -3.06   -7.29   -3.78
s               1.83    2.12    1.79    3.00
n                 26      29      22      77

As can be seen, 22 participants did not complete the study.

Implications?

The following table is the ANOVA source table:

Source SS df MS F p

Between 408.28 2 204.14 54.567 < .001

Within 276.84 74 3.74

Total 685.12 76
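The source table can be approximately rebuilt from the group summary statistics alone. The sketch below uses the rounded means, standard deviations, and sample sizes reported for the three groups; because those inputs are rounded to two decimals, the sums of squares differ slightly from the tabled values, which were presumably computed from the raw data. Variable names are illustrative.

```python
# Rounded summary statistics for Control, SF, TL (weight change in pounds).
means = [-1.61, -3.06, -7.29]
sds = [1.83, 2.12, 1.79]
sizes = [26, 29, 22]

m = len(sizes)
n_total = sum(sizes)

# Weighted grand mean of the group means.
grand_mean = sum(n * y for n, y in zip(sizes, means)) / n_total

# Between: n_j times the squared deviation of each group mean from the grand mean.
ss_between = sum(n * (y - grand_mean) ** 2 for n, y in zip(sizes, means))

# Within: each group variance multiplied back by its degrees of freedom.
ss_within = sum((n - 1) * s ** 2 for n, s in zip(sizes, sds))

ms_between = ss_between / (m - 1)
ms_within = ss_within / (n_total - m)
f_stat = ms_between / ms_within

print(round(ss_between, 2), round(ss_within, 2), round(f_stat, 2))
```

The small discrepancy from the tabled sums of squares is a rounding effect of the inputs, not a different method.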

The critical F-value at the .05 level for 2 and 74 degrees of freedom is $F_{(2,74;.95)} = 3.12$.

So, given the information, the decision is to ____.

The one-sentence interpretation of the results is: ____
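The critical value can be checked without a table in this particular case. The sketch below relies on a closed form that holds only when the numerator degrees of freedom equal 2 (three groups); the function name `f_crit_df1_2` is made up for this illustration, and for other numerator degrees of freedom a table or statistics package would be needed.

```python
def f_crit_df1_2(alpha, df2):
    """Upper-tail critical value of F(2, df2).

    Valid only for numerator df = 2, where the right-tail area is
    (1 + 2f/df2) ** (-df2/2); solving that for f at area alpha gives
    the expression below.
    """
    return (df2 / 2) * (alpha ** (-2 / df2) - 1)


crit = f_crit_df1_2(alpha=0.05, df2=74)
f_observed = 54.567  # F from the ANOVA source table

print(round(crit, 2), f_observed > crit)
```

Comparing the observed F with the critical value is equivalent to comparing the p-value with α.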

Performing an ANOVA in SPSS

ANOVA Output from SPSS

Suggestions when Performing ANOVA in SPSS

Start with Analyze → Descriptives → Explore.

Analyze → Compare Means → One-Way ANOVA for the ANOVA procedure.

In the One-Way ANOVA specification, request a Means Plot (via Options).

Consider using Analyze → General Linear Model → Univariate for a more general approach.

Omnibus Versus Targeted Tests

Procedures such as the t-test are targeted, and thus test specific hypotheses.

For example, the independent groups t-test evaluates the hypothesis that $\mu_1 = \mu_2$.

Thus, after an ANOVA is performed, oftentimes we want to know where the mean differences exist.

However, a rationale of ANOVA was not to perform many significance tests.

An Analogy

Consider an airline scheduling system at the gate of departure.

This system requires all five processes to function simultaneously:

1 live feed from the corporate server;

2 live feed to the corporate server;

3 live feed to the departing airport;

4 live feed to the arrival airport;

5 computer terminal to function properly (e.g., no software glitches, no power loss).

Suppose that the “uptime” or “reliability” of each of these independent systems is .95, meaning at any given time there is a 95% chance each process is working.

What is the probability that the system can be used when needed (i.e., that all five systems are working properly)?

Recalling the rule of independent events, the probability that the system can be used is .95 × .95 × .95 × .95 × .95 = .95^5 = .7738.

Thus, even though each piece of the system has a 95% chance of working properly, there is only a 77.38% chance that the system itself can be used.

The implication here is that the probability of an error occurring somewhere in the set of processes (1 − .7738 = .2262) is much higher than for any given process (1 − .95 = .05).

Note that the rate of errors in the system is (.2262/.05) 4.524 times higher than in a given process!

This is the multiplicity issue: the probability of an error somewhere among a set of “tests” is higher than for any given test.
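The arithmetic of the analogy generalizes to any number of independent processes (or tests). The sketch below reproduces the numbers for five processes at .95 reliability; the variable names are illustrative, and independence of the processes is assumed, as in the analogy.

```python
reliability = 0.95   # chance that any single process works
k = 5                # number of independent processes

# All k must work at once, so the probabilities multiply.
p_all_work = reliability ** k

# Probability that at least one process fails.
p_any_failure = 1 - p_all_work

# How many times larger the system-level error rate is than the per-process rate.
ratio = p_any_failure / (1 - reliability)

print(round(p_all_work, 4), round(p_any_failure, 4), round(ratio, 3))
```

Replacing "process fails" with "a test commits a Type I error" and .05 with α gives the familywise error rate for k independent tests, 1 − (1 − α)^k.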


Why Multiplicity Matters – Multiple Testing

The probability of making at least one Type I error across C independent comparisons (when all C null hypotheses are true) is given as

$$p(\text{At least one Type I error}) = 1 - p(\text{No Type I errors}) = 1 - (1 - \alpha)^C,$$

where C is the number of independent comparisons to be performed and α is the Type I error rate of each comparison (based on the multiplication rule for independent events).

If C = 5 and α = .05, then p(At least one Type I error) = .2262!

Note that this is the same as the probability that one or more of 5 confidence intervals, each computed at the 95% level, do not bracket the population quantity.

The scenario here is analogous to the airline scheduling system.
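To see how quickly the probability of at least one Type I error grows with the number of comparisons, the formula can be evaluated for several values of C. This is a minimal sketch; the function name `familywise_error` and the particular values of C are chosen only for illustration, and the formula holds only for independent comparisons.

```python
def familywise_error(alpha_pc, n_comparisons):
    """P(at least one Type I error) across independent tests, each run at alpha_pc."""
    return 1 - (1 - alpha_pc) ** n_comparisons


for c in (1, 3, 5, 10, 20):
    # Each test is run at the per comparison rate of .05.
    print(c, round(familywise_error(0.05, c), 4))
```

With C = 5 this reproduces the .2262 from the airline example.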


Types of Error Rates

There are three types of error rates that can be considered:

1 Per comparison error rate ($\alpha_{PC}$).

Analogous to the per process failure rate (5%) in the airline system example.

2 Familywise error rate ($\alpha_{FW}$).

Analogous to the system failure rate (22.62%) of the airline system example above.

3 Experimentwise error rate ($\alpha_{EW}$).

Analogous to the multiple systems being required to fly the airplane (e.g., not only the scheduling system, but also that the plane functions properly, the flight team arrives on time, etc.), which can be much higher than $\alpha_{FW}$ (if there are multiple families).


Per Comparison Error Rate

$\alpha_{PC}$: the probability that a particular test (i.e., a comparison) will reject a true null hypothesis.

This is the Type I error rate that we have used so far (as we only worked with a single test at a time).


Familywise Error Rate ($\alpha_{FW}$)

$\alpha_{FW}$: the probability that one or more tests will reject a true null hypothesis somewhere in the “family.”

Defining exactly what a family is can be difficult and open to interpretation.

As an aside, there are many statistical issues “open to interpretation.”

Reasonable people can disagree on how to handle various issues.

Openness about the methods, their assumptions, and their limitations is key.


Experimentwise Error Rate ($\alpha_{EW}$)

$\alpha_{EW}$: the probability that one or more tests will reject a true null hypothesis somewhere in the “experiment” (or study more generally).

Modifying the significance criterion so that $\alpha_{FW}$ is the probability of a Type I error across the set of C significance tests is the same as forming C simultaneous confidence intervals.

We do not focus on the experimentwise error rate, as we will assume a single family for our set of tests.


A Hypothesis, A Result

Tests, tests, tests, . . .

. . . and more tests. . .

. . . and yet more tests. . .

A Type I Error (It Seems)

After Many Tests, A “Finding”

[Panels of the comic “Significant” appear on the slides titled above. From XKCD: http://xkcd.com/882/]

Error Rate

The probability of at least one Type I error for 20 independent tests, which the jelly bean comparisons were, is

$$1 - (1 - .05)^{20} = 1 - 0.3584859 = 0.6415141.$$

Thus, there is a 64% chance of at least one Type I error in such a case!

From XKCD: http://xkcd.com/882/

A Summary. . .

Multiplicity Matters!


Linear Comparisons: Specifying Contrasts of Interest

Suppose a question of interest is the contrast of group 1 and group 3 in a three group design.

That is, we are interested in the following effect: $\bar{Y}_1 - \bar{Y}_3$.

The above is equivalent to: $(1) \times \bar{Y}_1 + (0) \times \bar{Y}_2 + (-1) \times \bar{Y}_3$.


Suppose a question of interest is the contrast of the mean of group 1 and group 2 (i.e., the mean of the two group means) with group 3 in a three group design.

That is, we are interested in the following effect: $\frac{\bar{Y}_1 + \bar{Y}_2}{2} - \bar{Y}_3$.

The above is equivalent to: $\frac{\bar{Y}_1 + \bar{Y}_2}{2} + (-1) \times \bar{Y}_3$.

The above is equivalent to: $(\frac{1}{2}) \times \bar{Y}_1 + (\frac{1}{2}) \times \bar{Y}_2 + (-1) \times \bar{Y}_3$.

We could also write the above as: $(.5) \times \bar{Y}_1 + (.5) \times \bar{Y}_2 + (-1) \times \bar{Y}_3$.


Consider a situation in which group 1 receives one type of allergy medication, group 2 receives another type of allergy medication, and group 3 receives a placebo (i.e., no medication).

The question here is “does taking medication, compared with not taking medication, have an effect on self-reported allergy symptoms?”


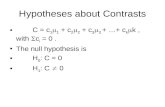

Forming Linear comparisons

In the population, the value of any contrast of interest is given as

$$\Psi = c_1\mu_1 + c_2\mu_2 + c_3\mu_3 + \ldots + c_m\mu_m = \sum_{j=1}^{m} c_j\mu_j,$$

where $c_j$ is the comparison weight for the jth group and $\Psi$ is the population value of a particular linear combination of means.

An estimated linear comparison is of the form

$$\hat{\Psi} = c_1\bar{Y}_1 + c_2\bar{Y}_2 + c_3\bar{Y}_3 + \ldots + c_m\bar{Y}_m = \sum_{j=1}^{m} c_j\bar{Y}_j,$$

where $c_j$ is the comparison weight for the jth group and $\hat{\Psi}$ is the sample estimate of the particular linear combination of means.
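An estimated contrast is just a weighted sum of the group means, which the following sketch makes concrete. The function name `contrast_estimate` and the three sample means are hypothetical and are not taken from the lecture data.

```python
def contrast_estimate(weights, means):
    """Estimated contrast: the sum over groups of c_j times the jth sample mean."""
    if len(weights) != len(means):
        raise ValueError("need exactly one c-weight per group mean")
    return sum(c * m for c, m in zip(weights, means))


group_means = [10.0, 14.0, 9.0]  # hypothetical sample means for groups 1, 2, 3

pairwise = contrast_estimate([1, 0, -1], group_means)          # group 1 vs. group 3
complex_cmp = contrast_estimate([0.5, 0.5, -1], group_means)   # mean of 1 and 2 vs. 3

print(pairwise)     # 1.0
print(complex_cmp)  # 3.0
```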


Forming Linear comparisons

The first example from above was comparing the mean of group 1 versus group 3.

In c-weight form the c-weights are [1, 0, −1]: $(1) \times \bar{Y}_1 + (0) \times \bar{Y}_2 + (-1) \times \bar{Y}_3$.

Comparing one mean to another (i.e., using a 1 and −1 c-weight with the rest 0’s) is called a pairwise comparison (as the comparison only involves a pair).


Forming Linear comparisons

The second example was comparing the mean of groups 1 and 2 versus group 3.

In c-weight form the c-weights are [.5, .5, −1]: $(.5) \times \bar{Y}_1 + (.5) \times \bar{Y}_2 + (-1) \times \bar{Y}_3$.

Comparing a weighted combination of two or more groups to one or more other groups is called a complex comparison. That is, if the c-weights are something other than 1 and −1 and the rest 0’s, it is a complex comparison.


It is required that $\sum_{j=1}^{m} c_j = 0$.

For example, setting $c_1$ to 1 and $c_2$ to −1 yields the pairwise comparison of Group 1 and Group 2:

$$\hat{\Psi} = (1 \times \bar{Y}_1) + (-1 \times \bar{Y}_2) = \bar{Y}_1 - \bar{Y}_2.$$


Rules for c-Weights

The positive c-weights for a comparison should sum to 1.

The negative c-weights for a comparison should sum to −1.

By implication of the two rules above, all of the c-weights for a comparison should sum to 0 (i.e., $\sum c_j = 0$).

If the positive weights do not sum to 1 and the negative weights do not sum to −1, the corresponding confidence interval is not as intuitive.

However, any rescaling of such c-weights (multiplying all of them by the same positive constant) produces the same t-test.

The confidence interval for rescaled weights will have a different interpretation than usual, as the effect will be for a specific linear combination (e.g., $\hat{\Psi} = 2\bar{Y}_1 - \bar{Y}_2 - \bar{Y}_3$).
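The rules for c-weights are easy to check mechanically. The sketch below uses two hypothetical helpers, `check_weights` and `rescale_weights`, which are illustrative names and not part of SPSS or R. The first reports whether a set of weights sums to zero and whether it is on the standard scale; the second divides every weight by the sum of the positive weights so that the positive weights sum to 1.

```python
import math


def check_weights(weights, tol=1e-9):
    """Report whether c-weights sum to 0 and whether they are on the standard scale."""
    pos = sum(c for c in weights if c > 0)
    neg = sum(c for c in weights if c < 0)
    return {
        "sums_to_zero": math.isclose(pos + neg, 0.0, abs_tol=tol),
        "standard_scale": math.isclose(pos, 1.0, abs_tol=tol)
        and math.isclose(neg, -1.0, abs_tol=tol),
    }


def rescale_weights(weights):
    """Divide all weights by the sum of the positive weights."""
    pos = sum(c for c in weights if c > 0)
    if pos == 0:
        raise ValueError("a contrast needs at least one positive c-weight")
    return [c / pos for c in weights]


raw = [2, -1, -1]                # the weights of 2*Y1 - Y2 - Y3
print(check_weights(raw))        # sums to zero, but not on the standard scale
rescaled = rescale_weights(raw)
print(rescaled)                  # [1.0, -0.5, -0.5]
print(check_weights(rescaled))   # both checks pass
```

Rescaling in this way leaves the t-test unchanged and puts the confidence interval on the scale of a difference between (averaged) means.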


Thus, for the mean of Groups 1 and 2 compared to the mean of Group 3, the contrast is

$$\hat{\Psi} = (.5 \times \bar{Y}_1) + (.5 \times \bar{Y}_2) + (-1 \times \bar{Y}_3) = \frac{\bar{Y}_1 + \bar{Y}_2}{2} - \bar{Y}_3.$$


Consider a situation in which one wants to weight the groups based on the relative size of an outside factor, such as market share, profit per segment, number of users, et cetera.

Suppose that interest is in comparing teens versus a weighted average of 20 year olds and 30 year olds in an online community, where among the 20 and 30 year olds the proportion of users is 70 percent and 30 percent, respectively.

$$\hat{\Psi} = (1 \times \bar{Y}_{Teens}) + (-.70 \times \bar{Y}_{20s}) + (-.30 \times \bar{Y}_{30s}).$$


There are technically an infinite number of comparisons that can be formed, but only a few will likely be of interest.

The comparisons are formed so that targeted research questions about population mean differences can be addressed.

But recall that, in general, the positive c-weights should sum to 1 and the negative c-weights should sum to −1 so as to have a more interpretable confidence interval.


A More Powerful t-Test

The t-test corresponding to a particular contrast is given as

$$t = \frac{\sum_{j=1}^{m} c_j\bar{Y}_j}{\sqrt{MS_{Within}\sum_{j=1}^{m}\left(\frac{c_j^2}{n_j}\right)}} = \frac{\hat{\Psi}}{SE(\hat{\Psi})},$$

where the $MS_{Within}$ is from the ANOVA and is the best estimate of the population variance.

Importantly, this t-test has N − m degrees of freedom (i.e., the $MS_{Within}$ degrees of freedom).

Note that the denominator is simply the standard error of the contrast, which is used for the corresponding confidence interval.
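The contrast t-test can be computed directly from raw scores, as in the sketch below. The function names `ms_within` and `contrast_t` and the tiny data set are hypothetical, chosen so the arithmetic is easy to follow by hand. The p-value is not computed here, because that requires the t distribution with N − m degrees of freedom from a statistics package or a table.

```python
import math


def ms_within(groups):
    """Pooled within-group variance, SS_Within / (N - m), and its degrees of freedom."""
    ss_within = 0.0
    n_total = 0
    for scores in groups:
        mean = sum(scores) / len(scores)
        ss_within += sum((y - mean) ** 2 for y in scores)
        n_total += len(scores)
    df = n_total - len(groups)
    return ss_within / df, df


def contrast_t(weights, groups):
    """t statistic for a contrast, using MS_Within in the standard error."""
    msw, df = ms_within(groups)
    psi_hat = sum(c * sum(g) / len(g) for c, g in zip(weights, groups))
    se = math.sqrt(msw * sum(c ** 2 / len(g) for c, g in zip(weights, groups)))
    return psi_hat / se, df


# Hypothetical scores for three groups of three people each.
groups = [[4, 5, 6], [7, 8, 9], [1, 2, 3]]

t_standard, df = contrast_t([0.5, 0.5, -1], groups)
t_rescaled, _ = contrast_t([1, 1, -2], groups)

print(df)                                            # 6
print(round(t_standard, 3), round(t_rescaled, 3))    # the two t values agree
```

Doubling every c-weight doubles both the estimate and its standard error, which is why the two t values match.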


Recall that when the homogeneity of variance assumption holds, there are m different estimates of $\sigma^2$ (one from each group).

For homogeneous variances, the best estimate of the population variance for any group is the mean square error ($MS_{Within}$), which uses information from all groups.

Thus, the independent groups t-test can be given as

$$t = \frac{\bar{Y}_j - \bar{Y}_k}{\sqrt{MS_{Within}\left(\frac{1}{n_j} + \frac{1}{n_k}\right)}},$$

with degrees of freedom based on the mean square within (N − m), which provides more power.


The above two-group t-test still addresses the question “does the population mean of Group 1 differ from the population mean of Group 2?”

However, more information is used because the error term is based on N − m degrees of freedom instead of $n_1 + n_2 - 2$.


The MSWithin – Even for a Single Group

Even if we are interested in testing or forming a confidence interval for a single group, the mean square within can (and usually should) be used, again because it provides a better estimate of $\sigma^2$:

$$t = \frac{\bar{Y}_j - \mu_0}{\sqrt{MS_{Within}\left(\frac{1}{n_j}\right)}}.$$

The two-sided confidence interval is thus:

$$\bar{Y}_j \pm \sqrt{MS_{Within}\left(\frac{1}{n_j}\right)} \times t_{(1-\alpha/2,\, N-m)}.$$

Because $MS_{Within}$ is used as the estimate of $\sigma^2$, the degrees of freedom for the above test and confidence interval are N − m.


Thus, using $MS_{Within}$ is one way to have more power to test the null hypothesis concerning a single group or two groups, even when more than two groups are available.

Additionally, precision is generally increased because the confidence interval will tend to be narrower (due to the smaller critical value and the more stable estimate of the standard error).


The Bonferroni Procedure

The Bonferroni Procedure is also called Dunn’s procedure.

Good for a few pre-planned targeted tests, but doing too many leads to conservative critical values.

Conservative critical values are those that are bigger (i.e., harder to achieve significance) than would be the case ideally.

Liberal critical values are those that are smaller (i.e., easier to achieve significance) than would be the case ideally.


It can be shown that $\alpha_{PC} \le \alpha_{FW} \le C\alpha_{PC}$, where C is the number of comparisons.

The per comparison error rate can be manipulated by dividing the desired familywise (or experimentwise) Type I error rate by C, the number of comparisons: $\alpha_{PC} = \alpha_{FW}/C$.

The standard t-test formula is used, but the obtained t value is compared to a critical value based on α/C: $t_{(1-(\alpha/C)/2,\, df)}$.

The observed p-values (e.g., from SPSS) can be corrected for multiplicity by multiplying each of the C p-values by C (a corrected value above 1 is reported as 1).

If the corrected p-value is less than $\alpha_{FW}$, then the test is statistically significant in the context of a correct familywise Type I error rate.
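The p-value form of the Bonferroni correction can be sketched as follows. The function name `bonferroni_adjust` and the three raw p-values are hypothetical; the cap at 1 is there only because a probability cannot exceed 1.

```python
def bonferroni_adjust(p_values):
    """Multiply each p-value by the number of comparisons C, capping at 1."""
    c = len(p_values)
    return [min(1.0, p * c) for p in p_values]


alpha_fw = 0.05
raw_p = [0.004, 0.020, 0.300]      # hypothetical uncorrected p-values, C = 3

adjusted = bonferroni_adjust(raw_p)
significant = [p < alpha_fw for p in adjusted]

# Equivalent decision rule: compare each raw p-value to alpha_FW / C.
alpha_pc = alpha_fw / len(raw_p)
same_decisions = [p < alpha_pc for p in raw_p]

print(significant)     # [True, False, False]
print(same_decisions)  # [True, False, False]
```

Note that the second test would have been significant at the uncorrected .05 level but is not once the familywise rate is controlled.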


The critical value is what changes in the context of a Bonferroni test, not the way in which the t-test and/or confidence intervals are calculated.

Incorporating $MS_{Within}$ into the denominator of the t-test is not really a change, as $MS_{Within}$ is just a pooled variance based on m (rather than 2) groups.

Recall this is just an extension of $s^2_{Pooled}$ when information on more than two groups is available.


Tukey’s Test

Tukey’s test is used when all (or several) pairwise comparisons are to be tested.

For testing all possible pairwise comparisons, Tukey’s test provides the most powerful multiple comparison procedure.

There is also a Tukey-b in SPSS; I recommend “Tukey.”

For the Tukey procedure, the p-values and confidence intervals given by SPSS are already “corrected” p-values and confidence intervals.


Pairwise comparisons compare the means of two groups (i.e., a pair; e.g., $\mu_1 - \mu_3$) without allowing any complex comparisons (e.g., $(\bar{Y}_1 + \bar{Y}_2)/2 - \bar{Y}_3$).

The observed test statistic is compared to the tabled values of the Studentized range distribution.

This is the distribution that the Tukey procedure uses to obtain confidence intervals and p-values.


The Scheffe Test

For any number of post hoc tests with any linear combination of means, the Scheffe Test is generally optimal.

Although the Scheffe Test is conservative for a small number of comparisons, any number of comparisons can be conducted while controlling the Type I error rate.


We compute the F-value (just a t-value squared) in accord with some linear combination of means, and a critical value is determined for the specific context.

The Scheffe critical F-value (take the square root for the critical t-value) is given as

$$(m - 1)F_{(m-1,\, N-m;\, \alpha)},$$

which is m − 1 times larger than the critical ANOVA value.
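Given the critical F from the ANOVA, the Scheffe critical values follow by simple arithmetic. In this sketch the function name `scheffe_critical` is hypothetical, and the critical F of about 3.35 for 2 and 27 degrees of freedom at α = .05 is an assumed tabled value supplied by hand. Look up the value for your own design in an F table or statistics package.

```python
import math


def scheffe_critical(m, f_crit_anova):
    """Scheffe critical F, (m - 1) times the ANOVA critical F, and the matching critical t."""
    crit_f = (m - 1) * f_crit_anova
    return crit_f, math.sqrt(crit_f)


m = 3                  # number of groups (hypothetical design with N = 30)
f_crit_anova = 3.35    # assumed tabled F(2, 27; .05); not computed here

crit_f, crit_t = scheffe_critical(m, f_crit_anova)
print(round(crit_f, 2), round(crit_t, 2))
```

A contrast is significant under Scheffe's method only if its squared t statistic exceeds the critical F returned here.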


The Scheffe procedure should not be used when only pairwise comparisons are to be tested (it is not as powerful as Tukey’s Test for pairwise comparisons).

If many complex and other (e.g., pairwise) comparisons are to be done, usually the Scheffe procedure is optimal.


Flowchart for Multiple Comparisons

Begin

1 Testing all pairwise and no complex comparisons (either planned or post hoc), or choosing to test only some pairwise comparisons post hoc?

Yes: Use Tukey’s method.

No: Go to question 2.

2 Are all comparisons planned?

No: Use Scheffe’s method.

Yes: Go to question 3.

3 Is the Bonferroni critical value less than the Scheffe critical value?

Yes: Use Bonferroni’s method.

No: Use Scheffe’s method (or, prior to collecting the data, reduce the number of contrasts to be tested).
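The flowchart's decision logic can be written as a small function. This is one reading of the flowchart, not code from the lecture: the function name `choose_procedure` and its argument names are hypothetical, and the two critical values must be supplied by the user (e.g., from tables or software).

```python
def choose_procedure(only_pairwise, all_planned,
                     bonferroni_crit=None, scheffe_crit=None):
    """Pick a multiple comparison procedure following the three flowchart questions."""
    # Question 1: only pairwise comparisons (all of them, or some chosen post hoc)?
    if only_pairwise:
        return "Tukey"
    # Question 2: complex comparisons are involved; were all of them planned?
    if not all_planned:
        return "Scheffe"
    # Question 3: all planned; use whichever critical value is smaller.
    if bonferroni_crit is None or scheffe_crit is None:
        raise ValueError("both critical values are needed for planned comparisons")
    return "Bonferroni" if bonferroni_crit < scheffe_crit else "Scheffe"


print(choose_procedure(only_pairwise=True, all_planned=False))    # Tukey
print(choose_procedure(only_pairwise=False, all_planned=False))   # Scheffe
print(choose_procedure(False, True, bonferroni_crit=2.4, scheffe_crit=2.6))  # Bonferroni
```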


SPSS does not make it easy to get the appropriate p-values and confidence intervals for complex comparisons.

The Bonferroni and Scheffe procedures in SPSS are for pairwise comparisons, which are not of interest because Tukey is almost always preferred for pairwise comparisons.

For the specified contrasts, SPSS reports only the standard output (i.e., not controlling the Type I error rate).

Thus, users need to be really careful that they are appropriately controlling the Type I error rate.


Assumptions of the ANOVA

The assumptions of the ANOVA are the same as for the two-group t-test.

1 The population from which the scores were sampled is normally distributed.

2 The variances of the m groups are the same.

3 The observations are independent.

Recall that multiple regression assumes homoscedasticity, which is just an extension of homogeneity of variance.


Also like the independent groups t-test, the first two assumptions become less important as sample size increases.

This is especially true when the per group sample sizes are equal or nearly so.

Thus, the larger the sample size, the more robust the model is to violations of these two assumptions.


Again, like the t-test, the ANOVA is very sensitive (i.e., it is not robust) to violations of the assumption of independence.

Observations that are not independent can make the empirical α rate much different than the nominal α rate.


Analysis of variance procedures test an omnibus (i.e., an overall) hypothesis.

More specifically, ANOVA models test the hypothesis that $\mu_1 = \mu_2 = \ldots = \mu_m$.

In many situations, primary interest concerns targeted null hypotheses (not just the omnibus hypothesis).

Thus, additional analyses may be necessary.


A Summary from Designing Experiments and Analyzing Data

This discussion “focuses on special methods that are needed when the goal is to control $\alpha_{FW}$ instead of to control $\alpha_{PC}$. Once a decision has been made to control $\alpha_{FW}$, further consideration is required to choose an appropriate method of achieving this control for the specific circumstance. One consideration is whether all comparisons of interest have been planned in advance of collecting the data. If so, the Bonferroni adjustment is usually most appropriate, unless the number of planned comparisons is quite large. Statisticians have devoted a great deal of attention to methods of controlling $\alpha_{FW}$ for conducting all pairwise comparisons, because researchers often want to know which groups differ from other groups. We generally recommend Tukey’s method for conducting all pairwise comparisons. Neither Bonferroni nor Tukey is appropriate when interest includes complex comparisons chosen after having collected the data, in which case Scheffe’s method is generally most appropriate” (notation changed to reflect current use).


What You Learned from this Lesson

You learned:

How to compare more than two independent means to assess if there are any differences via analysis of variance (ANOVA).

How the total sum of squares for the data can be decomposed into a part that is due to the mean differences between groups and a part that is due to within group differences.

Why doing multiple t-tests is not the same thing as ANOVA.

Why doing multiple t-tests leads to a multiplicity issue, in that as the number of tests increases, so too does the probability of one or more errors.

How to correct for the multiplicity issue so that a set of contrasts/comparisons has a Type I error rate for the collection of tests at the desired (e.g., .05) level.

How to use SPSS to implement an ANOVA and follow-up tests.


Notations

$H_0: \sigma_1^2 = \sigma_2^2$ - The null hypothesis of equal variances

$F_{(df_1, df_2)}$ - The F-statistic with $df_1$ and $df_2$ as the degrees of freedom

$s_1^2$ and $s_2^2$ - The variances for group 1 and group 2, respectively

$s^2_{Pooled}$ - Pooled within group variance

m - Number of groups

$n_j$ - Sample size in the jth group (j = 1, . . . , m)

N - Total sample size ($N = \sum_{j=1}^{m} n_j$)


Notations Continued

SS - Sum of squares

This can be for the Between, Treatment, Among, Within, Error, or Total Sum of Squares

MS - Mean square (i.e., a variance)

$MS_{Within}$ is the mean square within a group

$Y_{ij}$ - The score for the ith individual in the jth group

$\tau_j$ - The treatment effect of the jth group

$\varepsilon_{ij}$ - Some uniqueness for the ith individual in the jth group

$E[MS_{Within}]$ - The expected value of the mean square within a group


Notations Continued

C - The number of independent comparisons to be performed

$\alpha_{PC}$ - Per comparison error rate

$\alpha_{FW}$ - Familywise error rate

$\alpha_{EW}$ - Experimentwise error rate

$\Psi$ - The particular linear combination of means

$c_j$ - Comparison weight for the jth group
