
A classification of user experience frameworks for movement-based interaction design

Ricardo Cruz Mendoza1, Nadia Bianchi-Berthouze2, Pablo Romero1, and Gustavo Casillas Lavín3

1 IIMAS, Universidad Nacional Autónoma de México
2 UCL Interaction Centre, University College London
3 CIDI, Universidad Nacional Autónoma de México

This is an Accepted Manuscript of an article published by Taylor & Francis in The Design Journal: An International Journal for All Aspects of Design on 06/11/2015, available online: http://www.tandfonline.com/10.1080/14606925.2015.1059606

Abstract

Recent technological developments have made it possible for digital technology to offer interaction modes that can engage the whole body. These systems can track the users’ movements to support rehabilitation, to play games or to create music, among other activities. One important implication of interacting with the whole body is the increased relevance of the user’s experience. A number of approaches and frameworks have been proposed to design these movement-based systems and some of them pay special attention to the user’s experience. This paper maps the space of design frameworks for movement-based interaction that focus on user experience. It classifies existing frameworks by their type and by characteristics that could help practitioners intending to design applications of that sort to make informed decisions about what framework to employ. Additionally, and to illustrate the use of the classification, we include a case study on selecting and deploying some of these frameworks for a design exercise.

Keywords: User experience, Movement-based interaction, Design Frameworks

1. Introduction

Involving more of the human body when interacting with digital technology enhances the relevance of the user’s experience. Bodily movement can be a source of aesthetic experiences (when dancing, for example), of feelings of accomplishment and pride when mastering sophisticated skills and of meanings that we convey not only to others but also to ourselves through our proprioceptive experience (Bianchi-Berthouze, 2013). However, designing for human movement with the idea of involving an affective component is not straightforward and can be significantly different from traditional interaction design. In traditional interaction design there are already standard conceptual models such as the desktop metaphor (Sharp, Rogers, & Preece, 2007); there are no analogous conventions for embodied interaction.
Additionally, in traditional interaction design there are common, well-known devices for input and output; this is not the case for movement-based interaction. There are no standard devices and their affordances are not common knowledge. For example, movement-based interaction implemented through an unobtrusive sensing system can give too few hints about its use and therefore produce a sense of loss of control in users (Rogers & Muller, 2006). Although movement-based interaction is relatively recent as a field of study, there are already several successful commercial systems (such as Microsoft’s Kinect (Ashlee, 2010)) and, more importantly for this paper, a good number of approaches, frameworks and methods for performing design that focus on promoting a user experience of good quality. This paper looks at that literature to map the space of design frameworks for movement-based interaction that focus on user experience, classifying them by their type and by some additional characteristics. The aim of this classification is to guide practitioners intending to design applications of that sort when deciding what framework or frameworks to employ. Additionally, and to illustrate the use of the classification, we include a case study on using the classification to select and deploy some of its frameworks in a design exercise.

The paper is organised as follows. Section 2 presents some of the definitions that have been offered for the user experience concept; Section 3 looks at the contribution that different research areas have made to the intersection of user experience and movement-based interaction; Section 4 reviews frameworks and approaches for the design of movement-based interaction that pay special attention to the user’s experience; Section 5 presents a case study; and finally Section 6 discusses the classification and suggests topics for further research.

2. User Experience

There have been several attempts at providing a definition of user experience (Desmet & Hekkert, 2007; Forlizzi & Ford, 2000; Hassenzahl & Tractinsky, 2006; Law, Roto, Hassenzahl, Vermeeren, & Kort, 2009). In a survey of researchers and practitioners of the area, Law et al. (2009) define user experience as a subjective, dynamic and context-dependent perception of a system, object, product or service that a person interacts with through a user interface. In this way, the main difference between experience in general and user experience is the digital system and the fact that experience arises from an interaction with it.
Although Law et al. (2009) acknowledge that social context can affect user experience, they recommend that this term should be understood as something individual instead of social. There are a number of user experience frameworks and models; some of them focus on the user (Forlizzi & Ford, 2000; Hassenzahl, 2004; Mäkelä & Fulton Suri, 2001), the system (Alben, 1996), the interaction (Wright, McCarthy, & Meekison, 2004) or important aspects such as the types of experience (Forlizzi & Ford, 2000) or the fact that studying user experience is a multidisciplinary activity (Davis, 2003; Forlizzi & Battarbee, 2004). These frameworks are generic in the sense that they do not focus on a specific type of user interface. The user interface in a digital system could be implemented in a traditional way (through keyboard and mouse), or through a more modern interaction mechanism such as touch-screen devices or optical recognition systems like Microsoft’s Kinect. Describing those generic user experience frameworks is, however, beyond the scope of this paper; we will instead focus on frameworks and approaches that are specific to user experience within the context of movement interaction systems. Before that, the next section looks at some theoretical background for those two areas.

3. Research areas that have contributed to user experience and movement interaction

The two main areas contributing to the intersection of user experience and movement interaction are HCI and product design. Research in the HCI and product design areas has started to develop a shared understanding of concepts such as user experience and movement-based interaction. These areas have also elaborated a number of frameworks, approaches and methods to understand and apply those concepts. The product design area has developed a strong interest in movement-based interaction given that products are nowadays frequently augmented with digital, interactive properties (Hassenzahl & Tractinsky, 2006). There is also the growing acknowledgment that other areas such as dance or theatre have more experience and knowledge about movement. Therefore, there has been an open attitude to explore and adapt frameworks, methods and techniques from fields such as the performing arts and social science.

Within HCI, most of the interest in user experience and movement-based interaction has originated from the Ubiquitous Computing and Tangible User Interfaces (TUIs) areas. As can be seen in the review of frameworks and approaches in the next section, only one framework of those reviewed has been partially developed in Video Gaming studies. Also, while TUIs and Ubiquitous Computing have frequently collaborated with product design, Video Gaming has been more independent. This is probably because, as this area has been traditionally linked with sport, the ways in which user experience has been conceptualised and assessed have comprised a dual motivation: the need to increase physical fitness levels as well as the affective and aesthetic aspects of the interaction with the game (Thin & Poole, 2010).

The theoretical foundations behind user experience and movement-based interaction can be found in embodied affect or social embodiment (Ziemke, 2003). Embodied affect suggests that embodied functions can help to explain some affective attitudes and social behaviours (Barsalou, 2008). For example, posture and proprioceptive cues can influence motivation, affect and evaluative processes. When people sit in a slumped posture, for example, they can develop feelings of helplessness more readily (Riskind & Gotay, 1982) and show less pride about their performance (Stepper and Strack, 1993) than those who sit in an upright posture.
In a similar vein, employing proprioceptive cues like head nodding while listening to persuasive messages about a product promoted a more favourable reception to the messages than head shaking (Wells and Petty, 1980). Other research in the area (Marsh, Richardson, & Schmidt, 2009; Valdesolo & DeSteno, 2011; Valdesolo, Ouyang, & DeSteno, 2010) has also shown that movement synchrony may affect social experience, for example synchrony of movement between people is pleasurable and build trust and connection. Biological explanation to these effects have been investigated by Cuddy and her team showing that the type of stance maintained for 2 minutes produces an increase or decrease in hormones related to specific affective processes (Carney, Cuddy, & Yap, 2010). Within HCI, a very influential framework that looks at notions of embodiment is embodied interaction (Dourish, 2001). Embodied interaction considers embodiment as a participative status, where people act with and through technology using the tacit knowledge stemming from our familiarity with the everyday world and makes explicit reference to the importance of experiencing, acting and being in the world. 4. The Design Approaches The work that has been considered for this review is mainly about frameworks for the design of movement interaction that pay special attention to user experience. We consider the definition of the term ‘framework’ similarly to Rogers & Muller (2006), as an articulatory device that is explicated in a particular way and that can be useful to inform design. As far as we know, there is no other work that has attempted to review the research on frameworks for the design of movement interaction that pay special attention to user experience. There have

4

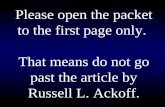

been surveys of movement-based interaction in video games but without a specific interest in frameworks or user experience aspects ((Nijholt, van Dijk, & Reidsma, 2008), of frameworks for tangible user interaction but without the focus on user experience (Mazalek & van den Hoven, 2009) and special issues of user experience (Hassenzahl & Tractinsky, 2006) and of movement based interaction (Larssen, Robertson, Loke, & Edwards, 2007) but not of the intersection of these areas. Also, although there has been very interesting work on the social issues of movement-based interaction (Robertson, 1997, 2002), this paper focuses on individual rather than social aspects. One of the main motivations of this review is to support practitioners to make informed decisions about what framework to use when designing for movement-based interaction. Table 1 provides a quick guide to the conceptual space covered by this review. This table can be navigated like a decision tree. We consider that the questions at the top of the table would be particularly relevant when designers are selecting a suitable framework to deploy. By selecting an answer to these questions, from left to right, the reader can home in on a suitable framework to use.
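The decision-tree reading of Table 1 can be illustrated with a short Python sketch. It is only an illustration and not part of the reviewed frameworks: the names `Row`, `TABLE_1`, `select` and `next_options` are hypothetical, the assignment of rows to merged cells follows one plausible reading of the table, and spelling variants (kinaesthetic/kinesthetic, capitalisation) are normalised for matching.

```python
from typing import NamedTuple


class Row(NamedTuple):
    specificity: str  # "How specific should it be?"
    theme: str        # "What is its main theme?"
    scope: str        # "What is its scope?"
    focus: str        # "What is its main focus?"
    framework: str    # citation as listed in Table 1


# Rows transcribed from Table 1 (assumption: merged cells repeated per row).
SENSING, PHYSICAL, MOVING = (
    "The sensing system", "Physical interactivity", "Designing through moving")
TABLE_1 = [
    Row("Prescriptive method", SENSING, "The sensing potential",
        "Best use of sensors", "Microsoft, 2012"),
    Row("Prescriptive method", MOVING, "Enacting movement",
        "Designing for and through aesthetic interaction", "Ross & Wensveen, 2010"),
    Row("Prescriptive method", MOVING, "Enacting movement",
        "Designing through movement metaphors", "Jensen & Stienstra, 2007"),
    Row("Prescriptive method", MOVING, "Kinesthetic awareness",
        "Designing through external and internal perception",
        "Loke & Robertson, 2007, 2010"),
    Row("Analytical construct", SENSING, "The sensing potential",
        "Classifying movement according to what sensors can detect",
        "Benford et al., 2005"),
    Row("Analytical construct", SENSING, "The sensing potential",
        "Considering space, relations and feedback in video-sensor interaction",
        "Eriksson, Hansen & Lykke-Olesen, 2007"),
    Row("Analytical construct", SENSING, "Sensor interaction",
        "Establishing basic interaction conventions", "Bellotti et al., 2002"),
    Row("Analytical construct", PHYSICAL, "Aesthetics of movement",
        "Design for bodily skills", "Djajadiningrat, Matthews & Stienstra, 2007"),
    Row("Generative construct", SENSING, "Promoting engagement",
        "Using sensors to support affective activities", "Höök, 2008"),
    Row("Generative construct", PHYSICAL, "Link between action and function",
        "Making interaction intuitive", "Wensveen, Djajadiningrat & Overbeeke, 2004"),
    Row("Generative construct", PHYSICAL, "Link between action and function",
        "Sensitize designers on how users experience a system",
        "Larssen, Robertson & Edwards, 2007"),
    Row("Generative construct", PHYSICAL, "Aesthetics of movement",
        "Designing for socio-cultural aesthetics", "Graves-Petersen et al., 2004"),
    Row("Generative construct", PHYSICAL, "Kinesthetic knowledge",
        "Designing through and from the body", "Larssen, Robertson & Edwards, 2008"),
    Row("Generative construct", PHYSICAL, "Kinesthetic knowledge",
        "Mapping between bodily activity and emotions",
        "Kleinsmith & Bianchi-Berthouze, 2013"),
    Row("Generative construct", MOVING, "Kinesthetic awareness",
        "Increasing awareness of movement", "Schiphorst, 2007"),
    Row("Generative construct", MOVING, "Kinesthetic awareness",
        "Disrupting habitual interaction for users", "Ghazali & Dix, 2005"),
    Row("Explanatory account", SENSING, "Promoting engagement",
        "Using sensors to promote exploration and play", "Rogers & Muller, 2006"),
    Row("Explanatory account", PHYSICAL, "Aesthetics of movement",
        "Modulating experience through proprioceptive cues",
        "Bianchi-Berthouze, 2013"),
    Row("Explanatory account", PHYSICAL, "Kinesthetic knowledge",
        "Design that aims to address bodily skills and potential",
        "Fogtmann et al., 2008"),
]


def select(rows, specificity=None, theme=None, scope=None):
    """Keep the rows matching every answer given so far (case-insensitive)."""
    answers = {"specificity": specificity, "theme": theme, "scope": scope}
    return [
        row for row in rows
        if all(value is None or getattr(row, field).lower() == value.lower()
               for field, value in answers.items())
    ]


def next_options(rows, field):
    """List the distinct answers still available for the next question."""
    return sorted({getattr(row, field) for row in rows})


if __name__ == "__main__":
    # Answer the questions from left to right, narrowing the candidates.
    candidates = select(TABLE_1, specificity="Analytical construct")
    print(next_options(candidates, "theme"))
    for row in select(candidates, theme=SENSING, scope="Sensor interaction"):
        print(row.framework, "-", row.focus)
```

Answering only the first question leaves several candidate frameworks; each further answer narrows the list, which mirrors the left-to-right navigation described above.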

| How specific should it be? | What is its main theme? | What is its scope? | What is its main focus? | Framework |
|---|---|---|---|---|
| Prescriptive Method | The sensing system | The sensing potential | Best use of sensors | Microsoft, 2012 |
| Prescriptive Method | Designing through moving | Enacting movement | Designing for and through aesthetic interaction | Ross & Wensveen, 2010 |
| Prescriptive Method | Designing through moving | Enacting movement | Designing through movement metaphors | Jensen & Stienstra, 2007 |
| Prescriptive Method | Designing through moving | Kinaesthetic awareness | Designing through external and internal perception | Loke & Robertson, 2007, 2010 |
| Analytical Construct | The sensing system | The sensing potential | Classifying movement according to what sensors can detect | Benford, Schnädelbach, Koleva, et al., 2005 |
| Analytical Construct | The sensing system | The sensing potential | Considering space, relations and feedback in video-sensor interaction | Eriksson, Hansen & Lykke-Olesen, 2007 |
| Analytical Construct | The sensing system | Sensor interaction | Establishing basic interaction conventions | Bellotti, Back, Edwards et al., 2002 |
| Analytical Construct | Physical interactivity | Aesthetics of movement | Design for bodily skills | Djajadiningrat, Matthews & Stienstra, 2007 |
| Generative Construct | The sensing system | Promoting engagement | Using sensors to support affective activities | Höök, 2008 |
| Generative Construct | Physical interactivity | Link between action and function | Making interaction intuitive | Wensveen, Djajadiningrat, & Overbeeke, 2004 |
| Generative Construct | Physical interactivity | Link between action and function | Sensitize designers on how users experience a system | Larssen, Robertson & Edwards, 2007 |
| Generative Construct | Physical interactivity | Aesthetics of movement | Designing for socio-cultural aesthetics | Graves-Petersen, Iversen, Krogh & Ludvigsen, 2004 |
| Generative Construct | Physical interactivity | Kinesthetic knowledge | Designing through and from the body | Larssen, Robertson & Edwards, 2008 |
| Generative Construct | Physical interactivity | Kinesthetic knowledge | Mapping between bodily activity and emotions | Kleinsmith and Bianchi-Berthouze, 2013 |
| Generative Construct | Designing through moving | Kinesthetic awareness | Increasing awareness of movement | Schiphorst, 2007 |
| Generative Construct | Designing through moving | Kinesthetic awareness | Disrupting habitual interaction for users | Ghazali & Dix, 2005 |
| Explanatory Account | The sensing system | Promoting engagement | Using sensors to promote exploration and play | Rogers & Muller, 2006 |
| Explanatory Account | Physical interactivity | Aesthetics of movement | Modulating experience through proprioceptive cues | Bianchi-Berthouze, N, 2013 |
| Explanatory Account | Physical interactivity | Kinesthetic knowledge | Design that aims to address bodily skills and potential | Fogtmann et al., 2008 |

Table 1. Overview of movement-based interaction frameworks

The first of those questions, “How specific should it be?”, refers to the role a framework can serve (Rogers & Muller, 2006). This role can go from prescriptive to explanatory. For example, a prescriptive method will enumerate a number of specific steps that need to be performed, while an explanatory account typically articulates a view on how to structure our thinking about designing for this area and usually consists of a set of concepts or dimensions to take into account. Somewhere in the middle are the analytical and generative constructs. These are abstract devices that can serve to either analyse or generate a design through a systematic process.

The second question is about the main theme of the framework. There are roughly three main themes: the sensors or sensing system, the (physical) interactivity, and designing through moving. The following subsections are organised based on those themes. The third and fourth questions refer to the scope of the framework and to the main point it aims to make, respectively. The frameworks in the conceptual space illustrated by Table 1 are discussed in more detail in the next subsections.

4.1 The focal role of the sensing system

In this theme, the focus of the design effort is on the properties, affordances and potential of the system of sensors employed in movement-based interaction. Unsurprisingly, a good number of approaches focusing on the sensing system come from HCI. These approaches have looked at the system from the point of view of the sensors employed and their affordances at different levels of abstraction, from what they can and cannot sense to basic interaction conventions with users up to how sensors and system can promote reflection and emotional communication, among other human activities.

4.1.1 The sensing potential

The first framework that looks at the affordances of sensors is the Kinect human interface guidelines (Microsoft, 2012). These are a set of design guidelines specific to the Kinect sensor. The Kinect sensor enables optical and voice recognition of users: it can track the movements of users and understand voiced keywords or phrases. The movement interaction guidelines include technical advice to make the best use of the device, and to improve usability and user experience. Tips for improving user experience include advice on considering gesture ergonomics and avoiding fatigue in the user as well as taking into account that appropriate movements for gaming might be different from those of generic applications.

An approach focusing on what sensors can detect is the Expected, Sensed and Desired framework (Benford, et al., 2005). This framework aims to help designers discover potential interaction issues as well as inspire new features by looking at movement-based interaction from the point of view of the relationship between movement and the sensors employed in the application. The focus is on the expected movements (those that users naturally perform), the sensed movements (those that the sensors employed can detect) and the desired movements (those that are required by the application). By applying the framework, the designer can classify and analyse movement in a target application in terms of those categories. This analysis can reveal overlaps and boundary conditions, as well as gaps or movements that fall outside any of the categories and that therefore can represent potential problems or opportunities to incorporate innovative features. The framework is applicable to a wide range of movement interaction systems and can bridge analytic and inspirational design approaches.

Another framework that looks at the sensing potential of the system is the one by Eriksson, Hansen & Lykke-Olesen (2007). They propose a conceptual framework specific to movement-based interaction that uses video cameras as the main input device. This framework can be used to assist the practitioner in exploring novel variants and approaches when designing this type of application. The framework comprises three main concepts: space, relations and feedback. Space refers to the properties of the camera’s field of view where movement can be detected and registered, whereas relations means the mapping between the actual physical movement and corresponding entities in the application’s domain, and feedback is related to how the digital events are presented to the user. The framework allows the designer to spot potential problems and consider alternative design configurations associated with those aspects of video-based systems.

4.1.2 Interaction conventions

The next framework makes the point that basic interaction conventions have not been established in sensing systems yet (Bellotti, et al., 2002).
For example, in traditional text-based applications, a blinking cursor is a well-known convention to inform the user that the system is attending and ready for a command. There is no analogous convention in sensing systems. Bellotti et al.’s framework supports the designer by providing five questions associated with basic interaction conventions: i) How does the system know I’m addressing it? ii) How do I know what the system is attending to? iii) How does the system know what I mean when I issue a command? iv) How do I know the system has done the correct thing? v) How do I recover from mistakes? The framework supports the analysis of those questions by using concepts from human-human communication in social science research such as interaction analysis, conversation analysis and repair in conversation, among others (Goffman, 1981; Sacks, Schegloff, & Jefferson, 1974; Schegloff, Jefferson, & Sacks, 1977).

4.1.3 Promoting reflection and emotional communication

Two approaches that, although concerned with sensors and the digital system, focus on supporting higher-level cognitive and affective human activities are those by Rogers & Muller (2006) and by Höök (2008). Rogers & Muller’s (2006) framework is built around the properties of sensors and the notion of transforms or couplings between user actions and the system reactions. Rogers & Muller are concerned with applications that foster engaging and creative user experiences and therefore see an opportunity in the uncertainty that can be produced by sensor limitations. Specifically, they consider the explicit reasoning about the world that intriguing couplings can promote as central to creating engaging experiences. Their framework prompts designers to analyse the phenomenological processes involved when users encounter a transform and also to consider how the properties of sensors fit with the characteristics of user activities.
Höök’s (2008) view originates from work in emotion and HCI and proposes that designing for movement-based interaction should be more about leaving open ‘surfaces’ for people to appropriate and to recognise themselves socially rather than attempting to monitor or measure their experiences. By open surfaces Höök means that the functionality provided by the system should be designed to give users flexibility in how they interpret and employ it.

4.2 Physical interactivity

Although product design has always been interested in the sensory and dynamic aspects of interaction with products, the fact that products nowadays are more and more frequently augmented with digital properties has increased the interest in physical interactivity. This concern has been reflected in approaches that focus on the intuitiveness of physical interaction, on the aesthetic experience users can derive from such interaction and on the need for characterising bodily knowledge. We review two frameworks concerned with the intuitiveness of physical interaction, three that are centred around the notion of the aesthetics and affective aspects of movement and three that focus on the need for taking into account knowledge about human movement and sensory aspects.

4.2.1 The link between movement and function

Two frameworks that focus on the link between the user’s movement and the product’s function are the Interaction Frogger (Wensveen, Djajadiningrat, & Overbeeke, 2004) and the Feel Dimension (Larssen, Robertson, & Edwards, 2007). The Interaction Frogger is a framework from product design concerned with the freedom and intuitiveness of interaction and with the couplings between the user’s action and the product’s feedback. The framework breaks down those couplings and feedback into several characteristics and analyses the resulting matrix of crossings. In this way, the framework can support the designer in improving interaction in an existing system or inform the design of a new system. The Feel Dimension framework aims to help designers consider how users might experience a system kinaesthetically.
It focuses on the role the proprioceptive, kinaesthetic and haptic senses play in experiencing objects and environments. The framework is structured as a set of themes that can support the designer’s understanding of the users’ kinaesthetic experience of the system. The aim of those themes is to sensitise designers as well as to provide a language for thinking and talking about kinaesthetic aspects of movement-based interaction. The themes are: body-thing dialogue, potential for action, actions in space and movement expression. Body-thing dialogue has to do with the interaction that takes place within the space provided by the object or environment. Potential for action refers to people’s intentions and the characteristics of their bodies. Actions in space is concerned with whether the user’s actions are within or out of the reach of objects (in either a physical or cultural sense). Finally, movement expression refers to the way in which people execute movements when interacting with objects or environments.

4.2.2 Aesthetic and affective experience of movement

The dialogue between a mover and the product’s space hints at the fact that this interaction might have an aesthetic content. The next two approaches make this point. The first approach, by Djajadiningrat, Matthews & Stienstra (2007), complements the Interaction Frogger framework (Wensveen, et al., 2004) by looking at the aesthetics of the couplings of the user’s action with the product’s function. They articulate the view that bodily skill and movement in product reactions can have an aesthetic component and therefore an important role to play in both usability and aesthetics. Interfaces could take advantage of skilled bodily movement to make interaction more effective, make motor skills a source of challenge and pride for users, and even lessen cognitive overflow. They consider the coupling of bodily action and product physical reaction as an interesting and challenging field for movement-based interaction, both in terms of its usability and aesthetics.

The next framework is theoretically grounded in pragmatist aesthetics (Graves-Petersen, et al., 2004). This framework, like the previous one, also considers the user’s aesthetic experience that can be derived from movement. However, it looks at aesthetics from a socio-cultural point of view, taking into account that objects and systems do not exist in isolation but are embedded in the real world and therefore tightly associated with people’s aims and values and with other social factors. This view considers aesthetics as tightly connected with value and practical use: an aesthetic experience can invigorate and vitalise people and therefore help them achieve their aims. Similarly to Rogers & Muller’s (2006) notion of transforms, the framework also encourages designers to include intriguing or even ambiguous aspects as a way for users to appropriate products by exploration and improvisation.

One framework that has its theoretical grounding in the notion of embodied affect is the one by Bianchi-Berthouze (2013). This is the only framework in the review that has been partially developed from Video Gaming studies. It aims at supporting the designer in the use of body movements to modulate the user’s experience through proprioceptive cues. It proposes a taxonomy of movements that occur when interacting with technology: movements required to control the task; movements not required but that facilitate the control of the task; movements that are related to the role the task offers (e.g., the role of a rock player in a music computer game) even if they interfere with the control of the task; and finally emotional and social expressive movements expressing the person’s engagement in the task and with other agents involved in the task.
The framework proposes that these categories may reinforce each other through a proprioceptive loop and provide a wider emotional, social and fantasy experience. Bianchi-Berthouze therefore highlights the importance of designers tackling the design of physical interaction more systematically by taking into account the proprioceptive loop to enhance and steer the game experience.

4.2.3 Kinesthetic knowledge

The next three approaches focus on the need for characterising bodily or kinesthetic knowledge. The first one comes from the Affective Computing field and is related to knowledge about mappings between bodily activity and emotions. Kleinsmith, Bianchi-Berthouze & Steed (2011), in work extended by Griffin et al. (2013), analyse how naturalistic body expressions are perceived by others and how technology can be endowed with the capability to read such cues. They propose computational models that map low-level body posture primitives (body configuration) into emotional interpretations. Furthermore, Kleinsmith & Bianchi-Berthouze (2013) review the growing number of studies in this area and provide a framework that maps such kinematic primitives into affective categories and affective dimensions, providing the ground for both measuring and synthesising emotions in interactive technology. Such mappings are starting to be investigated even at muscle activity levels (Veld, Boxtel, & de Gelder, 2014).

The second approach is by Fogtmann, et al. (2008). Their framework can provide an overview of a system’s capacity to address bodily potential. This potential is analysed in terms of the system aims as well as of important characteristics of kinesthetic interaction. The aims are expressed as three design themes and the characteristics as seven design parameters. The system aims considered in the framework are:

- Kinesthetic development: acquiring, developing or improving bodily skills;
- Kinesthetic means: using bodily skills for a goal other than kinesthetic development;
- Kinesthetic disorder: challenging the kinaesthetic experience by somehow changing it.

Some of the design parameters are: engagement (the degree of user engagement), sociality (the social and collaborative aspects) and motivation (whether the interaction with the system is somehow prescribed or restricted). In order to apply the framework, the aim of the system is associated with a design theme or themes and then each of these is described in terms of the design parameters. The framework can be used to analyse existing systems but also to develop designs that can explore innovative kinesthetic interactions.

The third approach focuses on the need for characterising kinesthetic knowledge and is proposed by Larssen, et al. (2008). This approach explores the nature of experiential bodily knowledge, identifies some of its characteristics and discusses what this means for movement interaction design. Larssen, et al. suggest that experiential bodily knowledge allows designers to understand how people might experience movement and that this kind of knowledge is acquired through moving with awareness. They believe that acquiring experiential bodily knowledge is similar to developing intellectual skills in that students go through several learning stages and develop a skill to perform movements as well as to recognise that knowledge. Larssen et al. suggest that to be able to design movement-based interaction of high quality, designers need to explore, reason and reflect about movement and this can only be achieved through moving. This concern with designers exploring their design space through the knowledge and practice of their own movement is the central theme of the next set of approaches.

4.3 Exploring the design space through moving

This section looks at concrete methods and techniques that can be employed for movement-based interaction design. Very frequently those methods and techniques use Labanotation (Hutchinson Guest, 1977) to this end.
Labanotation is a system for analysing and recording movement devised by Rudolph Laban for dance choreography and that describes movement in terms of its main feature, the effort or energy content and its structural aspects. 4.3.1 Enacting movement Similarly to the framework by Graves-Petersen et al. (2004), Ross & Wensveen (2010) propose a design approach for movement interaction based on pragmatist aesthetics. Their approach, designing for Aesthetic Interaction through Aesthetic Interaction, tries to incorporate the instrumentality, use, interactivity and context aspects of aesthetics within three concrete steps: 1) creating behaviours through acting out choreographies, 2) specifying behaviour in dynamic form language and 3) implementing experiential prototypes. In the first step they suggest to act out the product’s functions (in the case study presented they ask professional dancers to participate by improvising choreographies to represent the product’s functions). In the second step the authors employ a modified version of Labanotation (Hutchinson Guest, 1977) to specify the product’s behaviour. Finally the product and its behaviour are implemented in functional prototypes. Another method that relies on designers enacting movement is the Metaphor Lab (Jensen, 2007; Jensen & Stienstra, 2007). This is a method for identifying and transferring interaction qualities from observed movements into non-digital interaction artifacts. The Metaphor Lab comprises four


steps: observing, enacting and describing movement videos (relevant to the application domain), identifying and describing interaction characteristics, developing metaphors to describe those characteristics and designing interactive (non-digital) artifacts that embody those metaphors. The description of the movement videos is done using Labanotation (Hutchinson Guest, 1977). The method emphasises the importance of a clear metaphor to illustrate the qualities of the target movements. The method, however, does not make clear how to translate those qualities into actual digital artifacts.

4.3.2 Disrupting habits

The next two approaches point out that one of the main obstacles to designing for user experience is the human tendency to automatise perception to the point that ‘we start to feel very little of what we touch, to listen to very little of what we hear and to see very little of what we look at’ (Schiphorst, 2007). These approaches suggest that in order to come to fresh perspectives, designers should not just enact movement but also disrupt habitual movement patterns. The first of them is Making Strange (Loke & Robertson, 2007, 2010). This is a method for video-based movement interaction based on the tactic of disrupting habitual ways of doing things, with the aim of countering the automatism of perception and thereby arriving at fresh appreciations and perspectives that can be used in design. Disrupting habits can enable our kinaesthetic sense to come to the fore and allow designers to explore external as well as internal perception. The method comprises three parts: ways of accessing the moving body, ways of describing movement and ways of inventing and devising movement. Accessing the moving body can be done by performing familiar movements differently or by performing unfamiliar movements. Describing movement can be done from either a first- or a third-person perspective, and the method proposes specific strategies for each case.
Devising movement can be split into two categories: intentionally modifying the parameters and qualities of movement, and drawing inspiration from concepts, text and images in a similar way to the cultural probe method (Gaver, Dunne, & Pacenti, 1999).

Similarly to Making Strange, the method proposed by Schiphorst (2007) also emphasises the need for designers to explore their internal perceptions. Her method includes workshops that employ performance techniques from theatre and dance to model experiences that could be replicated in digitally enhanced environments. In these workshops, participants increase awareness of their bodily states, for example, by slowing movement down as much as possible. In this way, this design method has the goals of cultivating people’s attention and creating systems that promote a user experience that, through generating attention, can balance inner and outer perception.

A framework that aims to disrupt habitual interaction for users, instead of designers, is Visceral Interaction (Ghazali & Dix, 2005). This framework aims to create more natural and intuitive interaction by exploiting deep-seated human abilities to manipulate physical objects. Ghazali & Dix (2005) illustrate the meaning of Visceral Interaction with an example in which users have to perform a sophisticated coordination of intellectual, visual and kinetic information to overcome the unstable mappings of a tangible interface. Despite the apparent poor usability, users found the experience enjoyable. Ghazali & Dix claim this is because of the intuitiveness of visceral interaction.

5. Case study

In order to illustrate the use of our classification, this section describes a case study. The case study concerns the selection and use of two of the frameworks in the


classification for a movement-based interaction undergraduate course at the Universidad Nacional Autónoma de México (UNAM). The case study is described in terms of the generic information about the course, the selection and use of the frameworks and a summary of the opinions of the students about the course.

5.1 A movement-based interaction course

The aim of the course was for students to learn how to design movement-based interaction applications with an emphasis on user experience. It was an 18-week undergraduate course, with 3 hours of classes per week, taken by students from both engineering and product design (12 students from engineering and 27 from product design). The course was given by two lecturers, one from engineering and the other from product design (two of the authors). The hardware made available was Microsoft’s Kinect sensor. Besides movement-based interaction design and user experience, the course included a component of Kinect programming (8 weeks) and of movement awareness (1 week). The main project of the course was to design a movement-based interaction application (of the students’ choosing) using the Kinect. Students worked in groups of 3, roughly with one engineering student and two from product design.

5.2 Selecting and deploying the frameworks

When planning the course, one of the most important decisions to make was what framework or frameworks were going to be employed; that is, what frameworks were going to be taught to the students in order for them to be able to design a movement interaction application. Given that the hardware made available to students was Microsoft’s Kinect sensor, it would be reasonable to assume that the chosen framework would be the Kinect human interface guidelines (Microsoft, 2012).
However, this was not the case because 1) it was not desirable that the course project would be driven by technical considerations of the sensor to be employed, and 2) the course emphasised the importance of the design embodying a suitable metaphor and conveying a specific value. Therefore it was decided to employ a combination of the Ross & Wensveen (2010) and Jensen (2007) frameworks. Jensen’s (2007) framework highlights the importance of a clear metaphor, while Ross & Wensveen’s (2010) framework emphasises the ethical value transmitted by a product or system. Additionally, given that both are prescriptive methods, they would provide more concrete guidance to students. These two frameworks were easily combined because they are both of the same type (see Section 4.3.1 Enacting movement), had the same organisation (sets of steps) and expressed the resulting design in a similar notation.

The combination of frameworks had 5 steps: 1) creating behaviours through movement, 2) identifying and describing interaction characteristics and ethical values, 3) developing metaphors to describe those characteristics, 4) specifying behaviour in dynamic form language (Ross & Wensveen, 2010) and through storyboards (Rogers, Sharp, & Preece, 2012) and 5) implementing part of the design into a working prototype.

Both frameworks highlight the importance of enacting movement, so a professional movement awareness teacher was invited to lead sessions for one week. The objective of this component of the course was to sensitise students to movement so they could approach the movement interaction design with more confidence.

We decided to require a description in addition to the one in dynamic form language because we thought the Laban-like notation was still quite far from programming code. So we introduced the additional activity of expressing typical uses of the intended systems through storyboards.
A storyboard consists of a series of sketches showing how a user would progress through a task using the intended system (Rogers, et al., 2012). We considered that a storyboard was a more concrete


outcome from the design process, and that the combination of those two formalisms would be able to guide the prototype implementation in a more effective way.

A part of the design was implemented as a functional Kinect prototype. The prototype typically included a Kinect sensor and a large screen. The design and prototype were evaluated by postgraduate students, all of them working on movement interaction design for their own dissertation projects. The aim of this evaluation was to assess whether the design and prototype successfully conveyed the intended metaphor and ethical value. After being presented with the designs and prototypes, evaluators were asked what they thought the metaphor and ethical value were. Overall, evaluators managed to grasp the gist of those aspects of the designs. The rest of this section briefly describes three of those projects and their evaluations.

Who’s cooking. This application was intended to be an important element of future intelligent kitchens and to improve the experience of someone cooking. The design included several modules: a cookbook, cooking lessons and a personalised diet plan. The main metaphor was that of a book: users would employ a page-flipping gesture to see a different recipe or to go backwards or forwards in a lesson video (see Figure 2 for a sketch of the interface illustrating the book metaphor). The ethical value of the application was related to helping users to adopt and keep a healthy diet. Overall the metaphor and ethical value were clear for the evaluators. One of them identified the metaphor but related it to similar metaphors employed in touch-screen devices: “I think the metaphor is that of a touch-screen in which users can turn pages by sliding their hand across”. Additionally, they noted some issues with tracking users: the prototype was not giving enough feedback to users about their status.

Figure 2. Storyboard sketch of Who’s cooking. Users move through recipes with a page-flipping gesture.

Pirulí. The aim of this application was to support programmes for weight control for children. The design included two main modules, one to promote a more active lifestyle and the other to provide nutritional information. A movement-interaction game was implemented as part of the first module. The metaphor of this game was similar to the game of Tetris, as it consisted of collecting different types of (falling) food and grouping them by colour in different baskets (see Figure 3 for a storyboard sketch illustrating this aspect of the metaphor). Its ethical value was related to promoting a healthier diet and lifestyle. It seems the evaluators could identify the metaphor tacitly but not explicitly. Within seconds of starting to play the game, and without any instruction, they knew what to do; however, when queried about the game’s metaphor they could not articulate it. On the other hand, they did not have any problems with the value: they could identify and describe it clearly. Additionally, they highlighted some usability issues in the game related to the way users were supposed to collect and drop the food.


Figure 3. Storyboard sketch of Pirulí. Players classify falling objects by intercepting them.

Kinect in the shower. The aim of this project was to improve the experience of people in the shower, specifically by providing gesture control for handling water flow and also for playing music. Although the design included those two modules, the prototype only implemented gesture control for playing music (which was good because the evaluation did not have to take place in the shower). This was the only prototype that did not employ a large screen; feedback was mainly tactile (warm or cold water) and auditory (the played music). The main metaphor of the application was a shower tap with its convention of turning left for hot water and right for cold; in this case users had to lift either their left or right arm for the desired effect (see Figure 4). The ethical value was related to providing a pleasurable shower experience and at the same time encouraging water saving. The evaluators commented that the metaphor, although clear, did not work very well for playing music: “The metaphor is based on the use of the traditional shower taps, where you have taps for hot and cold water. However this is mixed up with the music control, it would be better to separate these two aspects of the design”. This prompted the designers to consider other gestures and perhaps a different metaphor for the music control module. The evaluators also commented that although the pleasurable aspect of the ethical value was clear, this might be at odds with saving water.

Figure 4. Storyboard sketches of Kinect in the shower. Users have to lift their right arm for cold water and their left for hot.
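
To make the tap metaphor of Kinect in the shower more concrete, the arm-lifting convention could be sketched roughly as follows. This is only an illustrative sketch and not the students' implementation: the `Joint` and `Skeleton` types, the `margin` threshold and the assumption that a larger `y` means a higher position are all hypothetical, and the code does not use the Kinect SDK.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Joint:
    x: float
    y: float  # vertical position; assumed convention: larger means higher


@dataclass
class Skeleton:
    # Hypothetical, simplified stand-in for tracked skeleton data.
    head: Joint
    left_hand: Joint
    right_hand: Joint


def raised_arm(s: Skeleton, margin: float = 0.05) -> Optional[str]:
    """Return 'left' or 'right' if exactly one hand is above the head."""
    left_up = s.left_hand.y > s.head.y + margin
    right_up = s.right_hand.y > s.head.y + margin
    if left_up == right_up:
        return None  # both or neither raised: treat as no gesture
    return "left" if left_up else "right"


# Tap convention described in the text: left for hot, right for cold.
TAP_ACTIONS = {"left": "hot", "right": "cold"}


def shower_command(s: Skeleton) -> Optional[str]:
    """Map the raised arm, if any, to a water temperature request."""
    arm = raised_arm(s)
    return TAP_ACTIONS[arm] if arm is not None else None
```

Treating "both arms raised" as no gesture is one possible design choice; it avoids an ambiguous request at the cost of ignoring a pose that a different design might assign to another function, such as music control.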

5.3 The students’ point of view

Students had the chance to evaluate the course in several ways: through informal, face-to-face comments to the course tutors, through comments in the course online discussion forum, through an institutional evaluation and through a questionnaire designed by the tutors and whose aim was to


evaluate the deployment of the movement-interaction frameworks. Although the students’ point of view was similar throughout all of those evaluation channels, in this section we only report the outcome of the last of these, the questionnaire.

Overall, students thought the course was interesting and engaging. They liked its innovative aspects, specifically the fact that the studied theories and methods were located in the conceptual space between product and interaction design, as well as the opportunity to design for an innovative device like the Kinect. However, they also thought that there were a few aspects that could be improved. The most commonly mentioned of those aspects were related to difficulties in integrating kinesthetic knowledge into their designs, in working with students of other disciplines and, for the design students, with the programming component of the course.

Regarding kinesthetic knowledge, there were issues with the movement awareness component of the course as well as with the notations employed. Students valued the movement awareness sessions: they thought those sessions promoted a friendly environment that enabled even shy students to explore this dimension, and they could see the potential of kinesthetic knowledge to enrich their designs. However, in general they found it difficult to apply the experience from those sessions to their projects. We believe that the movement awareness sessions could have been more helpful if all of the groups had been working on the same design and hence the sessions could have focused on the design problem. In the case studies presented in the papers describing the two selected frameworks (Ross & Wensveen, 2010; Jensen, 2007), all of the teams were working on the same design, but that was not our case.
The fact that teams were working on different designs meant that the movement awareness sessions had to be quite generic and therefore potentially less helpful to each of the teams.

The other aspect related to kinesthetic knowledge was the notations employed. In Section 5.2 we discussed the need to introduce storyboards as a notation that is less abstract than dynamic form language. Students thought that, although storyboards indeed offered a more concrete method of expressing their designs, video could perhaps be more suitable for capturing the dynamic aspects of movement interaction design.

Regarding the difficulties with interdisciplinary teams, students commented that the most frequent issues were related to communication and working patterns. According to them, establishing effective communication with students of a different discipline was frequently difficult, and this was sometimes exacerbated by different ways of approaching project work.

Finally, design students frequently commented that the programming sessions were a particularly difficult aspect of the course. When we were planning the course we anticipated that this would be difficult for them and selected a programming language oriented towards graphic design; however, in future offerings of the course we will investigate whether it would be possible to use a rapid prototyping tool instead of a programming language.

6. Discussion and conclusions

The paper has presented a classification of user experience frameworks for movement-based interaction design. The aim of this classification is to guide practitioners when deciding what framework or frameworks to employ for a specific design exercise. Frameworks have been classified according to several aspects: type, theme, scope and main focus. We believe these aspects


can be particularly helpful in providing an overview of the space of design frameworks in this area. Type gives a generic idea about the role a framework can play, while theme, scope and main focus can provide a specific notion about the central concepts of the framework.

The use of the classification was illustrated through a case study. In this case study there were specific requirements regarding particular aspects of the design that needed to be prioritised (a clear metaphor and ethical value). Overall, the classification was useful in identifying and selecting two frameworks based on those requirements. The selected frameworks worked well for the design exercise, although some modifications related to specific aspects of the case were needed. An issue we believe is important in this respect has to do with the notation of the design output. One of the most concrete notations employed by some of those frameworks is Labanotation. Although this notation can specify at a detailed level the main aspects of a movement-based design, it is still quite far from a notation such as programming code and therefore there might be a wide gap between the expressed design and its implementation.

Overall, it can be seen that there is a variety of approaches and frameworks for the design of movement-based interaction that pay special attention to user experience. This review has highlighted several themes and issues around those approaches. One of the most interesting themes is exploring the design space through moving and through experiential knowledge about movement. Section 4.3 introduces several frameworks that advocate this design strategy. The idea that a designer “has to be an expert in movement, not just theoretically, by imagination or on paper, but by doing and experiencing while designing” (Hummels, Overbeeke, & Klooster, 2007) has far-reaching implications.
It might mean, for example, that the design curriculum would have to include a strong component of theoretical, practical, and even experiential knowledge about movement. Additionally, a focus on experiencing one’s own movement while designing would require practitioners to split their attention to include both the details of the design process and an awareness of their own experience. This might prove too taxing for someone’s cognitive processes. However, some strategies to address this potential issue have been developed by the frameworks in Section 4.3.2.

The review has highlighted some differences between the research areas contributing to the intersection of movement-based design and user experience. The main difference is in the level of maturity between Video Gaming and the rest of them. TUIs, Ubiquitous Computing and product design have already produced a number of frameworks and methods, while Video Gaming research has been more concerned with proposing compelling games and addressing technical aspects (Mueller, Agamanolis, Vetere, & Gibbs, 2009). As Sinclair, Hingston, & Masek (2007) have expressed it: ‘the idea of exergaming, while not new, is still in its infancy when it comes to systematic research, particularly in terms of game design’. However, we are witnessing an increasing number of studies in this area that explore how the quality of body movement contributes to and steers the emotional, social and learning experience in entertainment and serious games. Isbister et al. (2012a) are investigating the use of power poses (Carney, et al., 2010) to boost confidence in children playing a serious game for maths learning, and Isbister (2012b) how to build social bonding and trust. Walter et al. (2013) are exploring how gesture interfaces in public displays can be used to motivate movement-based interaction in social contexts.
Gao et al. (2012) extend the study of body gesture to affective touch behaviour, investigating how this modality can be used to capture and steer the emotional experience of the player. This has also been explored in devices that engage people with handling digital textiles (Atkinson et al., 2013). This study explores gesture and touch behaviour as


modalities to understand the properties of this material but also to engage with and experience it in a playful way. Additionally, in gaming an important motivation has been to promote physical activity and fitness, and therefore considerations about user experience are frequently combined with those of sport (Sinclair, et al., 2007; Sinclair, Hingston, & Masek, 2009). Some research has suggested, however, that those motivations can sometimes be incompatible (Thin & Poole, 2010).

Recent research in video gaming, however, has started to investigate issues more directly related to user experience. Besides the work by Bianchi-Berthouze (2013), which has been developed mostly within the area of video gaming, other movement-based interaction studies are beginning to focus on user experience. Although not at the level of producing theoretical frameworks yet, those studies have started to tap into some of the themes identified in this review. Thin & Poole (2010), for example, have suggested that if the focus is on user experience, movement-based interaction games bring the possibility of making the physical experience of gaming central to game design and therefore transforming game experience into an opportunity for expression and for developing bodily knowledge and skills. Also, Behrenshausen (2007) and Kristiansen (2008) have looked at the dynamic aspects of design and, more specifically, have considered bodily movement itself as an aesthetic experience and how this can bring opportunities for viewing gaming as a type of performance (Kristiansen, 2008). Nijhar et al. (2012) are investigating how movement strategies reveal the motivations of the player, and Savva et al. (2012) have investigated how body expressions emerge through interaction and allow the player to have an active role in the creation of the aesthetic experience. Also, a potentially important theme would be exploring the design space through one’s own movement.
In order to explore this theme, studies could start by employing methods and techniques similar to those used in HCI and product design, for example methods that disrupt the designer’s habits or those that allow them to explore their internal perception.

Another important difference is the disciplines that have influenced the three research areas. HCI and product design have searched for theoretical grounding in philosophy, aesthetics and social science, among other fields. Also, they have drawn inspiration for methods and techniques from performance disciplines such as dance and theatre. Video Gaming, on the other hand, has relied mainly on sports as a source of inspiration for design requirements. In this respect, gaming could benefit from looking at other fields with a tradition of work on lived experience and human movement. Obvious candidates would be those that have influenced HCI and product design, but their relevance needs to be assessed.

Finally, more research linking movement-based interaction with the embodied affect area is needed. Bianchi-Berthouze’s studies suggest that taking into account emotional and social expressive movements in the design process could have very important implications for areas such as learning (Isbister et al., 2012b) or technology for physical rehabilitation (Singh et al., 2014), for example.


References

Alben, L. (1996). Quality of experience: defining the criteria for effective interaction design. Interactions, 3(3), 11-15.

Ashlee, V. (2010). With Kinect, Microsoft Aims for a Game Changer. The New York Times Online.

Atkinson, D., Orzechowski, P., Petreca, B., Watkins, P., Baurley, S., Bianchi-Berthouze, N., et al. (2013). Tactile Perceptions of Digital Textiles: a design research approach. Paper presented at the SIGCHI Conference on Human Factors in Computing Systems 2013.

Barsalou, L. W. (2008). Grounded Cognition. Annual Review of Psychology, 59, 617-645.

Behrenshausen, B. G. (2007). Toward a (kin)aesthetic of video gaming: The case of dance dance revolution. Games and Culture, 2(4), 335-354.

Bellotti, V., Back, M., Edwards, W. K., Grinter, R. E., Henderson, A., & Lopes, C. (2002). Making sense of sensing systems: five questions for designers and researchers. Paper presented at the Proceedings of the SIGCHI conference on Human factors in computing systems: Changing our world, changing ourselves.

Benford, S., Schnädelbach, H., Koleva, B., Anastasi, R., Greenhalgh, C., Rodden, T., et al. (2005). Expected, sensed, and desired: A framework for designing sensing-based interaction. ACM Transactions on Computer-Human Interaction, 12(1), 3-30.

Bianchi-Berthouze, N. (2013). Understanding the role of body movement in player engagement. Human Computer Interaction, 28(1), 42-75.

Carney, D. R., Cuddy, A. J., & Yap, A. J. (2010). Power Posing: Brief Nonverbal Displays Affect Neuroendocrine Levels and Risk Tolerance. Psychological Science, 21(10), 1363-1368.

Desmet, P. M. A., & Hekkert, P. (2007). Framework of product experience. International Journal of Design, 1(1), 57-66.

Djajadiningrat, T., Matthews, B., & Stienstra, M. (2007). Easy doesn’t do it: skill and expression in tangible aesthetics. Personal and Ubiquitous Computing, 11(8), 657-676.

Dourish, P. (2001). Where the Action Is: The Foundations of Embodied Interaction: The MIT Press.

Eriksson, E., Hansen, T. R., & Lykke-Olesen, A. (2007). Movement-based interaction in camera spaces: a conceptual framework. Personal and Ubiquitous Computing, 11(8), 621-632.

Fogtmann, M. H., Fritsch, J., & Kortbek, K. J. (2008). Kinesthetic interaction: revealing the bodily potential in interaction design. Paper presented at the Proceedings of the 20th Australasian Conference on Computer-Human Interaction: Designing for Habitus and Habitat.

Forlizzi, J., & Battarbee, K. (2004). Understanding experience in interactive systems. Paper presented at the Proceedings of the 5th conference on Designing interactive systems: processes, practices, methods, and techniques.

Forlizzi, J., & Ford, S. (2000). The building blocks of experience: an early framework for interaction designers. Paper presented at the Proceedings of the 3rd conference on Designing interactive systems: processes, practices, methods, and techniques.

Griffin, H. J., Aung, M. S. H., Romera-Paredes, B., McKeown, G., Curran, W., McLoughlin, C., Bianchi-Berthouze, N. (2013). Laughter Type Recognition from Whole Body Motion. IEEE Proceedings of Affective Computing and Intelligent Interaction, 349-355.

Gao, Y., Bianchi-Berthouze, N., & Meng, H. (2012). What does touch tell us about emotions in touchscreen-based gameplay? ACM Transactions on Computer-Human Interaction, 39-70.

Gaver, B., Dunne, T., & Pacenti, E. (1999). Design: Cultural probes. Interactions, 6(1), 21-29.


Ghazali, M., & Dix, A. (2005). Visceral Interaction. Paper presented at the 10th British HCI Conference.

Goffman, E. (1981). Forms of talk. Oxford: Basil Blackwell.

Graves-Petersen, M., Iversen, O. S., Krogh, P. G., & Ludvigsen, M. (2004). Aesthetic interaction: a pragmatist's aesthetics of interactive systems. Paper presented at the Proceedings of the 5th conference on Designing interactive systems: processes, practices, methods, and techniques.

Hassenzahl, M. (2004). The thing and I: understanding the relationship between user and product. Funology (pp. 31-42): Kluwer Academic Publishers.

Hassenzahl, M., & Tractinsky, N. (2006). User Experience – a Research Agenda. Behaviour and Information Technology, 25(2), 91-97.

Höök, K. (2008). Knowing, Communication and Experiencing through Body and Emotion. Learning Technologies, IEEE Transactions on, 1(4), 248-259.

Hummels, C., Overbeeke, K. C., & Klooster, S. (2007). Move to get moved: a search for methods, tools and knowledge to design for expressive and rich movement-based interaction. Personal and Ubiquitous Computing, 11(8), 1617-4909.

Hutchinson Guest, A. (1977). Labanotation: The System of Analyzing and Recording Movement (Revised 3rd ed.). New York: Routledge, Chapman and Hall.

Isbister, K., Karlesky, M., & Frye, J. (2012a). Scoop! Using Movement to Reduce Math Anxiety and Affect Confidence. Interactivity exhibition at CHI 2012.

Isbister, K. (2012b). How to Stop Being a Buzzkill: Designing Yamove!, A Mobile Tech Mash-up to Truly Augment Social Play. Proceedings of MobileHCI 2012, San Francisco, CA.

Jensen, M. V. (2007). A physical approach to tangible interaction design. Paper presented at the 1st international conference on Tangible and embedded interaction.

Jensen, M. V., & Stienstra, M. (2007). Making sense: interactive sculptures as tangible design material. Paper presented at the Proceedings of the 2007 conference on Designing pleasurable products and interfaces.

Kleinsmith, A., & Bianchi-Berthouze, N. (2013). Affective Body Expression Perception and Recognition: A Survey. IEEE Trans. on Affective Computing, 4, 15-33.

Kristiansen, E. (2008). Designing innovative video games. In J. Sundbo & P. Darmer (Eds.), Creating experiences in the experience economy (Services, economy and innovation series, pp. 33-59): Edward Elgar.

Larssen, A. T., Robertson, T., & Edwards, J. (2007). The feel dimension of technology interaction: exploring tangibles through movement and touch. Paper presented at the Proceedings of the 1st international conference on Tangible and embedded interaction.

Larssen, A. T., Robertson, T., & Edwards, J. (2008). Experiential Bodily Knowing as a Design (Sens)-ability in Interaction Design. Paper presented at the 3rd European Conference on Design & Semantics of Form & Movement (DeSForM), Newcastle, UK: Northumbria University.

Larssen, A. T., Robertson, T., Loke, L., & Edwards, J. (2007). Introduction to the special issue on movement-based interaction. Personal and Ubiquitous Computing, 11(8), 607-608.

Law, E. L., Roto, V., Hassenzahl, M., Vermeeren, A. P., & Kort, J. (2009). Understanding, scoping and defining user experience: a survey approach. Paper presented at the Proceedings of the 27th international conference on Human factors in computing systems.

Loke, L., & Robertson, T. (2007). Making Strange with the Falling Body in Interactive Technology Design. Paper presented at the Design and semantics of form and movement.


Loke, L., & Robertson, T. (2009). Design representations of moving bodies for interactive, motion-‐sensing spaces. International Journal of Human-‐Computer Studies, 67(4), 394-‐410.

Loke, L., & Robertson, T. (2010). Studies of Dancers: Moving from Experience to Interaction Design. International Journal of Design, 4(2), 1-‐16.

Mäkelä, A., & Fulton Suri, J. (2001). Supporting Users’ Creativity: Design to Induce Pleasurable Experiences. Paper presented at the Conference on Affective Human Factors, London.

Marsh, K. L., Richardson, M. J., & Schmidt, R. C. (2009). Social connection through joint action and interpersonal coordination. Topics in Cognitive Science, 1, 320-‐339.

Mazalek, A., & Van den Hoven, E. (2009). Framing tangible interaction frameworks. Artificial Intelligence for Engineering Design, Analysis and Manufacturing, 23(3), 225-‐235.

Microsoft. (2012). Kinect for Windows Human Interface Guidelines v1.5.0. Mueller, F., Agamanolis, S., Vetere, F., & Gibbs, M. (2009). Brute force interactions: leveraging

intense physical actions in gaming. Paper presented at the Proceedings of the 21st Annual Conference of the Australian Computer-‐Human Interaction Special Interest Group: Design: Open 24/7.

Nijhar, J., Bianchi-Berthouze, N., & Boguslawski, G. (2012). Does Movement Recognition Precision affect the Player Experience in Exertion Games? Paper presented at the International Conference on Intelligent Technologies for interactive entertainment (INTETAIN).

Nijholt, A., van Dijk, B., & Reidsma, D. (2008). Design of Experience and Flow in Movement-Based Interaction. In A. Egges, A. Kamphuis & M. Overmars (Eds.), Motion in Games (Vol. 5277, pp. 166-175): Springer Berlin / Heidelberg.

Riskind, J. H., & Gotay, C. C. (1982). Physical posture: Could it have regulatory or feedback effects on motivation and emotion? Motivation and Emotion, 6, 273-298.

Robertson, T. (1997). Cooperative work and lived cognition: a taxonomy of embodied actions. Paper presented at the Proceedings of the fifth conference on European Conference on Computer-Supported Cooperative Work.

Robertson, T. (2002). The Public Availability of Actions and Artefacts. Computer Supported Cooperative Work, 11(3), 299-316.

Rogers, Y., & Muller, H. (2006). A framework for designing sensor-based interactions to promote exploration and reflection in play. International Journal of Human-Computer Studies, 64(1), 1-14.

Rogers, Y., Sharp, H., & Preece, J. (2012). Interaction Design - Beyond Human-Computer Interaction (3rd. ed.): Wiley.

Ross, P. R., & Wensveen, S. A. G. (2010). Designing aesthetics of behavior in interaction: Using aesthetic experience as a mechanism for design. International Journal of Design, 4(2), 3-13.

Sacks, H., Schegloff, E., & Jefferson, G. (1974). A simplest systematics for the organisation of turn-taking for conversation. Language, 50, 696-735.

Savva, N., Scarinzi, A., & Bianchi-Berthouze, N. (2012). Continuous recognition of player's affective body expression as dynamic quality of aesthetic experience. IEEE Transactions on Computational Intelligence and AI in Games, 4(3), 192-212.

Schegloff, E., Jefferson, G., & Sacks, H. (1977). The preference for self-correction in the organization of repair in conversation. Language, 53, 361-382.

Schiphorst, T. (2007). Really, really small: the palpability of the invisible. Paper presented at the Proceedings of the 6th ACM SIGCHI conference on Creativity & Cognition.

Sharp, H., Rogers, Y., & Preece, J. (2007). Interaction design: Beyond human-computer interaction. Hoboken, N.J.: Wiley.

Sinclair, J., Hingston, P., & Masek, M. (2007). Considerations for the design of exergames. Paper presented at the Proceedings of the 5th international conference on Computer graphics and interactive techniques in Australia and Southeast Asia.

Singh, A., Klapper, A., Jia, J., Fidalgo, A., Tajadura-Jimenez, A., Kanakam, N., Bianchi-Berthouze, N., CdeC Williams, A. (2014). Motivating People with Chronic Pain to do Physical Activity: Opportunities for Technology Design. ACM Proceedings of the SIGCHI Conference on Human Factors in Computing Systems. 2803-2012.

Stepper, S., & Strack, F. (1993). Proprioceptive Determinants of Emotional and Nonemotional Feelings. Journal of Personality and Social Psychology, 64(2), 211-220.

Thin, A., & Poole, N. (2010). Dance-Based ExerGaming: User Experience Design Implications for Maximizing Health Benefits Based on Exercise Intensity and Perceived Enjoyment. In Z. Pan, A. Cheok, W. Müller, X. Zhang & K. Wong (Eds.), Transactions on Edutainment IV (Vol. 6250, pp. 189-199): Springer Berlin / Heidelberg.

Valdesolo, P., & DeSteno, D. (2011). Synchrony and the social tuning of compassion. Emotion, 11, 262-266.

Valdesolo, P., Ouyang, J., & DeSteno, D. (2010). The rhythm of joint action: Synchrony promotes cooperative ability. Journal of Experimental Social Psychology, 46, 693-695.

Veld, E.M.J., van Boxtel, G.J.M., & de Gelder, B. (2014). The Body Action Coding System I: Muscle activations during the perception and expression of emotion. Social Neuroscience, 9(3), 249-264.

Walter, R., Bailly, G., & Müller, J. (2013). StrikeAPose: Revealing Mid-Air Gestures on Public Displays. Paper presented at the SIGCHI Conference on Human Factors in Computing Systems 2013.

Wells, G. L., & Petty, R. E. (1980). The Effects of Overt Head Movements on Persuasion: Compatibility and Incompatibility of Resources. Basic and Applied Social Psychology, 1(13), 219-230.

Wensveen, S. A. G., Djajadiningrat, J. P., & Overbeeke, C. J. (2004). Interaction frogger: a design framework to couple action and function through feedback and feedforward. Paper presented at the Proceedings of the 5th conference on Designing interactive systems: processes, practices, methods, and techniques.

Ziemke, T. (2003). What's that thing called embodiment? Paper presented at the Proceedings of the 25th Annual Conference of the Cognitive Science Society (pp. 1305-1310).

Biographies

Pablo Romero is a Senior Researcher in the Computer Science Department at UNAM (Universidad Nacional Autónoma de México). Previously he was a lecturer at the Informatics Department of Sussex University, England. His research interests include the Psychology of Programming and more recently User Experience and Movement-Based Interaction. He was a co-investigator in the ESRC-EPSRC funded project Stage which explored Movement-Interaction in the area of video game creation and is currently the principal investigator of a UNAM funded project on optimal user experience in movement-based interaction games.

Nadia Bianchi-Berthouze is a Reader at the UCL Interaction Centre, University College London, UK. Her current research focuses on studying body movement as a medium to induce, recognize, and measure the quality of experience of humans interacting and engaging with/through whole-body technology. In 2006, she was awarded an EU FP6 International Marie Curie Reintegration Grant (AffectME) to investigate the above issues in the clinical and entertainment contexts. She is the principal investigator in Emo&Pain, an EPSRC funded project on the design of affective-aware multimodal technology to motivate physical activity in chronic pain. She is also investigating the role of body expressions in emotional contagion processes in human-avatar interaction within the EU-FP7 funded project ILHAIRE.

Ricardo Cruz is a Ph.D. student at the Instituto de Investigaciones en Matemáticas Aplicadas y Sistemas (IIMAS) and also a lecturer in the Faculty of Science (UNAM). His research interests include User Experience, Flow and Movement-Based Interaction. His PhD project addresses Movement-Interaction and User Experience in the area of physical rehabilitation.

Gustavo Casillas Lavín is a Senior Lecturer at the Industrial Design Research Center (CIDI) at UNAM (Universidad Nacional Autónoma de México). His research interests include Interface and Interaction Design, and more recently User Experience through whole-body interaction with digital and non-digital products. He is also working on the epistemology of Design and the links between Cognitive Sciences and Design.

Addresses for Correspondence

Pablo Romero, Departamento de Ciencias de la Computación, IIMAS, Circuito Escolar s/n México DF, 4to piso, Cub. 407. Ciudad Universitaria, Coyoacan, CP 04510, México. Tel: +52 55 56223619 Email: [email protected]

Nadia Bianchi-Berthouze, UCLIC, University College London MPEB Gower Street, London, WC1E 6BT, United Kingdom. Tel: +44 20 7679 0690 Email: [email protected]

Ricardo Cruz, Departamento de Ciencias de la Computación, IIMAS, Circuito Escolar s/n México DF, 4to piso, Area de Doctorados. Ciudad Universitaria, Coyoacan, CP 04510, México. Email: [email protected]

Gustavo Casillas Lavín, Centro de Investigaciones de Diseño Industrial, CIDI, Circuito Escolar s/n México DF, Ciudad Universitaria, Coyoacan, CP 04510, México. Tel. +52 55 56220835 Email: [email protected]